Oracle PL/SQL Programming (2014)

Part V. PL/SQL Application Construction

Chapter 20. Managing PL/SQL Code

Writing the code for an application is just one step toward putting that application into production and then maintaining the code base. It is not possible within the scope of this book to fully address the entire life cycle of application design, development, and deployment. I do have room, however, to offer some ideas and advice about the following topics:

Managing and analyzing code in the database

When you compile PL/SQL program units, the source code is loaded into the data dictionary in a variety of forms (the text of the code, dependency relationships, parameter information, etc.). You can then use SQL statements to retrieve information about those program units, making it easier to understand and manage your application code.

Using compile-time warnings

Starting with Oracle Database 10g, Oracle has added significant new and transparent capabilities to the PL/SQL compiler. The compiler will now automatically optimize your code, often resulting in substantial improvements in performance. In addition, the compiler will provide warnings about your code that will help you improve its readability, performance, and/or functionality.

Managing dependencies and recompiling code

Oracle automatically manages dependencies between database objects. It is very important to understand how these dependencies work, how to minimize invalidation of program units, and how best to recompile program units.

Testing PL/SQL programs

Testing our programs to verify correctness is central to writing and deploying successful applications. You can strengthen your own homegrown tests with automated testing frameworks, both open source and commercial.

Tracing PL/SQL code

Most of the applications we write are very complex—so complex, in fact, that we can get lost inside our own code. Code instrumentation (which means, mostly, inserting trace calls in your programs) can provide the additional information needed to make sense of what we write.

Debugging PL/SQL programs

Many development tools now offer graphical debuggers based on Oracle’s DBMS_DEBUG API. These provide the most powerful way to debug programs, but they are still just a small part of the overall debugging process. In this chapter I also discuss program tracing and explore some of the techniques and (dare I say) philosophical approaches you should utilize to debug effectively.

Protecting stored code

Oracle offers a way to “wrap” source code so that confidential and proprietary information can be hidden from prying eyes (unless, of course, those eyes download an “unwrapper” utility). This feature is most useful to vendors who sell applications based on PL/SQL stored code.

Using edition-based redefinition

New to Oracle Database 11g Release 2, this feature allows database administrators to “hot patch” PL/SQL application code. Prior to this release, if you needed to recompile a production package with state (package-level variables), you would risk the dreaded ORA-04068 error unless you scheduled downtime for the application—and that would require you to kick the users off the system. Now, new versions of code and underlying database tables can be compiled into the application while it is being used, reducing the downtime for Oracle applications. This is primarily a DBA feature, but it is covered lightly in this chapter.

Managing Code in the Database

When you compile a PL/SQL program unit, its source code is stored in the database itself. Information about that program unit is then made available through a set of data dictionary views. This approach to compiling and storing code information offers two tremendous advantages:

Information about that code is available via the SQL language

You can write queries and even entire PL/SQL programs that read the contents of these data dictionary views, obtain lots of fascinating and useful information about your code, and even change the state of your application code.

The database manages dependencies between your stored objects

In the world of PL/SQL, you don’t have to “make” an executable that is then run by users. There is no “build process” for PL/SQL. The database takes care of all such housekeeping details for you, letting you focus more productively on implementing business logic.

The following sections introduce you to some of the most commonly accessed sources of information in the data dictionary.

Overview of Data Dictionary Views

The Oracle data dictionary is a jungle—lushly full of incredible information, but often with less than clear pathways to your destination. There are hundreds of views built on hundreds of tables, many complex interrelationships, special codes, and, all too often, nonoptimized view definitions. A subset of this multitude is particularly handy to PL/SQL developers; I will take a closer look at the key views in a moment. First, it is important to know that there are three types or levels of data dictionary views:

USER_*

Views that show information about the database objects owned by the currently connected schema.

ALL_*

Views that show information about all of the database objects to which the currently connected schema has access (either because it owns them or because it has been granted access to them). Generally they have the same columns as the corresponding USER views, with the addition of an OWNER column in the ALL views.

DBA_*

Views that show information about all the objects in the database. Generally they have the same columns as the corresponding ALL views. Exceptions include the v$, gv$, and x$ views.

I’ll work with the USER views in this chapter; you can easily modify any scripts and techniques to work with the ALL views by adding an OWNER column to your logic. The following are some views a PL/SQL developer is most likely to find useful:

USER_ARGUMENTS

The arguments (parameters) in all the procedures and functions in your schema.

USER_DEPENDENCIES

The dependencies to and from objects you own. This view is mostly used by Oracle to mark objects INVALID when necessary, and also by IDEs to display the dependency information in their object browsers. Note: for full dependency analysis, you will want to use ALL_DEPENDENCIES, in case your program unit is called by a program unit owned by a different schema.

USER_ERRORS

The current set of compilation errors for all stored objects (including triggers) you own. This view is accessed by the SHOW ERRORS SQL*Plus command, described in Chapter 2. You can, however, write your own queries against it as well.

USER_IDENTIFIERS (PL/Scope)

Introduced in Oracle Database 11g and populated by the PL/Scope compiler utility. Once populated, this view provides you with information about all the identifiers (program names, variables, etc.) in your code base. This is a very powerful code analysis tool.

USER_OBJECTS

The objects you own (excepting database links). You can, for instance, use this view to see if an object is marked INVALID, find all the packages that have “EMP” in their names, etc.

USER_OBJECT_SIZE

The size of the objects you own. Actually, this view will show you the source, parsed, and compiled code sizes for your programs. Although it is used mainly by the compiler and the runtime engine, you can use it to identify the large programs in your environment, which are good candidates for pinning into the SGA.

USER_PLSQL_OBJECT_SETTINGS

Information about the characteristics of a PL/SQL object that can be modified through the ALTER and SET DDL commands, such as the optimization level, debug settings, and more. Introduced in Oracle Database 10g.

USER_PROCEDURES

Information about stored program units (not just procedures, as the name would imply), such as the AUTHID setting, whether the program was defined as DETERMINISTIC, and so on.

USER_SOURCE

The text source code for all objects you own (in Oracle9i Database and above, including database triggers and Java source). This is a very handy view, because you can run all sorts of analyses of the source code against it using SQL and, in particular, Oracle Text.

USER_STORED_SETTINGS

PL/SQL compiler flags. Use this view to discover which programs have been compiled using native compilation.

USER_TRIGGERS and USER_TRIGGER_COLS

The database triggers you own (including source code and description of triggering event) and any columns identified with the triggers. You can write programs against this view to enable or disable triggers for a particular table.

You can view the structures of each of these views either with a DESCRIBE command in SQL*Plus or by referring to the appropriate Oracle documentation. The following sections provide some examples of the ways you can use these views.
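As a first taste, USER_ERRORS can be queried directly rather than through SHOW ERRORS. The following is a minimal sketch; the ordering is just one reasonable choice, not a required one:

```sql
-- List outstanding compilation errors for every object in the current schema,
-- in the order in which the compiler reported them.
SELECT name, type, line, position, text
  FROM user_errors
 ORDER BY name, type, sequence;
```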

Display Information About Stored Objects

The USER_OBJECTS view contains the following key information about an object:

OBJECT_NAME

Name of the object

OBJECT_TYPE

Type of the object (e.g., PACKAGE, FUNCTION, TRIGGER)

STATUS

Status of the object: VALID or INVALID

LAST_DDL_TIME

Timestamp indicating the last time that this object was changed

The following SQL*Plus script displays the status of PL/SQL code objects:

/* File on web: psobj.sql */

SELECT object_type, object_name, status

FROM user_objects

WHERE object_type IN (

'PACKAGE', 'PACKAGE BODY', 'FUNCTION', 'PROCEDURE',

'TYPE', 'TYPE BODY', 'TRIGGER')

ORDER BY object_type, status, object_name

The output from this script will be similar to the following:

OBJECT_TYPE          OBJECT_NAME                    STATUS

-------------------- ------------------------------ ----------

FUNCTION             DEVELOP_ANALYSIS               INVALID

                     NUMBER_OF_ATOMICS              INVALID

PACKAGE              CONFIG_PKG                     VALID

                     EXCHDLR_PKG                    VALID

Notice that two of my modules are marked as INVALID. See the section Recompiling Invalid Program Units for more details on the significance of this setting and how you can change it to VALID.
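The same check can be widened to another schema by switching to ALL_OBJECTS and filtering on OWNER. This sketch assumes a hypothetical schema named APP_OWNER and adds LAST_DDL_TIME so that the most recently changed objects appear first:

```sql
-- APP_OWNER is a placeholder schema name; substitute your own.
SELECT owner, object_type, object_name, status, last_ddl_time
  FROM all_objects
 WHERE owner = 'APP_OWNER'
   AND object_type IN ('PACKAGE', 'PACKAGE BODY', 'FUNCTION', 'PROCEDURE',
                       'TYPE', 'TYPE BODY', 'TRIGGER')
   AND status = 'INVALID'
 ORDER BY last_ddl_time DESC;
```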

Display and Search Source Code

You should always maintain the source code of your programs in text files (or via a development tool specifically designed to store and manage PL/SQL code outside of the database). When you store these programs in the database, however, you can take advantage of SQL to analyze your source code across all modules, which may not be a straightforward task with your text editor.

The USER_SOURCE view contains all of the source code for objects owned by the current user. The structure of USER_SOURCE is as follows:

Name Null? Type

------------------------------- -------- ----

NAME NOT NULL VARCHAR2(30)

TYPE VARCHAR2(12)

LINE NOT NULL NUMBER

TEXT VARCHAR2(4000)

where:

NAME

Is the name of the object

TYPE

Is the type of the object (ranging from PL/SQL program units to Java source to trigger source)

LINE

Is the line number

TEXT

Is the text of the source code

USER_SOURCE is a very valuable resource for developers. With the right kind of queries, you can do things like:

§ Display source code for a given line number.

§ Validate coding standards.

§ Identify possible bugs or weaknesses in your source code.

§ Look for programming constructs not identifiable from other views.
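The first item in this list, for instance, takes only a few lines of SQL. Here is a sketch that shows the source surrounding a given line, which is handy when an error stack reports a line number. The program name CHECK_BALANCE and line 42 are placeholders:

```sql
-- Show line 42 of CHECK_BALANCE with two lines of context on either side.
SELECT line, text
  FROM user_source
 WHERE name = 'CHECK_BALANCE'
   AND type = 'PROCEDURE'
   AND line BETWEEN 42 - 2 AND 42 + 2
 ORDER BY line;
```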

Suppose, for example, that I have set as a rule that individual developers should never hardcode one of those application-specific error numbers between −20,999 and −20,000 (such hardcodings can lead to conflicting usages and lots of confusion). I can’t stop a developer from writing code like this:

RAISE_APPLICATION_ERROR (-20306, 'Balance too low');

but I can create a package that allows me to identify all the programs that have such a line in them. I call it my “validate standards” package; it is very simple, and its main procedure looks like this:

/* Files on web: valstd.* */

PROCEDURE progwith (str IN VARCHAR2)

IS

TYPE info_rt IS RECORD (

NAME user_source.NAME%TYPE

, text user_source.text%TYPE

);

TYPE info_aat IS TABLE OF info_rt

INDEX BY PLS_INTEGER;

info_aa info_aat;

BEGIN

SELECT NAME || '-' || line

, text

BULK COLLECT INTO info_aa

FROM user_source

WHERE UPPER (text) LIKE '%' || UPPER (str) || '%'

AND NAME <> 'VALSTD'

AND NAME <> 'ERRNUMS';

disp_header ('Checking for presence of "' || str || '"');

/* Use 1 .. COUNT: FIRST .. LAST raises VALUE_ERROR when the query finds no rows */

FOR indx IN 1 .. info_aa.COUNT

LOOP

pl (info_aa (indx).NAME, info_aa (indx).text);

END LOOP;

END progwith;

Once this package is compiled into my schema, I can check for usages of −20,NNN numbers with this command:

SQL> EXEC valstd.progwith ('-20')

==================

VALIDATE STANDARDS

==================

Checking for presence of "-20"

CHECK_BALANCE - RAISE_APPLICATION_ERROR (-20306, 'Balance too low');

MY_SESSION - PRAGMA EXCEPTION_INIT(dblink_not_open,-2081);

VSESSTAT - CREATE DATE : 1999-07-20

Notice that the –2081 and 1999-07-20 “hits” in my output are not really a problem; they show up only because I didn’t define my filter narrowly enough.
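One way to narrow the filter is a regular expression that insists on a minus sign followed by the digits 20 and exactly three more digits. This is a sketch rather than a replacement for the valstd package; it relies on REGEXP_LIKE (Oracle Database 10g and later), and the pattern is only one guess at what should count as a hit:

```sql
-- Matches -20306 but not -2081 or the date 1999-07-20.
SELECT name, line, text
  FROM user_source
 WHERE REGEXP_LIKE (text, '-[[:space:]]*20[0-9]{3}([^0-9]|$)')
   AND name NOT IN ('VALSTD', 'ERRNUMS')
 ORDER BY name, line;
```

A comment or an arithmetic expression that happens to contain such a number would still be reported, so the results need a human eye.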

This is a fairly crude analytical tool, but you could certainly make it more sophisticated. You could also have it generate HTML that is then posted on your intranet. You could then run the valstd scripts every Sunday night through a DBMS_JOB-submitted job, and each Monday morning developers could check the intranet for feedback on any fixes needed in their code.

Use Program Size to Determine Pinning Requirements

The USER_OBJECT_SIZE view gives you the following information about the size of the programs stored in the database:

SOURCE_SIZE

Size of the source in bytes. This code must be in memory during compilation (including dynamic/automatic recompilation).

PARSED_SIZE

Size of the parsed form of the object in bytes. This representation must be in memory when any object that references this object is compiled.

CODE_SIZE

Code size in bytes. This code must be in memory when the object is executed.

Here is a query that allows you to show code objects that are larger than a given size (passed to the script in kilobytes). You might want to run this query to identify the programs that you will want to pin into the shared pool using DBMS_SHARED_POOL (see Chapter 24 for more information on this package) in order to minimize the swapping of code in the SGA:

/* File on web: pssize.sql */

SELECT name, type, source_size, parsed_size, code_size

FROM user_object_size

WHERE code_size > &&1 * 1024

ORDER BY code_size DESC
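Once the large programs have been identified, pinning them might look like the following sketch. It assumes that your schema has been granted EXECUTE on DBMS_SHARED_POOL (it is not granted by default) and uses an arbitrary 50 KB threshold; treat both as assumptions to adjust:

```sql
BEGIN
   -- DISTINCT: a package and its body share one name, so avoid
   -- calling KEEP twice for the same program.
   FOR rec IN (SELECT DISTINCT name
                 FROM user_object_size
                WHERE type IN ('PACKAGE', 'PACKAGE BODY', 'PROCEDURE', 'FUNCTION')
                  AND code_size > 50 * 1024)
   LOOP
      -- 'P' is the flag for packages, procedures, and functions.
      DBMS_SHARED_POOL.keep (USER || '.' || rec.name, 'P');
   END LOOP;
END;
/
```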

Obtain Properties of Stored Code

The USER_PLSQL_OBJECT_SETTINGS view (introduced in Oracle Database 10g) provides information about the following compiler settings of a stored PL/SQL object:

PLSQL_OPTIMIZE_LEVEL

Optimization level that was used to compile the object

PLSQL_CODE_TYPE

Compilation mode for the object

PLSQL_DEBUG

Whether or not the object was compiled for debugging

PLSQL_WARNINGS

Compiler warning settings that were used to compile the object

NLS_LENGTH_SEMANTICS

NLS length semantics that were used to compile the object

Possible uses for this view include:

§ Identifying any programs that are not taking full advantage of the optimizing compiler (an optimization level of 1 or 0):

/* File on web: low_optimization_level.sql */

SELECT name, type

FROM user_plsql_object_settings

WHERE plsql_optimize_level IN (1,0);

§ Determining if any stored programs have disabled compile-time warnings:

/* File on web: disable_warnings.sql */

SELECT NAME, plsql_warnings

FROM user_plsql_object_settings

WHERE plsql_warnings LIKE '%DISABLE%';
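If such a query turns up a program that you want to bring back in line, you can recompile it with explicit settings. A sketch, assuming a hypothetical procedure named my_proc; REUSE SETTINGS keeps the other stored compiler settings intact:

```sql
-- Recompile at optimization level 2, preserving the other stored settings.
ALTER PROCEDURE my_proc COMPILE PLSQL_OPTIMIZE_LEVEL = 2 REUSE SETTINGS;

-- Re-enable all compile-time warnings for the same program.
ALTER PROCEDURE my_proc COMPILE PLSQL_WARNINGS = 'ENABLE:ALL' REUSE SETTINGS;
```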

The USER_PROCEDURES view lists all functions and procedures, along with associated properties, including whether a function is pipelined, parallel enabled, or aggregate. USER_PROCEDURES will also show you the AUTHID setting for a program (DEFINER or CURRENT_USER). This can be very helpful if you need to see quickly which programs in a package or group of packages use invoker rights or definer rights. Here is an example of such a query:

/* File on web: show_authid.sql */

/* USER_PROCEDURES carries AUTHID itself; joining to USER_OBJECTS by name alone would duplicate rows for a package and its body */

SELECT AUTHID

, object_name program_name

, procedure_name subprogram_name

FROM user_procedures

WHERE object_name LIKE '<package or program name criteria>'

ORDER BY AUTHID, procedure_name;
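The other properties mentioned above are exposed as YES/NO columns in the same view. As a sketch, this query lists the subprograms declared DETERMINISTIC or PIPELINED:

```sql
SELECT object_name, procedure_name, deterministic, pipelined
  FROM user_procedures
 WHERE 'YES' IN (deterministic, pipelined)
 ORDER BY object_name, procedure_name;
```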

Analyze and Modify Trigger State Through Views

Query the trigger-related views (USER_TRIGGERS, USER_TRIGGER_COLS) to do any of the following:

§ Enable or disable all triggers for a given table. Rather than writing this code manually, you can execute the appropriate DDL statements from within a PL/SQL program. See the section Maintaining Triggers in Chapter 19 for an example of such a program.

§ Identify triggers that execute only when certain columns are changed, but do not have a WHEN clause. A best practice for triggers is to include a WHEN clause to make sure that the specified columns actually have changed values (rather than simply writing the same value over itself).

Here is a query you can use to identify potentially problematic triggers lacking a WHEN clause:

/* File on web: nowhen_trigger.sql */

SELECT *

FROM user_triggers tr

WHERE when_clause IS NULL AND

EXISTS (SELECT 'x'

FROM user_trigger_cols

WHERE trigger_owner = USER

AND trigger_name = tr.trigger_name);
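For the first use listed above, changing trigger state from PL/SQL, a minimal sketch follows. It is not the Chapter 19 program; the table name BOOKS is a placeholder, and the block simply issues one ALTER TRIGGER statement per enabled trigger:

```sql
BEGIN
   FOR rec IN (SELECT trigger_name
                 FROM user_triggers
                WHERE table_name = 'BOOKS'
                  AND status = 'ENABLED')
   LOOP
      -- Quote the name so that mixed-case trigger names are handled as well.
      EXECUTE IMMEDIATE 'ALTER TRIGGER "' || rec.trigger_name || '" DISABLE';
   END LOOP;
END;
/
```

Swapping the status filter to 'DISABLED' and the keyword to ENABLE reverses the operation.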

Analyze Argument Information

A very useful view for programmers is USER_ARGUMENTS. It contains information about each of the arguments of each of the stored programs in your schema. It offers, simultaneously, a wealth of nicely parsed information about arguments and a bewildering structure that is very hard to work with.

Here is a simple SQL*Plus script to dump the contents of USER_ARGUMENTS for all the programs in the specified package:

/* File on web: desctest.sql */

SELECT object_name, argument_name, overload

, POSITION, SEQUENCE, data_level, data_type

FROM user_arguments

WHERE package_name = UPPER ('&&1');

A more elaborate PL/SQL-based program for displaying the contents of USER_ARGUMENTS may be found in the show_all_arguments.sp file on the book’s website.

You can also write more specific queries against USER_ARGUMENTS to identify possible quality issues with your code base. For example, Oracle recommends that you stay away from the LONG datatype and instead use LOBs. In addition, the fixed-length CHAR datatype can cause logic problems; you are much better off sticking with VARCHAR2. Here is a query that uncovers the usage of these types in argument definitions:

/* File on web: long_or_char.sql */

SELECT object_name, argument_name, overload

, POSITION, SEQUENCE, data_level, data_type

FROM user_arguments

WHERE data_type IN ('LONG','CHAR');

You can even use USER_ARGUMENTS to deduce information about a package’s program units that is otherwise not easily obtainable. Suppose that I want to get a list of all the procedures and functions defined in a package specification. You will say: “No problem! Just query the USER_PROCEDURES view.” And that would be a fine answer, except that it turns out that USER_PROCEDURES doesn’t tell you whether a program is a function or a procedure (in fact, it can be both, depending on how the program is overloaded!).

You might instead want to turn to USER_ARGUMENTS. It does, indeed, contain that information, but it is far less than obvious. To determine whether a program is a function or a procedure, you must check to see if there is a row in USER_ARGUMENTS for that package-program combination that has a POSITION of 0. That is the value Oracle uses to store the RETURN “argument” of a function. If it is not present, then the program must be a procedure.

The following function uses this logic to return a string that indicates the program type (if it is overloaded with both types, the function returns “FUNCTION, PROCEDURE”). Note that the list_to_string function used in the main body is provided in the same file:

/* File on web: program_type.sf */

FUNCTION program_type (owner_in IN VARCHAR2,

package_in IN VARCHAR2,

program_in IN VARCHAR2)

RETURN VARCHAR2

IS

c_function_pos CONSTANT PLS_INTEGER := 0;

TYPE type_aat IS TABLE OF all_objects.object_type%TYPE

INDEX BY PLS_INTEGER;

l_types type_aat;

retval VARCHAR2 (32767);

BEGIN

SELECT CASE MIN (position)

WHEN c_function_pos THEN 'FUNCTION'

ELSE 'PROCEDURE'

END

BULK COLLECT INTO l_types

FROM all_arguments

WHERE owner = owner_in

AND package_name = package_in

AND object_name = program_in

GROUP BY overload;

IF l_types.COUNT > 0

THEN

retval := list_to_string (l_types, ',', distinct_in => TRUE);

END IF;

RETURN retval;

END program_type;
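The same POSITION = 0 test can also be written as a plain query that classifies every overloading in a package at once. This is a sketch with a placeholder package name, MY_PKG; how parameterless procedures are represented in USER_ARGUMENTS varies by database version, so verify the results against your own packages:

```sql
SELECT object_name,
       overload,
       CASE MIN (position) WHEN 0 THEN 'FUNCTION' ELSE 'PROCEDURE' END
          AS program_type
  FROM user_arguments
 WHERE package_name = 'MY_PKG'
 GROUP BY object_name, overload
 ORDER BY object_name, overload;
```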

Finally, you should also know that the built-in package DBMS_DESCRIBE provides a PL/SQL API to provide much of the same information as USER_ARGUMENTS. There are differences, however, in the way these two elements handle datatypes.

Analyze Identifier Usage (Oracle Database 11g’s PL/Scope)

It doesn’t take long for the volume and complexity of a code base to present serious maintenance and evolutionary challenges. I might need, for example, to implement a new feature in some portion of an existing program. How can I be sure that I understand the impact of this feature and make all the necessary changes? Prior to Oracle Database 11g, the tools I could use to perform impact analysis were largely limited to queries against ALL_DEPENDENCIES and ALL_SOURCE. Now, with PL/Scope, I can perform much more detailed and useful analyses.

PL/Scope collects data about identifiers in PL/SQL source code when it compiles your code, and makes it available in static data dictionary views. This collected data, accessible through USER_IDENTIFIERS, includes very detailed information about the types and usages (including declarations, references, assignments, etc.) of each identifier, plus information about the location of each usage in the source code.

Here is the description of the USER_IDENTIFIERS view:

Name Null? Type

--------------------- -------- ---------------

NAME VARCHAR2(128)

SIGNATURE VARCHAR2(32)

TYPE VARCHAR2(18)

OBJECT_NAME NOT NULL VARCHAR2(128)

OBJECT_TYPE VARCHAR2(13)

USAGE VARCHAR2(11)

USAGE_ID NUMBER

LINE NUMBER

COL NUMBER

USAGE_CONTEXT_ID NUMBER

You can write queries against USER_IDENTIFIERS to mine your code for all sorts of information, including violations of naming conventions. PL/SQL editors such as Toad are likely to start offering user interfaces to PL/Scope soon, making it easy to analyze your code. Until that happens, you will need to construct your own queries (or use those produced and made available by others).

To use PL/Scope, you must first ask the PL/SQL compiler to analyze the identifiers of your program when it is compiled. You do this by changing the value of the PLSCOPE_SETTINGS compilation parameter. You can do this for a session or even an individual program unit, as shown here:

ALTER SESSION SET plscope_settings='IDENTIFIERS:ALL'
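For an individual program unit, the setting can be supplied when that unit is recompiled. A sketch, with my_proc as a hypothetical procedure name:

```sql
-- Collect identifier data for this one procedure only,
-- leaving its other compiler settings as they were.
ALTER PROCEDURE my_proc COMPILE plscope_settings = 'IDENTIFIERS:ALL' REUSE SETTINGS;
```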

You can see the value of PLSCOPE_SETTINGS for any particular program unit with a query against USER_PLSQL_OBJECT_SETTINGS.

Once PL/Scope has been enabled, whenever you compile a program unit, Oracle will populate the data dictionary with detailed information about how each identifier in your program (variables, types, programs, etc.) is used.

Let’s take a look at a few examples of using PL/Scope. Suppose I create the following package specification and procedure, with PL/Scope enabled:

/* File on web: 11g_plscope.sql */

ALTER SESSION SET plscope_settings='IDENTIFIERS:ALL'

/

CREATE OR REPLACE PACKAGE plscope_pkg

IS

FUNCTION plscope_func (plscope_fp1 NUMBER)

RETURN NUMBER;

PROCEDURE plscope_proc (plscope_pp1 VARCHAR2);

END plscope_pkg;

/

CREATE OR REPLACE PROCEDURE plscope_proc1

IS

plscope_var1 NUMBER := 0;

BEGIN

plscope_pkg.plscope_proc (TO_CHAR (plscope_var1));

DBMS_OUTPUT.put_line (SYSDATE);

plscope_var1 := 1;

END plscope_proc1;

/

I can verify PL/Scope settings as follows:

SELECT name, plscope_settings

FROM user_plsql_object_settings

WHERE name LIKE 'PLSCOPE%'

NAME PLSCOPE_SETTINGS

------------------------------ ----------------

PLSCOPE_PKG IDENTIFIERS:ALL

PLSCOPE_PROC1 IDENTIFIERS:ALL

Let’s determine what has been declared in the process of compiling these two program units:

SELECT name, TYPE

FROM user_identifiers

WHERE name LIKE 'PLSCOPE%' AND usage = 'DECLARATION'

ORDER BY type, usage_id

NAME TYPE

--------------- -----------

PLSCOPE_FP1 FORMAL IN

PLSCOPE_PP1 FORMAL IN

PLSCOPE_FUNC FUNCTION

PLSCOPE_PKG PACKAGE

PLSCOPE_PROC1 PROCEDURE

PLSCOPE_PROC PROCEDURE

PLSCOPE_VAR1 VARIABLE

Now I’ll discover all locally declared variables:

SELECT a.name variable_name, b.name context_name, a.signature

FROM user_identifiers a, user_identifiers b

WHERE a.usage_context_id = b.usage_id

AND a.TYPE = 'VARIABLE'

AND a.usage = 'DECLARATION'

AND a.object_name = 'PLSCOPE_PROC1'

AND a.object_name = b.object_name

ORDER BY a.object_type, a.usage_id

VARIABLE_NAME CONTEXT_NAME SIGNATURE

-------------- ------------- --------------------------------

PLSCOPE_VAR1 PLSCOPE_PROC1 401F008A81C7DCF48AD7B2552BF4E684

Impressive, yet PL/Scope can do so much more. I would like to know all the locations in my program unit in which this variable is used, as well as the type of usage:

SELECT usage, usage_id, object_name, object_type

FROM user_identifiers sig

, (SELECT a.signature

FROM user_identifiers a

WHERE a.TYPE = 'VARIABLE'

AND a.usage = 'DECLARATION'

AND a.object_name = 'PLSCOPE_PROC1') variables

WHERE sig.signature = variables.signature

ORDER BY object_type, usage_id

USAGE USAGE_ID OBJECT_NAME OBJECT_TYPE

----------- ---------- ------------------------------ -------------

DECLARATION 3 PLSCOPE_PROC1 PROCEDURE

ASSIGNMENT 4 PLSCOPE_PROC1 PROCEDURE

REFERENCE 7 PLSCOPE_PROC1 PROCEDURE

ASSIGNMENT 9 PLSCOPE_PROC1 PROCEDURE

You should be able to see, even from these simple examples, that PL/Scope offers enormous potential in helping you better understand your code and analyze the impact of change on that code.
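A simple naming-convention check gives a flavor of what is possible. The sketch below assumes a house rule (not one stated in this chapter) that variables start with l_ or g_, and reports every declared variable that breaks it:

```sql
-- Identifier names are stored in uppercase, hence 'L\_%' and 'G\_%'.
-- The ESCAPE clause makes the underscore a literal character.
SELECT object_name, object_type, name, line
  FROM user_identifiers
 WHERE type = 'VARIABLE'
   AND usage = 'DECLARATION'
   AND name NOT LIKE 'L\_%' ESCAPE '\'
   AND name NOT LIKE 'G\_%' ESCAPE '\'
 ORDER BY object_name, line;
```

Run against the examples above, this query would flag PLSCOPE_VAR1.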

Lucas Jellema of AMIS has produced more interesting and complex examples of using PL/Scope to validate naming conventions. You can find these queries in the 11g_plscope_amis.sql file on the book’s website.

In addition, I have created a helper package and demonstration scripts to help you get started with PL/Scope. Check out the plscope_helper*.* files, as well as the other plscope*.* files.

Managing Dependencies and Recompiling Code

Another very important phase of PL/SQL compilation and execution is the checking of program dependencies. A dependency (in PL/SQL) is a reference from a stored program to some database object outside that program. Server-based PL/SQL programs can have dependencies on tables, views, types, procedures, functions, sequences, synonyms, object types, package specifications, etc. Program units are not, however, dependent on package bodies or object type bodies; these are the “hidden” implementations.

Oracle’s basic dependency principle for PL/SQL is, loosely speaking:

Do not use the currently compiled version of a program if any of the objects on which it depends have changed since it was compiled.

The good news is that most dependency management happens automatically, from the tracking of dependencies to the recompilation required to keep everything synchronized. You can’t completely ignore this topic, though, and the following sections should help you understand how, when, and why you’ll need to intervene.

In Oracle Database 10g and earlier, dependencies were tracked with the granularity of a program unit. So if a procedure was dependent upon a function within a package or a column within a table, the dependent unit was the package or the table. This granularity has been the standard from the dawn of PL/SQL—until recently.

Beginning with Oracle Database 11g, the granularity of dependency tracking has improved. Instead of tracking the dependency to the unit (for example, a package or a table), the grain is now the element within the unit (for example, the columns in a table and the formal parameters of a subprogram). This fine-grained dependency tracking means that your program will not be invalidated if you add a program or overload an existing program in an existing package. Likewise, if you add a column to a table, the database will not automatically invalidate all PL/SQL programs that reference the table—only those programs that reference all columns, as in a SELECT * or by using the anchored declaration %ROWTYPE, will be affected. The following sections explore this situation in detail.

The section Qualify All References to Variables and Columns in SQL Statements in Chapter 3 provides an example of this fine-grained dependency management.

NOTE

It would be nice to report on the fine-grained dependencies that Oracle Database 11g manages, but as of Oracle Database 11g Release 2, this data is not available in any of the data dictionary views. I hope that it will be “published” for our use in the future.

If, however, you are not yet building and deploying applications on Oracle Database 11g or later, object-level dependency tracking means that almost any change to underlying database objects will cause a wide ripple effect of invalidations.
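A quick experiment can show which behavior your database exhibits. The object names fg_demo and fg_demo_proc are made up for this sketch:

```sql
CREATE TABLE fg_demo (id NUMBER, title VARCHAR2 (100));

CREATE OR REPLACE PROCEDURE fg_demo_proc
IS
   l_title   fg_demo.title%TYPE;
BEGIN
   -- References only the ID and TITLE columns.
   SELECT title INTO l_title FROM fg_demo WHERE id = 1;
END fg_demo_proc;
/

-- Add a column that the procedure never mentions.
ALTER TABLE fg_demo ADD (notes VARCHAR2 (200));

-- With fine-grained tracking the procedure is expected to stay VALID;
-- with object-level tracking it would be marked INVALID.
SELECT object_name, status
  FROM user_objects
 WHERE object_name = 'FG_DEMO_PROC';
```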

Analyzing Dependencies with Data Dictionary Views

You can use several of the data dictionary views to analyze dependency relationships.

Let’s take a look at a simple example. Suppose that I have a package named bookworm on the server. This package contains a function that retrieves data from the books table. After I create the table and then create the package, both the package specification and the body are VALID:

SELECT object_name, object_type, status

FROM USER_OBJECTS

WHERE object_name = 'BOOKWORM';

OBJECT_NAME OBJECT_TYPE STATUS

------------------------------ ------------------ -------

BOOKWORM PACKAGE VALID

BOOKWORM PACKAGE BODY VALID

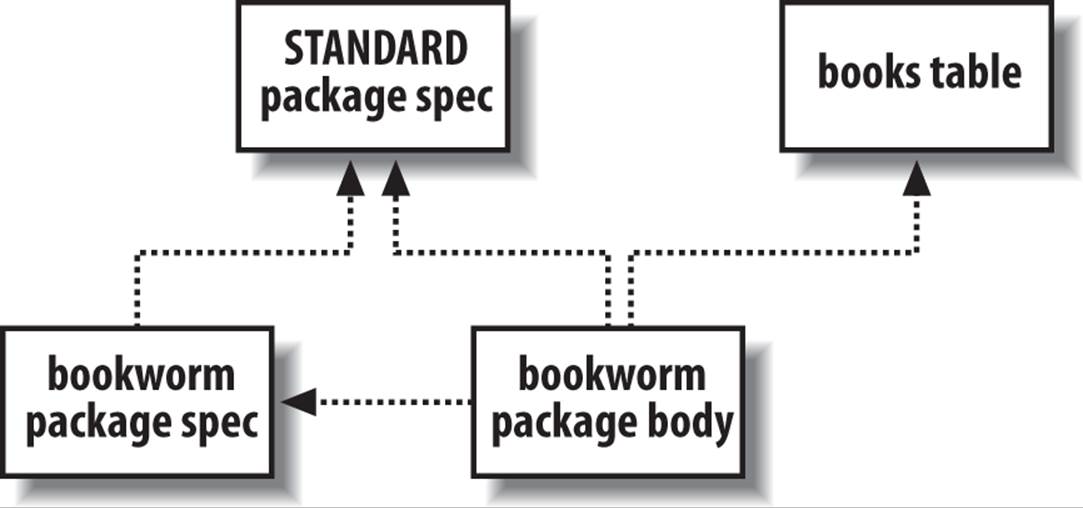

Behind the scenes, when I compiled my PL/SQL program, the database determined a list of other objects that BOOKWORM needs in order to compile successfully. I can explore this dependency graph using a query of the data dictionary view USER_DEPENDENCIES:

SELECT name, type, referenced_name, referenced_type

FROM USER_DEPENDENCIES

WHERE name = 'BOOKWORM';

NAME TYPE REFERENCED_NAME REFERENCED_TYPE

--------------- -------------- --------------- ---------------

BOOKWORM PACKAGE STANDARD PACKAGE

BOOKWORM PACKAGE BODY STANDARD PACKAGE

BOOKWORM PACKAGE BODY BOOKS TABLE

BOOKWORM PACKAGE BODY BOOKWORM PACKAGE

Figure 20-1 illustrates this information as a directed graph, where the arrows indicate a “depends on” relationship. In other words, the figure shows that:

§ The bookworm package specification and body both depend on the built-in package named STANDARD (see the sidebar Flying the STANDARD).

§ The bookworm package body depends on its corresponding specification and on the books table.

Figure 20-1. Dependency graph of the bookworm package

For the purpose of tracking dependencies, the database records a package specification and body as two different entities. Every package body will have a dependency on its corresponding specification, but the specification will never depend on its body. Nothing depends on the body. Hey, the package might not even have a body!

If you’ve been responsible for maintaining someone else’s code during your career, you will know that performing impact analysis relies not so much on “depends-on” information as it does on “referenced-by” information. Let’s say that I’m contemplating a change in the structure of the books table. Naturally, I’d like to know everything that might be affected:

SELECT name, type

FROM USER_DEPENDENCIES

WHERE referenced_name = 'BOOKS'

AND referenced_type = 'TABLE';

NAME TYPE

------------------------------ ------------

ADD_BOOK PROCEDURE

TEST_BOOK PACKAGE BODY

BOOK PACKAGE BODY

BOOKWORM PACKAGE BODY

FORMSTEST PACKAGE

As you can see, in addition to the bookworm package, there are some programs in my schema I haven’t told you about—but fortunately, the database never forgets. Nice!
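The query above finds direct dependents only. To follow the chain further (programs that depend on programs that depend on the table), a hierarchical query over the same view is one option. This is a sketch limited to objects in the current schema, and it may list an object more than once if it is reachable by several paths:

```sql
SELECT LEVEL AS depth, name, type
  FROM user_dependencies
 START WITH referenced_name = 'BOOKS'
        AND referenced_type = 'TABLE'
        AND referenced_owner = USER
CONNECT BY NOCYCLE PRIOR name = referenced_name
               AND PRIOR type = referenced_type
               AND referenced_owner = USER
 ORDER BY depth, name;
```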

As clever as the database is at keeping track of dependencies, it isn’t clairvoyant: in the data dictionary, the database can only track dependencies of local stored objects written with static calls. There are plenty of ways that you can create programs that do not appear in the USER_DEPENDENCIES view. These include external programs that embed SQL or PL/SQL, remote stored procedures or client-side tools that call local stored objects, and any programs that use dynamic SQL.
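The dynamic SQL case is easy to demonstrate. In this sketch the procedure name count_books_dyn is hypothetical; it reads the books table, yet the table name lives inside a string that the compiler never parses:

```sql
CREATE OR REPLACE PROCEDURE count_books_dyn
IS
   l_count   PLS_INTEGER;
BEGIN
   -- The table name is hidden inside a string literal.
   EXECUTE IMMEDIATE 'SELECT COUNT(*) FROM books' INTO l_count;
   DBMS_OUTPUT.put_line (l_count);
END count_books_dyn;
/

-- BOOKS should not appear among the referenced objects.
SELECT referenced_name, referenced_type
  FROM user_dependencies
 WHERE name = 'COUNT_BOOKS_DYN';
```

A change to the books table therefore will not invalidate this procedure, and any breakage will surface only at runtime.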

As I was saying, if I alter the table’s structure by modifying a column:

ALTER TABLE books MODIFY popularity_index NUMBER (8,2);

then the database will immediately and automatically invalidate all program units that depend on the books table (or, in Oracle Database 11g and later, only those program units that reference this column). Any change in the DDL time of an object—even if you just rebuild it with no changes—will cause the database to invalidate dependent program units (see the sidebar Avoiding Those Invalidations). Actually, the database’s automatic invalidation is even more sophisticated than that; if you own a program that performs a particular DML statement on a table in another schema, and your privilege to perform that operation gets revoked, this action will also invalidate your program.

After the change, a query against USER_OBJECTS shows me the following information:

/* File on web: invalid_objects.sql */

SQL> SELECT object_name, object_type, status

FROM USER_OBJECTS

WHERE status = 'INVALID';

OBJECT_NAME OBJECT_TYPE STATUS

------------------------------ ------------------ -------

ADD_BOOK PROCEDURE INVALID

BOOK PACKAGE BODY INVALID

BOOKWORM PACKAGE BODY INVALID

FORMSTEST PACKAGE INVALID

FORMSTEST PACKAGE BODY INVALID

TEST_BOOK PACKAGE BODY INVALID

By the way, this again illustrates a benefit of the two-part package arrangement: for the most part, the package bodies have been invalidated, but not the specifications. As long as the specification doesn’t change, program units that depend on the package will not be invalidated. The only specification that has been invalidated here is for FORMSTEST, which depends on the books table because (as I happen to know) it uses the anchored declaration books%ROWTYPE.

One final note: another way to look at programmatic dependencies is to use Oracle’s DEPTREE_FILL procedure in combination with the DEPTREE or IDEPTREE views. As a quick example, if I run the procedure using:

BEGIN DEPTREE_FILL('TABLE', USER, 'BOOKS'); END;

I can then get a nice listing by selecting from the IDEPTREE view:

SQL> SELECT * FROM IDEPTREE;

DEPENDENCIES

-------------------------------------------

TABLE SCOTT.BOOKS

PROCEDURE SCOTT.ADD_BOOK

PACKAGE BODY SCOTT.BOOK

PACKAGE BODY SCOTT.TEST_BOOK

PACKAGE BODY SCOTT.BOOKWORM

PACKAGE SCOTT.FORMSTEST

PACKAGE BODY SCOTT.FORMSTEST

This listing shows the result of a recursive “referenced-by” query. If you want to use these objects yourself, execute the $ORACLE_HOME/rdbms/admin/utldtree.sql script to build the utility procedure and views in your own schema. Or, if you prefer, you can emulate it with a query such as:

SELECT RPAD (' ', 3*(LEVEL-1)) || name || ' (' || type || ') '

FROM user_dependencies

CONNECT BY PRIOR RTRIM(name || type) =

RTRIM(referenced_name || referenced_type)

START WITH referenced_name = 'name' AND referenced_type = 'type'

Now that you’ve seen how the server keeps track of relationships among objects, let’s explore one way that the database takes advantage of such information.

FLYING THE STANDARD

All but the most minimal database installations will have a built-in package named STANDARD available in the database. This package gets created along with the data dictionary views from catalog.sql and contains many of the core features of the PL/SQL language, including:

§ Functions such as INSTR and LOWER

§ Comparison operators such as NOT, =, and >

§ Predefined exceptions such as DUP_VAL_ON_INDEX and VALUE_ERROR

§ Subtypes such as STRING and INTEGER

You can view the source code for this package by looking at the file standard.sql, which you will normally find in the $ORACLE_HOME/rdbms/admin subdirectory.

STANDARD’s specification is the “root” of the PL/SQL dependency graph; that is, it depends upon no other PL/SQL programs, but most PL/SQL programs depend upon it. This package is explored in more detail in Chapter 24.

Fine-Grained Dependency (Oracle Database 11g)

One of the nicest features of PL/SQL is its automated dependency tracking. The Oracle database automatically keeps track of all database objects on which a program unit is dependent. If any of those objects are subsequently modified, the program unit is marked INVALID and must be recompiled. For example, in the case of the scope_demo package, the inclusion of the query from the employees table means that this package is marked as being dependent on that table.

As I mentioned earlier, prior to Oracle Database 11g, dependency information was recorded only with the granularity of the object as a whole. If any change at all is made to that object, all dependent program units are marked INVALID, even if the change does not affect those program units.

Consider the scope_demo package. It is dependent on the employees table, but it refers only to the department_id and salary columns. In Oracle Database 10g, I can change the size of the first_name column and this package will be marked INVALID.

In Oracle Database 11g, Oracle fine-tuned its dependency tracking down to the element within an object. In the case of tables, the Oracle database now records that a program unit depends on specific columns within a table. With this approach, the database can avoid unnecessary recompilations, making it easier for you to evolve your application code base.

In Oracle Database 11g and later, I can change the size of my first_name column and this package is not marked INVALID, as you can see here:

ALTER TABLE employees MODIFY first_name VARCHAR2(2000)

/

Table altered.

SELECT object_name, object_type, status

FROM all_objects

WHERE owner = USER AND object_name = 'SCOPE_DEMO'

/

OBJECT_NAME OBJECT_TYPE STATUS

------------------------------ ------------------- -------

SCOPE_DEMO PACKAGE VALID

SCOPE_DEMO PACKAGE BODY VALID

Note, however, that unless you fully qualify all references to PL/SQL variables inside your embedded SQL statements, you will not be able to take full advantage of this enhancement.

Specifically, qualification of variable names will avoid invalidation of program units when new columns are added to a dependent table.

Consider that original, unqualified SELECT statement in set_global:

SELECT COUNT (*)

INTO l_count

FROM employees

WHERE department_id = l_inner AND salary > l_salary;

As of Oracle Database 11g, fine-grained dependency means that the database will note that the scope_demo package is dependent only on department_id and salary.

Now suppose that the DBA adds a column to the employees table. Since there are unqualified references to PL/SQL variables in the SELECT statement, it is possible that the new column name will change the dependency information for this package. Namely, if the new column name is the same as an unqualified reference to a PL/SQL variable, the database will now resolve that reference to the column name. Thus, the database will need to update the dependency information for scope_demo, which means that it needs to invalidate the package.

If, conversely, you do qualify references to all your PL/SQL variables inside embedded SQL statements, then when the database compiles your program unit, it knows that there is no possible ambiguity. Even when columns are added, the program unit will remain VALID.

Note that the INTO list of a query is not actually a part of the SQL statement. As a result, variables in that list do not persist into the SQL statement that the PL/SQL compiler derives. Consequently, qualifying (or not qualifying) that variable with its scope name will have no bearing on the database’s dependency analysis.
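As an illustration of full qualification, here is a sketch that uses a hypothetical standalone procedure, count_high_earners, rather than the actual scope_demo code. It assumes an employees table with department_id and salary columns. Every column is qualified with the table alias, and every PL/SQL variable or parameter used inside the SQL statement is qualified with the name of the procedure:

```sql
CREATE OR REPLACE PROCEDURE count_high_earners (department_id_in IN NUMBER)
IS
   l_salary   NUMBER := 10000;
   l_count    PLS_INTEGER;
BEGIN
   SELECT COUNT (*)
     INTO l_count                 -- INTO target: qualification makes no difference
     FROM employees e
    WHERE e.department_id = count_high_earners.department_id_in
      AND e.salary > count_high_earners.l_salary;

   DBMS_OUTPUT.put_line ('High earners: ' || l_count);
END count_high_earners;
/
```

With references written this way, a new column named l_salary or department_id_in could not capture any identifier in the query, which is what allows the database to leave the program unit VALID when columns are added.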

Remote Dependencies

Server-based PL/SQL immediately becomes invalid whenever there is a change in a local object on which it depends. However, if it depends on an object in a remote database and that object changes, the local database does not attempt to invalidate the calling PL/SQL program in real time. Instead, the local database defers the checking until runtime.

Here is a program that has a remote dependency on the procedure recompute_prices, which lives across the database link findat.ldn.world:

PROCEDURE synch_em_up (tax_site_in IN VARCHAR2, since_in IN DATE)

IS

BEGIN

IF tax_site_in = 'LONDON'

THEN

recompute_prices@findat.ldn.world(cutoff_time => since_in);

END IF;

END;

If you recompile the remote procedure and some time later try to run synch_em_up, you are likely to get an ORA-04062 error with accompanying text such as timestamp (or signature) of package “SCOTT.recompute_prices” has been changed. If your call is still legal, the database will recompile synch_em_up, and if it succeeds, its next invocation should run without error. To understand the database’s remote procedure call behavior, you need to know that the PL/SQL compiler always stores two kinds of information about each referenced remote procedure—its timestamp and its signature:

Timestamp

The most recent date and time (down to the second) when an object’s specification was reconstructed, as given by the TIMESTAMP column in the USER_OBJECTS view. For PL/SQL programs, this is not necessarily the same as the most recent compilation time because it’s possible to recompile an object without reconstructing its specification. (Note that this column holds its value as a character string; despite its name, it is not of the TIMESTAMP datatype.)

Signature

A footprint of the actual shape of the object’s specification. Signature information includes the object’s name and the ordering, datatype family, and mode of each parameter.

So, when I compiled synch_em_up, the database retrieved both the timestamp and the signature of the remote procedure called recompute_prices, and stored a representation of them with the bytecode of synch_em_up.
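If you want to look at the timestamp value yourself, a query along these lines is a reasonable starting point. Run it in the schema that owns the remote procedure; I am assuming here that recompute_prices is a standalone procedure. I show LAST_DDL_TIME alongside TIMESTAMP because the two can drift apart when an object is recompiled without its specification being reconstructed:

```sql
SELECT object_name
     , object_type
     , timestamp                                         -- specification timestamp
     , TO_CHAR (last_ddl_time, 'YYYY-MM-DD:HH24:MI:SS') AS last_ddl
  FROM user_objects
 WHERE object_name = 'RECOMPUTE_PRICES';
```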

How do you suppose the database uses this information at runtime? The model is simple: it uses either the timestamp or the signature, depending on the current value of the parameter REMOTE_DEPENDENCIES_MODE. If that timestamp or signature information, which is stored in the local program’s bytecode, doesn’t match the actual value of the remote procedure at runtime, you get the ORA-04062 error.

Oracle’s default remote dependency mode is the timestamp method, but this setting can sometimes cause unnecessary recompilations. The DBA can change the database’s initialization parameter REMOTE_DEPENDENCIES_MODE, or you can change your session’s setting, like this:

ALTER SESSION SET REMOTE_DEPENDENCIES_MODE = SIGNATURE;

or, inside PL/SQL:

EXECUTE IMMEDIATE 'ALTER SESSION SET REMOTE_DEPENDENCIES_MODE = SIGNATURE';

Thereafter, for the remainder of that session, every PL/SQL program run will use the signature method. As a matter of fact, Oracle’s client-side tools always execute this ALTER SESSION...SIGNATURE command as the first thing they do after connecting to the database, overriding the database setting.

Oracle Corporation recommends using signature mode on client tools like Oracle Forms and timestamp mode on server-to-server procedure calls. Be aware, though, that signature mode can cause false negatives—situations where the runtime engine thinks that the signature hasn’t changed, but it really has—in which case the database does not force an invalidation of a program that calls it remotely. You can wind up with silent computational errors that are difficult to detect and even more difficult to debug. Here are several risky scenarios:

§ Changing only the default value of one of the called program’s formal parameters. The caller will continue to use the old default value.

§ Adding an overloaded program to an existing package. The caller will not bind to the new version of the overloaded program even if it is supposed to.

§ Changing just the name of a formal parameter. The caller may have problems if it uses named parameter notation.

In these cases, you will have to perform a manual recompilation of the caller. In contrast, the timestamp mode, while prone to false positives, is immune to false negatives. In other words, it won’t miss any needed recompilations, but it may force recompilation that is not strictly required. This safety is no doubt why Oracle uses it as the default for server-to-server RPCs.

NOTE

If you do use the signature method, Oracle recommends that you add any new functions or procedures at the end of package specifications because doing so reduces false positives.

In the real world, minimizing recompilations can make a significant difference in application availability. It turns out that you can trick the database into thinking that a local call is really remote so that you can use signature mode. This is done using a loopback database link inside a synonym. Here is an example that assumes you have an Oracle Net service name “localhost” that connects to the local database:

CREATE DATABASE LINK loopback

CONNECT TO bob IDENTIFIED BY swordfish USING 'localhost'

/

CREATE OR REPLACE PROCEDURE volatilecode AS

BEGIN

-- whatever

END;

/

CREATE OR REPLACE SYNONYM volatile_syn FOR volatilecode@loopback

/

CREATE OR REPLACE PROCEDURE save_from_recompile AS

BEGIN

...

volatile_syn;

...

END;

/

To take advantage of this arrangement, your production system would then include an invocation such as this:

BEGIN

EXECUTE IMMEDIATE 'ALTER SESSION SET REMOTE_DEPENDENCIES_MODE=SIGNATURE';

save_from_recompile;

END;

/

As long as you don’t do anything that alters the signature of volatilecode, you can modify and recompile it without invalidating save_from_recompile or causing a runtime error. You can even rebuild the synonym against a different procedure entirely. This approach isn’t completely without drawbacks; for example, if volatilecode outputs anything using DBMS_OUTPUT, you won’t see it unless save_from_recompile retrieves it explicitly over the database link and then outputs it directly. But for many applications, such workarounds are a small price to pay for the resulting increase in availability.

Limitations of Oracle’s Remote Invocation Model

Through Oracle Database 11g Release 2, there is no direct way for a PL/SQL program to use any of the following package constructs on a remote server:

§ Variables (including constants)

§ Cursors

§ Exceptions

This limitation applies not only to client PL/SQL calling the database server, but also to server-to-server RPCs.

The simple workaround for variables is to use “get and set” programs to encapsulate the data. In general, you should be doing that anyway because it is an excellent programming practice. Note, however, that Oracle does not allow you to reference remote package public variables, even indirectly. So if a subprogram simply refers to a package public variable, you cannot invoke the subprogram through a database link.
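Here is a minimal sketch of the get-and-set idea. The package name loan_settings and its programs are hypothetical. Note that the variable lives in the package body, not the specification, so no subprogram refers to a public package variable:

```sql
CREATE OR REPLACE PACKAGE loan_settings
IS
   PROCEDURE set_max_loans (max_loans_in IN NUMBER);

   FUNCTION max_loans RETURN NUMBER;
END loan_settings;
/

CREATE OR REPLACE PACKAGE BODY loan_settings
IS
   g_max_loans   NUMBER := 5;   -- private: declared in the body only

   PROCEDURE set_max_loans (max_loans_in IN NUMBER)
   IS
   BEGIN
      g_max_loans := max_loans_in;
   END set_max_loans;

   FUNCTION max_loans RETURN NUMBER
   IS
   BEGIN
      RETURN g_max_loans;
   END max_loans;
END loan_settings;
/

-- A caller on another database would then go through the programs,
-- assuming the package is installed at the other end of the link:
--    l_limit := loan_settings.max_loans@findat.ldn.world;
```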

The workaround for cursors is to encapsulate them with open, fetch, and close subprograms. For example, if you’ve declared a book_cur cursor in the specification of the book_maint package, you could put this corresponding package body on the server:

PACKAGE BODY book_maint

AS

prv_book_cur_status BOOLEAN;

PROCEDURE open_book_cur IS

BEGIN

IF NOT book_maint.book_cur%ISOPEN

THEN

OPEN book_maint.book_cur;

END IF;

END;

FUNCTION next_book_rec RETURN books%ROWTYPE

IS

l_book_rec books%ROWTYPE;

BEGIN

FETCH book_maint.book_cur INTO l_book_rec;

prv_book_cur_status := book_maint.book_cur%FOUND;

RETURN l_book_rec;

END;

FUNCTION book_cur_is_found RETURN BOOLEAN

IS

BEGIN

RETURN prv_book_cur_status;

END;

PROCEDURE close_book_cur IS

BEGIN

IF book_maint.book_cur%ISOPEN

THEN

CLOSE book_maint.book_cur;

END IF;

END;

END book_maint;

Unfortunately, this approach won’t work around the problem of using remote exceptions; the exception “datatype” is treated differently from true datatypes. Instead, you can use the RAISE_APPLICATION_ERROR procedure with a user-defined exception number between −20000 and −20999. See Chapter 6 for a discussion of how to write a package to help your application manage this type of exception.
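The following sketch shows the general shape of that technique. The procedure check_isbn and the error number −20100 are hypothetical choices, and I am reusing the findat.ldn.world link from the earlier example. The remote program raises a numbered error, and the caller maps that number to a locally declared exception:

```sql
-- On the remote database (hypothetical procedure):
CREATE OR REPLACE PROCEDURE check_isbn (isbn_in IN VARCHAR2)
IS
BEGIN
   IF isbn_in IS NULL
   THEN
      RAISE_APPLICATION_ERROR (-20100, 'ISBN must not be null');
   END IF;
END check_isbn;
/

-- On the local database:
DECLARE
   e_bad_isbn   EXCEPTION;
   PRAGMA EXCEPTION_INIT (e_bad_isbn, -20100);   -- same number as the remote side
BEGIN
   check_isbn@findat.ldn.world (NULL);
EXCEPTION
   WHEN e_bad_isbn
   THEN
      DBMS_OUTPUT.put_line ('Remote validation failed: ' || SQLERRM);
END;
/
```

The two sides agree only on a number, not on a shared exception declaration, which is why it pays to manage those numbers centrally.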

Recompiling Invalid Program Units

In addition to becoming invalid when a referenced object changes, a new program may be in an invalid state as the result of a failed compilation. In any event, no PL/SQL program marked as INVALID will run until a successful recompilation changes its status to VALID. Recompilation can happen in one of three ways:

Automatic runtime recompilation

The PL/SQL runtime engine will, under many circumstances, automatically recompile an invalid program unit when that program unit is called.

ALTER...COMPILE recompilation

You can use an explicit ALTER command to recompile the program unit.

Schema-level recompilation

You can use one of many alternative built-ins and custom code to recompile all invalid program units in a schema or database instance.

Automatic runtime recompilation

Since Oracle maintains information about the status of program units compiled into the database, it knows when a program unit is invalid and needs to be recompiled. When a user connected to the database attempts to execute (directly or indirectly) an invalid program unit, the database will automatically attempt to recompile that unit.

You might then wonder: why do we need to explicitly recompile program units at all? There are two reasons:

§ In a production environment, “just in time” recompilation can have a ripple effect, in terms of both performance degradation and cascading invalidations of other database objects. You’ll greatly improve the user experience by recompiling all invalid program units when users are not accessing the application (if at all possible).

§ Recompilation of a program unit that was previously executed by another user connected to the same instance can and usually will result in an error that looks like this:

ORA-04068: existing state of packages has been discarded

ORA-04061: existing state of package "SCOTT.P1" has been invalidated

ORA-04065: not executed, altered or dropped package "SCOTT.P1"

ORA-06508: PL/SQL: could not find program unit being called

This type of error occurs when a package that has state (one or more variables declared at the package level) has been recompiled. All sessions that had previously initialized that package are now out of sync with the newly compiled package. When the database tries to reference or run an element of that package, it cannot “find program unit” and throws an exception.

The solution? Well, you (or the application) could trap the exception and then simply call that same program unit again. The package state will be reset (that’s what the ORA-4068 error message is telling us), and the database will be able to execute the program. Unfortunately, the states of all packages, including DBMS_OUTPUT and other built-in packages, will also have been reset in that session. It is very unlikely that users will be able to continue running the application successfully.

What this means for users of PL/SQL-based applications is that whenever the underlying code needs to be updated (recompiled), all users must stop using the application. That is not an acceptable scenario in today’s world of “always on” Internet-based applications. Oracle Database 11g Release 2 finally addressed this problem by offering support for “hot patching” of application code through the use of edition-based redefinition. This topic is covered briefly at the end of this chapter.

The bottom line on automatic recompilation bears repeating: prior to Oracle Database 11g Release 2, in live production environments, do not do anything that will invalidate or recompile (automatically or otherwise) any stored objects for which sessions might have instantiations that will be referred to again.

Fortunately, development environments don’t usually need to worry about ripple effects, and automatic recompilation outside of production can greatly ease our development efforts. While it might still be helpful to recompile all invalid program units (explored in the following sections), it is not as critical a step.

ALTER...COMPILE recompilation

You can always recompile a program unit that has previously been compiled into the database using the ALTER...COMPILE command. In the case presented earlier, for example, I know by looking in the data dictionary that three program units were invalidated.

To recompile these program units in the hope of setting their status back to VALID, I can issue these commands:

ALTER PACKAGE bookworm COMPILE BODY REUSE SETTINGS;

ALTER PACKAGE book COMPILE BODY REUSE SETTINGS;

ALTER PROCEDURE add_book COMPILE REUSE SETTINGS;

Notice the inclusion of REUSE SETTINGS. This clause ensures that all the compilation settings (optimization level, warnings level, etc.) previously associated with this program unit will remain the same. If you do not include REUSE SETTINGS, then the current settings of the session will be applied upon recompilation.
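To check which settings are currently stored with each program unit (and so what REUSE SETTINGS would preserve), you can query the USER_PLSQL_OBJECT_SETTINGS view. This sketch assumes the three program units named above exist in your schema:

```sql
SELECT name
     , type
     , plsql_optimize_level
     , plsql_code_type
     , plsql_warnings
  FROM user_plsql_object_settings
 WHERE name IN ('BOOKWORM', 'BOOK', 'ADD_BOOK')
 ORDER BY name, type;
```

Running the query before and after a recompilation is a quick way to confirm that the settings did not change.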

Of course, if you have many invalid objects, you will not want to type an ALTER...COMPILE command for each one. You could write a simple query, like the following one, to generate all the ALTER commands:

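SELECT 'ALTER ' || REPLACE (object_type, ' BODY') || ' ' || object_name

|| ' COMPILE' || CASE WHEN object_type LIKE '% BODY' THEN ' BODY' END

|| ' REUSE SETTINGS;'

FROM user_objects

WHERE status = 'INVALID'

AND object_type IN ('FUNCTION', 'PROCEDURE', 'PACKAGE', 'PACKAGE BODY',

'TRIGGER', 'TYPE', 'TYPE BODY')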

But the problem with this “bulk” approach is that as you recompile one invalid object, you may cause many others to be marked INVALID. You are much better off relying on more sophisticated methods for recompiling all invalid program units; these are covered next.

Schema-level recompilation

Oracle offers a number of ways to recompile all invalid program units in a particular schema. Unless otherwise noted, the following utilities must be run from a schema with SYSDBA authority. All files listed here may be found in the $ORACLE_HOME/rdbms/admin directory. The options include:

utlip.sql

Invalidates and recompiles all PL/SQL code and views in the entire database. (Actually, it sets up some data structures, invalidates the objects, and prompts you to restart the database and run utlrp.sql.)

utlrp.sql

Recompiles all of the invalid objects in serial. This is appropriate for single-processor hardware. If you have a multiprocessor machine, you’ll probably want to use utlrcmp.sql instead.

utlrcmp.sql

Like utlrp.sql, recompiles all invalid objects, but in parallel; it works by submitting multiple recompilation requests into the database’s job queue. You can supply the “degree of parallelism” as an integer argument on the command line. If you leave it null or supply 0, then the script will attempt to select the proper degree of parallelism on its own. However, even Oracle warns that this parallel version may not yield dramatic performance results because of write contention on system tables.

DBMS_UTILITY.COMPILE_SCHEMA

This procedure has been around since Oracle8 Database and can be run from any schema; SYSDBA authority is not required. It will recompile program units in the specified schema. Its header is defined as follows:

DBMS_UTILITY.COMPILE_SCHEMA (

schema VARCHAR2

, compile_all BOOLEAN DEFAULT TRUE

, reuse_settings BOOLEAN DEFAULT FALSE

);

Prior to Oracle Database 10g, this utility was poorly designed and often invalidated as many program units as it recompiled to VALID status. Now, it seems to work as one would expect.

UTL_RECOMP

This built-in package, first introduced in Oracle Database 10g, was designed for database upgrades or patches that require significant recompilation. It has two programs, one that recompiles invalid objects serially and one that uses DBMS_JOB to recompile in parallel. To recompile all of the invalid objects in a database instance in parallel, for example, a DBA needs only to run this single command:

UTL_RECOMP.recomp_parallel

The parallel version uses the DBMS_JOB package to queue up the recompile jobs. While it runs, all other jobs in the queue are temporarily disabled to avoid conflicts with the recompilation.

Here is an example of calling the serial version to recompile all invalid objects in the SCOTT schema:

CALL UTL_RECOMP.recomp_serial ('SCOTT');

If you have multiple processors, the parallel version may help you complete your recompilations more rapidly. As Oracle notes in its documentation of this package, however, compilation of stored programs results in updates to many catalog structures and is I/O-intensive; the resulting speedup is likely to be a function of the speed of your disks.

Here is an example of requesting recompilation of all invalid objects in the SCOTT schema, using up to four simultaneous threads for the recompilation steps:

CALL UTL_RECOMP.recomp_parallel (4, 'SCOTT');
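For the DBMS_UTILITY.COMPILE_SCHEMA option described above, which does not need SYSDBA authority, a call might look like the following sketch. I pass FALSE for compile_all so that only invalid program units are recompiled, and TRUE for reuse_settings so that each unit keeps its stored compiler settings. The follow-up query is simply one way to see what is still broken:

```sql
BEGIN
   DBMS_UTILITY.compile_schema (schema           => USER
                              , compile_all      => FALSE
                              , reuse_settings   => TRUE);
END;
/

-- Anything still listed here failed to recompile and needs a closer look.
SELECT object_name, object_type
  FROM user_objects
 WHERE status = 'INVALID'
 ORDER BY object_type, object_name;
```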

NOTE

Solomon Yakobson, an outstanding Oracle DBA and general technologist, has also written a recompile utility that can be used by non-DBAs to recompile all invalid program units in dependency order. It handles stored programs, views (including materialized views), triggers, user-defined object types, and dimensions. You can find the utility in a file named recompile.sql on the book’s website.

AVOIDING THOSE INVALIDATIONS

When a database object’s DDL time changes, the database’s usual modus operandi is to immediately invalidate all of its dependents on the local database.

In Oracle Database 10g and later releases, recompiling a stored program via its original creation script will not invalidate dependents. This feature does not extend to recompiling a program using ALTER...COMPILE or via automatic recompilation, which will invalidate dependents. Note that even if you use a script, the database is very picky; if you change anything in your source code—even just a single letter—that program’s dependents will be marked INVALID.

Compile-Time Warnings

Compile-time warnings can greatly improve the maintainability of your code and reduce the chance that bugs will creep into it. Compile-time warnings differ from compile-time errors; with warnings, your program will still compile and run. You may, however, encounter unexpected behavior or reduced performance as a result of running code that is flagged with warnings.

This section explores how compile-time warnings work and which issues are currently detected. Let’s start with a quick example of applying compile-time warnings in your session.

A Quick Example

A very useful compile-time warning is PLW-06002: Unreachable code. Consider the following program (available in the cantgothere.sql file on the book’s website). Because I have initialized the salary variable to 10,000, the conditional statement will always send me to line 9. Line 7 will never be executed:

/* File on web: cantgothere.sql */

1 PROCEDURE cant_go_there

2 AS

3 l_salary NUMBER := 10000;

4 BEGIN

5 IF l_salary > 20000

6 THEN

7 DBMS_OUTPUT.put_line ('Executive');

8 ELSE

9 DBMS_OUTPUT.put_line ('Rest of Us');

10 END IF;

11 END cant_go_there;

If I compile this code in any release prior to Oracle Database 10g, I am simply told “Procedure created.” If, however, I have enabled compile-time warnings in my session in Oracle Database 10g or later, when I try to compile the procedure I get this response from the compiler:

SP2-0804: Procedure created with compilation warnings

SQL> SHOW err

Errors for PROCEDURE CANT_GO_THERE:

LINE/COL ERROR

-------- --------------------------------------

7/7 PLW-06002: Unreachable code

Given this warning, I can now go back to that line of code, determine why it is unreachable, and make the appropriate corrections.

Enabling Compile-Time Warnings

Oracle allows you to turn compile-time warnings on and off, and also to specify the types of warnings that interest you. There are three categories of warnings:

Severe

Conditions that could cause unexpected behavior or actual wrong results, such as aliasing problems with parameters.

Performance

Conditions that could cause performance problems, such as passing a VARCHAR2 value to a NUMBER column in an UPDATE statement.

Informational

Conditions that do not affect performance or correctness, but that you might want to change to make the code more maintainable.

Oracle lets you enable/disable compile-time warnings for a specific category, for all categories, and even for specific, individual warnings. You can do this with either the ALTER DDL command or the DBMS_WARNING built-in package.

To turn on compile-time warnings in your system as a whole, issue this command:

ALTER SYSTEM SET PLSQL_WARNINGS='string'

The following command, for example, turns on compile-time warnings in your system for all categories:

ALTER SYSTEM SET PLSQL_WARNINGS='ENABLE:ALL';

This is a useful setting to have in place during development because it will catch the largest number of potential issues in your code.

To turn on compile-time warnings in your session for severe problems only, issue this command:

ALTER SESSION SET PLSQL_WARNINGS='ENABLE:SEVERE';

And if you want to alter compile-time warnings settings for a particular, already compiled program, you can issue a command like this:

ALTER PROCEDURE hello COMPILE PLSQL_WARNINGS='ENABLE:ALL' REUSE SETTINGS;

NOTE

Be sure to include REUSE SETTINGS so that all other settings (such as the optimization level) are not affected by the ALTER command.

You can tweak your settings with a very high level of granularity by combining different options. For example, suppose that I want to see all performance-related issues, that I will not concern myself with severe issues for the moment, and that I would like the compiler to treat PLW-05005: function exited without a RETURN as a compile error. I would then issue this command:

ALTER SESSION SET PLSQL_WARNINGS=

'DISABLE:SEVERE'

,'ENABLE:PERFORMANCE'

,'ERROR:05005';

I especially like this “treat as error” option. Consider the PLW-05005: function returns without value warning. If I leave PLW-05005 simply as a warning, then when I compile my no_return function, shown next, the program does compile, and I can use it in my application:

SQL> CREATE OR REPLACE FUNCTION no_return

2 RETURN VARCHAR2

3 AS

4 BEGIN

5 DBMS_OUTPUT.PUT_LINE (

6 'Here I am, here I stay');

7 END no_return;

8 /

SP2-0806: Function created with compilation warnings

SQL> SHOW ERR

Errors for FUNCTION NO_RETURN:

LINE/COL ERROR

-------- -----------------------------------------------------------------

1/1 PLW-05005: function NO_RETURN returns without value at line 7

If I now alter the treatment of that error with the ALTER SESSION command just shown and then recompile no_return, the compiler stops me in my tracks:

Warning: Function altered with compilation errors

By the way, I could also change the settings for that particular program only, to flag this warning as a “hard” error, with a command like this:

ALTER FUNCTION no_return COMPILE PLSQL_WARNINGS = 'ERROR:5005' REUSE SETTINGS

/

You can, in each of these variations of the ALTER command, also specify ALL as a quick and easy way to refer to all compile-time warning categories, as in:

ALTER SESSION SET PLSQL_WARNINGS='ENABLE:ALL';

Oracle also offers the DBMS_WARNING package, which exposes the same capabilities to set and change compile-time warning settings through a PL/SQL API. DBMS_WARNING goes beyond the ALTER command, allowing you to make changes to those warning controls that you care about while leaving all the others intact. You can also easily restore the original settings when you’re done.

DBMS_WARNING was designed to be used in install scripts in which you might need to disable a certain warning, or treat a warning as an error, for individual program units being compiled. You might not have any control over the scripts surrounding those for which you are responsible. Each script’s author should be able to set the warning settings he wants, while inheriting a broader set of settings from a more global scope.
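Here is a sketch of how an install script might use DBMS_WARNING in that way, written for SQL*Plus. The bind variable saved_warnings and the script name my_install_step.sql are hypothetical. The first block saves the session's current settings and then layers two changes on top of them, the install step compiles its program units, and the last block puts everything back:

```sql
VARIABLE saved_warnings VARCHAR2(4000)

BEGIN
   -- Remember whatever the enclosing scripts have already set up.
   :saved_warnings := DBMS_WARNING.get_warning_setting_string;

   -- Adjust only the controls this script cares about.
   DBMS_WARNING.add_warning_setting_cat ('PERFORMANCE', 'ENABLE', 'SESSION');
   DBMS_WARNING.add_warning_setting_num (5005, 'ERROR', 'SESSION');
END;
/

-- Compile this script's program units here (hypothetical file name).
@my_install_step.sql

BEGIN
   -- Restore the settings that were in effect on entry.
   DBMS_WARNING.set_warning_setting_string (:saved_warnings, 'SESSION');
END;
/
```

Because the changes are additive, any categories enabled by an outer script stay enabled while this step runs.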

Some Handy Warnings

In the following sections, I present a subset of all the warnings Oracle has implemented, with an example of the type of code that will elicit the warning and descriptions of interesting behavior (where present) in the way that Oracle has implemented compile-time warnings.

To see the full list of warnings available in any given Oracle version, search for the PLW section of the Error Messages book of the Oracle documentation set.

PLW-05000: Mismatch in NOCOPY qualification between specification and body

The NOCOPY compiler hint tells the Oracle database that, if possible, you would like it to not make a copy of your OUT and IN OUT arguments. This can improve the performance of programs that pass large data structures, such as collections or CLOBs.

You need to include the NOCOPY hint in both the specification and the body of your program (relevant for packages and object types). If the hint is not present in both, the database will apply whatever is declared in the specification.

Here is an example of code that will generate this warning:

/* File on web: plw5000.sql */

PACKAGE plw5000

IS

TYPE collection_t IS

TABLE OF VARCHAR2 (100);

PROCEDURE proc (

collection_in IN OUT NOCOPY

collection_t);

END plw5000;

PACKAGE BODY plw5000

IS

PROCEDURE proc (

collection_in IN OUT

collection_t)

IS

BEGIN

DBMS_OUTPUT.PUT_LINE ('Hello!');

END proc;

END plw5000;

Compile-time warnings will display as follows:

SQL> SHOW ERRORS PACKAGE BODY plw5000

Errors for PACKAGE BODY PLW5000:

LINE/COL ERROR

-------- -----------------------------------------------------------------

3/20 PLW-05000: mismatch in NOCOPY qualification between specification

and body

3/20 PLW-07203: parameter 'COLLECTION_IN' may benefit from use of the

NOCOPY compiler hint
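One way to resolve the mismatch is to repeat the hint in the body so that it agrees with the specification. This is only a sketch of the corrected body (the CREATE OR REPLACE wrapper is added here so it can be run as-is); with NOCOPY present in both places, neither of the warnings shown above should be raised:

```sql
CREATE OR REPLACE PACKAGE BODY plw5000
IS
   -- The parameter declaration now matches the specification exactly,
   -- including the NOCOPY hint.
   PROCEDURE proc (
      collection_in IN OUT NOCOPY
         collection_t)
   IS
   BEGIN
      DBMS_OUTPUT.PUT_LINE ('Hello!');
   END proc;
END plw5000;
/
```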

PLW-05001: Previous use of ‘string’ (at line string) conflicts with this use

This warning will make itself heard when you have declared more than one variable or constant with the same name. It can also pop up if the parameter list of a program defined in a package specification is different from that of the definition in the package body.

You may be saying to yourself: I’ve seen that error before, but it is a compilation error, not a warning. And, in fact, you are right, in that the following program simply will not compile:

/* File on web: plw5001.sql */

PROCEDURE plw5001

IS

a BOOLEAN;

a PLS_INTEGER;

BEGIN

a := 1;

DBMS_OUTPUT.put_line ('Will not compile');

END plw5001;

You will receive the following compile error: PLS-00371: at most one declaration for ‘A’ is permitted in the declaration section.

So why is there a warning for this situation? Consider what happens when I remove the assignment to the variable named a:

SQL> CREATE OR REPLACE PROCEDURE plw5001

2 IS

3 a BOOLEAN;

4 a PLS_INTEGER;

5 BEGIN

6 DBMS_OUTPUT.put_line ('Will not compile?');

7 END plw5001;

8 /

Procedure created.

The program compiles! The database does not flag the PLS-00371 because I have not actually used either of the variables in my code. The PLW-05001 warning fills that gap by giving me a heads up if I have declared, but not yet used, variables with the same name, as you can see here:

SQL> ALTER PROCEDURE plw5001 COMPILE plsql_warnings = 'enable:all';

SP2-0805: Procedure altered with compilation warnings

SQL> SHOW ERRORS

Errors for PROCEDURE PLW5001:

LINE/COL ERROR

-------- -----------------------------------------------------------------

4/4 PLW-05001: previous use of 'A' (at line 3) conflicts with this use
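Since a duplicate declaration is almost always a mistake, you might prefer to have the compiler reject it outright. As a sketch, following the same pattern used earlier for treating a warning as an error, and assuming you want this behavior for plw5001 only:

```sql
-- Promote PLW-05001 to a compile error for this one procedure.
ALTER PROCEDURE plw5001 COMPILE PLSQL_WARNINGS = 'ERROR:5001' REUSE SETTINGS;
```

With this setting in place, I would expect the recompilation to fail rather than merely warn, even though neither variable is referenced.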

PLW-05003: Same actual parameter (string and string) at IN and NOCOPY may have side effects

When you use NOCOPY with an IN OUT parameter, you are asking PL/SQL to pass the argument by reference, rather than by value. This means that any changes to the argument are made immediately to the variable in the outer scope. “By value” behavior (NOCOPY is not specified or the compiler ignores the NOCOPY hint), on the other hand, dictates that changes within the program are made to a local copy of the IN OUT parameter. When the program terminates, these changes are then copied to the actual parameter. (If an error occurs, the changed values are not copied back to the actual parameter.)

Use of the NOCOPY hint increases the possibility that you will run into the issue of argument aliasing, in which two different names point to the same memory location. Aliasing can be difficult to understand and debug; a compile-time warning that catches this situation will come in very handy.

Consider this program:

/* File on web: plw5003.sql */

PROCEDURE very_confusing (

arg1 IN VARCHAR2

, arg2 IN OUT VARCHAR2

, arg3 IN OUT NOCOPY VARCHAR2

)

IS

BEGIN

arg2 := 'Second value';

DBMS_OUTPUT.put_line ('arg2 assigned, arg1 = ' || arg1);

arg3 := 'Third value';

DBMS_OUTPUT.put_line ('arg3 assigned, arg1 = ' || arg1);

END;

It’s a simple enough program: pass in three strings, two of which are IN OUT; assign values to those IN OUT arguments; and display the value of the first (IN) argument after each assignment.

Now I will run this procedure, passing the very same local variable as the argument for each of the three parameters:

SQL> DECLARE

2 str VARCHAR2 (100) := 'First value';

3 BEGIN

4 DBMS_OUTPUT.put_line ('str before = ' || str);

5 very_confusing (str, str, str);

6 DBMS_OUTPUT.put_line ('str after = ' || str);

7 END;

8 /

str before = First value

arg2 assigned, arg1 = First value

arg3 assigned, arg1 = Third value

str after = Second value

Notice that while very_confusing is still running, the value of the arg1 argument was not affected by the assignment to arg2. Yet when I assigned a value to arg3, the value of arg1 (an IN argument) was changed to “Third value”! Furthermore, when very_confusing terminated, the assignment to arg2 was applied to the str variable. Thus, when control returned to the outer block, the value of the str variable was set to “Second value”, effectively writing over the assignment of “Third value”.

As I said earlier, parameter aliasing can be very confusing. So, if you enable compile-time warnings, programs such as plw5003 may be revealed to have potential aliasing problems:

SQL> CREATE OR REPLACE PROCEDURE plw5003

2 IS

3 str VARCHAR2 (100) := 'First value';

4 BEGIN

5 DBMS_OUTPUT.put_line ('str before = ' || str);

6 very_confusing (str, str, str);

7 DBMS_OUTPUT.put_line ('str after = ' || str);

8 END plw5003;

9 /

SP2-0804: Procedure created with compilation warnings

SQL> SHOW ERR

Errors for PROCEDURE PLW5003:

LINE/COL ERROR

-------- -----------------------------------------------------------------

6/4 PLW-05003: same actual parameter(STR and STR) at IN and NOCOPY

may have side effects

6/4 PLW-05003: same actual parameter(STR and STR) at IN and NOCOPY

may have side effects
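A straightforward way to avoid the aliasing is to pass a distinct variable for each parameter. The following is a sketch only: the procedure name plw5003_no_alias and the locals l_second and l_third are hypothetical, introduced just for this illustration.

```sql
CREATE OR REPLACE PROCEDURE plw5003_no_alias
IS
   str        VARCHAR2 (100) := 'First value';
   -- Separate copies for the IN OUT and IN OUT NOCOPY parameters,
   -- so that no two formal parameters share the same actual parameter.
   l_second   VARCHAR2 (100) := str;
   l_third    VARCHAR2 (100) := str;
BEGIN
   DBMS_OUTPUT.put_line ('str before = ' || str);
   very_confusing (str, l_second, l_third);
   DBMS_OUTPUT.put_line ('str after = ' || str);
END plw5003_no_alias;
/
```

Because str is now passed only as the IN argument, nothing inside very_confusing can change it, and the compiler has no shared actual parameter to warn about.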

PLW-05004: Identifier string is also declared in STANDARD or is a SQL built-in

Many PL/SQL developers are unaware of the STANDARD package and its implications for their PL/SQL code. For example, it is common to find programmers who assume that names like INTEGER and TO_CHAR are reserved words in the PL/SQL language. That is not the case. They are, respectively, a datatype and a function declared in the STANDARD package.

STANDARD is one of the two default packages of PL/SQL (the other is DBMS_STANDARD). Because STANDARD is a default package, you do not need to qualify references to datatypes like INTEGER, NUMBER, PLS_INTEGER, and so on with “STANDARD”—but you could, if you so desired.
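To see that these names really do belong to a package, you can qualify them explicitly. This small block is a sketch for illustration; the variable name l_count is my own:

```sql
DECLARE
   -- INTEGER is a datatype declared in STANDARD, so it can be qualified.
   l_count   STANDARD.INTEGER := 10;
BEGIN
   -- TO_CHAR is a function declared in STANDARD, so it can be qualified too.
   DBMS_OUTPUT.put_line (STANDARD.TO_CHAR (l_count));
END;
/
```

The qualified form is rarely needed, but it remains available even in a scope where you have declared your own element with the same name.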

PLW-05004 notifies you if you happen to have declared an identifier with the same name as an element in STANDARD (or a SQL built-in; most built-ins, but not all, are declared in STANDARD).

Consider this procedure definition:

/* File on web: plw5004.sql */

1 PROCEDURE plw5004

2 IS

3 INTEGER NUMBER;

4

5 PROCEDURE TO_CHAR

6 IS

7 BEGIN

8 INTEGER := 10;

9 END TO_CHAR;

10 BEGIN

11 TO_CHAR;

12 END plw5004;

Compile-time warnings for this procedure will display as follows:

LINE/COL ERROR

-------- -----------------------------------------------------------------

3/4 PLW-05004: identifier INTEGER is also declared in STANDARD

or is a SQL builtin

5/14 PLW-05004: identifier TO_CHAR is also declared in STANDARD

or is a SQL builtin

You should avoid reusing the names of elements defined in the STANDARD package unless you have a very specific reason to do so.

PLW-05005: Function string returns without value at line string

This warning makes me happy. A function that does not return a value is a very badly designed function. This is a warning that I would recommend you ask the database to treat as an error with the “ERROR:5005” syntax in your PLSQL_WARNINGS setting.

You already saw one example of such a function: no_return. That was a very obvious chunk of code; there wasn’t a single RETURN in the entire executable section. Your code will, of course, be more complex. The fact that a RETURN may not be executed could well be hidden within the folds of complex conditional logic.

At least in some of these situations, though, the database will still detect the problem. The following program demonstrates one such situation:

1 FUNCTION no_return (

2 check_in IN BOOLEAN)

3 RETURN VARCHAR2

4 AS

5 BEGIN

6 IF check_in

7 THEN

8 RETURN 'abc';

9 ELSE

10 DBMS_OUTPUT.put_line (

11 'Here I am, here I stay');

12 END IF;

13 END no_return;

Oracle has detected a branch of logic that will not result in the execution of a RETURN, so it flags the program with a warning. The plw5005.sql file on the book’s website contains even more complex conditional logic, demonstrating that the warning is raised for less trivial code structures as well.
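One common way to avoid this warning is to give the function a single RETURN as the last statement of the executable section, so that every branch of logic reaches it. Here is a sketch under that assumption; the function name always_returns and the variable l_return are hypothetical:

```sql
CREATE OR REPLACE FUNCTION always_returns (
   check_in IN BOOLEAN)
   RETURN VARCHAR2
AS
   -- Holds the value to be returned; stays NULL unless a branch sets it.
   l_return   VARCHAR2 (100);
BEGIN
   IF check_in
   THEN
      l_return := 'abc';
   ELSE
      -- Also reached when check_in is NULL.
      DBMS_OUTPUT.put_line ('Here I am, here I stay');
   END IF;

   -- Every path through the IF statement ends up here.
   RETURN l_return;
END always_returns;
/
```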

PLW-06002: Unreachable code

The Oracle database now performs static (compile-time) analysis of your program to determine if any lines of code in your program will never be reached during execution. This is extremely valuable feedback to receive, but you may find that the compiler warns you of this problem on lines that do not, at first glance, seem to be unreachable. In fact, Oracle notes in the description of the action to take for this error that you should “disable the warning if much code is made unreachable intentionally and the warning message is more annoying than helpful.”
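If you do decide to follow that advice, DBMS_WARNING lets you switch off just this one warning without disturbing anything else. A minimal sketch, assuming you want the change for the current session only:

```sql
BEGIN
   -- Disable PLW-06002 for this session; other warning settings are untouched.
   DBMS_WARNING.add_warning_setting_num (warning_number   => 6002
                                       , warning_value    => 'DISABLE'
                                       , scope            => 'SESSION');

   -- Display the setting now in effect for this warning.
   DBMS_OUTPUT.put_line (
      'PLW-06002 is now: ' || DBMS_WARNING.get_warning_setting_num (6002));
END;
/
```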

You already saw an example of this compile-time warning in the section “A Quick Example.” Now consider the following code:

/* File on web: plw6002.sql */

1 PROCEDURE plw6002

2 AS

3 l_checking BOOLEAN := FALSE;

4 BEGIN

5 IF l_checking

6 THEN

7 DBMS_OUTPUT.put_line ('Never here...');

8 ELSE

9 DBMS_OUTPUT.put_line ('Always here...');

10 GOTO end_of_function;

11 END IF;

12 <<end_of_function>>

13 NULL;

14 END plw6002;

In Oracle Database 10g, you will see the following compile-time warnings for this program:

LINE/COL ERROR

-------- ------------------------------

5/7 PLW-06002: Unreachable code

7/7 PLW-06002: Unreachable code

13/4 PLW-06002: Unreachable code

I see why line 7 is marked as unreachable: l_checking is set to FALSE, and so line 7 can never run. But why is line 5 marked unreachable? It seems as though, in fact, that code will always be run! Furthermore, line 13 will always be run as well because the GOTO will direct the flow of execution to that line through the label. Yet it too is tagged as unreachable.

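The reason for this behavior is that prior to Oracle Database 11g, the unreachable code warning was generated after the compiler had optimized the code, so the warnings can reflect the optimizer’s restructured version of the program rather than the source as written. In Oracle Database 11g and later, the analysis of unreachable code is much cleaner and more helpful.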