Statistics Done Wrong: The Woefully Complete Guide (2015)

Chapter 11. Hiding the Data

I’ve talked about the common mistakes made by scientists and how the best way to spot them is with a bit of outside scrutiny. Peer reviewers provide some of this scrutiny, but they don’t have time to extensively reanalyze data and read code for typos—they can check only that the methodology makes sense. Sometimes they spot obvious errors, but subtle problems are usually missed.1

This is one reason why many journals and professional societies require researchers to make their data available to other scientists upon request. Full datasets are usually too large to print in the pages of a journal, and online publication of results is rare—full data is available online for less than 10% of papers published by top journals, with partial publication of select results more common.2 Instead, authors report their results and send the complete data to other scientists only if they ask for a copy. Perhaps they will find an error or a pattern the original scientists missed, or perhaps they can use the data to investigate a related topic. Or so it goes in theory.

Captive Data

In 2005, Jelte Wicherts and colleagues at the University of Amsterdam decided to analyze every recent article in several prominent journals of the American Psychological Association (APA) to learn about their statistical methods. They chose the APA partly because it requires authors to agree to share their data with other psychologists seeking to verify their claims. But six months later, they had received data for only 64 of the 249 studies they sought it for. Almost three-quarters of authors never sent their data.3

Of course, scientists are busy people. Perhaps they simply didn’t have time to compile their datasets, produce documents describing what each variable meant and how it was measured, and so on. Or perhaps their motive was self-preservation; perhaps their data was not as conclusive as they claimed. Wicherts and his colleagues decided to test this. They trawled through all the studies, looking for common errors that could be spotted by reading the paper, such as inconsistent statistical results, misuse of statistical tests, and ordinary typos. At least half of the papers had an error, usually minor, but 15% reported at least one statistically significant result that was significant only because of an error.

Next Wicherts and his colleagues looked for a correlation between these errors and an unwillingness to share data. There was a clear relationship. Authors who refused to share their data were more likely to have committed an error in their paper, and their statistical evidence tended to be weaker.4 Because most authors refused to share their data, Wicherts could not dig for deeper statistical errors, and many more may be lurking.

This is certainly not proof that authors hid their data because they knew their results were flawed or weak; there are many possible confounding factors. Correlation doesn’t imply causation, but it does waggle its eyebrows suggestively and gesture furtively while mouthing, “Look over there.”[19] And the surprisingly high error rates demonstrate why data should be shared. Many errors are not obvious in the published paper and will be noticed only when someone reanalyzes the original data from scratch.

Obstacles to Sharing

Sharing data isn’t always as easy as posting a spreadsheet online, though some fields do facilitate it. There are gene sequencing databases, protein structure databanks, astronomical observation databases, and earth observation collections containing the contributions of thousands of scientists. Medical data is particularly tricky, however, since it must be carefully scrubbed of any information that may identify a patient. And pharmaceutical companies raise strong objections to sharing their data on the grounds that it is proprietary. Consider, for example, the European Medicines Agency (EMA).

In 2007, researchers from the Nordic Cochrane Center sought data from the EMA about two weight-loss drugs. They were conducting a systematic review of the effectiveness of the drugs and knew that the EMA, as the authority in charge of allowing drugs onto the European market, would have trial data submitted by the manufacturers that was perhaps not yet published publicly. But the EMA refused to disclose the data on the grounds that it might “unreasonably undermine or prejudice the commercial interests of individuals or companies” by revealing their trial design methods and commercial plans. They rejected the claim that withholding the data could harm patients.

After three and a half years of bureaucratic wrangling and after reviewing each study report and finding no secret commercial information, the European Ombudsman finally ordered the EMA to release the documents. In the meantime, one of the drugs had been taken off the market because of side effects including serious psychiatric problems.5

Academics use similar justifications to keep their data private. While they aren’t worried about commercial interests, they are worried about competing scientists. Sharing a dataset may mean being beaten to your next discovery by a freeloader who acquired the data, which took you months and thousands of dollars to collect, for free. As a result, it is common practice in some fields to consider sharing data only after it is no longer useful to you—once you have published as many papers about it as you can.

Fear of being scooped is a powerful obstacle in academia, where career advancement depends on publishing many papers in prestigious journals. A junior scientist cannot afford to waste six months of work on a project only to be beaten to publication by someone else. Unlike in basketball, there is no academic credit for assists; if you won’t get coauthor credit, why bother sharing the data with anyone? While this view is incompatible with the broader goal of the rapid advancement of science, it is compelling for working scientists.

Apart from privacy, commercial concerns, and academic competition, there are practical issues preventing data sharing. Data is frequently stored in unusual formats produced by various scientific instruments or analysis packages, and spreadsheet software saves data in proprietary or incompatible formats. (There is no guarantee that your Excel spreadsheet or SPSS data file will be readable 30 years from now, or even by a colleague using different software.) Not all data can easily be uploaded as a spreadsheet anyway—what about an animal behavior study that recorded hours of video or a psychology study supported by hours of interviews? Even if sufficient storage space were found to archive hundreds of hours of video, who would bear the costs and would anyone bother to watch it?

Releasing data also requires researchers to provide descriptions of the data format and measurement techniques—what equipment settings were used, how calibration was handled, and so on. Laboratory organization is often haphazard, so researchers may not have the time to assemble their collection of spreadsheets and handwritten notes; others may not have a way of sharing gigabytes of raw data.

Data Decay

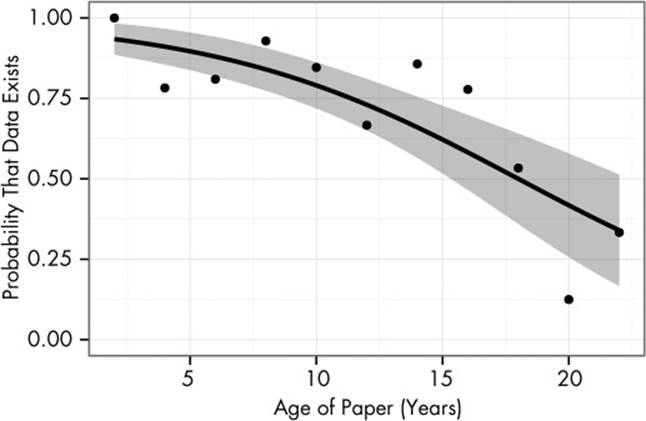

Another problem is the difficulty of keeping track of data as computers are replaced, technology goes obsolete, scientists move to new institutions, and students graduate and leave labs. If the dataset is no longer in use by its creators, they have no incentive to maintain a carefully organized personal archive of datasets, particularly when data has to be reconstructed from floppy disks and filing cabinets. One study of 516 articles published between 1991 and 2011 found that the probability of data being available decayed over time. For papers more than 20 years old, fewer than half of datasets were available.6,7 Some authors could not be contacted because their email addresses had changed; others replied that they probably had the data, but it was on a floppy disk and they no longer had a floppy drive, or that the data had been on a stolen computer or was otherwise lost. The decay is illustrated in Figure 11-1.[20]

Figure 11-1. As papers get older, the probability that their data is still in existence decays. The solid line is a fitted curve, and the gray band is its 95% confidence band; the points indicate the average availability rates for papers at each age. This plot only includes papers for which authors could be contacted.

Various startups and nonprofits are trying to address this problem. Figshare, for instance, allows researchers to upload gigabytes of data, plots, and presentations to be shared publicly in any file format. To encourage sharing, submissions are given a digital object identifier (DOI), a unique ID commonly used to cite journal articles; this makes it easy to cite the original creators of the data when reusing it, giving them academic credit for their hard work. The Dryad Digital Repository partners with scientific journals to allow authors to deposit data during the article submission process and encourages authors to cite data they have relied on. Dryad promises to convert files to new formats as older formats become obsolete, preventing data from fading into obscurity as programs lose the ability to read it. Dryad also keeps copies of data at several universities to guard against accidental loss.

The eventual goal is to make it easy to get credit for the publication and reuse of your data. If another scientist uses your data to make an important discovery, you can bask in the reflected glory, and citations of your data can be listed in the same way citations of your papers are. With this incentive, scientists may be able to justify the extra work to deposit their datasets online. But will this be enough? Scientific practice changes very slowly. And will anyone bother to check the data for errors?

Just Leave Out the Details

It is difficult to ask for data you do not know exists. Journal articles are often highly abridged summaries of the years of research they report on, and scientists have a natural prejudice toward reporting the parts that worked. If a measurement or test turned out to be irrelevant to the final conclusions, it will be omitted. If several outcomes were measured and one showed statistically insignificant changes during the study, it won’t be mentioned unless the insignificance is particularly interesting.

Journal space limits frequently force the omission of negative results and methodological details. It is not uncommon for major journals to enforce word limits on articles: the Lancet, for example, requires articles to be fewer than 3,000 words, while Science limits articles to 4,500 words and suggests that methods be described in an online supplement to the article. Online-only journals such as PLOS ONE do not need to pay for printing, so they impose no length limits.

Known Unknowns

It’s possible to evaluate studies to see what they left out. Scientists leading medical trials are required to provide detailed study plans to ethical review boards before starting a trial, so one group of researchers obtained a collection of these protocols from a Danish review board.8 The protocols specify how many patients will be recruited, what outcomes will be measured, how missing data (such as patient dropouts or accidental sample losses) will be handled, what statistical analyses will be performed, and so on. Many study protocols had important missing details, however, and few published papers matched the protocols.

We have seen how important it is for studies to collect a sufficiently large sample of data, and most of the ethical review board filings detailed the calculations used to determine an appropriate sample size. However, less than half of the published papers described the sample-size calculation in detail. It also appears that recruiting patients for clinical trials is difficult—half of the studies recruited different numbers of patients than they intended to, and sometimes the researchers did not explain why this happened or what impact it may have on the results.

Worse, many of the scientists omitted results. The review board filings listed outcomes that would be measured by each study: side-effect rates, patient-reported symptoms, and so on. Statistically significant changes in these outcomes were usually reported in published papers, but statistically insignificant results were omitted, as though the researchers had never measured them. Obviously, this leads to hidden multiple comparisons. A study may monitor many outcomes but report only the few that are statistically significant. A casual reader would never know that the study had monitored the insignificant outcomes. When surveyed, most of the researchers denied omitting outcomes, but the review board filings belied their claims. Every paper written by a researcher who denied omitting outcomes had, in fact, left some outcomes unreported.
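To see why unreported outcomes amount to hidden multiple comparisons, consider a rough sketch of how quickly spurious findings accumulate. It assumes that every outcome is tested independently at a significance level of 0.05 and that none of the outcomes has a real effect; both are simplifying assumptions, and the function name is made up for illustration.

```python
def chance_of_false_positive(num_outcomes: int, alpha: float = 0.05) -> float:
    """Probability that at least one of num_outcomes independent tests comes
    out significant at level alpha when no outcome has a real effect."""
    return 1.0 - (1.0 - alpha) ** num_outcomes


# Outcome counts below are hypothetical, chosen only to show the trend.
for k in (1, 5, 10, 20):
    rate = chance_of_false_positive(k)
    print(f"{k:2d} outcomes monitored: {rate:.0%} chance of at least one spurious result")
```

Under these assumptions, a study that monitors 10 outcomes has roughly a 40% chance of producing at least one "significant" result by luck alone. A reader who sees only the significant outcome has no way to apply that discount.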

Outcome Reporting Bias

In medicine, the gold standard of evidence is a meta-analysis of many well-conducted randomized trials. The Cochrane Collaboration, for example, is an international group of volunteers that systematically reviews published randomized trials about various issues in medicine and then produces a report summarizing current knowledge in the field and the treatments and techniques best supported by the evidence. These reports have a reputation for comprehensive detail and methodological scrutiny.

However, if boring results never appear in peer-reviewed publications or are shown in insufficient detail to be of use, the Cochrane researchers will never include them in reviews, causing what is known as outcome reporting bias, where systematic reviews become biased toward more extreme and more interesting results. If the Cochrane review is to cover the use of a particular steroid drug to treat pregnant women entering labor prematurely, with the target outcome of interest being infant mortality rates, it’s no good if some of the published studies collected mortality data but didn’t describe it in any detail because it was statistically insignificant.[21]

A systematic review of Cochrane systematic reviews revealed that more than a third are probably affected by outcome reporting bias. Reviewers sometimes did not realize outcome reporting bias was present, instead assuming the outcome simply hadn’t been measured. It’s impossible to exactly quantify how a review’s results would change if unpublished results were included, but by their estimate, a fifth of statistically significant review results could become insignificant, and a quarter could have their effect sizes decrease by 20% or more.9

Other reviews have found similar problems. Many studies suffer from missing data. Some patients drop out or do not appear for scheduled checkups. While researchers frequently note that data was missing, they frequently do not explain why or describe how patients with incomplete data were handled in the analysis, though missing data can cause biased results (if, for example, those with the worst side effects drop out and aren’t counted).10 Another review of medical trials found that most studies omit important methodological details, such as stopping rules and power calculations, with studies in small specialist journals faring worse than those in large general medicine journals.11

Medical journals have begun to combat this problem by coming up with standards, such as the CONSORT checklist, which requires reporting of statistical methods, all measured outcomes, and any changes to the trial design after it began. Authors are required to follow the checklist’s requirements before submitting their studies, and editors check to make sure all relevant details are included. The checklist seems to work; studies published in journals that follow the guidelines tend to report more essential detail, though not all of it.12 Unfortunately, the standards are inconsistently applied, and studies often slip through with missing details.13 Journal editors will need to make a greater effort to enforce reporting standards.

Of course, underreporting is not unique to medicine. Two-thirds of academic psychologists admit to sometimes omitting some outcome variables in their papers, creating outcome reporting bias. Psychologists also often report on several experiments in the same paper, testing the same phenomenon from different angles, and half of psychologists admit to reporting only the experiments that worked. These practices persist even though most survey respondents agreed they are probably indefensible.14

In biological and biomedical research, the problem often isn’t reporting of patient enrollment or power calculations. The problem is the many chemicals, genetically modified organisms, specially bred cell lines, and antibodies used in experiments. Results can be strongly dependent on these factors, but many journals do not have reporting guidelines for these factors, and the majority of chemicals and cells referred to in biomedical papers are not uniquely identifiable, even in journals with strict reporting requirements.15 Attempt to replicate the findings, as the Bayer and Amgen researchers mentioned earlier did, and you may find it difficult to accurately reproduce the experiment. How can you replicate an immunology paper when it does not state which antibodies to order from the supplier?[22]

We see that published papers aren’t faring very well. What about unpublished studies?

Science in a Filing Cabinet

Earlier you saw the impact of multiple comparisons and truth inflation on study results. These problems arise when studies make numerous comparisons with low statistical power, giving a high rate of false positives and inflated estimates of effect sizes, and they appear everywhere in published research.

But not every study is published. We only ever see a fraction of medical research, for instance, because few scientists bother publishing “We Tried This Medicine and It Didn’t Seem to Work.” In addition, editors of prestigious journals must maintain their reputation for groundbreaking results, and peer reviewers are naturally prejudiced against negative results. When presented with papers with identical methods and writing, reviewers grade versions with negative results more harshly and detect more methodological errors.16

Unpublished Clinical Trials

Consider an example: studies of the tumor suppressor protein TP53 and its effect on head and neck cancer. A number of studies suggested that measurements of TP53 could be used to predict cancer mortality rates since it serves to regulate cell growth and development and hence must function correctly to prevent cancer. When all 18 published studies on TP53 and cancer were analyzed together, the result was a highly statistically significant correlation. TP53 could clearly be measured to tell how likely a tumor is to kill you.

But then suppose we dig up unpublished results on TP53: data that had been mentioned in other studies but not published or analyzed. Add this data to the mix, and the statistically significant effect vanishes.17 After all, few authors bothered to publish data showing no correlation, so the meta-analysis could use only a biased sample.
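A toy simulation can show how a biased sample of studies manufactures an effect. This is only a sketch with made-up numbers, not the TP53 data: it assumes the true effect is exactly zero, that every study has the same standard error, and that only studies with a significant positive result get published. The function name and parameters are hypothetical.

```python
import random
from statistics import mean


def simulate_literature(num_studies, true_effect, std_error, seed=0):
    """Draw one effect estimate per study and return (all estimates,
    published estimates). A study counts as published only if its estimate
    is positive and significant at roughly the 5% level (z > 1.96)."""
    rng = random.Random(seed)
    estimates = [rng.gauss(true_effect, std_error) for _ in range(num_studies)]
    published = [e for e in estimates if e / std_error > 1.96]
    return estimates, published


# Hypothetical literature: 2,000 studies of an effect that does not exist.
all_estimates, published = simulate_literature(2000, true_effect=0.0, std_error=0.2)

# With equal standard errors, the plain mean is the pooled estimate.
print(f"studies run: {len(all_estimates)}, published: {len(published)}")
print(f"pooled effect, all studies:    {mean(all_estimates):+.3f}")
print(f"pooled effect, published only: {mean(published):+.3f}")
```

Pooling every study recovers an effect near zero, while pooling only the published ones yields a clearly positive effect, because the selection rule guarantees that each published estimate exceeds 1.96 standard errors. Adding the file-drawer studies back in makes the effect vanish, which is the pattern described above.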

A similar study looked at reboxetine, an antidepressant sold by Pfizer. Several published studies suggested it was effective compared to a placebo, leading several European countries to approve it for prescription to depressed patients. The German Institute for Quality and Efficiency in Health Care, responsible for assessing medical treatments, managed to get unpublished trial data from Pfizer—three times more data than had ever been published—and carefully analyzed it. The result: reboxetine is not effective. Pfizer had convinced the public that it was effective only by neglecting to mention the studies showing it wasn’t.18

A similar review of 12 other antidepressants found that of studies submitted to the United States Food and Drug Administration during the approval process, the vast majority of negative results were never published or, less frequently, were published to emphasize secondary outcomes.19 (For example, if a study measured both depression symptoms and side effects, the insignificant effect on depression might be downplayed in favor of significantly reduced side effects.) While the negative results are available to the FDA to make safety and efficacy determinations, they are not available to clinicians and academics trying to decide how to treat their patients.

This problem is commonly known as publication bias, or the file drawer problem. Many studies sit in a file drawer for years, never published, despite the valuable data they could contribute. Or, in many cases, studies are published but omit the boring results. If they measured multiple outcomes, such as side effects, they might simply say an effect was “insignificant” without giving any numbers, omit mention of the effect entirely, or quote effect sizes but no error bars, giving no information about the strength of the evidence.

As worrisome as this is, the problem isn’t simply the bias on published results. Unpublished results lead to a duplication of effort—if other scientists don’t know you’ve done a study, they may well do it again, wasting money and effort. (I have heard scientists tell stories of talking at a conference about some technique that didn’t work, only to find that several scientists in the room had already tried the same thing but not published it.) Funding agencies will begin to wonder why they must support so many studies on the same subject, and more patients and animals will be subjected to experiments.

Spotting Reporting Bias

It is possible to test for publication and outcome reporting bias. If a series of studies has been conducted on a subject and a systematic review has estimated an effect size from the published data, you can easily calculate the power of each individual study in the review.[23] Suppose, for example, that the effect size is 0.8 (on some arbitrary scale), but the review was composed of many small studies that each had a power of 0.2. You would expect only about 20% of the studies to detect the effect, but you may find that 90% or more of the published studies found it because the rest were tossed in the bin.20
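The arithmetic behind this check can be sketched with a binomial tail probability. The example assumes a hypothetical review of 10 studies, each with a power of 0.2 and all measuring the same effect (the caveat in the footnote), and asks how likely it is that 9 or more would reach significance if nothing had been left out. It is a simplified illustration, not the exact procedure used in the cited papers.

```python
from math import comb


def prob_at_least(successes: int, studies: int, power: float) -> float:
    """P(X >= successes) for X ~ Binomial(studies, power): the chance that at
    least `successes` of `studies` equally powered studies detect the effect."""
    return sum(
        comb(studies, k) * power**k * (1 - power) ** (studies - k)
        for k in range(successes, studies + 1)
    )


studies = 10   # hypothetical number of studies in the review
power = 0.2    # power of each study, as in the example above
observed = 9   # 90% of the studies report a significant result

expected = studies * power
p = prob_at_least(observed, studies, power)
print(f"expected significant studies: {expected:.0f} of {studies}")
print(f"chance of {observed} or more by luck alone: {p:.2e}")
```

With these numbers, about 2 significant studies are expected, and the chance of seeing 9 or more is roughly 4 in a million. A success rate that far above what the power allows suggests that unsuccessful studies or outcomes went unreported.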

This test has been used to discover worrisome bias in the publication of neurological studies of animal experimentation.21 Animal testing is ethically justified on the basis of its benefits to the progress of science and medicine, but evidence of strong outcome reporting bias suggests that many animals have been used in studies that went unpublished, adding nothing to the scientific record.

The same test has been used in a famous controversy in psychology: Daryl Bem’s 2011 research claiming evidence for “anomalous retroactive influences on cognition and affect,” or psychic prediction of the future. It was published in a reputable journal after peer review but predictably received negative responses from skeptical scientists immediately after publication. Several subsequent papers pointed out flaws in his analysis and proposed alternative statistical approaches that gave more reasonable results. Some of these are too technically detailed to cover here, but one is directly relevant.

Gregory Francis wondered whether Bem had gotten his good results through publication bias. Knowing his findings would not be readily believed, Bem published not one but 10 different experiments in the same study, with 9 showing statistically significant psychic powers. This would seem to be compelling, but only if there weren’t numerous unreported studies that found no psychic powers. Francis found that Bem’s success rate did not match his statistical power—it was the result of publication bias, not extrasensory perception.22

Francis published a number of similar papers criticizing other prominent studies in psychology, accusing them of obvious publication bias. He apparently trawled through the psychological literature, testing papers until he found evidence of publication bias. This continued until someone noticed the irony.23 A debate still rages in the psychological literature over the impact of publication bias on publications about publication bias.

Forced Disclosure

Regulators and scientific journals have attempted to halt publication bias. The Food and Drug Administration requires certain kinds of clinical trials to be registered through its website ClinicalTrials.gov before the trials begin, and it requires the publication of summary results on the ClinicalTrials.gov website within a year of the end of the trial. To help enforce registration, the International Committee of Medical Journal Editors announced in 2005 that it would not publish studies that had not been preregistered.

Compliance has been poor. A random sample of all clinical trials registered from June 2008 to June 2009 revealed that more than 40% of protocols were registered after the first study participants had been recruited, with the median delinquent study registered 10 months late.24 This clearly defeats the purpose of requiring advance registration. Fewer than 40% of protocols clearly specified the primary outcome being measured by the study, the time frame over which it would be measured, and the technique used to measure it, which is unfortunate given that the primary outcome is the purpose of the study.

Similarly, reviews of registered clinical trials have found that only about 25% obey the law that requires them to publish results through ClinicalTrials.gov.25,26 Another quarter of registered trials have no results published anywhere, in scientific journals or in the registry.27 It appears that despite the force of law, most researchers ignore the ClinicalTrials.gov results database and publish in academic journals or not at all; the Food and Drug Administration has not fined any drug companies for noncompliance, and journals have not consistently enforced the requirement to register trials.5 Most peer reviewers do not check trial registers for discrepancies with manuscripts under review, believing this is the responsibility of the journal editors, who do not check either.28

Of course, these reporting and registration requirements do not apply to other scientific fields. Researchers in fields such as psychology have suggested encouraging registration by prominently labeling preregistered studies, but such efforts have not taken off.29 Other suggestions include allowing peer review of study protocols in advance, with the journal deciding to accept or reject the study before data has been collected; acceptance can be based only on the quality of the study’s design, rather than its results. But this is not yet widespread. Many studies simply vanish.

TIPS

§ Register protocols in public databases, such as ClinicalTrials.gov, the EU Clinical Trials Register (http://www.clinicaltrialsregister.eu), or any other public registry. The World Health Organization keeps a list at its International Clinical Trials Registry Platform website (http://www.who.int/ictrp/en/), and the SPIRIT checklist (http://www.spirit-statement.org/) lists what should be included in a protocol. Post summary results whenever possible.

§ Document any deviations from the trial protocol and discuss them in your published paper.

§ Make all data available when possible, through specialized databases such as GenBank and PDB or through generic data repositories such as Dryad and Figshare.

§ Publish your software source code, Excel workbooks, or analysis scripts used to analyze your data. Many journals will let you submit these as supplementary material with your paper, or you can use Dryad and Figshare.

§ Follow reporting guidelines in your field, such as CONSORT for clinical trials, STROBE for observational studies in epidemiology, ARRIVE for animal experiments, or STREGA for gene association studies. The EQUATOR Network (http://www.equator-network.org/) maintains lists of guidelines for various fields in medicine.

§ If you obtain negative results, publish them! Some journals may reject negative results as uninteresting, so consider open-access electronic-only journals such as PLOS ONE or Trials, which are peer-reviewed but do not reject studies for being uninteresting. Negative data can also be posted on Figshare.

[19] Joke shamelessly stolen from the alternate text of http://xkcd.com/552/.

[20] The figure was produced using code written by the authors of the study, who released it into the public domain and deposited it with their data in the Dryad Digital Repository. Their results will likely last longer than those of the studies they investigated.

[21] The Cochrane Collaboration logo is a chart depicting the results of studies on corticosteroids given to women entering labor prematurely. The studies on their own were statistically insignificant, but when the data was aggregated, it was clear the treatment would save lives. This remained undiscovered for years because nobody did a comprehensive review to combine available data.

[22] I am told that even with the right materials, biological experiments can be fiendishly difficult to reproduce because they are sensitive to tiny variations in experimental setup. But that’s not an excuse—it’s a serious problem. How can we treat a result as general when it worked only once?

[23] Note that this would not work if each study were truly measuring a different effect because of some systematic differences in how the studies were conducted. Estimating their true power would be much more difficult in that case.
