Expert MySQL: Second Edition (2012)

Part I. Getting Started with MySQL Development

Chapter 2. The Anatomy of a Database System

While you may know the basics of a relational database management system (RDBMS) and be an expert at administering the system, you may have never explored the inner workings of a database system. Most of us have been trained on and have experience with managing database systems, but neither academic nor professional training includes much about the way database systems are constructed. A database professional may never need this knowledge, but it is good to know how the system works so that you can understand how best to optimize your server and even how best to utilize its features.

Although understanding the inner workings of an RDBMS isn’t necessary for hosting databases or even maintaining the server or developing applications that use the system, knowing how the system is organized is essential to being able to modify and extend its features. It is also important to grasp the basic principles of the most popular database systems to understand how these systems compare to an RDBMS.

This chapter covers the basics of the subsystems that RDBMSs contain and how they are constructed. I use the anatomy of the MySQL system to illustrate the key components of modern RDBMSs. Those of you who have studied the inner workings of such systems and want to jump ahead to a look at the architecture of MySQL can skip to “The MySQL Database System.”

Types of Database Systems

Most database professionals work with RDBMSs, but several other types of database systems are becoming popular. The following sections present a brief overview of the three most popular types of database systems: object-oriented, object-relational, and relational. It is important to understand the architectures and general features of these systems to fully appreciate the opportunity that Oracle has provided by developing MySQL as open source software and exposing the source code for the system to everyone. This permits me to show you what’s going on inside the box.

Object-Oriented Database Systems

Object-oriented database systems (OODBSs) are storage-and-retrieval mechanisms that support the object-oriented programming (OOP) paradigm through direct manipulation of the data as objects. They contain true object-oriented (OO)-type systems that permit objects to persist among applications and usage. Most, however, lack a standard query language1 (access to the data is typically via a programming interface) and therefore are not true database-management systems.

OODBSs are an attractive alternative to RDBMSs, especially in application areas in which the modeling power or performance of RDBMSs to store data as objects in tables is insufficient. These applications maintain large amounts of data that is never deleted, thereby managing the history of individual objects. The most unusual feature of OODBSs is that it provides support for complex objects by specifying both the structure and the operations that can be applied to these objects via an OOP interface.

OODBSs are particularly suitable for modeling the real world as closely as possible without forcing unnatural relationships among and within entities. The philosophy of object orientation offers a holistic as well as a modeling-oriented view of the real world. These views are necessary for dealing with an elusive subject such as modeling temporal change, particularly in adding OO features to structured data. Despite the general availability of numerous open source OODBSs, most are based in part on relational systems that support query-language interfaces and therefore are not truly OODBSs; rather, they operate more like relational databases with OO interfaces. A true OODBS requires access via a programming interface.

Application areas of OO database systems include geographical information systems (GISs), scientific and statistical databases, multimedia systems, picture archiving and communications systems, semantic web solutions, and XML warehouses.

The greatest adaptability of the OODBS is the tailoring of the data (or objects) and its behavior (or methods). Most OODBS system integrators rely on OO methods for describing data and build their solutions with that expressiveness in the design. Thus, object-oriented database systems are built with specific implementations and are not intended to be general purpose or generalized to have statement–response-type interfaces like RDBMSs.

Object-Relational Database Systems

Object-relational database-management systems (ORDBMSs) are an application of OO theory to RDBMSs. ORDBMSs provide a mechanism that permits database designers to implement a structured storage-and-retrieval mechanism for OO data. ORDBMSs provide the basis of the relational model—meaning, integrity, relationships, and so forth—while extending the model to store and retrieve data in an object-centric manner. Implementation is purely conceptual in many cases, because the mapping of OO concepts to relational concepts is tentative at best. The modifications, or extensions, to the relational technologies include modifications to SQL that allow the representation of object types, identity, encapsulation of operations, and inheritance. These are often loosely mapped to relational theory as complex types. Although expressive, the SQL extensions do not permit the true object manipulation and level of control of OODBSs. A popular ORDBMS is ESRI’s ArcGIS Geodatabase environment. Other examples include Illustra, PostgreSQL, and Informix.

The technology used in ORDBMSs is often based on the relational model. Most ORDBMSs are implemented using existing commercial relational database-management systems (RDBMSs) such as Microsoft SQL Server. Since these systems are based on the relational model, they suffer from a conversion problem of translating OO concepts to relational mechanisms. Some of the many problems with using relational databases for object-oriented applications are:

· The OO conceptual model does not map easily to data tables.

· Complex mapping implies complex programs and queries.

· Complex programs imply maintenance problems.

· Complex programs imply reliability problems.

· Complex queries may not be optimized and may result in slow performance.

· The mapping of object concepts to complex types2 is more vulnerable to schema changes than are relational systems.

· OO performance for SELECT ALL...WHERE queries is slower because it involves multiple joins and lookups.

Although these problems seem significant, they are easily mitigated by the application of an OO application layer that communicates between the underlying relational database and the OO application. These application layers permit the translation of objects into structured (or persistent) data stores. Interestingly, this practice violates the concept of an ORDBMS in that you are now using an OO access mechanism to access the data, which is not why ORDBMSs are created. They are created to permit the storage and retrieval of objects in an RDBMS by providing extensions to the query language.

Although ORDBMSs are similar to OODBSs, OODBSs are very different in philosophy. OODBSs try to add database functionality to OO programming languages via a programming interface and platforms. By contrast, ORDBMSs try to add rich data types to RDBMSs using traditional query languages and extensions. OODBSs attempt to achieve a seamless integration with OOP languages. ORDBMSs do not attempt this level of integration and often require an intermediate application layer to translate information from the OO application to the ORDBMS or even the host RDBMS. Similarly, OODBSs are aimed at applications that have as their central engineering perspective an OO viewpoint. ORDBMSs are optimized for large data stores and object-based systems that support large volumes of data (e.g., GIS applications). Last, the query mechanisms of OODBSs are centered on object manipulation using specialized OO query languages. ORDBMS query mechanisms are geared toward fast retrieval of volumes of data using extensions to the SQL standard. Unlike true OODBSs that have optimized query mechanisms, such as Object Description Language (ODL) and Object Query Language (OQL), ORDBMSs use query mechanisms that are extensions of the SQL query language.

The ESRI product suite of GIS applications contains a product called the Geodatabase (shorthand for geographic database), which supports the storage and management of geographic data elements. The Geodatabase is an object-relational database that supports spatial data. It is an example of a spatial database that is implemented as an ORDBMS.

![]() Note Spatial database systems need not be implemented in ORDBMSs or even OODBSs. ESRI has chosen to implement the Geodatabase as an ORDBMS. More important, GIS data can be stored in an RDBMS that has been extended to support spatial data. Behold! That is exactly what has happened with MySQL. Oracle has added a spatial data engine to the MySQL RDBMS.

Note Spatial database systems need not be implemented in ORDBMSs or even OODBSs. ESRI has chosen to implement the Geodatabase as an ORDBMS. More important, GIS data can be stored in an RDBMS that has been extended to support spatial data. Behold! That is exactly what has happened with MySQL. Oracle has added a spatial data engine to the MySQL RDBMS.

Although ORDBMSs are based on relational-database platforms, they also provide some layer of data encapsulation and behavior. Most ORDBMSs are specialized forms of RDBMSs. Those database vendors who provide ORDBMSs often build extensions to the statement-response interfaces by modifying the SQL to contain object descriptors and spatial query mechanisms. These systems are generally built for a particular application and are, like OODBSs, limited in their general use.

Relational Database Systems

An RDBMS is a data storage-and-retrieval service based on the Relational Model of Data as proposed by E. F. Codd in 1970. These systems are the standard storage mechanism for structured data. A great deal of research is devoted to refining the essential model proposed by Codd, as discussed by C. J. Date in The Database Relational Model: A Retrospective Review and Analysis.3 This evolution of theory and practice is best documented in The Third Manifesto.4

The relational model is an intuitive concept of a storage repository (database) that can be easily queried by using a mechanism called a query language to retrieve, update, and insert data. The relational model has been implemented by many vendors because it has a sound systematic theory, a firm mathematical foundation, and a simple structure. The most commonly used query mechanism is Structured Query Language (SQL), which resembles natural language. Although SQL is not included in the relational model, SQL provides an integral part of the practical application of the relational model in RDBMSs.

The data are represented as related pieces of information (attributes) about a certain entity. The set of values for the attributes is formed as a tuple (sometimes called a record). Tuples are then stored in tables containing tuples that have the same set of attributes. Tables can then be related to other tables through constraints on domains, keys, attributes, and tuples.

RECORD OR TUPLE: IS THERE A DIFFERENCE?

Many mistakenly consider record to be a colloquialism for tuple. One important distinction is that a tuple is a set of ordered elements, whereas a record is a collection of related items without a sense of order. The order of the columns is important in the concept of a record, however. Interestingly, in SQL, a result from a query can be a record, whereas in relational theory, each result is a tuple. Many texts use these terms interchangeably, creating a source of confusion for many.

When exploring the MySQL architecture and source code, we will encounter the term record exclusively to describe a row in a result set or a row for a data update. While the record data structure in MySQL is ordered, the resemblance to a tuple ends there.

The query language of choice for most implementations is Structured Query Language (SQL). SQL, proposed as a standard in the 1980s,is currently an industry standard. Unfortunately, many seem to believe SQL is based on relational theory and therefore is a sound theoretical concept. This misconception is perhaps fueled by a phenomenon brought on by industry. Almost all RDBMSs implement some form of SQL. This popularity has mistakenly overlooked the many sins of SQL, including:

· SQL does not support domains as described by the relational model.

· In SQL, tables can have duplicate rows.

· Results (tables) can contain unnamed columns and duplicate columns.

· The implementation of nulls (missing values) by host database systems has been shown to be inconsistent and incomplete. Thus, many incorrectly associate the mishandling of nulls with SQL when in fact, SQL merely returns the results as presented by the database system.5

The technologies used in RDBMSs are many and varied. Some systems are designed to optimize some portion of the relational model or some application of the model to data. Applications of RDBMSs range from simple data storage and retrieval to complex application suites with complex data, processes, and workflows. This could be as simple as a database that stores your compact disc or DVD collection, or a database designed to manage a hotel-reservation system, or even a complex distributed system designed to manage information on the web. As I mentioned inChapter 1, many web and social-media applications implement the LAMP stack whereby MySQL becomes the database for storage of the data hosted.

Relational database systems provide the most robust data independence and data abstraction. By using the concept of relations, RDBMS provide a truly generalized data storage-and-retrieval mechanism. The downside is, of course, that these systems are highly complex and require considerable expertise to build and modify.

In the next section, I’ll present a typical RDBMS architecture and examine each component of the architecture. Later, I’ll examine a particular implementation of an RDBMS (MySQL).

IS MYSQL A RELATIONAL DATABASE SYSTEM?

Many database theorists will tell you that there are very few true RDBMSs in the world. They would also point out that what relational is and is not is largely driven by your definition of the features supported in the database system and not how well the system conforms to Codd’s relational model.

From a pure marketing viewpoint, MySQL provides a great many features considered essential for RDBMSs. These include, but are not limited to, the ability to relate tables to one another using foreign keys, the implementation of a relational algebra query mechanism, and the use of indexing and buffering mechanisms. Clearly, MySQL offers all of these features and more.

So is MySQL an RDBMS? That depends on your definition of relational. If you consider the features and evolution of MySQL, you should conclude that it is indeed an RDBMS. If you adhere to the strict definition of Codd’s relational model, however, you will conclude that MySQL lacks some features represented in the model. But then again, so do many other RDBMSs.

Relational Database System Architecture

An RDBMS is a complex system comprising specialized mechanisms designed to handle all the functions necessary to store and retrieve information. The architecture of an RDBMS has often been compared to that of an operating system. If you consider the use of an RDBMS, specifically as a server to a host of clients, you see that they have a lot in common with operating systems. For example, having multiple clients means the system must support many requests that may or may not read or write the same data or data from the same location (such as a table). Thus, RDBMSs must handle concurrency efficiently. Similarly, RDBMSs must provide fast access to data for each client. This is usually accomplished using file-buffering techniques that keep the most recently or frequently used data in memory for faster access. Concurrency requires memory-management techniques that resemble virtual memory systems in operating systems. Other similarities with operating systems include network communication support and optimization algorithms designed to maximize performance of the execution of queries.

I’ll begin our exploration of the architecture from the point of view of the user from the issuing of queries to the retrieval of data. The following sections are written so that you can skip the ones you are familiar with and read the ones that interest you. I encourage you to read all of the sections, however, as they present a detailed look at how a typical RDBMS is constructed.

Client Applications

Most RDBMS client applications are developed as separate executable programs that connect to the database via a communications pathway (e.g., a network protocol such as sockets or pipes). Some connect directly to the database system via programmatic interfaces, where the database system becomes part of the client application. In this case, we call the database an embedded system. For more information about embedded database systems, see Chapter 6.

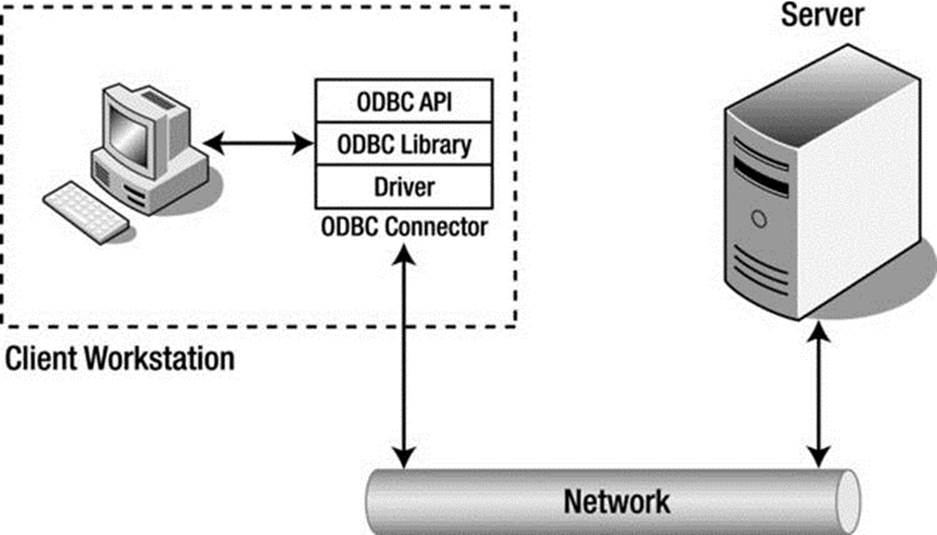

Most systems that connect to the database via a communication pathway do so via a set of protocols called database connectors. Database connectors are most often based on the Open Database Connectivity (ODBC)6 model. MySQL also supports connectors for Java (JDBC), PhP, Python, and Microsoft .NET (see “MySQL Connectors.”). Most implementations of ODBC connectors also support communication over network protocols.

ODBC is a specification for an application-programming interface (API). ODBC is designed to transfer SQL commands to the database server, retrieve the information, and present it to the calling application. An ODBC implementation includes an application designed to use the API that acts as an intermediary with the ODBC library, a core ODBC library that supports the API, and a database driver designed for a specific database system. We typically refer to the set of client access, API, and driver as a connector. Thus, the ODBC connector acts as an “interpreter” between the client application and the database server. ODBC has become the standard for nearly every relational (and most object-relational) database system. Hundreds of connectors and drivers are available for use in a wide variety of clients and database systems.

When we consider client applications, we normally take into account the programs that send and retrieve information to and from the database server. Even the applications we use to configure and maintain the database server are client applications. Most of these utilities connect to the server via the same network pathways as database applications. Some use the ODBC connectors or a variant such as Java Database Connectivity (JDBC). A few use specialized protocols for managing the server for specific administrative purposes. Others, such as phpMyAdmin, use a port or socket.

Regardless of their implementation, client applications issue commands to the database system and retrieve the results of those commands, interpret and process the results, and present them to the user. The standard command language is SQL. Clients issue SQL commands to the server via the ODBC connector, which transmits the command to the database server using the defined network protocols as specified by the driver. A graphical description of this process is shown in Figure 2-1.

Figure 2-1. Client Application/database Server Communication

MYSQL CONNECTORS

Oracle provides several database connectors for developers to enable applications to interact with MySQL. These connectors can be used with applications and tools that are compatible with industry standards, including ODBC and JDBC. This means that any application that works with ODBC or JDBC can use the MySQL connector. The MySQL connectors available for Oracle are:

· Connector/ODBC – standard ODBC connector for Windows, Linux, Mac OS X, and Unix platforms.

· Connector/J[ava] – for Java platforms and development.

· Connector/Net – Windows .Net applications development.

· Connector/MXJ – MBean for embedding the MySQL server in Java applications.

· Connector/C++ – C++ development.

· Connector/C (libmysql) – C applications development.

· Connector/Python – Python applications development.

You can view and download the connectors from at http://www.mysql.com/downloads/connector/

Query Interface

A query language such as SQL is a language (it has a syntax and semantics) that can represent a question posed to a database system. In fact, the use of SQL in database systems is considered one of the major reasons for their success. SQL provides several language groups that form a comprehensive foundation for using database systems. The data definition language (DDL) is used by database professionals to create and manage databases. Tasks include creating and altering tables, defining indexes, and managing constraints. The data manipulation language (DML) is used by database professionals to query and update the data in databases. Tasks include adding and updating data as well as querying the data. These two language groups form the majority of commands that database systems support.

SQL commands are formed using a specialized syntax. The following presents the syntax of a SELECT command in SQL. The notation depicts user-defined variables in italics and optional parameters in square brackets ([]).

SELECT [DISTINCT] listofcolumns

FROM listoftables

[WHERE expression (predicates in CNF)]

[GROUP BY listofcolumns]

[HAVING expression]

[ORDER BY listof columns];

The semantics of this command are:7

1. Form the Cartesian product of the tables in the FROM clause, thus forming a projection of only those references that appear in other clauses.

2. If a WHERE clause exists, apply all expressions for the given tables referenced.

3. If a GROUP BY clause exists, form groups in the results on the attributes specified.

4. If a HAVING clause exists, apply a filter for the groups.

5. If an ORDER BY clause exists, sort the results in the manner specified.

6. If a DISTINCT keyword exists, remove the duplicate rows from the results.

The previous code example is representative of most SQL commands; all such commands have required portions, and most also have optional sections as well as keyword-based modifiers.

Once the query statements are transferred to the client via the network protocols (called shipping), the database server must then interpret and execute the command. A query statement from this point on is referred to simply as a query, because it represents the question for which the database system must provide an answer. Furthermore, in the sections that follow, I assume the query is of the SELECT variety, in which the user has issued a request for data. All queries, regardless whether they are data manipulation or data definition, follow the same path through the system, however. It is also at this point that we consider the actions being performed within the database server itself. The first step in that process is to decipher what the client is asking for—that is, the query must be parsed and broken down into elements that can be executed upon.

Query Processing

In the context of a database system operating in a client/server model, the database server is responsible for processing the queries presented by the client and returning the results accordingly. This has been termed query shipping, in which the query is shipped to the server and a payload (data) is returned. The benefits of query shipping are a reduction of communication time for queries and the ability to exploit server resources rather than using the more limited resources of the client to conduct the query. This model also permits a separation of how the data is stored and retrieved on the server from the way the data is used on the client. In other words, the client/server model supports data independence.

Data independence is one of the principal advantages of the relational model introduced by Codd in 1970: the separation of the physical implementation from the logical model. According to Codd,8

Users of large data banks must be protected from having to know how the data is organized in the machine . . . Activities of users at terminals and most application programs should remain unaffected when the internal representation of data is changed.

This separation allows a powerful set of logical semantics to be developed, independent of a particular physical implementation. The goal of data independence (called physical data independence by Elmasri and Navathe9) is that each of the logical elements is independent of all of the physical elements (see Table 2-1). For example, the logical layout of the data into relations (tables) with attributes (fields) arranged by tuples (rows) is completely independent of how the data is stored on the storage medium.

Table 2-1. The Logical and Physical Models of Database Design

|

Logical Model |

Physical Model |

|

Query language |

Sorting algorithms |

|

Relational Algebra |

Storage mechanisms |

|

Relational Calculus |

Indexing mechanisms |

|

Relvars |

Data representation |

One challenge of data independence is that database programming becomes a two-part process. First, there is the writing of the logical query—describing what the query is supposed to do. Second, there is the writing of the physical plan, which shows how to implement the logical query.

The logical query can be written, in general, in many different forms, such as a high-level language such as SQL or as an algebraic query tree.10 For example, in the traditional relational model, a logical query can be described in relational calculus or relational algebra. The relational calculus is better in terms of focusing on what needs to be computed. The relational algebra is closer to providing an algorithm that lets you find what you are querying for, but still leaves out many details involved in the evaluation of a query.

The physical plan is a query tree implemented in a way that it can be understood and processed by the database system’s query execution engine. A query tree is a tree structure in which each node contains a query operator and has a number of children that correspond to the number of tables involved in the operation. The query tree can be transformed via the optimizer into a plan for execution. This plan can be thought of as a program that the query execution engine can execute.

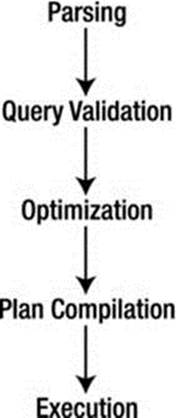

A query statement goes through several phases before it is executed; parsing, validation, optimization, plan generation/compilation, and execution. Figure 2-2 depicts the query processing steps that a typical database system would employ. Each query statement is parsed for validity and checked for correct syntax and for identification of the query operations. The parser then outputs the query in an intermediate form to allow the optimizer to form an efficient query execution plan. The execution engine then executes the query and the results are returned to the client. This progression is shown in Figure 2-2, where once parsing is completed the query is validated for errors, then optimized; a plan is chosen and compiled; and finally the query is executed.

Figure 2-2. Query processing steps

The first step in this process is to translate the logical query from SQL into a query tree in relational algebra. This step, done by the parser, usually involves breaking the SQL statement into parts and then building the query tree from there. The next step is to translate the query tree in logical algebra into a physical plan. Generally, many plans could implement the query tree. The process of finding the best execution plan is called query optimization. That is, for some query-execution-performance measure (e.g., execution time), we want to find the plan with the best execution performance. The plan should be optimal or near optimal within the search space of the optimizer. The optimizer starts by copying the relational-algebra query tree into its search space. The optimizer then expands the search space by forming alternative execution plans (to a finite iteration) and then searching for the best plan (the one that executes fastest).

At this level of generality, the optimizer can be viewed as the code-generation part of a query compiler for the SQL language. In fact, in some database systems, the compilation step translates the query into an executable program. Most database systems, however, translate the query into a form that can be executed using the internal library of execution steps. The code compilation in this case produces code to be interpreted by the query-execution engine, except that the optimizer’s emphasis is on producing “very efficient” code. For example, the optimizer uses the database system’s catalog to get information (e.g., the number of tuples) about the stored relations referenced by the query, something traditional programming language compilers normally do not do. Finally, the optimizer copies the optimal physical plan out of its memory structure and sends it to the query-execution engine, which executes the plan using the relations in the stored database as input and produces the table of rows that match the query criteria.

All this activity requires additional processing time and places a greater burden on the process by forcing database implementers to consider the performance of the query optimizer and execution engine as a factor in their overall efficiency. This optimization is costly, because of the number of alternative execution plans that use different access methods (ways of reading the data) and different execution orders. Thus, it is possible to generate an infinite number of plans for a single query. Database systems typically bound the problem to a few known best practices, however.

A primary reason for the large number of query plans is that optimization will be required for many different values of important runtime parameters whose actual values are unknown at optimization time. Database systems make certain assumptions about the database contents (e.g., value distribution in relation attributes), the physical schema (e.g., index types), the values of the system parameters (e.g., the number of available buffers), and the values of the query constants.

Query Optimizer

Some mistakenly believe that the query optimizer performs all the steps outlined in the query-execution phases. As you will see, query optimization is just one step that the query takes on the way to be executed. The following paragraphs describe the query optimizer in detail and illustrate the role of the optimizer in the course of the query execution.

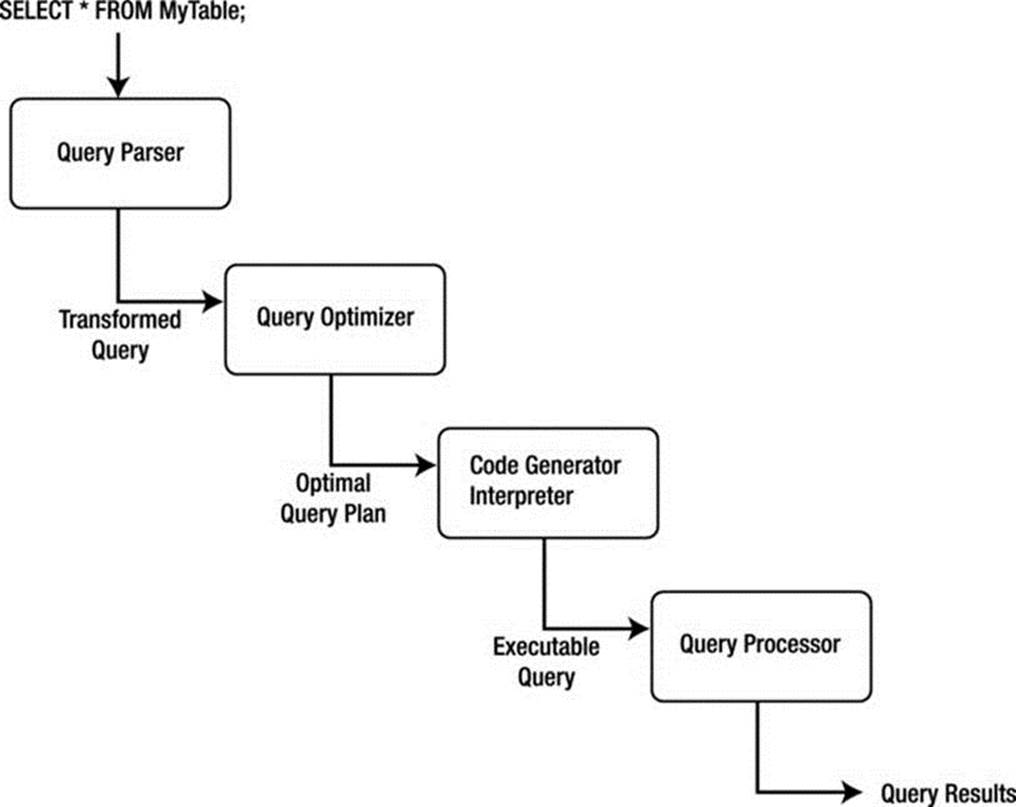

Query optimization is the part of the query-compilation process that translates a data-manipulation statement in a high-level, nonprocedural language, such as SQL, into a more detailed, procedural sequence of operators, called a query plan. Query optimizers usually select a plan by estimating the cost of many alternative plans and then choosing the least expensive among them (the one that executes fastest).

Database systems that use a plan-based approach to query optimization assume that many plans can be used to produce any given query. Although this is true, not all plans are equivalent in the number of resources (or cost) needed to execute the query, nor are all plans executed in the same amount of time. The goal, then, is to discover the plan that has the least cost and/or runs in the least amount of time. The distinction of either resource usage or cost usage is a trade-off often encountered when designing systems for embedded integration or running on a small platform (with low resource availability) versus the need for higher throughput (or time).

Figure 2-3 depicts a plan-based query-processing strategy in which the query follows the path of the arrows. The SQL command is passed to the query parser, where it is parsed and validated, and then translated into an internal representation, usually based on a relational-algebra expression or a query tree, as described earlier. The query is then passed to the query optimizer, which examines all of the algebraic expressions that are equivalent, generating a different plan for each combination. The optimizer then chooses the plan with the least cost and passes the query to the code generator, which translates the query into an executable form, either as directly executable or as interpretative code. The query processor then executes the query and returns a single row in the result set at a time.

This common implementation scheme is typical of most database systems. The machines that the database system runs on have improved over time, however. It is no longer the case that query plans have diverse execution costs. In fact, most query plans execute with approximately the same cost. This realization has led some database-system implementers to adopt a query optimizer that focuses on optimizing the query using some well-known best practices or rules (called heuristics), for query optimization. Some database systems use hybrids of optimization techniques that are based on one form while maintaining aspects of other techniques during execution.

Figure 2-3. Plan-based query processing

The four primary means of performing query optimization are

· Cost-based optimization

· Heuristic optimization

· Semantic optimization

· Parametric optimization

Although no optimization technique can guarantee the best execution plan, the goal of all these methods is to generate an efficient execution for the query that guarantees correct results.

A cost-based optimizer generates a range of query-evaluation plans from the given query by using the equivalence rules, and it chooses the one with the least cost based on the metrics (or statistics) gathered about the relations and operations needed to execute the query. For a complex query, many equivalent plans are possible. The goal of cost-based optimization is to arrange the query execution and table access utilizing indexes and statistics gathered from past queries. Systems such as Microsoft SQL Server and Oracle use cost-based optimizers.

Heuristic optimizers use rules concerning how to shape the query into the most optimal form prior to choosing alternative implementations. The application of heuristics, or rules, can eliminate queries that are likely to be inefficient. Using heuristics to form the query plan ensures that the query plan is most likely (but not always) optimized prior to evaluation. The goal of heuristic optimization is to apply rules that ensure “good” practices for query execution. Systems that use heuristic optimizers include Ingres and various academic variants. These systems typically use heuristic optimization to avoid the really bad plans rather than as a primary means of optimization.

The goal of semantic optimization is to form query-execution plans that use the semantics, or topography, of the database and the relationships and indexes within to form queries that ensure the best practice available for executing a query in the given database. Though not yet implemented in commercial database systems as the primary optimization technique, semantic optimization is currently the focus of considerable research. Semantic optimization operates on the premise that the optimizer has a basic understanding of the actual database schema. When a query is submitted, the optimizer uses its knowledge of system constraints to simplify or to ignore a particular query if it is guaranteed to return an empty result set. This technique holds great promise for providing even more improvements to query processing efficiency in future RDBMSs.

Parametric query optimization combines the application of heuristic methods with cost-based optimization. The resulting query optimizer provides a means of producing a smaller set of effective query plans from which cost can be estimated, and thus, the lowest-cost plan of the set can be executed.

An example of a database system that uses a hybrid optimizer is MySQL. The query optimizer in MySQL is designed around a select-project-join strategy, which combines a cost-based and heuristic optimizer that uses known optimal mechanisms, thus resulting in fewer alternatives from which cost-based optimization can choose the minimal execution path. This strategy ensures an overall “good” execution plan, but it does not necessarily generate the best plan. This strategy has proven to work well for a vast variety of queries running in different environments. The internal representation of MySQL has been shown to perform well enough to rival the execution speeds of the largest of the production database systems.

An example of a database system that uses a cost-based optimizer is Microsoft’s SQL Server. The query optimizer in SQL Server is designed around a classic cost-based optimizer that translates the query statement into a procedure that can execute efficiently and return the desired results. The optimizer uses information, or statistics,11 collected from values recorded in past queries and the characteristics of the data in the database to create alternative procedures that represent the same query. The statistics are applied to each procedure to predict which one can be executed more efficiently. Once the most efficient procedure is identified, execution begins and results are returned to the client.

Optimization of queries can be complicated by using unbound parameters, such as a user predicate. For example, an unbound parameter is created when a query within a stored procedure accepts a parameter from the user when the stored procedure is executed. In this case, query optimization may not be possible, or it may not generate the lowest cost unless some knowledge of the predicate is obtained prior to execution. If very few records satisfy the predicate, even a basic index is far superior to the file scan. The opposite is true if many records qualify. If the selectivity is not known when optimization is performed because the predicate is unbound, the choice among these alternative plans should be delayed until execution.

The problem of selectivity can be overcome by building optimizers that can adopt the predicate as an open variable and perform query-plan planning by generating all possible query plans that are likely to occur based on historical query execution, and by utilizing the statistics from the cost-based optimizer, which include the frequency distribution for the predicate’s attribute.

Internal Representation of Queries

A query can be represented within a database system using several alternate forms of the original SQL command. These alternate forms exist due to redundancies in SQL, the equivalence of subqueries and joins under certain constraints, and logical inferences that can be drawn from predicates in the WHERE clause. Having alternate forms of a query poses a problem for database implementers, because the query optimizer must choose the optimal access plan for the query regardless of how it was originally formed by the user.

Once the query optimizer has either formed an efficient execution plan (heuristic and hybrid optimizers) or has chosen the most efficient plan (cost-based optimizers), the query is then passed to the next phase of the process: execution.

Query Execution

Database systems can use several methods to execute queries. Most use either an iterative or an interpretative execution strategy.

Iterative methods provide ways of producing a sequence of calls available for processing discrete operations (join, project, etc.), but they are not designed to incorporate the features of the internal representation. Translation of queries into iterative methods uses techniques of functional programming and program transformation. Several available algorithms generate iterative programs from algebra-based query specifications. For example, some algorithms translate query specifications into recursive programs, which are simplified by sets of transformation rules before the algorithm generates an execution plan. Another algorithm uses a two-level translation. The first level uses a smaller set of transformation rules to simplify the internal representation, and the second level applies functional transformations prior to generating the execution plan.

The implementation of this mechanism creates a set of defined compiled functional primitives, formed using a high-level language, that are then linked together via a call stack, or procedural call sequence. When a query-execution plan is created and selected for execution, a compiler (usually the same one used to create the database system) is used to compile the procedural calls into a binary executable. Due to the high cost of the iterative method, compiled execution plans are typically stored for reuse for similar or identical queries.

Interpretative methods, on the other hand, form query execution using existing compiled abstractions of basic operations. The query-execution plan chosen is reconstructed as a queue of method calls, which are each taken off the queue and processed. The results are then placed in memory for use with the next or subsequent calls. Implementation of this strategy is often called lazy evaluation because the set of available compiled methods is not optimized for best performance; rather, the methods are optimized for generality. Most database systems use the interpretative method of query execution.

One often-confusing area is the concept of compiled. Some database experts consider a compiled query to be an actual compilation of an iterative query-execution plan, but in Date’s work, a compiled query is simply one that has been optimized and stored for future execution. I won’t use the word compiled, because the MySQL query optimizer and execution engine do not store the query-execution plan for later reuse (an exception is the MySQL query cache). I don’t think we can compare or mention query cache here. The evaluation of the executed query is not even related to a plan, but instead is related to a literary comparison between SQL received and SQL stored directly related to a Set of already retrieved information, nor does the query execution require any compilation or assembly to work. Interestingly, the concept of a stored procedure fits this second category; it is compiled (or optimized) for execution at a later date and can be run many times on data that meet its input parameters.

Query execution evaluates each part of the query tree (or query as represented by the internal structures) and executes methods for each part. The methods supported mirror those operations defined in relational algebra, project, restrict, union, intersect, and so on. For each of these operations, the query-execution engine performs a method that evaluates the incoming data and passes the processed data along to the next step. For example, only some of the attributes (or columns) of data are returned in a project operation. In this case, the query-execution engine would strip the data for the attributes that do not meet the specification of the restriction and pass the remaining data to the next operation in the tree (or structure). Table 2-2 lists the most common operations supported and briefly describes each.

Table 2-2. Query Operations

|

Operation |

Description |

|

Restrict |

Returns tuples that match the conditions (predicate) of the WHERE clause (some systems treat the HAVING clause in the same or a similar manner). This operation is often defined as SELECT. |

|

Project |

Returns the attributes specified in the column list of the tuple evaluated. |

|

Join |

Returns tuples that match a special condition called the join condition (or join predicate). There are many forms of joins. See “Joins” for a description of each. |

JOINS

The join operation can take many forms. These are often confused by database professionals and in some cases avoided at all costs. The expressiveness of SQL permits many joins to be written as simple expressions in the WHERE clause. While most database systems correctly transform these queries into joins, it is considered a lazy form. The following lists the types of joins you are likely to encounter in an RDBMS and describes each. Join operations can have join conditions (theta joins), a matching of the attribute values being compared (equijoins), or no conditions (Cartesian products). The join operation is subdivided into:

· Inner: The join of two relations returning tuples where there is a match.

· Outer (left, right, full): Returns all rows from at least one of the tables or views mentioned in the FROM clause, as long as those rows meet any WHERE search conditions. All rows are retrieved from the left table referenced with a left outer join; all rows from the right table are referenced in a right outer join. All rows from both tables are returned in a full outer join. Values for attributes of nonmatching rows are returned as null values.

· Right outer: The join of two relations returning tuples where there is a match, plus all tuples from the relation specified to the right, leaving nonmatching attributes specified from the other relation empty (null).

· Full outer: The join of two relations returning all tuples from both relations, leaving nonmatching attributes specified from the other relation empty (null).

· Cross product: The join of two relations mapping each tuple from the first relation to all tuples from the other relation.

· Union: The set operation in which only matches from two relations with the same schema are returned.

· Intersect: The set operation in which only the nonmatches from two relations with the same schema are returned.

Deciding how to execute the query (or the chosen query plan) is only half of the story. The other thing to consider is how to access the data. There are many ways to read and write data to and from disk (files), but choosing the optimal one depends on what the query is trying to do. File-access mechanisms are created to minimize the cost of accessing the data from disk and maximize the performance of query execution.

File Access

The file-access mechanism, also called the physical database design, has been important since the early days of database-system development. The significance of file access has lessened due to the effectiveness and simplicity of common file systems supported by operating systems, however. Today, file access is merely the application of file storage and indexing best practices, such as separating the index file from the data file and placing each on a separate disk input/output (I/O) system to increase performance. Some database systems use different file-organization techniques to enable the database to be tailored to specific application needs. MySQL is perhaps the most unique in this regard due to the numerous file-access mechanisms (called storage engines) it supports.

Clear goals must be satisfied to minimize the I/O costs in a database system. These include utilizing disk data structures that permit efficient retrieval of only the relevant data through effective access paths, and organizing data on disk so that the I/O cost for retrieving relevant data is minimized. The overriding performance objective is thus to minimize the number of disk accesses (or disk I/Os).

Many techniques for approaching database design are available. Fewer are available for file-access mechanisms (the actual physical implementation of the data files). Furthermore, many researchers agree that the optimal database design (from the physical point of view) is not achievable in general and, furthermore, should not be pursued. Optimization is not achievable, mainly because of the much-improved efficiency of modern disk subsystems. Rather, it is the knowledge of these techniques and research that permits the database implementer to implement the database system in the best manner possible to satisfy the needs of those who will use the system.

To create a structure that performs well, you must consider many factors. Early researchers considered segmenting the data into subsets based on the content or the context of the data. For example, all data containing the same department number would be grouped together and stored with references to the related data. This process can be perpetuated in that sets can be grouped together to form supersets, thus forming a hierarchical file organization.

Accessing data in this configuration involves scanning the sets at the highest level to access and scan only those sets that are necessary to obtain the desired information. This process significantly reduces the number of elements to be scanned. Keeping the data items to be scanned close together minimizes search time. The arrangement of data on disk into structured files is called file organization. The goal is to design an access method that provides a way of immediately processing transactions one by one, thereby allowing us to keep an up-to-the-second stored picture of the real-world situation.

File-organization techniques were revised as operating systems evolved in order to ensure greater efficiency of storage and retrieval. Modern database systems create new challenges for which currently accepted methods may be inadequate. This is especially true for systems that execute on hardware with increased disk speeds with high data throughput. Additionally, understanding database design approaches, not only as they are described in textbooks but also in practice, will increase the requirements levied against database systems and thus increase the drive for further research. For example, the recent adoption of redundant and distributed systems by industry has given rise to additional research in these areas to make use of new hardware and/or the need to increase data availability, security, and recovery.

Since accessing data from disk is expensive, the use of a cache mechanism, sometimes called a buffer, can significantly improve read performance from disk, thus reducing the cost of storage and retrieval of data. The concept involves copying parts of the data either in anticipation of the next disk read or based on an algorithm designed to keep the most frequently used data in memory. The handling of the differences between disk and main memory effectively is at the heart of a good-quality database system. The trade-off between the database system using disk or using main memory should be understood. See Table 2-3 for a summary of the performance trade-offs between physical storage (disk) and secondary storage (memory).

Table 2-3. Performance Trade-offs

|

Issue |

Main Memory vs. Disk |

|

Speed |

Main memory is at least 1,000 times faster than disk. |

|

Storage space |

Disk can hold hundreds of times more information than memory for the same cost. |

|

Persistence |

When the power is switched off, disk keeps the data, and main memory forgets everything. |

|

Access time |

Main memory starts sending data in nanoseconds, while disk takes milliseconds. |

|

Block size |

Main memory can be accessed one word at a time, and disk one block at a time. |

Advances in database physical storage have seen many of the same improvements with regard to storage strategies and buffering mechanisms, but little in the way of exploratory examination of the fundamental elements of physical storage has occurred. Some have explored the topic from a hardware level and others from a more pragmatic level of what exactly it is we need to store. The subject of persistent storage is largely forgotten, because of the capable and efficient mechanisms available in the host operating system.

File-access mechanisms are used to store and retrieve the data that are encompassed by the database system. Most file-access mechanisms have additional layers of functionality that permit locating data within the file more quickly. These layers are called index mechanisms. Index mechanisms provide access paths (the way data will be searched for and retrieved) designed to locate specific data based on a subpart of the data called a key. Index mechanisms range in complexity from simple lists of keys to complex data structures designed to maximize key searches.

The goal is to find the data we want quickly and efficiently, without having to request and read more disk blocks than absolutely necessary. This can be accomplished by saving values that identify the data (or keys) and the location on disk of the record to form an index of the data. Furthermore, reading the index data is faster than reading all of the data. The primary benefit of using an index is that it allows us to search through large amounts of data efficiently without having to examine, or in many cases read, every item until we find the one we are searching for. Indexing, therefore, is concerned with methods of searching large files containing data that is stored on disk. These methods are designed for fast random access of data as well as sequential access of the data.

Most, but not all, index mechanisms involve a tree structure that stores the keys and the disk block addresses. Examples include B-trees, B + trees, and hash trees. The structures are normally traversed by one or more algorithms designed to minimize the time spent searching the structure for a key. Most database systems use one form or another of the B-tree in their indexing mechanisms. These tree algorithms provide very fast search speeds without requiring a large memory space.

During the execution of the query, interpretative query execution methods access the assigned index mechanism and request the data via the access method specified. The execution methods then read the data, typically a record at a time; analyze the query for a match to the predicate by evaluating the expressions; and then pass the data through any transformations and finally on to the transmission portion of the server to send the data back to the client.

Query Results

Once all the tuples in the tables referenced in the query have been processed, the tuples are returned to the client, following the same, or sometimes alternative, communication pathways. The tuples are then passed on to the ODBC connector for encapsulation and presentation to the client application.

Relational Database Architecture Summary

In this section, I’ve detailed the steps taken by a query for data through a typical relational-database system architecture. As you’ll see, the query begins with an SQL command issued by the client; then it is passed via the ODBC connector to the database system using a communications pathway (network). The query is parsed, transformed into an internal structure, optimized, and executed, and the results are returned to the client.

Now that I’ve given you a glimpse of all the steps involved in processing a query, and you’ve seen the complexity of the database system subcomponents, it is time to take a look at a real-world example. In the following section, I present an in-depth look at the MySQL database-system architecture.

The MySQL Database System

The MySQL source code is a highly organized and built using many structured classes (some are complex data structures and some are objects, but most are structures). While efforts toward making the system more modular through the addition of plugins are underway, the source code is not yet a truly modular architecture but is much closer now with the new plugin mechanism. It is important to understand this as you explore the architecture and more important later, when you explore the source code. This means that you will sometimes find instances in which no clear division of architecture elements exists in the source code. For more information about the MySQL source code, including how to obtain it, see Chapter 3.

Although some may present the MySQL architecture as a component-based system built from a set of modular subcomponents, the reality is that, while highly organized, it is neither component based nor modular. The source code is built using a mixture of C and C++, and a number of objects are utilized in many of the functions of the system. The system is not object oriented in the true sense of object-oriented programming. Rather, the system is built on the basis of function libraries and data structures designed to optimize the organization of the source code around that of the architecture, with some portions written using objects.

MySQL architecture is, however, an intelligent design of highly organized subsystems working in harmony to form an effective and highly reliable database system. All of the technologies I described previously in this chapter are present in the system. The subsystems that implement these technologies are well designed and implemented with the same precision source code found throughout the system. It is interesting to note that many accomplished C and C++ programmers remark upon the elegance and leanness of the source code. I’ve often found myself marveling at the serene complexity and yet elegance of the code. Indeed, even the code authors themselves admit that their code has a sort of genius intuition that is often not fully understood or appreciated until it is thoroughly analyzed. You, too, will find yourself amazed at how well some of the source code works and how simple it is once you figure it out.

![]() Note The MySQL system has proved to be difficult for some to learn and troublesome to diagnose when things go awry. It is clear, however, that once one has mastered the intricacies of the MySQL architecture and source code, the system is very accommodating and has the promise of being perhaps the first and best platform for experimental database work.

Note The MySQL system has proved to be difficult for some to learn and troublesome to diagnose when things go awry. It is clear, however, that once one has mastered the intricacies of the MySQL architecture and source code, the system is very accommodating and has the promise of being perhaps the first and best platform for experimental database work.

What this means is that the MySQL architecture and source code is often challenging for novice C++ programmers. If you find yourself starting to reconsider taking on the source code, please keep reading; I will be your guide in navigating the source code. But let’s first begin with a look at how the system is structured.

MySQL System Architecture

The MySQL architecture is best described as a layered system of subsystems. While the source code isn’t compiled as individual components or modules, the source code for the subsystems is organized in a hierarchical manner that allows subsystems to be segregated (encapsulated) in the source code. Most subsystems rely on base libraries for lower-level functions (e.g., thread control, memory allocation, networking, logging and event handling, and access control). Together, the base libraries, subsystems built on those libraries, and even subsystems built from other subsystems form the abstracted API that is known as the C client API. This powerful API permits the MySQL system to be used as either a stand-alone server or an embedded database system in a larger application.

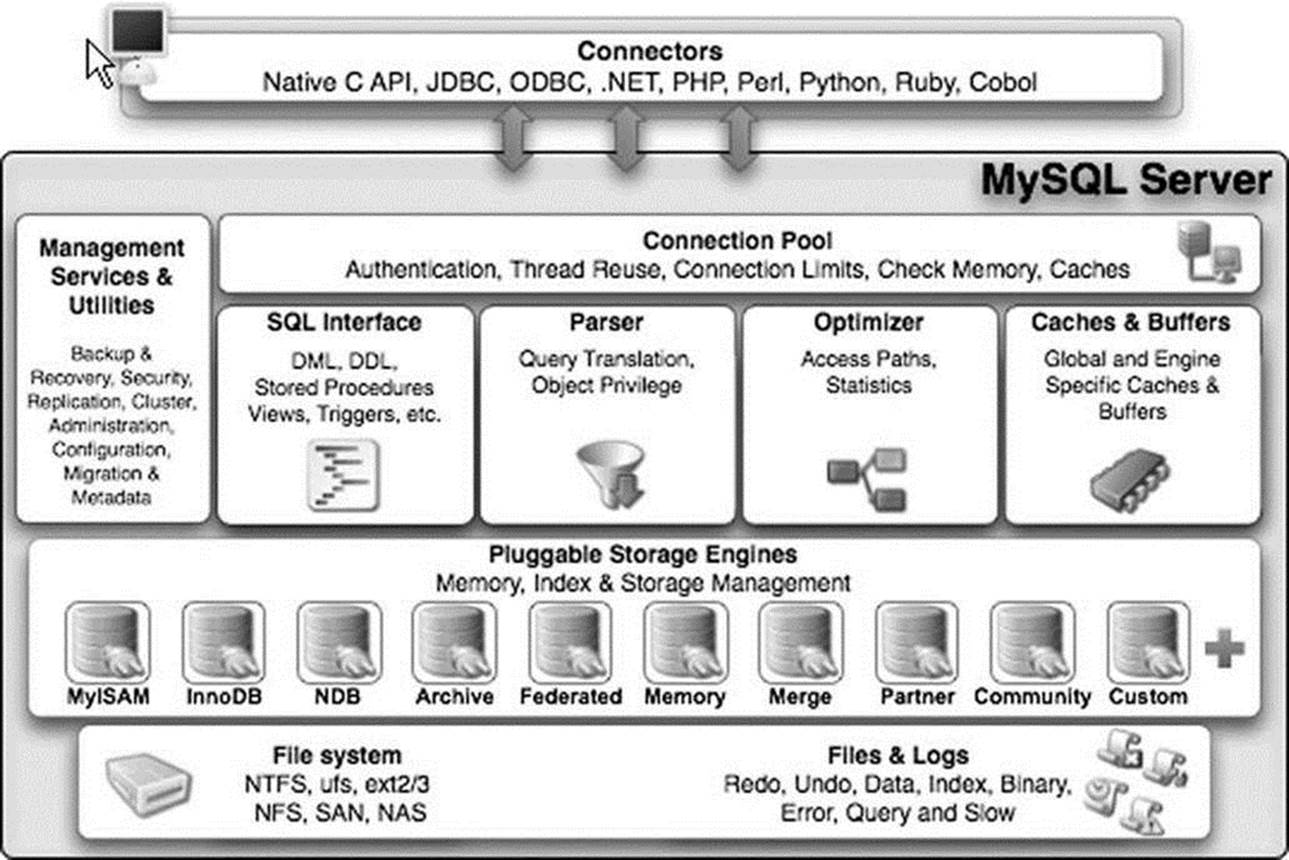

The architecture provides encapsulation for a SQL interface, query parsing, query optimization and execution, caching and buffering, and a pluggable storage engine. Figure 2-4 depicts the MySQL architecture and its subsystems. At the top of the drawing are the database connectors that provide access to client applications. As you can see, a connector for just about any programming environment you could want exists. To the left of the drawing, the ancillary tools are listed grouped by administration and enterprise services. For a complete discussion of the administration and enterprise service tools, see Michael Kruckenberg and Jay Pipes’s Pro MySQL12 an excellent reference for all things administrative for MySQL.

The next layer down in the architecture from the connectors is the connection pool layer. This layer handles all of the user-access, thread processing, memory, and process cache needs of the client connection. Below that layer is the heart of the database system. Here is where the query is parsed and optimized, and file access is managed. The next layer down from there is the pluggable storage-engine layer. At this layer, part of the brilliance of the MySQL architecture shines. The pluggable storage-engine layer permits the system to be built to handle a wide range of diverse data or file storage and retrieval mechanisms. This flexibility is unique to MySQL. No other database system available today provides the ability to tune databases by providing several data storage mechanisms.

![]() Note The pluggable storage-engine feature is available beginning in version 5.1.

Note The pluggable storage-engine feature is available beginning in version 5.1.

Below the pluggable storage engine is the lowest layer of the system, the file-access layer. At this layer, the storage mechanisms read and write data, and the system reads and writes log and event information. This layer is the closest to the operating system, along with thread, process, and memory control.

Let’s begin our discussion of the MySQL architecture with the flow through the system from the client application to the data and back. The first layer encountered once the client connector (ODBC, .NET, JDBC, C API, etc.) has transmitted the SQL statements to the server is the SQL interface.

Figure 2-4. MySQL server architecture (Copyright Oracle. Reprinted with kind permission.)

SQL Interface

The SQL interface provides the mechanisms to receive commands and transmit results to the user. The MySQL SQL interface was built to the ANSI SQL standard and accepts the same basic SQL statements as most ANSI-compliant database servers. Although many of the SQL commands supported in MySQL have options that are not ANSI standard, the MySQL developers have stayed very close to the ANSI SQL standard.

Connections to the database server are received from the network communication pathways, and a thread is created for each. The threaded process is the heart of the executable pathway in the MySQL server. MySQL is built as a true multithreaded application whereby each thread executes independently of the other threads (except for certain helper threads). The incoming SQL command is stored in a class structure and the results are transmitted to the client by writing the results out to the network communication protocols. Once a thread has been created, the MySQL server attempts to parse the SQL command and store the parts in the internal data structure.

Parser

When a client issues a query, a new thread is created and the SQL statement is forwarded to the parser for syntactic validation (or rejection due to errors). The MySQL parser is implemented using a large Lex-YACC script that is compiled with Bison. The parser constructs a query structure used to represent the query statement (SQL) in memory as a tree structure (also called an abstract syntax tree) that can be used to execute the query.

![]() Tip The sql_yacc.yy, sql_lex.h, and lex.h files are where you would begin to construct your own SQL commands or data types in MySQL. These files will be discussed in more detail in Chapter 7.

Tip The sql_yacc.yy, sql_lex.h, and lex.h files are where you would begin to construct your own SQL commands or data types in MySQL. These files will be discussed in more detail in Chapter 7.

Considered by many to be the most complex part of the MySQL source code and the most elegant, the parser is implemented using Lex and YACC, which were originally built for compiler construction. These tools are used to build a lexical analyzer that reads a SQL statement and breaks the statement into parts, assigning the command portions, options, and parameters to a structure of variables and lists. This structure (named imaginatively Lex) is the internal representation of the SQL query. As a result, this structure is used by every other step in the query process. The Lex structure contains lists of tables being used, field names referenced, join conditions, expressions, and all the parts of the query stored in a separate space.

The parser works by reading the SQL statement and comparing the expressions (consisting of tokens and symbols) with rules defined in the source code. These rules are built into the code using Lex and YACC and later compiled with Bison to form the lexical analyzer. If you examine the parser in its C form (a file named /sql/sql_yacc.cc), you may become overwhelmed with the terseness and sheer enormity of the switch statement.13 A better way to examine the parser is to look at the Lex and YACC form prior to compilation (a file named /sql/sql_yacc.yy). This file contains the rules as written for YACC and is much easier to decipher. The construction of the parser illustrates Oracle’s open source philosophy at work: why create your own language handler when special compiler construction tools such as Lex, YACC, and Bison are designed to do just that?

Once the parser identifies a regular expression and breaks the query statement into parts, it assigns the appropriate command type to the thread structure and returns control to the command processor (which is sometimes considered part of the parser, but more correctly is part of the main code). The command processor is implemented as a large switch statement with cases for every command supported. The query parser checks only the correctness of the SQL statement. It does not verify the existence of tables or attributes (fields) referenced, nor does it check for semantic errors, such as an aggregate function used without a GROUP BY clause. Instead, the verification is left to the optimizer. Thus, the query structure from the parser is passed to the query processor. From there, control switches to the query optimizer.

LEX AND YACC

Lex stands for “lexical analyzer generator” and is used as a parser to identify tokens and literals as well as the syntax of a language. YACC stands for “yet another compiler compiler” and is used to identify and act on the semantic definitions of the language. The use of these tools together with Bison (a YACC compiler) provides a rich mechanism for creating subsystems that can parse and process language commands. Indeed, that is exactly how MySQL uses these technologies.

Query Optimizer

The MySQL query-optimizer subsystem is considered by some to be misnamed. The optimizer used is a SELECT-PROJECT-JOIN strategy that attempts to restructure the query by first doing any restrictions (SELECT) to narrow the number of tuples to work with, then performs the projections to reduce the number of attributes (fields) in the resulting tuples, and finally evaluates any join conditions. While not considered a member of the extremely complicated query-optimizer category, the SELECT-PROJECT-JOIN strategy falls into the category of heuristic optimizers. In this case, the heuristics (rules) are simply:

· Eliminate extra data by evaluating the expressions in the WHERE (HAVING) clause.

· Eliminate extra data by limiting the data to the attributes specified in the attribute list. The exception is the storage of the attributes used in the join clause that may not be kept in the final query.

· Evaluate join expressions.

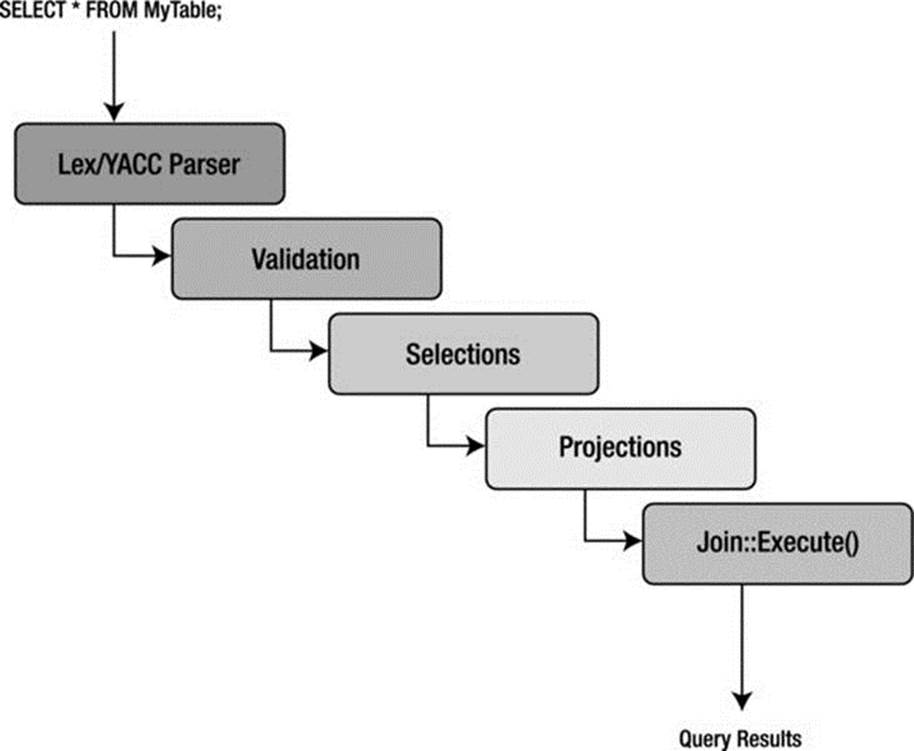

This results in a strategy that ensures a known-good access method to retrieve data in an efficient manner. Despite critical reviews, the SELECT-PROJECT-JOIN strategy has proven effective at executing the typical queries found in transaction processing. Figure 2-5 depicts a block diagram that describes the MySQL query processing methodology.

Figure 2-5. MySQL query processing methodology

The first step in the optimizer is to check for the existence of tables and access control by the user. If there are errors, the appropriate error message is returned and control returns to the thread manager, or listener. Once the correct tables have been identified, they are opened, and the appropriate locks are applied for concurrency control.

Once all the maintenance and setup tasks are complete, the optimizer uses the internal-query structure (Lex) and evaluates the WHERE conditions (a restrict operation) of the query. Results are returned as temporary tables to prepare for the next step. If UNION operators are present, the optimizer executes the SELECT portions of all statements in a loop before continuing.

The next step in the optimizer is to execute the projections. These are executed in a similar manner as the restrict portions, again storing the intermediate results as temporary tables and saving only those attributes specified in the column specification in the SELECT statement. Last, the structure is analyzed for any JOIN conditions that are built using the join class, and then the join::optimize() method is called. At this stage, the query is optimized by evaluating the expressions and eliminating any conditions that result in dead branches or always-true or always-false conditions (as well as many other similar optimizations). The optimizer is attempting to eliminate any known-bad conditions in the query before executing the join. This is done because joins are the most expensive and time consuming of all relational operators. Note that the join-optimization step is performed for all queries that have a WHERE or HAVING clause regardless of whether there are any join conditions. This enables developers to concentrate all of the expression-evaluation code in one place. Once the join optimization is complete, the optimizer uses a series of conditional statements to route the query to the appropriate library method for execution.

The query optimizer and execution engine is perhaps the second most difficult area to understand, because of its SELECT-PROJECT-JOIN optimizer approach. Complicating matters is that this portion of the server is a mixture of C and C++ code, where the typical select execution is written as C methods while the join operation is written as a C++ object. In Chapter 13, I show you how to write your own query optimizer and use it instead of the MySQL optimizer.

Query Execution

Execution of the query is handled by a set of library methods designed to implement a particular query. For example, the mysql_insert() method is designed to insert data. Likewise, there is a mysql_select() method designed to find and return data matching the WHERE clause. This library of execution methods is located in a variety of source-code files under a file of a similar name (e.g., sql_insert.cc or sql_select.cc). All these methods have as a parameter a thread object that permits the method to access the internal query structure and eases execution. Results from each of the execution methods are returned using the network-communication-pathways library. The query-execution library methods are clearly implemented using the interpretative model of query execution.

Query Cache

While not its own subsystem, the query cache should be considered a vital part of the query optimization and execution subsystem. The query cache is a marvelous invention that caches not only the query structure but also the query results themselves. This enables the system to check for frequently used queries and shortcut the entire query-optimization and -execution stages altogether. This is another technology unique to MySQL. Other database-systems cache queries, but no others cache the actual results. As you can appreciate, the query cache must also allow for situations in which the results are “dirty” in the sense that something has changed since the last time the query was run (e.g., an INSERT, UPDATE, or DELETE was run against the base table) and that the cached queries may need to be occasionally purged.

![]() Tip The query cache is turned on by default. If you want to turn off the query cache for the specific SQL statment, use the SQL_NO_CACHE SELECT option: SELECT SQL_NO_CACHE id, lname FROM myCustomer;. Otherwise, it can be GLOBALY disable using the server variables (query_cache_type, query_cache_size to also deallocate the buffer).

Tip The query cache is turned on by default. If you want to turn off the query cache for the specific SQL statment, use the SQL_NO_CACHE SELECT option: SELECT SQL_NO_CACHE id, lname FROM myCustomer;. Otherwise, it can be GLOBALY disable using the server variables (query_cache_type, query_cache_size to also deallocate the buffer).

If you are not familiar with this technology, try it out. Find a table that has a sufficient number of tuples and execute a query that has some complexity, such as a JOIN or complex WHERE clause. Record the time it took to execute, then execute the same query again. Note the time difference. This is the query cache in action.

Listing 2-1 illustrates this exercise using the SHOW command to display the system variables associated with the query cache. Notice how running the query multiple times adds it to the cache, and subsequent calls read it from the cache. Notice also that the SQL_NO_CACHE option does not affect the query cache variables (because it doesn’t use the query cache).

Listing 2-1. The MySQL Query Cache in Action

mysql> CREATE DATABASE test_cache;

Query OK, 1 row affected (0.00 sec)

mysql> CREATE TABLE test_cache.tbl1 (a int);

Query OK, 0 rows affected (0.01 sec)

mysql> INSERT INTO test_cache.tbl1 VALUES (100), (200), (300);

Query OK, 3 rows affected (0.00 sec)

Records: 3 Duplicates: 0 Warnings: 0

mysql> USE test_cache;

Database changed

mysql> SELECT * FROM tbl1;

+------+

| a |

+------+

| 100 |

| 200 |

| 300 |

+------+

3 rows in set (0.00 sec)

mysql> show status like "Qcache_hits";

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| Qcache_hits | 0 |

+---------------+-------+

1 row in set (0.00 sec)

mysql> select length(now()) from tbl1;

+---------------+

| length(now()) |

+---------------+

| 19 |

| 19 |

| 19 |

+---------------+

3 rows in set (0.00 sec)

mysql> show status like "Qcache_hits";

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| Qcache_hits | 0 |

+---------------+-------+

1 row in set (0.01 sec)

mysql> show status like "Qcache_inserts";

+----------------+-------+

| Variable_name | Value |

+----------------+-------+

| Qcache_inserts | 1 |

+----------------+-------+

1 row in set (0.00 sec)

mysql> show status like "Qcache_queries_in_cache";

+-------------------------+-------+

| Variable_name | Value |

+-------------------------+-------+

| Qcache_queries_in_cache | 1 |

+-------------------------+-------+

1 row in set (0.00 sec)

mysql> SELECT * FROM tbl1;

+------+

| a |

+------+

| 100 |

| 200 |

| 300 |

+------+

3 rows in set (0.00 sec)

mysql> show status like "Qcache_hits";

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| Qcache_hits | 1 |

+---------------+-------+

1 row in set (0.00 sec)

mysql> SELECT SQL_NO_CACHE * FROM tbl1;

+------+

| a |

+------+

| 100 |

| 200 |

| 300 |

+------+

3 rows in set (0.00 sec)

mysql> show status like "Qcache_hits";

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| Qcache_hits | 1 |

+---------------+-------+

1 row in set (0.00 sec)

mysql> SELECT * FROM tbl1;

+------+

| a |

+------+

| 100 |

| 200 |

| 300 |

+------+

3 rows in set (0.00 sec)

mysql> show status like "Qcache_hits";

+---------------+-------+

| Variable_name | Value |

+---------------+-------+

| Qcache_hits | 2 |

+---------------+-------+

1 r

mysql>

Cache and Buffers

The caching and buffers subsystem is responsible for ensuring that the most frequently used data (or structures, as you will see) are available in the most efficient manner possible. In other words, the data must be resident, or ready to read, at all times. The caches dramatically increase the response time for requests for that data, because the data are in memory, and thus no additional disk access is necessary to retrieve it. The cache subsystem was created to encapsulate all of the caching and buffering into a loosely coupled set of library functions. Although you will find the caches implemented in several different source-code files, they are considered part of the same subsystem.

A number of caches are implemented in this subsystem. Most cache mechanisms use the same or similar concept of storing data as structures in a linked list. The caches are implemented in different portions of the code to tailor the implementation to the type of data that is being cached. Let’s look at each of the caches.

Table Cache

The table cache was created to minimize the overhead in opening, reading, and closing tables (the .FRM files on disk). For this reason, it is designed to store metadata about the tables in memory. It does so by utilizing a data-chunking mechanism called Unireg. Unireg, a format created by a founder of MySQL, was once used for writing TTY applications. It stores data in segments called screens that originally were designed to be used to display data on a monitor. Unireq made it easier to store data and formulate displays (screens) for faster data refresh. As you may have surmised, this is an antiquated technology, and it shows the age of the MySQL source code. The good news is there are plans under way to redesign the table cache and eventually replace or remove the Unireg mechanism.

A WORD ABOUT FRM FILES