Real-Time Communication with WebRTC (2014)

Chapter 6. An Introduction to WebRTC API's Advanced Features

In the previous chapters, we described and discussed a simple scenario: a browser talking directly to another browser. The WebRTC APIs are designed around this one-to-one communication scenario, which is the easiest to manage and deploy. As we illustrated in previous chapters, the basic WebRTC features are sufficient to implement the one-to-one scenario, since the built-in audio and video engines of the browser are responsible for optimizing the delivery of the media streams by adapting them to the available bandwidth and the current network conditions.

In this last chapter we will briefly talk about the conferencing scenario and then list other advanced WebRTC features and mechanisms that are still under active discussion and development within the W3C WebRTC working group (at the time of writing in early 2014).

Conferencing

In a WebRTC conferencing scenario (or N-way call), each browser has to receive and handle the media streams generated by the other N-1 browsers, as well as deliver its own generated media streams to N-1 browsers (i.e., the application-level topology is a mesh network). While this scenario is conceptually straightforward, it is nonetheless difficult for a browser to manage, and the bandwidth each peer needs grows linearly with the number of participants.

For these reasons, video conferencing systems usually rely upon a star topology where each peer connects to a dedicated server that is simultaneously responsible for:

§ Negotiating parameters with every other peer in the network

§ Controlling conferencing resources

§ Aggregating (or mixing) the individual streams

§ Distributing the proper mixed stream to each and every peer participating in the conference

Delivering a single stream clearly reduces both the amount of bandwidth and amount of CPU (and possibly GPU [Graphics Processing Unit]) resources required by each peer involved in a conference. The dedicated server can be either one of the peers or a server specifically optimized for processing and distributing real-time data. In the latter case, the server is usually identified as a Multipoint Control Unit (MCU).
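The difference between the two topologies can be made concrete with a little arithmetic. The following TypeScript sketch is only an illustration: the names TopologyLoad, meshLoad, and starLoad are hypothetical, and the star case assumes a dedicated server that is not itself one of the N peers and that returns exactly one mixed stream to each of them.

```typescript
// Stream and connection counts for an N-way call (illustrative names).
interface TopologyLoad {
  uplinkStreamsPerPeer: number;   // streams each browser has to send
  downlinkStreamsPerPeer: number; // streams each browser has to receive
  totalConnections: number;       // connections in the whole conference
}

function checkPeerCount(n: number): void {
  if (n < 2 || Math.floor(n) !== n) {
    throw new RangeError("a call needs an integer number of peers >= 2");
  }
}

// Mesh: every browser exchanges streams with the other N-1 browsers.
function meshLoad(n: number): TopologyLoad {
  checkPeerCount(n);
  return {
    uplinkStreamsPerPeer: n - 1,
    downlinkStreamsPerPeer: n - 1,
    totalConnections: (n * (n - 1)) / 2,
  };
}

// Star (assumption: separate mixing server): one stream up, one mixed stream down.
function starLoad(n: number): TopologyLoad {
  checkPeerCount(n);
  return {
    uplinkStreamsPerPeer: 1,
    downlinkStreamsPerPeer: 1,
    totalConnections: n,
  };
}
```

For a five-party call, meshLoad(5) gives 4 streams up and 4 down per peer over 10 connections, whereas starLoad(5) gives 1 up and 1 down per peer over 5 connections.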

The WebRTC API does not provide any particular mechanism to assist the conferencing scenario. The criteria and process to identify the MCU are delegated to the application. This is a big engineering challenge, because it requires introducing a centralized infrastructure into the WebRTC peer-to-peer communication model. The upside is that a browser capable of establishing a peer connection with a proxy server gains, in addition to the benefits offered by WebRTC, the additional services offered by the proxy server itself.

We plan to dedicate at least one chapter to videoconferencing in the next version of this book.

Identity and Authentication

The DTLS handshake performed between two WebRTC browsers relies on self-signed certificates. Hence, such certificates cannot be used to also authenticate the peers as there is no explicit chain of trust.

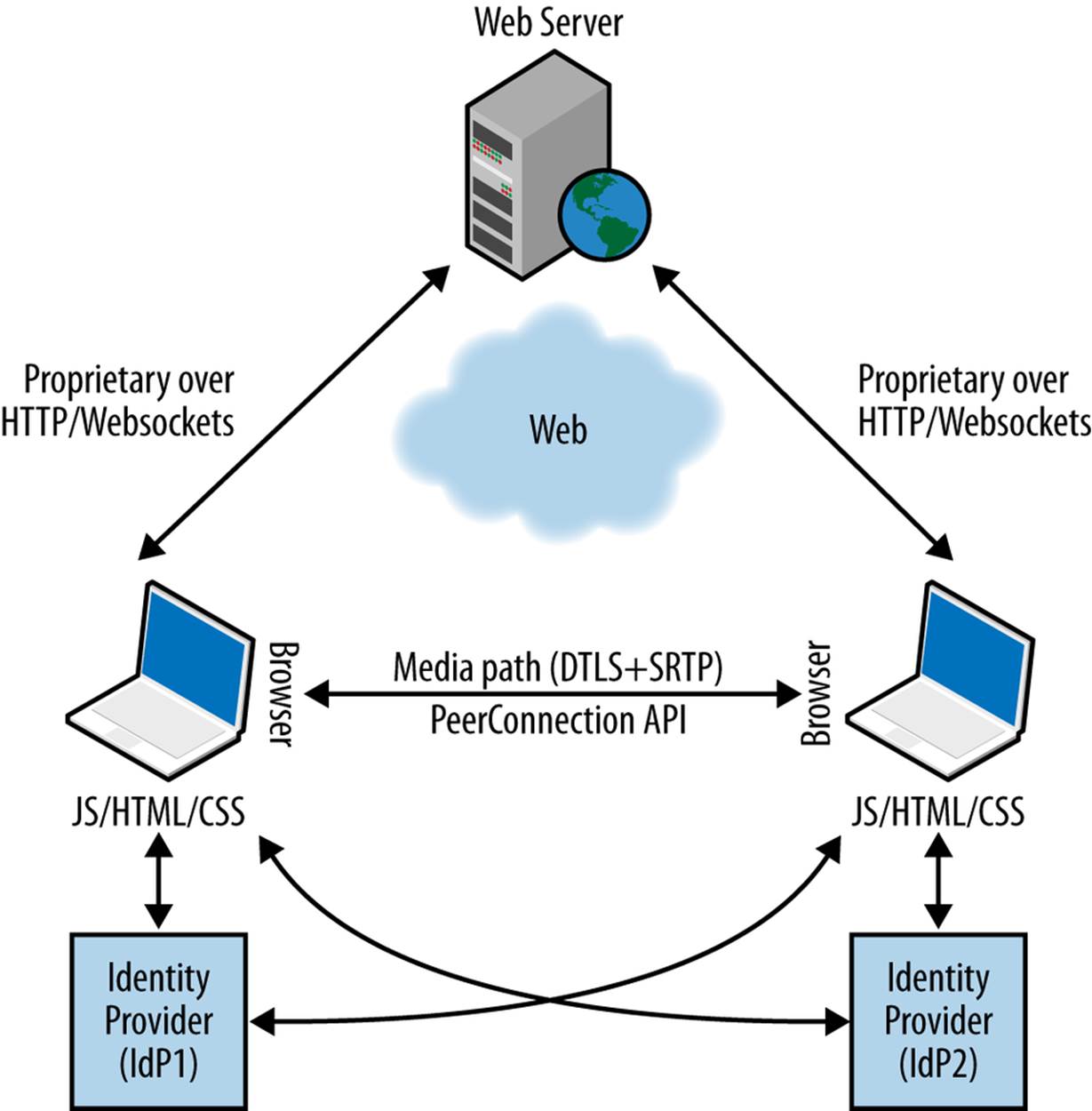

The W3C WebRTC working group is currently working on a web-based Identity Provider (IdP) mechanism. The idea is that each browser has a relationship with an IdP supporting a protocol (for example, OpenID or BrowserID) that can be used to assert its own identity when interacting with the other peers. The interaction with the IdP is designed in such a way as to decouple the browser from any particular Identity Provider (i.e., each browser involved in the communication might have relationships with different IdPs).

NOTE

The setIdentityProvider() method sets the Identity Provider to be used for a given PeerConnection object. Applications do not need to invoke this call if the browser is already configured for a specific IdP. In this case, the configured IdP will be used to get an assertion.

The browser sending the Offer acts as the Authenticating Party (AP) and obtains from the IdP an Identity Assertion binding its identity to its own fingerprint (generated during the DTLS handshake). This identity assertion is then attached to the Offer.

NOTE

The getIdentityAssertion() method initiates the process of obtaining an Identity Assertion. Applications do not need to invoke this call; the method is merely intended to allow them to start the process of obtaining Identity Assertions before a call is initiated.

The browser playing the role of the consumer during the Offer/Answer exchange phase (for instance, the one with the RTCPeerConnection on which setRemoteDescription() is called) acts as the Relying Party (RP) and verifies the assertion by directly contacting the IdP of the browser sending the Offer (Figure 6-1). When using the Chrome browser, this allows the consumer to display a trusted icon indicating that a call is coming in from a trusted contact.

Figure 6-1. A WebRTC call with IdP-based identity
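The roles in this exchange can be modeled without any browser API. The following TypeScript sketch is a toy, in-memory illustration only: ToyIdP, SignedOffer, and verifiedCaller are hypothetical names, the token format is invented, and the check that the asserted fingerprint equals the one carried in the Offer is an assumption about how a Relying Party would make use of the binding.

```typescript
// What the IdP vouches for: an identity bound to a fingerprint.
interface AssertionContents {
  identity: string;
  fingerprint: string;
}

// Toy IdP (hypothetical): issues opaque tokens and resolves them on request.
class ToyIdP {
  private issued: Record<string, AssertionContents> = {};
  private counter = 0;

  // Called on behalf of the Authenticating Party (the offerer).
  sign(identity: string, fingerprint: string): string {
    this.counter += 1;
    const token = "assertion-" + this.counter;
    this.issued[token] = { identity: identity, fingerprint: fingerprint };
    return token;
  }

  // Called by the Relying Party (the answerer).
  verify(token: string): AssertionContents | undefined {
    return this.issued[token];
  }
}

// The parts of an Offer that matter here: its fingerprint and the attached assertion.
interface SignedOffer {
  fingerprint: string;
  assertion: string;
}

// Relying Party check: returns the verified identity, or null if the
// assertion is unknown or was issued for a different fingerprint.
function verifiedCaller(offer: SignedOffer, idp: ToyIdP): string | null {
  const contents = idp.verify(offer.assertion);
  if (!contents || contents.fingerprint !== offer.fingerprint) {
    return null;
  }
  return contents.identity;
}
```

In this model, an Offer whose fingerprint has been altered after the assertion was issued fails verification, which is the point of binding the identity to the fingerprint.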

Peer-to-Peer DTMF

Dual-Tone Multi-Frequency (DTMF) signaling is an encoding technique used in telephony systems to encode numeric codes in the form of sound signals in the audio band between telephone handsets (as well as other communication devices) and the switching center. As an example, DTMF is used to navigate through an Interactive Voice Response (IVR) system.

In order to send DTMF values (entered, for example, through the phone keypad) across an RTCPeerConnection, the user agent needs to know which specific MediaStreamTrack will carry the tones.

NOTE

The createDTMFSender() method creates an RTCDTMFSender object that references the given MediaStreamTrack. The MediaStreamTrack must be an element of a MediaStream that is currently in the RTCPeerConnection object’s local streams set.

Once an RTCDTMFSender object has been created, it can be used to send DTMF tones across that MediaStreamTrack (over the PeerConnection) through the insertDTMF() method.

NOTE

The insertDTMF() method is used to send DTMF tones. The tones parameter is treated as a series of characters. The characters 0 through 9, A through D, #, and * generate the associated DTMF tones.
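As a minimal sketch of how an application might guard calls to insertDTMF(), the following TypeScript helper checks a string against the characters listed above before handing it to the sender. The names DtmfSenderLike and sendTones are hypothetical, the sender is typed structurally so that the example does not depend on browser type definitions, and accepting lowercase a through d by uppercasing them first is a convenience assumed here.

```typescript
// Minimal structural type for the sender; only insertDTMF() is needed.
interface DtmfSenderLike {
  insertDTMF(tones: string, duration?: number, interToneGap?: number): void;
}

// The characters the text lists as generating DTMF tones.
const DTMF_TONES = /^[0-9A-D#*]+$/;

// Validates the tone string, sends it, and returns what was actually sent.
function sendTones(sender: DtmfSenderLike, tones: string): string {
  const normalized = tones.toUpperCase();
  if (!DTMF_TONES.test(normalized)) {
    throw new Error("Unsupported DTMF characters in \"" + tones + "\"");
  }
  sender.insertDTMF(normalized);
  return normalized;
}

// In a browser, following the API described in this chapter (assumed shape):
//   const sender = peerConnection.createDTMFSender(localAudioTrack);
//   sendTones(sender, "1234#");
```

Rejecting invalid input before calling the browser keeps the failure close to the code that produced the string, rather than relying on how a particular implementation handles unknown characters.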

Statistics Model

A real-time communication framework also requires a mechanism to extract statistics on its performance. Such statistics may be as simple as knowing how many bytes of data have been delivered, or they may be as sophisticated as measuring the efficiency of an echo canceller on the local device.

The W3C WebRTC working group is in the process of defining a very simple statistics API, whereby a call may return all relevant data for a particular MediaStreamTrack, or for the PeerConnection as a whole. Statistical data has a uniform structure, consisting of a string identifying the specific statistics parameter and an associated simple-typed value.

Providers of this API (such as the different browsers) will use it to expose both standard and nonstandard statistics. The basic statistics model is that the browser maintains a set of statistics referenced by a selector. The selector may, for example, be a specific MediaStreamTrack. For a track to be a valid selector, it must be a member of a MediaStream that is either sent or received across the RTCPeerConnection object on which the stats request was issued.

The calling web application provides the selector to the getStats() method and the browser emits a set of statistics that it believes is relevant to such a selector.

NOTE

The getStats() method gathers statistics for the given selector and reports the result asynchronously.

More precisely, the getStats() method takes a valid selector (e.g., a MediaStreamTrack) as input, along with a callback to be executed when the stats are available. The callback is given an RTCStatsReport containing RTCStats objects. An RTCStatsReport object represents a map associating strings (identifying the inspected objects—RTCStats.id) with their corresponding RTCStats containers.

An RTCStatsReport may be composed of several RTCStats objects, each reporting stats for one underlying object that the implementation thinks is relevant for the selector. The report as a whole collects global information associated with the selector by summing up all the stats of a certain type. For instance, if a MediaStreamTrack is carried by multiple SSRCs over the network, the RTCStatsReport may contain one RTCStats object per SSRC (which can be distinguished by the value of the ssrc stats attribute).
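To illustrate the per-SSRC case, the following TypeScript sketch sums a byte counter over all the outbound entries that a report holds for one track. It assumes the report has already been flattened into a plain array of entries; OutboundEntry and totalBytesSent are hypothetical names, and representing ssrc as a string is an assumption. The bytesSent counter is the one described later in this chapter for outbound-rtp objects.

```typescript
// One entry of a stats report, reduced to the fields used here (assumed shape).
interface OutboundEntry {
  id: string;
  type: string;      // for example "outbound-rtp"
  ssrc: string;      // distinguishes entries belonging to the same track
  bytesSent: number;
}

// Adds up bytesSent across every outbound-rtp entry, one per SSRC.
function totalBytesSent(entries: OutboundEntry[]): number {
  let total = 0;
  for (const entry of entries) {
    if (entry.type === "outbound-rtp") {
      total += entry.bytesSent;
    }
  }
  return total;
}
```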

The statistics returned are designed in such a way that repeated queries can be linked by the RTCStats id dictionary member (see Table 6-1). Thus, a web application can measure performance over a given time period by requesting measurements both at its beginning and at its end.

Table 6-1. RTCStats dictionary members

| Member | Type | Description |
|---|---|---|
| id | DOMString | A unique id that is associated with the object that was inspected to produce this RTCStats object. |
| timestamp | DOMHiResTimeStamp | The timestamp, of type DOMHiResTimeStamp [HIGHRES-TIME], associated with this object. The time is relative to the UNIX epoch (Jan 1, 1970, UTC). |
| type | RTCStatsType | The type of this object. |

Currently, the only defined types are inbound-rtp and outbound-rtp, both of which are instances of the RTCRTPStreamStats subclass that additionally provides remoteId and ssrc properties:

§ The outbound-rtp object type is represented by the subclass RTCOutboundRTPStreamStats, providing packetsSent and bytesSent properties.

§ The inbound-rtp object type is represented by the subclass RTCInboundRTPStreamStats, providing analogous packetsReceived and bytesReceived properties.
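Because repeated queries can be linked by the id member, a web application can derive a rate from two snapshots. The following TypeScript sketch shows one way to do this, assuming each report has been flattened into a plain array of its entries and that timestamp is expressed in milliseconds; StatsSnapshotEntry and outboundBitrates are hypothetical names.

```typescript
// The RTCStats members from Table 6-1 plus the outbound byte counter (assumed shape).
interface StatsSnapshotEntry {
  id: string;
  timestamp: number;  // milliseconds
  type: string;
  bytesSent?: number; // present on outbound-rtp entries
}

// Returns bits per second for each outbound-rtp entry found in both snapshots.
function outboundBitrates(
  earlier: StatsSnapshotEntry[],
  later: StatsSnapshotEntry[]
): Record<string, number> {
  // Index the first snapshot by id so entries can be paired up.
  const previousById: Record<string, StatsSnapshotEntry> = {};
  for (const entry of earlier) {
    previousById[entry.id] = entry;
  }

  const rates: Record<string, number> = {};
  for (const now of later) {
    const before = previousById[now.id];
    if (now.type !== "outbound-rtp" || !before) continue;
    if (now.bytesSent === undefined || before.bytesSent === undefined) continue;
    const seconds = (now.timestamp - before.timestamp) / 1000;
    if (seconds <= 0) continue; // skip entries with no elapsed time
    rates[now.id] = ((now.bytesSent - before.bytesSent) * 8) / seconds;
  }
  return rates;
}
```

Entries that appear in only one of the two snapshots are skipped, since no interval can be computed for them.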
