Fire in the Valley (2014)

Chapter 1

Tinder for the Fire

I think there is a world market for maybe five computers.

-Thomas Watson, chairman of IBM

The personal computer sprang to life in the mid-1970s, but its historical roots reach back to the giant electronic brains of the 1950s, and well before that to the thinking machines of 19th-century fiction.

Steam

I wish to God these calculations had been executed by steam.

-Charles Babbage, 19th-century inventor

Intrigued by the changes being wrought by science, the poets Lord Byron and Percy Bysshe Shelley idled away one rainy summer day in Switzerland discussing artificial life and artificial thought, wondering whether “the component parts of a creature might be manufactured, brought together, and endued with vital warmth.” On hand to take mental notes of their conversation was Mary Wollstonecraft Shelley, Percy’s wife and author of the novel Frankenstein. She expanded on the theme of artificial life in her famous novel.

Mary Shelley’s monster presented a genuinely disturbing allegory to readers of the Steam Age. The early part of the 19th century introduced the age of mechanization, and the main symbol of mechanical power was the steam engine. It was then that the steam engine was first mounted on wheels, and by 1825 the first public railway was in operation. Steam power held the same sort of mystique that electricity and atomic power would have in later generations.

The Steampunk Computer

In 1833, when British mathematician, astronomer, and inventor Charles Babbage spoke of executing calculations by steam and then actually designed machines that he claimed could mechanize calculation, even mechanize thought, many saw him as a real-life Dr. Frankenstein. Although he never implemented his designs, Babbage was no idle dreamer; he worked on what he called his Analytical Engine, drawing on the most advanced thinking in logic and mathematics, until his death in 1871. Babbage intended that the machine would free people from repetitive and boring mental tasks, just as the new machines of that era were freeing people from physical drudgery.

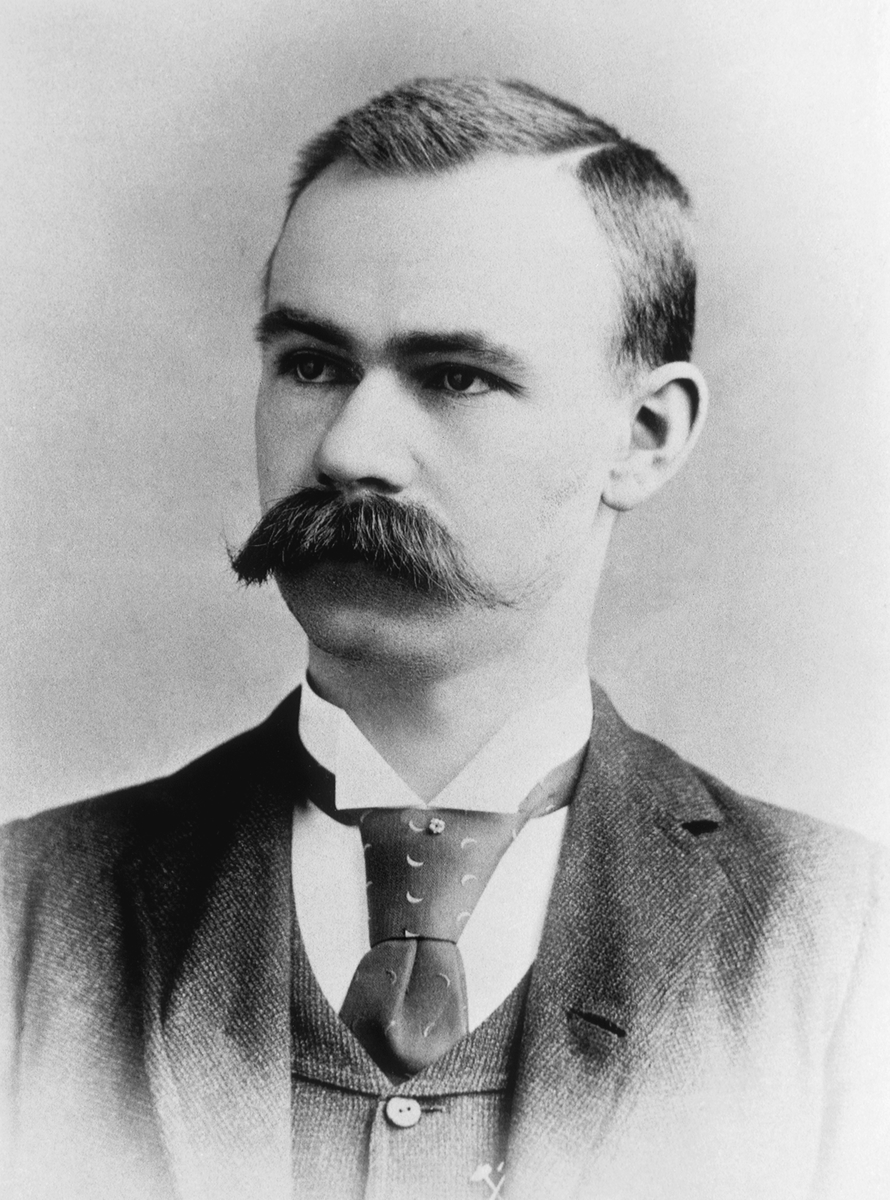

Figure 1. Charles Babbage The 19th-century mathematician and inventor designed a machine to “mechanize thought” 100 years before the first computers were successfully built.

(Courtesy of The Computer Museum History Center, San Jose)

Babbage’s colleague, patroness, and scientific chronicler was Augusta Ada Byron, daughter of Lord Byron, pupil of algebraist Augustus De Morgan, and the future Lady Lovelace. A writer herself and an amateur mathematician, Ada was able, through her articles and papers, to explain Babbage’s ideas to the more educated members of the public and to potential patrons among the British nobility. She also wrote sets of instructions that told Babbage’s Analytical Engine how to solve advanced mathematical problems. Because of this work, many regard Ada as the first computer programmer. The US Department of Defense recognized her role in anticipating the discipline of computer programming by naming its Ada programming language after her in the early 1980s.

No doubt thinking of the public’s fear of technology that Mary Shelley had tapped into with Frankenstein, Ada figured she’d better reassure her readers that Babbage’s Analytical Engine did not actually think for itself. She assured them that the machine could only do what people instructed it to do. Nevertheless, the Analytical Engine was very close to being a true computer in the modern sense of the word, and “what people instructed it to do” really amounted to what we today call computer programming.

The Analytical Engine that Babbage designed would have been a huge, loud, outrageously expensive, beautiful, gleaming, steel-and-brass monster. Numbers were to be stored in registers composed of toothed wheels, and the adding and carrying over of numbers was done through cams and ratchets. It was supposed to be capable of storing up to 1,000 numbers with a limit of 50 digits each. This internal storage capacity would be described today in terms of the machine’s memory size. By modern standards, the Analytical Engine would have been absurdly slow—capable of less than one addition operation per second—but it actually had more memory than the first useful computers of the 1940s and 1950s and the early microcomputers of the 1970s.
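To put that storage figure in modern terms, the short calculation below converts 1,000 numbers of 50 decimal digits each into bits and bytes. It is only a rough sketch: the engine was designed to hold decimal digits on toothed wheels, so the binary equivalent is an assumption made for comparison, and the names used here are illustrative.

```python
import math

# Figures taken from the text: 1,000 stored numbers of up to 50 decimal digits.
NUMBERS = 1_000
DIGITS_PER_NUMBER = 50


def bits_for_decimal_digits(digits: int) -> int:
    """Smallest number of bits able to hold any value with this many decimal digits."""
    largest_value = 10 ** digits - 1
    return largest_value.bit_length()


total_digits = NUMBERS * DIGITS_PER_NUMBER
bits_per_number = bits_for_decimal_digits(DIGITS_PER_NUMBER)
total_bits = NUMBERS * bits_per_number
total_bytes = math.ceil(total_bits / 8)

print(f"{total_digits:,} decimal digits")
print(f"{bits_per_number} bits per number, {total_bits:,} bits in all")
print(f"about {total_bytes / 1024:.1f} KB if stored in binary")
```

Under that assumption the design works out to roughly 20 KB of storage.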

Figure 2. Ada Byron, Lady Lovelace Ada Byron (1815-1852) promoted and programmed Babbage’s Analytical Engine, predicting that machines like it would one day do remarkable things such as compose music. (Courtesy of John Murray Publishers Ltd.)

Although he came up with three separate, highly detailed plans for his Analytical Engine, Babbage never constructed that machine, nor his simpler but also enormously ambitious Difference Engine. For more than a century, it was thought that the machining technology of his time was simply inadequate to produce the thousands of precision parts that the machines required. Then in 1991, Doron Swade, senior curator of computing for the Science Museum of London, succeeded in constructing Babbage’s Difference Engine using only the technology, techniques, and materials available to Babbage in his time. Swade’s achievement revealed the great irony of Babbage’s life. A century before anyone would attempt the task again, he had succeeded in designing a computer. His machines would, in fact, have worked, and could have been built. The reasons for Babbage’s failure to carry out his dream all have to do with his inability to get sufficient funding, due largely to his propensity for alienating those in a position to provide it.

If Babbage had been less confrontational or if Lord Byron’s daughter had been a wealthier woman, there might have been an enormous steam-engine computer belching clouds of logic over Dickens’s London, balancing the books of some real-life Scrooge, or playing chess with Charles Darwin or another of Babbage’s celebrated intellectual friends. But, as Mary Shelley had predicted, electricity turned out to be the force that would bring the thinking machine to life.

The computer would marry electricity with logic.

Calculating Machines

Across the Atlantic, the American logician Charles Sanders Peirce was lecturing on the work of George Boole, the fellow who gave his name to Boolean algebra. In doing so, Peirce brought symbolic logic to the United States and radically redefined and expanded Boole’s algebra in the process. Boole had brought logic and mathematics together in a particularly cogent way, and Peirce probably knew more about Boolean algebra than anyone else in the mid-19th century.

But Peirce saw more. He saw a connection between logic and electricity.

By the 1880s, Peirce figured out that Boolean algebra could be used as the model for electrical switching circuits. The true/false distinction of Boolean logic mapped exactly to the way current flowed through the on/off switches of complex electrical circuits. Logic, in other words, could be represented by electrical circuitry. Therefore, electrical calculating machines and logic machines could, in principle, be built. They were not merely the fantasy of a novelist. They could—and would—happen.
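The mapping can be made concrete with a small sketch. It assumes the standard textbook correspondence, which the passage above does not spell out: two switches wired in series pass current only when both are closed (AND), two wired in parallel pass current when either is closed (OR), and a normally closed contact passes current only when it is not operated (NOT). The function names and the sample circuit are illustrative.

```python
from itertools import product


def series(a: bool, b: bool) -> bool:
    """Two switches in series: current flows only if both are closed (AND)."""
    return a and b


def parallel(a: bool, b: bool) -> bool:
    """Two switches in parallel: current flows if either is closed (OR)."""
    return a or b


def normally_closed(a: bool) -> bool:
    """A normally closed contact: current flows only when it is not operated (NOT)."""
    return not a


def circuit(a: bool, b: bool, c: bool) -> bool:
    """A sample circuit wired as (a AND b) OR (NOT c)."""
    return parallel(series(a, b), normally_closed(c))


# Print the truth table of the sample circuit, one switch setting per line.
for a, b, c in product([False, True], repeat=3):
    print(a, b, c, "->", circuit(a, b, c))
```

Each row of the printed table is one setting of the three switches, with True standing for a closed switch on the left and for flowing current on the right.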

One of Peirce’s students, Allan Marquand, actually designed (but did not build) an electric machine to perform simple logic operations in 1885. The switching circuit, which Peirce showed could implement Boolean algebra in electrical form, is one of the fundamental elements of a computer. The unique feature of such a device is that it manipulates information, as opposed to electrical currents or locomotives.

The substitution of electrical circuitry for mechanical switches made possible smaller computing devices. In fact, the first electric logic machine ever made was a portable device built by Benjamin Burack, which he designed to be carried in a briefcase. Burack’s logic machine, built in 1936, could process statements made in the form of syllogisms. For example, given “All men are mortal; Socrates is a man,” it would then accept “Socrates is mortal” and reject “Socrates is a woman.” Such erroneous deductions closed circuits and lit up the machine’s warning lights, indicating the logical error committed.
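The accept-or-reject behavior described here can be imitated in a few lines. This is only a sketch of the logic, not a reconstruction of how Burack wired his machine: it assumes premises of just two kinds, "all X are Y" rules and "this individual is an X" facts, and every name in it is hypothetical.

```python
def derive_categories(subset_rules, memberships, individual):
    """Return every category the individual provably belongs to.

    subset_rules: (narrower, broader) pairs, each meaning "all narrower are broader".
    memberships: maps an individual to the categories stated outright in the premises.
    """
    known = set(memberships.get(individual, ()))
    changed = True
    while changed:  # keep applying the rules until nothing new can be derived
        changed = False
        for narrower, broader in subset_rules:
            if narrower in known and broader not in known:
                known.add(broader)
                changed = True
    return known


def accepts(conclusion, subset_rules, memberships, individual):
    """True if the conclusion follows from the premises, False otherwise."""
    return conclusion in derive_categories(subset_rules, memberships, individual)


rules = [("man", "mortal")]      # "All men are mortal"
facts = {"Socrates": ["man"]}    # "Socrates is a man"

print(accepts("mortal", rules, facts, "Socrates"))
print(accepts("woman", rules, facts, "Socrates"))
```

A rejected conclusion here is simply one that cannot be derived from the premises, which plays the role of the warning light.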

Burack’s device was a special-purpose machine with limited capabilities. Nevertheless, most of the special-purpose computing devices built around that time dealt with only numbers, and not logic. Back when Peirce was working out the connection between logic and electricity, Herman Hollerith was designing a tabulating machine to compute the US census of 1890.

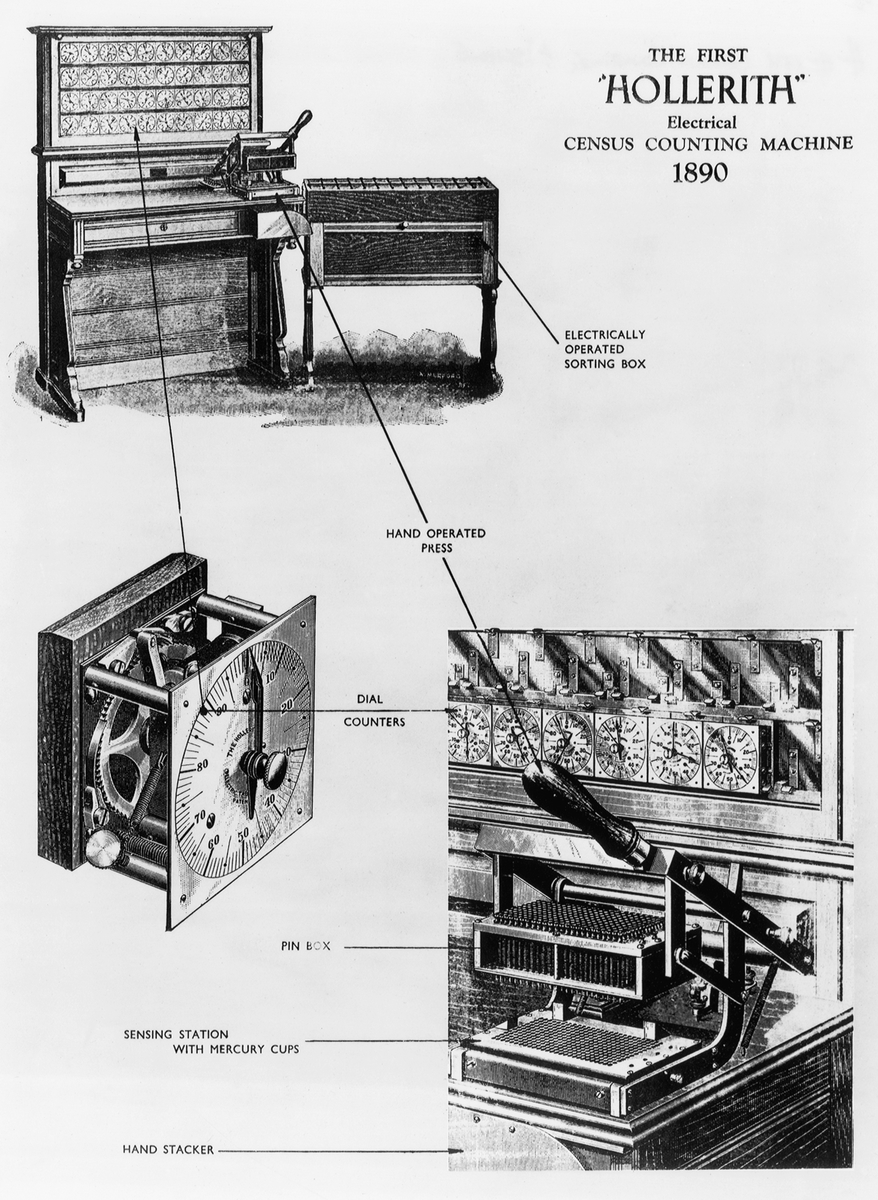

Figure 3. Herman Hollerith Hollerith invented the first large-scale data-processing device, which was successfully used to compute the 1890 census. His work created the data-processing industry. (Courtesy of IBM Archives)

Figure 4. Hollerith Census Counting Machine Hollerith’s Census Counting Machine cut the time for computing the 1890 census by an order of magnitude. (Courtesy of IBM Archives)

Hollerith’s company was eventually absorbed by an enterprise that came to be called the International Business Machines Corporation. By the late 1920s, IBM was making money selling special-purpose calculating machines to businesses, enabling those businesses to automate routine numerical tasks. But the IBM machines weren’t computers, nor were they logic machines like Burack’s. They were just big, glorified calculators.

The Birth of the Computer

Spurred by Claude Shannon’s master’s thesis at MIT, which explained how electrical switching circuits could be used to model Boolean logic (as Peirce had foreshadowed 50 years earlier), IBM executives agreed in the 1930s to finance a large computing machine based on electromechanical relays. Although they later regretted it, IBM executives gave Howard Aiken, a Harvard professor, the then-huge sum of $500,000 to develop the Mark I, a calculating device largely inspired by Babbage’s Analytical Engine. Babbage, though, had designed a purely mechanical machine. The Mark I, by comparison, was an electromechanical machine with electrical relays serving as the switching units and banks of relays serving as space for number storage. Calculation was a noisy affair; the electrical relays clacked open and shut incessantly. When the Mark I was completed in 1944, it was widely hailed as the electronic brain of science-fiction fame made real. But IBM executives were less than pleased when, as they saw it, Aiken failed to acknowledge IBM’s contribution at the unveiling of the Mark I. And IBM had other reasons to regret its investment. Even before work began on the Mark I device, technological developments elsewhere had made it obsolete.

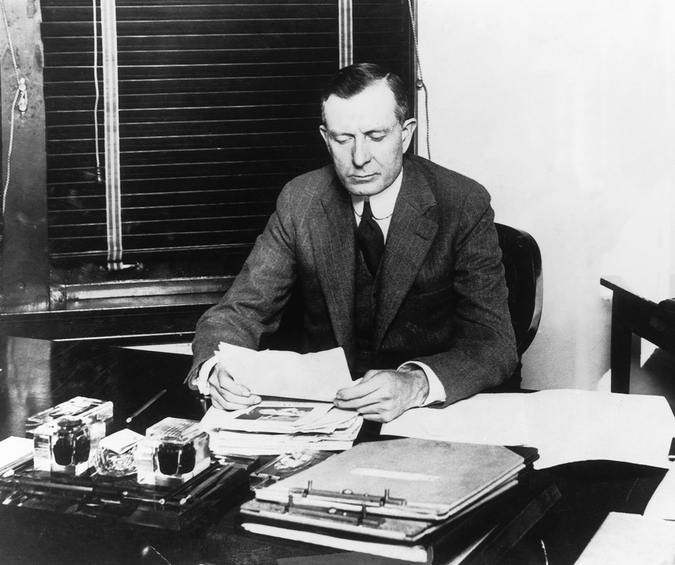

Figure 5. Thomas J. Watson, Sr. Watson went to work for Hollerith’s pioneering data-processing firm in 1914 and later turned it into IBM. (Courtesy of IBM Archives)

Electricity was making way for the emergence of electronics. Just as others had earlier replaced Babbage’s steam-driven wheels and cogs with electrical relays, John Atanasoff, a professor of mathematics and physics at Iowa State College, saw how electronics could replace the relays. Shortly before the American entry into World War II, Atanasoff, with the help of Clifford Berry, designed the ABC, the Atanasoff-Berry Computer, a device whose switching units were to be vacuum tubes rather than relays.

This substitution was a major technological advance. Vacuum-tube machines could, in principle, do calculations considerably faster and more efficiently than relay machines. The ABC, like Babbage’s Analytical Engine, was never completed, probably because Atanasoff got less than $7,000 in grant money to build it. Atanasoff and Berry did assemble a simple prototype, a mass of wires and tubes that resembled a primitive desk calculator. But by using tubes as switching elements, Atanasoff greatly advanced the development of the computer. The added efficiency of vacuum tubes over relay switches would make the computer a reality.

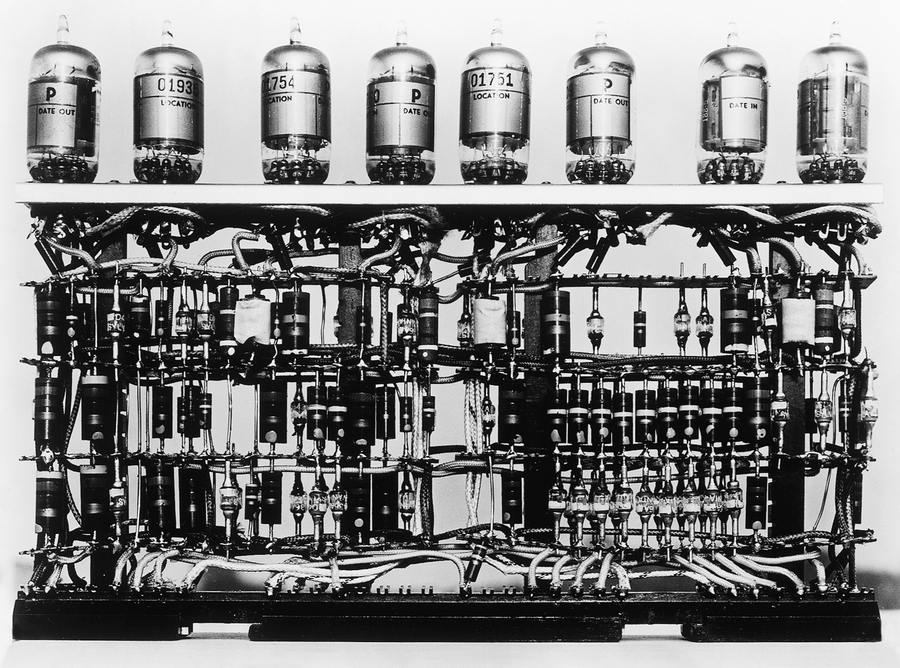

Figure 6. Vacuum tubes In the 1950s computers were filled with vacuum tubes, such as these from the IBM 701. (Courtesy of IBM Archives)

The vacuum tube is a glass tube with the air removed. Thomas Edison discovered that electricity travels through the vacuum under certain conditions, and Lee de Forest turned vacuum tubes into electrical switches using this “Edison effect.” In the 1950s, vacuum tubes were used extensively in electronic devices from televisions to computers. Today you can still see the occasional tube-based computer display or television.

By the 1930s, the advent of computing machines was apparent. It also seemed that computers were destined to be huge and expensive special-purpose devices. It took decades before they became much smaller and cheaper, but they were already on their way to becoming more than special-purpose machines.

It was British mathematician Alan Turing who envisioned a machine designed for no other purpose than to read coded instructions for any describable task and to follow the instructions to complete the task. This was truly something new under the sun. Because it could perform any task described in the instructions, such a machine would be a true general-purpose device. Perhaps no one before Turing had ever entertained an idea this large. But within a decade, Turing’s visionary idea became reality. The instructions became programs, and his concept, in the hands of another mathematician, John von Neumann, became the general-purpose computer.

Most of the work that brought the computer into existence happened in secret laboratories during World War II. That’s where Turing was working. In the US in 1943, at the Moore School of Electrical Engineering in Philadelphia, John Mauchly and J. Presper Eckert proposed the idea for a computer. Shortly thereafter they were working with the US military on ENIAC (Electronic Numerical Integrator and Computer), which would be the first all-electronic digital computer. With the exception of the peripheral machinery it needed for information input and output, ENIAC was purely a vacuum-tube machine.

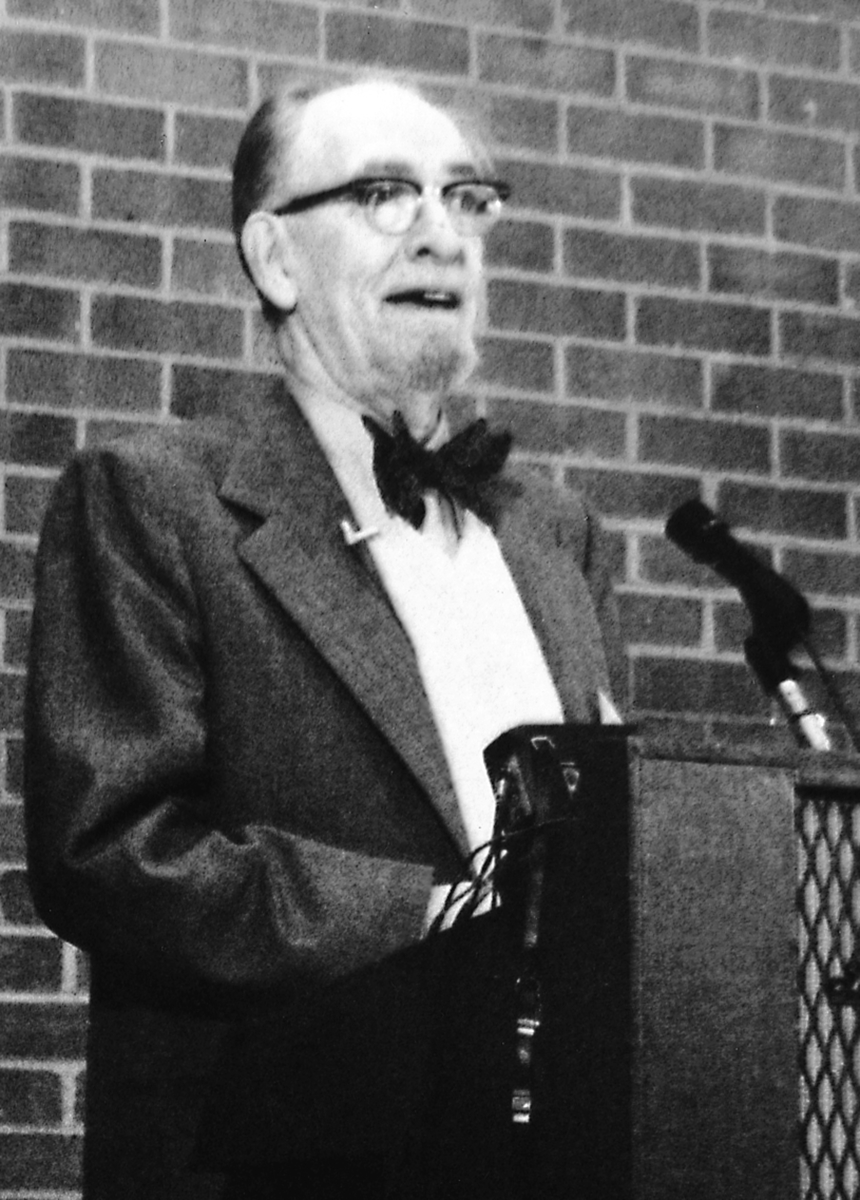

Figure 7. John Mauchly Mauchly, cocreator of ENIAC, is seen here speaking to early personal-computer enthusiasts at the 1976 Atlantic City Computer Festival. (Courtesy of David H. Ahl)

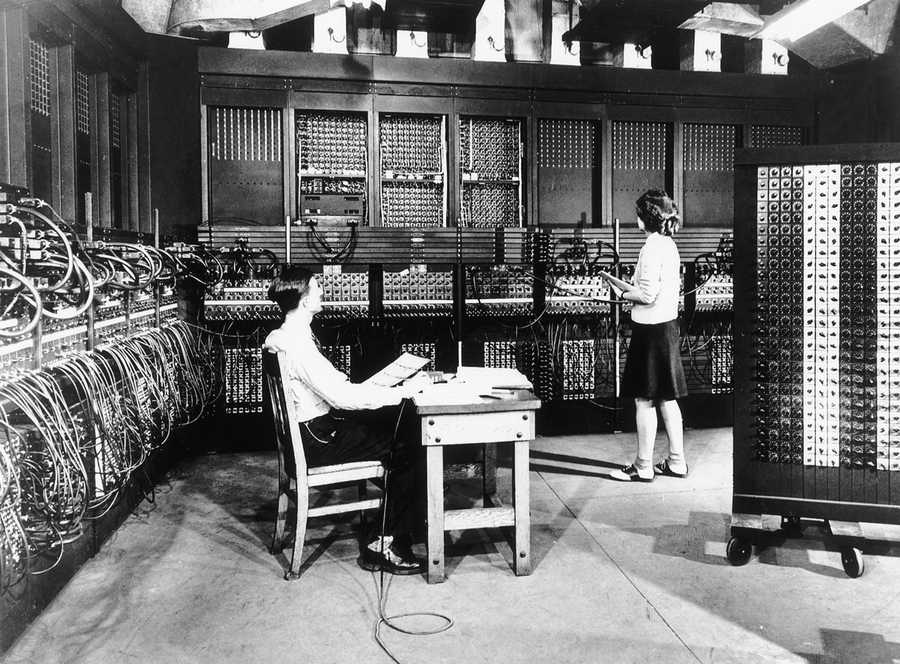

Figure 8. ENIAC The first all-electronic digital computer was completed in December 1945.

(Courtesy of IBM Archives)

Credit for inventing the electronic digital computer is disputed, and perhaps ENIAC was based in part on ideas Mauchly hatched during a visit to John Atanasoff. But ENIAC was real. Mauchly and Eckert attracted a number of bright mathematicians to the ENIAC project, including the brilliant John von Neumann. Von Neumann became involved with the project and made various (and variously reported) contributions to building ENIAC, and in addition offered an outline for a more sophisticated machine called EDVAC (Electronic Discrete Variable Automatic Computer).

Because of von Neumann, the emphasis at the Moore School swung from technology to logic. He saw EDVAC as more than a calculating device. He felt that it should be able to perform logical as well as arithmetic operations and be able to operate on coded symbols. Its instructions for operating on—and interpreting—the symbols should themselves be symbols coded into the machine and operated on. This was the last fundamental insight in the conception of the modern computer. By specifying that EDVAC should be programmable by instructions that were themselves fed to the machine as data, von Neumann created the model for the stored-program computer.
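A toy machine shows what it means for instructions to be fed to the machine as data. The sketch below is not EDVAC's design: the four opcodes, the encoding of each instruction as opcode times 100 plus an address, and the eight-cell memory are all invented for illustration. What it does share with the stored-program idea is that the program and the numbers it works on sit in the same memory as the same kind of value.

```python
# Hypothetical opcodes for an illustrative one-accumulator machine.
HALT, LOAD, ADD, STORE = 0, 1, 2, 3


def run(memory, max_steps=1000):
    """Execute the program stored in `memory` and return the memory after HALT."""
    memory = list(memory)   # work on a copy so the caller's list is untouched
    accumulator = 0
    counter = 0             # address of the next instruction to fetch
    for _ in range(max_steps):
        opcode, address = divmod(memory[counter], 100)  # decode the fetched word
        counter += 1
        if opcode == HALT:
            return memory
        if opcode == LOAD:
            accumulator = memory[address]
        elif opcode == ADD:
            accumulator += memory[address]
        elif opcode == STORE:
            memory[address] = accumulator
        else:
            raise ValueError(f"unknown opcode {opcode}")
    raise RuntimeError("step limit reached without HALT")


# Instructions and data share one memory; both are plain integers.
program = [
    105,  # cell 0: LOAD the value in cell 5
    206,  # cell 1: ADD the value in cell 6
    307,  # cell 2: STORE the result in cell 7
    0,    # cell 3: HALT
    0,    # cell 4: unused
    2,    # cell 5: data
    3,    # cell 6: data
    0,    # cell 7: the result goes here
]

result = run(program)
print(result[7])

# Changing one word of memory changes what the machine does: cell 1 now adds cell 5.
doubled = run([105, 205, 307, 0, 0, 2, 3, 0])
print(doubled[7])
```

Because the program is just numbers in memory, reprogramming the machine means writing different numbers, not rewiring it.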

After World War II, von Neumann proposed a method for turning ENIAC into a programmable computer like EDVAC, and Adele Goldstine wrote the 55-operation language that made the machine easier to operate. After that, no one ever again used ENIAC in its original mode of operation.

When development on ENIAC was finished in early 1946, it ran 1,000 times faster than its electromechanical counterparts. But electronic or not, it still made noise. ENIAC was a room full of clanking Teletype machines and whirring tape drives, in addition to the walls of relatively silent electronic circuitry. It had 20,000 switching units, weighed 30 tons, and burned 150,000 watts of energy. Despite all that electrical power, at any given time ENIAC could handle only 20 numbers of 10 decimal digits each. But even before construction was completed on ENIAC, it was put to significant use. In 1945, it performed calculations used in the atomic-bomb testing at Los Alamos, New Mexico.

Figure 9. John von Neumann Von Neumann was a brilliant polymath who made foundational contributions to programming and the ENIAC and EDVAC computers. (Courtesy of The Computer Museum History Center, San Jose)

A new industry emerged after World War II when the secret labs began to disclose their discoveries and creations. Building computers immediately became a business, and by the very nature of the equipment, it became a big business. With the help of engineers John Mauchly and J. Presper Eckert, who were fresh from their ENIAC triumph, the Remington Typewriter Company became Sperry Univac. For a few years, the name Univac was synonymous with computers, just as the name Kleenex came to be synonymous with facial tissues. Sperry Univac had some formidable competition. IBM executives recovered from the disappointment of the Mark I and began building general-purpose computers. The two companies developed distinctive operating styles: IBM was the land of blue pinstripe suits, whereas the halls of Sperry Univac were filled with young academics in sneakers. Whether because of its image or its business savvy, before long IBM took the industry-leader position away from Sperry Univac.

Soon most computers were IBM machines, and the company’s share of the market grew with the market itself. Other companies emerged, typically under the guidance of engineers who had been trained at IBM or Sperry Univac. Control Data Corporation (CDC) in Minneapolis spun off from Sperry Univac, and soon computers were made by Honeywell, Burroughs, General Electric, RCA, and NCR. Within a decade, eight companies came to dominate the growing computer market, but with IBM so far ahead of the others in revenues, they were often referred to as Snow White (IBM) and the Seven Dwarfs.

But IBM and the other seven were about to be taught a lesson by some brash upstarts. A new kind of computer emerged in the 1960s: smaller, cheaper, and referred to, in imitation of the then-popular miniskirt, as the minicomputer. Among the most significant companies producing smaller computers were Digital Equipment Corporation (DEC) in the Boston area and Hewlett-Packard (HP) in Palo Alto, California. The computers these companies were building were general-purpose machines in the Turing–von Neumann sense, and they were getting more compact, more efficient, and more powerful. Soon, advances in core computer technology would allow even more impressive advances in computer power, efficiency, and miniaturization.

The Breakthrough

Inventing the transistor meant the fulfillment of a dream.

-Ernest Braun and Stuart MacDonald, Revolution in Miniature

In the 1940s, the switching units in computers were mechanical relays that constantly opened and closed, clattering away like freight trains. In the 1950s, vacuum tubes replaced mechanical relays. But tubes were a technological dead end. They could be made only just so small, and because they generated heat, they had to be spaced a certain distance apart from one another. As a result, tubes afflicted the early computers with a sort of structural elephantiasis.

But by 1960, physicists working on solid-state elements had introduced an entirely new component into the mix. The device that consigned the vacuum tube to the back-alley bin was the transistor, a tiny, seemingly inert slice of crystal with interesting electrical properties. The transistor was immediately recognized as a revolutionary development. In fact, John Bardeen, Walter Brattain, and William Shockley shared the 1956 Nobel Prize in physics for their work on the innovation.

The transistor was significant for more than merely making another bit of technology obsolete. Resulting from a series of experiments in the application of quantum physics, transistors changed the computer from a “giant electronic brain” that was the exclusive domain of engineers and scientists to a commodity that could be purchased like a television set. The transistor was the technological breakthrough that made both the minicomputers of the 1960s and the personal-computer revolution of the 1970s possible.

Bardeen and Brattain introduced “the major invention of the century” in 1947, two days before Christmas. But to understand the real significance of the device that came into existence that winter day in Murray Hill, New Jersey, you have to look back to research done years before.

Inventing the Transistor

In the 1940s, Bardeen and Shockley were working in apparently unrelated fields. Experiments in quantum physics resulted in some odd predictions (which were later borne out) about the behavior that crystals of certain chemical elements, such as germanium and silicon, would display in an electrical field. These crystals could not be classified as either insulators or conductors, so they were simply called semiconductors. Semiconductors had one property that particularly fascinated electrical engineers: a semiconductor crystal could be made to conduct electricity in one direction but not in the other. Engineers put this discovery to practical use. Tiny slivers of such crystals were used to “rectify” electrical current; that is, to turn alternating current into direct current. Early radios, called crystal sets, were the first commercial products to use these crystal rectifiers.

The crystal rectifier was a curious item, a slice of mineral material that did useful work but had no moving parts: a solid-state device. But the rectifier knew only one trick. A different device soon replaced it almost entirely: Lee de Forest’s triode, the vacuum tube that made radios glow. The triode was more versatile than the crystal rectifier; it could both amplify a current passing through it and use a weak secondary current to alter a strong current passing from one of its poles to the other. It was a step in the marriage of electricity and logic, and this capability to change one current by means of another would be essential to EDVAC-type computer design. At the time, though, researchers saw that the triode’s main application lay in telephone switching circuits.

Naturally, people at AT&T, and especially at its research branch Bell Labs, became interested in the triode. William Shockley was working for Bell Labs at the time and looking at the effect impurities had on semiconductor crystals. Trace amounts of other substances could provide the extra electrons needed to carry electrical current in the devices. Shockley convinced Bell Labs to let him put together a team to study this intriguing development. His team consisted of experimental scientist Walter Brattain and theoretician John Bardeen. For some time the group’s efforts went nowhere. Similar research was underway at Purdue University in Lafayette, Indiana, and the Bell group kept close tabs on the work going on there.

Then Bardeen discovered that an inhibiting effect on the surface of the crystal was interfering with the flow of current. Brattain conducted the experiment that proved Bardeen right, and on December 23, 1947, the transistor was born. The transistor did everything the vacuum tube did, and it did it better. It was smaller, it didn’t generate as much heat, and it didn’t burn out.

Integrated Circuits

Most important, the functions performed by several transistors could be incorporated into a single semiconductor device. Researchers quickly set about the task of constructing these sophisticated semiconductors. Because these devices integrated a number of transistors into a more complex circuit, they were called integrated circuits, or ICs. Because they essentially were tiny slivers of silicon, they also came to be called chips.

Building ICs was a complicated and expensive process, and an entire industry devoted to making them soon sprang up. The first companies to begin producing chips commercially were the existing electronics companies. One very early start-up company was Shockley Semiconductor, which William Shockley founded in 1955 in his hometown of Palo Alto. Shockley’s firm employed many of the world’s best semiconductor people. Some of those folks didn’t stay with the company for long. Shockley Semiconductor spawned Fairchild Semiconductor, and Silicon Valley grew from this root.

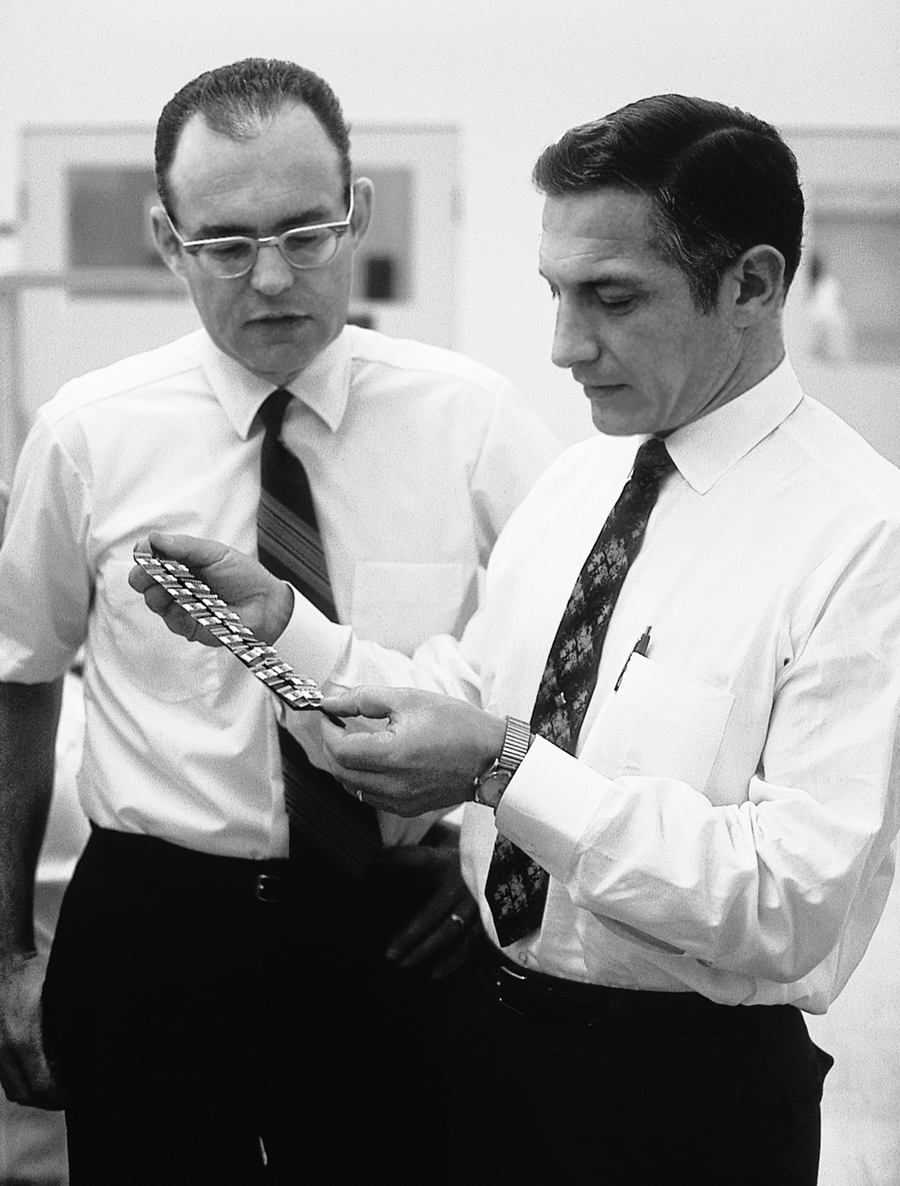

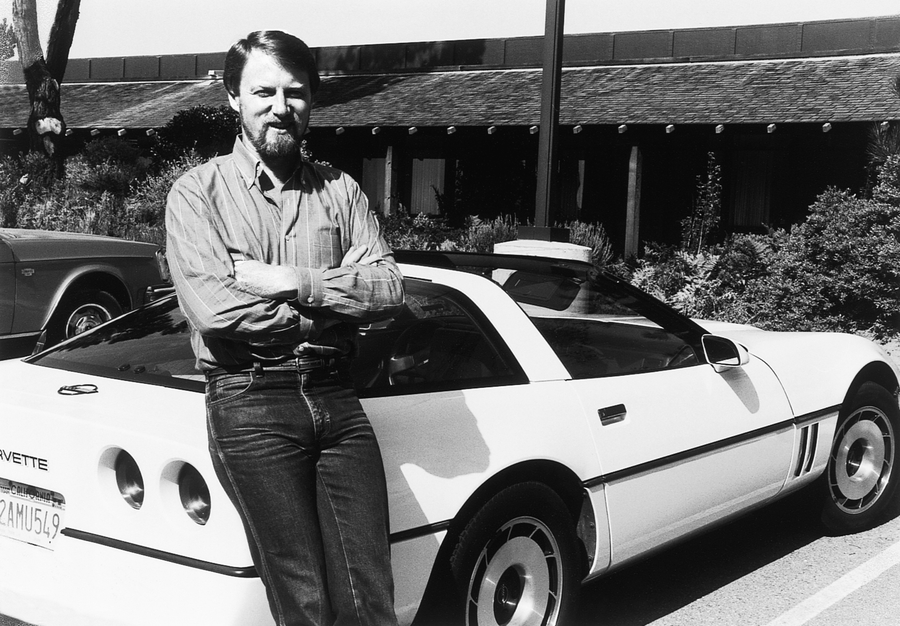

Figure 10. Gordon Moore and Robert Noyce Gordon Moore (left) and Robert Noyce founded Intel, which became the computer industry’s semiconductor powerhouse. (Courtesy of Intel)

A decade after Fairchild was formed, virtually every semiconductor company in existence could boast a large number of former Fairchild employees. Even the big electronics companies, such as Motorola, that entered the semiconductor industry in the 1960s employed ex-Fairchild engineers. And with some notable exceptions (RCA, Motorola, and Texas Instruments), most of the semiconductor companies were located within a few miles of Shockley’s operation in Palo Alto in the Santa Clara Valley, soon to be rechristened Silicon Valley. The semiconductor industry grew with amazing speed, and the size and price of its products shrank at the same pace. Competition was fierce.

At first, little demand existed for highly complex ICs outside of the military and aerospace industries. Certain kinds of ICs, though, were in common use in large mainframe computers and minicomputers. Of paramount importance were memory chips—ICs that could store data and retain them as long as they were fed power. Memory chips were the wedge that took semiconductors mainstream.

Memory chips at the time embodied the functions of hundreds of transistors. Other ICs weren’t designed to retain the data that flowed through them, but instead were programmed to change the data in certain ways in order to perform simple arithmetic or logic operations on it. Then, in the early 1970s, the runaway demand for electronic calculators led to the creation of a new and considerably more powerful computer chip.

Critical Mass

The microprocessor has brought electronics into a new era. It is altering the structure of our society.

-Robert Noyce and Marcian Hoff, Jr., “History of Microprocessor Development at Intel,” IEEE Micro, 1981

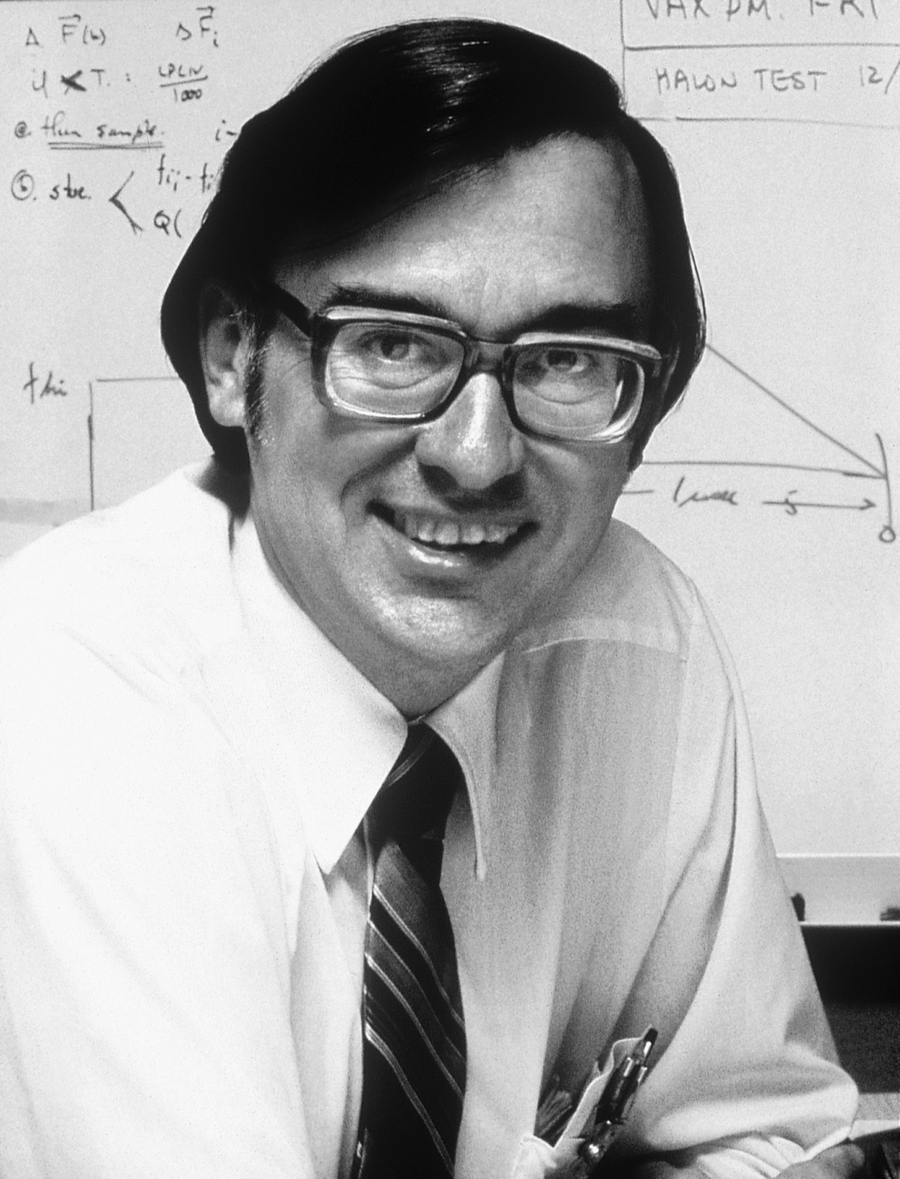

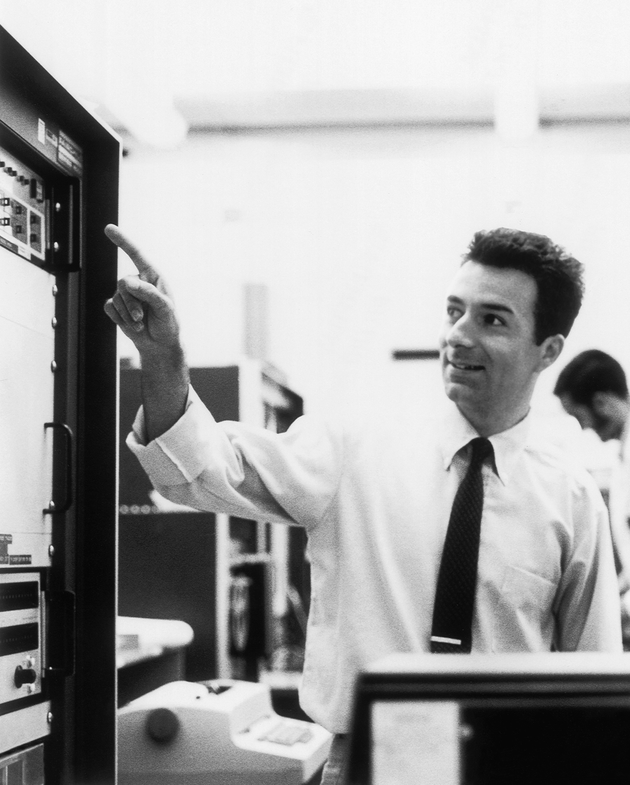

Figure 11. Ted Hoff Marcian “Ted” Hoff led the design effort for Intel’s first microprocessor.

(Courtesy of Intel Corp.)

In early 1969, Intel Development Corporation, a Silicon Valley semiconductor manufacturer, received a commission from a Japanese calculator company called Busicom to produce chips for a line of its calculators. Intel had the credentials: it was a Fairchild spinoff, and its president, Robert Noyce, had helped invent the integrated circuit. Although Intel had opened its doors for business only a few months earlier, the company was growing as fast as the semiconductor industry.

An engineer named Marcian “Ted” Hoff had joined Intel a few months earlier as its twelfth employee, and when he began working on the Busicom job, the company already employed 200 people. Hoff was fresh from academia. After earning a PhD, he continued as a researcher at Stanford University’s Electrical Engineering Department, where his research on the design of semiconductor memory chips led to several patents and to the job at Intel. Noyce felt that Intel should produce semiconductor memory chips and nothing else, and he had hired Hoff to dream up applications for these memory chips.

But when Busicom proposed the idea for calculator chips, Noyce allowed that taking a custom job while the company was building up its memory business wouldn’t hurt.

The 4004

Hoff was sent to meet with the Japanese engineers who came to discuss what Busicom had envisioned. Because Hoff had a flight to Tahiti scheduled for that evening, the first meeting with the engineers was brief. Tahiti evidently gave him time for contemplation, because he returned from paradise with some firm ideas about the job.

In particular, he was annoyed that the Busicom calculator would cost almost as much as a minicomputer. Minicomputers had become relatively inexpensive, and research laboratories all over the country were buying them. It was not uncommon to find two or three minicomputers in a university’s psychology or physics department.

Hoff had worked with DEC’s new PDP-8 computer, one of the smallest and cheapest of the lot, and found that it had a very simple internal setup. Hoff knew that the PDP-8, a computer, could do everything the proposed Busicom calculator could do and more, for almost the same price. To Ted Hoff, this was an affront to common sense.

Hoff asked the Intel bosses why people should pay the price of a computer for something that had a fraction of the capacity. The question revealed his academic bias and his naiveté about marketing: he would rather have a computer than a calculator, so he figured surely everyone else would, too.

The marketing people patiently explained that it was a matter of packaging. If someone wanted to do only calculations, they didn’t want to have to fire up a computer to run a calculator program. Besides, most people, even scientists, were intimidated by computers. A calculator was just a calculator from the moment you turned it on. A computer was an instrument from the Twilight Zone.

Hoff followed the reasoning, but the idea of building a special-purpose device still rankled when a general-purpose one was just as easy, and no more expensive, to build. Besides, he thought, a general-purpose design would make the project more interesting (to him). He proposed to the Japanese engineers a revised design loosely based on the PDP-8.

The design’s comparison to the PDP-8 computer was only partly applicable. Hoff was proposing a set of chips, not an entire computer. But one of those chips would be critically important in several ways. First, it would be dense. Chips at the time contained no more than 1,000 features—the equivalent of 1,000 transistors—but this chip would at least double that number. In addition, this chip would, like any IC, accept input signals and produce output signals. But whereas these signals would represent numbers in a simple arithmetic chip and logical values (true or false) in a logic chip, the signals entering and leaving Hoff’s chip would form a set of instructions for the IC.

In short, the chip could run programs. The customers were asking for a calculator chip, but Hoff was designing an IC EDVAC, a true general-purpose computing device on a sliver of silicon. A computer on a chip. Although Hoff’s design resembled a very simple computer, it left out some computer essentials, such as memory and peripherals for human input and output. The term that evolved to describe such a device was microprocessor, and microprocessors were general-purpose devices specifically because of their programmability.

Because the Intel microprocessor used the stored-program concept, the calculator manufacturers could make the microprocessor act like any kind of calculator they wanted. At any rate, that was what Hoff had in mind. He was sure it was possible, and just as sure that it was the right approach. But the Japanese engineers weren’t impressed. Frustrated, Hoff sought out Noyce, who encouraged him to proceed anyway, and when chip designer Stan Mazor left Fairchild to come to Intel, Hoff and Mazor set to work on the design for the chip.

At that point, Hoff and Mazor had not actually produced an IC. A specialist would still have to transform the design into a two-dimensional blueprint, and this pattern would have to be etched into a slice of silicon crystal. These later stages in the chip’s development cost money, so Intel did not intend to move beyond the logic-design stage without talking further with its skeptical customer.

In October 1969, Busicom representatives flew in from Japan to discuss the Intel project. The Japanese engineers presented their requirements, and in turn Hoff presented his and Mazor’s design. Although the requirements and the design did not quite match, after some discussion Busicom decided to accept the Intel design for the chip. The deal gave Busicom an exclusive contract for the chips. This was not the best deal for Intel, but at least the project was going ahead.

Hoff was relieved to have the go-ahead. They called the chip the 4004, which was the approximate number of transistors the single device replaced and an indication of its complexity. Hoff wasn’t the only person ever to have thought of building a computer on a chip, but he was the first to launch a project that actually got carried out. Along the way, he and Mazor solved a number of design problems and fleshed out the idea of the microprocessor more fully. But there was a big distance between planning and execution.

Leslie Vadasz, the head of a chip-design group at Intel, knew who he wanted to implement the design: Federico Faggin. Faggin was a talented chip designer who had worked with Vadasz at Fairchild and had earlier built a computer for Olivetti in Italy. The problem was, Faggin didn’t work at Intel. Worse, he couldn’t work at Intel, at least not right away: in the United States on a work visa, he was constrained in his ability to change jobs and still retain his visa. The earliest he would be available was the following spring. The clock ticked and the customer grew frustrated.

Figure 12. Federico Faggin. Faggin was one of the inventors of the microprocessor at Intel and later founded Zilog. (Courtesy of Federico Faggin)

When Faggin finally arrived at Intel in April 1970, he was immediately assigned to implement the 4004 design. Masatoshi Shima, an engineer for Busicom, was due to arrive to examine and approve the final design, and Faggin would set to work turning it into silicon.

Unfortunately, the design was far from complete. Hoff and Mazor had completed the instruction set for the device and an overall design, but the necessary detailed design was nonexistent. Shima understood immediately that the “design” was little more than a collection of ideas. “This is just idea!” he shouted at Faggin. “This is nothing! I came here to check, but there is nothing to check!”

Faggin confessed that he had only arrived recently, and that he was going to have to complete the design before starting the implementation. With help from Mazor and Shima, who extended his stay to six months, he did the job in a remarkably short time, working 12-to-16-hour days. Since he was doing something no one had ever done before, he found himself having to invent techniques to get the job done. In February 1971, Faggin delivered working kits to Busicom, including the 4004 microprocessor and eight other chips necessary to make the calculator work. It was a success.

It was also a breakthrough, but its value was more in what it signified than in what it actually delivered. On one hand, this new thing, the microprocessor, was nothing more than an extension of the IC chips for arithmetic and logic that semiconductor manufacturers had been making for years. The microprocessor merely crammed more functional capability onto one chip. Then again, there were so many functions that the microprocessor could perform, and they were integrated with each other so closely that using the device required learning a new language, albeit a simple one. The instruction set of the 4004, for all intents and purposes, constituted a programming language.

Today’s microprocessors are more complex and powerful than the roomful of circuitry that constituted a computer in 1950. The 4004 chip that Hoff conceived in 1969 was a crude first step toward something that Hoff, Noyce, and Intel management could scarcely anticipate. The 8008 chip that Intel produced two years later was the second crucial step.

The 8008

The 8008 microprocessor was developed for a company then called CTC—Computer Terminal Corporation—and later called Datapoint. CTC had a technically sophisticated computer terminal and wanted some chips designed to give it additional functions.

Once again, Hoff presented a grander vision of how an existing product could be used. He proposed replacing all of the terminal’s internal control circuitry with a single integrated circuit. Hoff and Faggin were interested in the 8008 project partly because the exclusive deal for the 4004 kept that chip tied up. Faggin, who was doing lab work with electronic test equipment, saw the 4004 as an ideal tool for controlling test equipment, but the Busicom deal prevented that.

Because Busicom had exclusive rights to the 4004, Hoff felt that perhaps this new 8008 terminal chip could be marketed and used with testers. The 4004 had drawbacks. It operated on only four binary digits at a time. This significantly limited its computing power because it couldn’t even handle a piece of data the size of a single character in one operation. The new 8008 could. Although another engineer was initially assigned to it, Faggin was soon put in charge of making the 8008 a reality, and by March 1972 Intel was producing working 8008 chips.
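The cost of a 4-bit word can be made concrete with a small sketch. The function below is an illustrative assumption, not a model of either chip's circuitry: it adds two 8-bit values using an adder that is only `word_bits` wide, carrying between chunks, and counts how many add operations that takes.

```python
def add_in_chunks(a, b, word_bits, total_bits=8):
    """Add two total_bits-wide values with an adder only word_bits wide.

    Returns (sum modulo 2**total_bits, number of add operations used).
    """
    mask = (1 << word_bits) - 1
    result, carry, steps = 0, 0, 0
    for shift in range(0, total_bits, word_bits):
        # Add one chunk from each operand, plus the carry from the last chunk.
        chunk = ((a >> shift) & mask) + ((b >> shift) & mask) + carry
        result |= (chunk & mask) << shift
        carry = chunk >> word_bits
        steps += 1
    return result, steps


char = ord("A")  # 65, a character code that does not fit in 4 bits

print(add_in_chunks(char, 1, word_bits=4))  # (66, 2)
print(add_in_chunks(char, 1, word_bits=8))  # (66, 1)
```

With 4-bit words, stepping from "A" to "B" takes two operations and a carry between them; with 8-bit words it takes one.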

Before this happened, though, CTC executives lost interest in the project. Intel now found it had invested a great deal of time and effort in two highly complex and expensive products, the 4004 and 8008, with no mass market for either of them. As competition intensified in the calculator business, Busicom asked Intel to drop the price on the 4004 in order to keep its contract. “For God’s sake,” Hoff urged Noyce, “get us the right to sell these chips to other people.” Noyce did. But possession of that right, it turned out, was no guarantee that Intel would ever exercise it.

Intel’s marketing department was cool to the idea of releasing the chips to the general engineering public. Intel had been formed to produce memory chips, which were easy to use and were sold in volume—like razor blades. Microprocessors, because the customer had to learn how to use them, presented enormous customer-support problems for the young company. Memory chips didn’t.

Hoff countered with ideas for new microprocessor applications that no one had thought of yet. An elevator controller could be built around a chip. Besides, the processor would save money: it could replace a number of simpler chips, as Hoff had shown in his design for the 8008. Engineers would make the effort to design the microprocessor into their products. Hoff knew he himself would.

Hoff’s persistence finally paid off when Intel hired advertising man Regis McKenna to promote the product in a fall 1971 issue of Electronic News. “Announcing a new era in integrated electronics: a microprogrammable computer on a chip,” the ad read. A computer on a chip? Technically the claim was puffery, but when visitors to an electronics show that fall read the product specifications for the 4004, they were duly impressed by the chip’s programmability. And in one sense McKenna’s ad was correct: the 4004 (and even more the 8008) incorporated the essential decision-making power of a computer.

Programming the Chips

Meanwhile, Texas Instruments (TI) had picked up the CTC contract and delivered a microprocessor. TI was pursuing the microprocessor market as aggressively as Intel; Gary Boone of TI had, in fact, just filed a patent application for something called a single-chip computer. Three different microprocessors now existed. But Intel’s marketing department had been right about the amount of customer support the microprocessors demanded. For instance, users needed documentation on the operations the chips performed, the language they recognized, the voltage they used, the amount of heat they dissipated, and a host of other things. Someone had to write these information manuals. At Intel the job was given to an engineer named Adam Osborne, who would later play a very different part in making computers personal.

The microprocessor software formed another kind of essential customer support. A disadvantage of a general-purpose computer or processor is that it does nothing without programs. The chips, as general-purpose processors, needed programs, the instructions that would tell them what to do. To create these programs, Intel first assembled an entire computer around each of its two microprocessor chips. These computers were not commercial products but development systems: tools to help write programs for the processor. They were also, although no one used this term at the time, microcomputers.

One of the first people to begin developing these programs was a professor at the Naval Postgraduate School, located down the coast from Silicon Valley in Monterey, California. Like Osborne, Gary Kildall would be an important figure in the development of the personal computer.

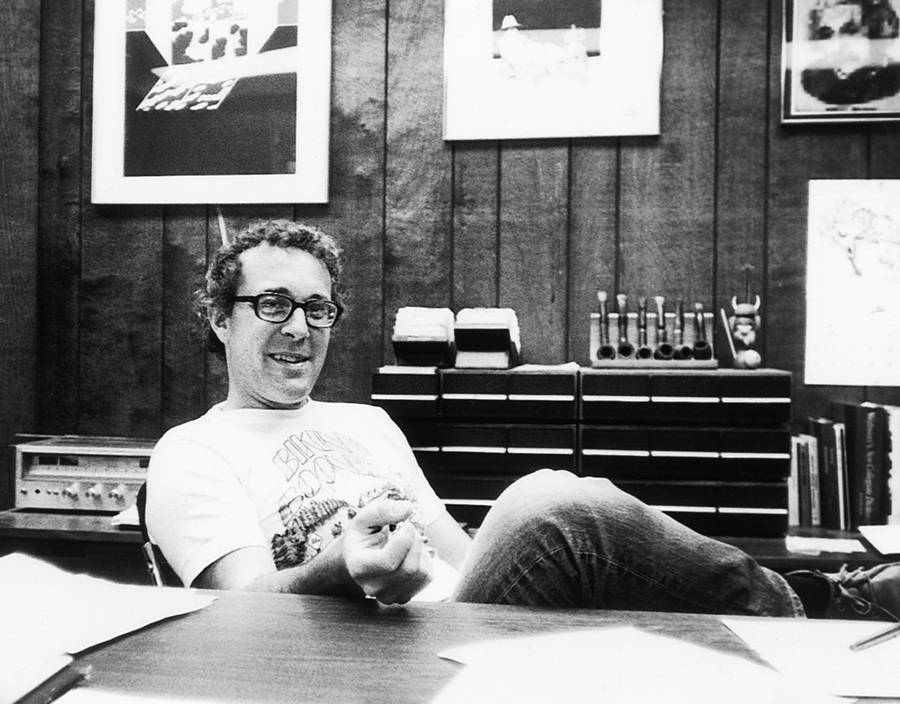

Figure 13. Gary Kildall. Kildall wrote the first programming language for Intel’s 4004 microprocessor, as well as a control program that he would later turn into the personal-computer industry’s most popular operating system. (Courtesy of Tom G. O’Neal)

By late 1972, Kildall had already written a simple language for the 4004: a program that translated cryptic commands into the more cryptic ones and zeroes that formed the internal instruction set of the microprocessor. Although written for the 4004, the program actually ran on a large IBM 360 computer. With this program, one could type commands on an IBM keyboard and generate a file of 4004 instructions that could then be sent to a 4004, if a 4004 were somehow connected to the IBM machine.

Connecting the 4004 to anything at all was hardly a trivial task. The microprocessor had to be plugged into a specially designed circuit board that was equipped with connections to other chips and to devices such as a Teletype machine. The Intel development systems had been created for just this type of problem solving. Naturally, Kildall was drawn to the microcomputer lab at Intel, where the development systems were housed.

Eventually, Kildall contracted with Intel to implement a language for the chip manufacturer. PL/M (Programming Language for Microcomputers) would be a so-called high-level language, in contrast to the low-level machine language that was made up of the instruction set of the microprocessor. With PL/M, one could write a program once and have it run on a 4004 processor, an 8008, or on future processors Intel might produce. This would speed up the programming process.

But writing the language was no simple task. To understand why, you have to think about how computer languages operate.

A computer language is a set of commands a computer can recognize. The computer only responds to that fixed set of commands incorporated into its circuitry or etched into its chips. Implementing a language requires creating a program that will translate the sorts of commands a user can understand into commands the machine can use.
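A minimal sketch of such a translating program follows. Everything in it is an assumption made for illustration: the mnemonics, the 4-bit opcode values in `OPCODES`, and the one-byte instruction layout belong to an imaginary machine, not to the 4004 or 8008. It shows only the general shape of the job, which is turning readable commands into the ones and zeroes a chip would accept.

```python
# Hypothetical opcode table for an imaginary machine (not a real Intel chip).
OPCODES = {"LOAD": 0b0001, "ADD": 0b0010, "STORE": 0b0011, "HALT": 0b1111}


def assemble(source):
    """Translate mnemonic lines into 8-bit machine words, as bit strings.

    Each word is a 4-bit opcode followed by a 4-bit operand (0 to 15).
    Text after a semicolon is treated as a comment and ignored.
    """
    words = []
    for line in source.splitlines():
        line = line.split(";")[0].strip()
        if not line:
            continue
        parts = line.split()
        mnemonic = parts[0].upper()
        operand = int(parts[1]) if len(parts) > 1 else 0
        if mnemonic not in OPCODES:
            raise ValueError(f"unknown command: {mnemonic}")
        if not 0 <= operand <= 15:
            raise ValueError(f"operand out of range: {operand}")
        words.append(format((OPCODES[mnemonic] << 4) | operand, "08b"))
    return words


print(assemble("LOAD 5\nADD 3 ; add three\nHALT"))
# ['00010101', '00100011', '11110000']
```

A high-level language such as PL/M has to do far more than this one-to-one lookup, since a single statement may expand into many machine instructions.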

The microprocessors not only were physically tiny, but also had only limited logic to work with. They got by with a minimum amount of smarts, and therefore were beastly hard to program. It was difficult to design any language for them, let alone a high-level language like PL/M. A friend and coworker of Kildall’s later explained the choice, saying that Gary Kildall wrote PL/M largely because it was a difficult task. Like many important programmers and designers before him and since, Kildall was in it primarily for the intellectual challenge.

But the most significant piece of software Kildall developed at that time was much simpler in its design.

CP/M

Intel’s early microcomputers used paper tape to store information. Therefore, programs had to enable a computer to control the paper-tape reader or paper punch automatically, accept the data electronically as the information streamed in from the tape, store and locate the data in memory, and feed the data out to the paper-tape punch. The computer also had to be able to manipulate data in memory and keep track of which spots were available for data storage and which were in use at any given moment. A lot of bookkeeping. Programmers don’t want to have to think about such picayune details every time they write a program. Large computers automatically take care of these tasks through the use of a program called an operating system. For programmers writing in a mainframe language, the operating system is a given; it’s a part of the way the machine works and an integral feature of the computing environment.

But Kildall was working with a primordial setup. No operating system. Like a carpenter building his own scaffolding, Kildall wrote the elements of an operating system for the Intel machines. This rudimentary operating system had to be very efficient and compact in order to operate on a microprocessor, and it happened that Kildall had the skills and the motivation to make it so. Eventually, that microprocessor operating system evolved into something Kildall called CP/M (Control Program for Microcomputers). When Kildall asked the Intel executives if they had any objections to his marketing CP/M on his own, they simply shrugged and said to go ahead. They had no plans to sell it themselves.

CP/M made Kildall a fortune and helped to launch an industry.

Intel was in uncharted waters. By building microprocessors, the company had already ventured beyond its charter of building memory chips. Although the company was not about to retreat from that enterprise, there was solid resistance to moving even farther afield. It was true that there’d been talk about designing machines around microprocessors, and even about using a microprocessor as the main component in a small computer. But microprocessor-controlled computers seemed to have marginal sales potential at best.

Wristwatches.

That was where microprocessors would find their chief market, Noyce thought. The Intel executives discussed other possible applications. Microprocessor-controlled ovens. Stereos. Automobiles. But it would be up to the customers to build the ovens, stereos, and cars; Intel would only sell the chips. There was a virtual mandate at Intel against making products that could be seen as competing against its own customers.

It made perfect sense. Intel was an exciting place to work in 1972. To Intel’s executives it felt like Intel was at the center of all things innovative, and that the microprocessor industry was going to change the world. It seemed obvious to Kildall, to Mike Markkula (the marketing manager for memory chips), and to others that the innovative designers of microprocessors should be working at the semiconductor companies. They decided to stick to putting logic on slivers of silicon and to leave the building (and programming) of computers and such devices to the mainframe and minicomputer companies.

But when the minicomputer companies didn’t take up the challenge, Markkula, Kildall, and Osborne each thought better of their decision to stick to the chip business. Within the following decade, each of them would create a multimillion-dollar personal computer or personal-computer-software company of his own.

Breakout

We [Digital Equipment Corporation] could have come out with a personal computer in January 1975. If we had taken that prototype, most of which was proven stuff, the PDP-8/A could have been developed and put in production in that seven- or eight-month period.

-David Ahl, former DEC employee and founder of pioneer computer magazine Creative Computing

By 1970, there existed two distinct kinds of computers and two kinds of companies selling them.

The room-sized mainframe computers were built by IBM, CDC, Honeywell, and the other dwarfs. These machines were designed by an entire generation of engineers, cost hundreds of thousands of dollars, and were often custom-built one at a time.

Then you had the minicomputers built by such companies as DEC and Hewlett-Packard. Relatively cheap and compact, these machines were built in larger quantities than the mainframes and sold primarily to scientific laboratories and businesses. The typical minicomputer cost one-tenth as much as a mainframe and took up no more space than a bookshelf.

Minicomputers incorporated semiconductor devices, which reduced the size of the machines. The mainframes also used semiconductor components, but they generally used them to create even more powerful machines that were no smaller in size. Semiconductor tools such as the Intel 4004 were beginning to be used to control peripheral devices, including printers and tape drives, but it was obvious to everyone concerned that the chips could also be used to shrink the computer and make it cheaper. The mainframe computer and minicomputer companies had the money, expertise, and unequaled opportunity to place computers in the hands of nearly everyone. It didn’t take a visionary to see a personal-sized computer that could fit on a desktop or in a briefcase or in a shirt pocket at the end of the path toward increased miniaturization. In the late 1960s and early 1970s, the major players among mainframe and minicomputer companies seemed the most logical candidates for producing a personal computer.

It was obvious that computer development was headed in that direction. Ever since the 1930s, when Benjamin Burack was developing his “logic machine,” people had been building desktop- and briefcase-sized machines that performed computerlike functions. Computer-company engineers and designers at semiconductor companies foresaw a continuing trend of components becoming increasingly cheap, fast, and small year after year. The indicators pointed unmistakably to the development of a small personal computer by, most likely, a minicomputer company.

It was only logical, but it didn’t happen that way. Every one of the existing computer companies passed up the chance to bring computers into the home and to every work desk. The next generation of computers, the microcomputer, was created entirely by individual entrepreneurs working outside the established corporations.

It wasn’t that the idea of a personal computer had never occurred to the decision makers at the major computer companies. Eager engineers at some of those firms offered detailed proposals for building microcomputers and even working prototypes, but the proposals were rejected and the prototypes shelved. In some cases, work actually commenced on personal-computer projects, but eventually they, too, were allowed to wither and die.

The mainframe companies apparently thought that no market existed for low-cost personal computers, and even if there were such a market, they figured it was the minicomputer companies who would exploit it. They were wrong.

Take Hewlett-Packard, a company that grew up in Silicon Valley and was producing everything from mainframe computers to pocket calculators. Senior engineers at HP studied and eventually spurned a design offered by one of their employees, an engineer without a degree named Stephen Wozniak. In rejecting his design, the HP engineers acknowledged that Wozniak’s computer worked and could be built cheaply, but they told him it was not a product for HP. Wozniak eventually gave up on his employers and built his computers out of a garage in a start-up enterprise called Apple.

Likewise, Robert (Bob) Albrecht, who worked for CDC in Minneapolis during the early 1960s, quit in frustration over the company’s unwillingness to even consider looking into the personal-computer market. After leaving CDC, he moved to the San Francisco Bay Area and established himself as a sort of computer guru. Albrecht was interested in exploring ways computers could be used as educational aids. He produced what could be called the first publication on personal computing and spread information on how individuals could learn about and use computers.

DEC

The prime example of an established computer company that failed to explore the new technology was Digital Equipment Corporation. With annual sales close to a billion dollars by 1974, DEC was the first and the largest of the minicomputer companies. DEC made some of the most compact computers available at the time. The PDP-8, which had inspired Ted Hoff to design the 4004, was the closest thing to a personal computer one could find. One version of the PDP-8 was so small that sales reps routinely carried it in the trunks of their cars and set it up at the customer’s site. In that sense, it was one of the first portable computers. DEC could have been the company that created the personal computer. The story of its failure to seize that opportunity gives some indication of the mentality in computer companies’ boardrooms during the early 1970s.

For David Ahl, the story began when he was hired as a DEC marketing consultant in 1969. By that time, he had picked up degrees in electrical engineering and business administration and was finishing up his PhD in educational psychology. Ahl came to DEC to develop its educational product line, the first product line at DEC to be defined in terms of its potential users rather than its hardware.

Figure 14. David Ahl. Ahl left Digital Equipment Corporation in 1974 to start Creative Computing magazine and popularize personal computers. (Courtesy of David H. Ahl)

Four years later, responding to the recession of 1973, DEC cut back on educational-product development. When Ahl protested the cuts, he was fired. Rehired into a division of the company dedicated to developing new hardware, he soon became entirely caught up in building a computer that was smaller than any yet built. Ahl’s group didn’t know what to call the machine, but if it had taken off it certainly would have qualified as a personal computer.

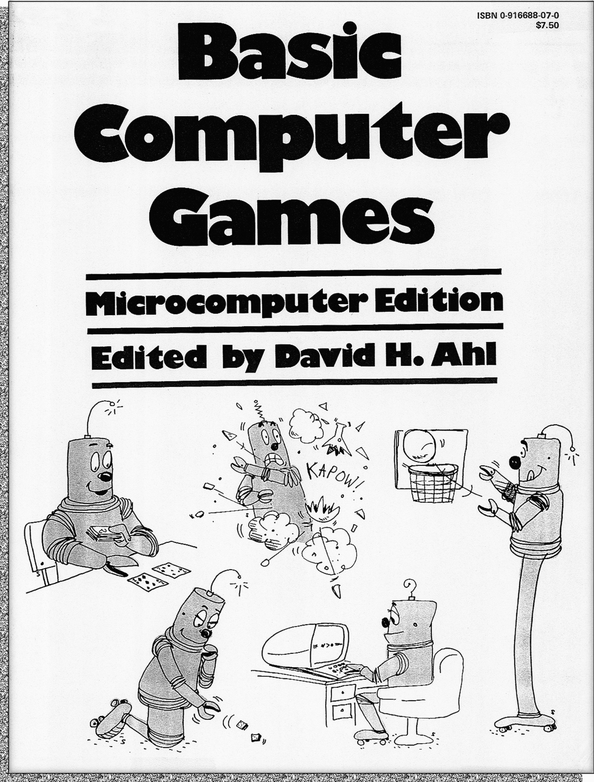

Figure 15. Basic Computer Games. David Ahl’s book Basic Computer Games was translated into eight languages and sold more than a million copies, playing an important role in popularizing personal computers in the late 1970s. (Courtesy of David H. Ahl)

Ahl’s interests had grown somewhat incompatible with the DEC mindset. DEC viewed computers as an industrial product. “Like pig iron. DEC was interested in pushing out iron,” Ahl later recalled. When he was working in DEC’s educational division, Ahl wrote a newsletter that regularly published instructions for playing computer games. Ahl talked the company into publishing a book he had put together, Basic Computer Games. He was beginning to view the computer as an individual educational tool, and games seemed a natural part of that.

DEC wasn’t set up to sell computers to individuals, but Ahl had learned something about the potential market for personal computers while working in DEC’s educational-products division. The division would occasionally receive requests from doctors or engineers or other professionals who wanted a computer to manage their practices. Some of DEC’s machines were actually cheap enough to sell to professionals, but the company wasn’t prepared to handle such requests. A big difference existed between selling to individuals and selling to an organization that could hire engineers and programmers to maintain a computer system and could afford to buy technical support from DEC. The company was not ready to handle customer support for individuals.

The team Ahl was working with intended that this new product bring computers into new markets such as schools. Although its price tag would keep it out of the reach of most households, Ahl saw schools as the wedge to get the machines into the hands of individuals, specifically schoolkids. The machines could be sold in large quantities to schools, to be used individually by students. Ahl figured that Heath, a company specializing in electronics hobby equipment, would be willing to build a kit version of the DEC minicomputer, which would lower the price even more.

The new computer was built into a DEC terminal, inside of which circuit boards thick with semiconductor devices were jammed around the base of the tube. The designers had packed every square inch of the terminal case with electronics. The computer was no larger than a television set, although heavier. Ahl had not designed the device, but he felt as protective of it as if it were his own child. He presented his plan for marketing personal computers at a meeting of DEC’s operations committee.

Kenneth Olsen, the president of the company and regarded throughout the industry as one of its wisest executives, was there along with some vice presidents and a few outside investors. As Ahl later recalled, the board was polite but not enthusiastic about the project, although the engineers seemed interested. After some tense moments, Olsen said that he could see no reason why anyone would want a home computer. Ahl’s heart sank. Although the board had not actually rejected the plan, he knew that without Olsen’s support it would fail.

Ahl was now utterly frustrated. He had been getting calls from executive search firms offering him jobs, and told himself the next time a headhunter called he would accept the offer. Ahl, like Wozniak and Albrecht and many others, had walked out the door and into a revolution.

Hackers

I swore off computers for about a year and a half—the end of the ninth grade and all of the tenth. I tried to be normal, the best I could.

-Bill Gates, cofounder of Microsoft Corporation

Had the personal-computer revolution waited for action from the mainframe-computer and minicomputer companies, the PC might still be a thing of the future. But there were those who would not wait patiently for something to happen, and their very impatience led them to take steps toward creating a revolution of their own. Some of those revolutionaries were incredibly young.

In the late 1960s, before David Ahl lost all patience with DEC, Paul Allen and his school friends at Seattle’s private Lakeside School were working at a company called Computer Center Corporation (or “C Cubed” to Allen and his friends). The boys volunteered their time to help find bugs in the work of DEC system programmers. They learned fast and were getting a little cocky. Soon they were adding touches of their own to make the programs run faster. Bill Gates wasn’t shy about criticizing certain DEC programmers, and pointed out those who repeatedly made the same mistakes.

Hacking

Perhaps Gates got too cocky. Certainly the sense of power he got from controlling those giant computers exhilarated him. One day he began experimenting with the computer security systems. On time-sharing computer systems, such as the DEC TOPS-10 system that Gates knew well, many users shared the same machine and used it simultaneously, via a terminal connected to a mainframe or minicomputer that was often kept in a locked room. Safeguards had to be built into the systems to prevent one user from invading another user’s data files or “crashing” a program—thereby causing it to fail and terminate—or worse yet, crashing the operating system and bringing the whole computer system to a halt.

Gates learned how to invade the DEC TOPS-10 system and, later, other systems. He became a hacker, an expert in the underground art of subverting computer-system security. His baby face and bubbly manner masked a very clever and determined young man who could, by typing just 14 characters on a terminal, bring an entire TOPS-10 operating system to its knees.

He grew into a master of electronic mischief. Hacking brought Gates fame in certain circles, but it also brought him grief. After learning how easily he could crash the DEC operating system, Gates cast about for bigger challenges. The DEC system had no human operator and could be breached without anyone noticing and sounding an alarm. On other systems, human operators continually monitored activity.

For instance, Control Data Corporation had a nationwide network of computers called Cybernet, which CDC claimed was completely reliable at all times. For Gates, that claim amounted to a dare.

A CDC computer at the University of Washington had connections to Cybernet. Gates set to work studying the CDC machines and software; he studied the specifications for the network as though he were cramming for a final exam.

“There are these peripheral processors,” he explained to Paul Allen. “The way you fool the system is you get control of one of those peripheral processors and then you use that to get control of the mainframe. You’re slowly invading the system.”

Gates was invading the CDC hive dressed as a worker bee. The mainframe operator observed the activity of the peripheral processor that Gates was controlling, but only electronically in the form of messages sent to the operator’s terminal. Gates then figured out how to gain control of all the messages the peripheral processor sent out. He hoped to trick the operator by maintaining a veneer of normalcy while he cracked the system wide open.

The scheme worked.

Gates gained control of a peripheral processor, electronically insinuated himself into the main computer, bypassed the human operator without arousing suspicion, and planted the same “special” program in all the component computers of the system. His tinkering caused them to all crash simultaneously.

Gates was amused by his exploits, but CDC was not, and he hadn’t covered his tracks as well as he thought he had. CDC caught him and sternly reprimanded him. A humiliated Bill Gates swore off computers for more than a year.

Despite the dangers, hacking was the high art of the technological subculture; all the best talent was hacking. When Gates wanted to establish his credentials a few years later, he didn’t display some clever program he had written. He just said, “I crashed the CDC,” and everyone knew he was good.

BASIC

When Intel’s 8008 microprocessor came out, Paul Allen was ready to build something with it. He lured Gates back into computing by getting an Intel 8008 manual and telling his friend, “We should write a BASIC for the 8008.”

BASIC was a simple yet high-level programming language that had become popular on minicomputers over the previous decade. Allen was proposing that they write a BASIC interpreter—a translator that would convert statements from BASIC input into sequences of 8008 instructions. That way, anyone could control the microprocessor by programming in the BASIC language. It was an appealing idea because controlling the chip directly via its instruction set was, as Allen could see, a painfully laborious process.
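What an interpreter does can be sketched in a few lines. The example below is a deliberately tiny assumption-laden illustration, not a reconstruction of anything Gates and Allen wrote: it handles only `LET`, `PRINT`, `GOTO`, `IF ... < ... THEN`, and `END`, evaluates arithmetic strictly left to right, and carries out each statement directly in Python rather than emitting 8008 instructions. The function name `run_basic` and the `max_steps` guard are invented for the sketch.

```python
def run_basic(source, max_steps=1000):
    """Interpret a tiny BASIC-like subset and return the printed values."""
    program = {}
    for line in source.strip().splitlines():
        number, statement = line.strip().split(" ", 1)
        program[int(number)] = statement.strip()
    order = sorted(program)  # line numbers in execution order
    variables, output = {}, []

    def value(token):
        # A token is either a variable name or an integer literal.
        return variables[token] if token.isalpha() else int(token)

    def evaluate(expression):
        # Left-to-right evaluation; no operator precedence in this sketch.
        tokens = expression.split()
        result = value(tokens[0])
        for operator, operand in zip(tokens[1::2], tokens[2::2]):
            if operator == "+":
                result += value(operand)
            elif operator == "-":
                result -= value(operand)
            elif operator == "*":
                result *= value(operand)
            else:
                raise ValueError(f"unknown operator: {operator}")
        return result

    position = 0
    for _step in range(max_steps):  # guard against endless loops
        if position >= len(order):
            break
        keyword, _, rest = program[order[position]].partition(" ")
        position += 1
        if keyword == "LET":
            name, expression = rest.split("=", 1)
            variables[name.strip()] = evaluate(expression)
        elif keyword == "PRINT":
            output.append(evaluate(rest))
        elif keyword == "GOTO":
            position = order.index(int(rest))
        elif keyword == "IF":
            condition, target = rest.split("THEN")
            left, right = condition.split("<")
            if evaluate(left) < evaluate(right):
                position = order.index(int(target))
        elif keyword == "END":
            break
        else:
            raise ValueError(f"unknown statement: {keyword}")
    return output


SQUARES = """
10 LET I = 1
20 PRINT I * I
30 LET I = I + 1
40 IF I < 4 THEN 20
50 END
"""

print(run_basic(SQUARES))  # [1, 4, 9]
```

The five-line BASIC program is far easier to read and change than the equivalent sequence of raw processor instructions would be, which was the appeal of Allen's proposal.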

Gates was skeptical. The 8008 was the first 8-bit microprocessor, and it had severe limitations.

“It was built for calculators,” Gates told Allen, although he wasn’t quite accurate in his statement. But Gates eventually agreed to lend a hand, and came up with the $360 needed to buy what he believed was the first 8008 sold through a distributor. Then their plan was somehow diverted: they got themselves a third enthusiast, Paul Gilbert, to help with the hardware design, and together the trio built a machine around the 8008.

The machine the youngsters built was not a computer by a long shot, but it was complicated enough to cause them to set aside BASIC for a while. They constructed a machine to generate traffic-flow statistics using data collected by a sensor they had installed in a rubber tube strung across a highway. They figured there would be a sizable market for such a device. Allen wrote the development software, which allowed them to simulate the operation of their machine on a computer, and Gates used the development software to write the actual data-logging software that their machine required.

Traf-O-Data

It took Gates, Allen, and Gilbert almost a year to get the traffic-analysis machine running. When they finally did, in 1972, they started a company called Traf-O-Data—a name that Allen is quick to point out was Gates’s idea—and began pitching their new product to city engineers.

Traf-O-Data was not the brilliant success they had hoped for. Perhaps some of the engineers balked at buying computer equipment from kids. Gates, who did most of the talking, was then 16 and looked younger. At the same time, the state of Washington began to offer no-cost traffic-processing services to all county and city traffic controllers, and Allen and Gates found themselves competing against a free service.

Soon after this early failure, Allen left for college, leaving Gates temporarily at loose ends. TRW, a huge corporation that produced software products in Vancouver, Washington, had heard about the work Gates and Allen had done for C Cubed, and shortly thereafter offered them jobs in a software-development group.

At something like $30,000 a year, the offer was too good for the two students to pass up. Allen came back from college, Gates got a leave of absence from high school, and they went to work. For a year and a half, Gates and Allen lived a computer nut’s dream. They learned a great deal more than they had by working at C Cubed or as the inventors of Traf-O-Data. Programmers can be protective of their hard-earned knowledge, but Gates knew how to use his youth to win over the older TRW experts. He was, as he put it, “nonthreatening.” After all, he was just a kid.

Gates and Allen also discovered the financial benefits that such work can bring. Gates bought a speedboat, and the two frequently went water-skiing on nearby lakes. But programming offered other rewards that appealed to Gates and Allen far more than their increasingly fat bank accounts. Clearly, they had been bitten by the bug. They had worked late nights at C Cubed for no financial gain, and pushed themselves at TRW harder than anyone had asked them to. Something in the clean precision of computer logic and the sportsmanship in the game of programming was irresistible.

The project they worked on at TRW eventually fizzled out, but it had been a profitable experience for the two hackers. It wasn’t until Christmas 1974, after Gates went off to Harvard and Allen took a job with Honeywell, that the bug bit them once more, and this time the disease proved incurable.

Copyright © 2014, The Pragmatic Bookshelf.
