Fire in the Valley (2014)

Chapter 9

The PC Industry

You were dealing with entrepreneurs mostly. Egos, a lot of egos.

Ed Faber

By 1985, IBM had entered the fledgling personal-computer field and transformed it into an industry, while Apple’s Macintosh had changed expectations about how people interacted with personal computers. But now the hobbyist days were fading and personal computing was entering a new phase. This new era would be a long and impressive period of growth with no direct parallel in any other industry. There was no question any longer that this was an industry, yet the indications of its hobbyist beginnings were still visible. People who came out of that culture still wielded great influence, people like Bill Gates and Steve Jobs. In fact, this era would be bracketed at the beginning and end by two eerily parallel events: the ignominious departure of Jobs from the company he cofounded, and his triumphant return.

Losing Their Religion

We found ourselves a big company, glutted from years of overspending. Then the money supply dried up, and that caused the first of a series of convulsions.

-Chris Espinosa, employee no. 8 at Apple Computer

In the 1980s Steve Jobs’s dream machine became reality. And market reality can be brutal.

The Mac’s Shortcomings

After the release of the Macintosh in 1984, Steve Jobs felt vindicated. Plaudits from the press and an immediate cult following assured him that the machine was, as he had proclaimed it, “insanely great.” He had every right to take pride in the accomplishment. The Macintosh would never have existed if it hadn’t been for Jobs. He had seen the light on a visit to Xerox PARC in 1979. Inspired by the innovations presented by the PARC researchers, he longed to get those ideas implemented in the Lisa computer. When Jobs was nudged out of the Lisa project, he hijacked a team of Apple developers working on an interesting idea for an appliance computer and turned them into the Macintosh skunk works.

Jobs hounded the people on the Macintosh project to do more than they thought possible. He sang their praises, bullied them mercilessly, and told them they weren’t making a computer; they were making history. He promoted the Mac passionately, making people believe that he was talking about much more than just a piece of office equipment.

His methods all worked, or so it seemed. Early Mac purchasers bought the Jobs message along with the machine, and forced themselves to overlook the little Mac’s serious shortcomings. For about three months, Mac sales more or less measured up to Jobs’s ambitious projections. For a while, reality matched the image in Steve Jobs’s head.

Then Apple ran out of zealots.

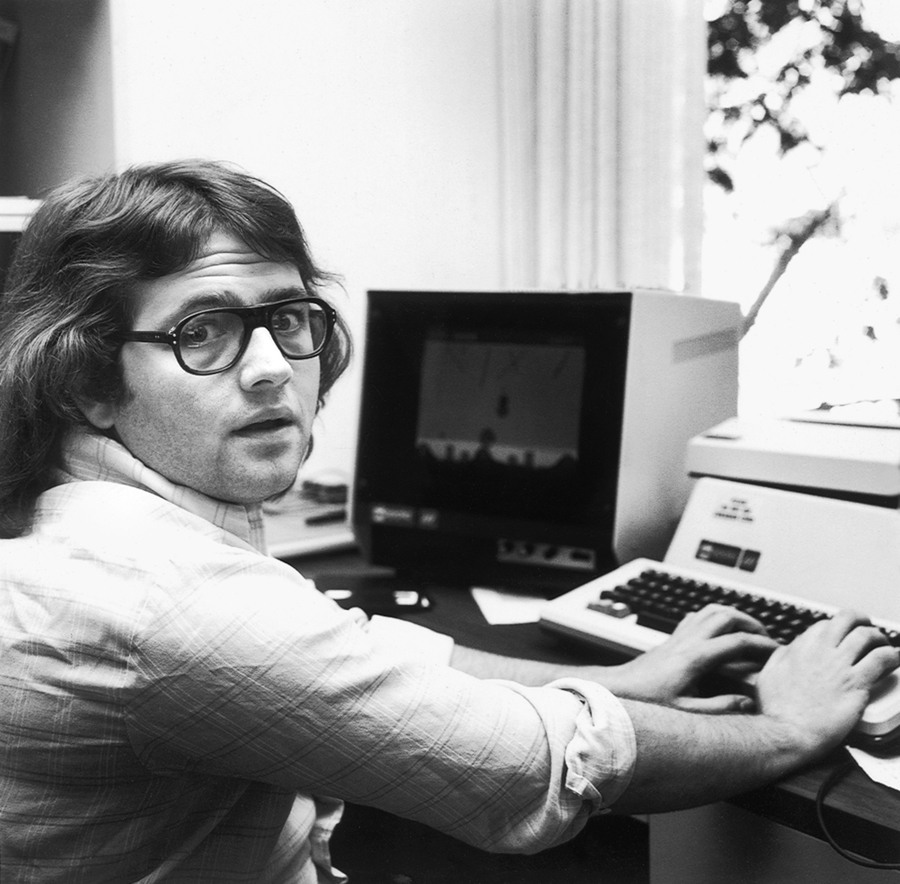

Figure 89. Andy Hertzfeld. After Jobs pulled him off Apple II development, Hertzfeld wrote the key software for the Macintosh.

(Courtesy of Andy Hertzfeld)

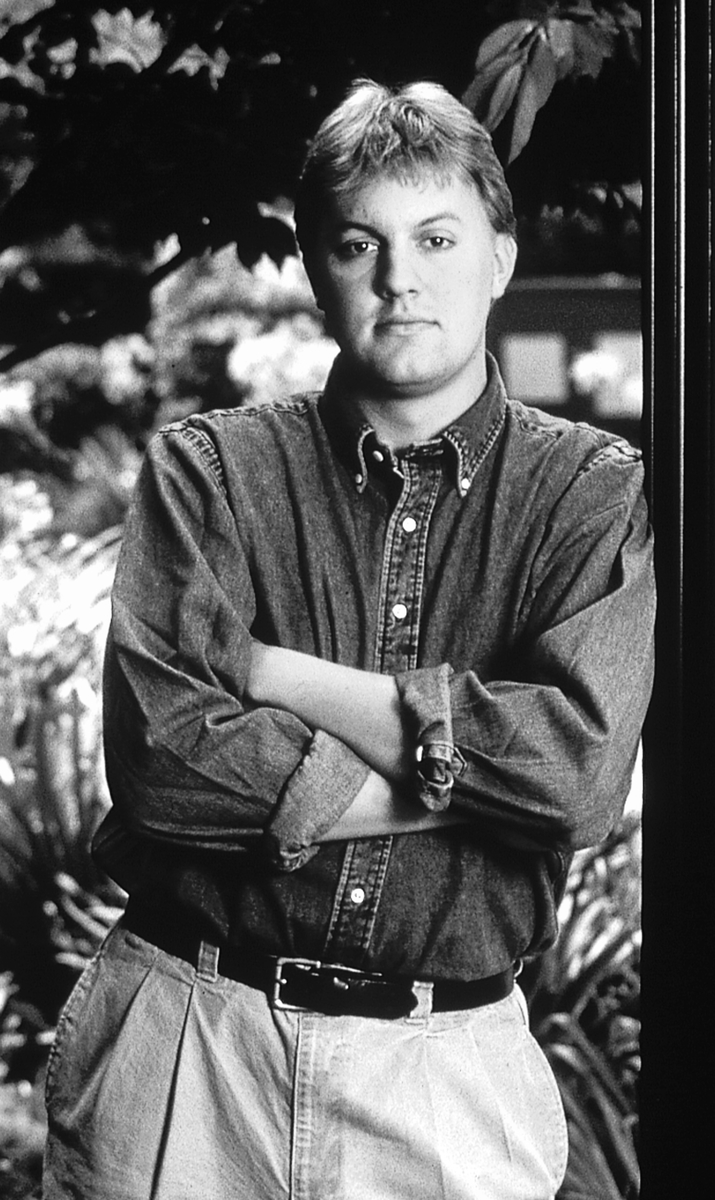

Figure 90. The Mac design team. Left to right: Andy Hertzfeld, Chris Espinosa, Joanna Hoffman, George Crow, Bill Atkinson, Burrell Smith, Jerry Manock. (Courtesy of Apple Computer Inc.)

The first Macintosh purchasers were early adopters—technophiles willing to accept the inevitable quirks of new technologies for the thrill of being the first to use them. Those early adopters all bought Mac machines in the first three months of sales, and then the well dried up. In 1984 and 1985, the first two years of the Mac’s life, sales routinely failed to reach the magnitude of Jobs’s expectations—sales projections that the company was counting on. For those two years, it was the old reliable Apple II that kept the company afloat. If Apple had depended on Mac sales alone, it would have folded before the 1980s were over.

All the while, the Mac team still got the perks, the money, and the recognition, and the Apple II staff got the clear message that they were part of the past. Jobs told the Lisa team outright that they were failures and “C players.” He called the Apple II crew “the dull and boring division.”

Chris Espinosa came to the Mac division from the Apple II group. He had family and friends still on the Apple II side. The 20-year-old Espinosa had worked at Apple since he was 14. The us-versus-them syndrome he witnessed saddened him.

Customers, third-party developers, and Apple stockholders weren’t all that happy, either. The Mac’s slow sales could not be blamed on a lack of advertising budget. The machine was selling poorly for good reason: it lacked some essential features that users had every reason to demand. Initially, it had no hard-disk drive, and a second floppy-disk drive was an extra-price option. With only a single disk drive, making backup copies of files was a nightmare of disk-swapping.

At 128K RAM, it seemed that the Mac’s memory should have been more than adequate; 64K was the standard in the industry. But the system and application software ate up most of the 128K, and it was clear that more memory was needed. Dr. Dobb’s Journal ran an article showing how anyone brave enough to attack the innards of their new Mac machine with a soldering iron could “fatten” their Mac to 512K, six months before Apple got around to delivering a machine with that much RAM.

But the memory limitations weren’t a problem unless you had the software that used the RAM, and therein was the real problem. The Mac shipped with a collection of Apple-developed applications that allowed users to do word processing and draw bitmapped pictures. Beyond that, the application choices were slim because the Mac proved to be a difficult machine to develop software for.

The Departure of Steve Jobs

Jobs was entirely committed to his vision of the Macintosh, to the point that he continued to use the 10-times-too-large sales projections as though they were realistic. To some of the other executives, it began to look like Jobs was living in a dream world of his own. The Mac’s drawbacks, such as the lack of a hard-disk drive, were actually advantages, he argued, and the force of his personality was such that no one dared to challenge him.

Even his boss found it difficult to stand up to Jobs. John Sculley, whom Jobs had hired from Pepsi to run Apple, concluded that the company couldn’t afford to have its most important division headed up by someone so out of touch with reality. But could Sculley bring himself to demote the founder of the company? The situation was getting critical: early in 1985, the company posted its first-ever quarterly loss. Losses simply weren’t in the plan for Apple, which stood as an emblem of the personal-computer revolution, a modern-day legend, and a company that had risen to the Fortune 500 in record time.

Sculley decided to take drastic action. In a marathon board meeting that began on the morning of April 19, 1985, and continued into the next day, he told the Apple board that he was going to strip Jobs of his leadership over the Mac division and any management role in the company. Sculley added that if he didn’t get full backing from the board on his decision, he couldn’t stay on as president. The board promised to back him up.

But Sculley failed to act on his decision immediately. Jobs heard about what was coming, and in a plot to get Sculley out, began calling up board members to rally their support. When Sculley heard about this, he called an emergency executive board meeting on May 24. At the meeting he confronted Jobs, saying, “It has come to my attention that you’d like to throw me out of the company.”

Jobs didn’t back down. “I think you’re bad for Apple,” he told Sculley, “and I think you’re the wrong person to run the company.” The two men left the board no room for hedging. The individuals in that room were going to have to decide, then and there, between Sculley and Jobs.

They all got behind Sculley. It was a painful experience for everyone involved. Apple II operations manager Del Yocam, for one, was torn between his deep feelings of loyalty toward Jobs and what he felt was best for the future of the company. Yocam recognized the need for the grown-up leadership at Apple that Sculley could supply and that Jobs hadn’t, and he cast his vote for maturity over vision.

Jobs was understandably bitter. In September, he sold off his Apple stock and quit the company he had cofounded, announcing his resignation to the press. The charismatic young evangelist who had conceived the very idea of Apple Computer, had been the driving force behind getting both the Apple II and the Macintosh to market, had appeared on the cover of every major newsmagazine, and was viewed as one of the most influential people in American business was now out the door.

Clones

Had Compaq or IBM changed in ’88 or ’89, Dell would not have been a factor. Now Dell is driving the industry.

-Seymour Merrin, computer-industry consultant

Apple was struggling with the new realities of the rapidly growing computer industry, but every company found itself challenged to adapt. The fear in the fledgling personal-computer industry had been that IBM would enter the market and transform everything. That fear came true in the 1980s. But the personal-computer industry transformed IBM as well.

Imitating IBM

While Apple was losing its way in the wake of IBM’s entry into the market, IBM’s own fortunes followed a strange path. When IBM released its Personal Computer, very little about the machine was proprietary. IBM had embraced the Woz principle of open systems, not at all an IBM-like move. One crucial part of the system was proprietary, though, and that part was, ironically, the invention of Gary Kildall.

Like Michael Shrayer, who had written different versions of his pioneering word processing program for over 80 brands of computers, Kildall had to come up with versions of his CP/M operating system for all the different machines in the market. Unlike Shrayer, however, Kildall found a solution to the problem. With the help of IMSAI’s Glen Ewing, he isolated all the machine-specific code that was required for a particular computer in a piece of software that he called the basic input-output system, or BIOS.

Everything else in CP/M was generic, and didn’t need to be rewritten when Kildall wanted to put the operating system on a new machine from a new manufacturer. Only the very small BIOS had to be rewritten for each machine, and that was relatively easy.
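The layering described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Kildall's actual code or the real CP/M interface: the class names (`Bios`, `MachineABios`, `MachineBBios`, `GenericOS`), the two entry points, and both machines are hypothetical, invented here to show a generic core that reaches the hardware only through a small machine-specific layer.

```python
from abc import ABC, abstractmethod


class Bios(ABC):
    """Machine-specific layer: the only part rewritten for each new computer."""

    @abstractmethod
    def console_out(self, char: str) -> None:
        """Send one character to this machine's console."""

    @abstractmethod
    def read_sector(self, track: int, sector: int) -> bytes:
        """Return the raw bytes stored at the given disk location."""


class MachineABios(Bios):
    """Hypothetical machine A: list-backed console, disk keyed by (track, sector)."""

    def __init__(self) -> None:
        self.screen = []
        self.disk = {(0, 1): b"HELLO"}

    def console_out(self, char: str) -> None:
        self.screen.append(char)

    def read_sector(self, track: int, sector: int) -> bytes:
        return self.disk.get((track, sector), b"")


class MachineBBios(Bios):
    """Hypothetical machine B: uppercase-only terminal, disk stored as a flat list."""

    SECTORS_PER_TRACK = 4

    def __init__(self) -> None:
        self.output = ""
        self.flat_disk = [b""] * 8
        self.flat_disk[0] = b"hello"  # track 0, sector 1

    def console_out(self, char: str) -> None:
        self.output += char.upper()

    def read_sector(self, track: int, sector: int) -> bytes:
        # Sectors are numbered from 1, so subtract 1 to get a list index.
        return self.flat_disk[track * self.SECTORS_PER_TRACK + (sector - 1)]


class GenericOS:
    """Portable part: identical on every machine, reaches hardware only via Bios."""

    def __init__(self, bios: Bios) -> None:
        self.bios = bios

    def print_line(self, text: str) -> None:
        for char in text + "\n":
            self.bios.console_out(char)

    def type_file(self, track: int, sector: int) -> None:
        data = self.bios.read_sector(track, sector)
        self.print_line(data.decode("ascii"))
```

In this sketch, `GenericOS(MachineABios())` and `GenericOS(MachineBBios())` run the same unmodified core. Supporting a third machine would mean writing one more small `Bios` subclass and nothing else, which is the economy the BIOS technique was meant to provide.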

Tim Paterson realized the value of the BIOS technique and implemented it in 86-DOS, from which it found its way into PC-DOS.

The BIOS for the IBM PC defined the machine. There was essentially nothing else proprietary in the PC, so IBM guarded this BIOS code and would have sued anyone who copied it.

Not that IBM thought it could prevent others from making money in “its” market. That was a given. In the mainframe market, people spoke of IBM as The Environment, and many companies existed solely to provide equipment that worked with IBM machines. When IBM moved into the personal-computer market, many companies found ways to work with the instant standard that the IBM PC represented.

Employees of Tecmar were among the first in the doors of Chicago’s Sears Business Center the morning the IBM PC first went on sale. They took their PC back to headquarters and ran it through a battery of tests to determine just how it worked. As a result, Tecmar was among the first companies to supply hard-disk drives and circuit boards to work with the PC. These businesses took advantage of the opportunity to compete in this market with price, quality, or features. Ed Roberts’s pioneering company, MITS, had encountered a similar situation in 1976, and Roberts had labeled these companies “parasites.”

And just as IMSAI had produced an Altair-like machine to compete with MITS, many microcomputer companies came out with “IBM workalikes,” computers that used MS-DOS (essentially PC-DOS but licensed from Microsoft) and tried to compete with IBM by offering a different set of capabilities, perhaps along with different marketing or pricing. Without exception, the market resoundingly rejected these IBM workalikes. Consumers might buy a computer that made no pretense of IBM compatibility—Apple certainly hoped so—but they weren’t going to put up with any almost-compatible machine. Any computer claiming IBM compatibility would have to run all the software that ran on the IBM PC, support all the PC hardware devices, and accept circuit boards designed for the IBM PC, including boards not yet designed. But IBM’s proprietary BIOS made it very hard for other manufacturers to guarantee total compatibility.

The Perfect Imitation

Yet the potential reward of creating a 100 percent IBM PC-compatible computer was so great that it was to be assumed that someone would find a way. In the summer of 1981, three Texas Instruments employees were brainstorming in a Houston, Texas, House of Pies restaurant about starting a business. Two options they considered were a Mexican restaurant and a computer company. By the end of the meal, Rod Canion, Jim Harris, and Bill Murto had deep-sixed the Mexican restaurant and sketched out, on the back of a House of Pies placemat, a business plan for a computer company, detailing what the ideal IBM-compatible computer would be like. With venture capital supplied by Ben Rosen, the same investor who had backed Lotus, they launched Compaq Computer and built their IBM-compatible. It was a portable or, at 28 pounds, more of a “luggable,” had a nine-inch screen and a handle, and looked something like an Osborne 1.

What set their machine apart from the workalikes was that it was 100 percent IBM compatible. Compaq had performed a so-called “clean-room” re-creation of the IBM BIOS, meaning that engineers reconstructed what the BIOS code had to be, based solely on the IBM PC’s behavior and on published specifications, without ever having seen the IBM code. This gave Compaq the legal defense it needed for the lawsuit it expected from IBM.

Compaq marketed aggressively. It hired away the man who had set up IBM’s dealer network, sold directly against IBM through dealers that IBM had approved to sell its PC, and offered those dealers better margins than IBM did. The plan worked. In the first year, Compaq’s sales totaled $111 million. There were soon thousands of offices where a 28-pound “portable” from Compaq was the worker’s only computer.

The idea of a clean-room implementation of the IBM PC BIOS was vindicated. In theory, any other company could do what Compaq had done.

Few companies had the kind of financial backing that Compaq could call on to compete head-to-head with IBM, even if they had their own clean-room re-creations of the IBM BIOS. However, one did have enough savvy to leverage the technology. After Phoenix Technologies performed its clean-room implementation of the BIOS, it licensed its technology to others rather than build its own machine. Now anyone who wanted to build fully IBM-compatible machines without risk of incompatibility or lawsuit could license the BIOS from Phoenix. Consumers and computer magazines tested the “100 percent compatibility” claim, often using the extremely popular Lotus 1-2-3: if a new computer couldn’t run the IBM PC version of 1-2-3, it was history. If it could, it could usually run other programs as well. The claims, generally, held up.

Soon there were dozens of companies making IBM-compatible personal computers. Tandy and Zenith jumped in early, as did Sperry, one of the pioneering mainframe companies. Osborne built an IBM-compatible just before going broke. ITT, Eagle, Leading Edge, and Corona were some of the less familiar names that became very familiar as they bit off large chunks of this growing IBM-compatibles market.

Suddenly, IBM had no distinction but its name. Until now, that had always been enough. IBM had been The Environment, but now it had leaped into a business environment that it apparently didn’t control. The clone market had arrived.

Imitating Apple

Apple stood virtually alone in not embracing the new IBM standard, initially with its Apple II and Apple III, and soon thereafter with the Macintosh. Although user loyalty and an established base of software kept the Apple II alive for years, it was not really competitive with the PC and compatibles, particularly when IBM began introducing new models based on successive generations of Intel processors and the Apple II was locked into the archaic 6502. But the Macintosh graphical user interface, or GUI, gave Apple the edge in innovation and ease of use, and kept it among the top personal-computer companies in terms of machines sold. Jobs’s prediction that it would come down to Apple and IBM was initially borne out, although the clones were not to be overlooked.

Software was growing increasingly important, too. With the advent of the clone market, the choice of a personal computer was becoming a matter of price and company reputation, not technological innovation. And since people bought machines specifically to run certain programs, such as Lotus 1-2-3, Apple’s appeal was diminished. Even if Apple was selling as many machines as IBM or Compaq, its platform was a minority player, while computers using the magic combination of IBM’s architecture, Intel’s microprocessor, and Microsoft’s operating system increasingly became the dominant platform.

Why didn’t anyone clone the Mac? There simply was no Mac equivalent of Kildall’s BIOS—what made the Mac unique was incorporated in many thousands of lines of code. It was, in short, much harder to clone the Macintosh. It couldn’t be done without Apple’s approval, which the company invariably withheld.

Consolidation

You have to think it’s a fun industry. You’ve got to go home at night and open your mail and find computer magazines or else you’re not going to be on the same wavelength as the people [at Microsoft].

-Bill Gates, 1983

Microsoft became the dominant company in the personal-computer industry in the 1980s, surpassing IBM in influence, and its founders became billionaires. But as the 1980s began, Microsoft and Bill Gates were known only within the tight community of personal-computer companies.

In 1981 the company’s business focused on programming languages, with some application software and a lone hardware product thrown in—Paul Allen’s brainchild, the SoftCard, which let people run CP/M programs on an Apple computer. DOS, which would begin the company’s rise to prominence, was under development, but did not come out until months later, when the IBM PC was released.

Although Gates had insisted that Microsoft should not sell directly to end users, an aggressive salesman named Vern Raburn convinced him otherwise, using the now-standard method of impressing Bill: he challenged him, had his facts straight, and didn’t back down. After winning his argument with Gates, Raburn became president of a new Microsoft subsidiary, Microsoft Consumer Products, which began selling both Microsoft-developed products and other licensed products, including some applications, in computer stores and anywhere else Raburn could find shelf space. But in 1981 this operation was just beginning, and the company wasn’t a major player, even in the young computer industry.

Microsoft’s total revenues for 1981 were about $15 million. It seemed like a lot of money to Gates, but by way of contrast, Apple’s annual gross revenues were running just about 20 times as high, and IBM was in another league altogether.

Microsoft converted from a partnership to a corporation in June 1981. Much of the stock was held by three people: founders Gates and Allen, and Steve Ballmer, Bill’s friend from Harvard and an increasingly powerful executive at Microsoft. A clear majority of the stock was in the hands of the unkempt, squeaky-voiced president, whom new employees sometimes mistook for some teenaged hacker trespassing in the president’s office.

Such new employees soon learned that the 26-year-old president, who looked 18, was a force to be reckoned with. And they learned that the company they had come to work for was, in many ways, as unusual as its young president. The company was, in fact, a lot like Bill Gates.

This was not surprising because Gates made it a point to hire people who were like himself—bright, driven, competitive, and able to argue effectively for what they believed in. A small but influential number of the new employees came from fabled Xerox PARC, the research lab where Steve Jobs saw the vision that would become the Macintosh.

Gates invited employees to argue with him about important technical issues. He hardly gave what could be called positive feedback; he frequently characterized work or ideas as “brain damaged” or “the stupidest thing I ever heard.” But he prided himself on being open to good ideas from any source, and even when he delivered one of his devastating denouncements, it was always the idea he attacked, not the individual. Because Gates was such a demanding critic, employees who impressed him gained credibility and influence. It was the key to the executive washroom, the prime parking place in a company that had no executive washroom and no reserved parking places.

The easy access to the president and his willingness to listen to good arguments from any employee gave the appearance of democracy to the company culture. Even employees who couldn’t nail him down in the hall could send an email message directly to billg and know that Bill G. himself would read it. But Microsoft was far from a democracy. The flattened communication structure was a double-edged sword. Although displeasing Bill Gates was death, getting positive feedback from billg on your work or ideas was money in the bank. Those who were most favored tended to see Microsoft as a meritocracy.

In a meritocracy, the real power resides in the authority to judge merit. At Microsoft, Bill’s judgment was the final word.

One competent employee who just didn’t fit the Bill mold was Jim Towne. Towne was hired from Tektronix in July 1982 to serve as president of Microsoft. Gates was conscious that a lot of early microcomputer companies had failed because they didn’t know when to bring in more-experienced managers. Entrepreneur’s disease was at least part of what had killed MITS, IMSAI, and Proc Tech. Bill was juggling a lot of balls, and he brought Towne in to lighten his load and take the official title of president. Towne served about a year, but Gates never thought he had the right feel for the company. There was no real problem with his management; ultimately, Towne failed to “take” at Microsoft because he wasn’t Bill. It began to appear that what Bill wanted was not a president but a way to clone himself.

Through the early 1980s, the IBM deal and its aftermath gave the company a huge boost, particularly when Compaq and Phoenix Technologies created a clone market for Microsoft to sell MS-DOS to.

By the end of 1981, Microsoft had grown to 100 employees and had moved to new offices in Bellevue, Washington. The stresses of the IBM deal and the company’s growing pains were getting to some employees. Soon some long-timers left, including Bob Wallace, who had been a mainstay since the Albuquerque days. But Wallace’s leaving was a blip compared to the departure of Paul Allen, Bill’s lifelong friend and partner. Although Allen’s departure was due to a health issue (he had Hodgkin’s disease) and not stress, it increased Gates’s own level of stress. Now the company was totally his to run.

Pam Edstrom, Microsoft’s PR chief, was pushing an image of Bill as the nerd who made good. Another story was equally true, however: the privileged child of comfortable affluence, weaned on competition and the importance of winning, who read Fortune magazine in high school and became a ruthless cutthroat businessman determined to dominate markets and crush the competition. But the general press could handle only one image of Gates, and Edstrom made sure they got the “right” one—right for Microsoft, that is. Journalists certainly had no trouble believing the official tale; a few minutes with Gates would convince anyone that he was a nerd, and the balance sheet made the case for his having done well.

Gates, meanwhile, was presenting an image of Microsoft that stuck in the craw of industry insiders. He insisted that Microsoft produced quality products, whereas the reality was that the general run of stuff was less than top-notch work. Microsoft’s software was of varying quality, sometimes buggy, sometimes slow. And within the company the image of quality and professionalism was a joke. Internal systems were a disaster. There were not enough computers. The huge plastic boxes in which Microsoft was packaging its products were a warehouse nightmare and one more indication that all was not rosy inside Bill’s empire. If Microsoft reflected Bill Gates’s personality and values, its organization could have been modeled on Bill’s personal life. He ate fast food, neglected to shower, and had trouble remembering to pay his bills. Microsoft paid its bills, but otherwise its internal systems looked a lot like Bill. The second try at getting somebody in to clean up the mess made Radio Shack’s Jon Shirley the new president of Microsoft.

Playing Rough

There was another way in which the image of Microsoft didn’t match the reality. Microsoft would have people believe that its OEM customers bought its products solely for their quality, not because of Microsoft’s aggressive business dealings. (OEM, or original equipment manufacturer, refers to the computer companies who licensed software from Microsoft to include with their machines.) Microsoft’s cutthroat tactics with its OEMs were most evident in Microsoft’s efforts to win the GUI market.

Microsoft was one of the first companies to develop software for the Mac, and had been briefed on Apple’s project for months before the release. So closely did Microsoft work with Apple that its programmers were making suggestions about the operating system as it was being refined. Microsoft Windows was based on what Microsoft learned during that process.

On November 10, 1983, Microsoft staged an impressive media blitz for its upcoming Windows product that trumpeted to the industry the scores of vendors who had signed on to develop application software that would be Windows-compatible. Some of these had also signed on with VisiCorp’s pioneering GUI product, Visi On, and were wavering in their commitment to Microsoft, so the message was a little disingenuous—plus Windows wasn’t anywhere to be seen.

According to one OEM customer, Microsoft agreed to give its OEMs an early beta version of Windows—absolutely necessary if the OEMs wanted to have a Windows-compatible application out when Windows itself came out—but only if the OEMs agreed not to develop for a competing product such as Visi On. The Justice Department might have considered such tactics—and other tactics Microsoft was engaging in at the time—as restraint of trade or unfair business practices, but nobody was talking about these backroom deals. Later there would be charges of “undocumented system calls”—code in Windows or DOS that Microsoft reserved for its own use to give its application software an advantage over any competitor’s. Microsoft was engaging regularly in behavior that would eventually lead the Justice Department to its door.

Windows was finally released in 1985. After an initial burst of good press, the actual reviews began to come in—and they were not kind.

Given the wide variety of hardware configurations that MS-DOS had to support, cloning the Mac GUI to run snappily on top of it was a tough problem, and Microsoft hadn’t adequately solved it. And yet Windows did have to run on top of MS-DOS. The MS-DOS operating system was installed in all the IBM and clone machines, which made up most of the market. Microsoft had to maintain compatibility with all those machines when it released this Mac-like user interface, and the only way to do that was to make Windows merely an interface between the user and the real operating system. Underneath, it had to be MS-DOS, dealing with application programs, data files, printers, and disk drives, just as MS-DOS always had.

But Microsoft continued to prosper, with MS-DOS making up a larger and larger share of revenues. The company’s March 1986 initial public offering of stock was eagerly anticipated in the financial community. When the numbers were tallied after the IPO, Bill’s 45 percent of the company was worth $311 million. Microsoft had grown to over 700 employees, and it had moved to larger headquarters.

By 1987, Microsoft passed Lotus as the top software vendor. This remarkable ascendancy came about in large part due to its control over the MS-DOS operating system used on nearly all non-Apple PCs. But Microsoft had increasingly developed ambitions to offer products for most software categories, including spreadsheets, word processors, presentation programs, and educational tools. There were now some 1,800 Microsoft employees worldwide.

Microsoft Supplants IBM

Meanwhile, IBM, beginning to face real competition from the clone manufacturers, decided to replace DOS (and its unsuccessful GUI product, TopView) with a new, powerful operating system with a graphical user interface. The new operating system would be called OS/2.

Microsoft was commissioned once again to work on the operating system, but the arrangement was rocky from the start. By 1990, the final split with IBM was imminent.

Microsoft had committed a lot of resources to OS/2, as had IBM. But it appeared that neither party was entirely faithful in this software-development marriage.

Microsoft was alarmed when IBM seemed to be hedging its bets by leading an industry effort to standardize the Unix operating system and by licensing NeXTSTEP, the operating system from Steve Jobs’s NeXT Inc. This was normal behavior for IBM, which typically had a number of alternate plans in the works, with different divisions of the company competing with one another to see whose project would actually be chosen to ship. But that was hardly a comfortable position for Microsoft, which would be in trouble if OS/2 were scrapped in favor of Unix or NeXTSTEP after Microsoft had spent years developing an operating system that IBM decided it didn’t want to support.

And, of course, at the same time Microsoft was developing Windows. Windows wasn’t actually an operating system, but Windows plus DOS was, so Microsoft had its own hedge. At first, the plan was to make Windows and the graphical interface of OS/2 work alike. Microsoft started telling programmers that if they developed software for Windows they would be ready for OS/2 when it came out. This grew less plausible as time went on.

Before long, Microsoft programmers working on OS/2 weren’t talking to IBM OS/2 programmers and vice versa. The companies officially denied the friction, but the marriage was on the rocks. IBM was convinced that Microsoft had shifted its efforts to Windows, that Microsoft was only pretending to be concentrating on OS/2, and that Microsoft was claiming Windows would not compete with OS/2 when competition was exactly the plan. That was all true, eventually.

IBM announced that OS/2 would be released in two versions, the more sophisticated of which would be sold exclusively by IBM. That wasn’t news Bill Gates wanted to hear.

Finally, Gates told Steve Ballmer that they were going to go for broke on Windows and that he wasn’t worrying about what IBM thought about it. Then a Gates memo that called OS/2 “a poor product” was made public. The arrangement fell apart, IBM took over OS/2, and Microsoft indeed went for broke on Windows.

Windows 3.0, the first adequate release of the GUI product, rolled out in 1990. Jon Shirley also departed from Microsoft in 1990. Although things had worked out all right, the Texan just figured it was time to move on. Within six weeks, Gates had hired ex-IBMer Mike Hallman as the new president. Although Hallman lasted only into 1992, his departure hardly mattered to the direction and atmosphere of the company. Microsoft was Bill Gates’s baby.

As Gates approached 40, neither he nor Microsoft seemed to lose any vitality. The break with IBM invigorated Microsoft and left IBM floundering. Windows was finally getting positive reviews while IBM’s OS/2 and its GUI product, Presentation Manager, were not. Computer companies and software developers believed that Microsoft was in charge and followed its lead.

Sculley Saves Apple

With Jobs gone, Sculley set to work saving the company from ruin. Under his direction, Apple dropped the Lisa computer, and brought out a high-end Macintosh—the Macintosh II—along with new models of the original Mac, in particular a model called the Mac Plus that was introduced in January 1986.

The Mac Plus addressed most of the shortcomings of the original Mac and got the money flowing in the right direction. Sculley had stopped the financial bleeding and put the company back on its feet. For the next few years Apple was golden.

Sculley had turned around the demoralization that followed Jobs’s departure, got the Mac line moving, and made the company profitable again. Eventually he retired the Apple II line, but not without first giving the Apple II employees a measure of the credit they had been denied during the latter part of Jobs’s tenure. As a sign of his support of the Apple II team, he promoted Del Yocam to chief operating officer.

Sculley began relying on two Europeans more than ever before. German Michael Spindler, savvy about technology and the European market, headed Apple’s European efforts, while Jean-Louis Gassée, a charismatic and witty Frenchman, got the job of inspiring and motivating the engineering troops.

Gassée, who had made Apple France the company’s most successful subsidiary, quickly became the second most visible executive in one of the world’s great corporate fishbowls. He had a penchant for metaphor and bold pronouncements; he once gave a speech called “How We Can Prevent the Japanese from Eating Our Sushi.” Unlike Sculley, he was technical, and he won the respect and affection of Apple’s engineers.

When alumni of Xerox PARC came up with a language for controlling printers and a program for designing publications, Apple released a laser printer, and a lucrative desktop publishing (DTP) market was born. The Macintosh, with the artful typography that Jobs had insisted on, was a natural DTP machine. Apple dominated the DTP market for years after that.

“We were in the catbird seat,” Chris Espinosa recalled, with a product that was such a favorite among consumers that Apple could raise prices and get away with it. “We were making 55 percent gross margins, on our way to becoming a $10 billion corporation. We were in fat city.”

One portentous event went unnoticed by the outside world: on October 24, 1985, Microsoft was threatening to stop development on crucial applications for the Mac unless Apple granted Microsoft a license for the Mac operating-system software. Microsoft was developing its graphical user interface (the items and options a user sees and selects onscreen) for DOS, which it was calling Windows, and didn’t want Apple to sue over the similarity between the Windows GUI and the Mac interface. Although Microsoft might not have followed through on its threat to stop development of the applications, Sculley decided not to take the chance. He granted Microsoft its license, a move that he would later regret, and try, unsuccessfully, to undo.

But the company was prospering. Investors, customers, and employees were happy. On the horizon, though, new and disturbing problems were developing for Apple.

Other companies had entered the personal-computer market and were making machines that worked like the IBM PC and that ran all the same software. Prices for these IBM-compatibles or clones were dropping, and Apple’s machines, already premium-priced, were getting too far out of line. Throughout the late ’80s, the Windows user interface was getting better and better and was taking more and more market share from Apple.

Microsoft’s Windows wasn’t the only alternative GUI. IBM had its TopView; Digital Research its GEM; DSR, a small company run by a programmer named Nathan Myhrvold, had something called Mondrian; and VisiCorp (née Personal Software) had Visi On.

The graphical user interface was beginning to be taken for granted, undermining the most apparent advantage of the Mac. Personal computers were becoming more standardized, with the particular hardware mattering less to consumers than the software. Third-party software (software developed by companies other than the computer maker) was being written first for Windows and then maybe for the Mac. The early perception in the corporate world that the Mac was a toy, not a serious business machine, was never really refuted. The walls were closing in on Apple.

It seemed clear as the ’80s wound down that Apple couldn’t go it alone indefinitely against the whole IBM-clone market. Apple appeared to have just two options. One was to rediscover the Woz principle—open the architecture so that other companies could clone the Mac, but do it under license so that Apple would make money on every clone sold. The other option was to join forces with another company.

The licensing idea had been around since at least 1985, when Sculley got a letter from Bill Gates, of all people, detailing the reasons why Apple should license the Mac technology. At Apple, Dan Eilers, the director of investor relations, was a persistent proponent of licensing, both at that time and then for years after. Jean-Louis Gassée fought the idea, questioning whether Apple could really protect the company’s precious intellectual property after its release. The question in Gassée’s mind was, “How do you ensure that another company will only sell into a market that complements your own?”

A deal that may have worked to the benefit of Apple was close to completion in 1987. Apple would license its operating system to Apollo, the first workstation company, for use in high-end workstations, a market that seemed to nicely complement Apple’s. But Sculley nixed the deal at the last moment.

Apple’s waffling over the partnership may have helped to sink Apollo. Its workstation competitor, Sun, was pursuing an open systems model, licensing its operating system and swallowing up more and more of the workstation market.

Another option for Apple was a merger or acquisition. Early on, Commodore had tried to buy Apple and came very close to a deal. Other merger or acquisition discussions were held over the years, and they grew more and more compelling as the PC-clone market grew. In the late 1980s, Sculley had Dan Eilers exploring the possibility of Apple buying Sun. A decade later, the relative fortunes of the two companies would be such that Sun would explore the possibility of buying Apple.

In 1988, Del Yocam got squeezed out as chief operating officer (COO) in a management reshuffling. Gassée and Spindler were immediate beneficiaries of the restructuring. “Reorgs” were routine at Apple by now. In a 1990 reorganization, Spindler was anointed COO, Sculley named himself chief technology officer (CTO), and Gassée was sidelined. Gassée soon resigned and left the company.

It was becoming an open question whether Apple could survive in this new market created by the entry of IBM.

Commoditization

We were going to change the world. I really felt that. Today we’re creating jobs, benefiting customers. I talk in terms of customer benefits, adding value. Back then, it was like pioneering.

-Gordon Eubanks, software pioneer

By the end of the 1980s, the personal-computer industry was big business, making billionaires and creating tremors in the stock market.

A Snapshot

On October 17, 1989, the 7.1-magnitude Loma Prieta earthquake that hit the San Francisco Bay Area also rocked Silicon Valley. When systems came back online, this was the state of the industry:

There was a renewed rivalry between the sixers, users of microprocessors from the Motorola/MOS Technology camp, and the eighters, users of Intel microprocessors. Intel had released several generations of processors that upgraded the venerable 8088 in the original IBM PC, and IBM and the clone makers had rolled out newer, more powerful computers based on them. Motorola, in the meantime, had come out with newer versions of the 68000 chip it had released a decade earlier. This 68000 was a marvel, and the chief reason why the Macintosh could do processor-intensive things such as display dark letters on a white background and maintain multiple overlapping windows on the screen without grinding to a halt. Intel’s 80386 and Motorola’s 68030 were the chips that most new computers were using, although Intel had recently introduced its 80486 and Motorola was about to release its 68040. The two lines of processors battled for the lead in capability.

Intel, though, held the lead in sales quite comfortably. Its microprocessors powered most of the IBM computers and clones, whereas Motorola had one primary customer for its processors—Apple. (Atari and Commodore, with their 680x0-based ST and Amiga computers, had to wait in line for chips behind Apple, foreshadowing Apple supply-chain strategies that would be solidified under Tim Cook as COO.)

In 1989 “Moore’s law,” Intel cofounder Gordon Moore’s two-decade-old formulation that memory-chip capacity would double every 18 months, was proving to still roughly predict growth in many key aspects of the technology, including memory capacity and processor speed. The industry seemed to be on an exponential-growth path, just as Moore had predicted.
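The arithmetic behind that exponential path is easy to check. The short sketch below assumes the 18-month doubling period quoted above; the function names are made up for illustration, and the result is an idealized curve, not measured industry data.

```python
def doublings(years, months_per_doubling=18):
    """Number of doubling periods that fit into the given span of years."""
    return years * 12 / months_per_doubling


def growth_factor(years, months_per_doubling=18):
    """Multiplicative growth after `years` if capacity doubles each period."""
    return 2 ** doublings(years, months_per_doubling)


if __name__ == "__main__":
    # Assumed spans of time, chosen only to show how quickly doubling compounds.
    for years in (1.5, 3, 6, 15):
        print(f"{years:>4} years -> about {growth_factor(years):,.0f}x")
```

Under this assumption, three years means two doublings, or a fourfold increase, and fifteen years means ten doublings, or roughly a thousandfold increase.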

The best-selling software package in 1989 was Lotus 1-2-3; its sales were ahead of those of WordPerfect, the leading word processor, and of MS-DOS. The top 10 best-selling personal computers were all various models of IBM, Apple Macintosh, and Compaq machines. Compaq was no mere clone maker; it was innovating, pushing beyond IBM in many areas. It introduced a book-sized IBM-compatible computer in 1989 that changed the definition of portability. Compaq also introduced a new, open, nonproprietary bus design called EISA, which was accepted by the industry, demonstrating the strength of Compaq’s leadership position. IBM had unsuccessfully attempted to introduce a new, proprietary bus called MicroChannel two years earlier. IBM was fast losing control of the market, and it was losing something else: money.

By the end of 1989 IBM would announce a plan to cut 10,000 employees from its payroll, and within another year Compaq and Dell would each be taking more profits out of the personal-computer market than IBM. In another three years, IBM would cut 35,000 employees and suffer the biggest one-year loss of any company in history.

ComputerLand’s dominance of the early computer retail scene was short-lived. During ComputerLand’s heyday, consumers wanting to buy a particular brand had to visit one of the major franchises, and distribution was restricted to a few chosen chains, the largest of which was ComputerLand. But in the late 1980s, the market changed. Price consciousness took precedence over brand name, and manufacturers had to sell through any and all potential distributors. The cost of running a chain store such as ComputerLand was higher than that of competing operations, which could now sell hardware and software for less.

Another line of computer stores, called Businessland, gained a foothold and became the nation’s leading computer dealer in the late 1980s by concentrating on the corporate market and promising sophisticated training and service agreements. But consumers were more comfortable with computers and no longer willing to pay a premium for hand-holding. Electronics superstores such as CompUSA, Best Buy, and Fry’s, which offered a wide range of products and brands at the lowest possible prices, eclipsed both ComputerLand and Businessland. Computers were becoming commodities, and low prices mattered most.

Bill Gates and Paul Allen were billionaires by 1989; Gates was the richest executive in the computer industry. Elsewhere in the industry, only Ross Perot and the cofounders of another high-tech firm, Hewlett-Packard, had reached billionaire status, but most of the leaders of the industry had net worths in the tens of millions, including Compaq’s Rod Canion and Dell Computers’ Michael Dell. In 1989, Computer Reseller News named Canion the second-most-influential executive in the industry, deferentially placing him behind IBM’s John Akers. But perspective matters: in the same year, Personal Computing asked its readers to pick the most influential people in computing from a list that included Bill Gates, Steve Jobs, Steve Wozniak, Adam Osborne, and the historical Charles Babbage. Only billionaire Bill made everyone’s list.

There was a lot of money being made, and that meant lawsuits. Like much of American society, the computer industry was becoming increasingly litigious. In 1988, Apple sued Microsoft over Windows 2.01, and extended the suit in 1991 after Microsoft released Windows 3.0. Meanwhile Xerox sued Apple, claiming that the graphical user interface was really its invention, which Apple had misappropriated. Xerox lost, and so, eventually, did Apple in the Microsoft suit, although it was able to pressure Digital Research into changing its GEM graphical user interface cosmetically, making it look less Mac-like.

GUIs weren’t the only contentious issue. A number of lawsuits over the “look and feel” of spreadsheets were bitterly fought at great expense to all and questionable benefit to anyone. The inventors of VisiCalc fought it out in court with their distributor, Personal Software. Lotus sued Adam Osborne’s software company, Paperback Software, as well as Silicon Graphics, Mosaic, and Borland, over the order of commands in a menu. Lotus prevailed over all but Borland, where the facts of the case were the most complex, but the Borland suit dragged on until after Borland had sold the program in question.

Borland was also involved in two noisy lawsuits over personnel. Microsoft sued Borland when one of its key employees, Rob Dickerson, went to Borland with a lot of Microsoft secrets in his head. Borland didn’t sue in return when its key employee, Brad Silverberg, defected to Microsoft, but it did when Gene Wang left for Symantec. After Wang left, Borland executives found email in the company’s system between Wang and Symantec CEO Gordon Eubanks, email that they claimed contained company secrets. Borland brought criminal charges, threatening not just financial pain but also jail time for Wang and Eubanks. The charges were eventually dismissed.

Through essentially the whole of the 1980s, Intel and semiconductor competitor Advanced Micro Devices (AMD) were in litigation over what technology Intel had licensed to AMD.

Meanwhile, in the lucrative video-game industry, everyone seemed to be suing everyone else. Macronix, Atari, and Samsung sued Nintendo; Nintendo sued Samsung; Atari sued Sega; and Sega sued Accolade.

By 1989 the pattern was clear, and it persisted into the next decade: personal computers were becoming commodities, increasingly powerful but essentially identical. They were made obsolete every three years by advances in semiconductor technology and software, where innovation proceeded unchecked. Personal computers were becoming accepted and spreading throughout society; the personal-computer industry had become big business, with ceaseless litigation and the focused attention of Wall Street; and this technology, pioneered in garages and on kitchen tables, was driving the strongest, most sustained economic growth in memory.

During the 1990s, Moore’s law and its corollaries continued to describe the growth of the industry. IBM had become just one of the players in what originally had been called the “IBM-compatible market,” then was called the “clone market,” and later was called just the “PC market.”

Within two decades, the personal-computer market, launched in 1975 with the Popular Electronics cover story on the Altair, surpassed the combined market for mainframes and minicomputers. As if to underscore this, in the late 1990s Compaq bought Digital Equipment Corporation, the company that had created the minicomputer market. Those still working on mainframe computers demanded Lotus 1-2-3 and other personal-computer software for these big machines. Personal computers had ceased to be a niche in the computer industry. They had become the mainstream.

Sun

As the center of the computing universe shifted toward PCs, other computer-industry sectors suffered. In particular, it was becoming a tough haul for the traditional minicomputer companies. Forbes magazine proclaimed, “1989 may be remembered as the beginning of the end of the minicomputer. [M]inicomputer makers Wang Laboratories, Data General, and Prime Computer incurred staggering losses.”

However, minicomputers were being squeezed out not by mainstream PCs but rather by their close cousins, workstations. These workstations were, in effect, the new top of the line in personal computers, equipped with one or more powerful, possibly custom-designed processors, running the Unix minicomputer operating system developed at AT&T’s Bell Labs, and targeted at scientists and engineers, software and chip designers, graphic artists, movie makers, and others needing high performance. Although they sold in much smaller quantities than ordinary personal computers, they sold at significantly higher prices.

The Apollo, which used a Motorola 68000 chip, had been the first such workstation in the early 1980s, but by 1989 the most successful of the workstation manufacturers was Sun Microsystems, one of whose founders, Bill Joy, had been much involved in developing and popularizing the Unix operating system.

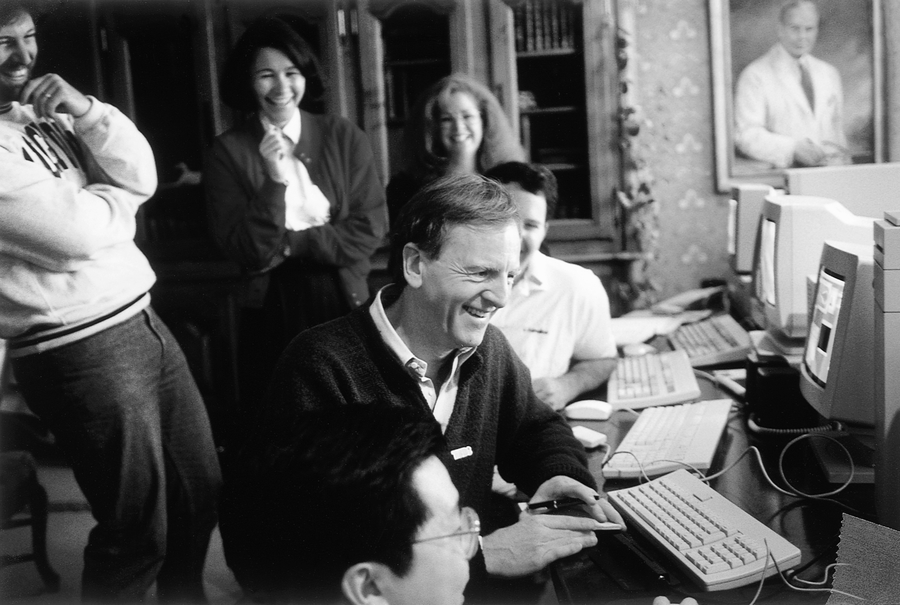

Figure 91. Sun Microsystems founders. From left to right: Vinod Khosla, Bill Joy, Andreas “Andy” Bechtolsheim, and Scott McNealy (Courtesy of Sun Microsystems)

Riding the general PC-industry wave, Sun had gone public in 1986, exceeded $1 billion in sales in six years, and become a Fortune 500 company by 1992. In the process, it displaced minicomputers and mainframes, and made workstation an everyday term in the business world.

But Sun now cast its eye on the general PC market, at the same time that Microsoft was taking steps to threaten Sun on its own turf.

Gates’s firm had a new operating system called Windows NT, which was intended to give business PCs all the power of workstations. McNealy decided to wage not only a technical war but also a public-relations war. In public talks and interviews, he ridiculed Microsoft and its products. Along with Oracle CEO Larry Ellison, he tried to promote a new kind of device, called a network computer, which would get its information and instructions from servers on the Internet. This device did not immediately catch on.

But Sun had a hidden advantage in the consumer market—its early, foursquare advocacy of networks. People were repeating its slogan, “the network is the computer,” and it seemed prescient as the Internet emerged.

Sun was a magnet for talented programmers who enjoyed the smart, free-spirited atmosphere of the Silicon Valley firm. In 1991, McNealy gave one of Sun’s star programmers, James Gosling, carte blanche to create a new programming language. Gosling realized that almost all home-electronics products were now computerized. But a different remote device controlled each, and few worked in the same way. The user grappled with a handful of remotes. Gosling sought to reduce them to one. Patrick Naughton and Mike Sheridan joined him, and they soon designed an innovative handheld device that let people control electronics products by touching a screen instead of pressing a keyboard or buttons.

The project, code-named Green, continued to evolve as the Internet and World Wide Web began their spectacular bloom. But more than the features evolved; the whole purpose of the product changed. The team focused on allowing programs in the new language to run on many platforms with diverse central processors. They devised a technical Esperanto, universally and instantly understood by many types of hardware. With the Web, this capacity became a bonanza.

Although the project took several years to reach market, Sun used the cross-platform programming concepts from Green, which became known as Java, to outmaneuver its competition. Sun promoted Java as “a new way of computing, based on the power of networks.” Many programmers began to use Java to create the early, innovative, interactive programs that became part of the appeal of websites, such as animated characters and interactive puzzles.

Java was the first major programming language to have been written with the Web in mind. It had built-in security features that would prove crucial for protecting computers from invasion now that this electronic doorway—the web connection—had opened them up to the world. It could be used to write programs that didn’t require the programmer to know what operating system the user was running, which was typically the case for applications running over the Web.

Java surprised the industry, and especially Microsoft. The software titan was slow to grasp the importance of the Internet, and opened the door for others to get ahead. But once in the fray, Gates made the Internet a top priority.

At the same time, Gates was initially skeptical about Java. But as the language caught on, he licensed it from Sun, purchased a company called Dimension X that possessed Java expertise, and assigned hundreds of programmers to develop Java products. Microsoft tried to slip around its licensing agreement by adding to its version of Java capabilities that would work only on Microsoft operating systems. Sun brought suit. Gates saw Sun and its new language as a serious threat. Why, if Java was a programming language, and not an operating system? Because the ability to write platform-independent programs significantly advanced the possibility for a browser to supplant the operating system. It didn’t matter if you had a Sun workstation, a PC, a Macintosh, or what have you; you could run a Java program through your browser.

And Sun was serious about pursuing its “the network is the computer” mantra to challenge Microsoft’s hegemony. In 1998, Sun agreed to work with Oracle on a line of network server computers that would use Sun’s Solaris operating system and Oracle’s database so that desktop-computer users could scuttle Windows. Moreover, Sun began to sell an extension to Java, called Jini, which let people connect a variety of home-electronics devices over a network. Bill Joy called Jini “the first software architecture designed for the age of the network.” Although Jini didn’t take the world by storm, something like Sun’s notion of network computing and remotely connected devices would re-emerge in the post-PC era in cloud computing and mobile devices.

The NeXT Thing

As Apple Computer struggled to survive in a Microsoft Windows-dominated market, Steve Jobs was learning to live without Apple. When he left, he gathered together some key Apple employees and started a new company.

That company was NeXT Inc., and its purpose was to produce a new computer with the most technically sophisticated, intuitive user interface based on windows, icons, and menus, equipped with a mouse, running on the Motorola 68000 family of processors. In other words, its purpose was to show them all—to show Apple and the world how it should be done. To show everyone that Steve was right.

NeXT and Steve Jobs were quiet for three years while the NeXT machine was being developed. Then, at a gala event at the beautiful Davies Symphony Hall in San Francisco, Steve took the stage, dressed all in black, and demonstrated what his team had been working on all those years. It was a striking, elegant black cube, 12 inches on a side. It featured state-of-the-art hardware and a user interface that was, in some ways, more Mac-like than the Mac. It came packaged with all the necessary software and the complete works of Shakespeare on disc, and it sold for less than the top-of-the-line Mac. It played music for the audience and talked to them. It was a dazzling performance, by the machine and by the man.

Technologically, the NeXT system did show the world. While the Mac had done an excellent job of implementing the graphical user interface that Steve had seen at PARC, the NeXT machine implemented much deeper PARC technologies. Its operating system, built on the Mach Unix kernel from Carnegie-Mellon, made it possible for NeXT engineers to create an extremely powerful development environment called NeXTSTEP for corporate custom software development. NeXTSTEP was regarded by many as the best development environment that had ever been seen on a computer.

Jobs had put a lot of his own money into NeXT, and he got others to invest, too. Canon made a significant investment, as did computer executive and occasional presidential candidate Ross Perot. In April 1989, Inc. magazine selected Steve Jobs as its “entrepreneur of the decade” for his achievements in bringing the Apple II and Macintosh computers to market, and for the promise of NeXT.

NeXT targeted higher education as its first market, because Jobs realized that the machines and software that graduate students use are the machines that they will ask the boss to buy them when they leave school. NeXT made some tentative inroads into this target market. It made sense to academics to buy machines for which graduate students, academia’s free labor force, could write the software. NeXTSTEP meant that you could buy the machine and not have to buy a ton of application software. Good for academic budgets, but not so good for building a strong base of third-party software suppliers.

The company had some success in this small market, and a few significant wins. But after its proverbial “15 minutes’ worth,” the black box was ultimately a commercial failure. In 1993, NeXT finally acknowledged the obvious and killed off its hardware line, transforming itself into a software company. It immediately ported NeXTSTEP to other hardware, starting with Intel’s. By this time, all five of the Apple employees that Jobs had brought along to NeXT had left. Ross Perot resigned from the board, saying it was the biggest mistake he’d ever made.

The reception given to the NeXT software was initially heartening. Even conservative chief information officers who perpetually worry about installed bases and vendor financial statements were announcing their intention to buy NeXTSTEP. It got top ratings from reviewers, and custom developers were citing spectacular reductions in development time from using NeXTSTEP, which ran “like a Swiss watch,” according to one software reviewer.

But for all the praise, NeXTSTEP did not take the world by storm. Not having to produce the hardware its software ran on made NeXT’s balance sheet look less depressing, but NeXTSTEP was really no more of a success than the NeXT hardware. While custom development may have been made easy, commercial applications of the “killer app” kind, which could independently make a company’s fortune, didn’t materialize. NeXT struggled along, continuing to improve the operating system and serve its small, loyal customer base well, but it never broke through to a market share that could sustain the company in the long run without Jobs’s deep pockets.

The Birth of the Web

What one user of a NeXT computer did on his machine, though, changed the world.

Electronics enthusiasts in Albuquerque and Silicon Valley didn’t invent the World Wide Web, but its origin owes much to that same spirit of sharing information that fueled the first decade of the personal-computer revolution. In fact, it could be argued that the Web is the realization of that spirit in software.

The genesis of the Web goes back to the earliest days of computing, to a visionary essay by Franklin Delano Roosevelt’s science advisor, Vannevar Bush, in 1945. Bush’s essay, which envisioned information-processing technology as an extension of human intellect, inspired two of the most influential thinkers in computing, Ted Nelson and Douglas Engelbart, who each labored in his own way to articulate Bush’s sketchy vision of an interconnected world of knowledge. Key to both Engelbart’s and Nelson’s visions was the idea of a link; both saw a need to connect a word here with a document there in a way that allowed the reader to follow the link naturally and effortlessly. Nelson gave the capability its name: hypertext.
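The idea of a link can be sketched in a few lines of code. The example below is only an illustration of the concept described above, not a reconstruction of anything Bush, Engelbart, or Nelson built; the page names, the text, and the functions `follow` and `read` are all hypothetical.

```python
# Hypothetical pages: each has some text and a table of links that maps
# a word in the text to the name of another page.
PAGES = {
    "essay": {
        "text": "An essay that mentions a trail of associated documents.",
        "links": {"trail": "notes"},
    },
    "notes": {
        "text": "Notes reached by following the word 'trail'.",
        "links": {},
    },
}


def follow(page_name, word):
    """Return the name of the page that `word` on `page_name` links to."""
    links = PAGES[page_name]["links"]
    if word not in links:
        raise KeyError(f"'{word}' is not a link on page '{page_name}'")
    return links[word]


def read(page_name):
    """Return the text of a page, as a reader would see it."""
    return PAGES[page_name]["text"]
```

Calling `follow("essay", "trail")` returns `"notes"`, and `read("notes")` then gives the linked document's text: a word here connected to a document there.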

Hypertext was merely an interesting theoretical concept, glimpsed by Bush and conceptualized by Nelson and Engelbart, without a global, universal network on which to implement it. Such a network was not developed until the 1970s, at the Defense Advanced Research Projects Agency (DARPA) and at several universities. The DARPA network (DARPAnet) didn’t just link individual computers; it linked whole networks together. As the DARPAnet expanded, it came to be called the Internet, a vast global network of networks of computers. The Internet finally brought hypertext to life. With the DARPA programmers having developed a method for passing data around the Internet, and the personal-computer revolution having put the means of accessing the Internet in the hands of ordinary people, the pieces of the puzzle were all on the table.

Tim Berners-Lee, a researcher at CERN, a high-energy research laboratory on the French-Swiss border, created the World Wide Web in 1989 by writing the first web server, a program for putting hypertext information online, and by writing the first web browser, a program for accessing that information. The information was displayed in manageable chunks called pages.

It was a fairly stunning achievement, and it impressed the relatively small circle of academics who could use it. That circle would soon expand, thanks to two young men at the University of Illinois. But the NeXT machine on which Berners-Lee had created the Web was no more.

Nevertheless, the NeXT saga was not over.

Cyberspace

There was no particular reason why it took two guys at the University of Illinois to do it, any more than it should have taken two guys in Sunnyvale to do the Apple I. It’s just that sometimes the establishment needs a kick in the pants.

-Marc Andreessen, cofounder of Netscape

In 1994, Microsoft was riding high and Bill Gates was a billionaire. But that didn’t mean he wasn’t still driven by fear.

No matter that he was the richest person in America, viewed by most people as the symbol, and possibly the inventor, of the personal-computer revolution. Nor did it matter that he was the founder and leader of the company whose products dominated most of the industry. Gates truly believed that he and Microsoft held their position only by virtue of constant vigilance, aggressive competition, and tirelessly applied intelligence. He was convinced that some bright young hacker somewhere could knock out a few thousand lines of clever code in a couple of months that would change the rules of the game, marginalizing Microsoft overnight.

Gates knew about these hackers because he had been one. He had known the thrill of proving your mettle by outsmarting a giant corporation. Now he was on the other side, and somewhere out there he could picture the bright young hacker who would one day succeed in outsmarting him.

Microsoft’s Muscle

Microsoft continued to push into new areas. When the OS/2 deal fell apart, an operating-system project that had been quietly in development since 1988 was pulled to center stage, given serious funding, and dubbed “Windows NT.” NT would be sold into “corporate mission-critical environments”—chiefly the server market, which was then dominated by the venerable Unix operating system.

Server computers provided resources to—or “served”—computers connected to them on a network. File servers were like libraries, holding shared files; application servers held application programs used by many machines; mail servers managed electronic mail for offices. Servers were increasingly being used in business and academia, tended to be more expensive than individual users’ computers, and, because many users depended on them, were typically maintained by technically sophisticated personnel. And they typically ran the Unix operating system.

Unix came on the scene in 1969. Invented by two Bell Labs programmers, Ken Thompson and Dennis Ritchie, it was the first easily portable operating system, meaning that it could be run on many different types of computers without too many modifications. As Unix was stable, powerful, and widely distributed, it quickly became the operating system of choice in academia, with two results: lots of people wrote utility programs for it, which they distributed free of charge, and virtually all graduating computer scientists knew Unix inside and out. On the job, they typically had control of a server, and on it they preferred to run the familiar Unix, with its large, loose collection of utility programs.

Microsoft hoped to supplant Unix and take over the server market.

In addition to its NT project, Microsoft continued to exert a powerful influence over its MS-DOS and Windows OEMs. The control extended to dictating what icons representing third-party programs could appear on the user’s desktop when the computer first started up.

In 1994, Compaq, by then the leading personal-computer maker, decided to install a program on all its machines that would run “in front of” Microsoft Windows. This small “shell” program would display icons that let the user start selected programs. Although this shell program was very simple and wouldn’t supplant Windows, it would have undermined the gatekeeper role that Windows was coming to play, and with it Microsoft’s control of the user’s desktop.

“We’ve got to stop this,” Gates said.

And he did. What was said, what was intimated, may never be known, but Compaq removed the program.

Compaq also backed down two years later, when Microsoft threatened to stop selling it Windows unless Compaq included Microsoft’s Internet browser on its machines.

Microsoft had become a powerhouse and Bill Gates was not reluctant to use that power.

The Internet Threat

In the 1980s, online systems had become a big thing. These were an outgrowth of the early computer bulletin-board systems, or BBSs, which offered a public area for posting messages to other users, much like a typical “corkboard” bulletin board found in an office. BBSs provided content, such as news services, discussion groups, stock quotes, and electronic mail, to their subscribers. CompuServe, Prodigy, America Online, and others maintained their own proprietary systems, which users could access with a local phone call.

When the Internet blossomed in popularity in 1994, propelled by the World Wide Web, the online systems began having trouble justifying their existence independent of the Internet, and all began offering Internet access. The sudden popularity of the Internet and the World Wide Web began changing the whole nature of computing, shifting the emphasis away from the operating system and the individual desktop to the network.

Every company struggled to forge an Internet strategy. New companies emerged to take advantage of this shift in the market. Amazon and others exploited radical new forms of electronic commerce. Cisco Systems provided the networking infrastructure for this new market.

Microsoft responded promptly, trying several approaches to carve out its profits from the phenomenon, and just as promptly cutting these attempts short when the market moved in a different direction. The company hadn’t settled on an Internet strategy, but it responded quickly to the rapidly changing landscape, more easily than most large companies could.

Perhaps it was because of the structure Gates installed when Hallman left.

In 1992, Gates set up an organizational arrangement that he could live with: the Office of the President. It was also known as BOOP—Bill and the Office of the President. It consisted of Bill and three close friends: Steve Ballmer, Mike Maples, and Frank Gaudette. By this time these friends had been influenced by Gates, and had arguably influenced him to the extent that he could trust them to make decisions he’d approve of.

He had succeeded in spreading himself thinner.

For so large a company as Microsoft to be able to change directions so quickly was impressive. The biggest fish in the pond and maneuverable, too: Microsoft in the mid-1990s dominated the personal-computer industry and seemed utterly invincible.

Until that bright young hacker came along.

Creating Cyberspace

One of the places where Tim Berners-Lee’s achievement in creating the Web was fully appreciated was at the National Center for Supercomputing Applications (NCSA) at the University of Illinois’s Urbana-Champaign campus. NCSA had a large budget, a lot of hot technology, and a large staff with “frankly, not enough to do,” according to one of the young programmers privileged to work there.

Even at $6.85 an hour, Marc Andreessen saw it as a privilege to work at NCSA. He was a sharp undergraduate programmer who loved being in an environment where he could talk about Unix code. Andreessen looked at what Berners-Lee had done and saw the potential of the Web, but he also saw that potential being restricted to a few academics, accessed on expensive hardware through archaic, arcane software. The opportunity to open up the Web to everyone looked to him like “a giant hole in the middle of the world.”

Riding in friend Eric Bina’s car one night late in 1992, Andreessen put the challenge to Bina: “Let’s go for it,” he said. Let’s fill that hole.

Figure 92. Marc Andreessen. After cocreating the first visual web browser while a student at the University of Illinois, Andreessen cofounded Netscape.

(Courtesy of Netscape Communications Corp.)

They coded like mad. Between January and March of 1993, they wrote a 9,000-line program called Mosaic. It was a web browser, but not like Berners-Lee’s. Mosaic was a browser for the GUI generation, a web browser for everyone. It displayed graphics, it let you use a mouse and click on buttons to do things—no, to go places. Mosaic completed the process of turning abstract connection into place; using Mosaic, one had a compelling sense of going from one location to another in some sort of space. Some called it cyberspace.

This was exactly what Bill Gates had feared: some bright young hacker—well, two—had knocked out a few thousand lines of clever code in a couple of months that would change the rules of the game forever, putting the biggest software company in the world on the defensive, and threatening everything Gates had built.

Andreessen and Bina released Mosaic on the Internet. They signed on other NCSA kids to port Mosaic from the Unix operating system to other platforms, and released those on the Internet, too. Millions of people downloaded it. No piece of software had ever got into so many hands so quickly.

This thing let you travel the planet, virtually. It was amazing. It let you read about Shakespeare on a computer in a New York library, click once to zip across the ocean to England to look at a picture of the Globe Theater, click again to return to the stacks to read Hamlet—except that they’re different stacks. This copy of Hamlet happens to be on a website in Uzbekistan. Doesn’t matter; terrestrial geography isn’t relevant in cyberspace. You can browse a world of information without leaving your chair. None of this could happen until people had created the websites, but this took place in tandem with the spread of Mosaic. Everyone who tried it “got it”; Mosaic was a hit and Andreessen was a hero.

In December 1993, Andreessen graduated from college, wondering what to do for an encore. Gravitating to Silicon Valley, he met Jim Clark, founder of Silicon Graphics. Clark was impressed by Mosaic and by Andreessen’s grasp of the potential of the Web. By April 1994, the two had founded a company, first called Electric Media, then Mosaic Communications, and finally Netscape Communications. They were going to produce software in support of this new thing, this World Wide Web.

The Browser Boom

They were not alone. By midyear there were dozens of web browsers: some free, some commercial; some available for Windows and the Macintosh and Unix, some platform-specific; some stripped-down, some festooned with bells and whistles. In addition to Mosaic, there were MacWeb, WinWeb, Internetworks, SlipKnot, Cello, NetCruiser, Lynx, Air Mosaic, GWHIS, WinTapestry, WebExplorer, and others.

Personal web pages became a fad. So did new uses of the Web, such as ordering pizza. Webcams were another fad—digital cameras feeding a continuous stream of pictures to a website. You could visit a website and watch coffee perk at MIT, check the commute traffic on Highway 17 coming up from the Santa Cruz beaches into Silicon Valley, or monitor the waves along the California coast. Steve Wozniak set up a Wozcam so friends could watch him work. The Web was a wave and Clark and Andreessen were riding it.

They hired Eric Bina and other NCSA kids from Mosaic, wrote a new browser from scratch, and made it as bulletproof and nifty as they knew how. By October 1994, they had a beta version out on the Internet. By December, they were shipping the release version of Netscape Navigator, along with other web software products. By the end of 1996, 45 million copies of Navigator were in people’s hands. The company was growing at a delirious rate, and Clark brought in industry veteran Jim Barksdale, who was widely respected for his management of McCaw Cellular Communications, as president.

Netscape was perceived in the industry, and on Wall Street, as a hot property. One admirer was Steve Case of America Online (AOL), the premier online service. He offered to put up money for Netscape’s first round of financing, but Clark turned him down, concerned that AOL’s involvement might put off potential customers who considered AOL to be competition. On August 9, 1995, Netscape went public with an IPO of five million shares at $28 each. Its stock doubled by the end of the day, and Netscape was suddenly worth billions.

That year, Microsoft responded.

In May 1995, Gates had already described the Internet to his staff as “the most important single development to come along since the IBM PC in 1981.” In December, he announced publicly that the Internet would be pervasive in all that Microsoft did. Netscape’s stock dropped 17 percent and never recovered. Microsoft was going to enter the browser market. It did, rapidly and impressively, licensing some browser technology and developing its own browser, Internet Explorer, to compete with Netscape’s browser. The company launched by the young coder and the industry veteran was, in Gates’s opinion, a threat to Microsoft’s existence, and it needed to be snuffed out.

Gates was not alone in seeing the Internet as a threat to Microsoft’s dominance in the PC market. Bob Metcalfe, the networking guru who had developed the Ethernet protocol, wrote a weekly column for the industry magazine InfoWorld. In February 1995, he predicted that the browser would in effect become the dominant operating system for the next era. The dominant operating system for the current era was, of course, Microsoft’s Windows. Metcalfe was predicting that Windows’s dominance was on the verge of ending.

How, exactly, could a browser replace an operating system? Partly by providing the same capabilities; Netscape Navigator could launch application programs, display directories of files, and do most of the things an operating system did. Partly by making the choice of operating system invisible and irrelevant—Navigator ran on Macs and PCs and workstations and looked and acted the same on all of them—and partly by moving the center of the computing universe. Sun Microsystems had a slogan, “the network is the computer.” With Navigator, it didn’t matter if an application program or a data file was on your hard disk, on a server in the next office, or on a computer in another country. It didn’t matter what computer—Mac, PC, or Amiga—it was on; it just mattered whether you could get to it with your browser.

If the network was the computer, the browser was the operating system, and single-computer-based operating systems were irrelevant. Gates wasn’t going to let Windows become irrelevant.

Over the next few years, Microsoft, Netscape, and other companies whose interests intersected at the Internet crossroads performed a complex dance. Mostly it was Microsoft against everybody else, but there were complications.

Browser Wars

One of the most significant of those other companies was Sun Microsystems.

AOL was continuing a courtship with Netscape and a battle with Microsoft.

When Jim Clark rebuffed AOL’s offer to buy into Netscape, AOL purchased a browser from another source—grabbing the other browser just before Microsoft could—but Steve Case was still interested in both Netscape and its browser. The browser was regarded as the best available, and the company was a hot property. But there was another reason for his interest in Netscape: most AOL executives viewed Netscape’s team as people they could relate to. At AOL, Netscape was viewed as a natural ally in the war against Microsoft.

Microsoft had been steadily moving into AOL’s territory, online systems, for years. Although AOL was enabling people to get on the Internet with its web browser, its business was primarily as an online service. Since the 1980s, online services had provided Internet connections, hosted electronic discussion groups, and offered email services. All these were also available on the Internet, but online services were a lot easier to work with, and familiar. Microsoft had entered this market with its Microsoft Network, MSN. AOL was still the unchallenged leader of the online companies, but it was worried.

The future of online companies was becoming cloudy as browsers made the Internet easier to navigate. That was why AOL needed a browser and why it was interested in Netscape, and it was why Microsoft was willing to undercut its own MSN in order to beat Netscape.