Institutionalization of UX: A Step-by-Step Guide to a User Experience Practice, Second Edition (2014)

Part III. Organization

As the business world better understands and values UX, executive championship is becoming increasingly easy to obtain. The infrastructure can be bought and customized. The Organization phase—the people side of things—is where you will face the most serious challenges in institutionalizing UX.

No one wants a scenario where an executive wants excellence in customer experience and money invested in a UX infrastructure, but then finds that there are no people who can actually do the work. Having other people in the organization fail to include your user experience team in their projects would be equally problematic.

In Part III, we will first cover governance, which is how we ensure that the UX process is controlled, that user experience work is really performed, and that the institutionalization process is growing in maturity. It is all too easy to have a UX team sidelined, or called in only when it is too late to take advantage of their expertise. UX teams can also degrade over time and travel backward on the road to full institutionalization.

Some organizations have poor design quality simply because the organizational structure is inappropriate. There is more involved in a good UX process than just having a user experience team. The team must report to the right part of the organization—and it is important that they have the right structure and roles. Only in that way can the UX infrastructure be supported, and projects be completed effectively.

You will find that there is a huge demand for user experience work in your organization. But it is fanciful to think it can be done without enough UX practitioners! I recently talked to a major technology company that was doubling its team size, which would give the company approximately 1.2% of the people needed to do the work. I reminded this company that there is no substitute for trained practitioners.

With the right capabilities in place, UX work can move forward. Initial showcase projects become large-scale programs that occur on a routine basis. Quality designs are produced. Outstanding designs may be produced. But the real measure of success and maturity is that user-centered design becomes a routine part of the organization.

Chapter 10. Governance

Pay attention to governance; it is likely to be your biggest challenge.

Understand your organization’s culture and remove impediments to being customer centered.

Create a meme that resonates with your organization’s imperatives.

Counteract the Dunning-Kruger effect with training.

Design processes that close the loop so the executive champion can see if the UX initiative is working properly.

Today, there is rarely a serious problem with executive championship. Top executives are informed enough to know the value of a serious user experience design operation. They help plan the strategy to build an organization’s user experience design capability, fund the program, and remove political obstacles that might hinder its evolution.

The infrastructure to do good user experience design is rarely a problem now. You can buy a coherent set of methods, standards, and tools. It is not that difficult to adapt them to your unique needs.

But risks to implementation of user experience design remain in the area of governance. Top management clearly demands great user experience design; these executives ask for world-class practices. But all too often those practices aren’t put in place, or they are implemented inconsistently—that is, there is a degree of user experience design in some of the organization’s processes, sometimes, depending on the charisma of the user experience design leaders. How does this happen? And what can you do about it?

The Roots of the Governance Problem

User experience design is reasonably straightforward. It is a practice based on a field of research and best practices—but it also represents a profound shift in the way things get designed. Organizations start out with a way that things get done. Just as there is an established process and established realities of political power, so there is an established focus for design (think technology, process, product management, or executive fiat). Changing this reality to a customer-centered process and focus not infrequently means pushing against years of organizational culture. It takes a lot of momentum to make such a change. Even when such a shift is made, there is a tendency for processes to snap back to their old configuration: take your hand off the design process for a moment—just blink—and you find everyone has reverted to the non-user-centered configuration.

HFI recently worked with a bank that had a long history of process-driven design, complicated by business staff inserting design ideas in a somewhat random and capricious manner. An HFI team spent 18 months on a showcase program. We developed a very advanced UX strategy, and then a solid and validated structural design. After just a few weeks away, I returned to find that the detailed design had been planned—but the planning process involved business analysts only, and the company was back to a process-oriented approach. Instead of designing screens that fit into the structural design, members of the bank’s staff were picking processes based on a functional analysis and were planning to design each in isolation. If you do that, then you design recurring payment in isolation—and you tend to make an interface where recurring payment is a menu choice. The reality is that customers don’t think of recurring payment, but rather see it as just one type of “payment.” So the team had thrown 18 months of design out the window just in their planning process. We had to catch that, reeducate them, and get the design reworked.

Along with the inertia of the old process, vested interests may also thwart the transition to a user-centered process. User experience design is one of those fields where everyone is an expert. Making random and unsupported design decisions will be the highlight of some people’s day. Those staff feel quite upset when you switch to an evidence-based, process-driven, validated process. In response, they will often work to marginalize the user experience design team. Excluding the user experience design team is one of the most common issues—or at least excluding them until the very end of the development process, when it is impossible to do much that’s useful.

Memes That Kill

There are always ideas flowing around an organization that have the potential to derail a user experience design transformation. Organizations vary in their beliefs, but it is worthwhile to diagnose the specific, retrograde ideas prevalent within yours. You can then attack them as a part of the cultural change.

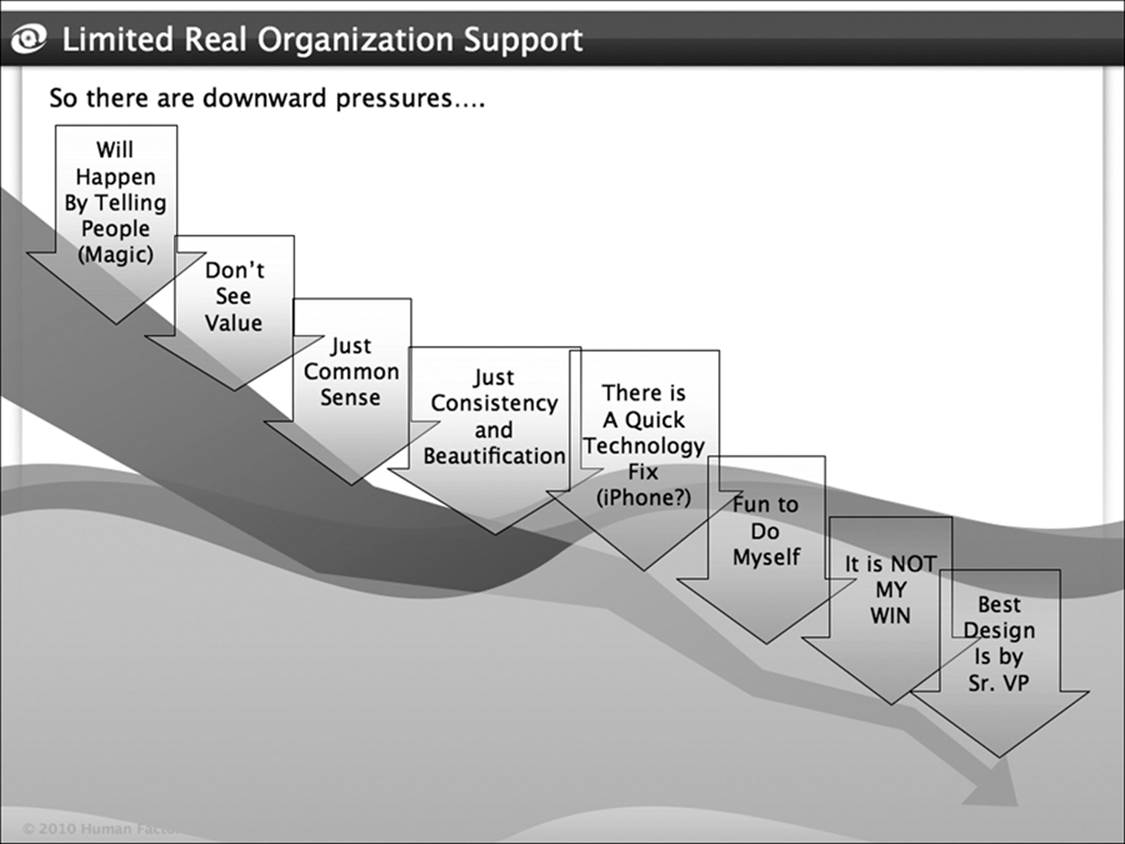

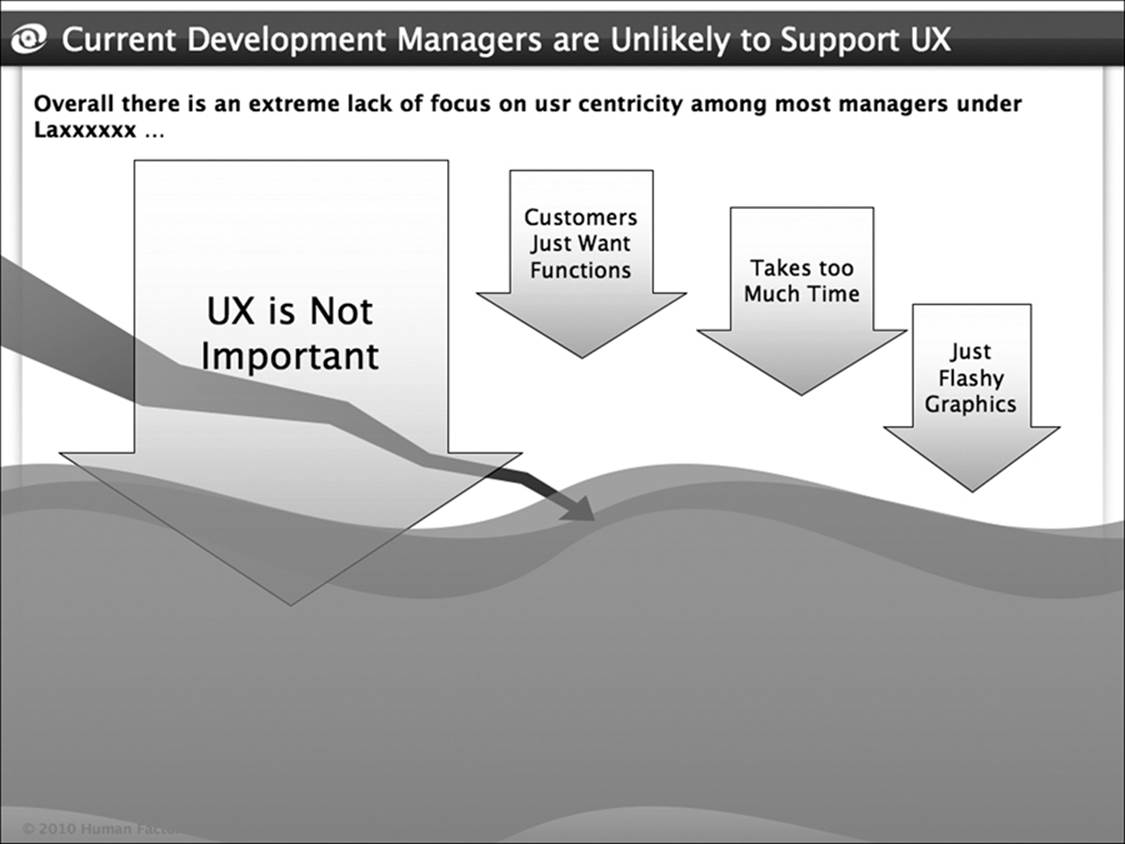

These memes are diverse (see Figures 10-1 and 10-2), but they share a few common roots. The first of these memes is that user experience design is not really important. People working hard on server efficiency may think that success is a function of fast response time. When you see everything in terms of milliseconds, user experience design objectives seem secondary. “Nothing works if the servers don’t function,” they will say. The fact is that all competitors have functional servers today—or simply use the cloud. Thus it is primarily user experience design that provides differentiation.

Figure 10-1: Example of particularly numerous and problematic memes in a bank attempting a user experience design transformation. This organization did not create a practice that thrived.

Figure 10-2: Example of a more typical set of problematic memes in a product development company that was attempting a user experience design transformation. This organization succeeded nicely.

The second cluster of evil memes hovers around the misperception that everyone is a user experience design expert. It’s a lot like the near-universal expertise in branding. There are numerous corollaries of this fallacy, such as “It’s just a matter of common sense!” Another holds that all you really need for good UX design is motivated employees. So the user experience design journey devolves into a motivational speech, bonuses around survey scores, or a few well-placed KITAs (kicks in the ass). Then there’s the all-too-common notion that design can be done by senior executives based on intuition, anecdotes, and comments from relatives. Because being senior means those executives are exceptional people, this rationale states, it must also mean they are exceptional human factors engineers. For senior executives, it’s a recreational break, especially when they are unencumbered by measurable assessments of their efforts or, for that matter, by education, training, certification, or cracking a serious book.

The third and last meme insists that user experience design is inefficient and expensive. I like to say that “Usability is free.” And that is true—it is the reason that user experience design is becoming so commonplace. But many people have a very different experience: immature user experience design is expensive and slow. Taking someone with a limited amount of training; tossing him or her into a corner of an organization without accepted processes, standards, templates, knowledge bases, and team support; and loading that person with top-priority projects—that is not a recipe for efficiency. In other cases, user experience design consultancies are hired that contract for large research projects that yield volumes of shelfware—or solve the wrong problem altogether—because the organization has a culture of Ready, Fire, AIM! When these immature approaches characterize the UX effort, management hopes something useful and efficient happens. Of course, hope is not a strategy.

It is hardly surprising that many people experience user experience design as impractical. Mature user experience design requires that all the parts come together. You need an organization in which user experience design work is done on each and every project as a matter of course, and you need trained and certified staff working in a cohesive UX operation. Your staff needs access to methods, templates, standards, and the history of previous work.

The result of a mature user experience design capability is that the work becomes far more efficient than it is in an organization where the person making user experience design decisions is just guessing. You save time and money because the process is faster, cheaper, and better. You don’t have to redo work. You discover changes that are needed early, when making changes is easy. Most importantly, you don’t pay the price for failures.

Education Helps

One of the most universal roots of governance problems is the Dunning-Kruger effect [Kruger and Dunning 1999]. In the brilliant work by Kruger and Dunning, we see that people who know very little about a topic tend to substantially overestimate what they know. This gives rise to the frustrating and debilitating tendency of completely unqualified staff, often with executive positions in the organization, to make design pronouncements. A recent example is the insistence that the action buttons should be purple because that will cause more people to select them.

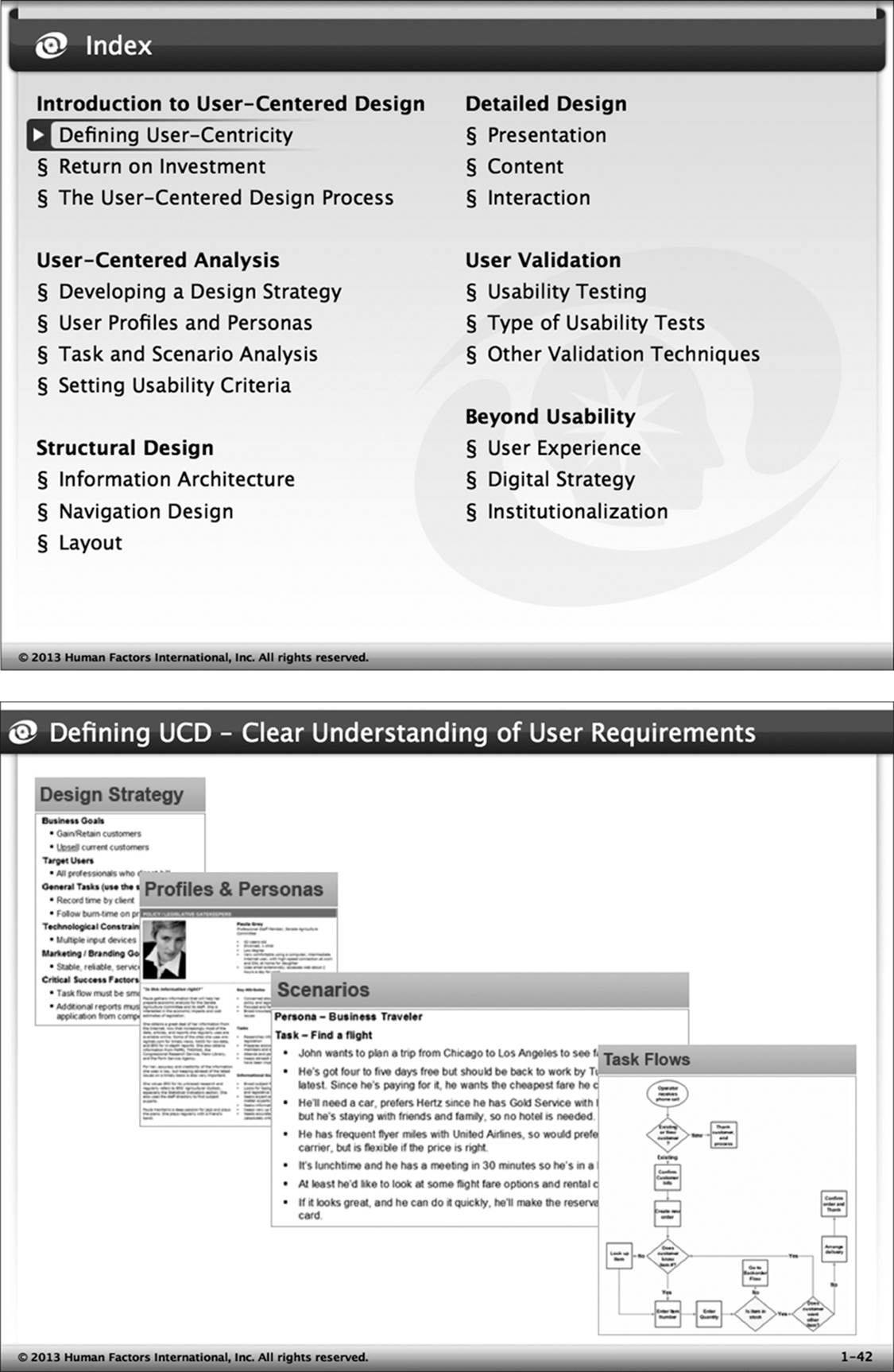

There is only one antidote mentioned for the Dunning-Kruger effect: education. As we make people smarter about a topic, the first thing that happens is they get a sense of what they don’t know. Give people a day of training on user experience design and they will certainly not get a solid foundation to do the work. But they will gain some level of respect for the fact that UX is not common sense and not trivial, and that it cannot be addressed by random guesswork. At HFI, we have built training specific to that purpose (Figure 10-3). It was a departure for us, as we have generally built professional-level training to provide a solid set of skills. Nevertheless, we found that there was a need for training that quickly creates a general appreciation for UX.

Figure 10-3: Slides from training specifically geared to educating managers, instead of providing usable skills. Such training mostly helps executives appreciate what they don’t know.

Verify That a Methodology Is Applied

It is still common to find organizations with dozens of user experience design staff working on an ad hoc basis. They dash about, reviewing designs when they can find them and have time. They sit in group design sessions (which usually have a fancy new name for what is, at its core, the old Joint Application Development process). This kind of immature process is not any faster, cheaper, or better than the past techniques.

The organization must have a set of documented methodologies that covers most of the user experience design functions that are needed. It is true that user experience design work is not done from recipes in a cookbook; these are often big and complex programs. Even so, the methodological document provides real value. In particular, it acts as a planning guide. When you see what is involved in a best-practices structural design program, you are unlikely to ask for it to be done in two weeks. These methods concretize what has to be accomplished. Consequently, there must be a full set of methods that cover most of the user experience design work. The methods also make communication far easier. For 20 years at HFI, we have just said, “I’m working on a UIS” (user interface structure), and everyone basically knows what will happen.

Having a methodology in place is a baseline requirement, but getting a method is straightforward: you can buy one, or buy it and customize it. Making sure that the methodology is implemented is a more involved process.

Whatever your methodology, the question that should guide it is this: “Are the projects following the prescribed user-centered process?” Sadly, many professionals don’t even know what that means! It does not mean that you care about users, nor does it mean that you have user experience design staff employed. It does not even mean that you follow your methodology. Instead, it means that the first thing you work on is the user’s experience. Having completed that step, you then build out the processes, business models, and technology to make it happen. In terms of governance of such a user-centered design program, you need to have methods in place so that the first steps deal with defining the needs of the user and crafting a planned model of the future user’s experience.

Even if you have a user experience design methodology, your approach can shift all too easily to technology-centered design (which starts with the database or the technical framework) or function-centered design (preparing a set of banking methods out of a high school textbook, for example, without researching what your customers and staff will really do). The staff might keep the user experience design team in the dark while performing the initial “preparatory work,” and then ask for forgiveness for not starting with a true, user-centered process. It might not seem a significant misstep, but in effect, it fundamentally undermines the whole user experience design practice and assures that the user experience design team will not be able to succeed. In essence, the “old-timers” in staff and management, invested and comfortable with previous processes and systems, have consigned the user experience design team to screen beautification and cleaning up the mess.

In this situation, things quickly devolve into a “finger in the dike” scenario, in which the user experience design staff dashes about, trying unsuccessfully to manage the cascading effects of a bad design. For staff resistant to a transformation toward user-centered design, that kind of chaos can be fun to watch. Of course, it might not be so fun to have their company fail due to lack of differentiation and a poor market position. That happens, and not infrequently.

Once the user experience design team is on board, engaged, and involved from the start of the project, it’s essential to make sure that the work described in the methodology reflects the correct level of effort and is actually completed. A very general recommendation is a useful rule of thumb: the user experience practice burns approximately 10% of the budget and approximately 10% of the headcount on a given project. In reality, of course, the question is more complicated.
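As a rough illustration of the 10% rule of thumb, the sketch below compares the UX resources planned for a project against that benchmark. The `Project` fields, the `ux_gap` helper, and the example figures are all hypothetical; they are not drawn from HFI's planning practice, and a real estimate involves far more judgment than a single ratio.

```python
from dataclasses import dataclass

# The chapter's rule of thumb: roughly 10% of budget and 10% of headcount.
UX_SHARE = 0.10


@dataclass
class Project:
    name: str            # hypothetical project label
    budget: float        # total project budget, in any currency
    headcount: int       # total people assigned to the project
    ux_budget: float     # amount actually planned for UX work
    ux_headcount: float  # full-time-equivalent UX staff actually assigned


def ux_gap(project: Project, share: float = UX_SHARE) -> dict:
    """Return how far planned UX resources fall short of the benchmark.

    Positive values mean the project is under-resourced relative to
    the rule of thumb; negative values mean it exceeds the benchmark.
    """
    target_budget = project.budget * share
    target_headcount = project.headcount * share
    return {
        "budget_gap": target_budget - project.ux_budget,
        "headcount_gap": target_headcount - project.ux_headcount,
    }


# Illustrative numbers only.
example = Project("Online banking redesign", 2_000_000, 40, 120_000, 2)
gap = ux_gap(example)
print(gap)
```

A positive gap is only a prompt to ask questions. It does not show that the plan is wrong, since the right level of effort depends on the risk and scope of the particular project.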

It is difficult to figure out what is really needed to establish a user experience practice and transform an organization’s development culture so that it is based on user-centered processes. At HFI, the people who decide what we bid on a project are highly skilled and receive special training. They have to be tested and certified. I meet with them weekly. Even then, they still have to kick ideas around with a client organization and ask lots of questions—and they very occasionally miss the mark.

Project planning in user-centered design means balancing cost and risk. There are risks to quality decisions, and risks to time-to-market decisions. The project needs to fit into the development culture and strategy of the organization. All the while, it must maintain good practices. The stakes for getting this right are very high.

In most cases, you can immediately spot the stupid stuff—for example, people wanting to test three subjects, major reliance on focus groups or surveys as a way of gathering data or assessing designs, projects proceeding without human performance or experience objectives, nothing done until a test at the end. Given that you have gotten this far in the book, we won’t need to spend any time on these very basic mistakes.

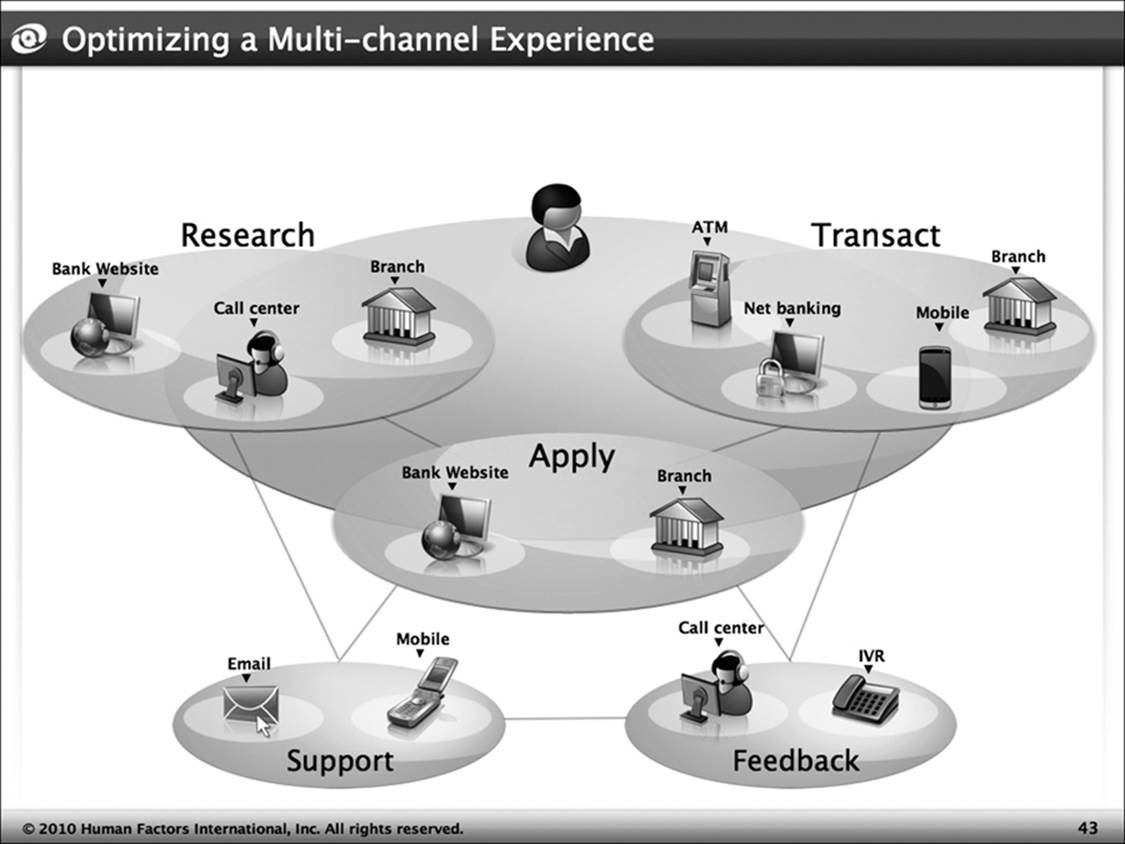

The most important foundation for user experience design is a solid UX strategy for the overall cross-channel solution. This has two elements. The first part is the motivational aspect: why customers will want to convert and how they will be retained. The second part is the overall design and alignment of the multiple channels that customers will access (e.g., browser, mobile applications, call centers, stores). Without this top-level strategic design, you will almost certainly create a set of siloed and uncoordinated offerings, whose purpose and position will not align.

Today, many companies try to substitute “be like Apple” for a real UX strategy. On the face of it, that is not a bad idea. Apple has done a wonderful job at providing an ecosystem solution in which all the parts hang together. But Apple also has a unique position and style. Would that style be the best choice for a low-cost superstore? No, of course not. Thus the first challenge in developing a strategy is designing the right emotional schema—that is, understanding why people convert and are retained.

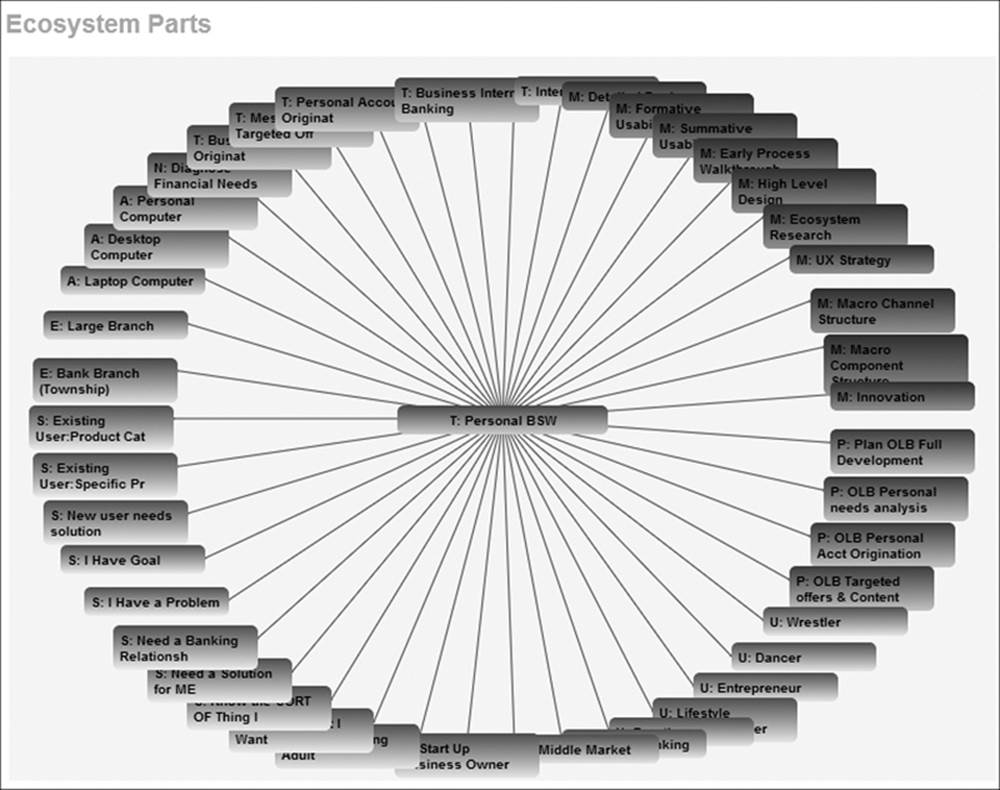

The second challenge is to identify how the various channels will be understood (Can users even figure out which channel to use?), interact (Are there places where the call center appears as a part of the Web site?), and align. We need to consider if there is a need for a pervasive information architecture, where the structure and language of offerings and functions align between channels (Figure 10-4). For example, do the tabs on the Web site and the signs in the store have the same language and same sequence? There is also a need for interface standards to maintain consistency. Without a UX strategy, then, your methods will not be ideal. You may be designing “usable wrong things.”

Figure 10-4: Planning the role of various channels for a bank

Once a UX strategy is in place, the key to successful implementation is maintaining a continuous thread through the rest of the design process. That doesn’t mean simply having a series of user experience design activities at every stage; it’s essential to uphold the innovation and logical progression defined by the UX strategy as you move toward increasingly specific and concrete designs. The structural design determines the detailed design. In turn, the periodic validation references the human performance and preference objectives defined in previous stages.

Governance means that there are documented methods, and it means that those methods provide a solid progression from executive intent to UX strategy, through the entire design process—a consistent logic and intent from which the organization should never stray. Be aware that there will be a tendency to marginalize these methods instead of making them central to the process. Governance must ensure that the organization retains this new user-centered design methodology.

Closing the Loop on Standards

At HFI, we have completed many hundreds of customized user interface standards. Today, I never worry about successfully creating a usable, effective standard. Our generic templates simply require adaptation to an organization’s conventions and domain, and offer close to 100% reliability. Creating standards from scratch can be taxing. It can be an extremely hazardous chore for an organization to create a massive set of design patterns. Eventually, so many of these components are created that the designers can’t really find anything. Conversely, organizations may try creating one template that will do everything (good luck with that). Outside of these odd cases, the main worry is about implementation.

I’ve asked many hundreds of developers how they feel about designing under a solid and strict set of design standards. They understand the value of those standards in providing consistency and speed of design. They understand that coding is faster, as is maintenance. Even so, it is the rare designer who really likes standards. Standards make people feel restricted and less creative. I have demonstrations that make it clear that this relationship does not hold. Nevertheless, an emotional resistance is still there—and you can count on meeting it as you try to apply your UX methodology across the organization.

To manage resistance to standards, you need to start with training. The design staff, including the user experience design staff, need about half a day of training on the standard. This training must share the templates, of course, and explain how to design using templates. Almost more important is training on why standards are really important. HFI uses all sorts of methods to drive this point home (see Figure 10-5), and to make clear that standards enable more creativity, as designers don’t have to spend time reinventing the wheel. Yet despite how important and helpful this training is, it is rarely enough on its own.

Figure 10-5: The “Mouse Maze” is the most downloaded offering on the HFI site. It is a simple maze with three levels. In the first level, all the keys work normally; this maze is easy to get through. In the second level, the same maze is much harder, because the keys are switched around (so the left arrow goes up, for example). This represents poor design. In the last level, the keys are switched around, but also work differently in different parts of the maze. This third level is designed to be awful, and it shows what happens when there is inconsistency, that is, when standards are not observed. We use this demonstration in our standards training classes.

Governance needs to ensure that standards are being followed. Just how that is done must be tuned to the organizational culture. In some organizations, it’s a straightforward process to enforce standards and to require designs to comply with them. In these types of organizations, a review cycle can be put in place in which quality control staff ensure compliance and provide feedback. In other organizations, strict enforcement is impossible, because it goes against the culture. In such cases, the most that can be done is to provide feedback when someone is not following the standards. At that point, compliance with the interface standard is the responsibility of the author.

One other cross-check we have started to use at HFI is a counter to check whether the online standards are being accessed. It is easy to fake access, of course. Even so, if you don’t have anyone looking at your standards, it’s an indicator to start worrying.
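One way to picture such a counter is sketched below. It assumes a simple access log of (date, section) pairs, which is an invented format and not how HFI's online standards record visits. The `stale_sections` helper and the section names are likewise hypothetical.

```python
from collections import Counter
from datetime import date, timedelta


def stale_sections(log, sections, today, window_days=30):
    """Return the sections with no recorded access in the last `window_days`.

    `log` is an iterable of (day, section) pairs; `sections` lists every
    section of the online standard that should be getting traffic.
    """
    cutoff = today - timedelta(days=window_days)
    recent = Counter(section for day, section in log if day >= cutoff)
    return [s for s in sections if recent[s] == 0]


# Hypothetical data for illustration.
sections = ["forms", "navigation", "error messages"]
access_log = [
    (date(2014, 6, 25), "forms"),
    (date(2014, 6, 2), "forms"),
    (date(2014, 3, 1), "navigation"),  # too old to count as recent
]

stale = stale_sections(access_log, sections, today=date(2014, 6, 30))
print(stale)
```

Because access can be faked, a nonzero count proves little. The useful signal is the opposite case: sections that nobody has opened at all.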

Checking If the Practice Is Alive

Unfortunately, a user experience design practice is fragile. Once you invest in methods and standards and tools, they merely need to be kept up-to-date. Other parts of the organization, however, can come apart quickly—particularly your staff. There is a strong market for experienced user experience design staff, so staff who have lost faith in your operation are likely to leave. Even if they are enthusiastic about your practice, they may be pulled away with various incentives (e.g., more money, a more important job title). The cost of developing replacement staff is substantial, and once you slip under a critical mass of technical specialists (generally about seven highly competent practitioners), it becomes difficult to bring onboard and integrate new people. Even with a mature, well-documented operation, your new staff need guidance from senior established in-house practitioners on where to look for materials and how to apply the UX infrastructure and facilities.

Yet even with infrastructure and staff in place, a practice can disintegrate. It is not uncommon for user experience design organizations that were mature and had sophisticated approaches to design to find themselves relegated to ad hoc tactical work because they have new, misaligned management. The stream of investment in training and evolving the UX practice can quickly shut off, at which point the user experience design organization begins to degrade.

How can executive sponsors spot these problems before they send the practice into an unstoppable downward spiral? Early detection and clear criteria are key elements to sustainability. Detection, however, can be difficult. Managers whose lack of understanding is destroying your UX investment will tout their improved efficiency. Even the staff who are voting with their feet and leaving the organization can seem to be part of normal attrition. For these reasons, it can be impossible for an executive champion to really know if the practice is strong. While you would think that an involved executive champion would quickly notice the downturn, a failure to become deeply involved in the details of the team, combined with a layer or two of management, can obscure a serious decline. As a consultant who specializes in institutionalization of user experience design practices, I have often had the job of walking into an executive’s office and providing that warning.

You might not have a consultant who will be willing to put his or her relationship with the company at risk to protect the practice, however. Therefore we will outline three approaches that let an executive provide governance for team sustainability: measuring progress, tools support, and certification.

Measuring Progress

Metrics provide a classic method of governance. If you want to know if your team is alive and functional, you can get an indication by looking at customer experience metrics such as your Net Promoter Score, conversion, and customer retention. The only problem is that these indicators lag behind the actual UX design process. The work that the team does on designs takes time. Once those designs are envisioned, created, tested, refined, and documented, they still need to be implemented. Sure, some tactical changes may bump up your scores in the short run. Nevertheless, even these can mask a failure to create a solid strategy, to innovate, or to have holistic structural designs that fit into an ecosystem solution. It is not unusual to see good numbers for a year while your middle managers are effectively decimating your practice.

Metrics are a good way to make managers feel accountable and under pressure to attend to user experience design issues. Conversely, they are a very poor way to get an early warning of a disintegrating practice. The lag time is too long.
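For readers who have not computed one, the Net Promoter Score is conventionally the percentage of promoters (ratings of 9 or 10 on a 0 to 10 scale) minus the percentage of detractors (ratings of 0 through 6). The sketch below applies that standard definition to invented survey samples; the quarterly data are hypothetical and exist only to show the calculation.

```python
def net_promoter_score(ratings):
    """Standard NPS: percent promoters (9-10) minus percent detractors (0-6)."""
    if not ratings:
        raise ValueError("no ratings supplied")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical survey responses, one list per quarter.
quarters = {
    "Q1": [10, 9, 9, 8, 7, 6, 10, 9, 3, 8],
    "Q2": [9, 10, 8, 9, 7, 5, 10, 9, 8, 9],
}

for label, ratings in quarters.items():
    print(label, net_promoter_score(ratings))
```

The score reflects designs that shipped some time ago, so a healthy trend in these numbers says little about the current state of the team that will produce the next round of designs.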

Tools Support for Governance

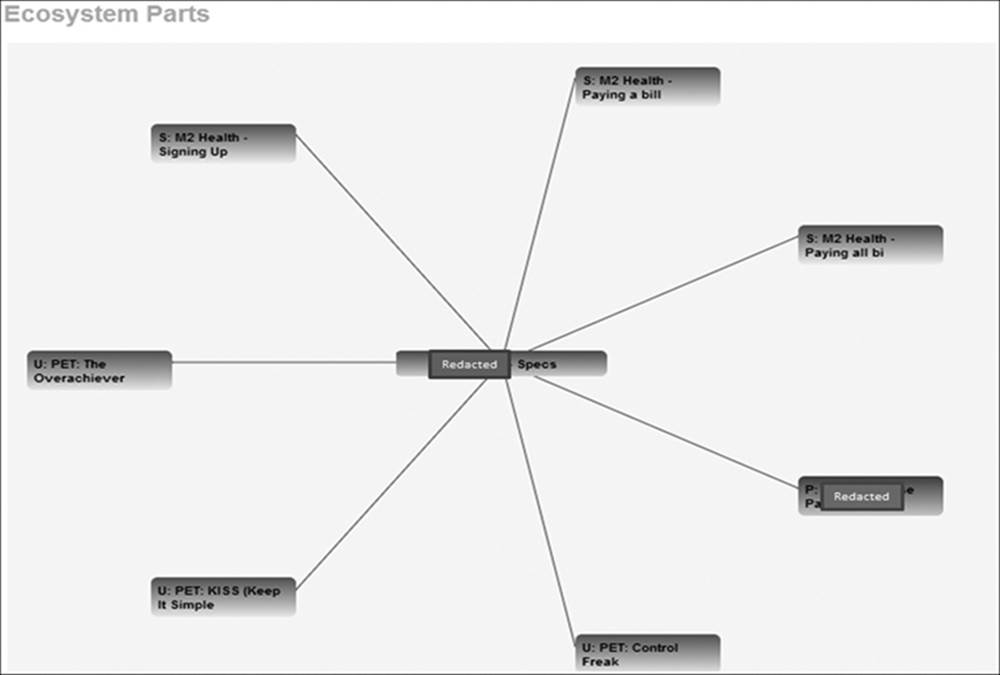

If the user experience design team is operating in an enterprise environment (like HFI’s UX Enterprise), this environment is likely to provide a substantial increase in transparency. An even moderately trained executive is then able to assess the health of a practice in short order. The information used in design is instantly accessible, rather than hidden in voluminous documents and decks. Figure 10-6 depicts three different charts drawn instantly by UX Enterprise. Each chart shows the objects linked to a given design (UX specification). The number of links gives a good idea of the amount of supporting content guiding a design. Is the design evidence-based or a wild guess? It does not take an in-depth review of the documentation to tell.

Figure 10-6: These three charts show the users, scenarios, environments, and other elements that are the foundation for a given design. You can quickly see if a design is well supported, or just thrown together in a wild guess.

HFI has been working on specific, governance-oriented reports and displays that let an executive gauge the quality of a user experience design practice in about 10 minutes. Certainly, a metrics system can be gamed with fake events and data. But if your design team is forced to fabricate the implementation of your UX program, it is probably running into other, possibly extreme problems that you are likely to notice without a report, and that will require major surgery to correct. It is far better to find the problem early. To do so, we can check how often staff refer to methods and standards. As mentioned earlier, if your team has gotten out of the habit of referring to these core materials, they have reverted to an ad hoc approach to UX design far short of true institutionalization. We can also track how much data we have on user profiles, environments, and other objects. If objects are rich, based on observation and user research, we can be confident that the team is working in a serious way. If everything is based on stakeholder opinions, then we can be sure that the team is failing, because even the best stakeholders see things differently from customers (even if they were customers once).
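The idea of judging a design by the objects linked to it can be sketched in a few lines. The data model below is an assumption made purely for illustration: `UXSpecification`, `LinkedObject`, the source labels, and `evidence_summary` are hypothetical names and do not describe how UX Enterprise actually stores or reports its data.

```python
from dataclasses import dataclass, field

# Hypothetical labels for where a linked object's content came from.
EVIDENCE_SOURCES = {"observation", "user research"}


@dataclass
class LinkedObject:
    kind: str    # e.g. "user profile", "scenario", "environment"
    source: str  # "observation", "user research", or "stakeholder opinion"


@dataclass
class UXSpecification:
    name: str
    links: list = field(default_factory=list)  # LinkedObject instances


def evidence_summary(spec: UXSpecification) -> dict:
    """Count a design's linked objects and the share grounded in evidence."""
    total = len(spec.links)
    evidence = sum(1 for obj in spec.links if obj.source in EVIDENCE_SOURCES)
    share = evidence / total if total else 0.0
    return {"links": total, "evidence_based": evidence, "evidence_share": share}


# Illustrative designs: one well supported, one with nothing behind it.
payments = UXSpecification("Payments screen", [
    LinkedObject("user profile", "user research"),
    LinkedObject("scenario", "observation"),
    LinkedObject("environment", "observation"),
    LinkedObject("scenario", "stakeholder opinion"),
])
promo = UXSpecification("Promotions banner")

for spec in (payments, promo):
    print(spec.name, evidence_summary(spec))
```

A design with few links, or with links that trace back only to stakeholder opinion, is a candidate for a closer look. The counts are a quick screen, not a substitute for reviewing the work.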

Using Certification for Governance

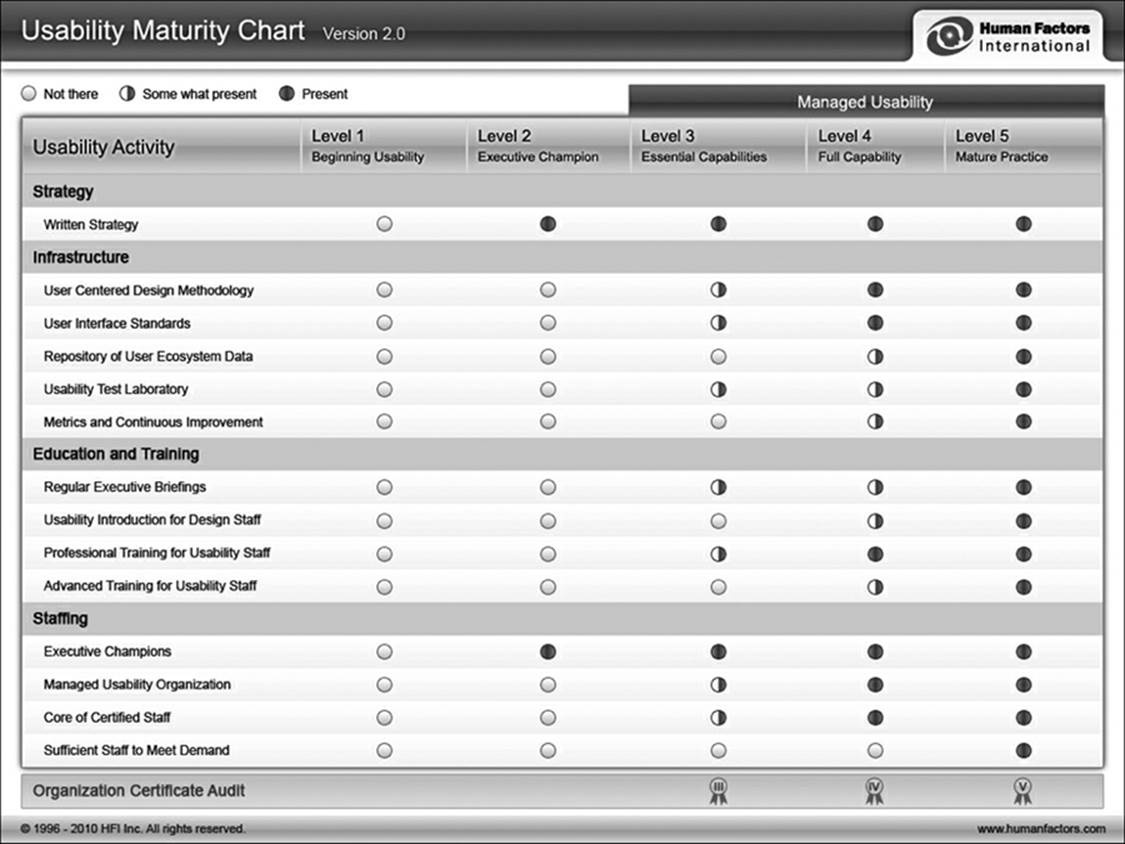

It is hard for executives to get time to sit through briefings, monitor metrics, and explore data. This fact of life makes certification a great way to ensure that your user experience design practice is still operating effectively. When we created the Certified Practice in Usability at HFI, we did not really understand its potential role in governance; we simply knew that the need existed for solid, objective criteria for the maturity of a practice (Figure 10-7). We offered to police compliance with the criteria as well: a failure to renew your certification or a drop in certification level should sound a clear alarm that your practice is unraveling.

Figure 10-7: The HFI Usability Maturity Model that is the foundation of the Certified Practice in Usability

Summary

Today, governance is the challenge most likely to emerge in the institutionalization of user experience design. Executives understand that customer experience is a key strategic objective, but middle management may not share that perspective—and may be quite resistant to a transformation toward user-centered design. Therefore, a combination of measures must be taken to ensure that the practice is sustainable: that the customer-centered focus is not sidelined or the practice itself dissipated. This must include education (ideally targeted at specific areas of resistance and misinformation in the organization). It also must include practical methods of surveillance to alert the executive champion before reconstruction of the practice becomes onerous.
