Threat Modeling: Designing for Security (2014)

Appendix E. Case Studies

This appendix lays out four example threat models. The first three are presented as fully worked-through examples; the fourth is a classroom exercise presented without answers in order to encourage you to delve in. Each example is a threat model of a hypothetical system, to help you identify the threats without getting bogged down in a debate over what the real threat model or requirements are for the particular product.

The models in this appendix are as follows:

§ The Acme database

§ Acme's operational network

§ Sending login codes over a phone network

§ The iNTegrity classroom exercise

Each model is structured differently because there's more than one way to do it. For example, the Acme database is modeled element by element, which is good if your primary audience is component owners who want to focus their reading on their components; while the Acme network is organized by threat, to enable systems administrators to manage those threats across the business. The login codes model shows how to focus on a particular requirement and consider the threats against it.

The Acme Database

The Acme database is a software product designed to be run on-premises by organizations of all sizes. The currently shipping version is 3.1, and this is the team's first threat model. They have chosen to model what they have and then determine how each new feature interacts with this model as part of the same process in which they do performance and reliability analysis. This modeling is inspired by a series of recent design flaws that affected company revenue. The output of this modeling would be a clear list of bugs and action items. Because the important take-away from this appendix is not the bugs or action items, but the approach that finds them in your software or system, the bugs are not compiled into a separate list here.

Security Requirements

Acme has formalized security requirements for the first time. Those requirements are as follows:

§ The product is no less secure than the typical competitor (Acme's software is currently very insecure, and as such, stronger goals are deferred to a later release).

§ The product can be certified for sales to the U.S. government.

§ The product will ship with a security operations manual. A security configuration analysis tool is planned but will ship after the next revision.

§ Non-requirement: protect against the DBA.

§ As the product will hold arbitrary data, the team will not be actively looking for privacy issues but nor will they be willfully blind.

§ Additional requirements will be applied to specific components.

Software Model

After a series of design meetings over the course of a week, run by Paul (project management lead) and attended by Debbie (architecture), Mike (documentation), and Tina (test), the team agrees on the model shown in Figure E.1. These meetings took longer than expected, because details emerged whose relevance to the threat model was not initially clear, leading to a discussion of questions such as “Does this add a trust boundary,” and “Does this accept connections across a trust boundary?”

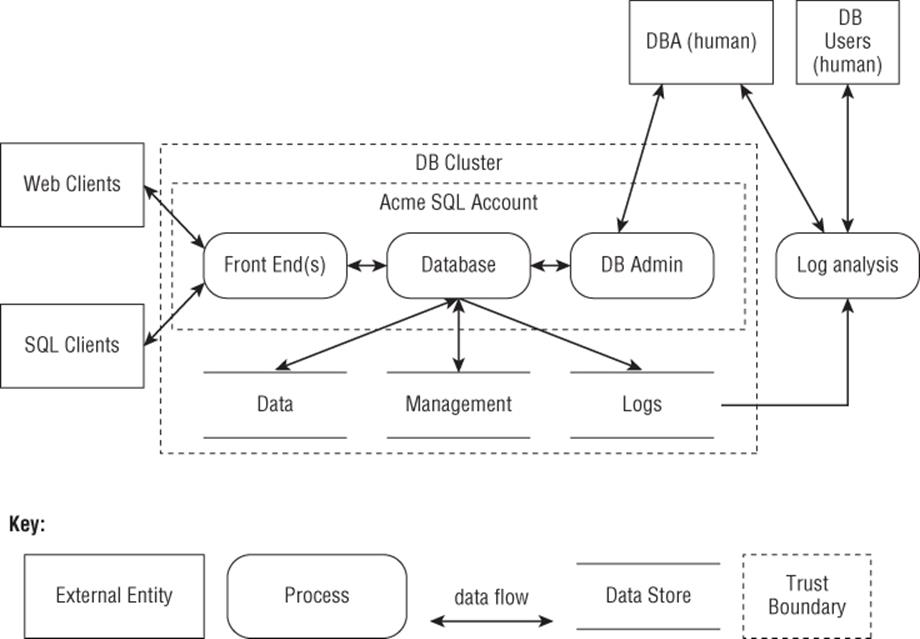

Figure E.1 The Acme database

Threats and Mitigations

The threats identified to the system are organized by module, to facilitate module owner review. They were identified three ways:

§ Walking through the threat trees in Appendix B, “Threat Trees”

§ Walking through the requirements listed in Chapter 12, “Requirements Cookbook”

§ Applying STRIDE-per-element to the diagram shown in Figure E.1

Acme would rank the threats with a bug bar, although because neither the bar nor the result of such ranking is critical to this example, they are not shown. Some threats are listed by STRIDE, others are addressed in less structured text where a single mitigation addresses several threats. The threats are shown in italic to make them easier to skim.

Finding these threats took roughly two weeks, with a one-hour threat identification meeting early each day during which the team examined a component and its data flows. The examination consisted of walking through the threat trees in Appendix B and the requirements checklist in Chapter 12, and then considering whether other aspects of STRIDE might apply to that one component (and its data flows). Part of each meeting was reserved for follow-up from previous meetings. Part of the rest of the day was used for follow-up to check assumptions, file bugs as appropriate, and ensure that component owners were aware of what was found. Those two weeks could have been compressed into a “do little but threat model” week, but the team chose a longer time period to avoid blocking other work that depended on them, and to give the new skills and tasks time to “percolate” (that is, time for reflection and consideration of what the team was learning).
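The STRIDE-per-element pass described above can be sketched as a lookup from element type to the threat categories usually considered for that type. The following is a minimal illustration, not Acme's tooling. The element list is a hypothetical reading of Figure E.1, and the applicability table follows the commonly published STRIDE-per-element chart, in which repudiation is considered for data stores only when they hold logs.

```python
# Sketch of STRIDE-per-element enumeration. The element list below is a
# hypothetical reading of Figure E.1, not an authoritative inventory.

STRIDE = {
    "S": "Spoofing",
    "T": "Tampering",
    "R": "Repudiation",
    "I": "Information Disclosure",
    "D": "Denial of Service",
    "E": "Elevation of Privilege",
}

# Which threat categories are usually considered for each element kind.
APPLICABLE = {
    "external entity": "SR",
    "process": "STRIDE",
    "data flow": "TID",
    "data store": "TID",
    "log data store": "TRID",  # logs add repudiation concerns
}

# (element name, element kind): illustrative assumptions only
ELEMENTS = [
    ("Front ends", "process"),
    ("Core database", "process"),
    ("Data", "data store"),
    ("Logs", "log data store"),
    ("Connections to front ends", "data flow"),
]


def enumerate_threats(elements):
    """Yield (element, threat category) pairs to walk through in a review."""
    for name, kind in elements:
        for letter in APPLICABLE[kind]:
            yield name, STRIDE[letter]


if __name__ == "__main__":
    for element, threat in enumerate_threats(ELEMENTS):
        print(f"{element}: {threat}")
```

Each pair is a prompt for discussion rather than a finding; a meeting would take one element's pairs and ask whether a concrete threat exists for each.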

Front Ends

The front ends are the interface between the database and various clients, ranging from websites to complex programs that make SQL queries. The front ends are intended to handle authentication, load balancing, and related functions so that the core database can be as fast as possible.

Additional requirements for the front end are taken from the “Requirements Cookbook,” Chapter 12:

§ Only authenticated users will have create/read/update/delete permissions according to policies set by database designers and administrators. (This combines authorization and confidentiality requirements.)

§ Single-factor authentication will be sufficient for front-end users.

§ Accounts will be created by processes designed by customers deploying the Acme DB software.

§ Data will be subject to modification only by enumerated authorized users, and actions will be logged according to customer configuration. (Such configuration will need to be added to the security operations guide.)

The threats identified are as follows:

§ Spoofing: The authentication from both web and SQL clients is weaker than the team would like. However, the requirement to support web browsers and third-party SQL clients imposes limits on what can be required. Product management has been asked to determine what current customers have in place, and a discussion will be added to the product security operations guide.

§ Tampering: A number of modules included for authentication were poorly vetted for security properties. It seems at least one has an auto-update feature whose security properties and implications require analysis.

§ Repudiation: Logging at the front end is nearly non-existent, but what logs exist are stored on the front end, and will need to be sent to the back end.

§ Information Disclosure: There are both debugging interfaces and error messages, which reveal information about the database connection parameters.

§ Denial of Service: Reliability engineering has already removed most of the application-specific static limits, but how to configure a few will be added to the operations guide.

§ Elevation of Privilege: A separate security engineering pass will perform a variety of testing on input validation. Additionally, the front-end team will document the limited validation it performs on data, and review that against assumptions that the core database makes about protection.

Connections to Front Ends

The web front end always runs over SSL, addressing tampering and information disclosure issues. The SQL client and client libraries will be upgraded so that connecting without SSL requires setting special options (one on the server to allow such connections, one on the client to allow fallback). The Acme client and libraries will always attempt to connect with SSL first, unless a second option, “DontEvenBotherWithSecurity,” is set.
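The connection policy just described can be sketched as a small decision function. This is only an illustration under stated assumptions: the option names other than “DontEvenBotherWithSecurity” are hypothetical, and the sketch assumes that setting “DontEvenBotherWithSecurity” also implies the client is willing to use an unencrypted connection, which the text does not spell out.

```python
from dataclasses import dataclass


@dataclass
class ClientOptions:
    # Hypothetical name for the client-side "allow fallback" option.
    allow_plaintext_fallback: bool = False
    # Mirrors the "DontEvenBotherWithSecurity" option named in the text.
    dont_even_bother_with_security: bool = False


@dataclass
class ServerOptions:
    # Hypothetical name for the server-side option permitting non-SSL clients.
    allow_plaintext_connections: bool = False


def choose_transport(client, server, ssl_handshake_ok):
    """Return "ssl", "plaintext", or None if no permitted transport works."""
    # Plaintext needs opt-in on BOTH sides (assumption: the "don't bother"
    # option counts as client opt-in).
    plaintext_ok = server.allow_plaintext_connections and (
        client.allow_plaintext_fallback
        or client.dont_even_bother_with_security
    )
    # SSL is always attempted first unless the client explicitly skips it.
    if not client.dont_even_bother_with_security and ssl_handshake_ok:
        return "ssl"
    if plaintext_ok:
        return "plaintext"
    return None
```

With default options on both sides, a failed SSL handshake yields no connection at all. Any automatic fallback lets an attacker who can disrupt the handshake push a connection to plaintext, which is presumably why both options must be set deliberately.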

Denial-of-service threats are again generally well addressed by the reliability and performance engineering that has already been done. The security team sends a congratulatory box of donuts to the reliability team.

Core Database

Requirements:

§ All database permissions rules will be centralized into a single authorization engine to enforce confidentiality, integrity, and authorization policies.

Threats:

§ Spoofing: The core database is designed to run on a dedicated system, and as such is unlikely to come under spoofing attacks. The one exception, which will be analyzed further, is that the front end has the ability to impersonate and perform actions as any user account.

§ Tampering: Input validation raises questions of SQL injection, and those lead to questions about what assumptions are being made about the front ends. An intersystem review is planned according to the approach described in Chapter 18, “Experimental Approaches.”

§ Repudiation: Reviews found that the database logs nearly everything originating from the front end, except that several key session establishment APIs fail to log how the session was authenticated.

§ Information Disclosure: SQL injection attacks against the database can lead to information disclosure in all sorts of ways. The team plans to investigate ways to architecturally restrict SQL injection attacks.

§ Denial of Service: Various complex cross-table requests may have a performance impact. A tester is assigned to investigate clever ways to perform small, expensive queries.

§ Elevation of Privilege: A review finds two routines that by design allow any caller to run arbitrary code on the system. The team plans to add ACLs to those routines and possibly turn them off by default.

Data (Main Data Store)

Preventing tampering, information disclosure, and denial of service all rely on the presence of a limited set of connections, with those connections controlled by operating system permissions. If the data store is remote and runs on network attached storage, the storage controller can bypass all the controls on the data. Additionally, the network connections would be vulnerable. The team will document this, and perhaps add additional cryptographic features in a future release that address such threats with untrusted data stores. That decision will hinge on how important the business requirement is, the effort involved in implementation, and possible performance impact.

Management (Data Store)

The same problems that could affect the data store are magnified if the management data store is on remote storage. The team plans to move management data to the same device as the database, and document the security effect of moving it elsewhere.

Connections to the Core Database

The team has been assuming that these connections are within a security boundary, with the previously unstated assumption that the front ends, database, and DB admin portals would be on a trusted network. Given the work needed to address all the threats that this assumption allowed, and to ensure performance, the assumption is updated: those components will sit on an isolated network protected by a packet filter. The updated assumption is added to the operations guide. A bug to encrypt and authenticate between components is filed for a future version.

DB Admin Module

The requirements are as follows:

§ Authentication Strength: Required authentication strength is debated, and a bug is opened to ensure that the agreed upon requirement is crisp.

§ Account creation: Creating new DBA accounts will require two administrators, and all administrators will be notified. (This will be a new feature.)

§ Sensitive Data: There can be requirements to protect information from DBAs—for example, a column of social security numbers. This will be supported in two ways: first, with the option to create a deny ACL for an object, and second, with the option to encrypt data with a key that's passed in. (There's a third option, which is for the caller to encrypt the data, but that's always been implicitly present.) All three are to be documented, along with a discussion of the importance of key management, and the known plaintext attacks against simplistic encryption of a small set of a billion SSNs.

§ Spoofing: The DBA can connect in two ways: via a web portal and via SSH. The web portal uses SSL, which incorporates by reference all several hundred SSL CAs in a browser, and as such the portal may be spoofable. That may or may not be acceptable risk, so it is now documented in the operations guide. The DBA currently connects with single-factor authentication, and it may be that support for stronger authentication makes sense.

§ Tampering: The DBA can tamper, and in some sense that's their job.

§ Repudiation: The admin can change logs. This is documented as a risk, and while logged, the risk is recursive.

§ Information Disclosure: Previously, the DBA login page provided a great deal of “dashboard” and overview information pre-login as a convenience feature. That is now configurable, and the default state is under discussion. It may also be necessary to protect against DBAs, a requirement set that the team (and author) missed when applying STRIDE-per-element, and discovered when checking requirements.

§ Denial of Service: The DBA module can turn off the database, re-allocate storage space, and prioritize or de-prioritize jobs. Ancillary denial-of-service attacks may also be possible, which are not logged.

§ Elevation of Privilege: There is a single type of DBA. It may be sensible to add several layers, and clarifying customer requirements should precede such work.
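The Sensitive Data requirement above warns about attacks on simplistic encryption of SSNs. Because there are at most a billion nine-digit values, any deterministic, unkeyed transform of an SSN can be reversed by trying every candidate. The sketch below illustrates one simple form of that problem. It uses SHA-256 as a stand-in for “simplistic” protection (an assumption; the text names no scheme), and to keep the run short it searches a hypothetical range of 100,000 candidates rather than the full space.

```python
import hashlib


def protect(ssn: str) -> str:
    """Stand-in for simplistic protection: deterministic and unkeyed."""
    return hashlib.sha256(ssn.encode("ascii")).hexdigest()


def recover(target: str, start: int, stop: int):
    """Enumerate candidate SSNs in [start, stop) until one matches."""
    for n in range(start, stop):
        candidate = f"{n:09d}"  # zero-padded nine-digit string
        if protect(candidate) == target:
            return candidate
    return None


if __name__ == "__main__":
    stored = protect("123456789")  # made-up value for illustration
    # The search range is narrowed only so the demonstration runs quickly.
    print(recover(stored, 123_400_000, 123_500_000))
```

A secret key (with the key management the requirement calls for) is what removes the attacker's ability to compute `protect` for every candidate.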

Logs (Data Store)

Unlike the main data store, logs are, by design, read by log analysis tools that are outside the trust boundary.

§ Tampering: The logs are presented as read-only to the analysis tools.

§ Repudiation: The logs are key to analyzing an attempt at repudiation, but it turns out that not all logs are delivered to the central log store. Some are held in other locations, which is tracked in a set of bugs.

§ Information Disclosure: Because the log analysis code is outside the trust boundary, the logs must not contain information that should not be disclosed, and a review of logging will be required, especially focused on personal information.

§ Denial of Service: The log analysis code has the capability to make numerous requests. If logs are stored on the same system as the main database, then managing log requests could limit database performance by consuming resources needed for controller bandwidth, disk operations, and other tasks. This issue is added to the operations guide, with a suggestion to send logs to a separate system.

Log Analysis

The threats are as follows:

§ Spoofing: All the typical spoofing threats are present (roughly everything covered in Appendix B's figure and table B-1 applies). As the number of database users is typically small, the team decides to add persistent tracking of login information to aid in authentication decisions.

§ Tampering: The log analysis module has several plugins to connect to popular account management tools, each of which presents a tampering threat. As the logs are already read-only with respect to the log analysis tool, tampering threats are less important.

§ Repudiation: The log analysis tools may help an attacker figure out how to engage in a repudiation that is hard to dispute.

§ Information Disclosure: The log analysis tools, by design, expose a great deal of information, and a bug was filed based on the Information Disclosure item under “Logs (Data Store)” to control that information. (In a real threat model, you'd just refer to a bug number.)

§ Denial of Service: Complex queries from log analysis can absorb a lot of processing time and I/O bandwidth.

§ Elevation of Privilege: There are probably a number of elevation paths based on calls from the log analysis module to other parts of the system, designed in before trust boundaries were made explicit.

In summary, it seems that Acme's development team has learned a lot, and has a good deal of work in front of them. Because this work was kicked off after a series of embarrassing security incidents, management is cautiously optimistic. They have a set of issues to work on, and if more incidents happen, they can use those incidents to see if their threat modeling work found the threats, and prioritize that fix. They believe such surprises are far less likely than they were before they started threat modeling.

Acme's Operational Network

The Acme Corporation is a maker of fine database software. It used to produce jet-propelled pogo sticks, tornado seeds, and other products before a leveraged buyout drove it to a more traditional corporate structure, including a more traditional operational network. After its project to threat model its software goes well, producing useful and actionable bugs, it decides to take a crack at modeling its internal network.

Security Requirements

These requirements were built on those from the Requirements Cookbook (see Chapter 12):

1. Operational vulnerability management will track all products deployed on the attack surface.

a. Henceforth, all newly deployed software will be checked to ensure it has a vulnerability announcement policy.

b. Paul, a project coordinator, has been assigned to track down vulnerability announcement policies per product in use, and to subscribe to all of them.

2. Operations will ensure that its firewalls align with the trust boundaries shown in diagrams.

3. The sales portion of the network will need to be PCI compliant.

4. Complete business requirements are somewhat hard to pin down, and of the form “Let's not have bad stuff happen.” Those will be made more precise as questions of how to mitigate are analyzed, using the feedback process between threats, mitigations, and requirements shown in Figure 12-1 (in Chapter 12).

Acme has decided to focus on a STRIDE threat-oriented approach, as it worked reasonably well for their software threat modeling. They are aware that a balance between prevent, detect, and respond is probably also important, but wanted to build on their success with software modeling, and so will consider those requirements at a later date.

Operational Network

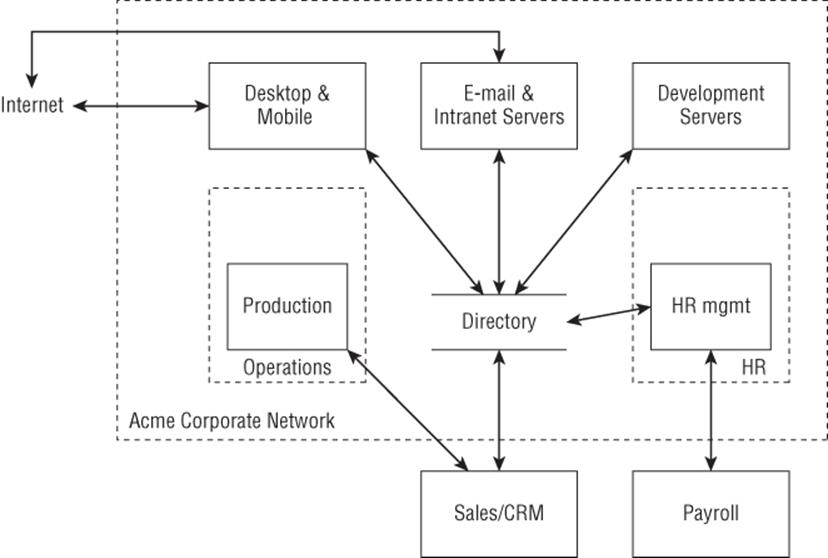

Acme's operational network was shown earlier in Chapter 2, “Strategies for Threat Modeling” and is reproduced here as Figure E.2. The remainder of this section is written in the form of a summary, as if it were from the team. The main thing missing is bug numbers, because adding fake bug numbers won't make the examples more readable.

Figure E.2 Acme's operational business network

The team has decided that this diagram will suffice to get started even though there are several obvious bugs, including the fact that it does not show payment processing. That will be considered later, as making sure no one steals the plans for the rocket-powered pogo sticks or dehydrated boulders is considered a top priority.

The systems that make up the operational network are as follows:

§ Desktop and mobile: These are the end-user systems that everyone in the company uses.

§ E-mail and intranet: These are an Exchange server and a set of internal wikis and blog servers.

§ Development servers: These include the local source-control repository, along with bug tracking, build, and test servers.

§ Production: This is where products are made using a just-in-time approach. It includes an operations network that is full of machine tools and other equipment that is finicky and hard to keep operational, never mind secure.

§ Directory: This is an Active Directory server, which is used for account management across most of the systems at Acme.

§ HR Management: This is a personnel database, time-card system for hourly employees, and related services.

§ Website/Sales/CRM: This is the website through which orders are placed. The website runs at an IaaS cloud provider. It has a direct connection to the production shop. The website is locally built and managed with a variety of dependencies.

§ Payroll: This is an outsourced payroll company.

Threats to the Network

The team made an initial decision to look at operations threat by threat, rather than system by system, as looking at the systems makes it a little harder to rank threats (and would result in a threat model that looks like the prior example). In the interests of presenting a somewhat compact example, additional requirements are only called out occasionally.

Spoofing

The team decided to look at spoofing threats by the victim—that is, what happens if someone spoofs the connection to each system or set of systems within the diagram.

The requirements for Acme's network are as follows:

§ No anonymous access to corporate systems.

§ There may be a requirement for whistleblower anonymity. The question is sent to legal.

§ Single-factor authentication is sufficient for all systems.

§ All systems should default to authorization against the directory system.

§ Account creation is performed by a single administrator.

The requirements for the website are as follows:

§ Facebook logins will be accepted.

§ Anyone with a validated e-mail account can create an account.

Spoofing threats to specific areas of the operational network include:

§ Desktop and mobile: Several developers have installed their own remote access software, believing that “no one would ever find it” is sufficient security. Rather than have a debate on the subject, the team realizes there's no good alternative, and adds this as justification for the VPN project to move faster.

§ E-mail and intranet services: These services are exposed to the Internet. Password protection on the intranet servers is spotty, as some of them were deployed with the assumption that they're “behind the firewall,” while others were deployed with a shared username/password, and yet others really do need exposure to the Internet for mobile workers. That last need will be addressed with a VPN, which is not yet deployed.

§ Development servers: These servers being spoofed seems to turn largely into an integrity (tampering) threat against either source or development documents.

§ Production: Most obviously, someone could drive the creation of extra products, have those products either stack up in the warehouse or, worse, be delivered to fake customers. More subtly, a rascally attacker might be able to deliver fake product plans, resulting in product malfunctions, unhappy customers, and bad press for the company and its products.

§ Directory: The biggest issue would be spoofing of HR management. If someone can pretend to be HR, they end up with a lot of power.

§ HR Management: The digital data flows are almost exclusively outbound. Inbound processes still involve a lot of face-to-face discussion and paper forms.

§ Sales/CRM: These systems have a direct connection to production, where orders are sent. It turns out that the server in production will take entries authenticated only by IP address, and anyone with that address can enter orders.

§ Payroll: Spoofing threats to the payroll system loom large in everyone's mind, especially because HR e-mails payroll data every week. Unfortunately, the payroll company has no options to use anything stronger than a username and password. After a raucous debate, the team decides to file a bug (also noting the information disclosure and tampering threats associated with e-mail), and then moves to other threats.

§ Other spoofing: A single directory server acts as a single point of failure for spoofing security. While this worries some team members, the “solution” of adding a second directory system doesn't help because even after a lot of work to keep them in sync, most spoofing attacks will have two potential targets.
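The Sales/CRM item above notes that the production server accepts orders authenticated only by source IP address. One possible direction, offered as a sketch rather than as Acme's chosen fix, is for the sales system to attach a message authentication code computed with a shared key, so that production can reject orders from a sender who merely holds the right address. The key handling, field names, and message format below are all hypothetical.

```python
import hashlib
import hmac
import json


def sign_order(key: bytes, order: dict) -> dict:
    """Serialize an order canonically and attach an HMAC-SHA256 tag."""
    body = json.dumps(order, sort_keys=True, separators=(",", ":"))
    tag = hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()
    return {"body": body, "tag": tag}


def verify_order(key: bytes, message: dict):
    """Return the order if the tag checks out, otherwise None."""
    body = message["body"].encode("utf-8")
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    # Compare as bytes, in constant time, to avoid leaking match position.
    if not hmac.compare_digest(expected.encode("ascii"),
                               message["tag"].encode("utf-8")):
        return None
    return json.loads(message["body"])


if __name__ == "__main__":
    shared_key = b"example-key-for-illustration-only"  # hypothetical
    msg = sign_order(shared_key, {"sku": "pogo-stick", "qty": 2})
    print(verify_order(shared_key, msg))
```

This sketch addresses spoofing and tampering of a single message only. It does not prevent replay of a previously valid order, and it does nothing for confidentiality, so it would complement rather than replace the encryption the team plans for the sales-to-production data flow.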

Tampering

Currently, a great deal of reliance is placed on firewalls to prevent tampering attacks. Few of the internal data flows are integrity protected. Many of the following threats can be implemented either against the endpoint or against network data flows:

§ Desktop and mobile: There is little control over what software runs on desktops across the company. Developers run high-privilege accounts on both their e-mail and development machines. There's no integrity checking or whitelisting software in use.

§ E-mail and intranet: The e-mail servers are reasonably well protected, while the intranet servers are a mixed bag. One wiki runs off a common account, known to most of the developers.

§ Development servers: The source control server is fairly locked down, with constrained access that seems to have been implemented to log all changes. The test servers are known to be a mess, with anyone able to make changes; and tracking what has changed when and where is manual.

§ Production: The department has a wide variety of equipment, some of which even talks to each other without humans involved. Tampering threats abound, from tampering with raw materials to tampering with computer-controlled devices in order to produce either subtly or very wrong output, to tampering with delivery instructions.

§ Directory: This is fairly locked down, with a limited number of administrator accounts, all of whom can tamper by design. However, anyone who breaks in or misbehaves here can likely obtain full credentials to do so elsewhere in the network.

§ HR management: These systems are not as locked down as anyone would like. An employee who made changes there could likely do so undetected by technology. The change would have to be noticed by a person. Changes, such as not paying a salary, would be caught by employees, while paying too much would (we hope) be caught by accounting. Changes to job titles, dates of hire (which affect pension, vacation, and other benefits) would likely go undetected.

§ Sales/CRM: There are a number of issues. Perhaps most important, someone who can alter data on that site can alter prices, either subtly or aggressively. Someone can also alter customer records, making a new customer appear long-standing or vice-versa, and changing how they are treated by customer service or anti-fraud.

§ Payroll: The tampering attacks here range from the obviously bad, such as adding employees or changing salaries, to the more subtle, such as changing tax withholding or deductions at the same time (“We're sorry, Mr. Smith, but we have not received insurance premiums from you. . .”).

Repudiation

The team decided to focus most repudiation attention on sales order repudiation, and has planned a review of logs on the sales server. There are certainly other repudiation issues they could examine, including repudiation of check-ins to the development servers, repudiations of HR changes, or repudiation of changes to production. However, for a first pass at threat modeling, other threats are given priority.

Information Disclosure

Acme is very protective of trade secrets regarding product creation, and worried about the contents of the customer support database, as a few customers seem to regularly encounter product reliability issues. Customer support believes that many of these customers are merely hasty, not taking time to read the instructions. Threats apply to:

§ Desktop and mobile systems: Unfortunately, these must have access to most data. Data encryption software may be an important addition here, to protect against information disclosure if the machines are stolen. This mitigation applies across most of the systems in the company, and is not repeated per section.

§ E-mail servers: E-mail contains a tremendous amount of confidential information. The team considers a pilot project for e-mail encryption.

§ Intranet servers: These servers are a different beast. It's challenging to add encryption for application data such that only certain readers have the keys (in contrast to full disk encryption). It might be possible to use a more well-considered set of permissions. Additionally, it's possible to add SSL to most of these servers.

§ Development servers: It is similarly challenging to use encryption for application data.

§ Production servers: These are locked down primarily for reliability reasons, which has nice side effects for security.

§ HR management: These include information on salaries, performance reviews, sufficient personal data to commit identity theft, as well as a host of information on prospective candidates.

§ Sales/CRM: These systems contain information about upcoming sales, coupon codes, customer names and addresses, and—probably accidentally—credit card numbers. The data flow from sales to production needs to have encryption added.

Denial of Service

Somewhat similar to repudiation, denial-of-service threats are treated as a lower priority than spoofing, tampering, or information disclosure. The team notes that production is dependent on a non-scalable set of machines and skilled machine operators, and so a leap in sales would be a denial of vacation.

Elevation of Privilege

Outsiders can attempt to elevate to insider privileges by attacking desktops (via e-mail, IM, and web browsing) or by attacking sales/CRM or payroll, each of which is exposed to the Internet. To address the desktop elevation attacks, the team looks to vulnerability management and sandboxing. The web applications that deliver sales and CRM are exposed to a variety of attacks, including SQL and command injection, cross-site scripting (XSS), cross-site request forgery (CSRF), and other web attacks. Testing for those attacks will be managed by the QA team, which will need additional security training. The team resolves to ask external vendors some questions about patching and secure development at the payroll company. Acme has decided to defer insider threats for now, as there's a lot to be done in the near term.

In summary, Acme has used STRIDE threat modeling and a model of their operational network to identify many threats. Again, they have moved from a vague sense of unease to a well justified set of concerns, which they can work through. From here, they'd need to decide on a prioritization scheme for those concerns, or consider additional security requirements, depending on their unique needs.

Phones and One-Time Token Authenticators

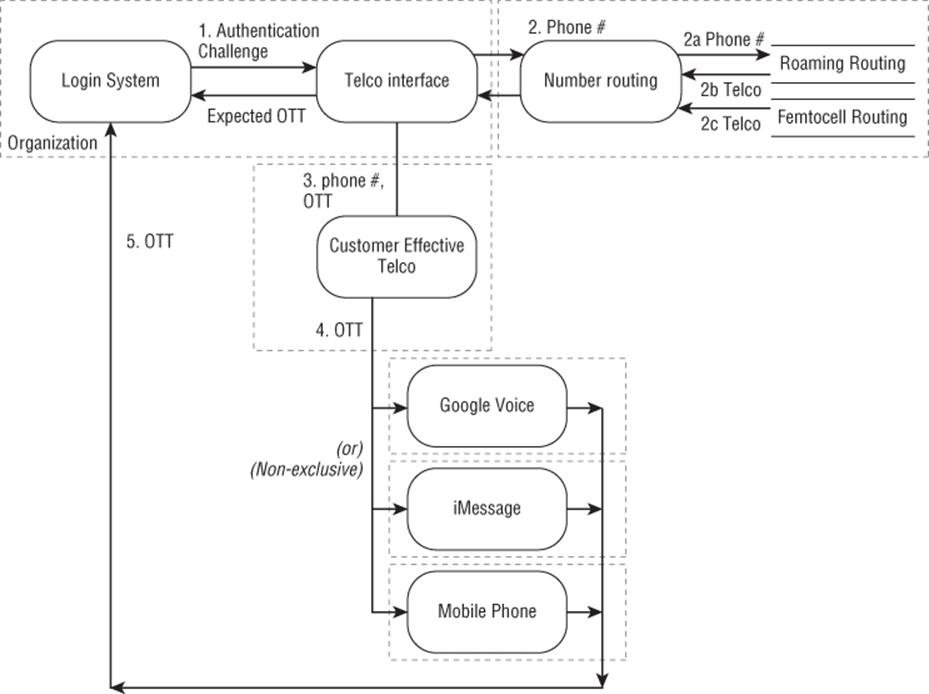

Chapter 9, “Trade-Offs When Addressing Threats,” describes a threat model (shown in Figure E.3) that illustrates how threat models can be used to drive the evolution of an architecture. This model is also a useful example of a focused threat model. It ignores a great deal of important mechanisms, and shows how the trust boundaries and requirements can quickly identify threats. It should not be taken as commentary on any particular commercial system, some of which may mitigate threats shown here. Also, many of these systems support text to speech that can read the code to a person using an old-fashioned telephone; the alternatives suggested in the following material do not have that capability.

Figure E.3 A one-time token authentication system

The Scenario

A wide variety of systems are designed to send auxiliary passwords—one-time tokens (OTT)—over the phone network to someone's phone. During an enrollment phase, the user is asked to provide a phone number, which is then associated with the account. The scenario shown in Figure E.3 starts when someone attempts to log in (to an account with which a phone is associated) at the “Login system.” A model of how that works is as follows:

1. The login attempt triggers a message to some telephone company (“telco”) interface, and that message is a phone number and a message to be sent to the phone number.

2. The telco interface does some form of lookup to find out how to route the message. That's modeled as a “number routing” process in a separate trust domain. There are a number of ways in which mobile phones associate with other phone carriers, including roaming and femtocells. Similarly, but not shown, with U.S. phone number portability, simple routing by area code and exchange no longer works, even for landlines. The number routing system returns a pointer to a “Customer effective telco.”

3. The telco interface then sends the phone number and OTT to the customer's effective telco.

4. The OTT is then sent to one or more systems that may be delivering the message. That might simply be the phone in question, but it could also be an interface such as Google Voice or Apple iMessage. (All products are listed for illustrative purposes only.)

5. The person enters the OTT at the login page, and it is compared to the expected value.
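Steps 1 and 5 can be sketched from the login system's point of view. This is a minimal illustration, not a description of any real product: the class name `LoginSession`, the six-digit token length, and the single-use rule are all assumptions made for the example. It shows only how a token might be generated, handed to the telco interface, and later compared to the expected value.

```python
import hmac
import secrets


def issue_ott(digits: int = 6) -> str:
    """Generate a random numeric token, zero-padded to `digits` characters."""
    return f"{secrets.randbelow(10 ** digits):0{digits}d}"


class LoginSession:
    """Hypothetical per-attempt state kept by the login system."""

    def __init__(self, phone_number: str) -> None:
        self.phone_number = phone_number
        self.expected = issue_ott()
        self.used = False

    def outbound_message(self) -> tuple[str, str]:
        # Step 1: what is handed to the telco interface.
        return (self.phone_number, self.expected)

    def check(self, submitted: str) -> bool:
        # Step 5: compare the submitted value to the expected one.
        if self.used:
            return False  # a token that was already accepted is rejected
        ok = hmac.compare_digest(self.expected.encode(), submitted.encode())
        if ok:
            self.used = True
        return ok


session = LoginSession("+15555550100")  # illustrative number
number, ott = session.outbound_message()
print(session.check(ott))  # first use of the correct token
print(session.check(ott))  # replay of the same token
```

The comparison uses `hmac.compare_digest` so that the time taken does not depend on how many leading characters match. Everything after `outbound_message` returns is outside the login system's control, which is where the threats in this model arise.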

The security requirement is that the OTT is not disclosed to an attacker. There are three other requirements which might apply. First, the OTT should get through intact (that is, free of tampering). Second, the system should remain operational. Both of those requirements apply to almost any variant of this system, and as such they do not enhance the value you get from a comparative threat model. The third requirement which may be relevant is privacy: people may not want to give you their mobile phone number and risk it being abused for sales calls or other purposes. This is a threat to your ability to use OTT to improve authentication. The privacy issue would weigh in favor of applications on mobile devices.

The Threats

This model focuses on threats to the confidentiality of the OTT. Some of those are direct threats; others are impacts of first-order threats such as spoofing and tampering:

1. The login system and telco interface communicate inside a trust boundary, and you can ignore threats there for this model.

2. The phone number being sent to a routing service outside the trust boundary presents a number of threats. If the reply is not accurate, step 3 will expose the OTT. The reply can be inaccurate for a variety of reasons, including but not limited to the following:

§ Lies (inaccurate or misleading data regardless of whether that's accidental, intentional by the database, or intentional by someone who's hacked the database) from the roaming database

§ Lies from the femtocell database

§ Attacks against the routing service (EoP, tampering, spoofing)

§ Attacks against the return data flow (tampering, spoofing)

3. The “customer effective telco” can see the OTT.

4. Systems designed to improve text message processing can see the OTT.
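The list above can be restated as a small data model. The sketch below is one reading of Figure E.3 as described in the text, not a canonical encoding: the `Element` type, its field names, and the label "Message processing systems" are invented for illustration. It records, for each element, whether it sits inside the login system's trust boundary and whether it handles the OTT, and then derives the disclosure points from the confidentiality requirement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Element:
    name: str
    inside_boundary: bool  # inside the login system's trust boundary
    sees_ott: bool         # handles the OTT in the clear
    intended_recipient: bool = False


# Assumed encoding of the elements named in the scenario and threat list.
ELEMENTS = [
    Element("Login system", True, True),
    Element("Telco interface", True, True),
    Element("Number routing", False, False),  # sees only the phone number
    Element("Customer effective telco", False, True),
    Element("Message processing systems", False, True),
    Element("Phone", False, True, intended_recipient=True),
]


def disclosure_points(elements):
    """Elements outside the boundary that see the OTT but are not its recipient."""
    return [
        e.name
        for e in elements
        if e.sees_ott and not e.inside_boundary and not e.intended_recipient
    ]


def routing_dependencies(elements):
    """Outside elements that never see the OTT but whose answers steer it."""
    return [e.name for e in elements if not e.inside_boundary and not e.sees_ott]


print(disclosure_points(ELEMENTS))
print(routing_dependencies(ELEMENTS))
```

The two functions correspond to the two kinds of threat in the list: parties that can read the OTT directly (threats 3 and 4), and a party that cannot read it but can cause it to be sent to the wrong place (threat 2).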

Possible Redesigns

Information disclosure threats can be addressed by adding cryptographic functions. Simplified versions of ways to do that include the following:

§ Send a nonce, encrypted to a key held on a smartphone, then send the decrypted nonce to the authentication server (message 1 = e_phone(nonce_n), message 2 = nonce_n). Then the server checks whether nonce_n is the one that it encrypted for the phone, approving the transaction if it is.

§ Send a nonce to the smartphone, and then send a signed version of the nonce to the server (message 1 = nonce_phone, message 2 = sign_key(phone)(nonce_phone)). The server validates that the signature on nonce_phone is from the expected phone's key, and is a good signature on the expected nonce. If both those checks pass, then the server approves the transaction.

§ Send a nonce to the smartphone. The smartphone hashes the nonce with a secret value it holds, and sends back the hash.

For each of these, it's important to manage the keys appropriately, and it's probably useful to include time stamps, message addressing, and other elements to make the system fully secure. Those elements are left out of this discussion because including them would make it harder to see how the cryptographic building blocks could be applied.
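The third option (the phone hashes the nonce with a secret value) can be sketched with a keyed hash. The example below uses HMAC-SHA256, which is one standard way to hash a value together with a secret; the text does not name a specific construction, so that choice is an assumption. It also assumes the secret was shared between the phone and the server at enrollment. The function names are hypothetical, and key management, time stamps, and message addressing are deliberately omitted.

```python
import hashlib
import hmac
import secrets


def new_nonce() -> bytes:
    """Server side: pick a fresh, unpredictable challenge."""
    return secrets.token_bytes(16)


def phone_response(shared_secret: bytes, nonce: bytes) -> bytes:
    """Phone side: keyed hash of the nonce under the secret it holds."""
    return hmac.new(shared_secret, nonce, hashlib.sha256).digest()


def server_verify(shared_secret: bytes, nonce: bytes, response: bytes) -> bool:
    """Server side: recompute the keyed hash and compare in constant time."""
    expected = hmac.new(shared_secret, nonce, hashlib.sha256).digest()
    return hmac.compare_digest(expected, response)


# Assumed to have been provisioned on both sides during enrollment.
secret = secrets.token_bytes(32)

nonce = new_nonce()                       # message 1: server -> phone
response = phone_response(secret, nonce)  # message 2: phone -> server
print(server_verify(secret, nonce, response))
```

In this variant the value that crosses the phone network is the nonce, not a secret, so an intermediary that sees it cannot produce a valid response without the key held on the phone.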

The key in all design changes is understanding the differences introduced by the changes, and how those changes interact with the software requirements as a whole.

Redesigns that focus on using the phone as a processor preclude the use of an old-fashioned telephone, or even a basic mobile phone (the kind on which the end user can't easily install software). Because such phones still exist, it may be that the threats just enumerated are considered an acceptable risk, or even an improvement over traditional passwords.

Sample for You to Model

You can use the models presented above as training models with answer keys. (That is, use the software model in Figure E.1 and the operational model in Figure E.2 and find threats against them yourself. You can treat the example threats as an answer key; but if you do, please don't feel limited to or constrained by them. There are other example threats.) In contrast, this section presents a model without an answer key. It's a lightly edited version of a class exercise that was created by Michael Howard and used at Microsoft for years. It's included with their kind permission. I've personally taught many classes using this model, and it is sufficiently detailed for newcomers to threat modeling to find many threats.

Background

This tool, named iNTegrity, is a simple integrity-checking tool that reads resources, such as files in the filesystem and registry keys, and determines whether any of them have changed since the last check. It does this by looking at the following:

§ File or key names

§ File size or registry data

§ Last updated time and date

§ Data checksum (MD5 and/or SHA1 hash)
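The properties listed above can be gathered for a single file with a few standard library calls. This is a minimal sketch in Python rather than a rendering of the actual tool: the `FileRecord` type and `snapshot_file` function are hypothetical names, and registry keys are not covered. MD5 and SHA1 are used only because they are the checksums named in the list.

```python
import hashlib
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class FileRecord:
    name: str
    size: int
    mtime: float  # last-modified time, seconds since the epoch
    md5: str
    sha1: str


def snapshot_file(path: str) -> FileRecord:
    """Collect the name, size, last-updated time, and checksums of one file."""
    md5 = hashlib.md5()
    sha1 = hashlib.sha1()
    with open(path, "rb") as f:
        # Read in chunks so large files do not have to fit in memory.
        for chunk in iter(lambda: f.read(65536), b""):
            md5.update(chunk)
            sha1.update(chunk)
    st = os.stat(path)
    return FileRecord(path, st.st_size, st.st_mtime, md5.hexdigest(), sha1.hexdigest())
```

A record like this is raw data only; deciding whether a difference matters is a separate step.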

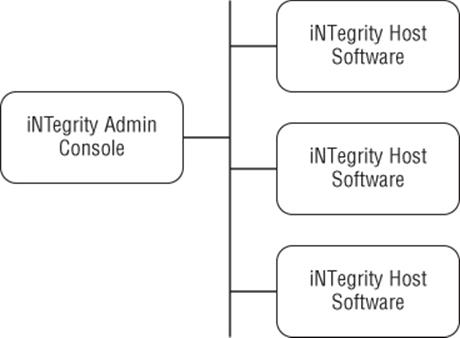

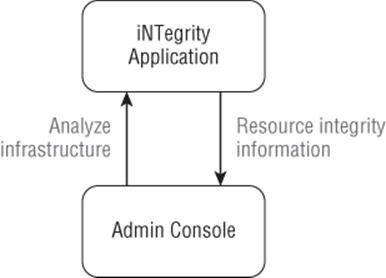

Architecturally, the tool is split into two parts: a host component and an administrative console. As shown in Figure E.4, one admin console (the client) can communicate with multiple hosts (the servers), rather than the tool being run locally on each computer.

Figure E.4 The networked host/admin console nature of the iNTegrity tool

In another operational environment, it might be known that a machine has been compromised and can no longer be trusted, and the server and client software can be run off, say, a bootable CD or USB drive. In this case, the integrity-checking code is running under a trusted, read-only Windows environment, and the host and admin components both read data from the compromised machine, but not using the potentially compromised OS. The host process does not run as a Windows service in this mode, but as a standalone console application.

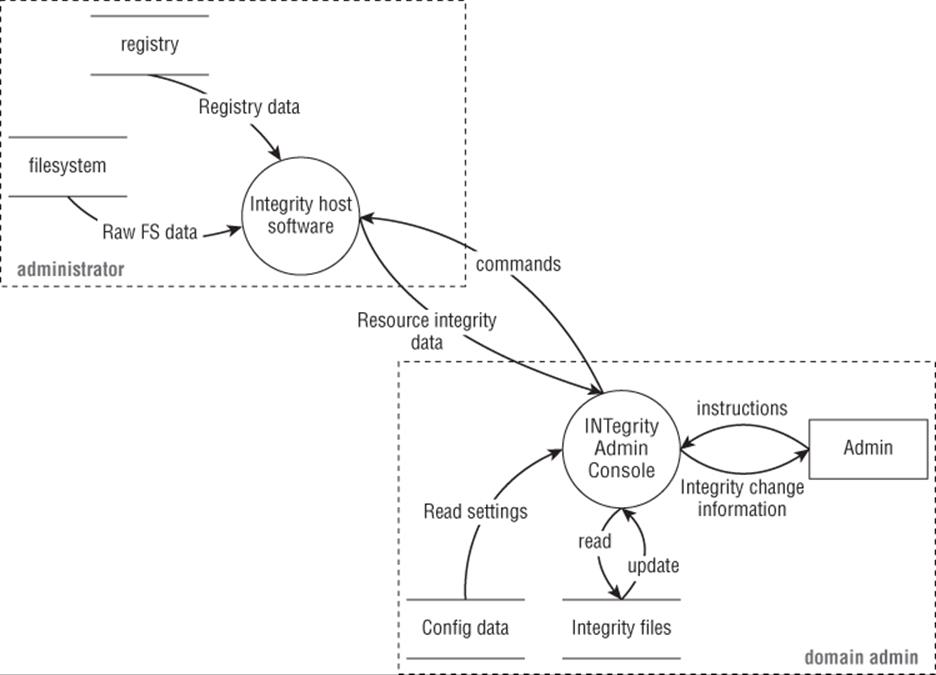

The Host Component

This small host component is written in C++ and runs as a service on a Windows server. Its role is to take requests from the admin console and respond to those requests. Valid requests include getting information about host component version, and recursive and non-recursive file properties. Note that the host software performs no analysis; it sends raw integrity data (filenames, sizes, hashes, ACLs, and so on) to the admin console, which performs the core analysis.

The Admin Console

The admin console code stores and analyzes resource (file, registry) version information that comes from one or more host processes. A user can instruct the admin console to connect to a host running the iNTegrity host software, get resource information, and then compare that data with a local, trusted data store of past resource information to see if anything has changed.
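The comparison step can be illustrated with two dictionaries that map resource names to their recorded properties. This is a sketch under assumed data shapes, not the tool's actual storage format or logic: `compare_snapshots` is a hypothetical name, and the property tuples in the example are made up.

```python
def compare_snapshots(trusted: dict, current: dict) -> dict:
    """Report resources added, removed, or changed relative to the trusted store."""
    added = sorted(current.keys() - trusted.keys())
    removed = sorted(trusted.keys() - current.keys())
    changed = sorted(
        name
        for name in trusted.keys() & current.keys()
        if trusted[name] != current[name]
    )
    return {"added": added, "removed": removed, "changed": changed}


# Illustrative data: name -> (size, checksum).
trusted = {"a.dll": (100, "aa11"), "b.exe": (200, "bb22"), "c.ini": (10, "cc33")}
current = {"a.dll": (100, "aa11"), "b.exe": (200, "ffff"), "d.sys": (50, "dd44")}

print(compare_snapshots(trusted, current))
```

Note that the result is only as trustworthy as both inputs: the data reported by the host and the local store of past information.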

The iNTegrity Data Flow Diagrams

The iNTegrity data flow diagrams are shown in Figures E.5 and E.6.

Note

The iNTegrity example comes from a time when the standard advice was to create a context diagram. Such a diagram can be helpful when an external threat modeling consultant is involved, because it acts as a forcing function to consider the scope and boundaries of the threat model.

Figure E.5 Context diagram

Figure E.6 Main DFD

Exercises

The following exercises were designed to walk students through the activities they'll need to perform to find threats. They can be used by readers of this example without modification:

1. Identify all the DFD elements. (People often miss the data flows.)

2. Identify all threat types to each element.

3. Identify three or more threats: one for a data flow, one for a data store, and one for a process.

4. Identify first-order mitigations for each threat.

Extra credit: The level 1 diagram is not perfect. What would you change, add, or remove?