The Mikado Method (2014)

Part 2. Principles and patterns for improving software

Chapter 7. Common restructuring patterns

This chapter covers

· Patterns for drawing Mikado Graphs

· Patterns for moving code around

· Some tricks for changing code

Your Mikado Graphs will likely never be exactly the same, but often you’ll get the feeling that you’ve seen something similar before, or that you’re doing a chain of refactorings you’ve done previously. When you see such a form recurring or see yourself repeating almost the same thing you did last week, it’s possibly a pattern. We call them patterns because they’re more or less prepackaged solutions that can be applied to similar situations. With a little bit of mapping to your problem domain, patterns are good tools to have in your toolbox.

The patterns included in this book are ones that have helped us; they’re not by any means an exhaustive list. We encourage you to observe your behavior when it comes to restructuring and programming and to find your own patterns.

In this chapter, we’ll show you some patterns for moving and grouping code that’s been scattered in different packages in a codebase, a central task when working with legacy code. In addition, we’ll show you some code tricks we’ve used. But we’ll start out easy with some graph patterns for drawing clearer graphs.

7.1. Graph patterns

When we use the Mikado Method to draw a graph and restructure software, the graph sometimes becomes a bit cluttered or unclear in different ways. We don’t want to create too many rules about how to draw the graph, but we also want to have clear graphs, and there’s a balance to strike. In the following sections, you’ll read about some patterns that make graphs easier to draw, work with, and understand. You’ll come across patterns that deal with exploring options, splitting graphs, concurrent goals, and other issues.

7.1.1. Group common prerequisites in a grouping node

Situation

You have a group of nodes that depend on the same other node, or nodes.

Solution

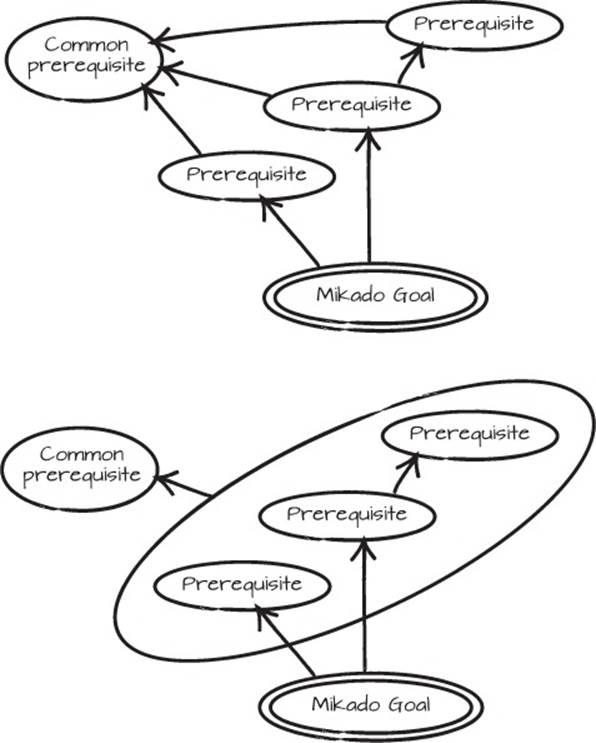

Gather the group of nodes in a grouping node and draw arrows to or from that grouping node (see figure 7.1).

Figure 7.1. Two equivalent graphs; the second one groups nodes with common dependencies

Scenario

The need to group nodes often arises when several changes depend on one and the same prerequisite. For example, when classes need to be moved to a new project, you need to set up that project before any classes can be moved. Figure 7.1 could describe such a case where three actions are grouped. If there are only three actions that depend on the same prerequisite, the graph isn’t very cluttered, but if you come across a case where 10 changes depend on the same prerequisite, the need to group nodes becomes more compelling.

7.1.2. Extract preparatory prerequisites

Situation

You have a set of tasks that need to be carried out before starting on a goal, but the tasks aren’t directly related to the goal.

Solution

Extract the preparatory prerequisites to a separate Mikado Graph, and complete them before you start.

Scenario

You want to complete some preparations before you even start to explore the code and work the Mikado Method. If you add those preparatory prerequisites to the graph, all the other nodes will point to them, wasting space and cluttering the paper or whiteboard. Examples of such changes are general code cleanup (like removing unnecessary, unused, or commented-out code) and applying a formatting template to all the code.

Cleanups

Remember to put cleanups, formatting, and the like in separate commits. That way it becomes a lot easier to find the relevant differences between commits.

7.1.3. Group a repeated set of prerequisites as a templated change

Situation

You have a common set of prerequisites to perform at different places in the code, and repeating these prerequisites at all the different places in the graph creates too many nodes, cluttering the graph.

Solution

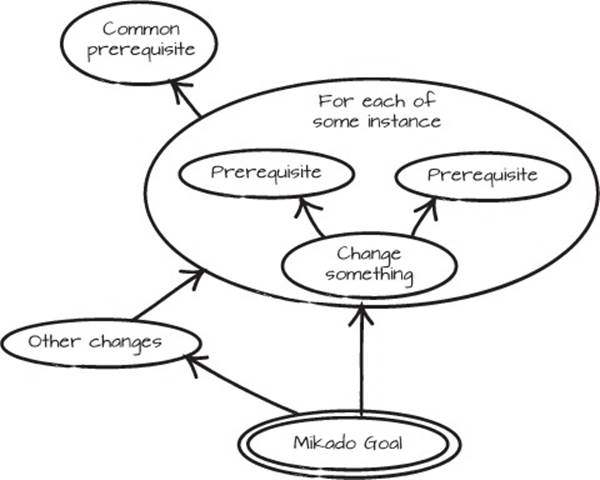

Draw the prerequisites in a separate template bubble, and annotate the bubble with the items for which you need to repeat the template.

The template change also becomes a grouping node that itself can have prerequisites, as in figure 7.2.

Figure 7.2. The template change is the big bubble, whose actions (nodes) are performed over every item with the same prerequisites.

Scenario

If you decide to change a method signature, the callers of that method will need to be updated in several places and you can record that information in a templated change. When you work with a dynamically typed language, or if your development environment doesn’t have automated refactorings that can perform a change across the entire codebase, you’ll need to do this more frequently.

7.1.4. Graph concurrent goals with common prerequisites together

Situation

You have different Mikado Goals that need to be dealt with simultaneously.

Solution

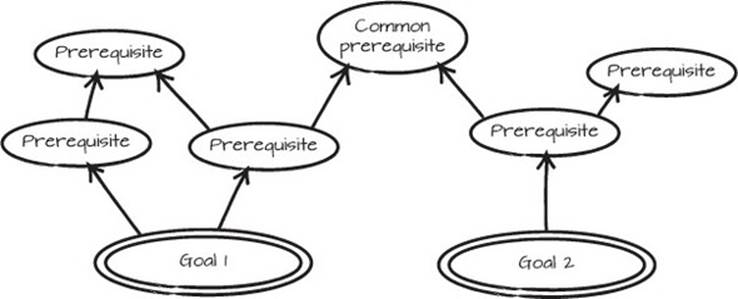

Draw the goals in the same graph and let them share common prerequisites.

Scenario

If you have two goals, it might look like the graph in figure 7.3. We had a similar scenario in chapter 5 where we wanted to separate the loan applications from loan approvals. If, after the restructuring in that chapter, we wanted to put the query part of the application on a separate server for performance reasons, those two goals would have had some common refactorings, such as moving the LoanApplication classes to the common project we created in that same chapter. When the graphs for the different goals are set side by side, the common hot spots are easier to identify and deal with, their importance is elevated, and you have more information about how that piece of software should be restructured. Figure 7.3 represents an abstract version of such a graph.

Figure 7.3. Two goals with common prerequisites

7.1.5. Split the graph

Situation

You need to distribute work from one graph to a separate graph. This can be because you need to spawn the work to another working unit, or perhaps you just ran out of whiteboard space.

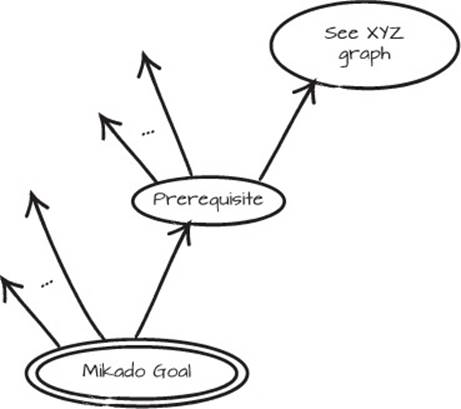

Solution

Create another graph that’s really a subgraph, and then draw a node that contains a clear reference to where the subgraph is (see figure 7.4, where the subgraph is named XYZ graph). Preferably the broken-out part shouldn’t have any arrows back to the original drawing.

Scenario

When you’re doing larger restructurings and improvements, splitting a graph can be a way of limiting the scope. You might want to focus on a smaller part of the graph because you don’t have time or you don’t have enough developers. A smaller portion of the work can often be completed in a shorter time, and splitting the graph makes it easier to distribute the work. If you split it into several parts, multiple people or teams can help complete the work.

Figure 7.4. Splitting a Mikado Graph

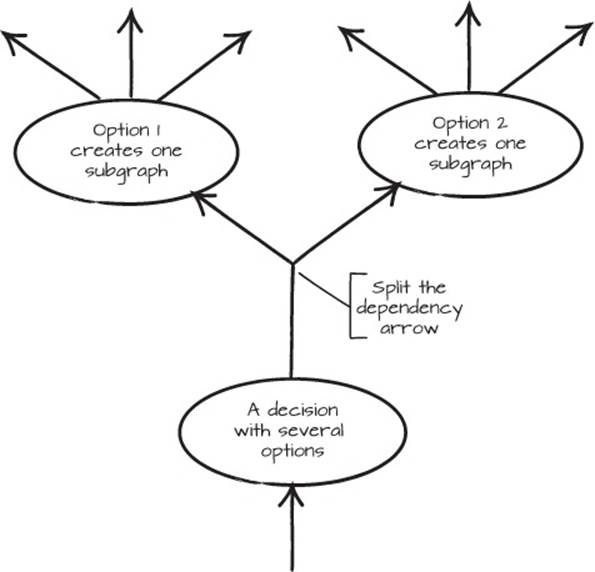

7.1.6. Explore options

Situation

You have a problem that can be solved in more than one way, and you don’t know which is the best solution.

Solution

Draw a split-dependency arrow to the options you have. Explore each option as far as you need to get sufficient information and to understand reasonably well what the consequences are for choosing each option. See the graph in figure 7.5 for an example.

Figure 7.5. Exploring two different options for solving a problem

Scenario

A common example of choosing between options is breaking circular dependencies. Imagine a scenario where class A depends on B, B on C, and C in turn on A. Circular dependencies can effectively be broken by introducing abstractions. In Java or C#, you can extract an interface, and then use a class that implements that interface. But before you decide which class you’re going to use, you can experiment with two or maybe all three of them, as in figure 7.5.
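As a minimal sketch of one such option, assume the cycle is closed by C calling back into A. Extracting an interface that A implements lets C depend on the abstraction instead of on A. The interface name `Notifiable` and all method names are invented for illustration; the text only names the classes A, B, and C.

```java
// Hypothetical sketch: only the class names A, B, and C come from the text.

// The extracted abstraction. C depends on this instead of on A.
interface Notifiable {
    void done(String result);
}

class C {
    private final Notifiable listener;

    C(Notifiable listener) {
        this.listener = listener;
    }

    void run(String input) {
        // Before the extraction this would have been a direct call on an A instance.
        listener.done("handled: " + input);
    }
}

class B {
    private final C c;

    B(C c) {
        this.c = c;
    }

    void process(String input) {
        c.run(input);
    }
}

class A implements Notifiable {
    private final B b;
    String lastResult;

    A() {
        // A still depends on B, and B on C, but C no longer depends on A.
        this.b = new B(new C(this));
    }

    void start(String input) {
        b.process(input);
    }

    @Override
    public void done(String result) {
        lastResult = result;
    }
}

class CycleBreakingDemo {
    public static void main(String[] args) {
        A a = new A();
        a.start("mikado");
        System.out.println(a.lastResult); // prints "handled: mikado"
    }
}
```

The other options would extract the interface from B or from C instead; trying each in turn shows which callers and packages are affected by the choice.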

Exploring options gives you more information, and more information makes it easier to resolve design discussions if you’re uncertain which solution is superior. This is especially important when you can’t predict the consequences properly. Seeing what an implementation actually looks like is a lot more powerful than imagining it.

If you can complete other parts of the restructuring, we suggest you do them first and delay the parts where you’re not sure which path to take. Working with the system and the Mikado Method will help you build more knowledge about the system. When you’ve taken care of the other paths, you might find you’ve gained enough knowledge about the options to know which to choose. Occasionally the problems you want to solve with one of your options may have already been resolved, but don’t count on it.

Once you’ve decided on an option, the options not chosen, and any prerequisites they don’t share with the rest of the graph, can be removed from the graph.

7.2. Scattered-code patterns

Packages, or projects, and their dependencies create restrictions on what code can reference what other code. This can be used to your advantage, but in the face of change it may also pose a problem if you can’t move or access code as you want to.

A common scenario we face when we deal with legacy code is that applications have been split into packages, but little care has been put into what goes in what part, resulting in a mess of dependencies. Code that should be together is scattered across the codebase, rather than cohesively placed behind a service or an API.

What’s a package?

When we talk about packages, it’s the UML component concept we mean. Java developers can think of projects, and .NET programmers can think of assemblies.

The packaging principles described in section 6.2 are usually violated in these scenarios, and when these principles are broken, problems usually manifest themselves in a couple of different ways:

· You might have difficulty finding the code you’re looking for, because the package names don’t clearly identify what’s in them.

· You might discover dependency problems between libraries and other packages when you move things around, typically when you use the Naive Approach. When logic is spread across the codebase, a nasty web of dependencies forms and keeps everything entangled.

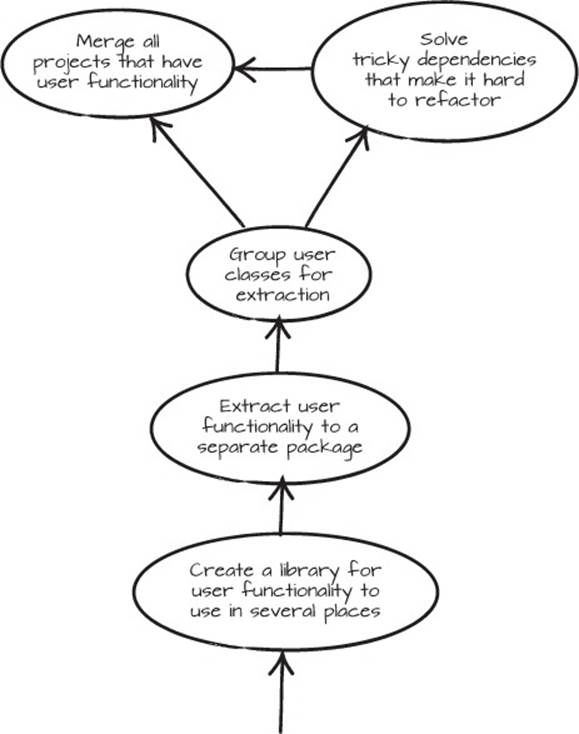

A common Mikado scenario is extracting a piece of code from such a codebase to create a library that can be used in several places. In the following sections, you’ll see a few powerful ways to move code around, remove code, or put parts of your code into new packages.

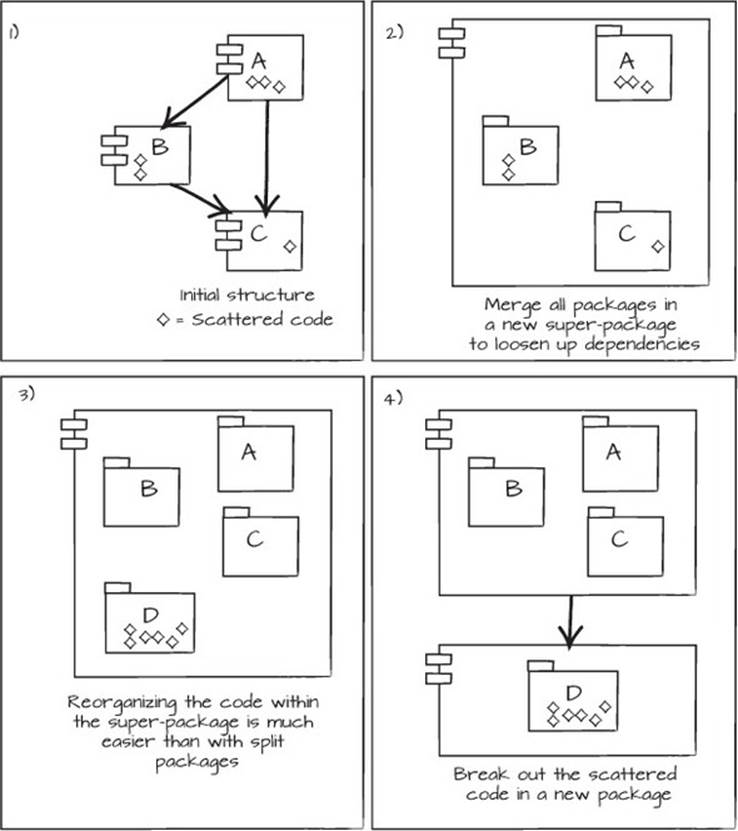

7.2.1. Merge, reorganize, split

Situation

You have code that isn’t partitioned in the right packages, and you need to rearrange them in a new structure.

Solution

Merge the relevant packages, reorganize the code, and then extract the new libraries to new packages, as illustrated in figure 7.6.

Figure 7.6. Merging code to split it out another way: 1) Code is scattered in a structure. 2) Merge all the code into a single super-package. 3) Reorganize the code within the super-package and gather the scattered code into a new package. 4) Extract the new package containing the formerly scattered code.

Isn’t merging packages just a way to hide the dependency problems?

Well, yes, in a way. This is often not the permanent solution, but a stepping stone to something better. But if you can make this move and avoid having these dependency problems block critical paths, you could just leave them there until they enter your critical path again.

Scenario

When you deal with scattered code, it’s often beneficial to merge the affected packages to temporarily avoid any blocking dependencies, and thereby get more flexibility to implement the changes you want. You can often start by merging packages, reorganizing them, and then extracting a new library, as illustrated in figure 7.6. After you’ve merged the packages, you can group the scattered code and, following the Naive Approach, move it to the new package. The compiler, analysis, or your tests will then show if you missed any dependencies that you need to take care of first.

In most cases, it’s fairly straightforward to merge two packages. There’s rarely more to it than moving the contents of one package into the other, which then becomes the union of both. After that, you just make all other packages that depended on either of them depend on the newly created union package. If you run into trouble, it’s typically with dependencies that reference parts of a package (such as A, B, or C, as described in figure 7.6) and can’t reference back to the newly created A-B-C package, because that would create a cyclical dependency. The usual solution is to use the Dependency Inversion Principle, and insert an interface.

If you remember the door-and-lock DIP example from chapter 6 (section 6.1.5), this is the same thing. Use an abstraction to break and invert the dependency: create an interface, and put it with the scattered code in the newly created package, D.
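The following sketch illustrates that move under stated assumptions. The package boundaries are shown as comments because a single Java file can’t span several packages, and every name (`AuditTrail`, `UserRegistry`, `InMemoryAuditTrail`) is hypothetical. The point is only that the interface is owned by package D, so D never has to reference the merged A-B-C package.

```java
import java.util.ArrayList;
import java.util.List;

// ---- Would live in the new package D (all names are hypothetical) ----

// The abstraction is owned by D, so D needs no reference back to A-B-C.
interface AuditTrail {
    void record(String event);
}

// Formerly scattered user code, now gathered in D.
class UserRegistry {
    private final AuditTrail audit;
    private final List<String> users = new ArrayList<>();

    UserRegistry(AuditTrail audit) {
        this.audit = audit;
    }

    void register(String userName) {
        users.add(userName);
        audit.record("registered " + userName);
    }

    int size() {
        return users.size();
    }
}

// ---- Would stay in the merged A-B-C package, which depends on D ----

class InMemoryAuditTrail implements AuditTrail {
    final List<String> events = new ArrayList<>();

    @Override
    public void record(String event) {
        events.add(event);
    }
}

class PackageInversionDemo {
    public static void main(String[] args) {
        InMemoryAuditTrail audit = new InMemoryAuditTrail();
        UserRegistry registry = new UserRegistry(audit);
        registry.register("ola");
        System.out.println(audit.events); // prints [registered ola]
    }
}
```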

After such a change, there’s more information available on how you can structure the software. If you need to extract more functionality from the A, B, or C packages, you can do that by following the same procedure. If the packages don’t have to be split for any reason, then keep them together.

The resulting Mikado Graph can vary in appearance, but a typical graph might look like figure 7.7.

Figure 7.7. Extracting a library containing user functionality

Transforming problems

One way to look at this merge-move is as a common trick in mathematics: transforming a problem into another coordinate system where solving it is easy, and then transforming the result back to the original coordinate system. Merging packages is a little bit like transforming a problem to another coordinate system, and then transforming it back into a better solution by extracting the packages you need.

Sometimes the packages can’t be merged. Then you have to use another tactic to extract the new package.

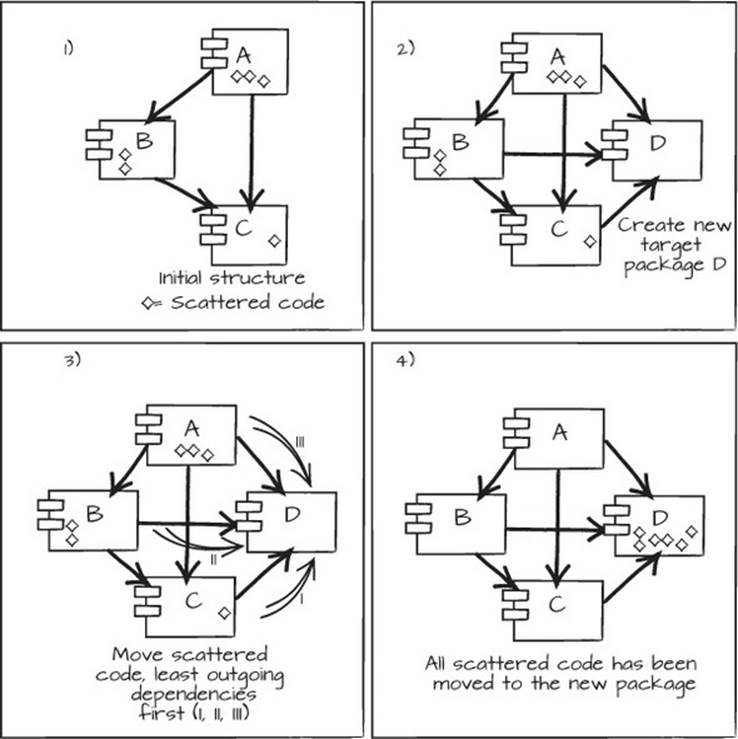

7.2.2. Move code directly to a new package

Situation

You need to regroup scattered code into a new package, but for some reason you can’t merge the code before splitting out the new package, as in the merge-reorganize-split pattern.

Solution

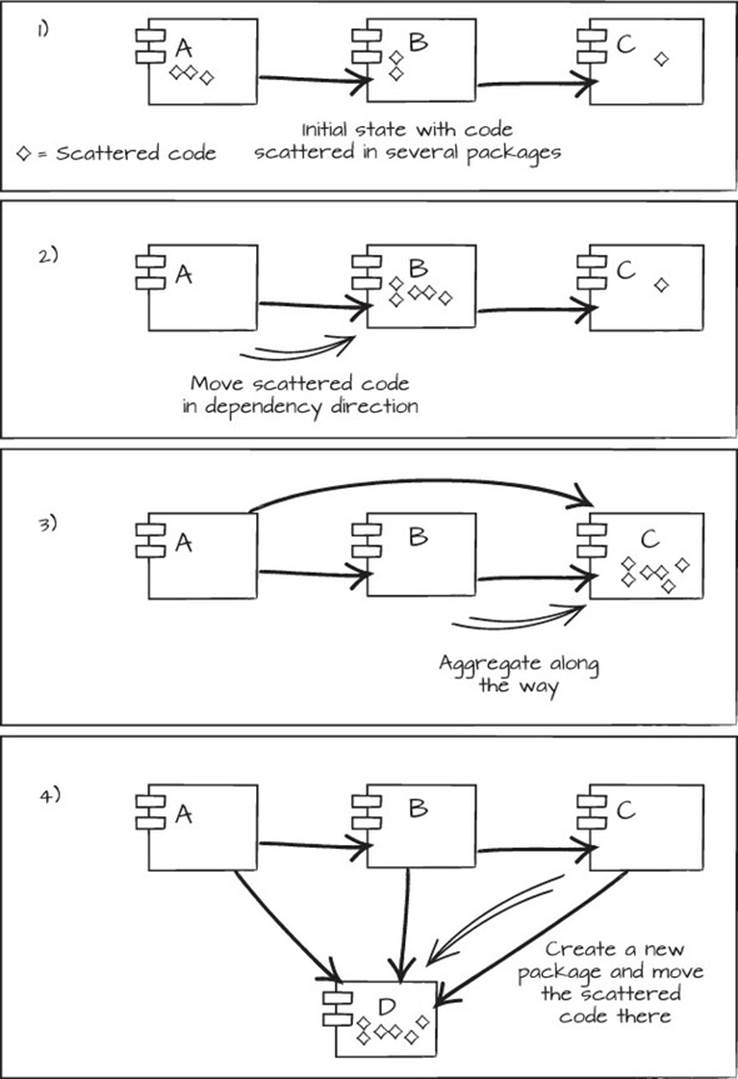

Create a new package that all concerned packages depend on, and move the scattered code directly to the new package, as shown in figure 7.8.

Figure 7.8. Move code directly to a new package: 1) The initial situation with scattered code in several packages. 2) Create a new package for the scattered code. 3) Move the scattered code into the new package, starting with the package with the least number of outgoing dependencies. 4) The result with all scattered code gathered in one package.

As you can see in the figure, you start out with packages that have scattered code (1). Then you create the new empty package and add that package as a dependency for the other packages (2). Next, you move the scattered code from the package with the least number of outgoing dependencies first, which in this example is package C, and then in turn B and A (3). By doing so, the risk of breaking dependencies by moving code is minimized, although not eliminated. You end up with the scattered code gathered in the new package (4).
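The ordering in step 3 can be sketched in a few lines. The dependency map below is an assumption made for illustration (A depends on B and C, B depends on C), chosen so that it yields the order described above; the helper name `byFewestOutgoingDependencies` is also hypothetical.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;

class MoveOrder {
    /** Returns the package names sorted by ascending number of outgoing dependencies. */
    static List<String> byFewestOutgoingDependencies(Map<String, Set<String>> outgoing) {
        List<String> names = new ArrayList<>(outgoing.keySet());
        names.sort(Comparator.comparingInt((String name) -> outgoing.get(name).size()));
        return names;
    }

    public static void main(String[] args) {
        // Assumed dependencies, not taken from the figure.
        Map<String, Set<String>> outgoing = new LinkedHashMap<>();
        outgoing.put("A", Set.of("B", "C"));
        outgoing.put("B", Set.of("C"));
        outgoing.put("C", Set.of());

        System.out.println(byFewestOutgoingDependencies(outgoing)); // prints [C, B, A]
    }
}
```

In a real codebase the map would come from a dependency analysis tool or the build files, and the count is only a heuristic: the Naive Approach still decides what actually breaks.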

Scenario

In the loan server example from chapter 5, you wouldn’t want to merge the public application and administrative approval services in order to extract common code from those packages, because that would cause significant security issues. In that case, moving code directly to a new package would be a better strategy.

By following the Naive Approach and just moving the code to the new package, you’ll quickly find dependencies that have to be taken care of. As always, that information needs to go into the Mikado Graph. In the example in figure 7.8, the package dependencies are rather simple, but in reality, dependencies can be much more extensive. Analyzing the dependencies to find the leaves can be helpful, but tedious. Instead, you can use the Naive Approach to narrow down what parts of the code you need to analyze more closely to figure out what you need to do. This pattern generally requires you to take care of more accidental dependency problems than the merge-reorganize-split pattern does.

7.2.3. Move code gradually to a new package

Situation

You want to gather scattered code, but you can’t, or don’t want to, merge the packages or extract a new package immediately. It’s also possible that the moved code could benefit from some intermediate processing as you extract and move it between packages in the codebase.

Solution

Accumulate the new package as you move the code throughout the system, as shown in figure 7.9. In some cases, it’s possible to just move scattered code in the dependency direction, and then extract a new package. In other cases, the code needs some preparation in order to be moveable. The Naive Approach can help you narrow down what analysis and refactoring needs to be performed.

Figure 7.9. Move code gradually to a new package: 1) Initial state with code scattered in dependent packages. 2) Move the scattered code in the dependency direction to minimize problems. 3) Aggregate along the way; the A package acquired a dependency to C, in order to be able to use the scattered code there. 4) Create the destination package and gather the scattered code there; all packages depend on this new package because they all use the scattered code’s functionality.

Moving code and dependency directions

When you move code through a system, it’s always easier to move it in the dependency direction. If package A depends on package B, it’s generally easier to move code from package A to package B than in the opposite direction. For moving code in the opposite direction, you need to use the tricks from the Dependency Inversion Principle to make sure the dependencies are going exclusively from the moving code to the code left in package B. This can be tedious work, and it almost always requires changing larger parts of the package structure.

In this figure, the first step is the original situation, and each subsequent step moves scattered code along the dependency direction until it’s all in one package. After that, it’s possible to move the scattered code to a separate package altogether.

Scenario

This pattern has the least impact on existing code of all the scattered-code patterns, especially when you can move the code in the dependency direction. This pattern is also a little bit more stealthy than the previous patterns. Creating new packages communicates an intent, and this pattern can be a good option if you don’t want to expose that intent at an early stage.

7.2.4. Create an API

Situation

You need to hide implementation details from the consumer of a package.

Solution

Create an API by extracting interfaces and types wrapping the implementation details, and move the API code to a separate package.

Scenario

Breaking out functionality as described in the previous examples may make your code comply with cohesion principles or enable you to do what you want, but there might still be some work left to do. Maybe the extracted code contains too many classes or too much information for the consumer of that library. Maybe parts of the implementation shouldn’t, or couldn’t, be provided. Maybe the implementation of the package changes a lot, but the API of that package is rather stable. Maybe there are to be several implementations of that API.

API—application programming interface

An API is part of a program that’s exposed to the consumer of the program. It’s the interface used to separate the what from the how of the program, and it’s often a good complexity reducer. We prefer APIs that are self-explanatory, that guide the user toward correct usage, and that require no additional documentation to be used correctly. Any error messages should clearly state the detailed cause of the problem and the measures required to correct the error.

In these cases, it might be a good idea to create an interface package for the API and an implementation package as an example or default implementation of the API. This works a bit like the Dependency Inversion Principle, but for packages. The consumers of the package create their program using the interface package, or use the default implementation, or provide an implementation of their own at runtime. By separating the what from the how, the packages adhere to the Stable Dependencies Principle and the Stable Abstractions Principle.
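A rough sketch of that split might look like the following. The package names in the comments and the types `UserDirectory`, `InMemoryUserDirectory`, and `WelcomeMailer` are assumptions made for illustration, not names from the book’s example.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;

// ---- Interface package (hypothetical name: users.api) ----
interface UserDirectory {
    Optional<String> emailOf(String userName);
}

// ---- Implementation package (hypothetical name: users.inmemory) ----
// A default implementation; consumers may supply their own instead.
class InMemoryUserDirectory implements UserDirectory {
    private final Map<String, String> emails = new HashMap<>();

    void register(String userName, String email) {
        emails.put(userName, email);
    }

    @Override
    public Optional<String> emailOf(String userName) {
        return Optional.ofNullable(emails.get(userName));
    }
}

// ---- Consumer: written against the interface package only ----
class WelcomeMailer {
    private final UserDirectory directory;

    WelcomeMailer(UserDirectory directory) {
        this.directory = directory;
    }

    String recipientFor(String userName) {
        return directory.emailOf(userName).orElse("unknown");
    }
}

class ApiSplitDemo {
    public static void main(String[] args) {
        InMemoryUserDirectory directory = new InMemoryUserDirectory();
        directory.register("ola", "ola@example.com");
        // The implementation is chosen here, at wiring time, not inside the consumer.
        WelcomeMailer mailer = new WelcomeMailer(directory);
        System.out.println(mailer.recipientFor("ola"));
    }
}
```

Because `WelcomeMailer` only mentions `UserDirectory`, the implementation package can change or be swapped without recompiling the consumer.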

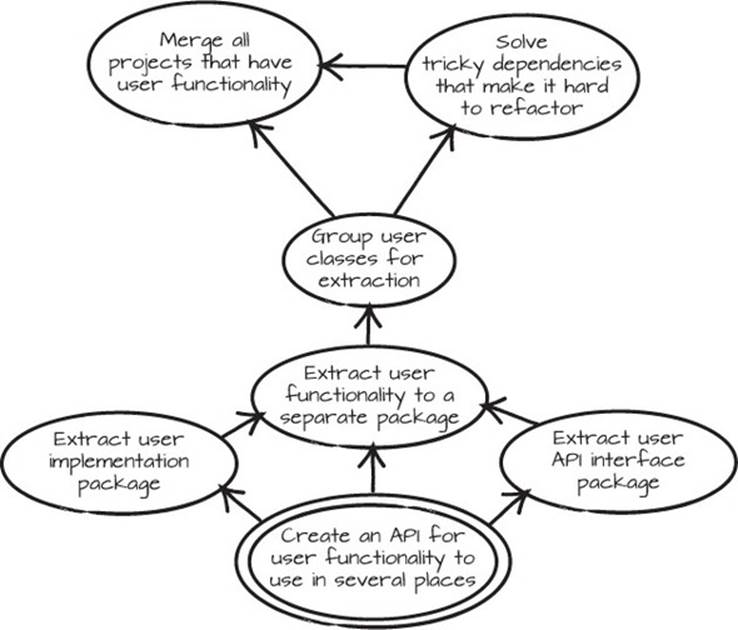

Use the Naive Approach to try moving out the implementation part to see where it breaks, and use that information in the Mikado Graph. Try to separate, or extract, the necessary interfaces and classes. When they’re the only ones used directly, you can move the implementation part to a separate package. A typical Mikado Graph might look like figure 7.10.

Figure 7.10. Extracting a library with user functionality, including an interface and an implementation package

7.2.5. Use a temporary shelter

Situation

You have code for a concept—maybe single methods or even blocks of code—that’s scattered across the codebase, and you can’t decide in advance how to partition the code for the concept.

Solution

Gather the pieces in a temporary shelter—a class, or possibly a module—where you can get an overview of what functionality you have for the concept. From there, it’s often easier to get an overview, remove duplication, and see the patterns for repartitioning the code.

Scenario

One type of problematic code is duplication on the domain level. It usually manifests itself as related code scattered all over the codebase. The preceding examples relate to scattered classes, but sometimes code is scattered on a more fine-grained level, with methods here and there, or even blocks of code within methods. This scattered code is usually copy-paste parts, as in listing 7.1, or methods that do almost the same thing, as in listing 7.2.

Listing 7.1. Plain old copy-paste

    ...
    public boolean isParent() {
        return isValid() && isActive();
    }

    public boolean canHaveSubComponent() {
        return isValid() && isActive();
    }
    ...

Listing 7.2. Subtle domain-level duplication

    class Customer {
        List accounts;
        ...
        public boolean isActive(Date date) {
            return accounts.size() > 0 &&
                !transactionsWithinPeriod(0, date).isEmpty();
        }

        public boolean canTerminateAllAccounts(Date today) {
            return !accounts.isEmpty() &&
                futureTransactions(today).size() == 0;
        }
        ...
    }

The duplication in the copy-paste example is easy to spot. In the second example, it’s much harder to spot. The accounts.size() > 0 expression is actually the same as !accounts.isEmpty(). The futureTransactions() method could be the same as transactionsWithinPeriod(), because both names suggest that you’re looking at transactions within a period. At some point, someone decided that the isActive() method and the canTerminateAllAccounts() method should go down separate paths.

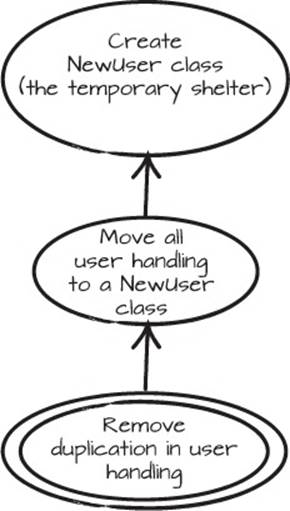

Instead of continuing down the same path or scattering code even more, try to find a couple of core ideas behind the scattered code. Examples of this can be User or Payment, something that fits the domain at hand. Choose an existing class or create a new one for these core areas; these classes will be temporary shelters for the functionality. Then try to move all related functionality to the temporary shelter. This might be ugly, but it’s a stepping stone. Figure 7.11 shows what this might look like in a Mikado Graph.

Figure 7.11. Move functionality to a temporary shelter before performing a change.

After a while, you’re likely to see patterns of similar code in those rather ugly temporary shelters. At that point, it’s time to remove the duplication by extracting common sections. Sometimes this is best done with a base class and variations in subclasses or in separate functions. Sometimes it’s better to break out separate concerns to new classes and delegate to those classes. It’s the old composition versus inheritance decision again.
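For the copy-paste case in listing 7.1, extracting the common section could look roughly like this. The enclosing class is elided in the listing, so the class name `Component`, its fields, and the extracted method name `isValidAndActive` are all assumptions.

```java
// Hypothetical enclosing class; listing 7.1 shows only the two duplicated methods.
class Component {
    private final boolean valid;
    private final boolean active;

    Component(boolean valid, boolean active) {
        this.valid = valid;
        this.active = active;
    }

    boolean isValid() {
        return valid;
    }

    boolean isActive() {
        return active;
    }

    // The extracted common section. Both public methods now share one definition.
    private boolean isValidAndActive() {
        return isValid() && isActive();
    }

    public boolean isParent() {
        return isValidAndActive();
    }

    public boolean canHaveSubComponent() {
        return isValidAndActive();
    }
}

class ExtractCommonSectionDemo {
    public static void main(String[] args) {
        Component component = new Component(true, false);
        System.out.println(component.isParent());            // prints false
        System.out.println(component.canHaveSubComponent()); // prints false
    }
}
```

Whether the two public methods should keep sharing one rule is a domain question; the extraction only makes the current coupling visible in one place.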

With some effort, the previously scattered blocks of code can end up in a comprehensive and cohesive structure, and the temporary shelter is gone.

The Mikado Method in the real world

Even though it’s desirable, sometimes it isn’t feasible to reach a goal by taking small steps that can be committed to a versioning system continuously. It could be that other developers are working on the code, and your changes would result in merge conflicts that are very tedious and disruptive to resolve. It could also be that making half a change would introduce a pending state where there are two ways of doing things, which might be confusing at best and error-prone at worst.

We’ve used the Mikado Method in such situations. We practiced our refactoring over and over again until we felt confident that we could perform the change in the time slot available. This included heavy use of automated refactorings and refactoring scripts to speed up the process and relieve the drudgery of doing the same refactorings over and over again.

We particularly remember a time when we performed a large, single-step refactoring where the objective was to replace an old XML configuration with pure code. The XML glued together business logic and caused some serious problems for the developers. The XML had been a big concern for about five years, but no one had dared touch it.

The major reason for the change was to simplify code navigation and enable further refactorings, which was almost impossible due to the fact that the XML configuration was so intertwined with the code. Wherever we turned, the XML was buried deep in the code. To make matters worse, there were only a few tests in place, partly because the framework made it hard to test at a good level, and partly because of historical neglect. There was no time to cover the application in tests, so the change had to be made in a single step or development of the project would grind to a halt during the change. This was not an option at the time.

We created a Mikado Graph for the change and started the Mikado dance. Make a change, see what breaks, extend the graph, and revert. After almost every revert, we synced the code with the main repository so we were working with the latest version from the other developers.

Some of the prerequisites in the Mikado Graph were running scripts that generated code from existing XML. Other prerequisites were regular expression replacements that could be performed in the IDE. There were also the standard refactorings that the IDE usually provides, like move method, change method signature, and extract method.

Some of the prerequisites were leaves that could be checked in and marked as ready in the Mikado Graph, but most of the changes required replacing all the XML with code before they were complete. This could not be checked into the common code repository until it all worked and all XML files were replaced.

To perform such a change without serious merge conflicts, it was necessary to have everyone check in their code. In this case, the development couldn’t be halted because the majority of the team wasn’t working with the XML replacement. The switch from XML configuration to the new solution needed to be performed one evening, and everyone had to check in the code before 5 p.m. that afternoon.

In the days before, we practiced and refined the Mikado Graph so we could perform the change as swiftly as possible. We probably rehearsed parts of the graph 20 or 30 times before it was time to actually perform the change.

At 5 p.m. the refactorings began, this time for real. Scripts were run, code was generated, and refactorings were performed across the codebase. At 7 p.m. we realized that we had, after all, missed a special case, and we had to revert the code and start over. After an additional couple of hours, and no further mishaps, we had performed the refactorings and all the XML code was gone. We verified the solution by running the few scenario test cases that existed, and then we checked the code into the VCS. At about 1 a.m. we could leave the building.

In spite of the size of this change, just a couple of minor problems caused by the refactoring were reported over the next six months. This result would have been hard to achieve in such a short time in any other way.

7.3. Code tricks

When we’ve restructured code, we’ve used a few other tricks that have eased the burden. These tricks aren’t quite patterns, but they’re still useful in different situations.

7.3.1. Update all references to a field or method

When the need to change a field or a method implementation arises, you need to update all the references as well. For example, if you have a private field that’s accessed directly in a class, but you want to move that field into a Person object instead, you can follow a fairly simple process:

1. Encapsulate the field.

2. Change the implementation within the encapsulation method.

3. Inline the contents of the encapsulation method.

This makes the whole refactoring safer. In code, it would look like this:

    public class Employee {
        private String name;

        public Employee(String name) {
            this.name = name;
        }

        public String greet() {
            return "Hello " + name;
        }
    }

First, you use the Introduce Indirection refactoring to encapsulate the member name, resulting in the following:

    public class Employee {
        private String name;

        public Employee(String name) {
            this.name = name;
        }

        private String getName() {
            return name;
        }

        public String greet() {
            return "Hello " + getName();
        }
    }

Then you change the one usage of name to use an introduced Person object instead:

    public class Employee {
        private Person person;

        public Employee(String name) {
            this.person = new Person(name);
        }

        private String getName() {
            return person.getName();
        }

        public String greet() {
            return "Hello " + getName();
        }
    }

After that, do the Inline Method refactoring on the Employee.getName() method:

    public class Employee {
        private Person person;

        public Employee(String name) {
            this.person = new Person(name);
        }

        public String greet() {
            return "Hello " + person.getName();
        }
    }

Granted, for this simple code, the process may seem wasteful, but often members and properties are used in several places in a class or across the codebase. In those cases, getting consistent help from automated refactorings can be crucial.

The same technique can be used when you need to change calls to a method. Use the Introduce Indirection refactoring on the original method to wrap it in a new method, and call the new method in all places the original method is used. Then change the implementation in the new wrapping method. After that, you can use the Inline Method refactoring, as before, to propagate the changed body of the wrapping method to all the places it’s called from. Remember to make sure that any objects referenced in the new wrapping method are also available at the calling places. Use the Naive Approach and inline the new wrapper immediately to see what objects are missing and where they’re missing.
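The three stages for a method call can be sketched as follows. Everything here is hypothetical: `Prices.describe` stands in for the original method and its replacement, and the three `InvoiceStep` classes are the same class at successive stages, given different names only so they fit in one file.

```java
// Hypothetical helper with the original method and the one callers should end up using.
class Prices {
    static String describe(int cents) {
        return cents + " cents";
    }

    static String describe(int cents, String currency) {
        return cents + " cents (" + currency + ")";
    }
}

// Stage 1: Introduce Indirection. Every call now goes through describePrice().
class InvoiceStep1 {
    private final String currency = "SEK"; // not used yet, but available to all callers

    String total(int cents) {
        return "Total: " + describePrice(cents);
    }

    String paid(int cents) {
        return "Paid: " + describePrice(cents);
    }

    private String describePrice(int cents) {
        return Prices.describe(cents);
    }
}

// Stage 2: change the implementation in one place, the wrapping method.
class InvoiceStep2 {
    private final String currency = "SEK";

    String total(int cents) {
        return "Total: " + describePrice(cents);
    }

    String paid(int cents) {
        return "Paid: " + describePrice(cents);
    }

    private String describePrice(int cents) {
        return Prices.describe(cents, currency);
    }
}

// Stage 3: Inline Method. The wrapper is gone and every caller uses the new call.
class InvoiceStep3 {
    private final String currency = "SEK";

    String total(int cents) {
        return "Total: " + Prices.describe(cents, currency);
    }

    String paid(int cents) {
        return "Paid: " + Prices.describe(cents, currency);
    }
}

class IndirectionDemo {
    public static void main(String[] args) {
        System.out.println(new InvoiceStep1().total(250)); // prints "Total: 250 cents"
        System.out.println(new InvoiceStep3().total(250)); // prints "Total: 250 cents (SEK)"
    }
}
```

The inlining in stage 3 only works because `currency` is reachable from every calling place, which is the availability check mentioned above.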

Extract Method and Inline Method automated refactorings

Extract Method is a powerful automated refactoring in IDEs for simplifying long methods or heavily duplicated code. But its counterpart, Inline Method, can be equally powerful when it comes to refactoring and restructuring code. If you don’t use both, you’re missing an important tool. Play around with them and see how creative you can be.

7.3.2. Freeze a partition

When you have external users, like developers in another project or customers that use an API you’ve created, you need to stay backwards compatible, and you can’t change the code in whatever way you like. The users expect certain parts of the code to be stable. To avoid changes in those parts, you can freeze them.

The more code you change, the likelier it is that you’ll accidentally change an API or some implementation that external developers depend on. People downstream of yourself who rely on the product of your work aren’t as interested in your refactorings as you are. While automated refactoring is a powerful tool, sometimes it can affect too much of the code, changing parts that shouldn’t change.

Sometimes, being aware of what can’t change is enough, but sometimes a more structured approach is needed. When you need to be extra careful, you can move parts of the code into a special project or directory, and watch that area closely. That way you can quickly see if the frozen parts are changed when you check what files have changed before checking in.

Another useful strategy is to write reflective API tests whose sole purpose is to verify the signatures, classes, and interfaces that can’t change. Because such tests refer to the API through reflection, they aren’t affected by refactorings. A variation on this approach is placing the API tests in a project that isn’t affected by automated refactorings. This will also provide excellent documentation for anyone who uses the API regarding what parts will be more stable.
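A reflective check of this kind could look roughly like the following. This is a minimal sketch, not a complete test suite: LoanApi, its apply method, and the FrozenApiCheck helper are hypothetical names invented for the illustration. Only the reflection calls (Class.forName and Class.getMethod) are standard Java.

```java
import java.lang.reflect.Method;

// Hypothetical frozen API class; stands in for code that external users call.
class LoanApi {
    public String apply(String applicant, long amount) {
        return applicant + ":" + amount;
    }
}

// Hypothetical helper for reflective API checks.
class FrozenApiCheck {
    // Returns true if the named class still exposes a public method with
    // exactly this name, parameter list, and return type.
    static boolean hasPublicMethod(String className, String methodName,
                                   Class<?> returnType, Class<?>... parameterTypes) {
        try {
            // getMethod only finds public methods, so visibility is checked too.
            Method method = Class.forName(className).getMethod(methodName, parameterTypes);
            return method.getReturnType().equals(returnType);
        } catch (ClassNotFoundException | NoSuchMethodException e) {
            return false; // the class or the method signature has changed
        }
    }
}
```

A test would pass the class and method names as string literals, for example hasPublicMethod("LoanApi", "apply", String.class, String.class, long.class) with the fully qualified class name in a real project. A rename refactoring then leaves the strings alone, provided the IDE isn’t told to rewrite string occurrences, and the check fails instead of silently following the change.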

7.3.3. Develop a mimic replacement

Every now and then you need to introduce an indirection around an entire framework that’s used in your application. The reason could be that you want to replace it, or that you want to be able to test without calling the actual framework, but the problem is that the framework is used almost everywhere in the codebase. This makes it very tedious and difficult to implement detailed changes in all the places where the framework is used. Instead of changing the users of the framework to fit the replacement, you can change the replacement to fit the users.

In this situation you can develop a mimic replacement of the framework you want to replace. This mimicking version won’t have all the functionality of the framework. Only the functionality that’s actually used in the application is implemented.

When the mimic is implemented, a switch is made from the old framework classes to the new mimic classes in one of two ways. You can give the mimic exactly the same class names and package names, or namespaces, as the old mimicked framework, in which case you switch by just linking in the mimic instead of the old framework. Or you can give it the same class names but a different package name, in which case you switch by changing the import or using statements. Either way, the framework can be replaced without changing any of the code that uses the framework. The mimicking version must, of course, also do the work that the framework did, possibly by relaying to a new framework or the old framework.

A variant of this is when you’re pleased with the existing framework, but you need an interception point to be able to alter behavior for testing or to introduce alternative implementations. The mimic version can have the option of just forwarding to the old framework, almost in a one-to-one mapping, or of having mocked or stubbed responses.
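The forwarding variant might be sketched as follows. Everything here is an assumption made for illustration: OldFrameworkMailer stands in for a class in the old framework, Mailer is the mimic, and Newsletter is application code. In a real codebase the mimic would carry the framework’s own class name in the same or a parallel package; the two classes have different names here only so that the sketch fits in one file.

```java
import java.util.function.BiFunction;

// Stand-in for a class in the old framework (hypothetical name and behavior).
class OldFrameworkMailer {
    static String send(String recipient, String body) {
        return "sent to " + recipient;
    }
}

// The mimic. Only the one method the application actually uses is implemented.
class Mailer {
    // Interception point: forwards to the old framework by default, but a
    // test can swap in a stubbed response.
    static BiFunction<String, String, String> delegate = OldFrameworkMailer::send;

    static String send(String recipient, String body) {
        return delegate.apply(recipient, body);
    }
}

// Application code: unchanged apart from which Mailer it imports.
class Newsletter {
    String publish(String reader) {
        return Mailer.send(reader, "This week's news");
    }
}
```

A test can then assign Mailer.delegate = (recipient, body) -> "stubbed" and exercise Newsletter without touching the real framework. The mutable static field keeps the sketch short; a less global interception point may be preferable in production code.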

7.3.4. Replace configuration with code

Basically, there are two types of things that end up in configuration files: configuration of behavior and configuration of environment. The former doesn’t change after the code is built, and the latter must be editable before, during, and after the program is executed.

Many software developers, and others too, believe that applications should be extensively configurable from within the application or with configuration files, thus minimizing changes to source code. The reason usually offered is that it’s better to have a flexible application. The real reason is more likely that no one knows how the software will be used, or no one dares make the decision. This usually leads to complex code and extensive configuration files that are sometimes equally complex.

These configuration files often stand in the way of navigating, understanding, and changing a codebase. They also add another layer of complexity when it comes to testing code and testing configuration options that aren’t allowed. This tedious work adds little value, because most of the possible configurations are often left unused.

Because the type of flexibility that developers hope to achieve with behavioral configuration parameters rarely changes at runtime, you can either skip the parameters entirely or build a separate product or distribution for those cases. In the following example of customer-specific code, creating a plug-in architecture is probably better, and the plug-ins can be built and delivered as separate modules:

...
if (customerType == PAYING_CUSTOMER) {
    editor = "FullFledgedEditor";
}
...

If you have a lot of this type of configuration, you might make up time by generating the code from the configuration files and throwing the configuration files away. If you have too many variations, it’s probably an indication that you can’t handle the complexity you’ve created, and the solution needs simplification.
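To make the plug-in idea for the customer-specific code a bit more concrete, here is one possible shape. It’s a sketch under assumptions: the EditorPlugin interface, the two plug-in classes, the Application class, and the "BasicEditor" name are all hypothetical. Only the "FullFledgedEditor" string comes from the snippet.

```java
// All names except the "FullFledgedEditor" string are hypothetical.
interface EditorPlugin {
    String editorName();
}

// Would be built and delivered in the module for paying customers.
class FullFledgedEditorPlugin implements EditorPlugin {
    public String editorName() {
        return "FullFledgedEditor";
    }
}

// Would be built and delivered in the default module.
class BasicEditorPlugin implements EditorPlugin {
    public String editorName() {
        return "BasicEditor";
    }
}

// The application no longer asks what type of customer it's serving.
// It uses whichever plug-in it was assembled with.
class Application {
    private final EditorPlugin editorPlugin;

    Application(EditorPlugin editorPlugin) {
        this.editorPlugin = editorPlugin;
    }

    String editor() {
        return editorPlugin.editorName();
    }
}
```

The customerType check disappears from the application code, and the decision moves to the point where a distribution is assembled. How the plug-in gets discovered is left open here; a mechanism such as java.util.ServiceLoader is one option.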

Configuration parameters that must vary at or around runtime are the parameters that actually need configuration files. When your environment forces you to use configuration files, make sure the part of the code that touches them is as limited as possible. This will greatly simplify any immediate and future refactoring work.
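One way to limit that contact is to read the environment configuration in a single place and hand the rest of the code plain, typed values. The sketch below assumes a hypothetical EnvironmentSettings class and made-up property keys (db.url and server.port) with made-up defaults. Only java.util.Properties is standard.

```java
import java.util.Properties;

// Hypothetical class: the only code that knows about property keys.
final class EnvironmentSettings {
    private final String databaseUrl;
    private final int serverPort;

    private EnvironmentSettings(String databaseUrl, int serverPort) {
        this.databaseUrl = databaseUrl;
        this.serverPort = serverPort;
    }

    // Reads the keys once, applying defaults when a key is missing.
    // Key names and default values are assumptions for this sketch.
    static EnvironmentSettings from(Properties properties) {
        String url = properties.getProperty("db.url", "jdbc:h2:mem:default");
        int port = Integer.parseInt(properties.getProperty("server.port", "8080"));
        return new EnvironmentSettings(url, port);
    }

    String databaseUrl() {
        return databaseUrl;
    }

    int serverPort() {
        return serverPort;
    }
}
```

The rest of the application depends on EnvironmentSettings and never on the file format or the key names, so renaming a key or switching to another configuration source touches one class.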

7.4. Summary

In this chapter, you’ve seen a few patterns for avoiding cluttered graphs:

· Group common prerequisites in a grouping node

· Extract preparatory prerequisites

· Group a repeated set of prerequisites as a templated change

· Graph concurrent goals with common prerequisites together

· Split the graph

· Explore options

You’ve also learned a few patterns for changing code:

· Merge, reorganize, split

· Move code directly to a new package

· Move code gradually to a new package

· Create an API

· Use a temporary shelter

In addition, we showed you some code tricks and tactics that we’ve used for restructuring software. These are just a few patterns and tricks we’ve found useful, and there are certainly more to be discovered. We bet that the more you use the Mikado Method, the more recurring structures and patterns you’ll find.

This is the end of the book, but it’s not the end of your Mikado Method journey. One of the most important things to take away from this book is to try things and see what happens. We hope you’ll try the Mikado Method and see where it takes you. There are still vast amounts to learn about it, and to learn about restructuring code. We hope to see your thoughts on the matter, and to learn from your experience. Sharing is caring. :-)

In case you don’t want to put the book down and start restructuring that big ball of mud just yet, there are still three appendixes you can explore. The first is about technical debt and how to analyze where your problems come from. The second is about things you should think about before starting a Big Change. In the last appendix, we’ll revisit the loan server, but this time in a dynamically typed language, to see how to get feedback when there’s no compiler to lean on.

Try this

· If you’ve ever tried the Mikado Method, did you make any changes to the graph to suit your specific needs? What changes? Why?

· What did you think were the three most interesting patterns? Why?

· Can you think of any occasions when any of the patterns in this chapter would have been useful?

· Have you discovered any patterns of your own?