Total Information Risk Management (2014)

PART 1 Total Information Risk Management Background

OUTLINE

Chapter 1 Data and Information Assets

Chapter 2 Enterprise Information Management

Chapter 3 How Data and Information Create Risk

Chapter 4 Introduction to Enterprise Risk Management

CHAPTER 1 Data and Information Assets

Abstract

This chapter introduces key concepts about data and information assets, including a discussion on characteristics of data and information assets, quality of data and information assets, and their business impact.

Keywords

Data and Information Assets; Characteristics; Information Manufacturing; Data and Information Quality; Business Impact

“I would like to assert that data will be the basis of competitive advantage for any organization that you run.”

—Ginni Rometty, CEO of IBM

What you will learn in this chapter

How data and information have become the most important assets of the 21st century

How to define data and information assets and what their unique characteristics are

Key concepts of data and information quality

The business impact of having low-quality data and information assets

Where Napoleon meets Michael Porter: data and information are assets

Napoleon Bonaparte, the great French emperor, conquered nearly the whole of Europe in the early 19th century before he was finally defeated at Waterloo. According to some sources, one of his famous sayings was “war is 90% information.”

In the Second World War, the Allies were able to decrypt the secret codes generated by the Enigma machine that the Nazi regime used for communicating. This enabled the Allies to end Nazi Germany’s submarine dominance in the world’s oceans.

More recently, in the mid-1980s, Michael Porter, one of the great management thinkers of our generation, observed, together with Victor Millar, that “the information revolution is sweeping through our economy,” thus making information the foremost competitive differentiator.

In a way, in the time between Napoleon and Porter, not much seems to have changed in this regard: information was and is one of the most important factors for competitive advantage. Data and information can help to win wars—both real ones and those we fight in business and our everyday life. And even if you are not competing against another organization, your biggest obstacles might be the constraint of time and resources, thereby limiting the means with which to follow your noble goals. It is no understatement to say that information can make the difference in the fight against poverty, environmental pollution, climate change, and diseases like cancer, malaria, and HIV.

It really does not matter what type of business you are in: information will be key to your success. Your organization could be in the private, public, or nonprofit sector. It may be local, national, or global. It may be a sole trader, a partnership, a company, or a charity. It may be large or small. The permutations are many, but the common denominator is the reliance upon information.

Analyzing large volumes of data can provide us with such valuable insights that it can be a true game changer. And because data and information are so valuable and powerful, we call them assets. This chapter explores what is behind the concept of data and information assets. We start with some recent history, look at data and information themselves, including how they are manufactured, their quality, and how they influence the success of organizations.

How data and information have become the most important assets of the 21st century

When you think of the most significant innovation drivers in the 20th century, one of them is surely information technology (IT). IT has fundamentally changed how organizations do business and has revolutionized both product offerings and processes. Think, for example, of the level of automation in manufacturing today, where computer-controlled robot arms have replaced human work, or the digitalization of services, which has led to the emergence of companies like Amazon, Facebook, and Google. In recent years, we have seen IT assets increasingly turn into a commodity (similar to, for example, electricity and water), to such a degree that they are in many cases relatively insignificant in terms of competitive advantage. However, IT has provided the foundation for the rise of another type of resource, which has become central from a strategic point of view: information.

There are two major trends that brought us to the Information Age and made information a central resource in the 21st century. The first is the massive abundance of information due to the rising capabilities and decreasing costs of IT over the last few decades. Today, information can be automatically captured with technologies like sensors and radio-frequency identification (RFID), efficiently processed using large computer systems, and accessed at any time and place via the Internet. The retailer Wal-Mart alone processes one million customer transactions every hour, feeding databases estimated at 2.5 petabytes (The Economist, 2010), which can be used, for instance, to analyze customer behaviors in ways that were not possible before.

Of course, this is not exclusive to Wal-Mart. Large IT vendors such as IBM, having recognized the potential that organizations can leverage from all this data, have strategically aligned themselves for a future of Big Data (see, for instance, http://www-01.ibm.com/software/data/bigdata/). The degree of automation that is possible when it comes to managing large quantities of data and information is constantly advancing. Today, we are living in the middle of what has been defined as the Information Age. Nearly every aspect of private and corporate life can be, and often is, captured, processed, and exchanged digitally using personal computers, mobile devices, cameras, microphones and other types of sensors, radio-frequency identification, the Internet, e-commerce applications, social networking, emails, enterprise applications, and corporate and public databases. Potentially, every piece of information, wherever it may reside, could be accessed from any place within seconds. It can be automatically analyzed and combined to provide higher-level insights. Big Data is a resource that we not only have to protect, but also have to utilize in a way that is most beneficial for society.

The second major trend is the globalization of all economies, which puts organizations under constantly increasing pressure to adapt, innovate, and speed up their processes to keep up with their competitors. Information, using the enormous capabilities of today’s IT, can help companies to make better-informed timely decisions and innovate the business. In a world where almost everything can be outsourced to a cheaper supplier, information (and knowledge) are often the only remaining effective differentiators when no other traditional market barriers exist.

“Is data the new oil?” (see Figure 1.1) is a question posed by Perry Rotella in an article for Forbes.com, a leading business magazine (Rotella, 2012). He was referring to a comparison first expressed by Clive Humby at the ANA Senior Marketer’s Summit 2006 at Kellogg School of Management, and to Michael Palmer’s blog post, in which he wrote: “Data is just like crude. It’s valuable, but if unrefined it cannot really be used. It has to be changed into gas, plastic, chemicals, etc., to create a valuable entity that drives profitable activity; so must data be broken down, analyzed for it to have value” (Palmer, 2006). Jer Thorp responded in a blog article for Harvard Business Review, in which he points out a very valid distinction between data and oil: “Information is the ultimate renewable resource. Any kind of data reserve that exists has not been lying in wait beneath the surface; data are being created, in vast quantities, every day. Finding value from data is much more a process of cultivation than it is one of extraction or refinement” (Thorp, 2012). To be more precise, we will take a closer look at what data and information assets actually are and the characteristics they possess.

FIGURE 1.1 Is data the new oil?

What are data and information assets?

When data and information are important for the success of an organization, data and information become assets for the organization. Data and information assets can be in the form of structured, semi-structured, or unstructured data that is physically stored not only in computer systems, but also in paper records, drawings, photographs, etc. A data and information asset might even be something as simple as a regular phone call.

IMPORTANT

When data and information are important for the success of an organization, data and information become assets for the organization.

IMPORTANT

Data and information assets can be in the form of structured, semi-structured, or unstructured data; they can also be stored on mediums other than in a database, for example, on paper or even not stored at all (e.g., information given in a phone call).

Structured (electronic) data is data stored in tables in relational or other types of databases. Table 1.1 provides a summary description of different data types. Master data is probably one of the most valuable types of structured data; it contains more permanent information about important things such as customers (e.g., address data), suppliers (e.g., an evaluation of the suppliers), physical assets (e.g., pipelines, machines, facilities), products, and product parts (e.g., their materials and subparts).

Table 1.1

Data Types

| Data Type | Description |
| --- | --- |
| Master data | Master data is key business information about customers, suppliers, products, etc., and remains relatively static. |
| Transactional data | Transactional data describes events happening at a particular time and refers usually to one or more master or reference data elements. This data type is very volatile. |
| Historical data | Historical data is data about past transactions that often need to be retained for compliance purposes. Historical data may be saved in an obsolete format or in computer (legacy) systems, which can make them difficult to access and process in an organization. Historical data will also include master and transactional data. |
| Temporary data | When applications require additional memory in addition to the virtual memory available, temporary data is saved. |
| Reference data | Reference data is classification schemas and sets of values (e.g., country codes) provided by bodies external to the organization. It may also include internal classification schema and sets of values. |
| Business metadata | Business metadata is characterized by a lot of free text information describing business terms, key performance indicators (KPIs), etc. It can also contain business rules. |
| Technical metadata | This is structured data that describes objects such as tables, attributes, etc.; the database structure and technical rules are defined in this data type. |
| Operational metadata | Operational metadata describes operational characteristics happening in IT systems, such as the number of rows inserted by other software applications. |

A large volume of data is automatically collected in transactions about events that occur in the organization. Transactional data records the status of organizational transactions, such as product sales; this data type can therefore grow to become extremely large and is highly volatile. Reference data is classification schemas and sets of values (e.g., country codes) that are referred to by other data types; it is important for making other data (e.g., master data) interoperable. Metadata is data about data (e.g., field names, value types, and field definitions). Much of the data today is historical data about past transactions that often needs to be stored for compliance reasons, but that can also be used for data analysis.

IMPORTANT

Structured (electronic) data is data stored in tables in relational or other types of databases (e.g., SQL database), while semi-structured data is stored in a less well-defined form (e.g., XML file). Unstructured data is the other extreme and has no predefined structure at all (e.g., JPEG picture file).
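The distinction can be made concrete with a minimal sketch in Python. The customer name, city, table layout, and note text below are made up for illustration; the point is only how the same fact is reached in each form.

```python
import sqlite3
import xml.etree.ElementTree as ET

# Structured: a fixed schema in a relational table (in-memory SQLite).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customer (id INTEGER PRIMARY KEY, name TEXT, city TEXT)")
conn.execute(
    "INSERT INTO customer (id, name, city) VALUES (?, ?, ?)",
    (1, "A. Smith", "Springfield"),
)
city_from_table = conn.execute(
    "SELECT city FROM customer WHERE id = ?", (1,)
).fetchone()[0]
conn.close()

# Semi-structured: tags give some structure, but no schema is enforced,
# so an unexpected <note> element is accepted without complaint.
xml_doc = (
    "<customer id='1'><name>A. Smith</name>"
    "<city>Springfield</city><note>prefers email</note></customer>"
)
city_from_xml = ET.fromstring(xml_doc).findtext("city")

# Unstructured: free text with no predefined fields; the city can only
# be found by searching or interpreting the content itself.
note = "Met A. Smith last week; she has moved to Springfield."
city_mentioned = "Springfield" in note

print(city_from_table, city_from_xml, city_mentioned)
```

In the first two cases the city is addressed by name (a column or a tag); in the third, the program has to guess where in the text the fact might be.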

Semi-structured data can be, for example, XML or HTML files; this data type can predominantly be found on the Internet. At the other extreme is unstructured data, for instance, in the form of word-processing documents, emails, and presentations saved on personal computers, mobile devices, and network storage systems, but also in hardcopy documents. Besides information from IT systems and documents, information also comes from communications among people who share their knowledge and observations, in both formal and informal ways. This information is not readily accessible because it is tacit, hidden in human brains. (The discipline known as knowledge management seeks to make such tacit information more tangible, that is, explicit, or at least to make knowledge-sharing mechanisms more effective.)

We do not make a distinction between data and information in this book. Of course, there is a difference between the two terms. The problem is that it is very hard to draw a clear line between data and information, because the academic literature does not agree on a definition of what information actually is. A theoretical discussion of the most common definitions of data and information is presented in the following box.

ATTENTION

Data and information assets are not distinguished from each other in this book.

THEORETICAL EXCURSION: DATA VERSUS INFORMATION

There seems to be a general agreement that data can be defined as a symbol, sign, or raw fact (Mingers, 2006). Defining information is yet more difficult as Shannon, the thought-leader of information theory, points out: “The word ‘information’ has been given different meanings by various writers in the general field of information theory …. It is hardly to be expected that a single concept of information would satisfactorily account for the numerous possible applications of this general field” (Shannon, 1993).

Thus far, in the information systems (IS) discipline, there are two different definitions that dominate:

1. Information is “data that has been processed in some way to make it useful” (Mingers, 1996). This definition of information implies that the concept of data is objective, which means “it has an existence and structure in itself, independent of an observer” and that information “can be objectively defined relatively to a particular task or decision” (Mingers, 1996).

2. “Information equals data plus meaning” (in a specific context) (Checkland and Scholes, 1990). This definition implies that information is subjective—dependent on the values, beliefs, and expectations of the observer—and as a consequence there can be different information created from the same piece of data.

A survey of 39 introductory IS texts indicates that the first definition seems to have wider acceptance (Lewis, 1993).

The unique characteristics of data and information assets

Data and information assets carry unique characteristics that make them different from traditional assets (Eaton and Bawden, 1991; Cleveland, 1985). Bear in mind, though, that there are exceptions to every rule. Still, these observations give us more insight into the characteristics of data and information assets.

One problem with data and information assets is that they are not easily quantifiable when compared to traditional goods. There are many approaches to valuing data and information assets (e.g., see Glazer, 1993); however, there are different opinions about which approaches are the best. Applying the same approach a second or subsequent time often leads to inconsistent valuations. As a consequence, data and information assets are not typically considered in financial accounting.

IMPORTANT

It is often difficult to measure the financial value of data and information assets consistently.

Data and information assets are usually transportable at ultra-high speed for a low cost. In a fraction of a second, using a computer in London, one can access information from Wikipedia from a server that is based in San Francisco. Transporting a car from London to San Francisco would take much more effort, cost more, and take more time. In a way, this is a new development and has only been possible on a large scale since the advent of broadband Internet. The way our economies work today would not be imaginable without this development. Only the ultra-fast speed of communication allows an organization to become a globally integrated organization. Nobody is surprised anymore when telephoning a call center that the person on the other end of the line is based in a different country or on a different continent.

IMPORTANT

Data and information assets can be transported at ultra-high speed for a low cost.

Data and information assets can often be reused over and over again after initial consumption without necessarily losing their value, with a few exceptions. When one uses a brick to build a house, one cannot use the same brick to build another house. The particular brick has already been used. Data and information assets behave very differently: they are sharable. They do not lose value per se when they are used. Let’s take the example of a weather forecast. Just because somebody else has seen the weather forecast before you, it does not diminish its value to you. Nevertheless, there are situations in which one has an advantage when one’s opponents do not have the same information. For instance, information that guides an investment might be worthless if everyone else has access to the same information. Unlike physical goods, information usually has several life cycles, as it can be combined with other resources and there is no clear point of obsolescence. Data and information assets can still decay, as they can get out of date and become less valuable.

IMPORTANT

Data and information assets are reusable.

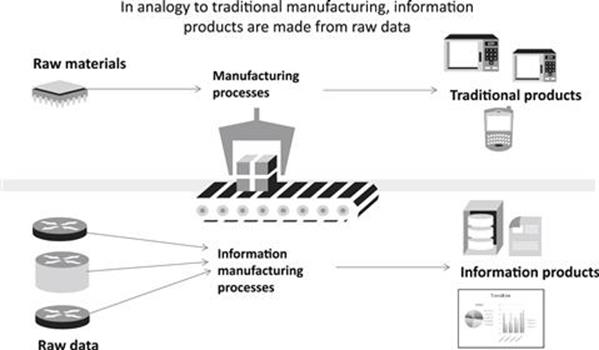

The analogy to traditional manufacturing: how raw data is transformed into information products

The idea of an information manufacturing system was pioneered by Ballou, Wang, Pazer, and Tayi (Ballou et al., 1998). As in a traditional manufacturing system, in which manufacturing processes transform raw materials into products, an information manufacturing system transforms raw data (e.g., records in databases) into information products (e.g., a quarterly report) that are consumed by information users (e.g., shareholders). An illustration is shown in Figure 1.2. The quality of the information product is dependent on both the quality of the raw data and the quality of the information manufacturing processes. An information manufacturing process can be automated and performed by a computer, for example, when age is calculated based on a consumer’s birth date. It can also be performed manually by a human, for instance, when writing a report that scrutinizes the financial results of a company. Like physical economic goods, data and information assets can be a commodity, which is interchangeable with other commodities of the same type, or a good that is diversified (Glazer, 1993). Many concepts from traditional manufacturing, like quality management, can be transferred to information production using this analogy.

IMPORTANT

Similar to transformation processes in a traditional manufacturing system, information management processes can transform raw data into information products.
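The birth-date example can be sketched as a tiny automated manufacturing step. This is only an illustration in Python; the record type, the function names, and the sample customers are hypothetical and not taken from Ballou et al.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class CustomerRecord:
    """Raw data: one record as it might sit in a customer database."""
    name: str
    birth_date: date


def age_on(birth_date: date, today: date) -> int:
    """Automated manufacturing step: derive age in full years."""
    # Subtract one year if this year's birthday has not happened yet.
    had_birthday = (today.month, today.day) >= (birth_date.month, birth_date.day)
    return today.year - birth_date.year - (0 if had_birthday else 1)


def manufacture_report(records: list[CustomerRecord], today: date) -> str:
    """Assemble a small information product (a report) from raw records."""
    return "\n".join(
        f"{r.name}: {age_on(r.birth_date, today)} years" for r in records
    )


raw = [
    CustomerRecord("A. Smith", date(1980, 6, 15)),
    CustomerRecord("B. Jones", date(1995, 12, 1)),
]
report = manufacture_report(raw, today=date(2014, 6, 14))
print(report)
```

Both influences on product quality are visible here: a wrong birth date in the raw record yields a wrong age even if the calculation is flawless, and a flawed calculation (for example, one that ignores whether the birthday has passed) yields a wrong age even from correct raw data.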

FIGURE 1.2 A comparison of traditional manufacturing to information manufacturing.

A number of practitioners and academics have advocated that data and information should be managed as a product similar to the ways in which physical products are managed. In particular, Wang and colleagues set four principles to succeed in treating information as a product instead of a by-product of a system (Wang et al., 1998). First, it is necessary to understand the needs of the customers, the information users. Second, information production has to be managed as a process with adequate quality controls, similar to those seen in traditional manufacturing. Third, information needs to be managed during its whole life cycle. And fourth, a new organizational role is required, the information product manager, who is responsible for the supplies of raw information, the production of information products, the safe storage and stewardship of data, and for the information consumers.

Life cycle of data and information assets

Considering data and information as a product, one can draw a parallel between the manufacture of a product and the manufacture of information. Like a product in its early life, information needs to be created, organized, and stored. To make use of information for analysis and decision making, it needs to be distributed to the relevant storage systems and retrieved from these storage systems. Processing may also occur before retrieval. Finally, at the end of the life of the information, it can be archived, if required, or deleted.

IMPORTANT

The life-cycle stages of data and information assets can be summarized as:

Capture/creation

Organization

Storage

Processing

Distribution

Retrieval

Usage

Archiving

Disposal

Quality of data and information assets

A new discipline emerged in the 1990s dealing explicitly with data and information quality management (e.g., see Wang and Strong, 1996; English, 1999). It has its roots in the concepts of quality management, most prominently quality control, quality assurance, and total quality management, pioneered by the quality gurus W. E. Deming (1981), J. M. Juran (1988), and P. B. Crosby (1979). Quality management revolutionized the manufacturing industry in Japan and helped Japan’s economy to its astonishing growth during the second half of the 20th century. Western manufacturing companies subsequently copied the quality management principles to catch up with the new competition emanating from Japan. The data and information quality discipline provides many valuable new concepts and techniques that can be used to manage the quality of data and information assets. Some of the most important concepts are discussed in the following sections.

What is data and information quality?

Not all data and information assets are of the same quality. In fact, most organizations suffer from information quality problems, so that their data and information cannot support activities in the way they should. Data and information quality is defined as “the fitness for use of data and information” (Wang and Strong, 1996). This means that it is a user-centric concept and strongly depends on the context of usage. A data and information asset might be of high enough quality for one task, but the same data and information asset can be of low quality for a different task. For instance, an address with a spelling error might prevent a parcel from being delivered to a customer and is therefore of poor quality for this task, while it can be good enough for the marketing department to perform a customer segmentation analysis.

IMPORTANT

Data and information quality is defined as the fitness for use of data and information. It is strongly dependent on the user and the context of usage. The terms data quality and information quality are usually used interchangeably.
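The address example can be sketched in code to show that fitness for use is judged per task, not per record. The following Python sketch is an assumption-laden illustration: the street list, the city, the postal code, and both fitness rules are invented for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Address:
    street: str
    city: str
    postal_code: str


# Hypothetical reference list of valid street names per city.
KNOWN_STREETS = {"Springfield": {"Main Street", "Oak Avenue"}}


def fit_for_delivery(addr: Address) -> bool:
    """Assumed rule: delivery needs an exactly matching street name."""
    return addr.street in KNOWN_STREETS.get(addr.city, set())


def fit_for_segmentation(addr: Address) -> bool:
    """Assumed rule: regional segmentation only needs city and postal code."""
    return bool(addr.city.strip()) and bool(addr.postal_code.strip())


# The same record, with a spelling error in the street name.
misspelled = Address(street="Mian Street", city="Springfield", postal_code="12345")

delivery_ok = fit_for_delivery(misspelled)
segmentation_ok = fit_for_segmentation(misspelled)
print(delivery_ok, segmentation_ok)
```

The record fails the delivery rule and passes the segmentation rule, so a single quality verdict for the record would be misleading without naming the task.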

In the data and information quality literature, the terms data quality and information quality are usually used synonymously and interchangeably, which as explained earlier, is also done in this book. It is noted though that “there is a tendency to use data quality to refer to technical issues and information quality to refer to nontechnical issues” (Madnick et al., 2009).

Different dimensions of data and information quality

Data and information quality are multidimensional concepts and go beyond accuracy, as illustrated in Figure 1.3. In the following, we will give some examples of information quality dimensions and how they can be defined.

IMPORTANT

Data and information quality are multidimensional concepts.

EXAMPLE DEFINITIONS OF DATA AND INFORMATION QUALITY DIMENSIONS

Accuracy: The extent to which data and information are correct, that is, the values in a database correspond to the real-world values (counterexample: the address data in the customer database does not correspond to the right address in the real world).

Completeness: The extent to which data and information have all the required parts of an entity’s description, for instance, the required attributes of a record are not null (counterexample: the zip code of a customer address is missing).

Consistency: The extent to which data and information have a consistent unit of measurement (counterexample: some lengths of steel tubes are provided in centimeters and others in inches).

Timeliness: The extent to which data and information are sufficiently up to date for a task (counterexample: the address used to be correct but the customer has moved).

Interpretability: The extent to which data and information are sufficiently understandable for a task by the information user (counterexample: the user manual is written in French and most users cannot understand it).
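Some of these dimensions lend themselves to simple computed scores. The Python sketch below uses invented order records and treats each score as the share of records that satisfy a check; the field names, the expected unit, and the 180-day age limit are assumptions for illustration. Accuracy and interpretability are left out because they need a real-world reference or a human judgment that the data alone cannot supply.

```python
from datetime import date

# Hypothetical order records for steel tubes; None marks a missing value.
records = [
    {"id": 1, "length": 120.0, "unit": "cm", "zip": "12345", "updated": date(2014, 1, 10)},
    {"id": 2, "length": 47.0, "unit": "in", "zip": "54321", "updated": date(2013, 11, 2)},
    {"id": 3, "length": 95.0, "unit": "cm", "zip": None, "updated": date(2010, 5, 30)},
    {"id": 4, "length": 200.0, "unit": "cm", "zip": "67890", "updated": date(2013, 8, 21)},
]


def share(flags):
    """Fraction of True values in an iterable of booleans."""
    flags = list(flags)
    return sum(flags) / len(flags)


def completeness(rows, required):
    """Share of records in which every required attribute is present."""
    return share(all(r.get(f) is not None for f in required) for r in rows)


def consistency(rows, expected_unit):
    """Share of records that use the expected unit of measurement."""
    return share(r["unit"] == expected_unit for r in rows)


def timeliness(rows, today, max_age_days):
    """Share of records updated within the allowed age."""
    return share((today - r["updated"]).days <= max_age_days for r in rows)


scores = {
    "completeness": completeness(records, required=("length", "unit", "zip")),
    "consistency": consistency(records, expected_unit="cm"),
    "timeliness": timeliness(records, today=date(2014, 3, 1), max_age_days=180),
}
print(scores)
```

Note that the thresholds are task decisions, not properties of the data: a different task might accept records up to a year old and would then report a different timeliness score for the same records.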

FIGURE 1.3 Data and information quality dimensions.

Conceptual frameworks for information quality give a systematic set of criteria for evaluation of information, help to analyze and solve information quality problems, and can be a basis for measurement and proactive management of information quality (Eppler and Wittig, 2000). Some examples of data and information quality frameworks are shown in the following box.

THEORETICAL EXCURSION: DATA AND INFORMATION QUALITY DIMENSIONS AND FRAMEWORKS

Many different frameworks have been proposed that provide different sets, categorizations, and definitions of information quality dimensions. Some examples are presented here.

In 1995, Goodhue investigated user evaluations of information systems and identified accuracy, reliability, currency, detail level, compatibility, meaning, and presentation as important information quality dimensions (Goodhue, 1995).

Perhaps the most prominent information quality framework was proposed by Wang and Strong in 1996; it defines four categories of information quality dimensions (Wang and Strong, 1996):

1. The intrinsic information quality category implies that information has quality in its own right and consists of the dimensions accuracy, precision, reliability, and freedom from bias.

2. The contextual information quality category highlights the requirement that information quality must be considered within the context of the task and includes the information quality dimensions importance, relevance, usefulness, informativeness, content, sufficiency, completeness, currency, and timeliness.

3. The representational information quality category concentrates on representational aspects with the dimensions understandability, readability, clarity, format, appearance, conciseness, uniqueness, and comparability.

4. The accessibility information quality category focuses on the ability of IT systems to store and access information and contains the dimensions usability, quantitativeness, and convenience of access.

In 1996, Wand and Wang also developed an ontological approach to information quality, using the dimensions correctness, unambiguity, completeness, and meaningfulness. They claimed that dimensions could be assessed by comparing the values in a system to the true real-world values they represent (Wand and Wang, 1996).

Finally, Bovee and colleagues developed the AI1RI2 framework, which comprises the information quality dimensions accessibility, interpretability, relevance, and integrity (Bovee et al., 2003). They strongly criticized existing inconsistencies in Wang and Strong’s 1996 framework.

Many more frameworks are proposed in the literature but there does not seem to be any agreement on a fixed set of dimensions (Batini et al., 2009). As there is no standard set of information quality dimensions, Lee and colleagues argued that it is essential for every organization to choose the most relevant information quality dimensions for their organization depending on the tasks that have to be performed (Lee et al., 2006).

Sources of poor data and information quality

Much data and information is in the wrong format, inaccurate, incomplete, poorly represented, out of date, or affected by other defects. The reasons for poor data and information quality are diverse:

Organizations have a large amount of legacy data that contains flaws.

Many systems are not very well integrated and do not have the functionality that is actually required.

Data is not maintained and kept up to date.

Information collection is often seen as a side activity with not much relevance compared to other parts of the business processes in the organization.

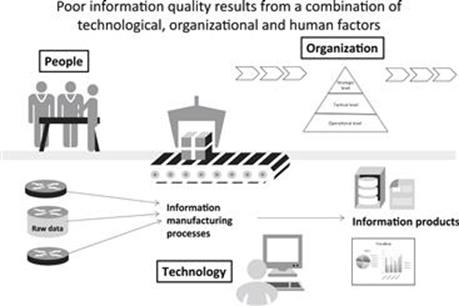

The sources for poor data and information quality can be categorized into technological, organizational, and human factors (Figure 1.4).

FIGURE 1.4 The sources of poor data and information quality.

Assessment of data and information quality

Data and information quality can be measured in two different ways. Subjective measurement is based on users’ expectations. For instance, a survey of all users of an IT system might reveal that data in the IT system is often incorrect and not fit for use for the tasks that the information users perform. Objective measurement directly examines the data and information assets, for instance, by using data profiling algorithms (see Chapter 12). Information quality metrics are often calculated automatically; information users have to provide rules that define what fitness for use means, giving due regard to both the considered task and the given metric.

ACTION TIP

Data and information quality can be measured in two different ways: (1) subjectively, based on users’ expectations, for example, by using a questionnaire, or (2) objectively, using defined information quality metrics.
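The two kinds of measurement can be contrasted in a few lines of Python. Everything in this sketch is assumed for illustration: the survey scale, the ratings, the five-digit zip rule, and the required pass rate would all be supplied by the information users for their own task.

```python
from statistics import mean

# Subjective: hypothetical questionnaire answers on a scale from
# 1 (not fit for use) to 5 (fully fit for use).
survey_ratings = [2, 3, 2, 4, 1]
subjective_score = mean(survey_ratings)


# Objective: a user-supplied rule defines what "fit for use" means.
def valid_zip(record):
    """Assumed rule: a zip code is valid if it consists of five digits."""
    zip_code = record.get("zip") or ""
    return len(zip_code) == 5 and zip_code.isdigit()


customers = [
    {"zip": "12345"},
    {"zip": "1234"},
    {"zip": None},
    {"zip": "98765"},
    {"zip": "ABCDE"},
]


def pass_rate(rows, rule):
    """Metric: share of records that satisfy the rule."""
    return sum(1 for r in rows if rule(r)) / len(rows)


objective_score = pass_rate(customers, valid_zip)

# Threshold chosen by the information users for the task at hand.
REQUIRED_PASS_RATE = 0.95
fit_for_use = objective_score >= REQUIRED_PASS_RATE

print(subjective_score, objective_score, fit_for_use)
```

The metric itself is calculated automatically, but both the rule and the threshold encode a human judgment about the task, which is why objective measurement still depends on input from information users.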

ATTENTION

Data and information quality improvement has to address the root causes of the problems to be effective. There is a wide range of potential improvement activities, which are strongly dependent on the nature of the root causes.

Improvement of data and information quality

Improvement of data and information quality has to address the root causes of poor data and information quality. Therefore, this can cover a wide range of options, for instance, the redesign of data collection processes, the introduction of new IT systems, the enrichment of data with data from external sources, and the change of organizational culture and data responsibilities.

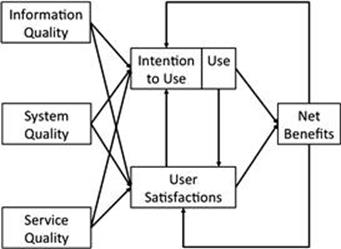

How data and information assets influence organizational success

One of the most important models in the information systems discipline is the Delone and McLean model for information system success (Delone and McLean, 1992, 2003), shown in Figure 1.5. It provides insight into the key factors that explain why some organizations have better working information systems than others. Not surprisingly, information quality has been identified as one of the few key determinants of the business success of information systems.

FIGURE 1.5 Updated Delone and McLean IS success model. (Source: Delone and McLean, 2003.)

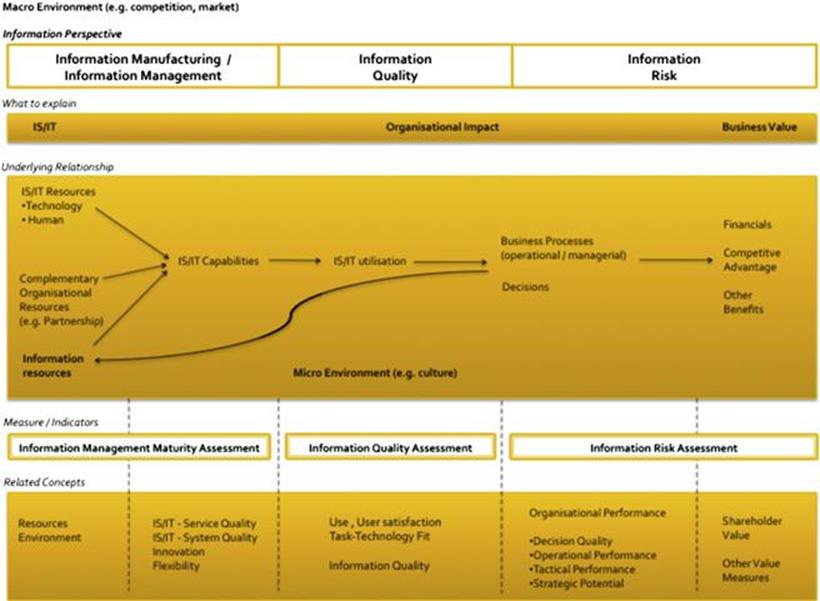

A few years ago, we conducted research in conjunction with Dr. Markus Helfert, Lecturer at Dublin City University, and Dr. Mouzhi Ge, Adjunct Assistant Professor at Universitaet der Bundeswehr Munich, to understand, more explicitly, the role of information in the IS/IT business value chain (Borek et al., 2012). The model links IS/IT business value and information quality literature and shows how different elements of the value chain are interlinked from an information perspective. The developed model, which is illustrated in Figure 1.6, explains the IS/IT business value chain as follows. Information is manufactured and managed with the help of IS/IT capabilities that use a variety of IS/IT assets and complementary organizational assets. The quality of the resulting information products determines if IS/IT utilization can meet its purpose. It influences the business processes, the decision making, and ultimately the organizational performance. When information is manufactured and managed poorly, it leads to poor information quality, which then further creates information risk, which can be both financial and nonfinancial.

FIGURE 1.6 An information-oriented framework for relating IT assets and business value. (Source: Borek et al., 2012.)

Note that the relationship between information quality and the potential for information risk is not deterministic. This means that even when information is of poor quality, the effect on the business of using that poor-quality information will not necessarily be the same each time. There is therefore inherent uncertainty in the relationship between poor-quality data and information assets and the impact they have on business outcomes.
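This non-deterministic relationship can be made concrete with a small expected-value calculation. The sketch below is illustrative only: the function names, the figures, and the assumption that each use of poor-quality information independently causes a problem with a fixed probability are assumptions made for this example, not part of the model in Figure 1.6.

```python
import random


def expected_annual_impact(uses_per_year: int, p_problem: float,
                           cost_per_problem: float) -> float:
    """Expected yearly cost when each use of poor-quality information
    causes a problem with probability p_problem."""
    return uses_per_year * p_problem * cost_per_problem


def simulate_annual_impact(uses_per_year: int, p_problem: float,
                           cost_per_problem: float, rng: random.Random) -> float:
    """One simulated year: each use either causes a problem or does not."""
    problems = sum(1 for _ in range(uses_per_year) if rng.random() < p_problem)
    return problems * cost_per_problem


# Hypothetical figures: 1,000 uses a year, 2% go wrong, 500 per incident.
expected = expected_annual_impact(1000, 0.02, 500.0)

# Individual years scatter around the expected value.
rng = random.Random(42)
simulated_years = [simulate_annual_impact(1000, 0.02, 500.0, rng) for _ in range(5)]
print(f"expected impact per year: {expected:.0f}")
print("simulated years:", simulated_years)
```

The expected value summarizes the long-run average, while the simulated years show that the realized impact in any single year can differ from it, which is the uncertainty described above.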

How data and information quality influence decision making

Many studies provide evidence of the clear link between the level of data and information quality and organizational success. For instance, Slone finds empirical evidence for all four categories of the information quality dimensions of Wang and Strong’s information quality framework—information soundness, dependability, usefulness, and usability—to have a significant effect on organizational outcomes (which he divides into strategic and transactional benefits) (Slone, 2006). Even more studies have shown the tremendous effect that data and information quality has on decision making (see the following box).

THEORETICAL EXCURSION: THE IMPACT OF DATA AND INFORMATION QUALITY ON DECISION MAKING

Findings in the literature provide evidence that information quality has a strong influence upon decision quality. Some of the works are described in more detail in the following commentary.

O’Reilly III discovered in a study with decision makers how perceived quality and accessibility influence the use of different information sources (e.g., written documents, internal group members, external sources) in tasks of varying complexity and uncertainty (O’Reilly III, 1982). He found that accessibility is the main driver for the use of an information source. Moreover, perceived information quality is also a critical factor for use of an information source; this is more important than the type of task or information source and personal attributes of the decision maker.

Keller and Staelin investigated the effects of quality and quantity of information on decision effectiveness of consumers in a three-phase job-choice experiment using second-year MBA students (Keller and Staelin, 1987). They conducted an experiment with four different levels of information quantity and four different levels of information quality. Their results indicate that increasing information quantity impairs decision effectiveness and, in contrast, increasing information quality improves decision effectiveness.

Ahituv and colleagues analyzed the effects of time pressure and information completeness on decision making in an experiment with military commanders with different levels of experience (mid-level field versus top strategic commanders) using a simulation of the Israeli Air Force (IAF) (Ahituv et al., 1998). The results show that complete information improves performance, yet less advanced commanders (as opposed to top strategic ones) did not improve their performance when presented with complete information under time pressure. Moreover, time pressure normally, but not always, had a negative effect on performance. Finally, higher-qualified subjects (top commanders) usually made fewer changes in previous decisions than less senior field commanders.

Chengalur-Smith and colleagues made an exploratory analysis of the impact of data quality information on decision making (Chengalur-Smith et al., 1999). They found that subjects often ignored the information about data quality in their decision making. Subjects tended to use it in the simple scenario when it was given on an interval scale, as compared to having no data quality information, and seemed not to use it in the complex scenario, probably because of information overload.

Raghunathan explored the relationship between information quality, decision-maker quality, and decision quality using a theoretical and simulation approach (Raghunathan, 1999). He concluded that information quality can have a positive effect on decision quality when a decision maker has knowledge about the relationship among problem variables; otherwise, the effect can also be negative.

Fisher and colleagues investigated the effect of providing metadata about the quality of information used during decision making (Fisher et al., 2003). Their results indicate that the usefulness of metadata about information quality is positively correlated with the amount of experience of the decision maker.

Jung and colleagues conducted a study to explore the impact of representational data quality (which comprises the information quality dimensions interpretability, easy to understand, concise, and consistent representation) on decision effectiveness in a laboratory experiment with two tasks that have different levels of complexity (Jung et al., 2005). The results strongly support the hypothesis that a higher representational data quality improves the decision-making performance regarding problem-solving accuracy and time.

Ge and Helfert presented a framework to measure the relationship between information quality and decision quality. They simulated the influence of two information quality dimensions, completeness and accuracy, in a simple yes-or-no decision scenario, in which they measured decision quality as the ratio of the number of right decisions to the total number of decisions. Their results suggest that poor information quality can mislead decision makers and can be even worse than no information at all (Ge and Helfert, 2006). Building on this work, Ge and Helfert found statistically significant results showing that improving information quality in the intrinsic category (e.g., accuracy) and in the contextual category (e.g., completeness) enhances decision quality (Ge and Helfert, 2008).

Altogether, there is strong evidence that data sets with poor information quality can substantially affect the outcomes of decision making.
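The decision quality measure used by Ge and Helfert, the ratio of right decisions to total decisions, can be sketched in a few lines of Python. The toy simulation around it is a hypothetical setup and not a reproduction of their experiment: it assumes a decision maker who simply follows a yes-or-no signal that matches the truth with a given probability (`accuracy`).

```python
import random


def decision_quality(decisions: list[bool], correct: list[bool]) -> float:
    """Ratio of right decisions to total decisions."""
    right = sum(1 for d, c in zip(decisions, correct) if d == c)
    return right / len(decisions)


def simulate(accuracy: float, n: int, rng: random.Random) -> float:
    """Toy yes/no scenario: the decision maker follows the information
    received, which matches the truth with probability `accuracy`."""
    truth = [rng.random() < 0.5 for _ in range(n)]
    info = [t if rng.random() < accuracy else not t for t in truth]
    return decision_quality(info, truth)


rng = random.Random(0)
for accuracy in (1.0, 0.8, 0.5, 0.2):
    quality = simulate(accuracy, 10_000, rng)
    print(f"accuracy={accuracy:.1f} -> decision quality={quality:.2f}")
```

In this toy setup, a decision maker with no information who guesses at random would be right about half the time, so information that is accurate less than half the time yields lower decision quality than guessing. This echoes, without reproducing, the finding that poor information can be worse than none.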

Organizational costs and impacts of data and information quality

Some groundwork in laying out how poor data and information quality impacts the business has been established by three pioneers in information quality: Larry English, Tom Redman, and David Loshin.

Larry English identified three types of costs—process failure costs, information scrap and rework costs, and lost and missed opportunity costs—as the major costs that are caused by poor information quality (English, 1999). Process failure costs are those that occur when a process does not perform properly; this encompasses irrecoverable costs (e.g., costs of mailing a catalog twice to the same person), liability and exposure costs, and recovery costs of unhappy customers. Information scrap (marking as an error) and rework (cleansing) costs occur when information is defective and can include a number of costs such as redundant data.

Tom Redman identified a list of impacts of information quality on the organization at three different organizational levels: operational, tactical, and strategic (see Table 1.2; Redman, 1998). Some of the impacts are tangible (e.g., reduced customer satisfaction, increased cost, ineffective decision making, reduced ability to make and execute strategy), whereas others are intangible (e.g., lower employee morale, organizational mistrust, difficulties in aligning the enterprise, issues of ownership/politics).

Table 1.2

Classification of Information Quality Business Impacts

| Operational Impacts | Tactical Impacts | Strategic Impacts |
| --- | --- | --- |
| Lowered customer satisfaction | Poorer decision making; poorer decisions that take longer to make | More difficult to set strategy |
| Increased cost: 8–12% of revenue in the few, carefully studied cases; for service organizations, 40–60% of expenses | More difficult to implement data warehouses | More difficult to execute strategy |
| Lowered employee satisfaction | More difficult to reengineer | Contribute to issues of data ownership |
| | Increased organizational mistrust | Compromise ability to align organizations |
| | | Divert management attention |

Source: Redman, 1998.

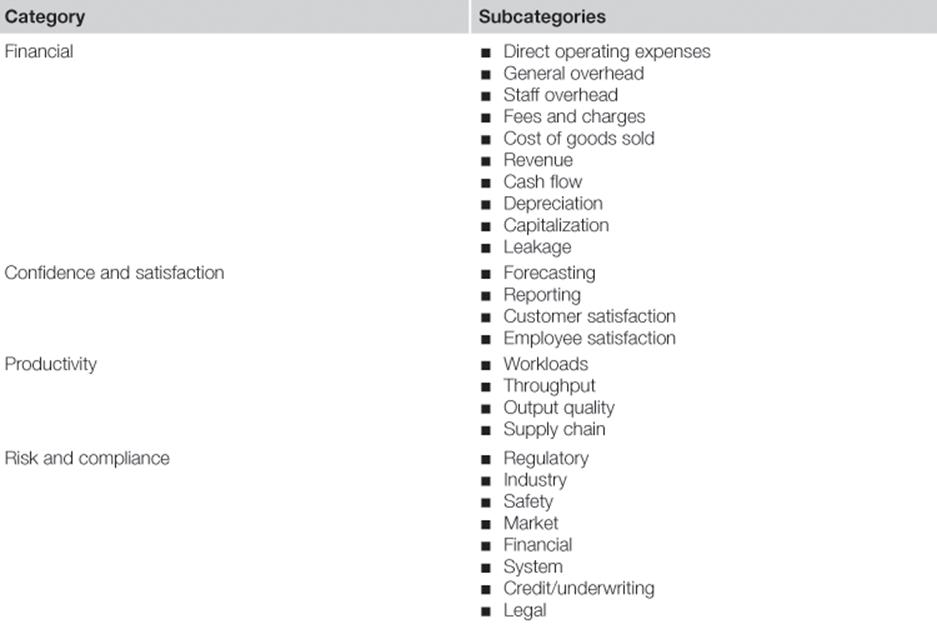

A list of impacts is also given by David Loshin (2010) in Table 1.3. He identifies four major categories of impact: financial impact, confidence and satisfaction, productivity, and risk and compliance. Each category has a number of subcategories, which are shown in the table, and Loshin's book gives many examples for each subcategory. He emphasizes that the list is not exhaustive and provides strategies for organizations to refine it iteratively so that it better fits their application domain and industry.

Table 1.3

Classification of Information Quality Business Impacts

Source: Loshin, 2010.

Why we need better methods to understand and measure the impact of data and information quality

Many practitioners complain that they struggle to understand and measure the impact of poor data and information quality. They therefore have trouble building believable business cases for investments in data and information asset quality. When data and information assets are of poor quality, it is likely that something will go wrong, but there is not an impact every time; the impact occurs only with a certain likelihood. This makes the impact even harder to measure. Tom Redman emphasized in his keynote speech at the International Conference on Information Quality in November 2011 that assessing the business impact of information quality is still one of the big unsolved research problems for the data and information quality discipline. The assessment of the business impact of poor data and information quality is currently only a side element of data and information quality methodologies (many do not assess it at all). As we are living in a world of constrained resources, we believe that this leaves the dominant data and information quality improvement approaches with the wrong emphasis. The methodology in this book is an attempt to shift information quality management toward being more value driven and better aligned with business goals and strategy. We are convinced that understanding where data and information assets create the biggest pain points, or where they can provide the biggest opportunities, is a cornerstone of a more value-driven enterprise information management program in your organization.

References

1. Ahituv N, Igbaria M, Sella A. The Effects of Time Pressure and Completeness of Information on Decision Making. Journal of Management Information Systems. 1998;15(2):153–172.

2. Ballou D, Wang R, Pazer H, Kumar G. Modeling Information Manufacturing Systems to Determine Information Product Quality. Management Science. 1998;44(4):462–484.

3. Batini C, Cappiello C, Francalanci C, Maurino A. Methodologies for Data Quality Assessment and Improvement. ACM Computing Surveys (CSUR). 2009;41.

4. Borek A, Helfert M, Ge M, Parlikad AK. IS/IT Resources and Business Value: Operationalization of an Information-Oriented Framework. Enterprise Information Systems 13th International Conference, ICEIS 2011, Beijing, China, June 8–11, 2011, Revised Selected Papers. Vol. 102. Lecture Notes in Business Information Processing Berlin: Springer; 2012.

5. Bovee M, Srivastava RP, Mak B. A Conceptual Framework and Belief-function Approach to Assessing Overall Information Quality. International Journal of Intelligent Systems. 2003;18(1):51–74.

6. Checkland P, Scholes J. Soft Systems Methodology in Action. New York: John Wiley and Sons; 1990.

7. Chengalur-Smith IN, Ballou DP, Pazer HL. The Impact of Data Quality Information on Decision Making: An Exploratory Analysis. IEEE Transactions on Knowledge and Data Engineering. 1999;11(6):853–864.

8. Cleveland H. The Twilight of Hierarchy: Speculations on the Global Information Society. Public Administration Review. 1985;45(1):185–195.

9. Crosby PB. Quality Is Free. New York: McGraw-Hill; 1979.

10. Deming WE. Management of Statistical Techniques for Quality and Productivity. New York: New York University, Graduate School of Business; 1981.

11. Delone WH, McLean ER. Information Systems Success: The Quest for the Dependent Variable. Information Systems Research. 1992;3(1):60–95.

12. Delone WH, McLean ER. The Delone and McLean Model of Information Systems Success: A Ten-year Update. Journal of Management Information Systems. 2003;19(4):9–30.

13. Eaton JJ, Bawden D. What Kind of Resource Is Information? International Journal of Information Management. 1991;11(2):156–165.

14. Economist. Data, Data Everywhere—A Special Report on Managing Information. available at http://www.economist.com/node/15557443; 2010.

15. Eppler MJ, Wittig D. Conceptualizing Information Quality: A Review of Information Quality Frameworks from the Last Ten Years. Proceedings of the 5th International Conference on Information Quality (ICIQ 2000), October 20–22, 2000. Cambridge, MA: MIT; 2000.

16. English LP. Improving Data Warehouse and Business Information Quality: Methods for Reducing Costs and Increasing Profits. New York: John Wiley and Sons; 1999.

17. Fisher CW, Chengalur-Smith IS, Ballou DP. The Impact of Experience and Time on the Use of Data Quality Information in Decision Making. Information Systems Research. 2003;14(2):170–188.

18. Ge M, Helfert M. A Framework to Assess Decision Quality Using Information Quality Dimensions. Proceedings of the 11th International Conference on Information Quality—ICIQ. 2006;6:10–12.

19. Ge M, Helfert M. Effects of Information Quality on Inventory Management. International Journal of Information Quality. 2008;2(2):177–191.

20. Glazer R. Measuring the Value of Information: The Information-intensive Organization. IBM Systems Journal. 1993;32(1):99–110.

21. Goodhue DL. Understanding User Evaluations of Information Systems. Management Science. 1995;41(12):1827–1844.

22. Jung W, Olfman L, Ryan T, Park YT. An Experimental Study of the Effects of Representational Data Quality on Decision Performance. AMCIS 2005 Proceedings. 2005;298.

23. Juran JM. Quality Control Handbook. 4th ed. New York: McGraw-Hill; 1988.

24. Keller KL, Staelin R. Effects of Quality and Quantity of Information on Decision Effectiveness. The Journal of Consumer Research. 1987;14(2):200–213.

25. Lee YW, Pipino LL, Funk JD, Wang RY. Journey to Data Quality. Cambridge, MA: MIT Press; 2006.

26. Lewis PJ. Linking Soft Systems Methodology with Data-focused Information Systems Development. Information Systems Journal. 1993;3(3):169–186.

27. Loshin D. The Practitioner’s Guide to Data Quality Improvement. San Francisco: Morgan Kaufmann; 2010.

28. Madnick SE, Wang RY, Lee YW, Zhu H. Overview and Framework for Data and Information Quality Research. Journal of Data and Information Quality (JDIQ). 2009;1(1):1–22.

29. Mingers J. Realizing Systems Thinking: Knowledge and Action in Management Science. Berlin: Springer; 2006.

30. Mingers J. An Evaluation of Theories of Information with Regard to the Semantic and Pragmatic Aspects of Information Systems. Systemic Practice and Action Research. 1996;9(3):187–209.

31. O’Reilly III CA. Variations in Decision Makers’ Use of Information Sources: The Impact of Quality and Accessibility of Information. Academy of Management Journal. 1982;25(4):756–771.

32. Palmer M. Data Is the New Oil. 2006; available at http://ana.blogs.com/maestros/2006/11/data_is_the_new.html; 2006.

33. Raghunathan S. Impact of Information Quality and Decision-maker Quality on Decision Quality: A Theoretical Model and Simulation Analysis. Decision Support Systems. 1999;26(4):275–286.

34. Redman TC. The Impact of Poor Data Quality on the Typical Enterprise. Communications of the ACM. 1998;41(2):79–82.

35. Rotella P. Is Data the New Oil? 2012; available at http://www.forbes.com/sites/perryrotella/2012/04/02/is-data-the-new-oil/; 2012.

36. Shannon CE. The Lattice Theory of Information. In: Sloane NJA, Wyner AD, eds. Claude Elwood Shannon: Collected Papers. New York: IEEE Press, Institute of Electrical and Electronics Engineers; 1993;180.

37. Slone JP. Information Quality Strategy: An Empirical Investigation of the Relationship Between Information Quality Improvements and Organizational Outcomes. Doctoral Thesis Minneapolis: Capella University; 2006.

38. Thorp J. Big Data Is Not the New Oil. 2012; available at http://blogs.hbr.org/cs/2012/11/data_humans_and_the_new_oil.html; 2012.

39. Wand Y, Wang RY. Anchoring Data Quality Dimensions in Ontological Foundations. Communications of the ACM. 1996;39(11):95.

40. Wang RY, Lee YW, Pipino LL, Strong DM. Manage Your Information as a Product. Sloan Management Review. 1998;39:95–105.

41. Wang RY, Strong DM. Beyond Accuracy: What Data Quality Means to Data Consumers. Journal of Management Information Systems. 1996;12(4):33.