Threat Modeling: Designing for Security (2014)

Part IV. Threat Modeling in Technologies and Tricky Areas

Chapter 15. Human Factors and Usability

Usable security matters because people are an important element in the security of any system. If you don't consider how people will use your system, the odds are against them using it well. The security usability community is learning to model people, the sorts of decisions you need them to make, and the sorts of scenarios in which they act. The toolbox for addressing these issues is small but growing, and the tools are not yet as prescriptive as you might like. As such, this chapter gives you (in particular the security expert) deeper background than some other chapters, and the best advice available.

Because humans are different from other elements of security, and because the models, threats, and means of addressing them are different, this chapter covers challenges of modeling human factors in terms of security, and the techniques available to address them.

The chapter starts with models of people, as they motivate everything in this chapter. It continues with models of software that are useful for human factors work. These two models interact with threat elicitation techniques, which are covered next and are similar to those you learned about in Part II of this book. Threats in this chapter include those in which an attacker tries to convince a person to take action, and threats in which the computer needs to defer to a person for a decision. After threat elicitation, you'll learn tools and techniques for addressing human factors issues, as well as some user interface principles for addressing those threats, and advice for designing configuration, authentication, and warnings. These are the human-factors equivalents of the defensive tactics and technologies covered in Chapter 8, “Defensive Tactics and Technologies.” From there, you'll learn about testing for human factors issues, mirroring what you learned in Chapter 10, “Validating That Threats are Addressed.” The chapter closes with my perspective on usability and ceremonies.

Threat modeling for software or systems generally involves keeping two models in mind: a model of the software, and a model of the threats. Considering human factors adds a third model to the mix: a model of people. Ironically, that additional cognitive load can present a real usability challenge for those threat modeling human factors.

A Few Brief Notes on Terminology

§ Usability refers to the subset of human factors work designed to help people accomplish their tasks. Usability work includes the creation of user interfaces. User interfaces, along with their discoverability, suitability for purpose, and the success or failure of the people using those interfaces, make up one or more user experiences.

§ Human factors covers how technology needs to help people under attack, how to craft mental models, and how to test the designs you're building.

§ Ceremony refers to the idea of a protocol, extended to include its “human nodes.” For now, consider a ceremony to be similar to a user experience, but from the perspective of a protocol analyst. Ceremonies are explained in depth later in the chapter.

§ People are at the center of this chapter. You'll see them referred to as users in user testing, user interface, and similar terms of art, and sometimes as humans, to align with sources.

Models of People

This chapter opens with models of people because they are at the center of the work you will sometimes need to do when addressing security where people are in the loop. This is a new type of model which parallels models of software and threats.

We all know some people, know how they behave, what they want. Aren't these informal models enough? Unfortunately, the answer seems to be no. (Otherwise, security wouldn't have a usability problem.) There are two reasons to create more structured models. First, people who work in software usually construct informal models of people that appear good enough for day-to-day use (but are often full of contempt for normal folks). They aren't robust enough to lead us to good decisions. Second, and more important, our implicit models of people are rarely focused on how people make security decisions.

As a profession, we need better models showing how people arrive at a security task, their mental models of the security tasks they're being asked to do, or the security-related skills or knowledge we can expect various types of people to have. It might be possible to build all that into a single model, or we might have several models which are designed to work together. Additionally, we'd like a set of models that help those who are not experts in usable security.

Even if we had those models, attaining a consistent and repeatable automated analysis of the human beings within a ceremony is unlikely. (There's an argument that it is probably equivalent to developing artificial intelligence: If a computer could perfectly predict how a human being will respond to a set of stimuli, then it could exhibit the same responses. If it could exhibit the same responses, then it could pass the Turing test.) However, recall that all models are wrong, and some models are useful. You don't need a perfect model to help you predict design issues. All of the models in this chapter are on the less structured end of the spectrum and are most useful in a structured brainstorm or expert consideration of a ceremony.

Applying Behaviorist Models of People

Does the name Pavlov ring a bell? If so, one explanation is that your repeated exposure to a stimulus has conditioned a response. The stimulus is stories about Pavlov's famous dog experiments. The behaviorists believe that all observable behaviors are learned responses to stimuli. The behaviorist model has obvious and well-trod limitations, but it would not have had such a good run if it didn't at least have some explanatory or predictive power. Some of the ways in which behavioral models of people can apply to ceremonies are explored in this section.

Conditioning and Habituation

People learn from their environment. If their environment presents them with frequently repeated stimuli, they'll learn ways to respond to those stimuli. For example, if you put a username/password prompt in front of people, they're likely to fill it out. (That's a conditioned response, as pointed out by Chris Karlof and colleagues [Karlof, 2009].) It is hard for people to evoke deep, careful thought each time they encounter the stimulus, because such effort would usually be wasted. Closely related to this idea of a conditioned response is a habituation response such as automatically clicking a button in a dialog you have repeatedly seen (e.g., “Some files might be dangerous”).

It's hard to argue that such behavior is even wrong. Aesop's tale about the boy who cried wolf ends with the boy not getting help when a real wolf appears. People learn to ignore repeated, false warnings, for good reasons. If thinking carefully about the dialog mentioned in the example takes five seconds and a person sees it 30 times per day, that's two and a half minutes per day, or roughly 900 minutes (about 15 hours) per year spent thinking about that one dialog. Suppose someone were successfully attacked once per year by a malicious file that exploits a bug not yet patched on the computer, and their anti-virus wouldn't catch it. Will the cost of cleanup exceed 15 hours? If not, then the person is rationally ignoring the advice to spend those five seconds (Herley, 2009).
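The arithmetic behind this argument can be checked with a short sketch. The inputs are the ones in the paragraph above; the function names and the eight-hour cleanup figure are illustrative assumptions, and the comparison makes the simplifying assumption that careful reading would prevent every attack. Unrounded, the inputs come to about 2.5 minutes per day and a little over 15 hours per year.

```python
def annual_attention_hours(seconds_per_view: float,
                           views_per_day: int,
                           days_per_year: int = 365) -> float:
    """Hours per year spent reading one recurring dialog carefully."""
    return seconds_per_view * views_per_day * days_per_year / 3600


def attending_is_worthwhile(attention_hours: float,
                            attacks_per_year: float,
                            cleanup_hours_per_attack: float) -> bool:
    """True if the expected cleanup time exceeds the time spent paying attention.

    Simplifying assumption: careful attention prevents every attack.
    """
    expected_cleanup_hours = attacks_per_year * cleanup_hours_per_attack
    return expected_cleanup_hours > attention_hours


hours = annual_attention_hours(seconds_per_view=5, views_per_day=30)
print(f"{hours:.1f} hours per year spent on one dialog")

# Hypothetical cleanup cost of 8 hours for one successful attack per year.
print(attending_is_worthwhile(hours, attacks_per_year=1, cleanup_hours_per_attack=8))
```

Under these assumptions, a person whose cleanup takes a working day comes out ahead by ignoring the dialog, which is the point Herley makes.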

Conditioning and habituation can be addressed by reducing the frequency of the stimulus. For example, the SmartScreen feature in Windows and Internet Explorer checks whether a file is from a leading publisher or very frequently downloaded and simply asks the person what to do with the file, rather than issuing a warning. Conditioning can also be addressed by ensuring that the ceremony conditions people to take steps for their security (see “Conditioned-Safe Ceremonies” later in this chapter for more information).
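As a rough illustration of reducing the frequency of a stimulus, the sketch below reserves the warning for downloads that lack any reputation signal. It is not how SmartScreen is implemented; the field names, the threshold, and the sample downloads are all hypothetical.

```python
from dataclasses import dataclass

POPULARITY_THRESHOLD = 100_000  # arbitrary illustrative cutoff, not a real product setting


@dataclass(frozen=True)
class Download:
    name: str
    publisher_is_well_known: bool  # hypothetical reputation signal
    download_count: int            # hypothetical popularity signal


def choose_prompt(download: Download) -> str:
    """Return 'ask' for a neutral prompt or 'warn' for a security warning."""
    if download.publisher_is_well_known or download.download_count >= POPULARITY_THRESHOLD:
        return "ask"
    return "warn"


downloads = [
    Download("popular-editor.exe", True, 2_500_000),
    Download("common-tool.exe", False, 400_000),
    Download("rare-utility.exe", False, 12),
]
warnings_shown = sum(choose_prompt(d) == "warn" for d in downloads)
print(f"{warnings_shown} of {len(downloads)} downloads trigger a warning")
```

The design choice is that a warning which appears rarely is less likely to be dismissed by habit than one which appears on every download.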

Wicked Environments

Educators have a concept of “kind” learning environments. A kind learning environment includes appropriate challenges and immediate feedback. These environments can be contrasted with wicked environments, which make learning hard. Jay Jacobs has brought the concept of wicked environments to information security. Quoting his description:

[Feedback is] the prime discriminator between a kind and wicked environment and consequently the quality of our intuition. A kind environment will offer unambiguous, timely, and accurate feedback. For example, most sports are a kind environment. When a tennis ball is struck, the feedback on performance is immediate and unambiguous. If the ball hits the net, it was aimed too low, etc. When the golf ball hooks off into the woods, the performance feedback to the golfer is obvious and immediate. However, if we focus on the feedback within information security decisions, we see feedback that is not timely, extremely ambiguous, and often misperceived or inaccurate. Years may pass between an information security decision and any evidence that the decision was poor. When information security does fail, proper attribution to the decision(s) is unlikely, and the correct lessons may not be learned, if lessons are learned at all. Because of this untimely, ambiguous, and inaccurate feedback, decision makers do not have the opportunity to learn from the environment in which the risk-based decisions are being made. It is safe to say that these decisions are being made in a wicked environment.

—Jay Jacobs, “A Call to Arms” (ISSA Journal, 2011)

As a simple heuristic, you can ask, is this design wicked or kind? What can you do to provide better feedback or make the feedback more timely or actionable?

Cognitive Science Models of People

Cognitive science takes an empirical approach to behavior, and attempts to build models from observation of how people really behave. In this section, you'll learn about a variety of models of people that are in the cognitive science mold. They include the following:

§ Carl Ellison's “ceremonies” model of people

§ A model based on the work of behavioral economist Daniel Kahneman

§ A model derived from the work of safety expert James Reason

§ A framework explicitly for reasoning about humans in computer security by CMU professor Lorrie Cranor

§ A model derived from work on humans in security by UCL professor Angela Sasse

These models overlap in various ways. Each is, after all, a model of people. In addition, each of these can inform how you threat model the human aspects of a system you're building.

Ellison's Ceremonies Model

A ceremony is a protocol extended to include the people at each end. Security architect and consultant Carl Ellison points out that we, as a community, can learn from post-mortems of real ceremony errors, or perform tests on candidate ceremonies. He describes a model of “installing” programs on “human nodes” by means of a manual, training, or a contract that mandates certain user behavior. Models that require people to make decisions using the identity of the source require that the system effectively communicates about identity to the human node, using a meaningful ID—a concept covered in depth in Chapter 14, “Accounts and Identity.”

Ellison defers the creation of a full model to experimental psychologists or cognitive scientists; and in that mode, the following sections summarize a few models that reflect some of the more insightful cognitive scientists whose work applies to security.

The Kahneman Model

This model is an attempt to extract some of the wisdom in Thinking, Fast and Slow (Farrar, Straus and Giroux, 2011). That excellent book is by Daniel Kahneman, the Nobel Prize-winning founder of behavioral economics. It is chock-full of ways in which people behave that do not resemble a computer, and anyone preparing to threat model for human factors will probably find it repays a close reading. Following are some of the concepts presented in Kahneman's book and ideas about how they can be applied to threat modeling:

§ WYSIATI, or What You See Is All There Is: This concept appears so frequently that Kahneman abbreviates it. The concept is important to threat modeling because it underscores the fact that the information currently presented to a person carries great weight in terms of any decisions they're being asked to make. For example, if a person is told they must contact their bank right now to address a problem, they are perhaps unlikely to remember that their bank never answers the phone after 4:00 P.M., never mind on a Saturday. Similarly, if a person doesn't see a security indicator (such as a lock icon in a browser), they're unlikely to notice it's not there. If an attacker presents cleverly designed advice “for security,” it might crowd out other advice the person has already received.

§ System 1 versus System 2: System 1 refers to the fast part of your brain, which does things like detect danger, add 2+3, drive, or play chess (if you're a chess master). System 2 refers to the part of your brain that makes rational, considered decisions. System 1 influences our decision-making more than most people realize, or are willing to admit, perhaps including clicking away annoying dialogs. If clicking away dialogs is really system 1 activity, a system design that relies on system 2 when a dialog appears requires you to work hard to ensure that system 2 kicks in.

§ Anchoring: Anchoring effects are absolutely fascinating. If you ask people to write down the last two digits of their SSN, people with low values for those digits will subsequently estimate unrelated numbers to be lower, whereas those with high values will estimate higher. Similarly, the sales technique of saying something like “this is a $500 camera, but just today it's $300” makes you think you're getting a great deal, even if your budget a moment ago was $200. Perhaps anchoring effects crowd out wisdom when people are presented with scams or are being conned into behaving in ways that they otherwise would not.

§ Satisficing: Satisfice is a rotten word but it'll do, absent a better one to describe the reality that people attempt to make decisions that are good enough, because the cost of a great decision is too high, or because “decision-making energy” has been exhausted. For example, most people's savings and investment choices are based on a subset of all possible investments, because evaluating options is time-consuming. In security, perhaps people allow system 1 to assess something, make a call regarding whether it's sufficient, and move on.

Reason's Many Models

Professor James Reason studies the ways in which accidents happen in large systems. His work has led to the creation of a plethora of models of human error. It could be highly productive to take those models and create prescriptive advice for threat modeling.

For example, his model of “strong habit intrusions” describes how the rules, or habits, that help us get through the day can be triggered inappropriately by “environment[s] that contain elements similar or identical to those in highly familiar circumstances. (For example, ‘As I approached the turnstile on my way out of the library, I pulled out my wallet as if to pay—although I knew no money was required.’)” These strong habit intrusions might be usable as a threat elicitation heuristic.

Some of the other models he has presented include the following:

§ A Generic Error-Modeling System, or GEMS (covering errors, lapses, and slips)

§ An intention-centered model

§ A model driven by the ways errors are detected

§ An action model that includes omissions, intrusions, repetitions, wrong objects, mis-orderings, mis-timings, and blends

§ A model based on the context of the errors

All but the first model are covered in depth in The Human Contribution: Unsafe Acts, Accidents and Heroic Recoveries (Ashgate Publishing, 2008).

The Cranor Model

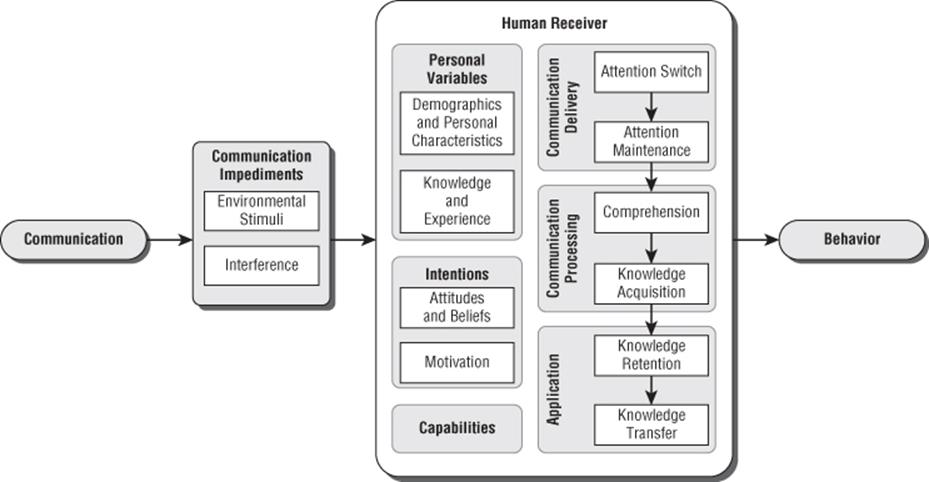

In contrast to the creators of the previous models, CMU Professor Lorrie Faith Cranor focuses specifically on security and usability. She has created “A Framework for Reasoning About the Human in the Loop” (Cranor, 2008). Like Ellison's ceremonies paper, her paper is easy and worthwhile reading; this section simply summarizes her framework, which is shown in Figure 15.1.

Figure 15.1 Cranor's human-in-the-loop framework

Much like Ellison, Cranor's model puts a person at the center of a model in which communication of various forms may influence behavior. However, “it is not intended as a precise model of human information processing, but rather it is a conceptual framework that can be used much like a checklist to systemically analyze the human role in secure systems.” The components of her model are:

§ Communications: The model considers five types of communication: warnings, notices, status indicators, training, and policy. These are roughly ordered by how active or intrusive the communications are, and they are generally self-explanatory. One noteworthy element of training that Cranor calls out is that effective training (by definition) must lead people to recognize a situation where their training should be applied. There is an interesting relationship to what Reason calls rule misapplication. Rule misapplication may be more common when training is not regularly reinforced or when the situations in which the training should be triggered are infrequent.

§ Communications impediments: These include any interference that prevents the communication from being received, and environmental stimuli that may distract the person from a communication that they have received, leading them to perform a different action. It is important to look for both behavior under attack and under non-attack, and ensure that communications reliably occur at the right time and only the right time.

§ The human receiver: This has six different components, and the “relationships between the various components are intentionally vague.” However, the left-hand column relates to the person, while the right-hand column roughly reflects the chain of events for handling the message.

§ Personal Variables: Includes the person's background, education, demographics, knowledge, and experience, each of which may play into how a person reacts to a message

§ Intentions: Includes attitudes, beliefs, and motivations

§ Capabilities: Even a person who gets a message, wants to act on it, and knows how to act on it may not be able to. For example, he or she might not have a smartcard or a smartcard reader.

§ Communication Delivery: This is related to the person's attention being switched to the communication and maintained there.

§ Communication Processing: Concerns whether the person understands the message and has the knowledge to act on it.

§ Application: Does the person understand, from formal or informal training, how to respond to the situation, and can they transfer that knowledge to the specific facts at hand?
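Because the framework is meant to be used much like a checklist, the six human-receiver components above can be written down as one. The following is a minimal sketch: the question wording paraphrases the list above, and the helper name and the sample smartcard answers are hypothetical.

```python
# One review question per human-receiver component, paraphrased from the list above.
HUMAN_RECEIVER_CHECKLIST = {
    "Personal variables": "How might background, education, demographics, "
                          "knowledge, or experience change the reaction to the message?",
    "Intentions": "Do the person's attitudes, beliefs, and motivations "
                  "lead them to act on the message?",
    "Capabilities": "Is the person actually able to act "
                    "(for example, do they have the required hardware)?",
    "Communication delivery": "Will the person's attention switch to the "
                              "message and stay there?",
    "Communication processing": "Will the person understand the message and "
                                "know how to act on it?",
    "Application": "Can the person transfer their training to the "
                   "specific facts at hand?",
}


def open_questions(answers: dict[str, str]) -> list[str]:
    """Return the checklist components that have no recorded answer yet."""
    return [component for component in HUMAN_RECEIVER_CHECKLIST
            if not answers.get(component, "").strip()]


# Hypothetical partial review of a smartcard login prompt.
answers = {
    "Capabilities": "Some laptops ship without a smartcard reader.",
    "Communication delivery": "The prompt is modal, so it will be seen.",
}
for component in open_questions(answers):
    print(f"{component}: {HUMAN_RECEIVER_CHECKLIST[component]}")
```

Listing the unanswered components makes it harder to skip the ones that are uncomfortable to answer, though it does not replace the user studies the framework calls for.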

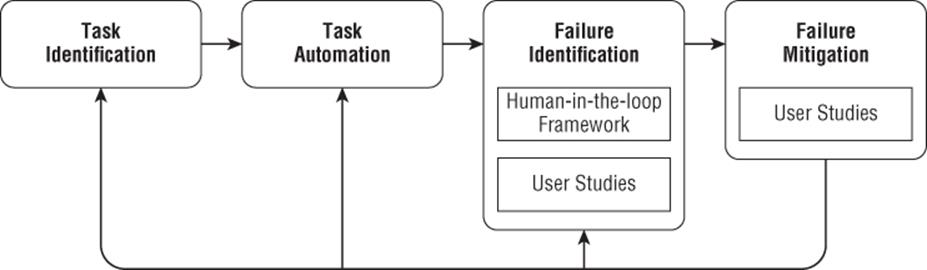

Cranor presents a model that describes how to use her framework, shown in Figure 15.2. It consists of task identification, task automation, failure identification in two ways (her framework and user studies), and mitigating those failures, again using user studies to ensure that the mitigations are functional. Cranor also usefully presents a set of questions to ask and factors to consider for each element of the model.

Figure 15.2 Cranor's human threat identification and mitigation process

Sasse's Model

UCL Professor Angela Sasse also has a model of failures situated in organizational systems, including management and policies, preconditions, productive activities, and defenses that surround the decision-makers (meaning any person, rather than only executives). It is fundamentally a compassionate model of people, based on the reality that most people generally want to be secure, and that their deviations from security are understandable if you take the time to “walk a mile in their shoes.”

The most important threat modeling take-away from Sasse is her approach to those who make decisions that conflict with the suggestions of security experts. Oftentimes, security experts bemoan that “in a contest between being secure and dancing pigs, dancing pigs will win every time” or “stupid users will click anything you put in front of them.” In contrast, she seeks to understand those decisions and the logic that underlies them. Such an understanding is essential to useful models of people.

Heuristic Models of People

The models of people described in this section are less associated with a single researcher, or otherwise don't quite fit into the way behaviorist or cognitive science models of people are presented, but they have been repeatedly and effectively used in studying how people's observed behavior differs from the expectations embedded in systems.

Goal Orientation

People want to get to the task at hand. If a security process stands between them and their goal, then they are likely to view that process as a hindrance. That means they may read a warning dialog as if it says “Do you want to get the job done?” and click OK. Because of this, usability practitioners will often state that “security is a secondary task.”

There are two main ways to address issues found in goal orientation. The first is to make the obvious path secure. If that's possible, it's by far the best course. The second is to think about when the security information is provided in the ceremony. There are two options: Go early or go late. Going early means providing the information needed as the person is thinking about what to do, rather than as they're committed to a path. For example, an operating system could display an application downloaded from the Internet with a spiky icon overlay, rather than (or in addition to) displaying a warning after someone has double-clicked it. Going late means delaying the decision as long as possible, so that the person has a chance to back out. This approach is used in the gold bar pattern, whereby the secure option is taken by default, and insecure options are made available in a gold bar along the top of the window. For a document opened in a sandbox, the secure default is sufficient to review the document, but not to edit or print it. A nontargeted attack is unlikely to convince anyone to exit the sandbox and expose themselves to more risk. See “User Interface Tools and Techniques” later in this chapter for more information about the gold bar pattern.

Confirmation Bias

Confirmation bias is the tendency people have to look for information that will confirm a pre-existing mental model. For example, believers in astrology will remember the one time their horoscope just nailed what was about to happen, and discount the other 364 days when it was vague or just plain wrong. Similarly, if someone believes they urgently need to contact their bank, they may be less likely to notice slight oddities in the bank's login page.

Scientists and engineers have learned that looking for ways to disprove an idea is much more powerful than looking for evidence that confirms it. Unfortunately, looking for counter-evidence seems to be at odds with how people tend to work. Recalling the discussion of system 1 and system 2 in the section on Kahneman's work, there may be elements of system 1 versus system 2 at play here. System 1 may see a data point and collect it (feeding confirmation bias) or discard it as anomalous, preventing you from seeing the problem.

Addressing confirmation bias is tricky in general, and may be trickier in security circumstances. It might be possible to condition or train people to look for evidence of evil, and use that to undercut confirmation bias.

Is This Section Full of Confirmation Bias?

It is easy to find examples of these heuristics once you watch for them, which raises a worry that they are easy, rather than good, explanations. Worse, in putting them here, I may be subject to confirmation bias. Perhaps in reality, some other factor is at work, and using one of these will be misleading. This risk is associated with many of the heuristics in this section, and a poor understanding of the cause may lead to selecting a poor solution. This should not be taken as an argument against using them, but as a caution and a reminder of the importance of doing usability testing.

Compliance Budget

The term compliance budget appears in the work of Angela Sasse (introduced above), who has performed anthropological studies of British office workers. Her team noticed that after repeated exposure to the same security policies or tasks they were supposed to perform, the workers would respond differently (Beautement, 2009). During interviews about security compliance, the team noted that the workers were effectively allocating a “budget” to perform security tasks. The workers may or may not have understood the tasks, but they would spend time and energy on them until their budget was exhausted, and then move on to other work tasks. When security requests were considered not as platonic tasks to be accomplished, but as real tasks situated against other tasks, worker behavior was fairly consistent.

Therefore, as you design systems, track how many security demands are being made in each of your scenarios. There is no “right” amount, but fewer is better.
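One lightweight way to track those demands is to tag each step of a scenario and count the tagged ones. The sketch below assumes a hand-maintained list of steps; the scenarios, the steps, and the function name are all hypothetical.

```python
from collections import Counter

# Hypothetical scenario steps: (scenario, step, is_security_demand)
STEPS = [
    ("first run", "create account", False),
    ("first run", "choose password", True),
    ("first run", "confirm email address", True),
    ("first run", "accept certificate warning", True),
    ("share file", "pick recipient", False),
    ("share file", "confirm permission change", True),
]


def security_demands_per_scenario(steps):
    """Count the steps in each scenario that ask the person for a security decision."""
    counts = Counter()
    for scenario, _step, is_demand in steps:
        counts[scenario] += int(is_demand)
    return dict(counts)


for scenario, count in security_demands_per_scenario(STEPS).items():
    print(f"{scenario}: {count} security demand(s)")
```

The counts carry no threshold of their own; they are useful mainly for comparing design alternatives and for noticing when a scenario's total creeps upward.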

Optimistic Assumptions

Many protocols impose optimistic assumptions about the capabilities of their human nodes. For example, people are sometimes expected to know where a browser's lock icon should be. (Top or bottom, left or right? In the main display area, obviously.) The lock cleverly disappears when it's not needed, which we optimistically assume doesn't make this question harder.

So how do you use this in threat modeling? As you go through the process, you should be keeping a list of assumptions you find yourself making (see Chapter 7, “Processing and Managing Threats”). For each optimistic assumption, look for a weaker assumption, or a way to buttress the assumption.

Models of Visual Perception

In work released as this book was being completed, Devdatta Akhawe and colleagues argue that limitations of human perception make UI security difficult to achieve. They present a number of attacks, including destabilizing perception of the pointer, attacking peripheral vision, attacking motor adaptations of the brain, mislocalization related to fast-moving objects, and abusing visual cues (Akhawe, 2013). Studying models of visual perception will probably be a fruitful area of research over the next few years.

Models of Software Scenarios

There are (at least) two ways to model a scenario: a software-centered model and an attack-centered model. In this section, you'll learn about modeling both for threat modeling human factors. The software-centered models are somewhat easier, insofar as you know what sorts of circumstances will invoke them. The software models include scenario models of warnings, authentications, and configurations, and models that use diagrams to represent the software. The attack-centered model is somewhat broader. That is, if an attacker wants your software to do something, where can they force it?

Modeling the Software

You can model the goals and affordances of the software that a particular feature is intended to offer. (An affordance is whatever element of the user interface communicates the intended use.) One useful model of the interactions you'll offer includes warnings, authentications, and configuration (Reeder, 2008). In that model, warnings are presented in the hope of deterring dangerous behavior, and include warning dialogs, prods, and notifications. Authentications include the person authenticating to a computer either locally or via a network, and the remote system authenticating to the person. Configuration includes all those ways in which the person makes a security decision regarding the configuration of a computer or system.

These are not always distinct in practice. A single dialog will commonly both warn and ask for configuration or consent. Considering them separately helps you focus on the unique aspects of each, and ensure that you're providing the information that a person needs. In addition, the same advice applies to the warning regardless of the further content, so it applies whether the dialog also involves authentication, configuration, or consent. The types of information that you'll need to present so that the warning is clear don't change because of the additional context or decisions.

Warnings

A warning is a message from one party to another that some action carries risk. Generally, such messages are intended as a risk transference from one party to another, and they may have varying degrees of legal or moral effectiveness in actually transferring risk. Good warning design is covered later in this chapter in the section “Explicit Warnings.”

Good warning design clearly identifies the potential problem, how likely it is, and what can be done to avoid it. Good warnings avoid “the boy who cried wolf” syndrome.

Authentication

People are astoundingly good at recognizing other people. It's probably an evolved trait. However, it turns out to be very difficult to authenticate people to machines or machines to people. Authentication is the process of proving that you are who you've said you are in a manner that's sufficient for a given context—authenticating that you're a student at State University is generally less important than authenticating that you're the missing heir to a massive fortune. Authentications are generally one party authenticating to another, such as a client authenticating itself to a server. They are sometimes bi-directional, and the authentication system in use in each direction may differ. For example, a web browser might connect to a bank and validate a digital certificate to start SSL. This is the server being authenticated via PKI to a client. The client will then authenticate via a password to the bank. There are also authentications that involve more parties.

The best advice on authentication (in both directions) is to treat it as a ceremony and pay close attention to where information comes from, how it could be spoofed, and how it will be validated in the real world.

Configuration

There are many ways to group configuration choices that can be made, including configurations made in advance, and configurations to allow or deny a specific activity. For each, it is helpful to consider the person's mental model, and the difficulty of testing their work. Useful techniques might include asking “how others might see the state of the system,” whether “X can do Y,” or having the person “describe the changes just made.” Configuring a system for security is hard. The person performing the configuration needs to instruct the computer with a degree of precision that's required in few other areas of life, and constructing tests to ensure that it's been done right is often challenging for both technical and nontechnical people. Configuration tasks can be broken out into:

§ Configuration such as altering a setting

§ Consenting to some set of terms

§ Authorization or permission settings

§ Verification of settings or claims (such as the state of a firewall or identity of a website)

§ Auditing or other investigation into the state of a system so people can view and act if appropriate

As you think about configuration, consider using a framework of who, what, why, when, where and how:

§ Who can perform the configuration? Is it anyone? An administrator? Is there a parental role?

§ What can they configure, and to what granularity? Is it on/off? Is it details intrinsic to the feature being configured (e.g., the firewall can block IP addresses or ports), or extrinsic, such as “this user cannot contact me”? The latter requires each channel of contact to know it must check the ACL.

§ Why would someone want to configure the feature? Use those scenarios to determine what the user interface will be, and what mistakes people might make.

§ When will someone be making configuration choices? Is it proactive or reactive? Is it just in time?

§ Where will someone make the change? (And how will they find that interface?)

§ How will users implement their intent? “How” is not only how they make a change, but how they can test it—for example, Windows “effective permissions” or the LinkedIn “Show me how others see this profile.” Consider how users can see the configuration of the system as a whole.

Good design of a configuration system is hard. Decisions made early can make changes impossible or extremely expensive. For example, Reeder has shown that people have a hard time with elements of the Windows file permission model, and that changing to a “specificity preference” over the deny precedence appears to align better with people's expectations (Reeder, 2008). However, changing that ordering would break the way access control rules have been configured on possibly hundreds of millions of systems, and each rule would require manual analysis to understand why it's set the way it is, what the change would mean, and how to best repair it.
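To make the difference between the two orderings concrete, here is a minimal sketch. It is a deliberately simplified model, not the actual Windows evaluation algorithm and not Reeder's exact proposal: the `Rule` type, the function names, and the example principals are all hypothetical, and inheritance is ignored entirely.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    principal: str   # a user name or a group name (hypothetical examples below)
    is_group: bool   # True if the rule names a group rather than a single user
    allow: bool      # True for an allow rule, False for a deny rule


def applicable(rules, user, groups):
    """Return the rules that apply to this user directly or via group membership."""
    return [r for r in rules
            if (not r.is_group and r.principal == user)
            or (r.is_group and r.principal in groups)]


def deny_precedence(rules, user, groups):
    """Any applicable deny wins; otherwise access needs at least one allow."""
    hits = applicable(rules, user, groups)
    if any(not r.allow for r in hits):
        return False
    return any(r.allow for r in hits)


def specificity_precedence(rules, user, groups):
    """Rules naming the user outrank rules naming a group; deny breaks ties."""
    hits = applicable(rules, user, groups)
    user_rules = [r for r in hits if not r.is_group]
    considered = user_rules if user_rules else hits
    if any(not r.allow for r in considered):
        return False
    return any(r.allow for r in considered)


rules = [
    Rule("contractors", is_group=True, allow=False),  # deny the whole group
    Rule("alice", is_group=False, allow=True),        # but allow Alice specifically
]
print(deny_precedence(rules, "alice", {"contractors"}))
print(specificity_precedence(rules, "alice", {"contractors"}))
```

The same two rules yield opposite answers for Alice under the two orderings, which is why switching the ordering on deployed systems would silently change the meaning of existing configurations.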

Diagramming for Modeling the Software

There are a variety of diagram types that can be useful for human factors analysis, including swim lanes, data flow diagrams, and state machines. Each is a model of the software, system, or protocol. In this section, you'll learn about how each can be modified for use in looking at the human in the loop.

Swim Lanes

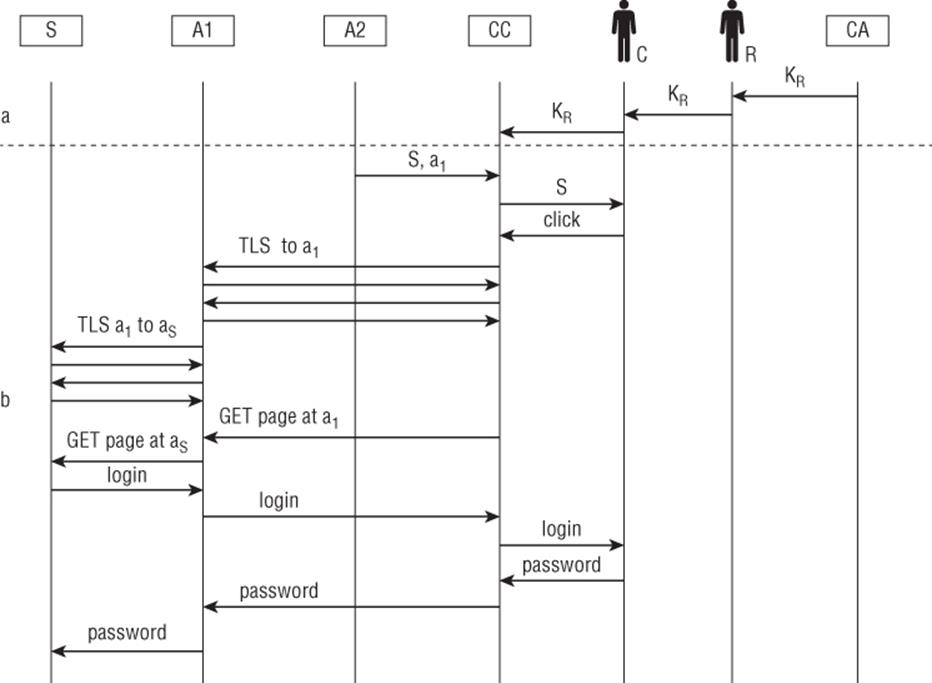

You learned about swim lane diagrams for protocols in Chapter 2, “Strategies for Threat Modeling.” These diagrams can be adapted for modeling people. In ceremony analysis, you add swim lanes representing each participant, as shown in Figure 15.3 (reproduced from Ellison's paper on ceremonies [Ellison, 2007]). There is an implicit trust boundary between each lane. In this diagram, S is the server, A1 and A2 are attackers, CC is the client, and CA is a certificate authority.

Figure 15.3 Ellison's diagram of the HTTPS ceremony

This diagram is worth studying, especially when you realize that A1 and A2 are machines controlled by attackers. Note that the attacker A is not shown; what this attacker knows is not relevant to the security of C, the person being tricked. Also note that messages from computers to humans (“S,” short for server) and humans to computers (“click”) are shown as protocol messages. It is very important to ensure that the messages between computers and humans are clearly shown, and that the contents of each are modeled. Such modeling will help you identify unreasonable assumptions, information not provided, and other communications issues.

State Machines

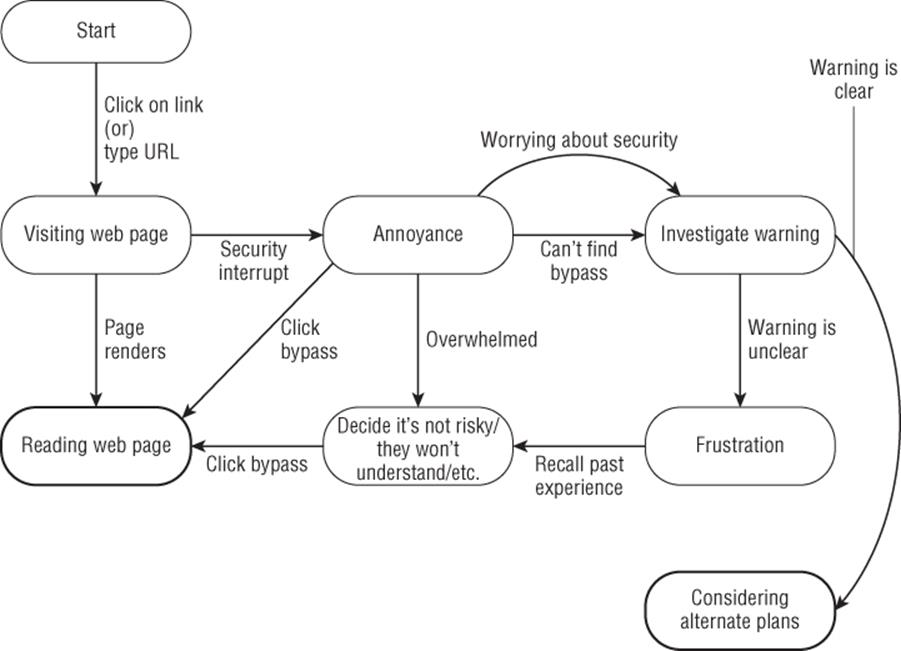

State machines are often used to model the state of inanimate objects. The states of machines are simple compared to the states of humans. However, that doesn't mean that a state machine can't help you think about the state of people using your software. For example, consider Figure 15.4, which explores possible states of a person trying to visit a website and being blocked by a security warning. The state machine has two exit states: reading a web page and considering alternative options. They are indicated by darker lines around the states.

Figure 15.4 A human state machine

Quick consideration of this model reveals several issues:

§ If a “bypass this warning” button is easily visible, it may be pressed by an annoyed person.

§ If people feel overwhelmed by security decisions, they'll likely bypass the warning.

§ The “stars must align” to get someone to the state of considering alternative plans.
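The issues above can be explored with a small sketch of a human state machine. The state and event names here are hypothetical; the actual labels in Figure 15.4 may differ. The sketch only assumes what the text states: a person is blocked by a warning, and the two exit states are reading the page and considering alternatives.

```python
# Hypothetical states and events; the labels in Figure 15.4 may differ.
TRANSITIONS = {
    ("wants_page", "warning_shown"): "sees_warning",
    ("sees_warning", "annoyed"): "bypasses_warning",
    ("sees_warning", "overwhelmed"): "bypasses_warning",
    ("sees_warning", "reads_and_understands"): "evaluates_risk",
    ("evaluates_risk", "judges_risk_low"): "bypasses_warning",
    ("evaluates_risk", "judges_risk_high"): "considers_alternatives",
    ("bypasses_warning", "page_loads"): "reads_page",
}
EXIT_STATES = {"reads_page", "considers_alternatives"}


def run(events, state="wants_page"):
    """Feed a sequence of events to the machine and return the final state."""
    for event in events:
        # Events with no matching transition leave the state unchanged.
        state = TRANSITIONS.get((state, event), state)
    return state


def paths_to(target, state="wants_page", path=()):
    """Enumerate event sequences leading from `state` to `target` (graph is acyclic)."""
    if state == target:
        return [path]
    found = []
    for (src, event), dst in TRANSITIONS.items():
        if src == state:
            found.extend(paths_to(target, dst, path + (event,)))
    return found


for exit_state in sorted(EXIT_STATES):
    print(exit_state, len(paths_to(exit_state)))
```

In this toy model, several different event sequences end with the person reading the page, while only one ends with the person considering alternatives, which is one way of seeing why the “stars must align.”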

You can also use state machines in conjunction with ceremonies. In a ceremony, a node can be modeled as state (including secrets), a state machine with its inputs and outputs, service response times, including bandwidth and attention, and a probability of errors of various sorts. (Ellison provides a longer list with finer granularity for the same set of attributes [Ellison, 2007].)

Modeling Electronic Social Engineering Attacks

A project at Microsoft needed a comprehensive way to describe electronic social engineering attacks. In this context, electronic means roughly all those social engineering attacks that are not in person or over the phone.

We came up with the channels and attributes shown in Table 15.1, which we have found to be a useful descriptive model. It is intended to be used like a Chinese menu, whereby you choose one from column A, one from column B, and so on.

Table 15.1 Attributes of Electronic Social Engineering Attacks

| Channel of Contact | Thing Spoofed | Persuasion to Interact | Human Act Exploited | Technical Spoofing |
|---|---|---|---|---|
| | An operating system or product user interface element, such as a Mac OS warning, or a Chrome browser pop-up | Greed/promise of reward | Open document | System dialog or alert |
| Website | A product or service | Intimidation/fear | Click link | Filename (extension hiding) |
| Social network | A person you know | Maintaining a social relationship | Attach device/USB stick | File type (other than extension hiding) |
| IM | An organization you have a relationship with | Maintaining a business relationship | Install/run program | Icon |
| Physical* | An organization you don't have a relationship with | Curiosity | Enter credentials | Filename (multi-lingual)† |
| | A person you don't know | Lust/prurient interest | Establish a relationship‡ | |
| | An authority | | | |

* Physical is at odds with the online nature of most of these, but sometimes there's an interesting overlap, like a USB drive left in a parking lot.

† Multi-lingual spoofing involves use of languages written left to right and right to left in the same name. There is no way to do so which meets all cultural and clarity requirements.

‡ Establishing a relationship also overlaps; much, but not all, electronic social engineering is focused on the installation of malware.

You'll probably find that the descriptors here are sufficient for describing most online social engineering attacks. Unfortunately, some evocative detail is lost (and some verbosity is gained) as you generalize from “a Nigerian prince spam” to “e-mail pretending to be someone you don't know, exploiting greed” or from “phishing” to “a website pretending to be an organization you have a relationship with and for which you need to enter credentials in order to do business.” However, the resultant model descriptions capture the relevant details in a way that can inform either training or the design of technology to help people better handle such attacks.

The Technical Spoofing column contains details that are sometimes clarifying. Those elements are more specific versions of the “user interface element” at the top of the Thing Spoofed column.
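The “one from each column” use of Table 15.1 can be sketched as a small data structure. This is an illustrative encoding only: the `COLUMNS` and `Attack` names are hypothetical, the labels are shortened, the channel set is a subset, and the optional Technical Spoofing column is left out for brevity.

```python
from dataclasses import dataclass

# Shortened labels from Table 15.1; the Technical Spoofing column is omitted here.
COLUMNS = {
    "channel": {"website", "social network", "IM", "physical"},
    "thing_spoofed": {
        "a user interface element",
        "a product or service",
        "a person you know",
        "an organization you have a relationship with",
        "an organization you don't have a relationship with",
        "a person you don't know",
        "an authority",
    },
    "persuasion": {
        "greed", "intimidation", "maintaining a social relationship",
        "maintaining a business relationship", "curiosity", "lust",
    },
    "human_act": {
        "open document", "click link", "attach device",
        "install/run program", "enter credentials", "establish a relationship",
    },
}


@dataclass(frozen=True)
class Attack:
    channel: str
    thing_spoofed: str
    persuasion: str
    human_act: str

    def __post_init__(self):
        # Reject any value that is not an entry in the corresponding column.
        for column, allowed in COLUMNS.items():
            value = getattr(self, column)
            if value not in allowed:
                raise ValueError(f"{value!r} is not an entry in column {column!r}")

    def describe(self):
        return (f"channel: {self.channel}; spoofs: {self.thing_spoofed}; "
                f"persuasion: {self.persuasion}; act exploited: {self.human_act}")


phishing = Attack(
    channel="website",
    thing_spoofed="an organization you have a relationship with",
    persuasion="maintaining a business relationship",
    human_act="enter credentials",
)
print(phishing.describe())
```

Restricting each field to the column's entries keeps descriptions comparable across incidents, at the cost of the evocative detail discussed above.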

Threat Elicitation Techniques

Any of the threat discovery techniques covered in Part II of this book can probably be applied to a user experience. Spoofing seems particularly relevant. You can bring any of those to bear while brainstorming. The ceremony approach offers a more structured way to find threats. You can also consider the models of humans presented earlier while considering what a “human state machine” might do, or as ways to explain the results of the tests you perform.

Brainstorming

The models of people and scenarios provided so far can help anyone find threats by brainstorming. That's much more likely to be productive with participation from either security or usability experts. Different goals of these specialists may lead to clashes, however, as usability experts will likely tend toward making it easy to get back to the primary task, and security experts will tend to focus on the risk of doing so. Either structure or moderators may help you.

The Ceremony Approach to Threat Modeling

The ceremony approach to threat modeling, created by Carl Ellison (and mentioned briefly earlier), is most like “traditional” threat modeling. It starts from the observation that any network protocol, sufficiently fully considered, involves both computers and humans on each end. He developed this observation into an approach to analyzing the security of ceremonies. “Ceremony Design and Analysis,” the paper in which Ellison presents his model, is free, easy to understand, and worth reading when considering the human aspects of your threat model. It introduces ceremonies as follows:

[The ceremony is] an extension of the concept of network protocol, with human nodes alongside computer nodes and with communication links that include UI, human-to-human communication and transfers of physical objects that carry data. What is out-of-band to a protocol is in-band to a ceremony, and therefore subject to design and analysis using variants of the same mature techniques used for the design and analysis of protocols. Ceremonies include all protocols, as well as all applications with a user interface, all workflow and all provisioning scenarios. A secure ceremony is secure against both normal attacks and social engineering. However, some secure protocols imply ceremonies that cannot be made secure.

—Carl Ellison, “Ceremony Design and Analysis” (IACR Cryptology ePrint Archive 2007)

In a “normal” protocol, there are two or more nodes, each with a current state, a state machine (a set of states and rules for transitioning between them), and a set of messages it could send. Ellison extends this to humans, noting that humans can be modeled as having a state, messages they send and receive, and ways to transition into different states. He also notes that they are likely to make errors parsing messages. Ellison calls out three important points about ceremonies:

§ “Nothing is out of band to a ceremony.” If there's an assumption that something happens out of band, you must either model it or design for it being insecure.

§ Connections (data flows) can be human to human, human to computer, computer to computer, physical actions, or even legal actions, such as Alice sells Bob a computer.

§ Human nodes are not equivalent to computer nodes. Failure to take into account the way humans really act will break the security of your system.

Ceremony Analysis Heuristics

There is a set of threats that can be discovered simply and easily with some heuristics, and a set of threats that are more likely to be found with more structured work. Each heuristic has advice for addressing the threat. The first four are extracted from Ellison's “Ceremony Design and Analysis” (IACR Cryptology ePrint Archive 2007) to contextualize them as heuristics, while the final two are other heuristics that may help you.

Missing Information

The first issue to look for is missing information. Does each node have the information you expect it to act upon? For one trivial example, in the HTTPS scenario, the person is expected to know that the server name (S) does not match the URL (A1). However, the message from the computer contains only a server name, not a URL. Therefore, the analysis is particularly trivial: The designer has asked a person to make a decision but has not provided the information necessary to make it.

Be explicit about the decisions people will need to make and the information needed to make them. Display that information early enough that the person won't anchor on some other element, and close enough to where it is needed that other information will not be distracting.
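The missing-information heuristic lends itself to a mechanical check once decisions and messages are written down. The following is a minimal sketch under that assumption; the `Decision` and `Message` types, the node names, and the information labels are hypothetical and chosen to mirror the HTTPS example.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    node: str        # the node (human or computer) asked to decide
    question: str    # what the node is asked to decide
    needs: set       # information items the decision depends on


@dataclass
class Message:
    sender: str
    receiver: str
    carries: set     # information items contained in the message


def missing_information(decision, messages, prior_knowledge=frozenset()):
    """Return the items the deciding node needs but never receives."""
    received = set(prior_knowledge)
    for message in messages:
        if message.receiver == decision.node:
            received |= message.carries
    return decision.needs - received


# The HTTPS scenario: the person must compare the server name with the URL,
# but the message from the computer carries only the server name.
decision = Decision(
    node="person",
    question="Does the server name match the URL?",
    needs={"server name", "URL"},
)
messages = [Message(sender="client", receiver="person", carries={"server name"})]
print(missing_information(decision, messages))
```

An empty result does not show that the ceremony is secure; it shows only that this one heuristic found nothing.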

Distracting Information

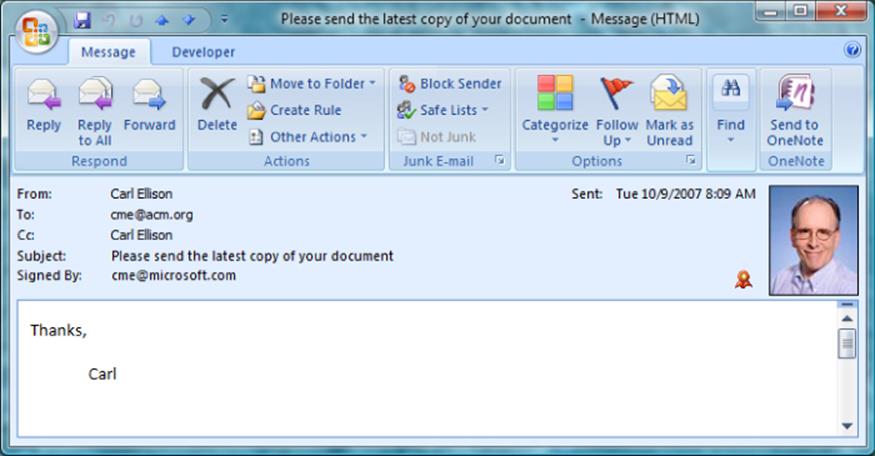

If you'd like to ensure that a person acts on information you present, keep in mind that each additional piece of information acts as a distractor, and may feed into confirmation bias. In discussing the human phase of verifying that a cryptographically signed e-mail was really signed, Ellison offers the screenshot shown in Figure 15.5, pointing out five elements that a human might look at, including the “From: address,” the picture, the “Signed by” address, the text of the message, and other contextual information that the human might have available. The only element that the (cryptographic) protocol designer wants you to look at is the “Signed by” address, which is the fifth element displayed, after “From,” “To,” “CC,” and “Subject,” all of which appear underneath various GUI elements, including the window title, taken from the e-mail subject. There is also a picture, and a small icon (reproduced from Ellison, 2007).

Figure 15.5 An e-mail interface

To address the possibility of distraction, information not key to the ceremony either should not be displayed or should be de-emphasized in some way or ways. In particular, you should take care to avoid showing information that is easily substituted or confused for the information that you want the person to use in the ceremony.

Underspecified Elements

Because a ceremony is all inclusive, it requires us to shine light where system architects might otherwise hand-wave. For example, how did a PKI root key on a system become authorized to suppress or send messages to the owner of that computer? (PKI root keys often suppress warning messages, and may activate messages, such as a green URL bar or a lock within the browser.) To address underspecified elements, specify them. It may be a practical requirement to accept risks associated with them, but a concrete statement of the risk might enable you to find a way to address it.

Fuzzy Comparison

People are pretty bad at comparing strings. For example, are the strings 82d6937ae236019bde346f55bcc84d587bc51af2 and 82d6937ae236019bde346f55bcc84d857bc51af2 the same string? Go on, check. It's important. I'm hopeful that when this book is laid out, the strings don't show up one above the next (and put in extra words to try to mitigate that threat from layout). So, did you check? I'm willing to bet that even most of you, reading the freaking gnarly specifics part of a book on threat modeling, didn't go through and compare them character by character. That may partly be because the answer doesn't really matter; but mostly it's because people satisfice—that is, we select answers that seem good enough at the time. The first (and maybe last) characters are the same, so you assume the rest are too. Often, attackers can do something to push the person to a fuzzy match that they will pass.

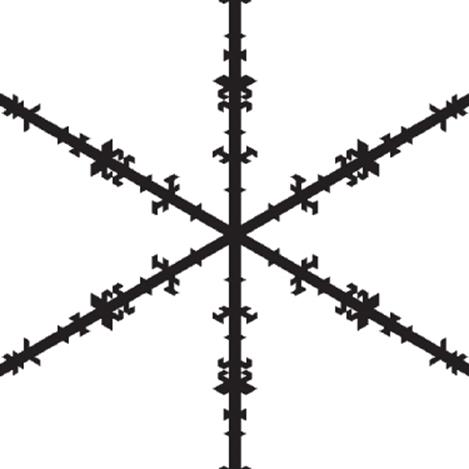

To address fuzzy comparison, look for ways to represent the bits so that they are easily compared. For example, because people are excellent at recognizing faces, can you use faces to represent data? How about other graphical representations? For example, there's a set of techniques for turning data into an image called a visual hash, as shown in Figure 15.6 (Levien, 1996; Dalek, 1996), although there is little or no security usability analysis of these, and they have not taken off.

Figure 15.6 The snowflake visual hash

It may be possible to replace a long string of digits with a string of words, tapping into System 1 word recognition and improving comparability. Lastly, if none of those methods will work, try to create groups of four or five digits so that at least people find natural breakpoints when carrying out the comparative task.
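The grouping advice can be sketched in a few lines. The helper names `grouped` and `first_difference` are hypothetical; the two strings are the ones from the start of this section.

```python
def grouped(hex_string, size=4):
    """Break a long string into space-separated groups to give natural breakpoints."""
    return " ".join(hex_string[i:i + size] for i in range(0, len(hex_string), size))


def first_difference(a, b):
    """Return the index of the first differing character, or None if identical."""
    for i, (x, y) in enumerate(zip(a, b)):
        if x != y:
            return i
    # One string may be a prefix of the other.
    return None if len(a) == len(b) else min(len(a), len(b))


a = "82d6937ae236019bde346f55bcc84d587bc51af2"
b = "82d6937ae236019bde346f55bcc84d857bc51af2"

print(grouped(a))
print(grouped(b))
print(first_difference(a, b))
```

Printing the grouped forms one above the other lets a person compare group by group, while `first_difference` shows what a machine comparison reports. Where the comparison can be done by the computer at all, that is usually preferable to asking a person.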

Kahneman, Reason, Behaviorism

The entire section “Models of People,” presented earlier, is suffused with perspectives that can be used as heuristics, or as a review list, asking yourself “how could this apply?”

The Stajano-Wilson Model

Frank Stajano and Paul Wilson have worked with the creators of the BBC's “Real Hustle” TV show to create a model of scams (Stajano, 2011). Their principles are quoted verbatim here, and could be adapted to use for threat elicitation:

1. Distraction Principle: While we are distracted by what grabs our interest, hustlers can do anything to us and we won't notice.

2. Social Compliance Principle: Society trains people to not question authority. Hustlers exploit this “suspension of suspiciousness” to make us do what they want.

3. Herd Principle: Even suspicious marks let their guard down when everyone around them appears to share the same risks. Safety in numbers? Not if they're all conspiring against us.

4. Dishonesty Principle: Our own inner larceny is what hooks us initially. Thereafter, anything illegal we do will be used against us by the fraudsters.

5. Kindness Principle: People are fundamentally nice and willing to help. Hustlers shamelessly take advantage of it.

6. Need and Greed Principle: Our needs and desires make us vulnerable. Once hustlers know what we want, they can easily manipulate us.

7. Time Principle: When under time pressure to make an important choice, we use a different decision strategy, and hustlers steer us toward one involving less reasoning.

The discussion of the herd principle points out how many variations of the sock puppet/Sybil/tentacle attacks exist, a point for which I'm grateful.

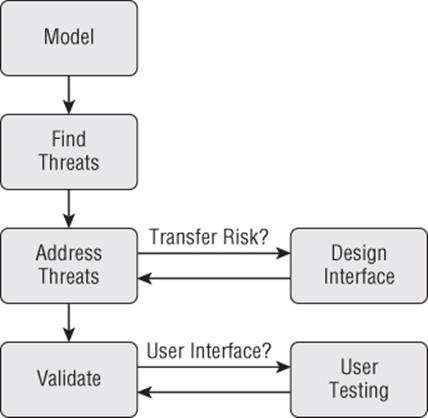

Integrating Usability into the Four-Stage Framework

This chapter advocates looking at usability and ceremony issues as a distinct set of activities from modeling technical threats. It may also be possible to bring usability into the four-stage framework by considering it in two places: mitigation and validation. If the general approach of thinking of mitigations as “change the design, use a standard mitigation, use a custom mitigation, accept the risk” is extended with “transfer risk by asking the person,” then that makes a natural jumping off point to the techniques outlined in this chapter. Similarly, in validation, if a user interface asks the person to make a security or privacy decision, then assessing it with techniques from this chapter will help. This approach, illustrated in Figure 15.7, was developed by Brian Lounsberry, Eric Douglas, Rob Reeder, and myself.

Figure 15.7 Integrating usability into a flow

Tools and Techniques for Addressing Human Factors

Having discussed how to find threats, it's now time to talk about how you can address them. This section begins with myths about people that we as a community need to set aside, continues with design patterns for helping you find a good solution, and then describes a few design patterns for a kind learning environment.

In an ideal world, everyone would have time to carefully consider each security decision that confronts them, research the trade-offs, and make a call that reflects their risk acceptance given their goals and options. (Also in that ideal world, technology producers would be happy to invest the effort needed to help them.) In the real world, people typically balance their investment of effort against perceived risk, their perception of their own skill, time available, and a host of other factors. Worse, in the case of security, there sometimes is no “right” decision. If you get an e-mail from your boss asking you to print a presentation for an impromptu meeting with the CEO that's happening right now, you can't call to make sure it's really from the boss. You might want to accept the risk that someone has taken over your boss's e-mail. You want to make it easy for people to quickly reach the decision that they would make if they had all the time in the world. You want to make it possible for people to dig deeper, without requiring they do so.

Myths That Inhibit Human Factors Work

There are a number of myths that are threats to human factors work in security. They all center on contempt for normal people. People are not, as is often claimed, the weakest link, or beyond help. The weakest link is almost always a vulnerability in Internet-facing code. Society trusts normal adults to drink alcohol, to operate motor vehicles (ideally not at the same time), and to have children. Is using a computer safely so much harder? If so, whose fault is that? Following are some common myths about human behavior in terms of security:

§ “Given a choice between security and dancing pigs, people will choose dancing pigs every time.” So why make that a choice? More seriously, a better way to state this is, “Given a choice between ignoring a warning that they've clicked through a thousand times before without apparent ill effects and without being entertained, people will bypass a warning every time.”

§ “People don't care about security,” or “People don't want to think about security.” Either or both may well be true. However, people do care about consequences such as having to clean their system of a virus or dealing with fraudulent charges on their debit cards.

§ “People just don't listen.” People do listen. They don't act on security advice because it's often bizarre, time consuming, and sometimes followed by, “Of course, you'll still be at risk.” You need to craft advice that works for the people who are listening to you.

§ “My mom couldn't understand that.” Your mom is very smart. After all, she raised you, and you're reading this book. QED. More seriously, you should design systems that your customers will understand.

Design Patterns for Good Decisions

The design patterns for mitigating threats that exploit people are similar to those for other categories of threats. This section describes the four high-level patterns: minimize what you ask of people, conditioned-safe ceremonies, avoid urgency, and ensure a path to safety.

Minimize What You Ask of People

If people are not good at being state machines running programs, it seems likely that the less that is asked of them, the fewer insecurities a ceremony will have. People must be involved when they have (or can reasonably be expected to have) information that other nodes need to make appropriate decisions. For example, Windows Vista introduced a dialog that asked people what sort of network they had just connected to. This elicited information (such as home, work, or coffee shop) that the computer can't reliably determine, and it was used to configure the firewall appropriately. A person must also be involved when making decisions about meaningful IDs. There are likely other data points or perspectives that a program must ask of a person.

There are two specific techniques to minimize what's asked of people: listing what they need to know, and building consistency:

§ Listing what people need to know: Simply making a list of what people need to know to make a security decision is a powerful technique that Angela Sasse advocates. The technique has several advantages. First, it's among the simplest techniques in this book. It requires no special training or equipment. Second, the act of writing down a list tends to impose a reality check. When the list gets longer than one unit (whiteboard, page, etc.), even the most optimistic will start to question whether it's realistic. Finally, making a list enables you to use that list as a checklist, and asking how the customer will learn each item on the list is an easy (and perhaps obvious) next step.

§ Building consistency: People are outstanding at finding patterns, sometimes even finding patterns where none exist. They use this ability to build models of the world, based on observations of consistency. To the extent that your software is either inconsistent with itself or with the expectations of the operating environment, you are asking more of people. Do not do so lightly. Consistency relates to the wicked versus kind environment problem raised by Jacobs. Ensuring that your security user experiences are consistent within a product is a very worthwhile step, as is ensuring that it's consistent with the interfaces presented by other products. This consistency makes your product a kind learning environment. Of course, this must be balanced with the value of innovation and experimentation. If everything is perfectly consistent, then we can't learn from differences. However, we can try to avoid random inconsistency.

Conditioned-Safe Ceremonies

Consistency will probably help the people who use your system, but a conditioned-safe approach may be even better. The concept of a conditioned-safe ceremony (CSC) is built on the observation that conditioning may play both ways. In other words, rather than train people to expect random shocks or interruptions from security, you could train or condition them to act securely. In their paper introducing the idea (Karlof, 2009), Chris Karlof and colleagues at University of California, Berkeley, proposed four rules governing the use of conditioned-safe ceremonies:

1. CSCs should only condition safe rules, those that can safely be applied even in circumstances controlled by an adversary.

2. CSCs should condition at least one immunizing rule—that is, a rule which will cause attacks to fail.

3. CSCs should not condition the formation of rules that require a user decision to apply the rule.

4. CSCs should not assume people will reliably perform actions which are voluntary, or that they have not been trained/conditioned to perform each time.

They also discuss the idea of forcing functions. In this context, a forcing function is “a type of behavior-shaping constraint designed to prevent human error,” for example, a car that can be started only when the brake pedal is pressed. People regularly make the mistake of omitting steps in a process (it may be one of the most common types of mistakes), and they rarely notice when they've omitted a step. Forcing functions can help ensure that people complete important steps. The forcing function will only work when it is easier to execute than avoid, where avoidance may include avoiding use of the feature set that includes a forcing function. They use the example of Ctrl+Alt+Delete to bring up the Windows Login screen as a forcing function.

Avoiding Urgency

One of the most consistent elements of current online scams is urgency. Urgency is a great tool for the online attackers. They maintain control of the experience, sending the prospective victim to actions of their choice. For example, in a bank phishing scam, if the person sets the e-mail aside, they may later find a real e-mail from the organization, they may use a bookmark, or they may call the number on the back of their card. The fake website may be taken down, blacklisted, or otherwise become unavailable. Therefore, avoiding urgency helps your customers help themselves.

Avoiding urgency can involve something like saying, “We will take no action unless you visit our site and click OK. You can always reach our real security actions through a bookmark, or by using a search engine to get to our site.”

If you've conditioned your customers to expect urgency from you, then urgency is less likely to act as a red flag. Make it harder for the attacker by avoiding urgency in your messages to customers.

Ensuring an Easy Path to Safety

When people are being scammed, is there a way to get back to a safe state? Is it easy to discover (or remember) and take that path to safety? For example, is there always an easy way to access messages you send from your home page? If there is, then potential phishing victims can take control of the experience. They can go to their bookmarked website and get the message. You might use a pattern like the gold bar (discussed in the section “Explicit Warnings,” later in this chapter) or an interstitial page to notify people about important new messages, so they learn that they can find new messages from your site.

If that's always available, then attackers will need to convince people that that system is broken for whatever reason. Today, that's easy, because “go to your bookmark” is lumped in with a barrage of confusing and questionable security advice. (The path to safety pattern was identified by Rob Reeder.)

Design Patterns for a Kind Learning Environment

A kind learning environment contrasts with a wicked one. If you're an attacker, you want the world to be wicked. You want people to be confused and unsure of how to defend themselves, because it makes it easier to get what you want. The following sections provide advice for how to promote a kind environment.

Avoid “Scamicry”

Scamicry is an action by a legitimate organization that is hard for a typical person to distinguish from the action of an attacker, such as a bank calling a customer and demanding authentication information without an easy way to call back and reach the right person. How can Alice decide whether it's her bank or a fraudster? Trust caller ID? That's trivial to spoof. Similarly, the bank can choose how to track clicks on its marketing campaigns. It can use the domain of the marketing company (example.com/bank/?campaign=123;emailid=345) or it can use a tracking URL within its own domain (bank.com/marketing/?campaign=123;emailid=345). Alice might want to look at the domain to make a decision (and many security people advise her to do so); but if the bank routinely sends e-mail messages with links to tracking domains, then Alice can't look at the URL and decide if it's really her bank. Scamicry makes the world a more wicked environment, disempowers people, and empowers attackers.
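An organization could check its own outgoing messages for this form of scamicry before sending them. The sketch below is one hedged way to do that: the function names are hypothetical, the URLs are the two examples above with an assumed `https://` scheme added, and a plain suffix match on the host name stands in for a proper registrable-domain comparison.

```python
from urllib.parse import urlsplit


def on_own_domain(url, own_domain):
    """True if the URL's host is the organization's domain or a subdomain of it."""
    # URLs without a scheme have no parsed hostname and are treated as off-domain.
    host = (urlsplit(url).hostname or "").lower()
    own = own_domain.lower()
    return host == own or host.endswith("." + own)


def off_domain_links(urls, own_domain):
    """Return the links a recipient could not verify by looking at the domain."""
    return [u for u in urls if not on_own_domain(u, own_domain)]


links = [
    "https://example.com/bank/?campaign=123;emailid=345",
    "https://bank.com/marketing/?campaign=123;emailid=345",
]
print(off_domain_links(links, "bank.com"))
```

Any link this check flags is one where Alice cannot follow the common advice to look at the domain, so it is a candidate for moving onto the organization's own domain.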

What is Scamicry?

My team coined the term scamicry after I received a voicemail claiming to be from a bank. The caller said they needed a callback that day to a number that wasn't listed on their website's Contact Us page. I had written a large check for something, and it triggered their fraud department. However, there was no clear reason for the “call in the next four hours” requirement in the voicemail. The bank could presumably have put their fraud team's number on their website, or held the check for a day or so (although this may have been constrained by regulations on check processing). In discussing the experience, we realized that it was a common pattern, and that perhaps naming it would help to identify and then overcome it.

Sometimes it can be remarkably hard to avoid violating common advice, and that should cause us to question the wisdom of that advice. (See the next section for more on good security advice.)

If the steps of a ceremony involve one party violating common security advice, or encouraging another party to do so, then that's a sub-optimal ceremony design. You can think of scamicry as an organization making the usable security problem more wicked.

In their paper on scams, Frank Stajano and Paul Wilson include an insight closely related to scamicry:

System architects must coherently align incentives and liabilities with overall system goals. If users are expected to perform sanity checks rather than blindly follow orders, then social protocols must allow “challenging the authority”; if, on the contrary, users are expected to obey authority unquestioningly, those with authority must relieve them of liability if they obey a fraudster. The fight against phishing and all other forms of social engineering can never be won unless this principle is understood.

—Stajano and Wilson, “Understanding Scam Victims” (Communications of the ACM, 2011)

I would go further, and say that understanding is insufficient; intentional design and coordination between a variety of participants in the “phished ecosystem” is needed.

Properties of Good Security Advice

There's a tremendous amount of advice about how people should act online. In the discussion of behaviorist models of people, you learned about people rationally ignoring security advice. Part of the problem is that there's too much of it, creating a wicked environment. The reasonable question is how should you form good advice? Consider whether your advice meets the following five properties (Shostack, 2011; Microsoft, 2011):

§ Realistic: Guidance should enable typical people to accomplish their goals without inconveniencing them.

§ Durable: Guidance should remain true and relevant, and not be easy for an attacker to use against your people.

§ Memorable: Guidance should stick with people, and should be easy to recall when necessary.

§ Proven effective: Guidance should be tested and shown to actually help prevent social engineering attacks.

§ Concise and consistent: The amount of guidance you provide should be minimal, stated simply, and be consistent within all the contexts in which you provide it.

User Interface Tools and Techniques

Ideally, you should address threats in a way that doesn't require a person to do something. This has the advantage of being more reliable than even the most reliable person, and not conditioning people to click through warnings. Unfortunately, it's not always possible. The methods in this section are a strong parallel to those in Chapter 8. You'll learn about configuration, and how to design explicit warnings and patterns that attempt to force a person to pay attention. This section does not cover authentication, as threats to such systems are covered under spoofing in Chapter 3, “STRIDE,” in the discussion of threats to usability in this chapter, and in Chapter 14.

Configuration

Configuration tasks are generally performed by people because a prompt encourages them to check or improve their security. That prompt could be a story in the news or told by a friend, a policy or reminder e-mail, or an attacker trying to reduce their security (probably as a step to a more interesting goal).

The goals of configuration are as follows:

§ Enable the person to complete a security- or privacy-related goal.

§ Enable the person to complete the goal efficiently.

§ Help people understand the effects of the task they're undertaking.

§ Minimize undesirable side-effects.

§ Do not frustrate the person.

Some metrics you can consider or measure as you're building configuration interfaces include the following:

§ Discoverability: What fraction of people can go from getting a prompt to finding the appropriate interface to accomplish their task? Obviously, it's important that a test not tell the person how to do this, nor start them on the configuration screen.

§ Accuracy: What fraction of people can correctly complete the task they are given?

§ Time to completion: How long does it take to either complete the task or give up? Time to abandonment is likely to be higher in a test than in real use, as test subjects will try to please the experimenter or appear diligent or intelligent.

§ Side-effect introduction: What fraction of people do something else by accident while accomplishing the main task?

§ Satisfaction: What fraction of people rate the experience as satisfying, given a scale from “satisfying” to “frustrating”?

The preceding two lists are derived from (Reeder, 2008).
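As an illustration of how the metrics above might be tallied from a round of testing, here is a minimal sketch in Python. The `Session` record, its field names, the five-point satisfaction scale, and the "4 or higher counts as satisfied" threshold are all assumptions made for this example; they are not drawn from Reeder's work.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Session:
    """One participant's attempt at a configuration task (hypothetical record)."""
    found_interface: bool      # reached the right screen without being told how
    completed_correctly: bool  # final settings match the task goal
    seconds: float             # time until completion or abandonment
    abandoned: bool            # gave up before finishing
    side_effects: int          # unrelated settings changed by accident
    satisfaction: int          # assumed scale: 1 (frustrating) .. 5 (satisfying)


def fraction(sessions, predicate):
    """Share of sessions for which predicate holds."""
    return sum(1 for s in sessions if predicate(s)) / len(sessions)


def summarize(sessions):
    """Compute the five configuration metrics for a list of sessions."""
    if not sessions:
        raise ValueError("no sessions to summarize")
    finished = [s.seconds for s in sessions if not s.abandoned]
    gave_up = [s.seconds for s in sessions if s.abandoned]
    return {
        "discoverability": fraction(sessions, lambda s: s.found_interface),
        "accuracy": fraction(sessions, lambda s: s.completed_correctly),
        # Completion and abandonment times are reported separately, because
        # abandonment times in a lab are likely to be inflated.
        "median_seconds_to_complete": median(finished) if finished else None,
        "median_seconds_to_abandon": median(gave_up) if gave_up else None,
        "side_effect_rate": fraction(sessions, lambda s: s.side_effects > 0),
        "satisfied": fraction(sessions, lambda s: s.satisfaction >= 4),
    }


sessions = [
    Session(True, True, 60.0, False, 0, 5),
    Session(True, False, 120.0, False, 1, 2),
    Session(False, False, 300.0, True, 0, 1),
    Session(True, True, 80.0, False, 0, 4),
]
report = summarize(sessions)
```

Keeping the numbers in one report makes it easier to compare two candidate interfaces, but the fractions are only as meaningful as the test design behind them.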

Explicit Warnings

A useful warning consists of a message that there's danger, an assessment of the impact associated with the danger, and steps to avoid the danger. A good warning may also contain some sort of attention-capturing device, such as a picture of a person falling off a ladder. That's a very general definition of a useful warning. For more specific advice, consider the NEAT/SPRUCE combination or the Gold Bar pattern.

NEAT and SPRUCE

NEAT and SPRUCE are mnemonics for creating effective information security warnings. Wallet cards created by Rob Reeder read: “Ask yourself: Is your security or privacy UX (user experience)”:

§ Necessary? Can you change the architecture to eliminate or defer this user decision?

§ Explained? Does your UX present all the information the user needs to make this decision? Have you followed SPRUCE (see below)?

§ Actionable? Have you determined a set of steps the user will realistically be able to take to make the decision correctly?

§ Tested? Have you checked that your UX is effective for all scenarios, both benign and malicious? (Our cards refer to the UX being NEAT, which has been changed here to effective to encourage testing the warning.)

SPRUCE is a checklist for what makes an effective warning:

§ Source: State who or what is asking the user to make a decision

§ Process: Give the user actionable steps to follow to make a good decision

§ Risk: Explain what bad result could happen if the user makes the wrong decision

§ Unique knowledge user has: Tell the user what information they bring to the decision

§ Choices: List available options and clearly recommend one

§ Evidence: Highlight information the user should factor in or exclude in making the decision

The cards are available as a free PDF from Microsoft (SDL Team, 2012). So why a wallet card? In a word, usability. Rob Reeder reviewed everything he could find on creating effective warnings, and summarized it in a 24-page document. That document contained 68 elements of advice. In working with real programmers, however, he discovered that was way too long, and created our NEAT guidance: Warnings should be necessary, explained, actionable, and tested. The SPRUCE extension was joint work with me and Ellen Cram Kowalczyk (Reeder, 2011).
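One way to keep SPRUCE in front of a team is to treat a warning as structured data and check it for missing elements before it ships. The following Python sketch is only an illustration: the `SpruceWarning` class, its field names, and the `spruce_gaps` helper are hypothetical, and a check like this confirms only that each element is present, not that it is well written or effective.

```python
from dataclasses import dataclass, field, fields


@dataclass
class SpruceWarning:
    """A warning described by the six SPRUCE elements (hypothetical structure)."""
    source: str = ""                              # who or what is asking
    process: list = field(default_factory=list)   # actionable steps to decide
    risk: str = ""                                # bad result of a wrong choice
    unique_knowledge: str = ""                    # what only the user knows
    choices: list = field(default_factory=list)   # available options
    recommended: str = ""                         # the one option recommended
    evidence: str = ""                            # information to weigh or ignore


def spruce_gaps(warning):
    """Return the names of SPRUCE elements that are empty or inconsistent."""
    gaps = [f.name for f in fields(warning) if not getattr(warning, f.name)]
    if warning.recommended and warning.recommended not in warning.choices:
        gaps.append("recommended (not among choices)")
    return gaps


draft = SpruceWarning(
    source="Example Mail (a made-up program name)",
    risk="The attachment could install software that steals your files.",
    choices=["Open in protected view", "Delete"],
    recommended="Open in protected view",
)
missing = spruce_gaps(draft)  # elements the draft still needs
```

A gap report does not replace the T in NEAT. Whether the finished warning works still has to be tested with people.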

The “Gold Bar” Pattern

A gold bar pattern combines warning, configuration, and sometimes other safety features into a non-intrusive dialog bar appearing across the top or bottom of a program's main window. You've probably seen the pattern in Microsoft Office and in browsers, including IE and Firefox.

The interface appears non-modally while the program does as much as it safely can. For example, a Word document from the Internet will render, allowing you to read it. A bar appears across the top, informing you that this is a safer, limited version of Word. (Underneath, Word has opened the document in a sandboxed and limited version of the program that has less attack surface and functionality. If you watch carefully when clicking one of these, you'll notice that Word disappears and reappears. That's the viewer closing and the full version launching (Malhotra, 2009).)

This pattern has a number of important security advantages. First and foremost, it may eliminate the need for the person to make a decision at all. Second, it delays security decision-making to a point when more information is available. Third, it replaces dialogs that said things like “While files from the Internet can be useful, this file type can potentially harm your computer,” or “Would you like to get your job done?”

The gold bar pattern has limits. The bars are intended to be subtle, which can result in them being overlooked. Their size limits the amount of text within the bar. They can also fail to align with the mental model of the person experiencing them. For example, someone might ask, “Why is e-mail from my boss treated as untrusted, and why do I need to leave the protected mode to print?” (Because your boss's computer might be infected, and because printing exposes substantial attack surface. However, those are neither obvious nor explained in the interface.) Lastly, because the bars are subtle, they fail to impose a strong interrupt and may therefore fail to invoke system 2. (System 1 is the faster, intuitive system, whereas system 2 processes complex problems. Both were introduced earlier in the section “Cognitive Science Models of People.”)

Patterns That Grab Attention

There is a whole set of patterns that try to force people to slow down before doing something they'll regret. This section reviews some of the more interesting ones. It might help to conceptualize these as ways to invoke system 2. The goal of these mechanisms is to get people encountering them to avoid jumping to the answer using system 1 and instead bring in their system 2.

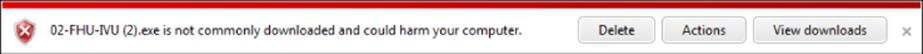

Hiding the Dangerous Choice

Internet Explorer's SmartScreen Filter doesn't put the dangerous run button directly in front of people. It has used a variety of strategies for this. For example, one post-download dialog, shown in Figure 15.8, has buttons labeled Delete, Actions, and View Downloads. When shown this warning, IE users choose to delete or not run malware 95 percent of the time. This interface design has been combined with other usable security wins, including showing the dialog (on average) only twice per year, which may make the hiding pattern less intrusive (Haber, 2011). It is hard to disentangle the precise elements that make this so effective, but much of it has to do with the combination of elements, refined through extensive testing.

Figure 15.8 An IE SmartScreen Filter warning dialog

Modal Dialogs

Modal dialogs are those that capture focus and cannot be closed until a person resolves the choice in the dialog. Modal dialogs fell out of favor for a variety of accidental reasons. For example, a floating modal dialog box was not always the front-most window in an interface, or it could pop up on another monitor or virtual workspace. Modal dialogs also interrupt task flow. Lightboxing is the practice of displaying a darkened interface with only the modal dialog “lit up.” This addresses many of the accidental issues with modal dialogs, and it is commonly used by websites.

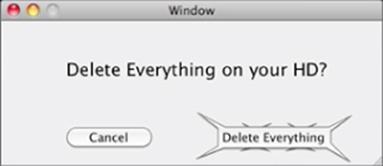

Spiky Buttons

Keith Lang has proposed the use of less friendly user interface elements, such as spiky buttons (Lang, 2009). A sample is shown in Figure 15.9. In a blog discussion of the proposal, the question was raised of how quickly people would habituate to such buttons. Others suggested more standard stop imagery would work better (37Signals, 2010). Spiky buttons may be more of a “don't jump there” message than a “pay attention” message, but without experimentation and a set of people with ongoing exposure to them, it's hard to say. To the best of my knowledge, the design has not been used.

Figure 15.9 A spiky button

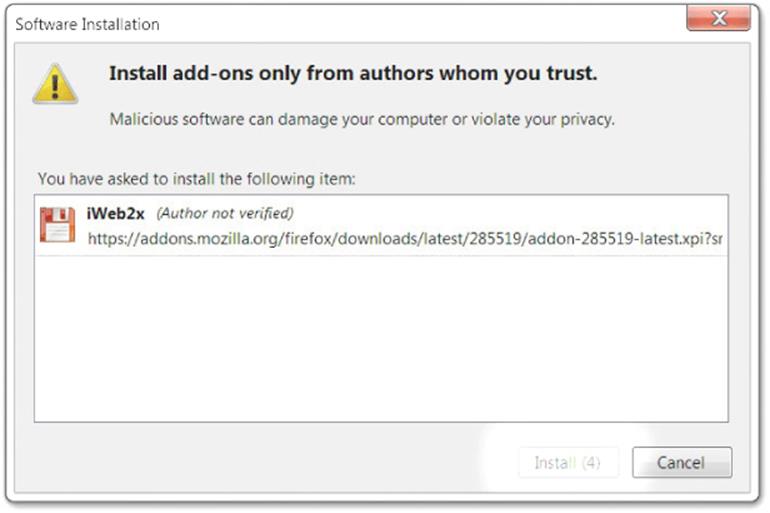

Delay to Click

When you attempt to install a Firefox add-on, Firefox imposes a delay of four seconds. As shown in Figure 15.10, the Install button is grayed out, and displays a countdown from (4). Somewhat surprisingly, this is actually not designed to get you to stop and read the dialog, although it may have that effect. It is intended to block an attack whereby a site shows a CAPTCHA-like interface with the word “only” displayed. The site attempts to install the software when you type the “n,” triggering a security dialog; when you type the “y,” it triggers the “yes” in the dialog, which now has focus (Ruderman, 2004). A related technique, a 500ms delay, is used for other dialogs, such as the geolocation prompt (Zalewski, 2010).

Figure 15.10 Firefox doesn't care if you read this.
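The core of the delay-to-click defense can be sketched in a few lines: a button that refuses activation until some time has passed since its dialog took focus, so a keystroke typed for another window cannot confirm it. The Python below is an illustrative sketch, not Firefox's implementation. The `DelayedButton` class, its method names, and the choice to restart the countdown whenever the dialog regains focus are assumptions made for the example.

```python
import time


class DelayedButton:
    """A confirm button that ignores presses until a delay has elapsed
    since its dialog last gained focus (hypothetical sketch)."""

    def __init__(self, delay_seconds=4.0, clock=time.monotonic):
        self.delay_seconds = delay_seconds
        self._clock = clock          # injectable so tests need not sleep
        self._enabled_at = None      # None means the dialog has no focus yet

    def on_focus(self):
        """Call when the dialog appears or regains focus; restarts the countdown."""
        self._enabled_at = self._clock() + self.delay_seconds

    def seconds_remaining(self):
        """Value to show in the grayed-out button's countdown."""
        if self._enabled_at is None:
            return self.delay_seconds
        return max(0.0, self._enabled_at - self._clock())

    def activate(self):
        """Return True only if the press or keystroke should be honored."""
        return self._enabled_at is not None and self._clock() >= self._enabled_at


# Simulate the "only" attack with a fake clock: the dialog steals focus
# between the "n" and the "y" keystrokes.
now = [0.0]
button = DelayedButton(delay_seconds=4.0, clock=lambda: now[0])
button.on_focus()                     # dialog pops up as the victim types "n"
now[0] = 0.2                          # the "y" arrives a moment later
hijacked_press = button.activate()    # ignored: still inside the delay
now[0] = 4.5
deliberate_press = button.activate()  # honored: the delay has passed
```

The delay only needs to exceed the gap between keystrokes to block this attack, which is consistent with the much shorter 500ms delay on other dialogs.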

Testing for Human Factors

As with every other way you address threats, it is important to test your mitigations for threats that involve people. If an attacker is trying to convince someone to take action, does your interface help the potential victim make a security choice, while letting them get their normal work done? Unfortunately, people are surprising, so testing with real people is a useful and important step.

There are a few issues that make the testing of any security-relevant user interface challenging. The best summary of those issues is in a document by usable security researcher Stuart Schechter (Schechter, 2013). That document is focused on writing about usable security experiments (rather than designing or performing them). However, it still contains very solid advice that will be applicable. You can also find excellent guidance on usability testing in books such as Rubin's “Handbook of Usability Testing” (Wiley, 2008), which unfortunately doesn't touch on the unique issues in testing security scenarios.

Benign and Malicious Scenarios

Normal usability testing asks a question such as “Can someone accomplish task X with reasonable effort?” Good ceremony testing requires testing “Can someone accomplish task X and raise the right exception behavior only when under attack?” That requires far more subtle test design. (That is, unless you expect that your attackers will start with “This file really is malicious! Please click on it anyway!”) It also requires at least twice as many tests. Lastly, unless you have reason to believe that most people will only encounter the user experience being tested extremely rarely, a good test needs to include some element of getting people familiar with the new user experience before springing an attack on them.
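Scoring a ceremony test therefore takes two numbers rather than one: how often people raise an exception when nothing is wrong, and how often they raise one when under attack. The Python sketch below shows one way to compute both. The `Trial` record and the metric names are assumptions for illustration, and "raised an exception" stands for whatever stop, refuse, or report behavior your ceremony expects.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    """One participant's run through one scenario (hypothetical record)."""
    under_attack: bool      # was this the malicious scenario?
    raised_exception: bool  # did the participant stop, refuse, or report?


def score(trials):
    """Return exception rates for the benign and malicious scenarios."""
    benign = [t for t in trials if not t.under_attack]
    attack = [t for t in trials if t.under_attack]
    if not benign or not attack:
        # A test with only one kind of scenario cannot answer the question.
        raise ValueError("need both benign and malicious trials")
    return {
        # Ideally near 0: people should get normal work done undisturbed.
        "false_alarm_rate": sum(t.raised_exception for t in benign) / len(benign),
        # Ideally near 1: people should balk only when attacked.
        "attack_detection_rate": sum(t.raised_exception for t in attack) / len(attack),
    }


trials = (
    [Trial(False, False)] * 3 + [Trial(False, True)]
    + [Trial(True, True)] * 2 + [Trial(True, False)] * 2
)
result = score(trials)
```

An interface that scores well on only one of the two rates has not passed: a warning that everyone obeys in both scenarios blocks normal work, and one that everyone dismisses in both scenarios protects no one.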

Ecological Validity

Participants in modern user studies tend to be aware that they're being studied. The release forms and glass walls tend to give it away. Also, people participating in a user study tend to expect that the tasks asked of them are safe. They have good reason to expect that. The trouble is that if you think what's being asked of you is safe, or at least a designed experience, then you may not worry about things (such as tasks, indicators, or warnings) that would normally worry you. Alternately, people being studied often spend time trying to figure out what's being studied, or what behaviors will please the experimenters. Researchers refer to this as the ecological validity problem, and it's a thorny one. The best sources of current thinking are SOUPS (the Symposium On Usable Privacy and Security) and the security track of the ACM SIGCHI conference. (SIGCHI is the Special Interest Group on Computer-Human Interaction.)

General Advice

The following list is derived from a conversation with Lorrie Cranor, who accurately points out that a simple checklist will be insufficient to explain how to perform effective usability testing. Any errors in this are mine, not Dr. Cranor's:

§ It is hard to predict how people are going to behave without doing a user study, so user studies are important.

§ Find someone, either a colleague or a consultant, who knows how to do user studies and work with that person.

§ The user study expert you work with is unlikely to know much about threat modeling or to have done a usable security study before. You will need to help them understand what they need to know about security and threat modeling.

§ As you work with the usability expert to design a user study, keep in mind that you need to find a way to study how people behave in situations where they are under an active attack. The tricky part is figuring out how to put your study participants in a situation that simulates these threats without actually putting anyone at risk. (See the preceding section on “Ecological Validity.”)