Improving the Test Process: Implementing Improvement and Change - A Study Guide for the ISTQB Expert Level Module (2014)

Chapter 7. Organization, Roles, and Skills

Getting organized will help manage your improvement plans effectively and ensure that test process improvements have a lasting positive impact. This chapter starts by considering different forms of organization that can be set up to manage test improvement programs and then describes the roles of the test process improver and the test process assessor.

As with any organization, success depends on having the right people with the right knowledge and skills. This chapter takes a practical look at the wide range of technical and social skills needed.

7.1 Organization

Imagine you’re a test manager and you’ve just presented your project’s test improvement plan to the organization’s line management. You’ve proposed some quick wins for your project, but there are also a number of longer-term improvements required. Great! They like your proposals; now you can go ahead and implement the changes. Now the nagging doubts start. You know you have time to implement some of the quick wins in your project, but who is going to take on the longer-term improvements needed? Without someone to take on responsibility for the overall improvement plan, this could be simply too much for you as a test manager.

You may also be concerned that your ideas are confined to your project. Wouldn’t your colleagues be interested in what you are doing here? If you had some kind of organized group of skilled people who could pick up your test improvement plan and also look beyond the project for benefits elsewhere, that could help everyone. This is where a test improvement organization comes in (and it may be embedded within the regular test organization). It gives you the ability to focus on one specific area, test improvement. It’s where the test process improver plays a leading role.

The sections that follow look at the process improvement organization and how it is made up, both structurally and from a human resources point of view. Test improvement organizations are principally found at the departmental or organizational level. The principal focus will be placed on the structure of a typical test improvement organization (the Test Process Group) and its main function. The impacts of outsourcing or offshoring development on this organization are then outlined.

Syllabus Learning Objectives

| Objective | Description |
|---|---|
| LO 7.1.1 | (K2) Understand the roles, tasks and responsibilities of a Test Process Group (TPG) within a test improvement program. |
| LO 7.1.2 | (K4) Evaluate the different organizational structures to organize a test improvement program. |
| LO 7.1.3 | (K2) Understand the impact of outsourcing or offshoring of development activities on the organization of a test process improvement program. |
| LO 7.1.4 | (K6) Design an organizational structure for a given scope of a test process improvement program. |

7.1.1 The Test Process Group (TPG)

The organization that takes responsibility for test process improvement can be given a variety of different names:

Process Improvement Group (PIG, although people may not like being a member of the PIG group)

Test Engineering Process Group (TEPG)

Test Process Improvement Group (possible, but the abbreviation “TPI group” may give the impression that the group is focused only on the TPI model)

Test Process Group (TPG), the term used in this chapter (see Practical Software Testing [Burnstein 2003])

Test Process Group (TPG): A collection of (test) specialists who facilitate the definition, maintenance, and improvement of the test processes used by an organization [After Chrissis, Konrad, and Shrum 2004].

Irrespective of what you call it, the main point is that you understand the following different aspects of a TPG:

Its scope

Its structural organization

The services it provides to stakeholders

Its members and their roles and skills

TPG Scope

The scope of a TPG is influenced by two basic aspects: level of independence and degree of permanency.

An independent TPG can become the “owner” of the test process and function effectively as a trusted single point of contact for stakeholders. The message is “The test process is in good hands. If you need help, this is who you come to.” In general, you should aim to make the TPG responsible for the test process at a high organizational level, where decisions can be taken that influence all, or at least many, projects and where standardized procedures and best practices (e.g., for writing test design or the test plan) can be introduced for achieving maximum effectiveness and efficiency. At project level, a test manager has more say in how to manage and improve the test process on a day-to-day basis. A TPG that is set up with only project scope could end up in conflict with the test manager and will often be unable to cross-feed improvements to or from other similar projects. This is just a general rule, of course, that may not hold true in big projects (we know of a project with over 100 testers that lasted more than five years and had a dedicated TPG organization).

Of course “independent” does not mean that they are not accountable to some other person or department. As you will see later, the structure of a TPG ideally includes elements that directly involve higher levels of management.

The aspect of permanency gives the TPG sufficient scope to enable medium- and long-term improvements to be considered. Improvement plans can be more effectively implemented and controlled. TPGs that are “here today and gone tomorrow” often lack consistency in their approach to test process improvement and tend to focus only on short-term issues. At the project level, improvement plans may be at the mercy of key players whose tasks could get re-prioritized, leaving test process improvement initiatives “high and dry.” You should try to avoid these ad hoc TPGs; they are limited in scope and often limited in value.

You may well see changes to the scope and permanency of the test process improvement organization as improvements in their own right. Typically, independent and permanent TPGs are found in more mature organizations where test process improvements are frequently driven by gathered metrics. Organizations striving for this level of “optimizing” test process maturity will consider the setting up of a TPG as part of their test process improvement strategy. If we look at the TMMi model, for example, we see that maturity level 2 organizations have a type of TPG within projects. As the organization matures to TMMi level 3, the TPG typically becomes a more permanent organization.

TPG Organization

The IDEAL model [McFeeley 1996] proposes the structure of a Test Process Group consisting of three distinct components. Even though this would be relevant mostly for larger organizations, smaller organizations can still benefit from considering this structure, which includes sample charters for each of the elements outlined in the following section.

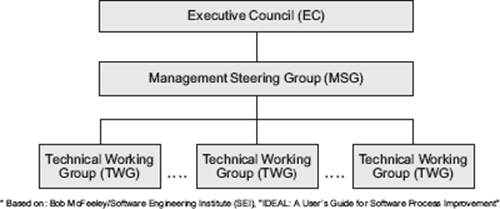

The structure of a TPG for a large organization is shown in figure 7–1.

Figure 7–1 TPG for a large organization

The Executive Council (EC) deals with strategy and policy issues. It establishes the approaches to be used for test process improvement (e.g., analytical, model-based), specifies what the scope of the TPG should be, and defines test process ownership. Typically, the EC would be the same high-level body that sets up the overall testing policy for the organization. Indeed, aspects relating to test process improvement would often be included as part of the test policy. Given that the TPG cannot function as an “island” within an organization, the EC defines the interfaces to other groups that deal with IT processes, such as a Software Engineering Process Group (SEPG), and resolves any scoping and responsibility issues between these groups. It would be unlikely for the EC to meet often, unless major changes are being proposed with wide-ranging impact.

The operational management of the TPG is performed by the Management Steering Group (MSG). This is a group of mostly high-level line managers (e.g., the manager of the test organization), program managers, and perhaps senior test managers who regularly meet to set objectives, goals, and success criteria for the planned improvement. They steer the implementation of test process improvements in the organization by setting priorities, monitoring the high-level status of test process improvement plans, and providing the necessary resources to get the job done. Of course, some of these resources may come from their own budgets, so the test process improver will need to convince the MSG members that improvement proposals are worthwhile (e.g., by means of a measurable business case). The soft skills mentioned later in this chapter will be of particular value in such situations. The MSG is not involved in implementing changes; it sets up other groups to do that (see the next paragraph). Depending on the amount and significance of test process improvements being undertaken, the MSG typically meets on a quarterly or even monthly basis.

Technical Working Groups (TWGs) are where the individual measures in improvement plans are implemented. Members of these teams may be drawn from any number of different departments, such as development, business analysis, and of course, testing. The individual tasks performed may range from long-term projects (as might be the case for a test automation initiative) to short-term measures, such as developing new checklists to be used in experience-based testing or testability reviews. The MSG may set up temporary TWGs to perform research tasks, investigate proposed solutions to problems, or conduct the early proof-of-concept tasks needed to build a business case for larger-scale improvement proposals. As a result of these activities, a TWG is expected to adjust its original plans to incorporate lessons learned and take into account any changes proposed by the MSG (the IDEAL model calls these short-term plans tactical action plans). Note, however, that the TWG reports to the MSG, so any changes made from within the TWG itself must be justified to the MSG.

Now, you may be asking yourself, “Is a TWG a permanent part of the overall TPG or not?” The IDEAL model suggests that TWGs are nonpermanent and disband after their tasks and objectives have been completed. This may be true for some TWGs, but we would suggest that permanent TWGs are also required to achieve the consistency and permanency mentioned earlier in the section on TPG scope.
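The three-part structure and its reporting lines can be pictured as a small data model. The following Python sketch is only an illustration under stated assumptions: the class names, fields, and the `disband_completed` helper are hypothetical and are not taken from the IDEAL model. The sketch simply assumes that every temporary TWG has finished its task when the MSG reviews its groups.

```python
from dataclasses import dataclass, field


@dataclass
class TechnicalWorkingGroup:
    """Implements individual improvement measures and reports to the MSG."""
    name: str
    permanent: bool  # IDEAL suggests temporary TWGs; this chapter argues some should be permanent


@dataclass
class ManagementSteeringGroup:
    """Sets objectives and priorities, provides resources, and sets up TWGs."""
    members: list[str]
    working_groups: list[TechnicalWorkingGroup] = field(default_factory=list)

    def set_up_twg(self, name: str, permanent: bool = False) -> TechnicalWorkingGroup:
        twg = TechnicalWorkingGroup(name, permanent)
        self.working_groups.append(twg)
        return twg

    def disband_completed(self) -> list[TechnicalWorkingGroup]:
        """Disband temporary TWGs (assumed finished here); permanent ones remain."""
        disbanded = [t for t in self.working_groups if not t.permanent]
        self.working_groups = [t for t in self.working_groups if t.permanent]
        return disbanded


@dataclass
class ExecutiveCouncil:
    """Deals with strategy and policy; defines TPG scope and process ownership."""
    approaches: list[str]
    steering_group: ManagementSteeringGroup


# Hypothetical example data, not taken from the book.
msg = ManagementSteeringGroup(members=["test organization manager", "program manager"])
ec = ExecutiveCouncil(approaches=["analytical", "model-based"], steering_group=msg)
msg.set_up_twg("test automation initiative", permanent=True)
msg.set_up_twg("tool proof of concept")  # temporary research task
disbanded = msg.disband_completed()
remaining = [t.name for t in msg.working_groups]
```

In this sketch only the permanent TWG survives the review, which mirrors the argument that some permanent TWGs are needed to preserve continuity and know-how.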

If your organization is relatively small (say, fewer than 30 testers), the structures proposed by the IDEAL model may appear rather heavyweight. In this case, you can scale the whole idea down to suit your organization’s size. You can start, for example, with just a single person who is allocated test improvement responsibility and who meets regularly with senior management to talk about these issues. It’s not necessary to call this a Management Steering Group, but the person would basically be performing the same kind of function.

Establishing an organizational structure for test process improvement may not be trivial; it may be necessary to initiate decision-making at the highest levels of management (e.g., when setting up an Executive Council or Management Steering Group). The practice of outsourcing and offshoring generally increases the complexity of organizations; its impact is discussed in section 7.1.2.

Services Provided

We like to think of a Test Process Group containing individual permanent TWGs as service providers to the overall IT organization. By adopting a standardized “industrialized” approach to test process improvement, they are able to offer standard packages for projects and the Management Steering Group. The group might typically provide the following services:

Performing test process assessments

Providing coaching and training in testing

Providing individual consultancy to projects to enable improvements to be rolled out successfully

Note that in this book we are considering the Test Process Group principally from the aspect of test process improvement. It is not uncommon for a TPG to provide other general testing services, such as creation of master test plans, implementation of testing tools, and even some test management tasks.

TPG Skills

A thorough description of the skills needed by the test process improver is provided in section 7.3. The social skills and expertise that need to be represented in a Test Process Group are summarized here.

Social skills:

Consultancy

Conflict handling

Negotiation

Enthusiasm and persuasiveness

Honest and open attitude

Able to handle criticism

Patience

Expertise:

Structured testing

Software process improvement

Test process improvement models

Analytical approaches (e.g., test metrics)

Test processes

Test tools

Test organization

What Can Go Wrong?

Creation of a TPG doesn’t, of course, guarantee success. Here are just a few of the risks that need to be considered:

The TPG is perceived by stakeholders as “just overhead.” Remember, if the TPG is established as an overall entity within an organization, there may be people who critically view the resources it consumes.

The TPG is unable to implement improvements at the organizational level. This may, for example, be due to insufficient backing from management because responsibilities are not clearly defined (e.g., who “owns” the test process), a lack of coordination, or simply not having the right people involved.

Some TWGs are disbanded, resulting in loss of continuity and know-how.

The products of the TPG are not pragmatic or flexible enough. This applies in particular to standardized templates and procedures.

The people making up the TPG don’t have the necessary skills.

It’s the task of the test process improver to manage and mitigate these risks. Many of them can be managed by showing the value a TPG adds whenever possible.

7.1.2 Test Improvement with Remote, Offshore, and Outsourced Teams

When two people exchange information, there is always a “filter” between what the sender of the information wishes to convey and what the receiver actually understands. The skills needed to handle these kinds of communication problems are discussed in section 7.3. However, the organizations to which the communicating people belong can also influence the ability of people to communicate effectively. This is particularly the case when people are geographically remote from each other (perhaps even offshore) and where development and/or test processes have been outsourced.

Research has taught us that the effectiveness of a professional message is determined only 20 percent by the “what” part (e.g., words, conversation, email), 30 percent by the “how” part (e.g., tone of voice), and no less than 50 percent by the “expression” part (e.g., face, gestures, posture). In outsourced and distributed environments, where the “how” and “expression” parts are hard to convey, at least 80 percent of the communication (30 percent plus 50 percent) therefore becomes challenging.

The test process improver needs to communicate clearly to stakeholders on a large number of issues, such as resources, root causes, improvement plans, and results. It is therefore critical to appreciate the impact of outsourcing or offshoring on a test process improvement program. The following two questions are probably the most significant to consider:

Are there any barriers between us?

Are communication channels open?

Contractually, it is common to find that the outsourced test process cannot be changed by anyone other than the “new” owner, making improvement initiatives difficult or even impossible. Even if there is some scope for getting involved, the test process improver faces a wide range of different factors that might disrupt their ability to implement improvement plans. These may be of a political, cultural, or contractual nature. On the political front, it could be, for example, that the manager of an onshore part of the organization resists attempts to transfer parts of the test process to an offshore location. There may be subtle attempts to show that suggested improvements “won’t work there,” or there may be outright refusal to enact parts of the plan, perhaps driven by fear (“If I cooperate, I may lose my job”). Cultural difficulties can also be a barrier to test process improvement. Language and customs are the two most significant issues that can prevent proper exchange of views regarding a specific point. Perhaps people are using different words for the same things (e.g., test strategy and test approach), or it could be that long telephone conferences in a foreign language, not to mention different time zones, create barriers to mutual understanding.

For the test process improver, keeping communications open means keeping all involved parties fully informed about status and involved in decision-making. Status information needs to be made available regarding the improvement project itself (don’t forget those time zones), and decisions on improvements need to be made in consultation with offshore partners. This might mean, for example, involving all parties in critical choices and decisions (e.g., choice of pilot project or deciding on the success/failure of improvements) and in the planning of improvements and their rollout into the whole organization.

Good alignment is a key success factor when dealing with teams who are geographically remote or offshore. This applies not just to the communications issues just mentioned but also to process alignment, process maturity, and other “human” factors such as motivation, expectations, and quality culture. Clearly, the success of test process improvements will be placed at higher risk if the affected elements of an organization apply different IT processes (e.g., for software development) or where similar processes are practiced but at different levels of maturity (e.g., managed or optimizing). People generally resist change, so where expectations and motivation are not aligned, you can expect increased resistance to proposed improvements and even conflict.

Mitigating the Risks

The likelihood of all of the risks just described is generally higher with remote and offshore organizations or where outsourcing is practiced. The test process improver should appreciate these issues and propose mitigating measures should any of the risks apply. What can be done? It may be as simple as conducting joint workshops or web-based conference calls to align expectations. You may introduce a standardized training scheme such as ISTQB to ensure that both parties have a common understanding of testing issues. On the other hand, you may propose far-reaching measures like setting non-testing process maturity entry criteria before embarking on test process improvements. This could mean a major program in its own right (e.g., to raise maturity levels of particular IT processes to acceptable levels). Implementing such changes is outside of the test process improver’s scope, but they should still be proposed if considered necessary. In general, practicing good governance and setting up appropriate organizational structures are key success factors for any outsourcing program involving onshore and offshore elements.

For test process improvements to take effect, you need to appreciate which organizational structures and roles are defined for dealing with these distributed teams (e.g., offshore coordinators) and then ensure that test process improvement can be “channeled” correctly via those people. Once again, we are looking at good soft skills from the test process improver to forge strong links with those responsible for managing remote, offshore, and outsourced teams; it can make the difference between success and failure for getting test process improvements implemented.

7.2 Individual Roles and Staffing

Syllabus Learning Objectives

| Objective | Description |
|---|---|
| LO 7.2.1 | (K2) Understand the individual roles in a test process improvement program. |

In the preceding chapters of this book, and in particular in the discussions earlier in this chapter, the role of the test process improver has figured strongly. That’s hardly surprising given the nature of the book, but we now bring the various aspects we’ve discussed so far together into a definition of the role itself. In addition, we’ll describe the specific roles for supporting test process assessments. Individual organizations may, of course, tailor these role definitions to suit their specific needs.

Note that having an independent and permanent Test Process Group will generally make it easier to establish these specific roles and provide a solid organizational structure for skills development. In general, it is highly recommended to make these roles formal and therefore part of the human resource process and structure.

7.2.1 The Test Process Improver

The description of the test process improver’s role could be summarized quite simply as “Be able to do all the things contained in this book.” That would be a bit too simple, and after all, it’s useful to have a point of reference from which to get a summary. The expectations discussed in this section are based on the business outcomes for the test process improver, as described in the ISTQB document “Certified Tester Expert Level, Modules Overview” [ISTQB-EL-OVIEW]. Note that the skills and experience are shown as “desired”; each organization would need to decide for itself what is actually “required.”

In general, the test process improver should be perceived by fellow testers and stakeholders as the local testing expert. This is especially true in smaller organizations. In larger organizations, the test process improver could have more of a management background and be supported by a team of testing experts.

Tasks and expectations

Lead programs for improving the test process within an organization or project.

Make appropriate decisions on how to approach improvement to the test process.

Assess a test process, propose step-by-step improvements, and show how these are linked to achieving business goals.

Set up a strategic policy for improving the test process and implement that policy.

Analyze specific problems with the test process and propose effective solutions.

Create a test improvement plan that meets business objectives.

Develop organizational concepts for improvement of the test process that include required roles, skills, and organizational structure.

Establish a standard process for implementing improvement to the test process within an organization.

Manage the introduction of changes to the test process, including cooperation with the sponsors of improvements. Identify and manage the critical success factors.

Coordinate the activities of groups implementing test process improvements (e.g., Technical Working Groups).

Understand and effectively manage the human issues associated with assessing the test process and implementing necessary changes.

Function as a single point of contact for all stakeholders regarding test process issues.

Perform the duties of a lead assessor or co-assessor, as shown in section 7.2.2 and section 7.2.3, respectively.

Test process improver: A person implementing improvements in the test process based on a test improvement plan.

Desired skills and qualifications

Skills in defining and deploying test processes

Management skills

Consultancy and training/presentation skills

Change management skills

Soft skills (e.g., communications)

Assessment skills

Knowledge and skills regarding test improvement models (e.g., TPI NEXT and TMMi)

Knowledge and skills regarding analytical-based improvements

(Optional) ISTQB Expert Level certification in Improving the Test Process and preferably ISTQB Full Advanced certification providing the person with a wide range of testing knowledge. (Note that for TMMi, a specific qualification is available: TMMi Professional.)

Desired experience

Experience as a test designer or test analyst in several projects

At least five years of experience as a test manager

Experience in the software development life cycle(s) being applied in the organization

Also helpful is some experience in test automation

7.2.2 The Lead Assessor

Tasks and expectations

Plan the assessment of a test process.

Perform interviews and analysis according to a specific assessment approach (e.g., model).

Write the assessment report.

Propose step-by-step improvements and show how these are linked to achieving business goals.

Present the assessment conclusion, findings, and recommendations to stakeholders.

Perform the duties of a co-assessor (see section 7.2.3).

Assessor: A person who conducts an assessment; any member of an assessment team.

Lead assessor: The person who leads an assessment. In some cases (for instance, CMMI and TMMi) when formal assessments are conducted, the lead assessor must be accredited and formally trained.

Required skills and qualifications

Soft skills, in particular relating to interviewing and listening

Writing and presentation skills

Planning and managerial skills (especially relevant at larger assessments)

Detailed knowledge and skills regarding the chosen assessment approach

(Desirable) At least ISTQB Advanced Level Test Manager certification (Full Advanced qualification recommended so that other areas of testing are also covered)

For formal TMMi assessments, accredited as a TMMi lead assessor [van Veenendaal and Wells 2012]

For informal TMMi assessments, TMMi experienced or accredited assessor [van Veenendaal and Wells 2012]

Required experience

At least two assessments performed as co-assessor in the chosen assessment approach

Experience as a tester or test analyst in several projects

At least five years of experience as a test manager

Experience in the software development life cycle(s) being applied in the organization
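An organization that formalizes this role could record the required experience listed above as a simple checklist. The Python sketch below is only one possible way to do so: `CandidateProfile`, `lead_assessor_experience_gaps`, and the `min_projects` threshold are hypothetical names introduced for illustration. In particular, the text says “several projects” without giving a number, so the default of three is an assumption that each organization would set for itself.

```python
from dataclasses import dataclass


@dataclass
class CandidateProfile:
    co_assessments_in_approach: int      # assessments performed as co-assessor in the chosen approach
    projects_as_tester_or_analyst: int   # projects worked on as tester or test analyst
    years_as_test_manager: float
    has_sdlc_experience: bool            # experience in the organization's life cycle(s)


def lead_assessor_experience_gaps(candidate: CandidateProfile,
                                  min_projects: int = 3) -> list[str]:
    """Return the required-experience items the candidate does not yet meet.

    min_projects is an assumed reading of "several projects".
    """
    gaps = []
    if candidate.co_assessments_in_approach < 2:
        gaps.append("at least two assessments as co-assessor")
    if candidate.projects_as_tester_or_analyst < min_projects:
        gaps.append("tester or test analyst experience in several projects")
    if candidate.years_as_test_manager < 5:
        gaps.append("at least five years as test manager")
    if not candidate.has_sdlc_experience:
        gaps.append("experience in the applied software development life cycle(s)")
    return gaps


# Hypothetical candidate: strong management background, only one co-assessment so far.
candidate = CandidateProfile(
    co_assessments_in_approach=1,
    projects_as_tester_or_analyst=4,
    years_as_test_manager=6.0,
    has_sdlc_experience=True,
)
gaps = lead_assessor_experience_gaps(candidate)
```

Returning the list of gaps, rather than a yes/no answer, makes the result usable as input to a skills development plan for the candidate.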

7.2.3 The Co-Assessor

When performing interviews, the lead assessor is required to perform several tasks in parallel (e.g., listen, take notes, ask questions, and guide the discussion). To assist the lead assessor, it may be helpful to define a specific role: the co-assessor.

Tasks and expectations

Take structured notes during assessment interviews.

Provide the lead assessor with a second opinion where a specific point is unclear.

Monitor the coverage of specific subjects, and where necessary, remind the lead assessor of any aspects not covered (e.g., a specific checkpoint when using the TPI NEXT model).
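The coverage-monitoring task can be supported with very simple bookkeeping. The following is only a minimal sketch of one possible approach, not a prescribed tool: the class name `CoverageTracker` and the checkpoint identifiers are hypothetical and are not taken from TPI NEXT or any other model.

```python
from dataclasses import dataclass, field


@dataclass
class CoverageTracker:
    """Tracks which planned checkpoints have come up during an interview."""

    planned: list[str]  # hypothetical checkpoint IDs planned for this interview
    covered: set[str] = field(default_factory=set)

    def mark_covered(self, checkpoint: str) -> None:
        """Record that a planned checkpoint was discussed."""
        if checkpoint not in self.planned:
            raise ValueError(f"unplanned checkpoint: {checkpoint}")
        self.covered.add(checkpoint)

    def uncovered(self) -> list[str]:
        """Return the checkpoints still open, in planned order."""
        return [c for c in self.planned if c not in self.covered]


# Illustrative usage with made-up checkpoint IDs.
tracker = CoverageTracker(planned=["plan-01", "plan-02", "design-01"])
tracker.mark_covered("plan-01")
tracker.mark_covered("design-01")
print(tracker.uncovered())  # ['plan-02']
```

Near the end of the interview, the co-assessor could glance at the remaining items and quietly remind the lead assessor of anything still open.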

Required skills and qualifications

Soft skills, in particular relating to note-taking

Good knowledge of the chosen assessment approach

(Desirable) ISTQB Foundation Level certification

Required experience

Experience as a tester or test analyst in several projects

7.3 Skills of the Test Process Improver/Assessor

In the previous sections we looked at the roles and organization of test process improvement. We briefly listed some of the skills we would expect to find in these organizations and the people performing those roles. This section goes into more detail about those skills and provides insights into why they are of importance when performing the various tasks of a test process improver.

Syllabus Learning Objectives

LO 7.3.1 (K2) Understand the skills necessary to perform an assessment.

LO 7.3.2 (K5) Assess test professionals (e.g., potential members of a Test Process Group / Technical Working Group) with regard to their deficits of the principal soft skills needed to perform an assessment.

LO 7.3.3 (K3) Apply interviewing skills, listening skills, and note-taking skills during an assessment, e.g., when performing interviews during “Diagnosing the current situation.”

LO 7.3.4 (K3) Apply analytical skills during an assessment, e.g., when analyzing the results during “Diagnosing the current situation.”

LO 7.3.5 (K2) Understand presentational and reporting skills during a test process improvement program.

LO 7.3.6 (K2) Understand persuasion skills during a test process improvement program.

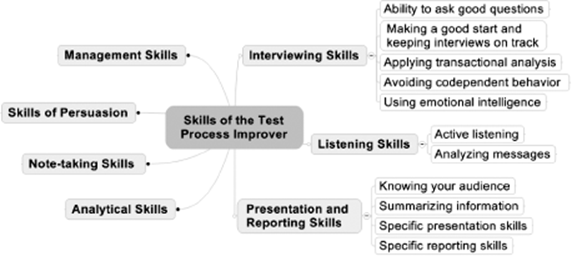

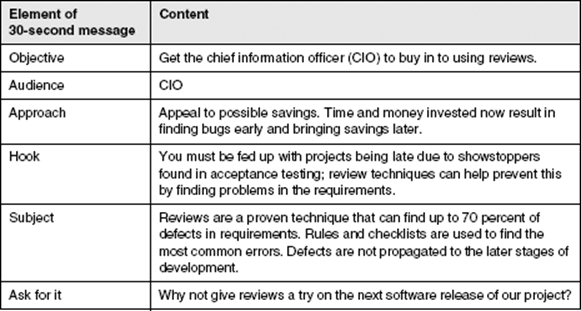

The key skills we will be discussing are shown in figure 7–2.

Figure 7–2 Skills of the test process improver

Note that an independent and permanent TPG (discussed earlier in section 7.1.1) should be responsible for development of specific skills needed for test process improvement. These can also be beneficial for test management and may be acquired together with test management skills.

Before we discuss some of the skills in more detail, a word of advice: We, as humble test process improvers, are generally not trained psychologists. Certainly an appreciation of this fascinating subject can help you in performing good interviews, but you should focus on the practical uses rather than the theory. This is where some of the literature on “soft skills for testers or test managers,” in our opinion, is not entirely helpful: too much theory and not enough practical hints on how to apply it.

7.3.1 Interviewing Skills

Interviewing is a key activity in performing assessments. You need information to find out where you are in the test process, and much of that information comes from talking to people. In the context of test process assessment, the talking is generally done in the form of interviews. If you have poor interview skills, you are unlikely to get the most out of the precious time you have with the people that are of most interest to you, like test managers, testers, project leaders, and other stakeholders.

If you conduct interviews without having sufficient practice, you could experience some of the following (unfortunately typical) symptoms:

In a bad interview, the interviewee feels like they are partaking in an interrogation rather than an open interview. This is a common mistake, particularly if you are using a test process improvement model. For the beginner, it’s tempting to think of the checkpoints in the model as being “ready-made” questions; they are not. For the interviewee, there is nothing more tedious than checkpoints being read out like this: “Do you write test plans? Answer yes or no.” After the 10th question like that, they will be heading for the door.

In a bad interview, the interviewer doesn’t respond to answers correctly. People give more than factual information when they answer your questions. Ignoring the “hidden messages” may result in the interviewer missing vital information. Look at the expression on their face when they answer your question and be prepared to dig deeper when answers like “In principle we do this” are given. In this case, you should follow up by asking, “Do you actually do this or not? Give me an example, please.” In section 7.3.2, we will discuss the ability to listen well as a separate skill in its own right.

In a bad interview, the interviewer might get manipulated by the interviewee, by evasive responses, exaggerated claims, or (yes it can happen) half-truths.

So that’s what can make bad interviews. Now, what are the principal skills that can help to conduct good interviews? These are the ones we’ll be focusing on:

Ability to ask good questions (the meaning of good will be discussed soon)

Applying transactional analysis

Making a good start, keeping the interview on track, and closing the interview

Avoiding codependent behavior

Using emotional intelligence

Some of these skills may also be applied in the context of other skill areas covered in later sections, such as listening and presenting.

Asking Good Questions

What is a “good” question? It’s one that extracts the information you want from the interviewee and, at the same time, establishes a bond with that person. People are more likely to be open and forthcoming with information if they trust the interviewer and feel relaxed and unthreatened.

The best kind of questions for getting a conversation going are so-called open questions, ones that demand more than a yes or no answer. Here are some typical examples:

How do you specify the test cases for this function?

What’s your opinion about the current software quality?

Explain for me the risks you see for this requirement.

Now, asking only open questions is not always a good strategy. Think about what it’s like when a young child keeps asking “why.” In the end you are exhausted with the constant demand for information. That’s how an interviewee feels if you keep asking one open question after another. They feel drained, tired, and maybe a bit edgy. You can get around this by interspersing open questions with so-called closed questions. These are quick questions that call for a yes/no or other short factual answer; they help to confirm a fact or simply establish a true or false situation. Here are some examples of closed questions:

Have these test cases been reviewed?

How many major defects did you find last week?

Did you report this problem?

Did you use a tool for automating tests in this project?

Closed questions work like the punctuation in a sentence. They help give structure and can be useful to change the tempo of an interview. A long exchange involving open questions can be concluded, for example, with a short burst of closed questions. This gives light and shade to an interview; it gives the interviewee the chance to recover because the interviewer is the one doing most of the talking. Sometimes a few “easy” closed questions at the start of an interview can also help to get the ball rolling. Remember, though, too many closed questions in one continuous block will lead to the “interrogation” style mentioned earlier and will not deliver the information you need.
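When preparing a question list, the balance between open and closed questions can be checked mechanically. The sketch below is purely illustrative and assumes each planned question has already been labeled by hand as `"open"` or `"closed"`; the function name and the labels are our own invention, and what counts as too long a run is left to the interviewer's judgment.

```python
def longest_closed_run(kinds):
    """Return the length of the longest unbroken block of closed questions.

    `kinds` is a sequence of hand-assigned labels, "open" or "closed",
    in the order the questions are planned to be asked.
    """
    longest = current = 0
    for kind in kinds:
        # Extend the current block on a closed question, reset on an open one.
        current = current + 1 if kind == "closed" else 0
        longest = max(longest, current)
    return longest


# Illustrative plan: a closed opener, two open questions, a short closed burst.
plan = ["closed", "open", "open", "closed", "closed", "closed", "open"]
print(longest_closed_run(plan))  # 3
```

A long block flagged this way is a hint to break it up with an open question before the interview starts to feel like an interrogation.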

Test process assessments generally involve conducting more than one interview. This presents the opportunity to practice what is sometimes called the “Columbo” interviewing technique (named after the famous American detective series on television). This technique involves the following principal aspects:

Asking different people the same question

Asking the same person very nearly the same question

Pretending to be finished with a subject and then returning with the famous “Oh, just one more point” tactic

Even though it was entertaining to watch Columbo do this, there’s a lesson to be learned. If people are in any way exaggerating or concealing the truth, this kind of questioning technique, used with care, might just give you a hint of what you are looking for: reality.
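Asking different people the same question only pays off if the answers are compared afterward. As a rough sketch of how interview notes might be cross-checked, the following groups answers by question and reports the questions where interviewees disagree. The function name, the tuple layout, and the sample notes are all hypothetical, and real answers are rarely short enough to compare as plain strings, so treat this as a pointer for follow-up rather than a finding.

```python
from collections import defaultdict


def conflicting_answers(notes):
    """Return the questions that received more than one distinct answer.

    `notes` is an iterable of (interviewee, question, answer) tuples.
    The result maps each such question to {answer: [interviewees]}.
    """
    by_question = defaultdict(lambda: defaultdict(list))
    for interviewee, question, answer in notes:
        # Normalize case and whitespace so "Yes" and "yes " count as the same answer.
        by_question[question][answer.strip().lower()].append(interviewee)
    return {q: dict(a) for q, a in by_question.items() if len(a) > 1}


# Made-up notes from three interviews.
notes = [
    ("test manager", "Are test cases reviewed?", "Yes"),
    ("tester A", "Are test cases reviewed?", "no"),
    ("tester B", "Is a defect tool used?", "yes"),
    ("test manager", "Is a defect tool used?", "Yes"),
]
print(conflicting_answers(notes))
# {'Are test cases reviewed?': {'yes': ['test manager'], 'no': ['tester A']}}
```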

When an interview is nearly over, one of the most obvious questions that can be asked is a simple, “Did I miss anything?” It’s not uncommon for people to come to interviews prepared to talk about some testing-related issues that they really want to discuss. Maybe your interview didn’t touch directly on these issues. Asking this simple question gives people the chance to say what they want to say and rounds off a discussion. Even if this isn’t of direct relevance to test process improvement, it can still provide valuable background information about, for example, relationships with stakeholders or between the test manager and the testers. These are the areas that you might miss if, for example, you are following a model-based approach and focus too much on coverage of the model’s checkpoints.

Applying Transactional Analysis

Transactional analysis focuses on the way people interact with each other and provides a framework with which verbal exchanges (transactions) can be understood.

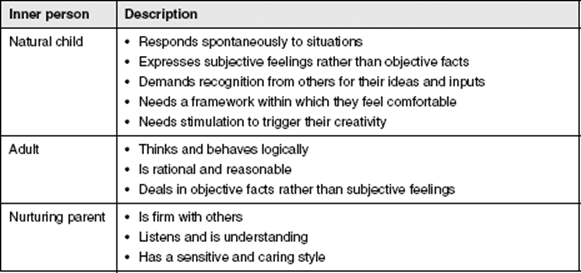

The framework described by Abe Wagner [Wagner 1996] identifies six “inner people” that all of us have. Three of them (Wagner also calls them “ego states”) are effective when it comes to communicating with others and three are ineffective. Let’s consider the effective inner people first (see table 7–1).

Table 7–1 Effective inner people

Transactional analysis: The analysis of transactions between people and within people’s minds; a transaction is defined as a stimulus plus a response. Transactions take place between people and between the ego states (personality segments) within one person’s mind.

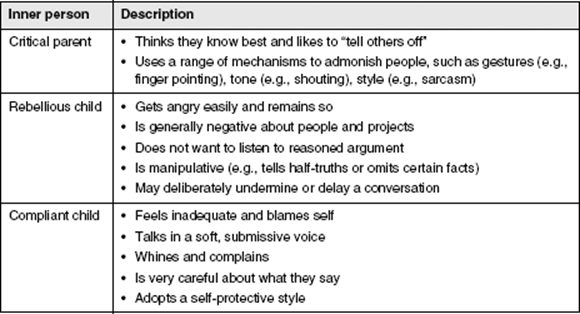

Now let’s consider the ineffective inner people (see table 7–2). These are the “ego states” that we need to avoid in an interview situation, as either interviewer or interviewee or, worst of all, as both.

Table 7–2 Ineffective inner people

The ability to perform transactional analysis is a skill that can be applied by anyone needing a good understanding of how people communicate with each other. This is typically the case for managers but also, in the context of performing interviews, for test process improvers.

So how can transactional analysis be useful to the test process improver as an interviewing skill? Taking your own role as interviewer first, it means that you must strive to establish an “adult-to-adult” interaction with the interviewee. As an interviewer, you must ensure that your “adult” inner person is in the forefront. You are reasonable, understanding, and focused on information gathering. You must not be judgmental (“Oh, that’s bad practice you have there”), blaming (“You can’t be serious!”), or sarcastic (“These reports remind me of a long book I’m reading”). This “critical parent” style is not going to be at all helpful; it could bring out the argumentative “rebellious child” or the whining, self-protective “compliant child” in the interviewee. You could end up in an argument or cause the interviewee to close up.

Recognizing the inner person shown by the interviewee is an essential skill needed by the interviewer. If you, the interviewer, have an “adult” interviewee before you, you can expect a good, factual exchange of information and you can press on with your questions at a reasonably fast pace.

Interviewing someone showing their “natural child” will mean that you will occasionally need to refocus the discussion back to an objective one. You will need to practice your listening skills here and give objective summaries of what you have understood back to the interviewee (see the discussion of active listening in section 7.3.2).

With interviewees who emphasize their ineffective inner persons, the interviewer may have a much more difficult task in getting the information they need. The “rebellious child” might resent being interviewed, exaggerate the negative aspects of the project, be divisive and withhold information, or challenge the questions you ask rather than answer them.

The “rebellious child” and the “compliant child” often show themselves at the start of interviews. This critical stage of the interview is where we, the interviewer, need to be patient, reassuring, and yet firm (see the discussion of getting started later in this section). Explain what the interview is about and why it is being conducted in terms of benefits. Emphasize your neutrality and objectivity. Your aim is to bring the discussion around so that the interviewee is communicating as one of their three effective inner persons (preferably the “adult”). If this approach does not succeed and the interviewee persistently shows an ineffective inner person, you may be justified in breaking off the interview to seek a solution (e.g., resolve problems in a project, nominate an alternative interviewee). Making the decision to terminate an interview may be a last resort, but it is preferable to conducting an ineffective interview.

Having skills in transactional analysis can help interviewers to be more discerning about the answers and information they are receiving from an interviewee. In this book, we have only touched on the basics of transactional analysis. If you want to develop your skills further in this area, we suggest you start with Isabel Evans’s book Achieving Software Quality through Teamwork [Evans 2004], which provides a useful summary and some examples. The works of Abe Wagner, specifically The Transactional Manager [Wagner 1996], give further understanding on how transactional analysis can be applied in a management context.

Getting Started, Keeping the Interview on Track, and Closing the Interview

Test process assessments generally involve conducting interviews that should last no more than two hours. This may sound like a long time, but typically there will be several individual subjects to be covered. If practically possible, it can be effective to schedule shorter, more frequent interviews (e.g., each lasting one hour) so that the interviewee’s concentration and motivation can be maintained.

Without a controlled start, you might fail to create the right atmosphere for the interview. Without the skills to keep an interview under control, you may find that some subjects get covered in too much detail while others are missed completely. Here are some tips that will help you in these areas; they provide an insight into the skills needed to conduct an interview (see section 6.3.3):

Don’t dive straight into the factual aspects of an interview. Remember, the ability to make “small talk” at the start of an interview is a perfect chance to build initial bonds with the interviewee and establish a good level of openness (e.g., talk about the weather, the view from the office, the journey to work).

Tell the interviewee about the purpose of the interview, what is in scope and out of scope, and the way it will be conducted. Doing so before the first question is asked will reassure the interviewee and enable the discussion to be more open. This “smoothing the way” requires both interviewing skills and the ability to understand the nonfactual aspects of what is said (see the discussion of transactional analysis earlier). Remember, the interviewee may be stressed by their project workload, concerned that having a “good” interview must reflect well on their work or project, or simply withdrawn and defensive because they have not been informed adequately. Running through a quick checklist like the following can help an interview start out well:

– Thanks: Thank them for taking time from their work (they are probably busy).

– Discussion, not interrogation: We are going to have a discussion about the test process.

– Confidentiality: All information will be treated confidentially.

– Focus: The assessment is focused on the test process, not you personally.

– Purpose: The interview will help to identify test process improvements.

Interviews should be conducted according to the plans and preparations made (see section 6.3.2). However, interviews rarely run exactly as planned, so flexibility must be practiced by the interviewer. Some issues may arise during the interview that justify further discussion, and some planned items may turn out to be redundant (e.g., if the interviewee simply states, “We have no tools here” it’s pointless to discuss issues of test automation any further). The interviewer should adjust the plan as required to keep the flow of the discussion going. If topics arise that you prefer to discuss later (e.g., because they are not in the agreed-upon scope), then explicitly communicate this to the interviewee, but try not to defer too often; it can give the impression that you are not interested in what the interviewee is saying.

The end of the interview is determined by the assessor and is normally based on achievement of agreed-upon scope or time constraints. Even with the most well-planned and -conducted interviews, time can run out. If this happens, make sure the interviewee is made aware of the areas in scope that could not be covered and make arrangements (where possible, immediately) for a follow-up interview. Get used to mentally running through the following checklist when closing the interview:

– Thank the interviewee for their time.

– Ask the interviewee if the interview met their expectations and if any improvements could be made.

– Tell the interviewee what will happen to the information provided.

– Briefly explain what the remainder of the assessment process looks like.

In exceptional situations, the assessor may decide to break off the interview. Perhaps the interview room doesn’t provide enough privacy, maybe the interviewee is simply unable or unwilling to answer questions, or maybe there are communication difficulties that cannot be overcome (e.g., language or technical problems).

Avoiding Codependent Behavior

Codependence can occur in a number of situations, especially where we need to reach agreement on something. For the test process improver, these situations typically occur when proposing, agreeing upon, and prioritizing improvements to the test process (see section 6.4) and when they perform interviews. An awareness of codependence can help the interviewer in two principal ways: to identify situations where a test process contains codependencies and to prevent codependencies from developing during an interview between interviewer and interviewee.

A typical codependency in a test process might be, for example, when the test manager takes on the task of entering defect reports into a defect management tool to compensate for the inability of testers to do this themselves (e.g., through lack of skills, time, or motivation). The testers are content to live with this situation and come to rely on the test manager to take on this task for them. The test manager gets more and more frustrated but can’t find a way out without causing difficulties in the team. They don’t want to let the team down, so they carry on with the codependent situation until it becomes a “standard” part of their test process. When performing interviews, the test process improver needs to recognize such codependencies and get to the bottom of why this practice has become standard. Improvement suggestions then focus on the reason for the codependency; suggestions in this case may be to give testers adequate training and resources in using the defect management tool. The independence of the test process improver is important in resolving such codependent situations; both sides in the codependency can more easily accept a proposed solution from someone outside of the particular situation.

Codependent behavior: Excessive emotional or psychological dependence on another person, specifically in trying to change that person’s current (undesirable) behavior while supporting them in continuing that behavior.

Codependencies can develop during an interview when the interviewer compensates for the test process deficiencies revealed in the conversation by the interviewee. Perhaps the interviewee is known and liked by the interviewer and the interviewer doesn’t want to make trouble for the interviewee by identifying weaknesses in their part of the test process. If the interviewer makes this known by saying things like, “Don’t worry, I’ll ignore that,” “Never mind,” or, with a grin, “I didn’t hear that,” the interviewee comes to expect that their parts of the test process that need correcting will remain untouched. There are several reasons codependency can develop in an interview, but the end result is always the same. In the words of Lee Copeland, we end up “doing all the wrong things for all the right reasons.” Once again, using an independent (external) interviewer can help avoid such codependencies. Even then, however, interviewers still need the necessary skills and experience to recognize and prevent codependent situations from developing in interviews. You must never deny the presence of risk because you or someone you like might be criticized if you mention it.

Ability to Apply Emotional Intelligence

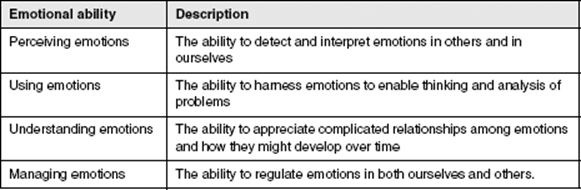

An understanding of emotional intelligence (EI) is important for test process improvers when performing interviews. Generally speaking, the concept of EI (see Emotional Intelligence: Key Readings on the Mayer and Salovey Model [Mayer 2004]) recognizes that factual information is often just one aspect of the information being communicated (we will discuss this further in section 7.3.2) and that it’s critical to process the nonfactual “emotional” content of messages correctly. A model developed by Mayer and Salovey [Mayer 2004] notes that people vary in their ability to communicate and understand at the emotional level and describes four types of skills that are important in dealing with emotions (see table 7–3).

Table 7–3 Types of emotional ability

The emotionally intelligent test process improver can do the following:

Perceive changes of mood during an interview by recognizing them in an interviewee’s face and voice. Perhaps a question that pinpoints a weakness in the test process causes the interviewee to frown or to become hesitant.

Use perceived emotional information to obtain specific information or views from the interviewee. Perhaps an open follow-up question is asked to obtain more information (e.g., “What is your feeling about that?”).

Manage emotions to achieve the objectives of the interview. Perhaps the interviewer uses small talk to create a relaxed mood and encourage the interviewee to talk openly.

The ability-based model proposed by Mayer and Salovey also enables EI to be measured, but it is not expected that test process improvers can conduct these measurements themselves.

Emotional intelligence: The ability, capacity, and skill to identify, assess, and manage the emotions of one’s self, of others, and of groups.

Test process improvers should use their emotional skills with care during an interview. In particular, the management of emotions, if incorrectly used, may lead to a loss of the “adult-adult” situation you are aiming for in an interview (see the discussion on transactional analysis earlier in this chapter).

7.3.2 Listening Skills

Have you ever played the game where five or more people stand in a line and each passes on a message to the next person in line? When the message reaches the end of the line, the first and the last person write down their messages and they are compared. Everyone laughs at how the original message has changed. For example, a message that started as “Catch the 9 o’clock bus from George Street and change onto the Red Line in the direction of Shady Grove” transforms into “Catch the red bus from Michael Street and change there for Shady Lane.” This bit of fun demonstrates the human tendency to filter information, to leave out or add certain details, to transform our understanding, and to simply get the details mixed up. This transformation effect makes life difficult for developers and testers when they try to interpret incorrect, incomplete, or inconsistent requirements.

When conducting interviews, this is not going to happen to the same degree as illustrated in the example, but there is always a certain degree of transformation that takes place. Maybe you miss an important detail, maybe you interpreted “test data” to be the primary data associated with a test case instead of the secondary, background data needed to make the test case run, or maybe you mixed up the names of departments responsible for particular testing activities.

What can you do about this? Where requirements engineers have techniques available to overcome the problem, such as documenting requirements in a normalized format or applying requirements templates and rules, interviewers need other approaches that can be applied in real-time interview situations. In these situations, the following two approaches can be of particular use:

Practice active listening

Analyze messages

Active Listening

When you practice this technique in an interview, you are continuously applying the following steps:

![]() Ask a question.

Ask a question.

![]() Listen carefully (apply the transactional analysis discussed earlier).

Listen carefully (apply the transactional analysis discussed earlier).

![]() Maintain eye contact (but don’t stare!).

Maintain eye contact (but don’t stare!).

Make the interviewee aware that you are listening and interested in what they are saying, perhaps with an occasional nod or a quietly spoken “Okay, aha.” Don’t look out of the window or busy yourself with other tasks, other than taking occasional notes.

Wait until the interviewee is finished speaking and avoid interrupting them unless it is absolutely necessary (for example, if they misunderstood the question or are moving way off the subject).

Once the interviewee has finished with their answer, give them feedback by repeating what you have understood. This isn’t a playback of every word; it’s a summary of the message you understood, perhaps broken down into the constituent parts discussed earlier when we covered transactional analysis.

Listen for confirmation or correction.

Continue with the next question.

This continuous cycle of asking, listening, and giving feedback eliminates many of the misunderstandings and transformational errors that might otherwise result in incorrect findings and ultimately even incorrect proposals being made for test process improvement.

Analyze Messages

When someone communicates with us, they don’t just give us facts. You may have heard the expression “reading between the lines.” This comes from the general observation that messages (spoken words or written text) often convey more than their factual content, whether this be deliberate or accidental.

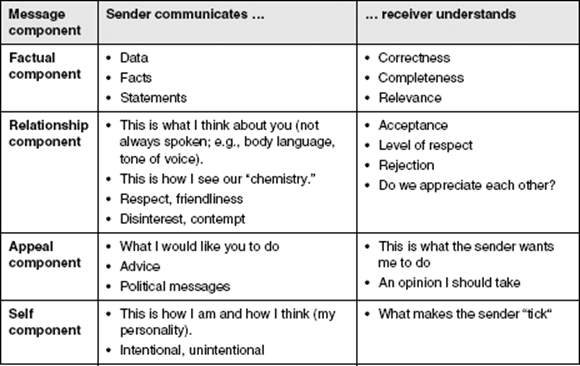

Analyzing the messages you receive helps you understand the hidden, non-factual elements being communicated. With careful listening and an appreciation for the different components in spoken messages, you can improve your ability to understand effectively and make the most of available interview time. The four components of a message (transaction) identified by Schulz von Thun [von Thun 2008] are described in abbreviated form in table 7–4.

Table 7–4 Message components

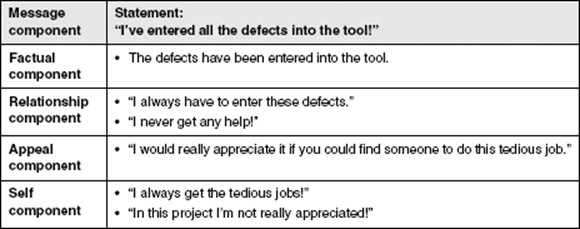

Let’s see how these four components may be identified in a typical message. Imagine you are at the weekly project status meeting. One of the team leads looks at the test manager and says, “I’ve entered all the defects into the tool!” The tone is one of exhaustion and exasperation. The team lead raises both arms over his head as he makes the statement. Now, suppose you want to find out if the defect management is working effectively. How might you analyze this statement according to the four components mentioned in table 7–4 (from the sender’s point of view)? This is shown in table 7–5.

Table 7–5 Example of message components

If you focused on just the facts in this example, you would be unlikely to identify ways to improve defect management. Breaking the message down into its components and then looking at each one, you might find that the “appeal” component, for example, reveals possibilities for making suggestions. You might see the potential in using additional tool support to make defect entry easier or in training testers to use the defect management tool more efficiently. You would need to find out more before you can actually make these specific suggestions, but with transactional analysis skills, you can more easily identify promising areas for improvement.

In interview situations the ability to analyze messages can also be useful when deciding on the next questions to ask. In that sense, you can also consider this to be an interviewing skill (see section 7.3.1). In this example, your next question might be one of these:

How do testers record defects in your project?

Does everyone have access to the defect management tool?

Are all testers able to use the defect management tool correctly?

Analyzing the message is not an easy skill to develop, but it helps you to “listen” well, it can give you better interview techniques, and it can be a useful method to identify potential improvements.

7.3.3 Presentation and Reporting Skills

As experts in the field of test process improvement, one of the most important things we need to do is to get our message across effectively and to the right people. Without these skills, we will fail to get the necessary commitment from decision makers for the specific improvements we propose and the people affected by those improvements will not fully “buy in” (refer to chapter 8, “Managing Change”).

At the Expert Level, it is expected that you already have the basic skills of presenting information (e.g., using slides or flip charts) and writing reports such as test status reports [ISTQB-CTAL-TM]. This section builds on your existing knowledge by considering the following key elements:

Knowing your audience

Summarizing information

Developing your presentation skills

Developing your reporting skills (including emails)

Knowing Your Audience

If you are unaware of a person’s role and responsibilities and their relevance to the test process as a stakeholder, there is a good chance that your message will not come across as well as it should. Testers or developers who need details will find it difficult to relate high-level presentations and reports to their specific tasks. Line managers need sufficient information to make the right tactical (i.e., project-relevant) and/or strategic (i.e., company-wide) decisions. Give them too much detail and they will likely come back to you with questions like, “What does all this mean?” or statements like, “I can’t make sense of all this detail.” At worst they might simply ignore your message entirely and potentially endanger the chances of implementing your proposed test process improvements.

So how can you sharpen your awareness of stakeholder issues and recognize specific attributes in the people you interact with? One way is to consider the different categories of stakeholders as discussed by Isabel Evans [Evans 2004] and in chapter 2. Each of the stakeholder categories (e.g., developers, managers) has a distinct view of what they consider quality. Recognizing these categories of stakeholders is an essential skill when reporting and presenting because it enables you to adjust the type and depth of information you provide on the test process and any proposed improvements. Developers want detailed information about any proposed changes to, for example, component testing procedures or specifications; they take a “manufacturing-oriented” view of quality. Managers will want consolidated data on how proposals will impact their available resources and overall test process efficiency; they have a “value-oriented” view of quality.

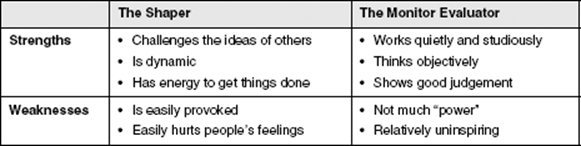

You can extend this concept by including personality types and by considering how specific individuals in a project or organization relate to each other. There are a number of such categorizations that can be used here. Dr. Meredith Belbin provides a scheme that enables us to recognize the principal strengths and weaknesses of a particular person [Belbin 2010]. Work carried out in the field of test team dynamics has shown that natural personality traits can be used to group types of people together based on their behavior. Dr. Belbin carried out research in this field and found nine distinct role types (Team Worker, Plant, Coordinator, Monitor Evaluator, Completer Finisher, Shaper, Specialist, Implementer, Resource Investigator). Compare, for example, the two Belbin roles Shaper and Monitor Evaluator, which are shown in table 7–6 in highly condensed form:

Table 7–6 Example of Belbin roles

Test managers need to know about these roles to support team building and to understand group dynamics. Test process improvers can also use them to help direct their message in the most appropriate way. Let’s say, for example, that you are presenting a test improvement plan to some people and you know from previous experience they include Monitor Evaluators and Shapers. If some of your proposed changes could meet resistance, who would you pick to introduce and pilot them? Would you address the Monitor Evaluators or the Shapers? Our choice would be the Shapers, the people with the power to get things done in the face of possible resistance. This doesn’t mean we would ignore the others in the group, but it does mean we would pay extra attention to the views of the Shapers, getting their feedback and assessing their commitment to introduce the proposed changes.

If you prefer to use something other than the Belbin roles, the Myers-Briggs Type Indicator (MBTI) is an alternative (see also section 8.6.1); a further option might be to consider the work of Abe Wagner on transactional analysis [Wagner 1996] mentioned earlier. The MBTI is an instrument that describes individual preferences for using energy, processing information, making decisions, and relating to the external world [Briggs Myers and Myers 1995]. These preferences result in a four-letter “type” that can be helpful in understanding different personalities. The personality types differ in how people communicate and in how you might best communicate with them. You may have taken an MBTI test in the past. For those who haven’t, here is a basic outline of the MBTI’s four preferences. Each preference has two endpoints:

The first preference describes the source of your energy—introvert or extrovert.

How one processes information is the next preference—sensing or intuitive.

Decision-making is the third preference—thinking or feeling.

The fourth preference—judging or perceiving—deals with how an individual relates to the external world.

Summarizing Information

Test process assessments often result in a large amount of information being collected, regardless of whether a model-based or analytical approach is followed. Consolidating and summarizing this information to make it “digestible” for the intended stakeholders is a key skill. Without the ability to strip out non-essential details and focus on key points at the right level of abstraction, there is a good chance you will swamp your intended audiences with information. In addition, you will find it more difficult to identify the improvements that will really make a difference to the test process. Here are a few tips that will help you to summarize and consolidate information:

Deliberately limit the time or physical space you have in which to make your points. This could be, for example, a maximum number of slides in a presentation or maximum number of pages in a report. This approach applies in particular to management summaries, which rarely exceed one or two slides/pages. The motto here is “Reduce to the maximum” (i.e., maximum information for the minimum outlay of time or physical space).

Don’t try to cover everything. Use the Pareto Principle to find the 20 percent of information that accounts for 80 percent of what really matters. You will not be thanked for including all the minor points in your report or presentation just for the sake of being thorough and complete.

Always relate your summary points to the appropriate stakeholder. Describe what a proposed improvement would mean for them, and where possible, try to use their own “language,” whether it’s the language of a manager (facts and figures, costs and benefits) or the language of the test manager (specific test documents, steps in the test process).