Computer Organization and Design (2016)

APPENDIX B

The Basics of Logic Design

Abstract

This appendix provides a brief discussion of the basics of logic design. It begins with an introduction to the basic building blocks of logic, gates. It then shows how to use these building blocks to construct simple combinational logic systems. It covers the design of an ALU for the MIPS processor, shows how to make a fast adder, and includes a short introduction to the topic of clocking. Memory elements are also covered, as well as the design and use of finite-state machines. The appendix concludes with an explanation of the basics of how edge-triggered clocking works, an introduction to another clocking scheme, and a brief description of the problem of synchronizing asynchronous inputs.

Keywords

gates, truth tables, logic equations, combinational logic, hardware description language, HDL, arithmetic logic unit, ALU, carry lookahead, clock, flip-flop, latch, register, SRAM, DRAM, finite-state machine, timing, field programmable device, FPGA

I always loved that word, Boolean.

Claude Shannon, IEEE Spectrum, April 1992 (Shannon’s master’s thesis showed that the algebra invented by George Boole in the 1800s could represent the workings of electrical switches.)

B.1 Introduction

B.2 Gates, Truth Tables, and Logic Equations

B.3 Combinational Logic

B.4 Using a Hardware Description Language

B.5 Constructing a Basic Arithmetic Logic Unit

B.6 Faster Addition: Carry Lookahead

B.7 Clocks

B.8 Memory Elements: Flip-Flops, Latches, and Registers

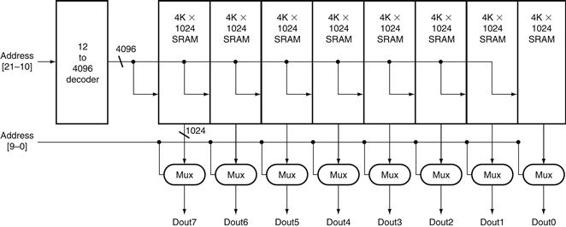

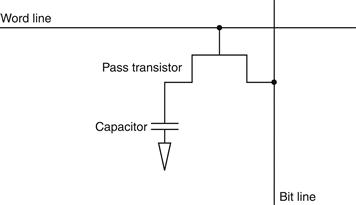

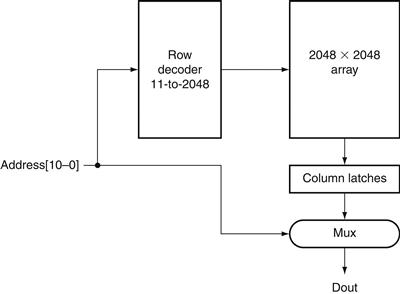

B.9 Memory Elements: SRAMs and DRAMs

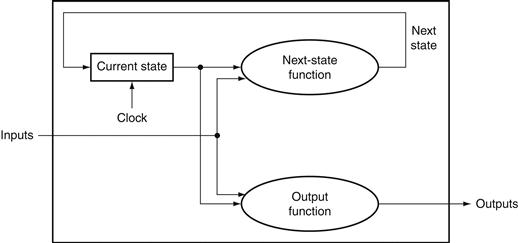

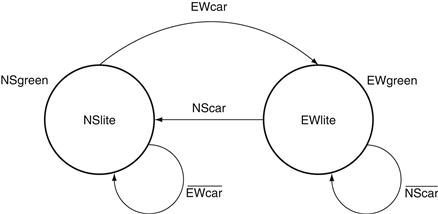

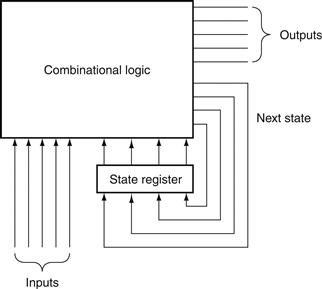

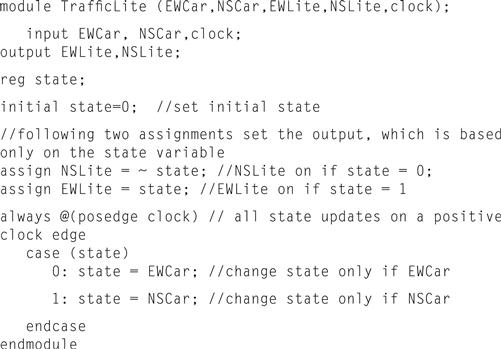

B.10 Finite-State Machines

B.11 Timing Methodologies

B.12 Field Programmable Devices

B.13 Concluding Remarks

B.14 Exercises

B.1 Introduction

This appendix provides a brief discussion of the basics of logic design. It does not replace a course in logic design, nor will it enable you to design significant working logic systems. If you have little or no exposure to logic design, however, this appendix will provide sufficient background to understand all the material in this book. In addition, if you are looking to understand some of the motivation behind how computers are implemented, this material will serve as a useful introduction. If your curiosity is aroused but not sated by this appendix, the references at the end provide several additional sources of information.

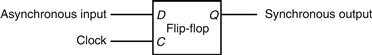

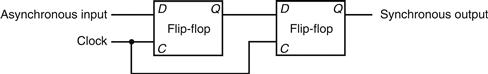

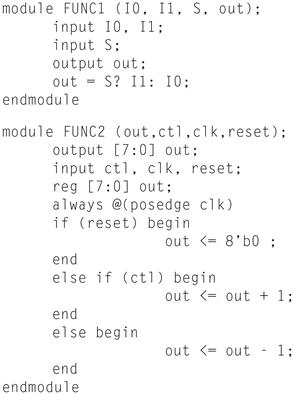

Section B.2 introduces the basic building blocks of logic, namely, gates. Section B.3 uses these building blocks to construct simple combinational logic systems, which contain no memory. If you have had some exposure to logic or digital systems, you will probably be familiar with the material in these first two sections. Section B.5 shows how to use the concepts of Sections B.2 and B.3 to design an ALU for the MIPS processor. Section B.6 shows how to make a fast adder, and may be safely skipped if you are not interested in this topic. Section B.7 is a short introduction to the topic of clocking, which is necessary to discuss how memory elements work. Section B.8 introduces memory elements, and Section B.9 extends it to focus on random access memories; it describes both the characteristics that are important to understanding how they are used in Chapter 4, and the background that motivates many of the aspects of memory hierarchy design in Chapter 5. Section B.10 describes the design and use of finite-state machines, which are sequential logic blocks. If you intend to read Appendix D, you should thoroughly understand the material in Sections B.2 through B.10. If you intend to read only the material on control in Chapter 4, you can skim the appendices; however, you should have some familiarity with all the material except Section B.11. Section B.11 is intended for those who want a deeper understanding of clocking methodologies and timing. It explains the basics of how edge-triggered clocking works, introduces another clocking scheme, and briefly describes the problem of synchronizing asynchronous inputs.

Throughout this appendix, where it is appropriate, we also include segments to demonstrate how logic can be represented in Verilog, which we introduce in Section B.4. A more extensive and complete Verilog tutorial appears elsewhere on the CD.

B.2 Gates, Truth Tables, and Logic Equations

The electronics inside a modern computer are digital. Digital electronics operate with only two voltage levels of interest: a high voltage and a low voltage. All other voltage values are temporary and occur while transitioning between the values. (As we discuss later in this section, a possible pitfall in digital design is sampling a signal when it is not clearly either high or low.) The fact that computers are digital is also a key reason they use binary numbers, since a binary system matches the underlying abstraction inherent in the electronics. In various logic families, the values and relationships between the two voltage values differ. Thus, rather than refer to the voltage levels, we talk about signals that are (logically) true, or 1, or are asserted; or signals that are (logically) false, or 0, or are deasserted. The values 0 and 1 are called complements or inverses of one another.

asserted signal

A signal that is (logically) true, or 1.

deasserted signal

A signal that is (logically) false, or 0.

Logic blocks are categorized as one of two types, depending on whether they contain memory. Blocks without memory are called combinational; the output of a combinational block depends only on the current input. In blocks with memory, the outputs can depend on both the inputs and the value stored in memory, which is called the state of the logic block. In this section and the next, we will focus only on combinational logic. After introducing different memory elements in Section B.8, we will describe how sequential logic, which is logic including state, is designed.

combinational logic

A logic system whose blocks do not contain memory and hence compute the same output given the same input.

sequential logic

A group of logic elements that contain memory and hence whose value depends on the inputs as well as the current contents of the memory.

Truth Tables

Because a combinational logic block contains no memory, it can be completely specified by defining the values of the outputs for each possible set of input values. Such a description is normally given as a truth table. For a logic block with $n$ inputs, there are $2^n$ entries in the truth table, since there are that many possible combinations of input values. Each entry specifies the value of all the outputs for that particular input combination.

Truth Tables

Example

Consider a logic function with three inputs, A, B, and C, and three outputs, D, E, and F. The function is defined as follows: D is true if at least one input is true, E is true if exactly two inputs are true, and F is true only if all three inputs are true. Show the truth table for this function.

Answer

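The truth table will contain $2^3 = 8$ entries. Here it is:

| A | B | C | D | E | F |
|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 1 | 0 | 0 |
| 0 | 1 | 0 | 1 | 0 | 0 |
| 0 | 1 | 1 | 1 | 1 | 0 |
| 1 | 0 | 0 | 1 | 0 | 0 |
| 1 | 0 | 1 | 1 | 1 | 0 |
| 1 | 1 | 0 | 1 | 1 | 0 |
| 1 | 1 | 1 | 1 | 0 | 1 |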
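The definition in this example can also be enumerated mechanically. The following is a minimal sketch in Python, used here only as executable notation rather than as a hardware description; the function names `example_outputs` and `truth_table` are illustrative assumptions, not names from this book.

```python
from itertools import product

def example_outputs(a, b, c):
    """Return (D, E, F) for the three-input example function."""
    ones = a + b + c
    d = int(ones >= 1)  # D: at least one input is true
    e = int(ones == 2)  # E: exactly two inputs are true
    f = int(ones == 3)  # F: all three inputs are true
    return d, e, f

def truth_table():
    """One (A, B, C, D, E, F) row per input combination, in binary order."""
    return [(a, b, c, *example_outputs(a, b, c))
            for a, b, c in product((0, 1), repeat=3)]

if __name__ == "__main__":
    print("A B C | D E F")
    for a, b, c, d, e, f in truth_table():
        print(f"{a} {b} {c} | {d} {e} {f}")
```

Because `product((0, 1), repeat=3)` yields the input combinations in increasing binary order, the rows come out in the conventional truth-table order.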

Truth tables can completely describe any combinational logic function; however, they grow in size quickly and may not be easy to understand. Sometimes we want to construct a logic function that will be 0 for many input combinations, and we use a shorthand of specifying only the truth table entries for the nonzero outputs. This approach is used in Chapter 4 and Appendix D.

Boolean Algebra

Another approach is to express the logic function with logic equations. This is done with the use of Boolean algebra (named after Boole, a 19th-century mathematician). In Boolean algebra, all the variables have the values 0 or 1 and, in typical formulations, there are three operators:

■ The OR operator is written as +, as in A + B. The result of an OR operator is 1 if either of the variables is 1. The OR operation is also called a logical sum, since its result is 1 if either operand is 1.

■ The AND operator is written as ·, as in A · B. The result of an AND operator is 1 only if both inputs are 1. The AND operator is also called logical product, since its result is 1 only if both operands are 1.

■ The unary operator NOT is written as $\overline{A}$. The result of a NOT operator is 1 only if the input is 0. Applying the operator NOT to a logical value results in an inversion or negation of the value (i.e., if the input is 0 the output is 1, and vice versa).

There are several laws of Boolean algebra that are helpful in manipulating logic equations.

■ Identity law: A + 0 = A and A · 1 = A.

■ Zero and One laws: A + 1 = 1 and A · 0 = 0.

■ Inverse laws: $A + \overline{A} = 1$ and $A \cdot \overline{A} = 0$.

■ Commutative laws: A + B = B + A and A · B = B · A.

■ Associative laws: A + (B + C) = (A + B) + C and A · (B · C) = (A · B) · C.

■ Distributive laws: A · (B + C) = (A · B) + (A · C) and A + (B · C) = (A + B) · (A + C).

In addition, there are two other useful theorems, called DeMorgan’s laws, that are discussed in more depth in the exercises.
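Since every variable takes only the values 0 and 1, each of these laws can be checked by trying all input combinations. The sketch below does that in Python, again only as executable notation; the names `holds`, `LAWS`, and `check_all` are illustrative, and DeMorgan's laws are written in their standard form, which the text above does not spell out.

```python
from itertools import product

def NOT(a):
    """Logical inversion of a single 0/1 value."""
    return 1 - a

def holds(law, n_vars):
    """True if `law` is true for every 0/1 assignment of its n_vars inputs."""
    return all(law(*bits) for bits in product((0, 1), repeat=n_vars))

# Each entry: (number of variables, predicate that must hold for all inputs).
# OR is written |, AND is written &.
LAWS = {
    "identity": (1, lambda a: (a | 0) == a and (a & 1) == a),
    "zero and one": (1, lambda a: (a | 1) == 1 and (a & 0) == 0),
    "inverse": (1, lambda a: (a | NOT(a)) == 1 and (a & NOT(a)) == 0),
    "commutative": (2, lambda a, b: (a | b) == (b | a) and (a & b) == (b & a)),
    "associative": (3, lambda a, b, c: (a | (b | c)) == ((a | b) | c)
                    and (a & (b & c)) == ((a & b) & c)),
    "distributive": (3, lambda a, b, c: (a & (b | c)) == ((a & b) | (a & c))
                     and (a | (b & c)) == ((a | b) & (a | c))),
    # Standard statement of DeMorgan's laws (an addition of this sketch).
    "DeMorgan": (2, lambda a, b: NOT(a | b) == (NOT(a) & NOT(b))
                 and NOT(a & b) == (NOT(a) | NOT(b))),
}

def check_all():
    """Map each law name to whether it holds for all inputs."""
    return {name: holds(law, n) for name, (n, law) in LAWS.items()}

if __name__ == "__main__":
    for name, ok in check_all().items():
        print(f"{name:13s} {'holds' if ok else 'FAILS'}")
```

An exhaustive check of this kind confirms a law for Boolean values but is not a substitute for the algebraic manipulations the laws are meant to support.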

Any set of logic functions can be written as a series of equations with an output on the left-hand side of each equation and a formula consisting of variables and the three operators above on the right-hand side.

Logic Equations

Example

Show the logic equations for the logic functions, D, E, and F, described in the previous example.

Answer

Here’s the equation for D:

$D = A + B + C$

F is equally simple:

$F = A \cdot B \cdot C$

E is a little tricky. Think of it in two parts: what must be true for E to be true (two of the three inputs must be true), and what cannot be true (all three cannot be true). Thus we can write E as

$E = ((A \cdot B) + (A \cdot C) + (B \cdot C)) \cdot \overline{(A \cdot B \cdot C)}$

We can also derive E by realizing that E is true only if exactly two of the inputs are true. Then we can write E as an OR of the three possible terms that have two true inputs and one false input:

$E = (A \cdot B \cdot \overline{C}) + (A \cdot C \cdot \overline{B}) + (B \cdot C \cdot \overline{A})$

Proving that these two expressions are equivalent is explored in the exercises.
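Before attempting the algebraic proof, the equivalence can be confirmed by exhaustive evaluation. The following Python sketch (executable notation only; all function names are illustrative) encodes the "two true, but not all three" form and the three-product-term form described above and compares both against the word definition of E.

```python
from itertools import product

def e_spec(a, b, c):
    """E by definition: exactly two of the three inputs are true."""
    return int(a + b + c == 2)

def e_two_part(a, b, c):
    """At least two inputs true AND NOT all three inputs true."""
    at_least_two = (a & b) | (a & c) | (b & c)
    all_three = a & b & c
    return at_least_two & (1 - all_three)

def e_sum_of_products(a, b, c):
    """OR of the three terms with two true inputs and one false input."""
    return (a & b & (1 - c)) | (a & c & (1 - b)) | (b & c & (1 - a))

def equivalent(f, g, n_vars=3):
    """True if f and g agree on every 0/1 input combination."""
    return all(f(*bits) == g(*bits) for bits in product((0, 1), repeat=n_vars))

if __name__ == "__main__":
    print(equivalent(e_two_part, e_sum_of_products))
    print(equivalent(e_spec, e_two_part))
```

With only eight input combinations the check is trivial; the exercise still asks for a derivation using the laws of Boolean algebra.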

In Verilog, we describe combinational logic whenever possible using the assign statement, which is described beginning on page B-23. We can write a definition for E using the Verilog exclusive-OR operator as assign E = ~(A ^ B ^ C) & (A | B | C) & ~(A & B & C), which is yet another way to describe this function. D and F have even simpler representations, which are just like the corresponding C code: assign D = A | B | C and assign F = A & B & C.

Gates

Logic blocks are built from gates that implement basic logic functions. For example, an AND gate implements the AND function, and an OR gate implements the OR function. Since both AND and OR are commutative and associative, an AND or an OR gate can have multiple inputs, with the output equal to the AND or OR of all the inputs. The logical function NOT is implemented with an inverter that always has a single input. The standard representation of these three logic building blocks is shown in Figure B.2.1.

gate

A device that implements basic logic functions, such as AND or OR.

![]()

FIGURE B.2.1 Standard drawing for an AND gate, OR gate, and an inverter, shown from left to right.

The signals to the left of each symbol are the inputs, while the output appears on the right. The AND and OR gates both have two inputs. Inverters have a single input.

Rather than draw inverters explicitly, a common practice is to add “bubbles” to the inputs or outputs of a gate to cause the logic value on that input line or output line to be inverted. For example, Figure B.2.2 shows the logic diagram for the function $\overline{\overline{A} + B}$, using explicit inverters on the left and bubbled inputs and outputs on the right.

![]()

FIGURE B.2.2 Logic gate implementation of $\overline{\overline{A} + B}$ using explicit inverters on the left and bubbled inputs and outputs on the right.

This logic function can be simplified to $A \cdot \overline{B}$ or in Verilog, A & ~B.

Any logical function can be constructed using AND gates, OR gates, and inversion; several of the exercises give you the opportunity to try implementing some common logic functions with gates. In the next section, we’ll see how an implementation of any logic function can be constructed using this knowledge.

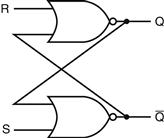

In fact, all logic functions can be constructed with only a single gate type, if that gate is inverting. The two common inverting gates are called NOR and NAND and correspond to inverted OR and AND gates, respectively. NOR and NAND gates are called universal, since any logic function can be built using this one gate type. The exercises explore this concept further.
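One standard way to see that NAND is universal is to build NOT, AND, and OR from it. The sketch below does so in Python (executable notation only); the helper names `nand`, `not_`, `and_`, and `or_` are illustrative, and the constructions shown are the usual textbook ones rather than anything specific to this appendix.

```python
def nand(a, b):
    """Two-input NAND: an inverted AND."""
    return 1 - (a & b)

def not_(a):
    """NOT from one NAND gate: tie both inputs together."""
    return nand(a, a)

def and_(a, b):
    """AND from two NAND gates: NAND followed by an inverter."""
    return not_(nand(a, b))

def or_(a, b):
    """OR from three NAND gates: invert both inputs, then NAND them."""
    return nand(not_(a), not_(b))

if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", and_(a, b), "OR:", or_(a, b))
```

Since AND, OR, and inversion suffice for any logic function, having all three from NAND alone is enough; the same argument works for NOR with the roles of AND and OR exchanged.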

NOR gate

An inverted OR gate.

NAND gate

An inverted AND gate.

Check Yourself

Are the following two logical expressions equivalent? If not, find a setting of the variables to show they are not:

■ ![]()

■ ![]()

B.3 Combinational Logic

In this section, we look at a couple of larger logic building blocks that we use heavily, and we discuss the design of structured logic that can be automatically implemented from a logic equation or truth table by a translation program. Last, we discuss the notion of an array of logic blocks.

Decoders

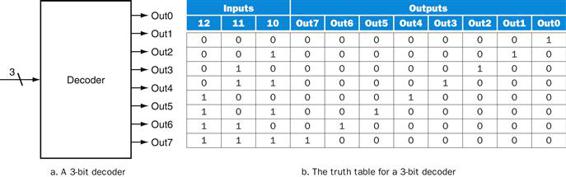

One logic block that we will use in building larger components is a decoder. The most common type of decoder has an $n$-bit input and $2^n$ outputs, where only one output is asserted for each input combination. This decoder translates the $n$-bit input into a signal that corresponds to the binary value of the $n$-bit input. The outputs are thus usually numbered, say, Out0, Out1, …, Out$(2^n - 1)$. If the value of the input is $i$, then Out$i$ will be true and all other outputs will be false. Figure B.3.1 shows a 3-bit decoder and the truth table. This decoder is called a 3-to-8 decoder since there are 3 inputs and 8 ($2^3$) outputs. There is also a logic element called an encoder that performs the inverse function of a decoder, taking $2^n$ inputs and producing an $n$-bit output.

decoder

A logic block that has an $n$-bit input and $2^n$ outputs, where only one output is asserted for each input combination.

FIGURE B.3.1 A 3-bit decoder has 3 inputs, called I2, I1, and I0, and $2^3 = 8$ outputs, called Out0 to Out7.

Only the output corresponding to the binary value of the input is true, as shown in the truth table. The label 3 on the input to the decoder says that the input signal is 3 bits wide.
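A decoder's behavior can be sketched at the gate level: each output is the AND of every input bit, taken in true or complemented form according to that output's index. The Python below is executable notation only, and the function name `decode` and its most-significant-bit-first argument order are assumptions of this sketch.

```python
def decode(bits):
    """Decode an n-bit input, given MSB first, into 2**n one-hot outputs.

    Returns [Out0, Out1, ..., Out(2**n - 1)].
    """
    n = len(bits)
    outputs = []
    for index in range(2 ** n):
        out = 1
        for pos, bit in enumerate(bits):
            # Bit of `index` that lines up with this input position.
            want = (index >> (n - 1 - pos)) & 1
            # AND in the true input if a 1 is wanted, else its complement.
            out &= bit if want else 1 - bit
        outputs.append(out)
    return outputs

if __name__ == "__main__":
    # Input value 5 (binary 101) on a 3-to-8 decoder asserts only Out5.
    print(decode((1, 0, 1)))
```

For a 3-bit input this produces eight outputs, exactly one of which is 1 for any input value.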

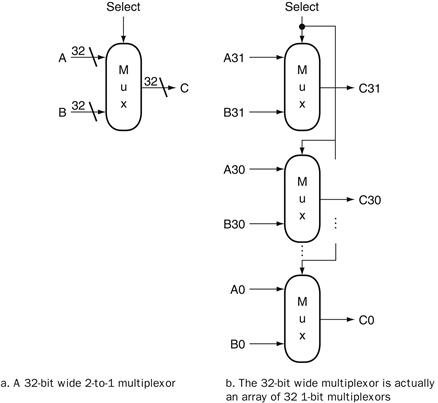

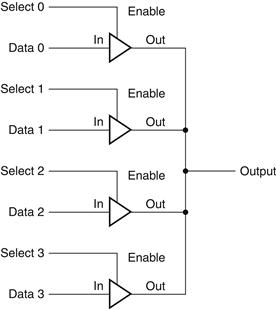

Multiplexors

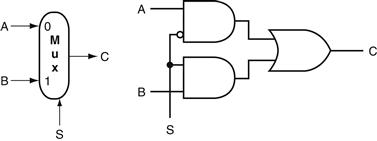

One basic logic function that we use quite often in Chapter 4 is the multiplexor. A multiplexor might more properly be called a selector, since its output is one of the inputs that is selected by a control. Consider the two-input multiplexor. The left side of Figure B.3.2 shows this multiplexor has three inputs: two data values and a selector (or control) value. The selector value determines which of the inputs becomes the output. We can represent the logic function computed by a two-input multiplexor, shown in gate form on the right side of Figure B.3.2, as $C = (A \cdot \overline{S}) + (B \cdot S)$.

selector value

Also called control value. The control signal that is used to select one of the input values of a multiplexor as the output of the multiplexor.

FIGURE B.3.2 A two-input multiplexor on the left and its implementation with gates on the right.

The multiplexor has two data inputs (A and B), which are labeled 0 and 1, and one selector input (S), as well as an output C. Implementing multiplexors in Verilog requires a little more work, especially when they are wider than two inputs. We show how to do this beginning on page B-23.

Multiplexors can be created with an arbitrary number of data inputs. When there are only two inputs, the selector is a single signal that selects one of the inputs if it is true (1) and the other if it is false (0). If there are $n$ data inputs, there will need to be $\lceil \log_2 n \rceil$ selector inputs. In this case, the multiplexor basically consists of three parts:

1. A decoder that generates n signals, each indicating a different input value

2. An array of n AND gates, each combining one of the inputs with a signal from the decoder

3. A single large OR gate that incorporates the outputs of the AND gates

To associate the inputs with selector values, we often label the data inputs numerically (i.e., 0, 1, 2, 3, …, n − 1) and interpret the data selector inputs as a binary number. Sometimes, we make use of a multiplexor with undecoded selector signals.
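The three-part structure above (decoder, AND array, OR gate) can be sketched directly. The Python below is executable notation only; the names `mux2`, `selector_width`, and `mux` are illustrative, and the selector is passed as an integer standing for the binary value on the selector lines.

```python
def mux2(a, b, s):
    """Two-input multiplexor: output A when S is 0, B when S is 1."""
    return (a & (1 - s)) | (b & s)

def selector_width(n):
    """Selector lines needed for n data inputs: ceil(log2(n)) for n >= 1."""
    return (n - 1).bit_length()

def mux(data, select):
    """n-input multiplexor built from a decoder, an AND array, and one OR."""
    n = len(data)
    if not 0 <= select < n:
        raise ValueError("selector value out of range")
    # 1. Decoder: n signals, exactly one asserted.
    enables = [int(i == select) for i in range(n)]
    # 2. AND array: gate each data input with its decoder signal.
    gated = [d & en for d, en in zip(data, enables)]
    # 3. One wide OR gate over the AND outputs.
    out = 0
    for g in gated:
        out |= g
    return out

if __name__ == "__main__":
    print(selector_width(4), mux([0, 1, 1, 0], 2))
```

Because only one decoder signal is asserted, at most one AND gate can output 1, so the OR simply passes the selected input through.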

Multiplexors are easily represented combinationally in Verilog by using if expressions. For larger multiplexors, case statements are more convenient, but care must be taken to synthesize combinational logic.

Two-Level Logic and PLAs

As pointed out in the previous section, any logic function can be implemented with only AND, OR, and NOT functions. In fact, a much stronger result is true. Any logic function can be written in a canonical form, where every input is either a true or complemented variable and there are only two levels of gates—one being AND and the other OR—with a possible inversion on the final output. Such a representation is called a two-level representation, and there are two forms, called sum of products and product of sums. A sum-of-products representation is a logical sum (OR) of products (terms using the AND operator); a product of sums is just the opposite. In our earlier example, we had two equations for the output E:

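$E = ((A \cdot B) + (A \cdot C) + (B \cdot C)) \cdot \overline{(A \cdot B \cdot C)}$

and

$E = (A \cdot B \cdot \overline{C}) + (A \cdot C \cdot \overline{B}) + (B \cdot C \cdot \overline{A})$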

sum of products

A form of logical representation that employs a logical sum (OR) of products (terms joined using the AND operator).

This second equation is in a sum-of-products form: it has two levels of logic and the only inversions are on individual variables. The first equation has three levels of logic.

Elaboration

We can also write E as a product of sums:

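$E = \overline{(\overline{A} + \overline{B} + C) \cdot (\overline{A} + \overline{C} + B) \cdot (\overline{B} + \overline{C} + A)}$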

To derive this form, you need to use DeMorgan’s theorems, which are discussed in the exercises.

In this text, we use the sum-of-products form. It is easy to see that any logic function can be represented as a sum of products by constructing such a representation from the truth table for the function. Each truth table entry for which the function is true corresponds to a product term. The product term consists of a logical product of all the inputs or the complements of the inputs, depending on whether the entry in the truth table has a 0 or 1 corresponding to this variable. The logic function is the logical sum of the product terms where the function is true. This is more easily seen with an example.

Sum of Products

Example

Show the sum-of-products representation for the following truth table for D.

Answer

There are four product terms, since the function is true (1) for four different input combinations. These are:

Thus, we can write the function for D as the sum of these terms:

![]()

Note that only those truth table entries for which the function is true generate terms in the equation.
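The construction of a sum of products from a truth table is mechanical, as the following Python sketch suggests (executable notation only). The function name `sum_of_products` and the textual output format using ~, &, and | are assumptions of this sketch; it is applied to the output E from the earlier three-input example, which is true when exactly two inputs are true.

```python
from itertools import product

def sum_of_products(func, names):
    """Build a sum-of-products string for `func` over the named inputs.

    Each input combination for which func is true contributes one product
    term containing every input, in true form where the truth table entry
    has a 1 and in complemented form where it has a 0.
    """
    terms = []
    for bits in product((0, 1), repeat=len(names)):
        if func(*bits):
            literals = [name if bit else f"~{name}"
                        for name, bit in zip(names, bits)]
            terms.append("(" + " & ".join(literals) + ")")
    return " | ".join(terms)

def e_spec(a, b, c):
    """E from the earlier example: exactly two inputs are true."""
    return a + b + c == 2

if __name__ == "__main__":
    print("E =", sum_of_products(e_spec, "ABC"))
```

Entries for which the function is false contribute nothing, which is why the result for E has three terms rather than eight.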

We can use this relationship between a truth table and a two-level representation to generate a gate-level implementation of any set of logic functions. A set of logic functions corresponds to a truth table with multiple output columns, as we saw in the example on page B-5. Each output column represents a different logic function, which may be directly constructed from the truth table.

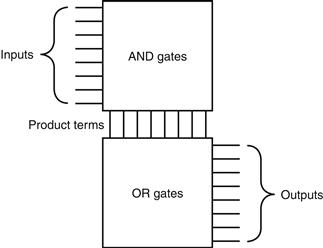

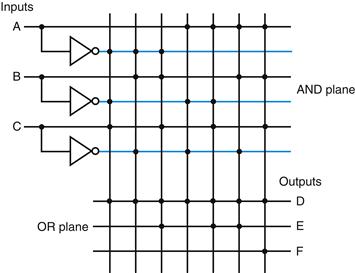

The sum-of-products representation corresponds to a common structured-logic implementation called a programmable logic array (PLA). A PLA has a set of inputs and corresponding input complements (which can be implemented with a set of inverters), and two stages of logic. The first stage is an array of AND gates that form a set of product terms (sometimes called minterms); each product term can consist of any of the inputs or their complements. The second stage is an array of OR gates, each of which forms a logical sum of any number of the product terms. Figure B.3.3 shows the basic form of a PLA.

programmable logic array (PLA)

A structured-logic element composed of a set of inputs and corresponding input complements and two stages of logic: the first generating product terms of the inputs and input complements, and the second generating sum terms of the product terms. Hence, PLAs implement logic functions as a sum of products.

minterms

Also called product terms. A set of logic inputs joined by conjunction (AND operations); the product terms form the first logic stage of the programmable logic array (PLA).

FIGURE B.3.3 The basic form of a PLA consists of an array of AND gates followed by an array of OR gates.

Each entry in the AND gate array is a product term consisting of any number of inputs or inverted inputs. Each entry in the OR gate array is a sum term consisting of any number of these product terms.

A PLA can directly implement the truth table of a set of logic functions with multiple inputs and outputs. Since each entry where the output is true requires a product term, there will be a corresponding row in the PLA. Each output corresponds to a potential row of OR gates in the second stage. The number of OR gates corresponds to the number of truth table entries for which the output is true. The total size of a PLA, such as that shown in Figure B.3.3, is equal to the sum of the size of the AND gate array (called the AND plane) and the size of the OR gate array (called the OR plane). Looking at Figure B.3.3, we can see that the size of the AND gate array is equal to the number of inputs times the number of different product terms, and the size of the OR gate array is the number of outputs times the number of product terms.

A PLA has two characteristics that help make it an efficient way to implement a set of logic functions. First, only the truth table entries that produce a true value for at least one output have any logic gates associated with them. Second, each different product term will have only one entry in the PLA, even if the product term is used in multiple outputs. Let’s look at an example.

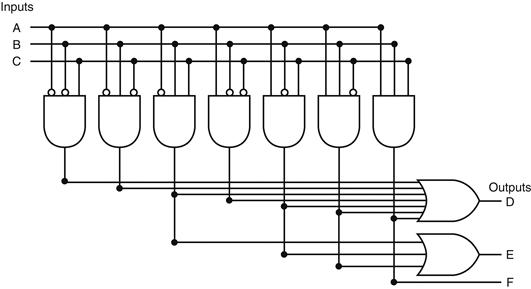

PLAs

Example

Consider the set of logic functions defined in the example on page B-5. Show a PLA implementation of this example for D, E, and F.

Answer

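Here is the truth table we constructed earlier:

| A | B | C | D | E | F |
|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 1 | 0 | 0 |
| 0 | 1 | 0 | 1 | 0 | 0 |
| 0 | 1 | 1 | 1 | 1 | 0 |
| 1 | 0 | 0 | 1 | 0 | 0 |
| 1 | 0 | 1 | 1 | 1 | 0 |
| 1 | 1 | 0 | 1 | 1 | 0 |
| 1 | 1 | 1 | 1 | 0 | 1 |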

Since there are seven unique product terms with at least one true value in the output section, there will be seven columns in the AND plane. The number of rows in the AND plane is three (since there are three inputs), and there are also three rows in the OR plane (since there are three outputs). Figure B.3.4 shows the resulting PLA, with the product terms corresponding to the truth table entries from top to bottom.
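The counts in this answer follow from the sizing rule given earlier: the AND plane is the number of inputs times the number of product terms, and the OR plane is the number of outputs times the number of product terms. A minimal Python sketch (executable notation only; `pla_shape` and `example_outputs` are illustrative names) computes them for an unminimized PLA with one product term per truth table entry that has at least one true output.

```python
from itertools import product

def example_outputs(a, b, c):
    """(D, E, F) for the three-input example function."""
    ones = a + b + c
    return (int(ones >= 1), int(ones == 2), int(ones == 3))

def pla_shape(func, n_inputs, n_outputs):
    """Return (product terms, AND-plane size, OR-plane size), unminimized."""
    product_terms = [bits for bits in product((0, 1), repeat=n_inputs)
                     if any(func(*bits))]
    n_terms = len(product_terms)
    and_plane = n_inputs * n_terms   # inputs x product terms
    or_plane = n_outputs * n_terms   # outputs x product terms
    return n_terms, and_plane, or_plane

if __name__ == "__main__":
    print(pla_shape(example_outputs, 3, 3))
```

Only the all-zero input produces no true output, so seven of the eight truth table entries become product terms.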

FIGURE B.3.4 The PLA for implementing the logic function described in the example.

Rather than drawing all the gates, as we do in Figure B.3.4, designers often show just the position of AND gates and OR gates. Dots are used on the intersection of a product term signal line and an input line or an output line when a corresponding AND gate or OR gate is required. Figure B.3.5 shows how the PLA of Figure B.3.4 would look when drawn in this way. The contents of a PLA are fixed when the PLA is created, although there are also forms of PLA-like structures, called PALs, that can be programmed electronically when a designer is ready to use them.

FIGURE B.3.5 A PLA drawn using dots to indicate the components of the product terms and sum terms in the array.

Rather than use inverters on the gates, usually all the inputs are run the width of the AND plane in both true and complement forms. A dot in the AND plane indicates that the input, or its inverse, occurs in the product term. A dot in the OR plane indicates that the corresponding product term appears in the corresponding output.

ROMs

Another form of structured logic that can be used to implement a set of logic functions is a read-only memory (ROM). A ROM is called a memory because it has a set of locations that can be read; however, the contents of these locations are fixed, usually at the time the ROM is manufactured. There are also programmable ROMs (PROMs) that can be programmed electronically, when a designer knows their contents. There are also erasable PROMs; these devices require a slow erasure process using ultraviolet light, and thus are used as read-only memories, except during the design and debugging process.

read-only memory (ROM)

A memory whose contents are designated at creation time, after which the contents can only be read. ROM is used as structured logic to implement a set of logic functions by using the terms in the logic functions as address inputs and the outputs as bits in each word of the memory.

programmable ROM (PROM)

A form of read-only memory that can be programmed when a designer knows its contents.

A ROM has a set of input address lines and a set of outputs. The number of addressable entries in the ROM determines the number of address lines: if the ROM contains $2^m$ addressable entries, called the height, then there are $m$ input lines. The number of bits in each addressable entry is equal to the number of output bits and is sometimes called the width of the ROM. The total number of bits in the ROM is equal to the height times the width. The height and width are sometimes collectively referred to as the shape of the ROM.

A ROM can encode a collection of logic functions directly from the truth table. For example, if there are $n$ functions with $m$ inputs, we need a ROM with $m$ address lines (and $2^m$ entries), with each entry being $n$ bits wide. The entries in the input portion of the truth table represent the addresses of the entries in the ROM, while the contents of the output portion of the truth table constitute the contents of the ROM. If the truth table is organized so that the sequence of entries in the input portion constitutes a sequence of binary numbers (as have all the truth tables we have shown so far), then the output portion gives the ROM contents in order as well. In the example starting on page B-13, there were three inputs and three outputs. This leads to a ROM with $2^3 = 8$ entries, each 3 bits wide. The contents of those entries in increasing order by address are directly given by the output portion of the truth table that appears on page B-14.
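The mapping from truth table to ROM can be sketched as a lookup table indexed by the input bits. The Python below is executable notation only; `build_rom`, `read_rom`, and `example_outputs` are illustrative names, and the ROM is modeled as a list of output tuples rather than as hardware.

```python
from itertools import product

def example_outputs(a, b, c):
    """(D, E, F) for the three-input example function."""
    ones = a + b + c
    return (int(ones >= 1), int(ones == 2), int(ones == 3))

def build_rom(func, m):
    """Fill a ROM with 2**m entries, in increasing order by address."""
    return [func(*bits) for bits in product((0, 1), repeat=m)]

def read_rom(rom, address_bits):
    """Read the entry whose address is given by the input bits, MSB first."""
    address = 0
    for bit in address_bits:
        address = (address << 1) | bit
    return rom[address]

ROM = build_rom(example_outputs, 3)
HEIGHT = len(ROM)       # number of addressable entries
WIDTH = len(ROM[0])     # bits per entry

if __name__ == "__main__":
    print(HEIGHT, WIDTH, HEIGHT * WIDTH)
    print(read_rom(ROM, (0, 1, 1)))
```

Changing the logic function means only regenerating the contents; the shape of the ROM stays the same as long as the number of inputs and outputs does.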

ROMs and PLAs are closely related. A ROM is fully decoded: it contains a full output word for every possible input combination. A PLA is only partially decoded. This means that a ROM will always contain more entries. For the earlier truth table on page B-14, the ROM contains entries for all eight possible inputs, whereas the PLA contains only the seven active product terms. As the number of inputs grows, the number of entries in the ROM grows exponentially. In contrast, for most real logic functions, the number of product terms grows much more slowly (see the examples in Appendix D). This difference makes PLAs generally more efficient for implementing combinational logic functions. ROMs have the advantage of being able to implement any logic function with the matching number of inputs and outputs. This advantage makes it easier to change the ROM contents if the logic function changes, since the size of the ROM need not change.

In addition to ROMs and PLAs, modern logic synthesis systems will also translate small blocks of combinational logic into a collection of gates that can be placed and wired automatically. Although some small collections of gates are usually not area efficient, for small logic functions they have less overhead than the rigid structure of a ROM and PLA and so are preferred.

For designing logic outside of a custom or semicustom integrated circuit, a common choice is a field programmable device; we describe these devices in Section B.12.

Don’t Cares

Often in implementing some combinational logic, there are situations where we do not care what the value of some output is, either because another output is true or because a subset of the input combinations determines the values of the outputs. Such situations are referred to as don’t cares. Don’t cares are important because they make it easier to optimize the implementation of a logic function.

There are two types of don’t cares: output don’t cares and input don’t cares, both of which can be represented in a truth table. Output don’t cares arise when we don’t care about the value of an output for some input combination. They appear as Xs in the output portion of a truth table. When an output is a don’t care for some input combination, the designer or logic optimization program is free to make the output true or false for that input combination. Input don’t cares arise when an output depends on only some of the inputs, and they are also shown as Xs, though in the input portion of the truth table.

Don’t Cares

Example

Consider a logic function with inputs A, B, and C defined as follows:

■ If A or C is true, then output D is true, whatever the value of B.

■ If A or B is true, then output E is true, whatever the value of C.

■ Output F is true if exactly one of the inputs is true, although we don’t care about the value of F whenever D and E are both true.

Show the full truth table for this function and the truth table using don’t cares. How many product terms are required in a PLA for each of these?

Answer

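Here’s the full truth table, without don’t cares:

| A | B | C | D | E | F |
|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 1 | 0 | 1 |
| 0 | 1 | 0 | 0 | 1 | 1 |
| 0 | 1 | 1 | 1 | 1 | 0 |
| 1 | 0 | 0 | 1 | 1 | 1 |
| 1 | 0 | 1 | 1 | 1 | 0 |
| 1 | 1 | 0 | 1 | 1 | 0 |
| 1 | 1 | 1 | 1 | 1 | 0 |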

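This requires seven product terms without optimization. The truth table written with output don’t cares looks like this:

| A | B | C | D | E | F |
|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 1 | 0 | 1 |
| 0 | 1 | 0 | 0 | 1 | 1 |
| 0 | 1 | 1 | 1 | 1 | X |
| 1 | 0 | 0 | 1 | 1 | X |
| 1 | 0 | 1 | 1 | 1 | X |
| 1 | 1 | 0 | 1 | 1 | X |
| 1 | 1 | 1 | 1 | 1 | X |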

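If we also use the input don’t cares, this truth table can be further simplified to yield the following:

| A | B | C | D | E | F |
|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 |
| 0 | 0 | 1 | 1 | 0 | 1 |
| 0 | 1 | 0 | 0 | 1 | 1 |
| X | 1 | 1 | 1 | 1 | X |
| 1 | X | X | 1 | 1 | X |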

This simplified truth table requires a PLA with four minterms, or it can be implemented in discrete gates with one two-input AND gate and three OR gates (two with three inputs and one with two inputs). This compares to the original truth table that had seven minterms and would have required four AND gates.
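A simplified table with don't cares is only valid if it agrees with the full specification wherever an output is not X. The Python sketch below (executable notation only) checks one plausible five-row simplification; the table `SIMPLIFIED` is an assumption of this sketch, chosen to be consistent with the four minterms stated above, and the helper names are illustrative.

```python
from itertools import product

def full_spec(a, b, c):
    """(D, E, F) from the example's definition, with no don't cares."""
    d = a | c                   # D: A or C is true
    e = a | b                   # E: A or B is true
    f = int(a + b + c == 1)     # F: exactly one input is true
    return d, e, f

# Assumed simplified table: (input pattern ABC, output pattern DEF).
# "X" is an input don't care on the left, an output don't care on the right.
SIMPLIFIED = [
    ("000", "000"),
    ("001", "101"),
    ("010", "011"),
    ("X11", "11X"),
    ("1XX", "11X"),
]

def matches(pattern, bits):
    """True if the 0/1 input bits fit a pattern that may contain X."""
    return all(p == "X" or int(p) == b for p, b in zip(pattern, bits))

def consistent(table, spec, n_inputs=3):
    """Every input hits some row, and every non-X output equals the spec."""
    for bits in product((0, 1), repeat=n_inputs):
        rows = [outs for pat, outs in table if matches(pat, bits)]
        if not rows:
            return False
        for outs in rows:
            for got, want in zip(outs, spec(*bits)):
                if got != "X" and int(got) != want:
                    return False
    return True

def product_term_count(table):
    """Rows that drive at least one output to 1 need a product term."""
    return sum(1 for _, outs in table if "1" in outs)

if __name__ == "__main__":
    print(consistent(SIMPLIFIED, full_spec), product_term_count(SIMPLIFIED))
```

The all-zero row needs no product term, which is why five table rows correspond to four minterms.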

Logic minimization is critical to achieving efficient implementations. One tool useful for hand minimization of random logic is Karnaugh maps. Karnaugh maps represent the truth table graphically, so that product terms that may be combined are easily seen. Nevertheless, hand optimization of significant logic functions using Karnaugh maps is impractical, both because of the size of the maps and their complexity. Fortunately, the process of logic minimization is highly mechanical and can be performed by design tools. In the process of minimization, the tools take advantage of the don’t cares, so specifying them is important. The textbook references at the end of this appendix provide further discussion on logic minimization, Karnaugh maps, and the theory behind such minimization algorithms.

Arrays of Logic Elements

Many of the combinational operations to be performed on data have to be done to an entire word (32 bits) of data. Thus we often want to build an array of logic elements, which we can represent simply by showing that a given operation will happen to an entire collection of inputs. For example, we saw on page B-9 what a 1-bit multiplexor looked like, but inside a machine, much of the time we want to select between a pair of buses. A bus is a collection of data lines that is treated together as a single logical signal. (The term bus is also used to indicate a shared collection of lines with multiple sources and uses, especially in Chapter 6, where I/O buses were discussed.)

bus

In logic design, a collection of data lines that is treated together as a single logical signal; also, a shared collection of lines with multiple sources and uses.

For example, in the MIPS instruction set, the result of an instruction that is written into a register can come from one of two sources. A multiplexor is used to choose which of the two buses (each 32 bits wide) will be written into the Result register. The 1-bit multiplexor, which we showed earlier, will need to be replicated 32 times.

We indicate that a signal is a bus rather than a single 1-bit line by showing it with a thicker line in a figure. Most buses are 32 bits wide; those that are not are explicitly labeled with their width. When we show a logic unit whose inputs and outputs are buses, this means that the unit must be replicated a sufficient number of times to accommodate the width of the input. Figure B.3.6 shows how we draw a multiplexor that selects between a pair of 32-bit buses and how this expands in terms of 1-bit-wide multiplexors. Sometimes we need to construct an array of logic elements where the inputs for some elements in the array are outputs from earlier elements. For example, this is how a multibit-wide ALU is constructed. In such cases, we must explicitly show how to create wider arrays, since the individual elements of the array are no longer independent, as they are in the case of a 32-bit-wide multiplexor.
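The replication described above can be sketched in Verilog, the language introduced in the next section. This is a minimal illustration, not a listing from the text: the module names `mux2_1bit` and `mux2_32bit` and the generate-loop label are assumptions chosen for the example.

```verilog
// 1-bit 2-to-1 multiplexor: C = A when S is 0, C = B when S is 1.
// (Module and port names are hypothetical, chosen for this sketch.)
module mux2_1bit (input A, input B, input S, output C);
  assign C = (A & ~S) | (B & S);
endmodule

// 32-bit multiplexor built by replicating the 1-bit element 32 times.
// The single Select line fans out to every 1-bit multiplexor.
module mux2_32bit (input [31:0] A, input [31:0] B, input Select,
                   output [31:0] C);
  genvar i;
  generate
    for (i = 0; i < 32; i = i + 1) begin : bit_slice
      mux2_1bit m (.A(A[i]), .B(B[i]), .S(Select), .C(C[i]));
    end
  endgenerate
endmodule
```

Because each bit slice depends only on its own pair of inputs and the shared select line, the 32 elements are independent of one another, which is what makes this kind of array easy to draw as a single wide unit.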

Check Yourself

Parity is a function in which the output depends on the number of 1s in the input. For an even parity function, the output is 1 if the input has an even number of ones. Suppose a ROM is used to implement an even parity function with a 4-bit input. Which of A, B, C, or D represents the contents of the ROM?

FIGURE B.3.6 A multiplexor is arrayed 32 times to perform a selection between two 32-bit inputs.

Note that there is still only one data selection signal used for all 32 1-bit multiplexors.

B.4 Using a Hardware Description Language

Today most digital design of processors and related hardware systems is done using a hardware description language. Such a language serves two purposes. First, it provides an abstract description of the hardware to simulate and debug the design. Second, with the use of logic synthesis and hardware compilation tools, this description can be compiled into the hardware implementation.

hardware description language

A programming language for describing hardware, used for generating simulations of a hardware design and also as input to synthesis tools that can generate actual hardware.

In this section, we introduce the hardware description language Verilog and show how it can be used for combinational design. In the rest of the appendix, we expand the use of Verilog to include design of sequential logic. In the optional sections of Chapter 4 that appear on the CD, we use Verilog to describe processor implementations. In the optional section from Chapter 5 that appears on the CD, we use system Verilog to describe cache controller implementations. System Verilog adds structures and some other useful features to Verilog.

Verilog is one of the two primary hardware description languages; the other is VHDL. Verilog is somewhat more heavily used in industry and is based on C, as opposed to VHDL, which is based on Ada. The reader generally familiar with C will find the basics of Verilog, which we use in this appendix, easy to follow. Readers already familiar with VHDL should find the concepts simple, provided they have been exposed to the syntax of C.

Verilog

One of the two most common hardware description languages.

VHDL

One of the two most common hardware description languages.

Verilog can specify both a behavioral and a structural definition of a digital system. A behavioral specification describes how a digital system functionally operates. A structural specification describes the detailed organization of a digital system, usually using a hierarchical description. A structural specification can be used to describe a hardware system in terms of a hierarchy of basic elements such as gates and switches. Thus, we could use Verilog to describe the exact contents of the truth tables and datapath of the last section.

behavioral specification

Describes how a digital system operates functionally.

structural specification

Describes how a digital system is organized in terms of a hierarchical connection of elements.

With the arrival of hardware synthesis tools, most designers now use Verilog or VHDL to structurally describe only the datapath, relying on logic synthesis to generate the control from a behavioral description. In addition, most CAD systems provide extensive libraries of standardized parts, such as ALUs, multiplexors, register files, memories, and programmable logic blocks, as well as basic gates.

hardware synthesis tools

Computer-aided design software that can generate a gate-level design based on behavioral descriptions of a digital system.

Obtaining an acceptable result using libraries and logic synthesis requires that the specification be written with an eye toward the eventual synthesis and the desired outcome. For our simple designs, this primarily means making clear what we expect to be implemented in combinational logic and what we expect to require sequential logic. In most of the examples we use in this section and the remainder of this appendix, we have written the Verilog with the eventual synthesis in mind.

Datatypes and Operators in Verilog

There are two primary datatypes in Verilog:

1. A wire specifies a combinational signal.

2. A reg (register) holds a value, which can vary with time. A reg need not necessarily correspond to an actual register in an implementation, although it often will.

wire

In Verilog, specifies a combinational signal.

reg

In Verilog, a register.

A register or wire, named X, that is 32 bits wide is declared as an array: reg [31:0] X or wire [31:0] X, which also sets the index of 0 to designate the least significant bit of the register. Because we often want to access a subfield of a register or wire, we can refer to a contiguous set of bits of a register or wire with the notation [starting bit: ending bit], where both indices must be constant values.

An array of registers is used for a structure like a register file or memory. Thus, the declaration

reg [31:0] registerfile[0:31]

specifies a variable registerfile that is equivalent to a MIPS registerfile, where register 0 is the first. When accessing an array, we can refer to a single element, as in C, using the notation registerfile[regnum].

The possible values for a register or wire in Verilog are

■ 0 or 1, representing logical false or true

■ X, representing unknown, the initial value given to all registers and to any wire not connected to something

■ Z, representing the high-impedance state for tristate gates, which we will not discuss in this appendix

Constant values can be specified as decimal numbers as well as binary, octal, or hexadecimal. We often want to say exactly how large a constant field is in bits. This is done by prefixing the value with a decimal number specifying its size in bits. For example:

■ 4'b0100 specifies a 4-bit binary constant with the value 4, as does 4'd4.

■ -8'h4 specifies an 8-bit constant with the value −4 (in two’s complement representation).

Values can also be concatenated by placing them within { } separated by commas. The notation {x {bit field}} replicates bit field x times. For example:

■ {16{2'b01}} creates a 32-bit value with the pattern 0101 … 01.

■ {A[31:16],B[15:0]} creates a value whose upper 16 bits come from A and whose lower 16 bits come from B.

Verilog provides the full set of unary and binary operators from C, including the arithmetic operators (+, -, *, /), the logical operators (&, |, ~), the comparison operators (==, !=, >, <, <=, >=), the shift operators (<<, >>), and C’s conditional operator (?, which is used in the form condition ? expr1 : expr2 and returns expr1 if the condition is true and expr2 if it is false). Verilog adds a set of unary logic reduction operators (&, |, ^) that yield a single bit by applying the logical operator to all the bits of an operand. For example, &A returns the value obtained by ANDing all the bits of A together, and ^A returns the reduction obtained by using exclusive OR on all the bits of A.
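The declarations, constants, and operators described in this subsection can be tried out in a small simulation-only module. The sketch below is illustrative only; the module name `datatypes_demo`, the signal names, and the test values are assumptions and do not come from the text.

```verilog
// Simulation-only sketch of the datatypes and operators described above.
module datatypes_demo;
  reg  [31:0] X;                      // 32-bit register; bit 0 is least significant
  reg  [31:0] registerfile [0:31];    // array of 32 registers, each 32 bits wide
  reg  [31:0] A, B;
  wire [31:0] pattern = {16{2'b01}};  // replication: 32'h5555_5555
  wire [31:0] mixed;

  assign mixed = {A[31:16], B[15:0]}; // upper half of A, lower half of B

  initial begin
    A = 32'hAAAA_0000;
    B = 32'h0000_BBBB;
    X = 32'hFFFF_FFFF;
    registerfile[5] = 32'd7;          // single element access, as in C
    #1;                               // let the continuous assignments settle
    $display("4'b0100 == 4'd4 : %b", 4'b0100 == 4'd4);
    $display("pattern         : %h", pattern);
    $display("mixed           : %h", mixed);
    $display("&X              : %b", &X);   // AND of all bits of X
    $display("^X              : %b", ^X);   // XOR of all bits of X
    $display("larger of A, B  : %h", (A > B) ? A : B);
    $display("registerfile[5] : %0d", registerfile[5]);
    $finish;
  end
endmodule
```

Running this in a Verilog simulator prints each value once. Changing X so that one bit is 0 is a quick way to see the reduction operators respond.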

Check Yourself

Which of the following define exactly the same value?

1. 8'b11110000

2. 8'hF0

3. 8'd240

4. {{4{1'b1}},{4{1'b0}}}

5. {4'b1,4'b0}

Structure of a Verilog Program

A Verilog program is structured as a set of modules, which may represent anything from a collection of logic gates to a complete system. Modules are similar to classes in C++, although not nearly as powerful. A module specifies its input and output ports, which describe the incoming and outgoing connections of a module. A module may also declare additional variables. The body of a module consists of:

■ initial constructs, which can initialize reg variables

■ Continuous assignments, which define only combinational logic

■ always constructs, which can define either sequential or combinational logic

■ Instances of other modules, which are used to implement the module being defined

Representing Complex Combinational Logic in Verilog

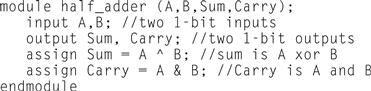

A continuous assignment, which is indicated with the keyword assign, acts like a combinational logic function: the output is continuously assigned the value, and a change in the input values is reflected immediately in the output value. Wires may only be assigned values with continuous assignments. Using continuous assignments, we can define a module that implements a half-adder, as Figure B.4.1 shows.

FIGURE B.4.1 A Verilog module that defines a half-adder using continuous assignments.
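Since the figure is not reproduced here, the following is a minimal sketch of what a half-adder written with continuous assignments could look like. It is an illustration under stated assumptions (the module and port names are chosen for this example), not necessarily the exact listing of the figure.

```verilog
// Half-adder using only continuous assignments (names are hypothetical).
module half_adder (A, B, Sum, Carry);
  input  A, B;          // two 1-bit inputs
  output Sum, Carry;    // outputs default to wires
  assign Sum   = A ^ B; // Sum is 1 when exactly one input is 1
  assign Carry = A & B; // Carry is 1 only when both inputs are 1
endmodule
```

Both outputs are wires driven by assign statements, so any change on A or B is reflected in Sum and Carry without any stored state.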

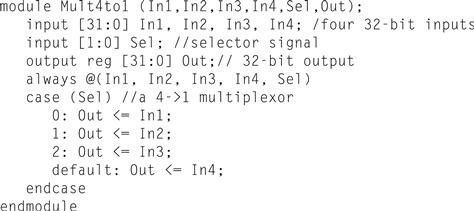

Assign statements are one sure way to write Verilog that generates combinational logic. For more complex structures, however, assign statements may be awkward or tedious to use. It is also possible to use the always block of a module to describe a combinational logic element, although care must be taken. Using an always block allows the inclusion of Verilog control constructs, such as if-then-else, case statements, for statements, and repeat statements. These statements are similar to those in C with small changes.

An always block specifies an optional list of signals on which the block is sensitive (in a list starting with @). The always block is reevaluated if any of the listed signals changes value; if the list is omitted, the always block is constantly reevaluated. When an always block is specifying combinational logic, the sensitivity list should include all the input signals. If there are multiple Verilog statements to be executed in an always block, they are surrounded by the keywords begin and end, which take the place of the { and } in C. An always block thus looks like this:

    always @(list of signals that cause reevaluation) begin
        Verilog statements including assignments and other control statements
    end

sensitivity list

The list of signals that specifies when an always block should be reevaluated.

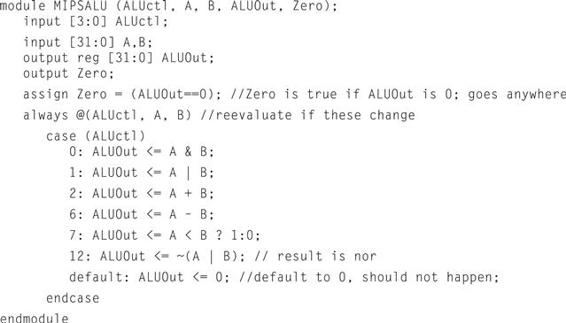

Reg variables may only be assigned inside an always block, using a procedural assignment statement (as distinguished from continuous assignment we saw earlier). There are, however, two different types of procedural assignments. The assignment operator = executes as it does in C; the right-hand side is evaluated, and the left-hand side is assigned the value. Furthermore, it executes like the normal C assignment statement: that is, it is completed before the next statement is executed. Hence, the assignment operator = has the name blocking assignment. This blocking can be useful in the generation of sequential logic, and we will return to it shortly. The other form of assignment (nonblocking) is indicated by <=. In nonblocking assignment, all right-hand sides of the assignments in an always group are evaluated and the assignments are done simultaneously. As a first example of combinational logic implemented using an always block, Figure B.4.2 shows the implementation of a 4-to-1 multiplexor, which uses a case construct to make it easy to write. The case construct looks like a C switch statement. Figure B.4.3 shows a definition of a MIPS ALU, which also uses a case statement.

blocking assignment

In Verilog, an assignment that completes before the execution of the next statement.

FIGURE B.4.2 A Verilog definition of a 4-to-1 multiplexor with 32-bit inputs, using a case statement.

The case statement acts like a C switch statement, except that in Verilog only the code associated with the selected case is executed (as if each case state had a break at the end) and there is no fallthrough to the next statement.
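As the figure is not reproduced here, the sketch below shows one way such a multiplexor might be written. The module and port names are assumptions. The use of blocking assignment for combinational logic is one common convention and may differ from the figure's listing.

```verilog
// 4-to-1 multiplexor with 32-bit inputs, described with a case statement.
// (Module and port names are hypothetical.)
module mux4to1 (In1, In2, In3, In4, Sel, Out);
  input  [31:0] In1, In2, In3, In4; // four 32-bit data inputs
  input  [1:0]  Sel;                // 2-bit selector
  output reg [31:0] Out;            // reg because it is assigned in an always block

  // All inputs appear in the sensitivity list, and every case assigns Out,
  // so the block describes purely combinational logic.
  always @(In1, In2, In3, In4, Sel)
    case (Sel)
      2'b00:   Out = In1;
      2'b01:   Out = In2;
      2'b10:   Out = In3;
      default: Out = In4;
    endcase
endmodule
```

Using default for the last input also covers selector values containing X or Z during simulation, so Out is assigned on every evaluation.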

FIGURE B.4.3 A Verilog behavioral definition of a MIPS ALU.

This could be synthesized using a module library containing basic arithmetic and logical operations.

Since only reg variables may be assigned inside always blocks, when we want to describe combinational logic using an always block, care must be taken to ensure that the reg does not synthesize into a register. A variety of pitfalls are described in the elaboration below.

nonblocking assignment

An assignment that continues after evaluating the right-hand side, assigning the left-hand side the value only after all right-hand sides are evaluated.
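The difference between the two forms of procedural assignment is easiest to see when two variables are assigned from each other. The following simulation-only sketch uses an initial block for brevity. The module name and values are assumptions made for the example.

```verilog
// Contrast of blocking (=) and nonblocking (<=) procedural assignment.
module assignment_demo;
  reg [7:0] a, b, c, d;

  initial begin
    // Blocking: each statement completes before the next one starts,
    // so the second statement sees the value just written to a.
    a = 8'd1; b = 8'd2;
    a = b;        // a becomes 2
    b = a;        // b reads the new a, so b stays 2
    $display("blocking:    a=%0d b=%0d", a, b);

    // Nonblocking: both right-hand sides are evaluated first and the
    // updates are applied together, so the two values are exchanged.
    c = 8'd1; d = 8'd2;
    c <= d;
    d <= c;
    #1;           // advance time so the nonblocking updates take effect
    $display("nonblocking: c=%0d d=%0d", c, d);
    $finish;
  end
endmodule
```

With blocking assignment both variables end up holding 2, while with nonblocking assignment the values are swapped (c is 2 and d is 1).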

Elaboration

Continuous assignment statements always yield combinational logic, but other Verilog structures, even when in always blocks, can yield unexpected results during logic synthesis. The most common problem is creating sequential logic by implying the existence of a latch or register, which results in an implementation that is both slower and more costly than perhaps intended. To ensure that the logic that you intend to be combinational is synthesized that way, make sure you do the following:

1. Place all combinational logic in a continuous assignment or an always block.

2. Make sure that all the signals used as inputs appear in the sensitivity list of an always block.

3. Ensure that every path through an always block assigns a value to the exact same set of bits.

The last of these is the easiest to overlook; read through the example in Figure B.5.15 to convince yourself that this property is adhered to.
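The third rule can be illustrated with a pair of small modules. This is a sketch with hypothetical module and signal names. Whether a particular tool reports an inferred latch for the first module depends on the tool, but the missing assignment is the classic cause.

```verilog
// Incomplete: when enable is 0, y is not assigned, so y must hold its old
// value, and synthesis tools will typically infer a latch.
module implied_latch (input enable, input d, output reg y);
  always @(enable, d)
    if (enable)
      y = d;
endmodule

// Complete: every path through the block assigns y, so the logic is
// purely combinational (here, simply y = enable AND d).
module no_latch (input enable, input d, output reg y);
  always @(enable, d)
    if (enable)
      y = d;
    else
      y = 1'b0;
endmodule
```

The same reasoning applies to case statements: a default branch that assigns every output is a simple way to guarantee that no path leaves a reg unassigned.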

Check Yourself

Assuming all values are initially zero, what are the values of A and B after executing this Verilog code inside an always block?

C=1;

A <= C;

B = C;

B.5 Constructing a Basic Arithmetic Logic Unit

The arithmetic logic unit (ALU) is the brawn of the computer, the device that performs the arithmetic operations like addition and subtraction or logical operations like AND and OR. This section constructs an ALU from four hardware building blocks (AND and OR gates, inverters, and multiplexors) and illustrates how combinational logic works. In the next section, we will see how addition can be sped up through more clever designs.

ALU n. [Arthritic Logic Unit or (rare) Arithmetic Logic Unit]

A random-number generator supplied as standard with all computer systems.

Stan Kelly-Bootle, The Devil’s DP Dictionary, 1981

Because the MIPS word is 32 bits wide, we need a 32-bit-wide ALU. Let’s assume that we will connect 32 1-bit ALUs to create the desired ALU. We’ll therefore start by constructing a 1-bit ALU.

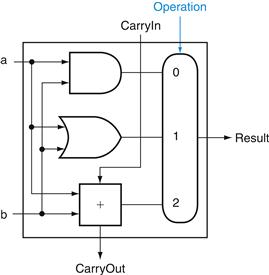

A 1-Bit ALU

The logical operations are easiest, because they map directly onto the hardware components in Figure B.2.1.

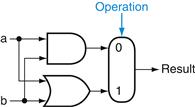

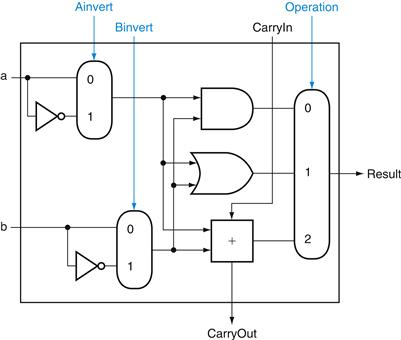

The 1-bit logical unit for AND and OR looks like Figure B.5.1. The multiplexor on the right then selects a AND b or a OR b, depending on whether the value of Operation is 0 or 1. The line that controls the multiplexor is shown in color to distinguish it from the lines containing data. Notice that we have renamed the control and output lines of the multiplexor to give them names that reflect the function of the ALU.

FIGURE B.5.1 The 1-bit logical unit for AND and OR.

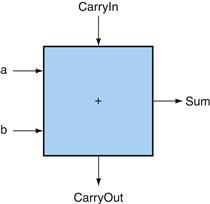

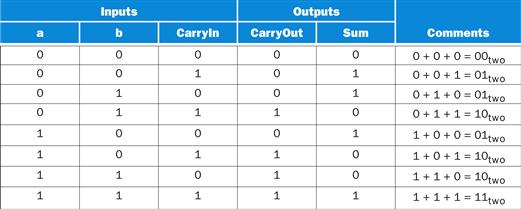

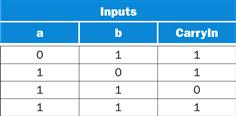

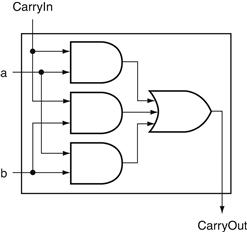

The next function to include is addition. An adder must have two inputs for the operands and a single-bit output for the sum. There must be a second output to pass on the carry, called CarryOut. Since the CarryOut from the neighbor adder must be included as an input, we need a third input. This input is called CarryIn. Figure B.5.2 shows the inputs and the outputs of a 1-bit adder. Since we know what addition is supposed to do, we can specify the outputs of this “black box” based on its inputs, as Figure B.5.3 demonstrates.

FIGURE B.5.2 A 1-bit adder.

This adder is called a full adder; it is also called a (3,2) adder because it has 3 inputs and 2 outputs. An adder with only the a and b inputs is called a (2,2) adder or half-adder.

FIGURE B.5.3 Input and output specification for a 1-bit adder.

We can express the output functions CarryOut and Sum as logical equations, and these equations can in turn be implemented with logic gates. Let’s do CarryOut. Figure B.5.4 shows the values of the inputs when CarryOut is a 1.

FIGURE B.5.4 Values of the inputs when CarryOut is a 1.

We can turn this truth table into a logical equation:

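$$\text{CarryOut} = (b \cdot \text{CarryIn}) + (a \cdot \text{CarryIn}) + (a \cdot b) + (a \cdot b \cdot \text{CarryIn})$$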

If a · b · CarryIn is true, then all of the other three terms must also be true, so we can leave out this last term corresponding to the fourth line of the table. We can thus simplify the equation to

$$\text{CarryOut} = (b \cdot \text{CarryIn}) + (a \cdot \text{CarryIn}) + (a \cdot b)$$

Figure B.5.5 shows that the hardware within the adder black box for CarryOut consists of three AND gates and one OR gate. The three AND gates correspond exactly to the three parenthesized terms of the formula above for CarryOut, and the OR gate sums the three terms.

FIGURE B.5.5 Adder hardware for the CarryOut signal.

The rest of the adder hardware is the logic for the Sum output given in the equation on this page.

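The Sum bit is set when exactly one input is 1 or when all three inputs are 1. The Sum results in a complex Boolean equation (recall that $\bar{a}$ means NOT a):

$$\text{Sum} = (a \cdot \bar{b} \cdot \overline{\text{CarryIn}}) + (\bar{a} \cdot b \cdot \overline{\text{CarryIn}}) + (\bar{a} \cdot \bar{b} \cdot \text{CarryIn}) + (a \cdot b \cdot \text{CarryIn})$$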

The drawing of the logic for the Sum bit in the adder black box is left as an exercise for the reader.
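The two output functions can also be written directly as continuous assignments. The sketch below is an illustration, not a listing from the text: CarryOut uses the three two-input product terms, and Sum follows the rule that it is 1 when exactly one input is 1 or when all three are 1. The module names and the small self-checking testbench are assumptions added for the example.

```verilog
// 1-bit full adder written from the CarryOut and Sum logic equations.
module full_adder (input a, input b, input CarryIn,
                   output Sum, output CarryOut);
  // CarryOut: the three two-input product terms ORed together
  assign CarryOut = (b & CarryIn) | (a & CarryIn) | (a & b);
  // Sum: exactly one input is 1, or all three inputs are 1
  assign Sum = ( a & ~b & ~CarryIn) |
               (~a &  b & ~CarryIn) |
               (~a & ~b &  CarryIn) |
               ( a &  b &  CarryIn);
endmodule

// Exhaustive check of all 8 input combinations against ordinary addition.
module full_adder_tb;
  reg a, b, cin;
  wire s, cout;
  integer i, errors;
  full_adder dut (.a(a), .b(b), .CarryIn(cin), .Sum(s), .CarryOut(cout));
  initial begin
    errors = 0;
    for (i = 0; i < 8; i = i + 1) begin
      {a, b, cin} = i[2:0];
      #1;
      if ({cout, s} !== a + b + cin) errors = errors + 1;
    end
    $display("mismatches: %0d", errors);
    $finish;
  end
endmodule
```

The testbench treats {CarryOut, Sum} as a 2-bit number, which should equal the arithmetic sum of the three input bits for every row of the truth table.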

Figure B.5.6 shows a 1-bit ALU derived by combining the adder with the earlier components. Sometimes designers also want the ALU to perform a few more simple operations, such as generating 0. The easiest way to add an operation is to expand the multiplexor controlled by the Operation line and, for this example, to connect 0 directly to the new input of that expanded multiplexor.

FIGURE B.5.6 A 1-bit ALU that performs AND, OR, and addition (see Figure B.5.5).

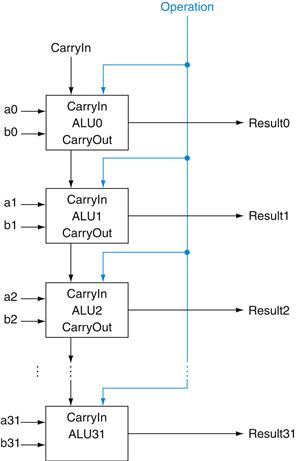

A 32-Bit ALU

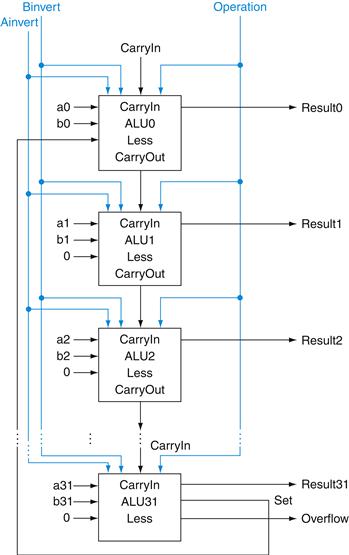

Now that we have completed the 1-bit ALU, the full 32-bit ALU is created by connecting adjacent “black boxes.” Using xi to mean the ith bit of x, Figure B.5.7 shows a 32-bit ALU. Just as a single stone can cause ripples to radiate to the shores of a quiet lake, a single carry out of the least significant bit (Result0) can ripple all the way through the adder, causing a carry out of the most significant bit (Result31). Hence, the adder created by directly linking the carries of 1-bit adders is called a ripple carry adder. We’ll see a faster way to connect the 1-bit adders starting on page B-38.

FIGURE B.5.7 A 32-bit ALU constructed from 32 1-bit ALUs.

CarryOut of the less significant bit is connected to the CarryIn of the more significant bit. This organization is called ripple carry.
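The ripple carry organization can be sketched by chaining 1-bit full adders with a generate loop. Only the adder portion of the ALU is shown. The module names, the internal carry vector `c`, and the compact XOR form of the sum are assumptions made for this illustration.

```verilog
// 1-bit full adder (compact form; equivalent to the sum-of-products equations).
module full_adder_rc (input a, input b, input cin,
                      output sum, output cout);
  assign sum  = a ^ b ^ cin;
  assign cout = (a & b) | (a & cin) | (b & cin);
endmodule

// 32-bit ripple carry adder: the CarryOut of each bit feeds the CarryIn
// of the next more significant bit.
module ripple_carry_adder32 (input [31:0] a, input [31:0] b, input CarryIn,
                             output [31:0] Result, output CarryOut);
  wire [32:0] c;            // c[i] is the CarryIn of bit i
  assign c[0] = CarryIn;
  genvar i;
  generate
    for (i = 0; i < 32; i = i + 1) begin : stage
      full_adder_rc fa (.a(a[i]), .b(b[i]), .cin(c[i]),
                        .sum(Result[i]), .cout(c[i+1]));
    end
  endgenerate
  assign CarryOut = c[32];  // carry out of the most significant bit
endmodule
```

Unlike the arrayed multiplexor, the stages here are not independent: the carry vector makes each stage wait on the one before it, which is why the worst-case delay grows with the number of bits.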

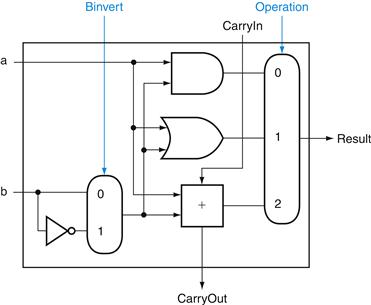

Subtraction is the same as adding the negative version of an operand, and this is how adders perform subtraction. Recall that the shortcut for negating a two’s complement number is to invert each bit (sometimes called the one’s complement) and then add 1. To invert each bit, we simply add a 2:1 multiplexor that chooses between b and $\bar{b}$, as Figure B.5.8 shows.

FIGURE B.5.8 A 1-bit ALU that performs AND, OR, and addition on a and b or a and $\bar{b}$.

By selecting $\bar{b}$ (Binvert = 1) and setting CarryIn to 1 in the least significant bit of the ALU, we get two’s complement subtraction of b from a instead of addition of b to a.

Suppose we connect 32 of these 1-bit ALUs, as we did in Figure B.5.7. The added multiplexor gives the option of b or its inverted value, depending on Binvert, but this is only one step in negating a two’s complement number. Notice that the least significant bit still has a CarryIn signal, even though it’s unnecessary for addition. What happens if we set this CarryIn to 1 instead of 0? The adder will then calculate a + b + 1. By selecting the inverted version of b, we get exactly what we want:

$$a + \bar{b} + 1 = a + (\bar{b} + 1) = a + (-b) = a - b$$

The simplicity of the hardware design of a two’s complement adder helps explain why two’s complement representation has become the universal standard for integer computer arithmetic.
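The effect of Binvert and the least significant CarryIn can be checked with a behavioral sketch. The + operator stands in for the chain of 1-bit adders. The module names and test values are assumptions chosen for the example.

```verilog
// Behavioral sketch: adding a, the selected version of b, and CarryIn.
module add_sub32 (input [31:0] a, input [31:0] b,
                  input Binvert, input CarryIn,
                  output [31:0] Result);
  wire [31:0] b_sel = Binvert ? ~b : b;  // 2:1 multiplexor on b
  assign Result = a + b_sel + CarryIn;   // CarryIn enters at bit 0
endmodule

module add_sub32_tb;
  reg  [31:0] a, b;
  reg  Binvert, CarryIn;
  wire [31:0] Result;
  add_sub32 dut (.a(a), .b(b), .Binvert(Binvert),
                 .CarryIn(CarryIn), .Result(Result));
  initial begin
    a = 32'd10; b = 32'd3;
    Binvert = 0; CarryIn = 0; #1;
    $display("a + b = %0d", Result);     // prints 13
    Binvert = 1; CarryIn = 1; #1;
    $display("a - b = %0d", Result);     // prints 7
    $finish;
  end
endmodule
```

With Binvert = 1 and CarryIn = 1 the adder computes a plus the inverted b plus 1, and the carry out of bit 31 is simply discarded, leaving a − b in the 32-bit result.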

A MIPS ALU also needs a NOR function. Instead of adding a separate gate for NOR, we can reuse much of the hardware already in the ALU, like we did for subtract. The insight comes from the following truth about NOR:

$$\overline{(a + b)} = \bar{a} \cdot \bar{b}$$

That is, NOT (a OR b) is equivalent to NOT a AND NOT b. This fact is called DeMorgan’s theorem and is explored in the exercises in more depth.

Since we have AND and NOT b, we only need to add NOT a to the ALU. Figure B.5.9 shows that change.

FIGURE B.5.9 A 1-bit ALU that performs AND, OR, and addition on a and b or $\bar{a}$ and $\bar{b}$.

By selecting $\bar{a}$ (Ainvert = 1) and $\bar{b}$ (Binvert = 1), we get a NOR b instead of a AND b.

Tailoring the 32-Bit ALU to MIPS

These four operations—add, subtract, AND, OR—are found in the ALU of almost every computer, and the operations of most MIPS instructions can be performed by this ALU. But the design of the ALU is incomplete.

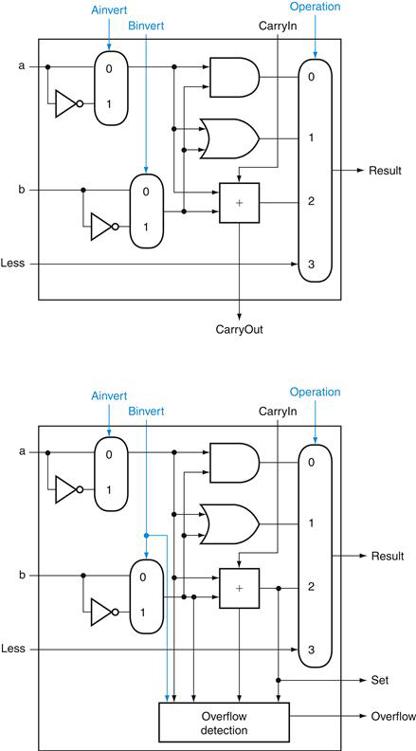

One instruction that still needs support is the set on less than instruction (slt). Recall that the operation produces 1 if rs < rt, and 0 otherwise. Consequently, slt will set all but the least significant bit to 0, with the least significant bit set according to the comparison. For the ALU to perform slt, we first need to expand the three-input multiplexor in Figure B.5.8 to add an input for the slt result. We call that new input Less and use it only for slt.

The top drawing of Figure B.5.10 shows the new 1-bit ALU with the expanded multiplexor. From the description of slt above, we must connect 0 to the Less input for the upper 31 bits of the ALU, since those bits are always set to 0. What remains to consider is how to compare and set the least significant bit for set on less than instructions.

FIGURE B.5.10 (Top) A 1-bit ALU that performs AND, OR, and addition on a and b or $\bar{b}$, and (bottom) a 1-bit ALU for the most significant bit.

The top drawing includes a direct input that is connected to perform the set on less than operation (see Figure B.5.11); the bottom has a direct output from the adder for the less than comparison called Set. (See Exercise B.24 at the end of this Appendix to see how to calculate overflow with fewer inputs.)
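One way to capture the 1-bit ALU of the top drawing is sketched below. The Operation encoding (0 for AND, 1 for OR, 2 for the adder, 3 for Less) is an assumption consistent with the description in the text. The module name and internal wire names are hypothetical.

```verilog
// Sketch of a 1-bit ALU slice with Ainvert, Binvert, and a Less input.
module alu_1bit (input a, input b, input Ainvert, input Binvert,
                 input CarryIn, input Less, input [1:0] Operation,
                 output reg Result, output CarryOut);
  wire a_sel = Ainvert ? ~a : a;          // 2:1 multiplexor on a
  wire b_sel = Binvert ? ~b : b;          // 2:1 multiplexor on b
  wire sum   = a_sel ^ b_sel ^ CarryIn;   // adder sum bit
  assign CarryOut = (a_sel & b_sel) | (a_sel & CarryIn) | (b_sel & CarryIn);

  // Four-input multiplexor controlled by Operation. @(*) makes the block
  // sensitive to every signal it reads.
  always @(*)
    case (Operation)
      2'b00: Result = a_sel & b_sel;      // AND (NOR when both inverts are 1)
      2'b01: Result = a_sel | b_sel;      // OR
      2'b10: Result = sum;                // add (subtract when Binvert = 1)
      2'b11: Result = Less;               // set on less than
    endcase
endmodule
```

The slice for the most significant bit would additionally expose the adder's sum bit as the Set output and include overflow detection, as the bottom drawing describes.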

What happens if we subtract b from a? If the difference is negative, then a < b since

$$(a - b) < 0 \Rightarrow ((a - b) + b) < (0 + b) \Rightarrow a < b$$

We want the least significant bit of a set on less than operation to be a 1 if a < b; that is, a 1 if a − b is negative and a 0 if it’s positive. This desired result corresponds exactly to the sign bit values: 1 means negative and 0 means positive. Following this line of argument, we need only connect the sign bit from the adder output to the least significant bit to get set on less than.

Unfortunately, the Result output from the most significant ALU bit in the top of Figure B.5.10 for the slt operation is not the output of the adder; the ALU output for the slt operation is obviously the input value Less.

Thus, we need a new 1-bit ALU for the most significant bit that has an extra output bit: the adder output. The bottom drawing of Figure B.5.10 shows the design, with this new adder output line called Set, and used only for slt. As long as we need a special ALU for the most significant bit, we added the overflow detection logic since it is also associated with that bit.

Alas, the test of less than is a little more complicated than just described because of overflow, as we explore in the exercises. Figure B.5.11 shows the 32-bit ALU.

FIGURE B.5.11 A 32-bit ALU constructed from the 31 copies of the 1-bit ALU in the top of Figure B.5.10 and one 1-bit ALU in the bottom of that figure.

The Less inputs are connected to 0 except for the least significant bit, which is connected to the Set output of the most significant bit. If the ALU performs a − b and we select the input 3 in the multiplexor in Figure B.5.10, then Result = 0 … 001 if a < b, and Result = 0 … 000 otherwise.

Notice that every time we want the ALU to subtract, we set both CarryIn and Binvert to 1. For adds or logical operations, we want both control lines to be 0. We can therefore simplify control of the ALU by combining the CarryIn and Binvert to a single control line called Bnegate.

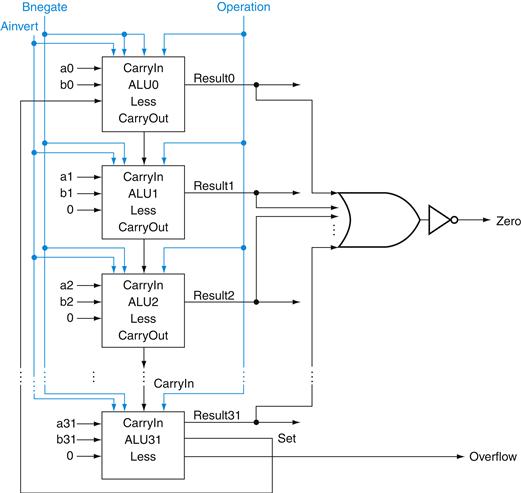

To further tailor the ALU to the MIPS instruction set, we must support conditional branch instructions. These instructions branch either if two registers are equal or if they are unequal. The easiest way to test equality with the ALU is to subtract b from a and then test to see if the result is 0, since

$$(a - b = 0) \Rightarrow a = b$$

Thus, if we add hardware to test if the result is 0, we can test for equality. The simplest way is to OR all the outputs together and then send that signal through an inverter:

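$$\text{Zero} = \overline{(\text{Result}_{31} + \text{Result}_{30} + \cdots + \text{Result}_{2} + \text{Result}_{1} + \text{Result}_{0})}$$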

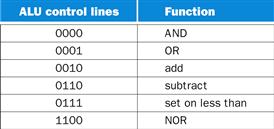

Figure B.5.12 shows the revised 32-bit ALU. We can think of the combination of the 1-bit Ainvert line, the 1-bit Binvert line, and the 2-bit Operation lines as 4-bit control lines for the ALU, telling it to perform add, subtract, AND, OR, or set on less than. Figure B.5.13 shows the ALU control lines and the corresponding ALU operation.

FIGURE B.5.12 The final 32-bit ALU.

This adds a Zero detector to Figure B.5.11.

FIGURE B.5.13 The values of the three ALU control lines, Bnegate, and Operation, and the corresponding ALU operations.

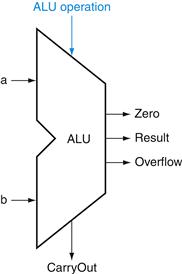

Finally, now that we have seen what is inside a 32-bit ALU, we will use the universal symbol for a complete ALU, as shown in Figure B.5.14.

FIGURE B.5.14 The symbol commonly used to represent an ALU, as shown in Figure B.5.12.

This symbol is also used to represent an adder, so it is normally labeled either with ALU or Adder.

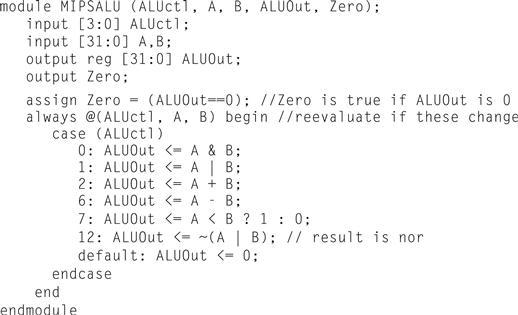

Defining the MIPS ALU in Verilog

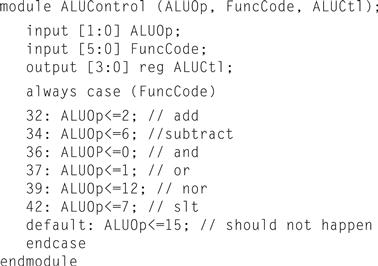

Figure B.5.15 shows how a combinational MIPS ALU might be specified in Verilog; such a specification would probably be compiled using a standard parts library that provided an adder, which could be instantiated. For completeness, we show the ALU control for MIPS in Figure B.5.16, which is used in Chapter 4, where we build a Verilog version of the MIPS datapath.

FIGURE B.5.15 A Verilog behavioral definition of a MIPS ALU.
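Since the figure is not reproduced here, the following is a minimal behavioral sketch of such an ALU, not necessarily the figure's exact listing. The 4-bit control encodings (Ainvert, Bnegate, and the 2-bit Operation) follow the usual MIPS ALU control assignment and should be treated as assumptions, as should the module and port names.

```verilog
// Behavioral sketch of a combinational MIPS-style ALU.
module mips_alu_sketch (input [3:0] ALUctl,
                        input [31:0] A, input [31:0] B,
                        output reg [31:0] ALUOut, output Zero);
  // Zero is 1 exactly when every bit of the result is 0.
  assign Zero = (ALUOut == 32'b0);

  // All inputs are in the sensitivity list, and every path (including
  // default) assigns all 32 bits of ALUOut, so no latch is implied.
  always @(ALUctl, A, B)
    case (ALUctl)
      4'b0000: ALUOut = A & B;                          // AND
      4'b0001: ALUOut = A | B;                          // OR
      4'b0010: ALUOut = A + B;                          // add
      4'b0110: ALUOut = A - B;                          // subtract
      4'b0111: ALUOut = ($signed(A) < $signed(B))       // set on less than
                        ? 32'd1 : 32'd0;
      4'b1100: ALUOut = ~(A | B);                       // NOR
      default: ALUOut = 32'b0;                          // unused encodings
    endcase
endmodule
```

The set on less than case uses a signed comparison rather than the sign bit of A − B, which sidesteps the overflow complication mentioned earlier. A synthesis tool would map the arithmetic operators onto adder components from its library.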

FIGURE B.5.16 The MIPS ALU control: a simple piece of combinational control logic.

The next question is, “How quickly can this ALU add two 32-bit operands?” We can determine the a and b inputs, but the CarryIn input depends on the operation in the adjacent 1-bit adder. If we trace all the way through the chain of dependencies, we connect the most significant bit to the least significant bit, so the most significant bit of the sum must wait for the sequential evaluation of all 32 1-bit adders. This sequential chain reaction is too slow to be used in time-critical hardware. The next section explores how to speed up addition. This topic is not crucial to understanding the rest of the appendix and may be skipped.

Check Yourself

Suppose you wanted to add the operation NOT (a AND b), called NAND. How could the ALU change to support it?

1. No change. You can calculate NAND quickly using the current ALU since $\overline{(a \cdot b)} = \bar{a} + \bar{b}$ and we already have NOT a, NOT b, and OR.

2. You must expand the big multiplexor to add another input, and then add new logic to calculate NAND.

B.6 Faster Addition: Carry Lookahead

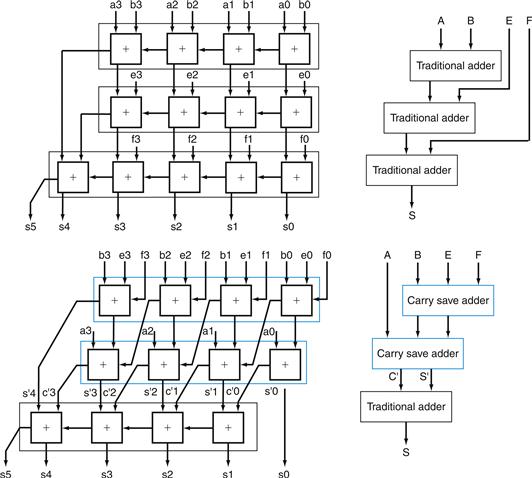

The key to speeding up addition is determining the carry in to the high-order bits sooner. There are a variety of schemes to anticipate the carry so that the worst-case scenario is a function of the $\log_2$ of the number of bits in the adder. These anticipatory signals are faster because they go through fewer gates in sequence, but it takes many more gates to anticipate the proper carry.

A key to understanding fast-carry schemes is to remember that, unlike software, hardware executes in parallel whenever inputs change.

Fast Carry Using “Infinite” Hardware

As we mentioned earlier, any equation can be represented in two levels of logic. Since the only external inputs are the two operands and the CarryIn to the least significant bit of the adder, in theory we could calculate the CarryIn values to all the remaining bits of the adder in just two levels of logic.

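For example, the CarryIn for bit 2 of the adder is exactly the CarryOut of bit 1, so the formula is

$$\text{CarryIn}_2 = (b_1 \cdot \text{CarryIn}_1) + (a_1 \cdot \text{CarryIn}_1) + (a_1 \cdot b_1)$$

Similarly, CarryIn$_1$ is defined as

$$\text{CarryIn}_1 = (b_0 \cdot \text{CarryIn}_0) + (a_0 \cdot \text{CarryIn}_0) + (a_0 \cdot b_0)$$

Using the shorter and more traditional abbreviation of $c_i$ for CarryIn$_i$, we can rewrite the formulas as

$$\begin{aligned} c_2 &= (b_1 \cdot c_1) + (a_1 \cdot c_1) + (a_1 \cdot b_1) \\ c_1 &= (b_0 \cdot c_0) + (a_0 \cdot c_0) + (a_0 \cdot b_0) \end{aligned}$$

Substituting the definition of $c_1$ for the first equation results in this formula:

$$\begin{aligned} c_2 = {} & (a_1 \cdot a_0 \cdot b_0) + (a_1 \cdot a_0 \cdot c_0) + (a_1 \cdot b_0 \cdot c_0) \\ & + (b_1 \cdot a_0 \cdot b_0) + (b_1 \cdot a_0 \cdot c_0) + (b_1 \cdot b_0 \cdot c_0) + (a_1 \cdot b_1) \end{aligned}$$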

You can imagine how the equation expands as we get to higher bits in the adder; it grows rapidly with the number of bits. This complexity is reflected in the cost of the hardware for fast carry, making this simple scheme prohibitively expensive for wide adders.

Fast Carry Using the First Level of Abstraction: Propagate and Generate

Most fast-carry schemes limit the complexity of the equations to simplify the hardware, while still making substantial speed improvements over ripple carry. One such scheme is a carry-lookahead adder. In Chapter 1, we said computer systems cope with complexity by using levels of abstraction. A carry-lookahead adder relies on levels of abstraction in its implementation.

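Let’s factor our original equation as a first step:

$$c_{i+1} = (b_i \cdot c_i) + (a_i \cdot c_i) + (a_i \cdot b_i) = (a_i \cdot b_i) + (a_i + b_i) \cdot c_i$$

If we were to rewrite the equation for $c_2$ using this formula, we would see some repeated patterns:

$$c_2 = (a_1 \cdot b_1) + (a_1 + b_1) \cdot ((a_0 \cdot b_0) + (a_0 + b_0) \cdot c_0)$$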

Note the repeated appearance of $(a_i \cdot b_i)$ and $(a_i + b_i)$ in the formula above. These two important factors are traditionally called generate ($g_i$) and propagate ($p_i$):

$$\begin{aligned} g_i &= a_i \cdot b_i \\ p_i &= a_i + b_i \end{aligned}$$

Using them to define $c_{i+1}$, we get

$$c_{i+1} = g_i + p_i \cdot c_i$$

To see where the signals get their names, suppose $g_i$ is 1. Then

$$c_{i+1} = g_i + p_i \cdot c_i = 1 + p_i \cdot c_i = 1$$

That is, the adder generates a CarryOut ($c_{i+1}$) independent of the value of CarryIn ($c_i$). Now suppose that $g_i$ is 0 and $p_i$ is 1. Then

$$c_{i+1} = g_i + p_i \cdot c_i = 0 + 1 \cdot c_i = c_i$$

That is, the adder propagates CarryIn to a CarryOut. Putting the two together, CarryIn$_{i+1}$ is a 1 if either $g_i$ is 1 or both $p_i$ is 1 and CarryIn$_i$ is 1.

As an analogy, imagine a row of dominoes set on edge. The end domino can be tipped over by pushing one far away, provided there are no gaps between the two. Similarly, a carry out can be made true by a generate far away, provided all the propagates between them are true.

Relying on the definitions of propagate and generate as our first level of abstraction, we can express the CarryIn signals more economically. Let’s show it for 4 bits:

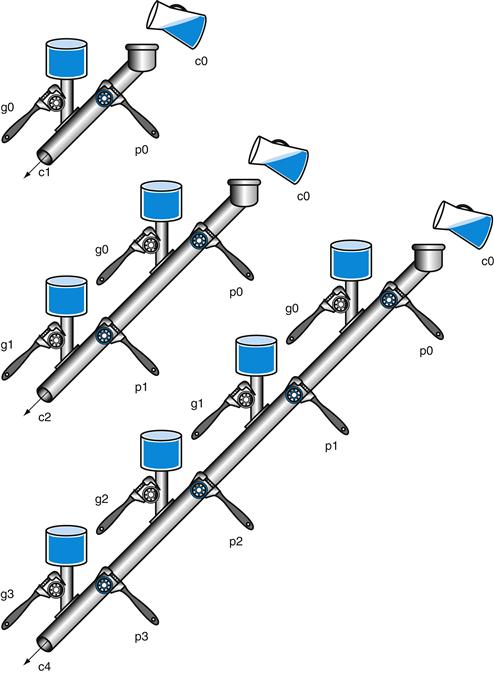

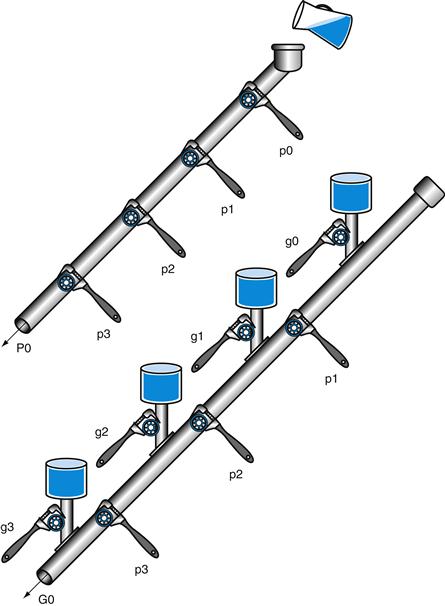

These equations just represent common sense: CarryIni is a 1 if some earlier adder generates a carry and all intermediary adders propagate a carry. Figure B.6.1 uses plumbing to try to explain carry lookahead.

FIGURE B.6.1 A plumbing analogy for carry lookahead for 1 bit, 2 bits, and 4 bits using water pipes and valves.

The wrenches are turned to open and close valves. Water is shown in color. The output of the pipe (ci + 1) will be full if either the nearest generate value (gi) is turned on or if the ith propagate value (pi) is on and there is water further upstream, either from an earlier generate or a propagate with water behind it. CarryIn (c0) can result in a carry out without the help of any generates, but with the help of all propagates.

Even this simplified form leads to large equations and, hence, considerable logic even for a 16-bit adder. Let’s try moving to two levels of abstraction.

Fast Carry Using the Second Level of Abstraction

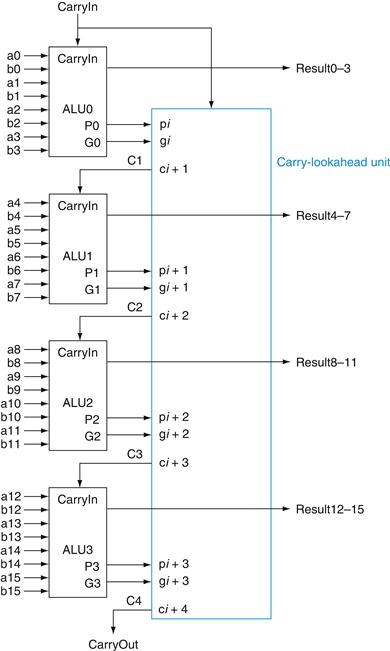

First, we consider this 4-bit adder with its carry-lookahead logic as a single building block. If we connect them in ripple carry fashion to form a 16-bit adder, the add will be faster than the original with a little more hardware.

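To go faster, we’ll need carry lookahead at a higher level. To perform carry lookahead for 4-bit adders, we need propagate and generate signals at this higher level. Here they are for the four 4-bit adder blocks:

$$\begin{aligned}
P_0 &= p_3 \cdot p_2 \cdot p_1 \cdot p_0 \\
P_1 &= p_7 \cdot p_6 \cdot p_5 \cdot p_4 \\
P_2 &= p_{11} \cdot p_{10} \cdot p_9 \cdot p_8 \\
P_3 &= p_{15} \cdot p_{14} \cdot p_{13} \cdot p_{12}
\end{aligned}$$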

That is, the “super” propagate signal for the 4-bit abstraction (Pi) is true only if each of the bits in the group will propagate a carry.

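For the “super” generate signal (Gi), we care only if there is a carry out of the most significant bit of the 4-bit group. This obviously occurs if generate is true for that most significant bit; it also occurs if an earlier generate is true and all the intermediate propagates, including that of the most significant bit, are also true:

$$\begin{aligned}
G_0 &= g_3 + (p_3 \cdot g_2) + (p_3 \cdot p_2 \cdot g_1) + (p_3 \cdot p_2 \cdot p_1 \cdot g_0) \\
G_1 &= g_7 + (p_7 \cdot g_6) + (p_7 \cdot p_6 \cdot g_5) + (p_7 \cdot p_6 \cdot p_5 \cdot g_4) \\
G_2 &= g_{11} + (p_{11} \cdot g_{10}) + (p_{11} \cdot p_{10} \cdot g_9) + (p_{11} \cdot p_{10} \cdot p_9 \cdot g_8) \\
G_3 &= g_{15} + (p_{15} \cdot g_{14}) + (p_{15} \cdot p_{14} \cdot g_{13}) + (p_{15} \cdot p_{14} \cdot p_{13} \cdot g_{12})
\end{aligned}$$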

Figure B.6.2 updates our plumbing analogy to show P0 and G0.

FIGURE B.6.2 A plumbing analogy for the next-level carry-lookahead signals P0 and G0.

P0 is open only if all four propagates (pi) are open, while water flows in G0 only if at least one generate (gi) is open and all the propagates downstream from that generate are open.

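Then the equations at this higher level of abstraction for the carry in for each 4-bit group of the 16-bit adder (C1, C2, C3, C4 in Figure B.6.3) are very similar to the carry out equations for each bit of the 4-bit adder (c1, c2, c3, c4) on page B-40:

$$\begin{aligned}
C_1 &= G_0 + (P_0 \cdot c_0) \\
C_2 &= G_1 + (P_1 \cdot G_0) + (P_1 \cdot P_0 \cdot c_0) \\
C_3 &= G_2 + (P_2 \cdot G_1) + (P_2 \cdot P_1 \cdot G_0) + (P_2 \cdot P_1 \cdot P_0 \cdot c_0) \\
C_4 &= G_3 + (P_3 \cdot G_2) + (P_3 \cdot P_2 \cdot G_1) + (P_3 \cdot P_2 \cdot P_1 \cdot G_0) + (P_3 \cdot P_2 \cdot P_1 \cdot P_0 \cdot c_0)
\end{aligned}$$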

FIGURE B.6.3 Four 4-bit ALUs using carry lookahead to form a 16-bit adder.

Note that the carries come from the carry-lookahead unit, not from the 4-bit ALUs.

Figure B.6.3 shows 4-bit adders connected with such a carry-lookahead unit. The exercises explore the speed differences between these carry schemes, different notations for multibit propagate and generate signals, and the design of a 64-bit adder.
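As a rough software model of the arrangement in Figure B.6.3, the sketch below derives the "super" signals for each 4-bit group and then the group carries C1 through C4 from them. It is a Python illustration only; the function names are hypothetical, and c0 defaults to 0 as an assumption.

```python
def group_pg(g: list[int], p: list[int]) -> tuple[int, int]:
    """'Super' propagate and generate for one 4-bit group (index 0 = group LSB)."""
    P = p[3] & p[2] & p[1] & p[0]
    G = g[3] | (p[3] & g[2]) | (p[3] & p[2] & g[1]) | (p[3] & p[2] & p[1] & g[0])
    return P, G


def lookahead_unit(P: list[int], G: list[int], c0: int) -> tuple[int, int, int, int]:
    """Group carries C1..C4 computed from the group signals and c0 only."""
    C1 = G[0] | (P[0] & c0)
    C2 = G[1] | (P[1] & G[0]) | (P[1] & P[0] & c0)
    C3 = G[2] | (P[2] & G[1]) | (P[2] & P[1] & G[0]) | (P[2] & P[1] & P[0] & c0)
    C4 = (G[3] | (P[3] & G[2]) | (P[3] & P[2] & G[1]) | (P[3] & P[2] & P[1] & G[0])
          | (P[3] & P[2] & P[1] & P[0] & c0))
    return C1, C2, C3, C4


def group_carries_16bit(a: int, b: int, c0: int = 0) -> tuple[int, int, int, int]:
    """Two-level lookahead: per-bit g/p, then per-group P/G, then C1..C4."""
    g = [((a >> i) & 1) & ((b >> i) & 1) for i in range(16)]
    p = [((a >> i) & 1) | ((b >> i) & 1) for i in range(16)]
    groups = [group_pg(g[4 * k:4 * k + 4], p[4 * k:4 * k + 4]) for k in range(4)]
    P = [pg[0] for pg in groups]
    G = [pg[1] for pg in groups]
    return lookahead_unit(P, G, c0)
```

Calling `group_carries_16bit(a, b)` returns the four carries the lookahead unit would send to the 4-bit blocks; the last element, C4, is the carry out of bit 15.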

Both Levels of the Propagate and Generate

Example

Determine the gi, pi, Pi, and Gi values of these two 16-bit numbers:

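$$\begin{aligned}
a &: 0001\ 1010\ 0011\ 0011_{\text{two}} \\
b &: 1110\ 0101\ 1110\ 1011_{\text{two}}
\end{aligned}$$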

Also, what is CarryOut15 (C4)?

Answer

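Aligning the bits makes it easy to see the values of generate gi (ai · bi) and propagate pi (ai + bi):

$$\begin{array}{ll}
a: & 0001\ 1010\ 0011\ 0011 \\
b: & 1110\ 0101\ 1110\ 1011 \\
g_i: & 0000\ 0000\ 0010\ 0011 \\
p_i: & 1111\ 1111\ 1111\ 1011
\end{array}$$

where the bits are numbered 15 to 0 from left to right. Next, the “super” propagates (P3, P2, P1, P0) are simply the AND of the lower-level propagates:

$$\begin{aligned}
P_3 &= 1 \cdot 1 \cdot 1 \cdot 1 = 1 \\
P_2 &= 1 \cdot 1 \cdot 1 \cdot 1 = 1 \\
P_1 &= 1 \cdot 1 \cdot 1 \cdot 1 = 1 \\
P_0 &= 1 \cdot 0 \cdot 1 \cdot 1 = 0
\end{aligned}$$

The “super” generates are more complex, so use the following equations:

$$\begin{aligned}
G_0 &= g_3 + (p_3 \cdot g_2) + (p_3 \cdot p_2 \cdot g_1) + (p_3 \cdot p_2 \cdot p_1 \cdot g_0) \\
&= 0 + (1 \cdot 0) + (1 \cdot 0 \cdot 1) + (1 \cdot 0 \cdot 1 \cdot 1) = 0 + 0 + 0 + 0 = 0 \\
G_1 &= g_7 + (p_7 \cdot g_6) + (p_7 \cdot p_6 \cdot g_5) + (p_7 \cdot p_6 \cdot p_5 \cdot g_4) \\
&= 0 + (1 \cdot 0) + (1 \cdot 1 \cdot 1) + (1 \cdot 1 \cdot 1 \cdot 0) = 0 + 0 + 1 + 0 = 1 \\
G_2 &= g_{11} + (p_{11} \cdot g_{10}) + (p_{11} \cdot p_{10} \cdot g_9) + (p_{11} \cdot p_{10} \cdot p_9 \cdot g_8) \\
&= 0 + (1 \cdot 0) + (1 \cdot 1 \cdot 0) + (1 \cdot 1 \cdot 1 \cdot 0) = 0 + 0 + 0 + 0 = 0 \\
G_3 &= g_{15} + (p_{15} \cdot g_{14}) + (p_{15} \cdot p_{14} \cdot g_{13}) + (p_{15} \cdot p_{14} \cdot p_{13} \cdot g_{12}) \\
&= 0 + (1 \cdot 0) + (1 \cdot 1 \cdot 0) + (1 \cdot 1 \cdot 1 \cdot 0) = 0 + 0 + 0 + 0 = 0
\end{aligned}$$

Finally, CarryOut15 is

$$\begin{aligned}
C_4 &= G_3 + (P_3 \cdot G_2) + (P_3 \cdot P_2 \cdot G_1) + (P_3 \cdot P_2 \cdot P_1 \cdot G_0) + (P_3 \cdot P_2 \cdot P_1 \cdot P_0 \cdot c_0) \\
&= 0 + (1 \cdot 0) + (1 \cdot 1 \cdot 1) + (1 \cdot 1 \cdot 1 \cdot 0) + (1 \cdot 1 \cdot 1 \cdot 0 \cdot 0) \\
&= 0 + 0 + 1 + 0 + 0 = 1
\end{aligned}$$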

Hence, there is a carry out when adding these two 16-bit numbers.

The reason carry lookahead can make carries faster is that all logic begins evaluating the moment the clock cycle begins, and the result will not change once the output of each gate stops changing. By taking the shortcut of going through fewer gates to send the carry in signal, the output of the gates will stop changing sooner, and hence the time for the adder can be less.

To appreciate the importance of carry lookahead, we need to calculate the relative performance between it and ripple carry adders.

Speed of Ripple Carry versus Carry Lookahead

Example

One simple way to model time for logic is to assume each AND or OR gate takes the same time for a signal to pass through it. Time is estimated by simply counting the number of gates along the path through a piece of logic. Compare the number of gate delays for paths of two 16-bit adders, one using ripple carry and one using two-level carry lookahead.

Answer

Figure B.5.5 on page B-28 shows that the carry out signal takes two gate delays per bit. Then the number of gate delays between a carry in to the least significant bit and the carry out of the most significant is 16 × 2 = 32.

For carry lookahead, the carry out of the most significant bit is just C4, defined in the example. It takes two levels of logic to specify C4 in terms of Pi and Gi (the OR of several AND terms). Pi is specified in one level of logic (AND) using pi, and Gi is specified in two levels using pi and gi, so the worst case for this next level of abstraction is two levels of logic. pi and gi are each one level of logic, defined in terms of ai and bi. If we assume one gate delay for each level of logic in these equations, the worst case is 2 + 2 + 1 = 5 gate delays.

Hence, for the path from carry in to carry out, the 16-bit addition by a carry-lookahead adder is about six times faster (32 gate delays versus 5), using this very simple estimate of hardware speed.
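The gate-delay count in this example can be written down as a few lines of arithmetic. The Python sketch below simply restates the example's model, in which every AND or OR gate costs one delay; the function names are hypothetical and the model says nothing about real circuit timing.

```python
def ripple_carry_delay(bits: int) -> int:
    """Carry-in to carry-out path of a ripple adder: two gate delays per bit."""
    return 2 * bits


def two_level_lookahead_delay() -> int:
    """Carry path of the 16-bit, two-level carry-lookahead adder in the example."""
    bit_level = 1    # p_i and g_i: one gate each, from a_i and b_i
    group_level = 2  # worst case G_i: an OR of AND terms over p_i and g_i
    top_level = 2    # C4: an OR of AND terms over P_i, G_i, and c0
    return bit_level + group_level + top_level


ripple = ripple_carry_delay(16)          # 32
lookahead = two_level_lookahead_delay()  # 5
speedup = ripple / lookahead             # 6.4
```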

Summary

Carry lookahead offers a faster path than waiting for the carries to ripple through all 32 1-bit adders. This faster path is paved by two signals, generate and propagate. The former creates a carry regardless of the carry input, and the latter passes a carry along. Carry lookahead also gives another example of how abstraction is important in computer design to cope with complexity.

Check Yourself

Using the simple estimate of hardware speed above with gate delays, what is the relative performance of a ripple carry 8-bit add versus a 64-bit add using carry-lookahead logic?

1. A 64-bit carry-lookahead adder is three times faster: 8-bit adds are 16 gate delays and 64-bit adds are 7 gate delays.

2. They are about the same speed, since 64-bit adds need more levels of logic in the 16-bit adder.

3. 8-bit adds are faster than 64 bits, even with carry lookahead.

Elaboration

We have now accounted for all but one of the arithmetic and logical operations for the core MIPS instruction set: the ALU in Figure B.5.14 omits support of shift instructions. It would be possible to widen the ALU multiplexor to include a left shift by 1 bit or a right shift by 1 bit. But hardware designers have created a circuit called a barrel shifter, which can shift from 1 to 31 bits in no more time than it takes to add two 32-bit numbers, so shifting is normally done outside the ALU.

Elaboration

The logic equation for the Sum output of the full adder on page B-28 can be expressed more simply by using a more powerful gate than AND and OR. An exclusive OR gate is true if the two operands disagree; that is,

$$x \neq y \Rightarrow 1 \quad \text{and} \quad x == y \Rightarrow 0$$

In some technologies, exclusive OR is more efficient than two levels of AND and OR gates. Using the symbol ⊕ to represent exclusive OR, here is the new equation:

$$\text{Sum} = a \oplus b \oplus \text{CarryIn}$$

Also, we have drawn the ALU the traditional way, using gates. Computers are designed today in CMOS transistors, which are basically switches. CMOS ALUs and barrel shifters take advantage of these switches and have many fewer multiplexors than shown in our designs, but the design principles are similar.
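The claim that the exclusive OR form of Sum matches the two-level AND/OR form can be checked exhaustively over the eight input combinations. This is a small Python sketch; `sum_and_or` is assumed to be the usual sum-of-products expression for a full adder's Sum output, and both function names are illustrative.

```python
from itertools import product


def sum_and_or(a: int, b: int, carry_in: int) -> int:
    """Sum as an OR of four AND terms: true when an odd number of inputs are 1."""
    na, nb, nc = 1 - a, 1 - b, 1 - carry_in  # inverted inputs
    return ((a & nb & nc) | (na & b & nc) | (na & nb & carry_in)
            | (a & b & carry_in))


def sum_xor(a: int, b: int, carry_in: int) -> int:
    """Sum using exclusive OR: a XOR b XOR CarryIn."""
    return a ^ b ^ carry_in


# Both forms agree with each other and with arithmetic on every input row.
for a, b, carry_in in product((0, 1), repeat=3):
    assert sum_and_or(a, b, carry_in) == sum_xor(a, b, carry_in) == (a + b + carry_in) % 2
```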

Elaboration

Using lowercase and uppercase to distinguish the hierarchy of generate and propagate symbols breaks down when you have more than two levels. An alternate notation that scales is gi..j and pi..j for the generate and propagate signals for bits i to j. Thus, g1..1 is the generate signal for bit 1, g4..1 is for bits 4 to 1, and g16..1 is for bits 16 to 1.
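One way to see why the range notation scales is that a range signal can be built up one bit at a time from the per-bit signals. The Python sketch below is an illustration under that assumption; `range_gp` is a hypothetical helper, and it numbers bits from 0 (as the per-bit signals in this section do), whereas the example in the elaboration numbers them from 1.

```python
def range_gp(g: list[int], p: list[int], hi: int, lo: int) -> tuple[int, int]:
    """Return (g_hi..lo, p_hi..lo) for bits lo through hi, with lo <= hi."""
    G, P = g[lo], p[lo]
    for k in range(lo + 1, hi + 1):
        G = g[k] | (p[k] & G)  # bit k generates, or propagates a lower generate
        P = p[k] & P           # the range propagates only if every bit does
    return G, P


def carry_out_of_range(g: list[int], p: list[int], hi: int, lo: int, carry_in: int) -> int:
    """Carry out of bit hi, given the carry into bit lo."""
    G, P = range_gp(g, p, hi, lo)
    return G | (P & carry_in)
```

With this helper, `range_gp(g, p, 3, 0)` yields the same values as the uppercase G0 and P0 of the two-level scheme, and wider ranges need no new letters.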

B.7 Clocks

Before we discuss memory elements and sequential logic, it is useful to discuss briefly the topic of clocks. This short section introduces the topic and is similar to the discussion found in Section 4.2. More details on clocking and timing methodologies are presented in Section B.11.

edge-triggered clocking

A clocking scheme in which all state changes occur on a clock edge.

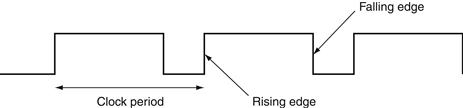

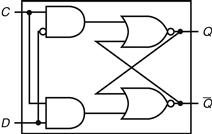

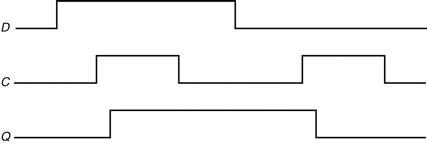

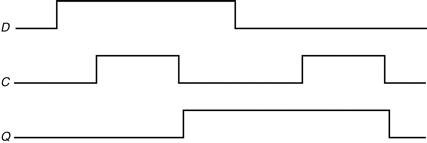

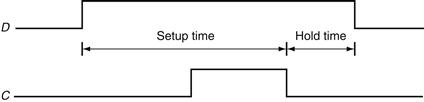

Clocks are needed in sequential logic to decide when an element that contains state should be updated. A clock is simply a free-running signal with a fixed cycle time; the clock frequency is simply the inverse of the cycle time. As shown in Figure B.7.1, the clock cycle time or clock period is divided into two portions: when the clock is high and when the clock is low. In this text, we use only edge-triggered clocking. This means that all state changes occur on a clock edge. We use an edge-triggered methodology because it is simpler to explain. Depending on the technology, it may or may not be the best choice for a clocking methodology.

clocking methodology

The approach used to determine when data is valid and stable relative to the clock.

FIGURE B.7.1 A clock signal oscillates between high and low values.

The clock period is the time for one full cycle. In an edge-triggered design, either the rising or falling edge of the clock is active and causes state to be changed.

In an edge-triggered methodology, either the rising edge or the falling edge of the clock is active and causes state changes to occur. As we will see in the next section, the state elements in an edge-triggered design are implemented so that the contents of the state elements only change on the active clock edge. The choice of which edge is active is influenced by the implementation technology and does not affect the concepts involved in designing the logic.

state element

A memory element.

The clock edge acts as a sampling signal, causing the value of the data input to a state element to be sampled and stored in the state element. Using an edge trigger means that the sampling process is essentially instantaneous, eliminating problems that could occur if signals were sampled at slightly different times.

synchronous system

A memory system that employs clocks and where data signals are read only when the clock indicates that the signal values are stable.

The major constraint in a clocked system, also called a synchronous system, is that the signals that are written into state elements must be valid when the active clock edge occurs. A signal is valid if it is stable (i.e., not changing), and the value will not change again until the inputs change. Since combinational circuits cannot have feedback, if the inputs to a combinational logic unit are not changed, the outputs will eventually become valid.

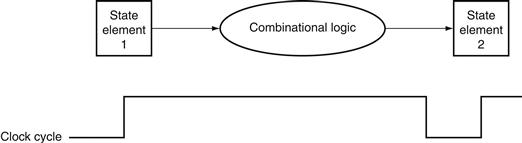

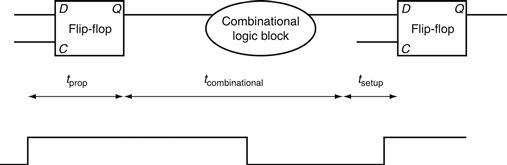

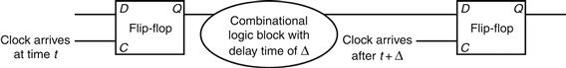

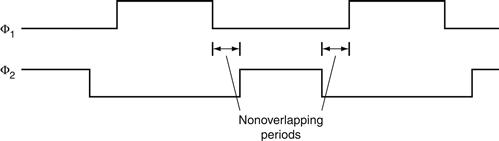

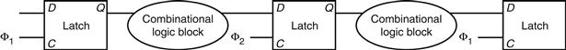

Figure B.7.2 shows the relationship among the state elements and the combinational logic blocks in a synchronous, sequential logic design. The state elements, whose outputs change only after the clock edge, provide valid inputs to the combinational logic block. To ensure that the values written into the state elements on the active clock edge are valid, the clock must have a long enough period so that all the signals in the combinational logic block stabilize before the clock edge samples those values for storage in the state elements. This constraint sets a lower bound on the length of the clock period, which must be long enough for all state element inputs to be valid.

FIGURE B.7.2 The inputs to a combinational logic block come from a state element, and the outputs are written into a state element.

The clock edge determines when the contents of the state elements are updated.
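The lower bound on the clock period can be illustrated by finding the longest path through a block of combinational logic. The Python sketch below is a simplified model under stated assumptions: every gate has the same delay, inputs from state elements are valid at time 0, the logic has no feedback, and effects such as setup time and clock skew are ignored. The netlist format and all names are hypothetical.

```python
from functools import lru_cache


def settling_time(netlist: dict[str, tuple[str, ...]], gate_delay: float = 1.0) -> float:
    """Time until every gate output is stable: the longest input-to-output path."""

    @lru_cache(maxsize=None)
    def arrival(signal: str) -> float:
        if signal not in netlist:  # driven by a state element: valid at t = 0
            return 0.0
        return gate_delay + max(arrival(src) for src in netlist[signal])

    return max(arrival(signal) for signal in netlist)


def clock_period_ok(period: float, netlist: dict[str, tuple[str, ...]],
                    gate_delay: float = 1.0) -> bool:
    """True if the logic has stabilized before the next active clock edge."""
    return period >= settling_time(netlist, gate_delay)


# Each entry maps a gate's output to its inputs; this models c1 = g0 + (p0 . c0).
CARRY_LOGIC = {
    "g0": ("a0", "b0"),  # AND
    "p0": ("a0", "b0"),  # OR
    "t": ("p0", "c0"),   # AND
    "c1": ("g0", "t"),   # OR
}
```

Here `settling_time(CARRY_LOGIC)` is 3 gate delays (a0 or b0 through p0, then t, then c1), so under this model any clock period shorter than that would sample c1 before it is valid.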

In the rest of this appendix, as well as in Chapter 4, we usually omit the clock signal, since we are assuming that all state elements are updated on the same clock edge. Some state elements will be written on every clock edge, while others will be written only under certain conditions (such as a register being updated). In such cases, we will have an explicit write signal for that state element. The write signal must still be gated with the clock so that the update occurs only on the clock edge if the write signal is active. We will see how this is done and used in the next section.

One other advantage of an edge-triggered methodology is that it is possible to have a state element that is used as both an input and output to the same combinational logic block, as shown in Figure B.7.3. In practice, care must be taken to prevent races in such situations and to ensure that the clock period is long enough; this topic is discussed further in Section B.11.

FIGURE B.7.3 An edge-triggered methodology allows a state element to be read and written in the same clock cycle without creating a race that could lead to undetermined data values.

Of course, the clock cycle must still be long enough so that the input values are stable when the active clock edge occurs.
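The arrangement of Figure B.7.3, in which a state element feeds the same combinational logic that computes its next value, can be mimicked in software. The Python sketch below is a behavioral illustration only: it assumes the rising edge is the active one, uses an incrementer as the example logic, and the class and function names are hypothetical.

```python
class EdgeTriggeredRegister:
    """State element whose contents change only on a rising clock edge."""

    def __init__(self, initial: int = 0) -> None:
        self.q = initial     # stored value, visible on the output
        self._prev_clk = 0   # clock level seen on the previous call

    def tick(self, clk: int, d: int) -> int:
        """Sample d on a 0 -> 1 transition of clk; otherwise hold the old value."""
        if self._prev_clk == 0 and clk == 1:
            self.q = d
        self._prev_clk = clk
        return self.q


def run_counter(cycles: int, width: int = 4) -> list[int]:
    """Register output after each clock cycle when the logic computes q + 1."""
    reg = EdgeTriggeredRegister(0)
    history = []
    for _ in range(cycles):
        for clk in (0, 1):  # low portion, then high portion of the clock period
            next_value = (reg.q + 1) % (1 << width)  # logic reads the old state
            reg.tick(clk, next_value)
        history.append(reg.q)
    return history


if __name__ == "__main__":
    print(run_counter(5))  # [1, 2, 3, 4, 5]
```

Because the register samples its input only at the edge, the value read during a cycle is always the old one, and the new value replaces it exactly once per cycle.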

Now that we have discussed how clocking is used to update state elements, we can discuss how to construct the state elements.

Elaboration

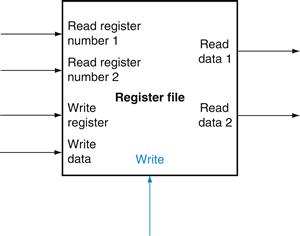

Occasionally, designers find it useful to have a small number of state elements that change on the opposite clock edge from the majority of the state elements. Doing so requires extreme care, because such an approach has effects on both the inputs and the outputs of the state element. Why then would designers ever do this? Consider the case where the amount of combinational logic before and after a state element is small enough so that each could operate in one-half clock cycle, rather than the more usual full clock cycle. Then the state element can be written on the clock edge corresponding to a half clock cycle, since the inputs and outputs will both be usable after one-half clock cycle. One common place where this technique is used is in register files, where simply reading or writing the register file can often be done in half the normal clock cycle. Chapter 4 makes use of this idea to reduce the pipelining overhead.

register file

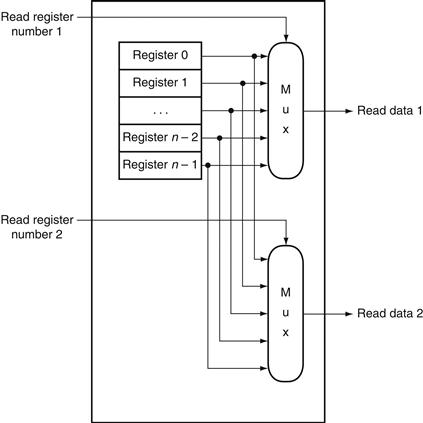

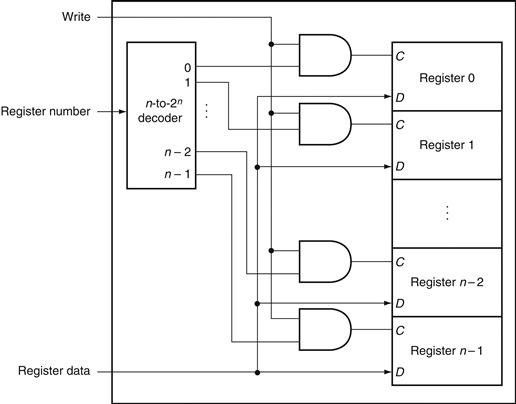

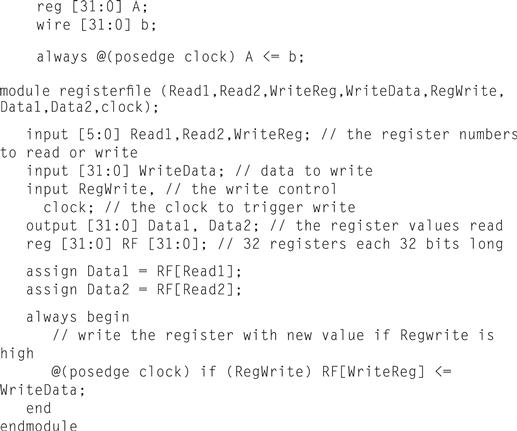

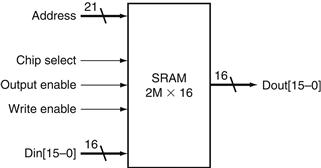

A state element that consists of a set of registers that can be read and written by supplying a register number to be accessed.

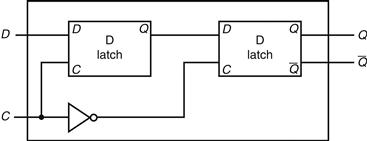

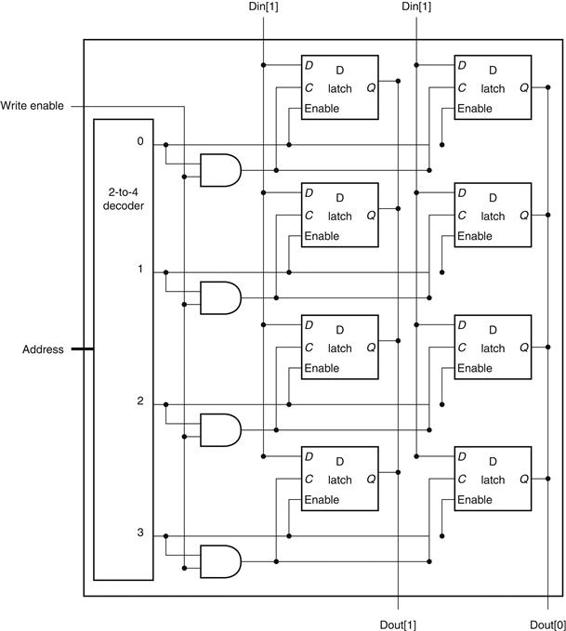

B.8 Memory Elements: Flip-Flops, Latches, and Registers

In this section and the next, we discuss the basic principles behind memory elements, starting with flip-flops and latches, moving on to register files, and finishing with memories. All memory elements store state: the output from any memory element depends both on the inputs and on the value that has been stored inside the memory element. Thus all logic blocks containing a memory element contain state and are sequential.