Mastering Hyper-V 2012 R2 with System Center and Windows Azure (2014)

Chapter 3. Virtual Networking

This chapter covers the networking elements that enable virtual machines to communicate with each other and with the rest of your environment. It covers features that are specific to virtual machines as well as network technologies in the operating system that can bring additional benefit.

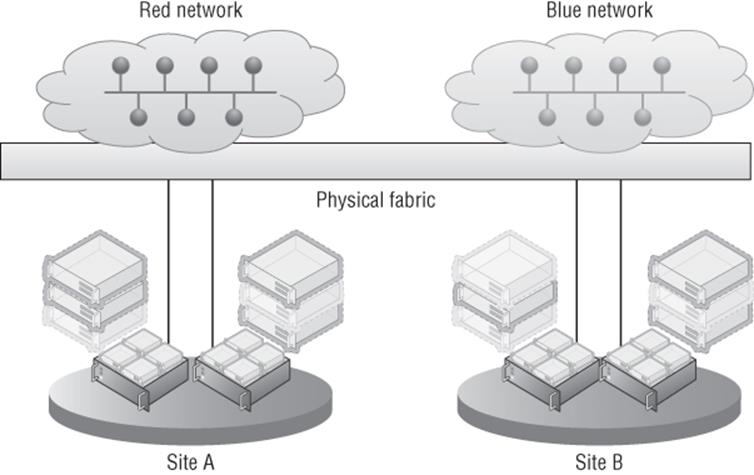

Windows Server 2012 introduced network virtualization, which closes the remaining gap between virtualization and the goal of complete abstraction of the virtual machine from the underlying fabric. Network virtualization allows virtual machines to be abstracted from the physical network fabric, allowing complete isolation between virtual networks and the ability to use IP schemes independently of the physical network fabric. This technology will be covered in detail along with all the various options available to you.

In this chapter, you will learn to

· Architect the right network design for your Hyper-V hosts and virtual machines using the options available

· Identify when to use the types of NVGRE Gateways

· Leverage SCVMM 2012 R2 for many networking tasks

Virtual Switch Fundamentals

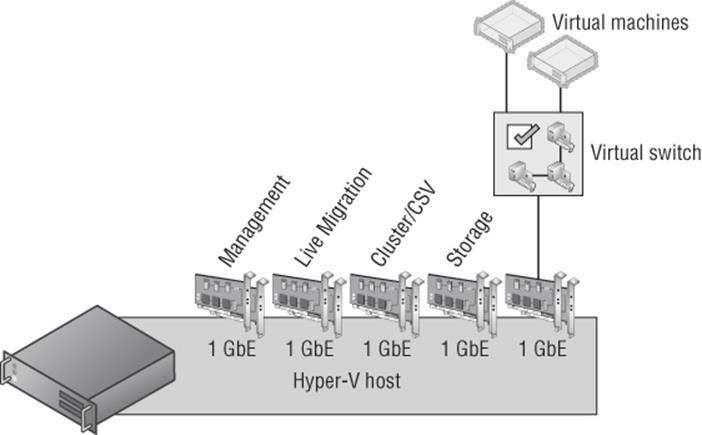

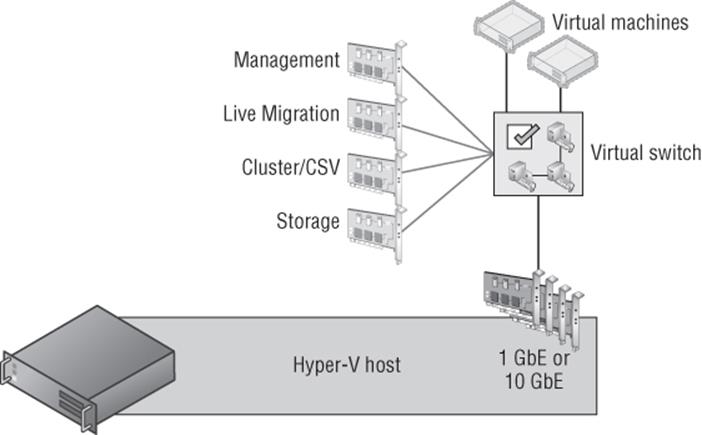

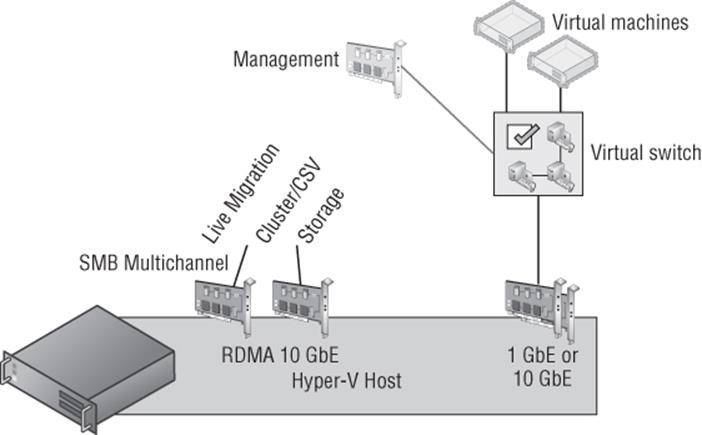

A typical server has one or more network adapters that are configured with an IPv4 and IPv6 address, either statically or dynamically, using services such as Dynamic Host Configuration Protocol (DHCP). The server may be part of a VLAN to provide isolation and control of broadcast traffic. It may require different network connections to connect to different networks, such as a separate, nonrouted network for cluster communications between servers in a failover cluster; a separate network for iSCSI traffic; a separate management network; and so on. With virtualization, the requirements for network connectivity are just as important as with a physical server. However, there are additional options available because essentially there are multiple server instances on a single physical asset, and in some cases they just need to communicate with each other and not externally to the virtualization host.

Three Types of Virtual Switch

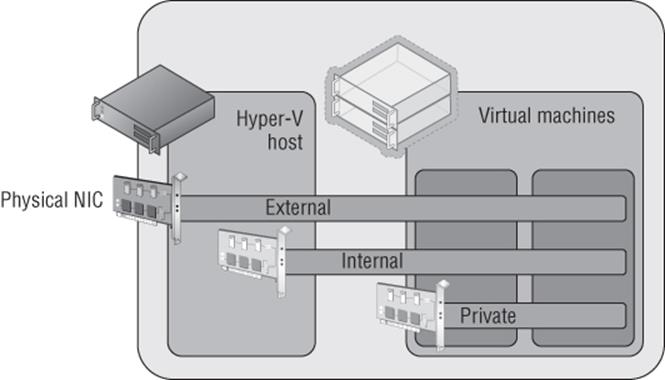

Virtual machines have a number of virtualized resources, and one type is the virtual network adapter (as discussed in the previous chapter, there are actually two types of network adapter for a generation 1 virtual machine, but their connectivity options are the same). One or more virtual network adapters are added to a virtual machine and then each virtual adapter is attached to a virtual switch that was created at the Hyper-V host level. A Hyper-V host can have many virtual switches created. There are three types of virtual switches available: external, internal, and private, as shown in Figure 3.1.

1. External Virtual Networks

These are bound to a physical network card in the host. Virtual machines connected to an external switch access the physical network through the physical NIC that is bound to that switch. Virtual machines on the same virtual switch can also communicate with each other, and virtual machines on different switches can communicate if the physical networks behind those switches can reach each other, for example through routing. The virtual machines each see a virtual network device. The Hyper-V host still sees the physical network adapter but no longer uses it directly: the adapter is bound only to the Hyper-V extensible virtual switch, which means it is being used by a Hyper-V virtual switch.

It is also possible when creating a virtual switch to enable the Hyper-V host itself, the management OS, to continue using the network adapter even though it has been assigned to a virtual switch. Sharing the adapter works by creating a virtual network adapter in the management partition that is connected to the Hyper-V virtual switch, so all communication still goes through the virtual switch, which exclusively owns the physical network adapter. In Windows Server 2012, it's actually possible to create multiple virtual network adapters in the management partition, which opens up some new configuration options and scenarios that I will cover later in this chapter. If you have only a single network adapter in the Hyper-V host, you should definitely select the option to share the network adapter with the management operating system. The option to share the network adapter can be enabled or disabled at any time after the external switch has been created.
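As a rough sketch of the sharing behavior described above, the following PowerShell toggles management OS sharing on an existing external switch and then adds a second virtual network adapter to the management partition. It assumes a switch named External Switch already exists on the host; the adapter name LiveMigration is purely illustrative.

```powershell
# Assumption: an external switch named "External Switch" already exists on this host.

# Enable sharing, which creates a virtual network adapter in the management OS
Set-VMSwitch -Name "External Switch" -AllowManagementOS $true

# Add an additional virtual network adapter to the management partition
# ("LiveMigration" is a hypothetical name chosen for illustration)
Add-VMNetworkAdapter -ManagementOS -Name "LiveMigration" -SwitchName "External Switch"

# Review the virtual network adapters that now exist in the management OS
Get-VMNetworkAdapter -ManagementOS | Format-Table Name, SwitchName, MacAddress
```

Setting -AllowManagementOS back to $false removes the shared adapter, so run these commands from a console session or over a different adapter if the host has only one network connection.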

2. Internal Virtual Networks

These are not bound to a physical NIC and so cannot access any machine outside the physical server. An internal network is visible to the Hyper-V host and the virtual machines, which means it can be used for communication between virtual machines and between virtual machines and the Hyper-V host. This can be useful if you are hosting services on the management partition, such as an iSCSI target, that you wish the virtual machines to be able to use. On both the Hyper-V host and virtual machines, a network device will be visible that represents the internal virtual network.

3. Private Virtual Networks

These are visible only on virtual machines and are used for virtual machines to communicate with each other. This type of network could be used for virtual machines that are part of a guest cluster, and the private network could be used for the cluster network, provided all nodes of the guest cluster are running on the same Hyper-V host.

Figure 3.1 The three types of virtual switches available in Hyper-V

In most cases an external switch will be used because most virtual machines require communication beyond the local Hyper-V host. Internal and private networks are used in testing and niche scenarios, such as a guest cluster that is confined to a single host. However, if you were creating a production guest cluster in virtual machines, you would most likely want them distributed over multiple Hyper-V hosts to protect against a host failure, in which case an external switch would be required.

A single physical network adapter can be bound to only a single external switch, and in production environments it would be common to use NIC teaming on the Hyper-V host. This would allow multiple network adapters to be bound together and surfaced to the operating system as a single teamed network adapter, which provides resiliency from a network adapter failure but also potentially provides aggregated bandwidth, allowing higher speed communications (there are many caveats around this, which I will cover later in this chapter when I cover NIC teaming in detail). A teamed network adapter can also be bound to an external switch with Hyper-V, giving all the virtual network adapters connected to that switch additional resiliency.
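To make the teaming scenario concrete, here is a minimal sketch that creates a team from two physical adapters and binds an external switch to it. The adapter names NIC1 and NIC2, the team name, and the chosen teaming mode and load-balancing algorithm are all assumptions for illustration; the right values depend on your hardware and physical switch configuration.

```powershell
# Assumption: the host has two physical adapters named "NIC1" and "NIC2".
# Create a switch-independent team; the team interface takes the team's name by default.
New-NetLbfoTeam -Name "VM Team" -TeamMembers "NIC1", "NIC2" `
    -TeamingMode SwitchIndependent -LoadBalancingAlgorithm HyperVPort

# Bind an external virtual switch to the teamed adapter rather than a single NIC
New-VMSwitch -Name "External Switch" -NetAdapterName "VM Team" -AllowManagementOS $false
```

Virtual machines attached to this switch keep connectivity if either physical adapter fails, because the switch is bound to the team rather than to one NIC.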

If you have different network adapters in a host and they connect to different networks (which may, for example, use VLANs to isolate traffic), then if virtual machines need access to the different networks, you would create multiple external virtual switches, with each bound to the physical network adapter connected to one of the various networks. It may seem obvious, but virtual machines can communicate only with the other services that are available on that physical network or can be routed via that network. Effectively, you are just expanding the connectivity of the physical network adapter to virtual machines via the virtual switch.

Many virtual machines can be connected to the same virtual networks, and one nice feature is that if multiple virtual machines on the same Hyper-V host are connected to the same external network and communicate over that network, the traffic never actually goes to the physical network adapter. The Hyper-V networking stack is smart enough to know that the traffic is going to another VM connected to the same switch and directly passes the traffic to the VM without ever touching the physical network adapter or physical network.

When you start creating virtual switches, it's important to use a consistent naming scheme across all of your various hosts for the switches. This is important because when a virtual machine is moved between Hyper-V hosts, it will look for a virtual switch with the same name as its existing virtual switch connection on the target host. If there is not a matching virtual switch, the virtual network adapter will become disconnected and therefore the virtual machine will lose connectivity. This is critical in failover clusters where virtual machines can freely move between nodes in the cluster, but with the Windows Server 2012 capability of moving virtual machines between any host with no shared resources and no downtime, it's important to have consistent virtual switch naming between all Hyper-V hosts. Take some time now to think about a good naming strategy and stick to it.
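One way to check naming consistency is to compare switch names across hosts. The sketch below is only an illustration: the host names are placeholders, and it assumes each host reports its name in the same form you typed it (short name versus fully qualified name), which may not hold in every environment.

```powershell
# Placeholder host names; replace with your own Hyper-V hosts
$hvHosts = "HV01", "HV02", "HV03"

# Collect the virtual switches defined on every host
$switches = Get-VMSwitch -ComputerName $hvHosts |
    Select-Object ComputerName, Name, SwitchType

# Report each switch name that is missing from at least one host
$switches | Group-Object -Property Name |
    Where-Object { $_.Count -lt $hvHosts.Count } |
    ForEach-Object {
        $present = $_.Group.ComputerName
        [pscustomobject]@{
            SwitchName  = $_.Name
            MissingFrom = ($hvHosts | Where-Object { $present -notcontains $_ }) -join ", "
        }
    }
```

An empty result suggests every switch name exists on every host listed; any rows returned identify hosts where a migrated virtual machine would lose its switch connection.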

It's also possible to create access control lists, called extended port access control lists, within the virtual switch to allow and block communication between different virtual machines connected to the switch based on IP address, protocol, and port. Additionally, stateful rules can be created to allow communication only when certain conditions are met. Microsoft has a detailed walk-through on using the ACLs at the following location:

http://technet.microsoft.com/en-us/library/dn375962.aspx
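As a brief illustration of extended port ACLs, the sketch below blocks inbound traffic to a virtual machine, allows inbound HTTP, and adds a stateful outbound rule. The VM name web01, the ports, and the weights are hypothetical values chosen for the example; rules with a higher weight take precedence over rules with a lower weight.

```powershell
# "web01" is a hypothetical VM name used for illustration.

# Deny all inbound traffic at the lowest priority
Add-VMNetworkAdapterExtendedAcl -VMName "web01" -Action Deny -Direction Inbound -Weight 1

# Allow inbound TCP port 80; the higher weight overrides the deny rule above
Add-VMNetworkAdapterExtendedAcl -VMName "web01" -Action Allow -Direction Inbound `
    -LocalPort 80 -Protocol TCP -Weight 10

# Stateful rule: allow outbound TCP 443 and the matching return traffic
Add-VMNetworkAdapterExtendedAcl -VMName "web01" -Action Allow -Direction Outbound `
    -RemotePort 443 -Protocol TCP -Weight 100 -Stateful $true

# Review the rules applied to the VM's network adapters
Get-VMNetworkAdapterExtendedAcl -VMName "web01"
```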

Creating a Virtual Switch

When the Hyper-V role is enabled on a server, an option is given to create an external switch by selecting a network adapter on the host. If this option is chosen, then a virtual switch will already be present on the host and it will be automatically configured to allow the management operating system to share the adapter, so an extra Hyper-V virtual Ethernet adapter will be present on the Hyper-V host. In general, I prefer not to create the virtual switches during Hyper-V role installation but to configure them postinstallation. Also, as you will read later, if your deployment is a production deployment and you're using System Center, then Virtual Machine Manager can do all of the switch configuration for you. I will, however, walk you through manually configuring virtual switches:

1. Launch Hyper-V Manager.

2. Select the Virtual Switch Manager action from the actions pane.

3. In the navigation pane, select New Virtual Network Switch, and in the details pane, select the type of virtual switch to create. In this case, select External and click the Create Virtual Switch button.

4. Replace the default New Virtual Switch name with a meaningful name that matches the naming standard you have selected for switches, such as External Switch. Optionally, notes can be entered.

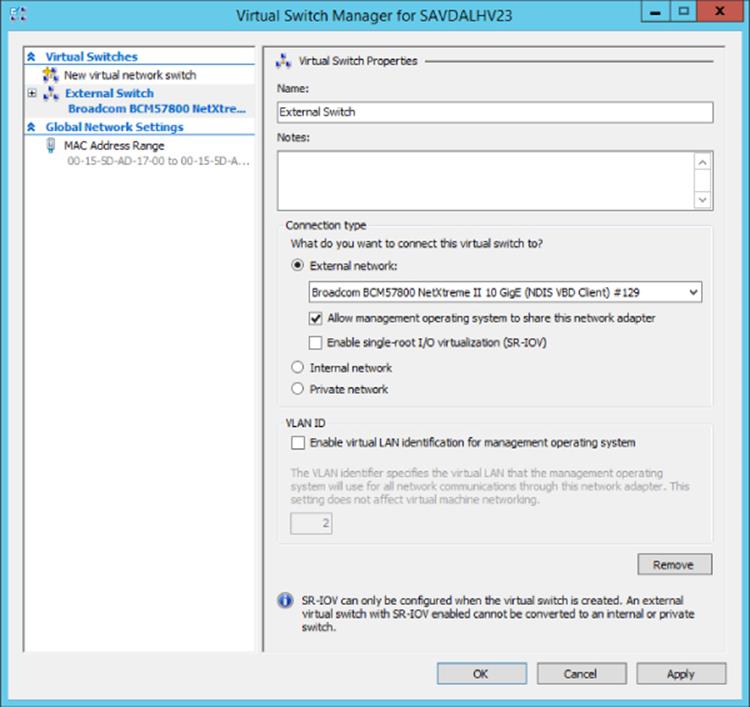

5. If the switch type is external, the specific network adapter or the NIC team that will be bound to the virtual switch must be selected from the list of available network adapters on the system, as shown in Figure 3.2. Note that the type of switch can be changed in this screen by selecting another type of network, such as internal or private. Also note that network adapters/teams bound to other switches are still listed, but the creation will fail if they are selected.

By default the “Allow management operating system to share this network adapter” option is enabled, which creates the virtual network adapter on the management partition, enabling the Hyper-V host to continue accessing the network through the new virtual switch that is bound to the network adapter. However, if you have a separate management network adapter or if you will create it manually later, then disable this option by unchecking the box. If you uncheck this box, you will receive a warning when the switch is being created that you will lose access to the host unless you have another network adapter used for management communication. The warning is shown to protect you from disabling any way to communicate with the host.

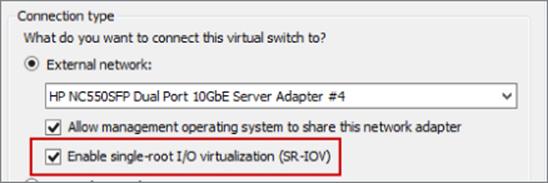

6. If you plan to use SR-IOV, check the Enable Single-Root I/O Virtualization (SR-IOV) box. This cannot be changed once the switch is created. (SR-IOV will be covered later. It's a technology found in newer, advanced networking equipment and servers that allows virtual machines to directly communicate with the networking equipment for very high-performance scenarios.)

7. If the option to allow the management operating system to use the network adapter was selected, it is possible to set the VLAN ID used by that network adapter on the host operating system through the VLAN ID option by checking Enable Virtual LAN Identification For Management Operating System and then entering the VLAN ID. Note that this does not set the VLAN ID for the switch but rather for the virtual network adapter created on the management partition.

8. Once all options are selected, click the OK button and the switch will be created (this is where the warning will be displayed if you unchecked the option to allow the management operating system to use the adapter).

Figure 3.2 Primary configuration page for a new virtual switch

Creating switches is also possible using PowerShell, and the following commands will create an external (without sharing with the management operating system), internal, and private switch and then list switches that are of type External:

#Create new external (implicit external as adapter passed)
New-VMSwitch -Name "External Switch" -Notes "External Connectivity" `
    -NetAdapterName "VM NIC" -AllowManagementOS $false

#Create new internal (visible on host) and private (vm only)
New-VMSwitch -Name "Internal Switch" -SwitchType Internal
New-VMSwitch -Name "Private Switch" -SwitchType Private

#List switches that are of type External
Get-VMSwitch -SwitchType External

Once a switch is created, it can be viewed through the Virtual Switch Manager and modification of the properties is possible. A virtual switch's type can be changed at any time unless it is an external virtual switch with SR-IOV enabled. In that case, its type cannot be changed without deleting and re-creating it. Virtual network adapters can be connected to the switch through the properties of the virtual network adapter.
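The same post-creation tasks can be sketched in PowerShell. The example below is illustrative only: the switch and adapter names are assumptions, and the SR-IOV switch will only function if the physical adapter and server support SR-IOV.

```powershell
# Create an external switch with SR-IOV enabled; this setting is fixed at creation time.
# "SRIOV NIC" is a hypothetical physical adapter name.
New-VMSwitch -Name "SRIOV Switch" -NetAdapterName "SRIOV NIC" -EnableIov $true

# Change the type of an existing switch without SR-IOV, here from internal to private
Set-VMSwitch -Name "Internal Switch" -SwitchType Private

# Connect a virtual machine's network adapter(s) to a switch
# ("test1" is an example VM name)
Connect-VMNetworkAdapter -VMName "test1" -SwitchName "External Switch"
```

If the virtual machine has more than one network adapter, add the -Name parameter to Connect-VMNetworkAdapter to target a specific adapter.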

Extensible Switch

The Hyper-V extensible switch provides a variety of capabilities that can be leveraged by the virtual network adapters that are connected to the virtual switch ports, including features such as port mirroring, protection from rogue DHCP servers and router advertisements, bandwidth management, support for VMQ, and more. However, there is still only a specific set of capabilities that cover the majority of scenarios and customer requirements; they might not cover every requirement that different clients may have. Those familiar with VMware may have heard of the Cisco Nexus 1000V, which is available for ESX and essentially replaces the VMware switching infrastructure completely. The Cisco Nexus 1000V is the only model VMware supports, and the challenge is that not many vendors have the resources available to write a complete virtual switching infrastructure. Microsoft went a different direction in Windows Server 2012.

Windows Server 2012 introduces the extensible switch for Hyper-V. With the extensible switch, it's possible for third parties to plug into the Hyper-V virtual switch at various points without having to completely replace it, thus making it far easier for organizations to bring additional value. Customers commonly wanted the ability to add functionality to the Hyper-V switch, such as enhanced packet filtering capabilities, firewall and intrusion detection at the switch level, switch forwarding, and utilities to help sniff data on the network. Consider that Windows already has a rich set of APIs and interfaces for third parties to integrate with the operating system, specifically Network Device Interface Specification (NDIS) filter drivers and Windows Filtering Platform (WFP) callout drivers. The Hyper-V extensible switch uses these exact same interfaces that partners are already utilizing, making it possible for vendors to easily adapt solutions to integrate directly into the Windows Server 2012 and above extensible switch. InMON's sFlow monitoring extension allows great trending analysis of traffic, NEC has an OpenFlow extension, and 5Nine has a complete firewall extension for the Hyper-V extensible switch.

There are four specific types of extensions for the Hyper-V switch, which are listed in Table 3.1.

Table 3.1 Types of extension for Hyper-V virtual switch

| Extension | Purpose | Potential Examples | Extensibility Component |
| --- | --- | --- | --- |
| Network packet inspection | Inspecting network packets, but not altering them | Network monitoring | NDIS filter driver |
| Network packet filter | Injecting, modifying, and dropping network packets | Security | NDIS filter driver |
| Network forwarding | Third-party forwarding that bypasses default forwarding | Virtual Ethernet Port Aggregator (VEPA) and proprietary network fabrics | NDIS filter driver |
| Firewall/Intrusion detection | Filtering and modifying TCP/IP packets, monitoring or authorizing connections, filtering IPsec-protected traffic, and filtering RPCs | Virtual firewall and connection monitoring | WFP callout driver |

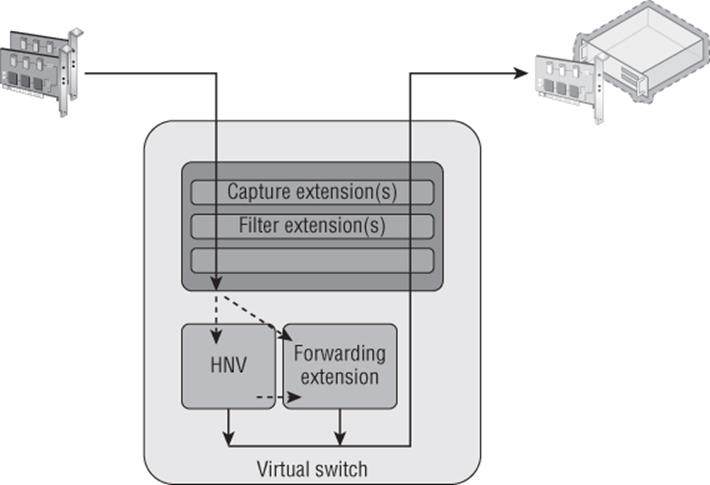

Multiple extensions can be enabled on a virtual switch, and the extensions are leveraged for both ingress (inbound) and egress (outbound) traffic. One big change from Windows Server 2012 is that in Windows Server 2012 R2, the Hyper-V Network Virtualization (HNV) module is moved into the virtual switch instead of being external to the virtual switch. This enables switch extensions to inspect both the provider and customer headers (more on this later, but for now the provider header is the packet that enables Network Virtualization to function across physical networks and the customer header is the IP traffic that virtual machines in a virtual network actually see) and therefore work with Network Virtualization. The move of the Network Virtualization module also enables third-party forwarding extensions like the Cisco Nexus 1000V to work with Network Virtualization, which wasn't the case in Windows Server 2012. And yes, Cisco has a Nexus 1000V for Hyper-V that works with the Hyper-V switch instead of completely replacing it. This is important because many organizations use Cisco networking solutions and the Nexus 1000V enables unified management of both the physical and virtual network environment through the Cisco network management toolset.

The Windows Server 2012 R2 extensible switch also supports hybrid forwarding, which allows packets to be forwarded to different forwarding agents based on the packet type. For example, suppose the Cisco Nexus 1000V extension (a forwarding agent) was installed. With hybrid forwarding, if network virtualization traffic is sent through the switch, it would first go through the HNV module and then to the forwarding agent, the Nexus 1000V. If the traffic was not network virtualization traffic, then the HNV module would be bypassed and the traffic sent straight to the Nexus 1000V.

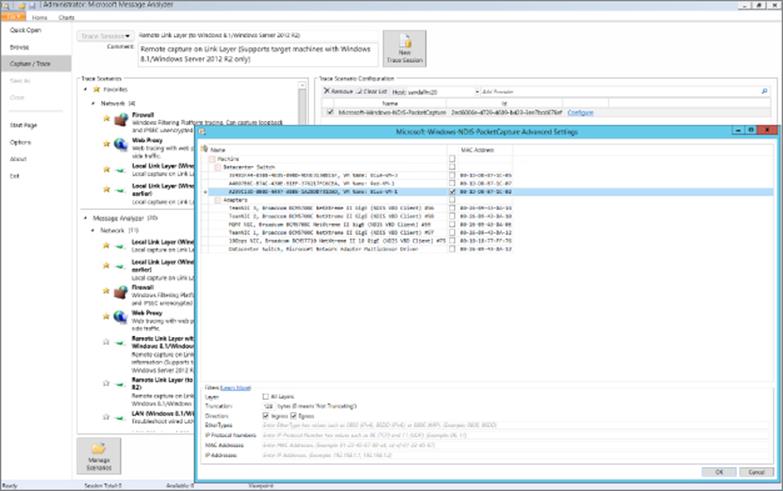

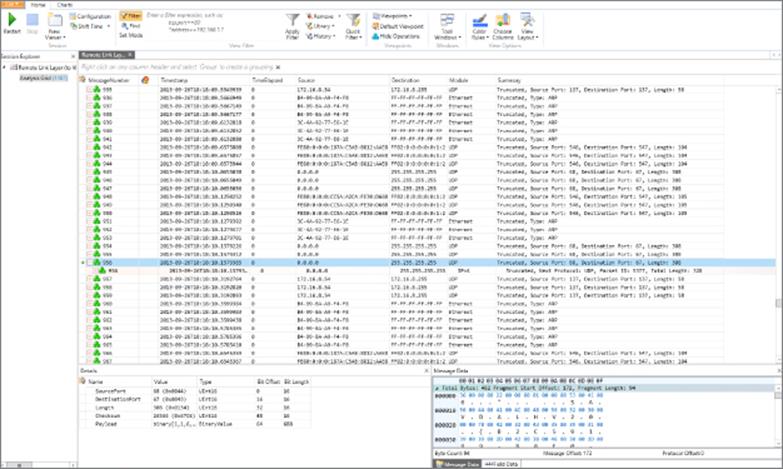

Figure 3.3 best shows the extensible switch and how traffic flows through the extensions. Notice that the traffic flows completely through all layers of the switch twice, once “inbound” into the switch (which could be from a VM or from external sources) and once “outbound” from the switch (which could be to a VM or to an external source).

Figure 3.3 How traffic flows through the extensible switch and registered extensions for the inbound path

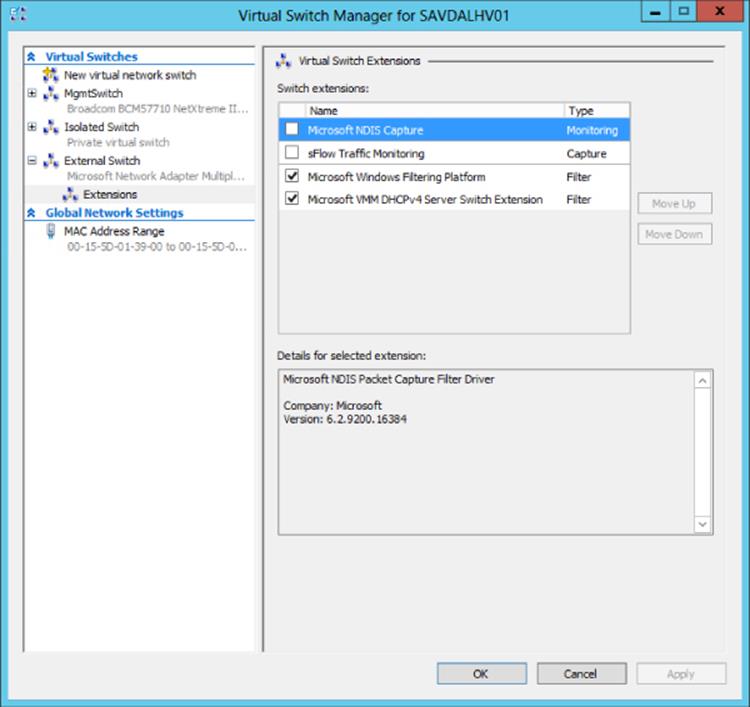

Extensions to the switch are provided by third parties, installed onto the Hyper-V server, and then enabled on a per-virtual-switch basis. The process to enable an extension is simple. Open the Virtual Switch Manager and select the virtual switch for which you want to enable extensions. Then select the Extensions child node of the virtual switch. In the extensions area of the dialog, check the box for the extension(s) you wish to enable, as shown in Figure 3.4. That's it! The extensions are now enabled. In Figure 3.4, a number of different extension types can be seen, and two are not part of standard Hyper-V: Microsoft VMM DHCPv4 Server Switch Extension and sFlow Traffic Monitoring. When enabled, the sFlow Traffic Monitoring extension sends trending information and more to the sFlowTrend tool for graphical visualization and analysis. The Microsoft VMM DHCPv4 Server Switch Extension is a filter that, when it sees DHCP traffic, intercepts the requests and utilizes IP pools within Virtual Machine Manager to service DHCP requests over the virtual switch instead of using standard DHCP services, enabling VMM to manage all IP configuration.

Figure 3.4 Enabling extensions for a virtual switch in Hyper-V
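Extensions can also be listed and enabled from PowerShell. The following is a small sketch, assuming a switch named External Switch; it enables the built-in Microsoft NDIS Capture extension as an example, and a third-party extension would be enabled the same way using the name shown in the listing.

```powershell
# List the extensions installed on the host for this switch and whether each is enabled
Get-VMSwitchExtension -VMSwitchName "External Switch" |
    Format-Table Name, Vendor, ExtensionType, Enabled

# Enable one extension on this switch only
Enable-VMSwitchExtension -VMSwitchName "External Switch" -Name "Microsoft NDIS Capture"
```

Disable-VMSwitchExtension takes the same parameters and turns the extension off again for that switch.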

VLANs and PVLANs

In most datacenters it is not uncommon to see widespread use of virtual LANs (VLANs), which allow for isolation of traffic without the need to use physical separation, such as using different switches and network adapters for the different types of isolated networks. While physical separation works, it is costly to maintain the additional physical infrastructure in terms of hardware, power, and even cooling in the datacenter. It can also be complex to manage large numbers of isolated physical network topologies.

Understanding VLANs

A VLAN is a layer 2 technology that primarily adds the ability to create partitions in the network for broadcast traffic. Normally networks are separated using devices such as routers, which control the transmission of traffic between different segments (a local area network, or LAN) of the network. However, a VLAN allows a single physical network segment to be virtually partitioned so that different VLANs cannot communicate with each other and broadcast traffic such as ARP (to resolve IP addresses to MAC addresses) would not cross VLANs. A good way to understand the broadcast-boundary nature of a VLAN is to consider 10 machines plugged into a single switch, 1 of which is a DHCP server. Typically all 9 of the other machines plugged into that switch would be able to get an IP address from the DHCP server. If VLANs were configured and the DHCP server and a few of the machines were put in a specific VLAN, then only the machines in the same VLAN as the DHCP server would be able to get an IP address from the DHCP server. All the other machines not part of that VLAN would not be able to contact the DHCP server and would require another method for IP configuration.

Additionally, through network hardware configuration it is possible for a single VLAN to actually cross different physical network segments and even locations, allowing machines that are physically distributed to act and communicate as if they were on a single physical network segment. At a high level, the VLAN creates virtual LANs that are abstracted from the physical location. For VLANs to communicate with each other, layer 3 technologies (IP) would be used for IP-level routing.

The partitioning of communication and broadcast traffic enables VLANs to provide a number of key features to an environment that make VLANs an attractive technology to implement:

· Separate broadcast domains. This seems obvious, but it can be a huge benefit for larger networks where the amount of broadcast traffic may be causing network performance issues. This also enables a single network to be divided into separate networks as required.

· Isolation between machines. VLANs enable partitions between different groups of servers, which may be required in scenarios such as different departments, Internet-facing networks, hosting providers to separate clients, and more.

· Administrative help. With VLANs, it's possible to move servers between locations but maintain their VLAN membership, avoiding reconfiguration of the host.

· A separation of physical networks from virtual networks. This enables virtual LANs to span different physical network segments.

Typically a VLAN and an IP subnet have a one-to-one mapping, although it is possible to have multiple subnets within a single VLAN. Remember, though, that a VLAN represents a broadcast boundary, which means a single subnet cannot cross VLANs because by definition, an IP subnet represents a group of machines with direct communication that rely on broadcasts for translating IP addresses to MAC addresses using ARP.

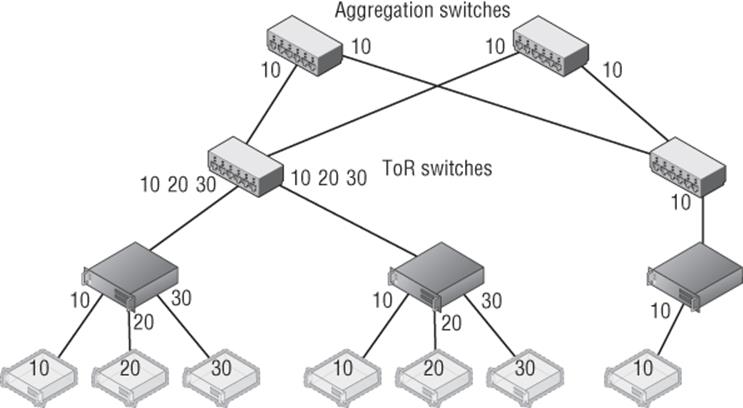

While VLANs seem like a useful technology, and they are, there are some drawbacks and complexity to their configuration. First, consider a typical datacenter network switch configuration with a number of racks of servers. There are typically two types of switches involved: servers within a rack connect to the top-of-rack (ToR) switch in that rack, and the ToR switches then connect to aggregation switches. The configuration in Figure 3.5 shows three VLANs in use by Hyper-V servers for different virtual machines, which in this example are VLANs 10, 20, and 30. Notice that machines in VLAN 10 span different racks, which requires configuration of the VLAN in not just the ToR but also aggregation switches. For VLANs 20 and 30, all the VMs are in the same rack, so while the ports from the hosts in the rack to the ToR require access for VLAN 10, 20, and 30, the aggregation switches will see only VLAN 10 traffic passed to them, which is why only VLAN 10 has to be configured on them.

Figure 3.5 Three VLANs in a two-rack configuration. For redundancy, each ToR has a connection to two separate aggregation switches.

Notice in Figure 3.5 that single ports can be configured to allow traffic from different VLANs (ports between switches are known as trunk ports because they are configured for all the VLAN traffic that has to be passed between them). However, even normal ports to a host can be configured to allow multiple VLANs, which is especially necessary with virtualization where different virtual machines on a single host may be part of different VLANs. Realize that even in this very basic configuration with only two racks, the VLAN configuration can require changes on the network infrastructure at multiple points such as the ToRs and aggregation switches.

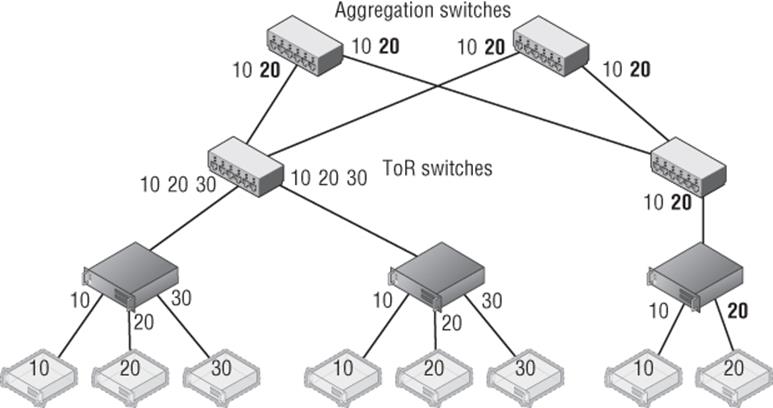

Consider now if a new virtual machine is required for VLAN 20 but there is no capacity in the first rack, which requires the virtual machine to be created in the second rack, as shown in Figure 3.6. This requires changes to the second rack ToR and both aggregation switches. Imagine there are hundreds of racks and hundreds of VLANs. This type of VLAN change can be very complex and take weeks to actually implement because all of the VLAN configuration is static and requires manual updating, which makes the actual network a bottleneck in provisioning new services. You've probably heard of some VLAN configuration problems, although you didn't know it was a VLAN configuration problem. Some of the major “outages” of Internet-facing services have been caused not by hardware failure but actually by changes to network configuration that “went wrong” and take time to fix, specifically VLANs! Suppose you wish to use Live Migration to easily move virtual machines between hosts and even racks; this adds even more complexity to the VLAN configurations to ensure that the virtual machines don't lose connectivity when migrated.

Figure 3.6 New VM in VLAN 20 added to the host in the second rack and the changes to the switch VLAN configuration required

Tagged vs. Untagged Configuration

One thing regarding VLANs confused me when I first started with network equipment (well, lots of things confused me!), and that was whether to configure ports as tagged or untagged, which are both options when configuring a port on a switch.

When a port is configured as tagged, it means that port expects the traffic to already be tagged with a VLAN ID. This means the VLAN must be configured at the host connected to the port or at a VM level running on the host. Additionally, for a tagged port it is possible to configure inclusions and exclusions for the VLAN IDs accepted on that port. For example, a port configured as tagged may be configured to allow only VLAN ID 10 through. A trunk port would be configured with all the VLAN IDs that needed to be passed between switches.

When a port is configured as untagged, it means the port does not require traffic to be tagged with a VLAN ID and will instead automatically tag traffic with the default VLAN ID configured on the port for traffic received from the host and going out to other hosts or switches. For inbound traffic to the switch going to the host, the VLAN ID is stripped out and the packet is sent to the host. On many switches, by default all ports are configured as untagged with a default VLAN ID of 1.

To summarize:

Tagged = Port expects traffic to be tagged when receiving.

Untagged = Port expects traffic to not be tagged and will apply a default VLAN ID. Any traffic that has a VLAN tag will be dropped.
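The summary above can be expressed as a small decision function. This is a simplified model for illustration, not a description of any particular switch: the function name and parameters are invented for the example, and the handling of an untagged frame arriving on a tagged port (dropped here) is an assumption, since real switches vary in that case.

```powershell
# Simplified model of how a switch port treats a frame received from a host.
function Test-SwitchPortIngress {
    param(
        [Parameter(Mandatory = $true)]
        [ValidateSet('Tagged', 'Untagged')]
        [string]$Mode,

        # VLAN IDs accepted by a tagged port
        [int[]]$AllowedVlanIds = @(),

        # VLAN ID applied by an untagged port
        [int]$DefaultVlanId = 1,

        # VLAN ID carried by the frame; leave unset ($null) for an untagged frame
        [Nullable[int]]$FrameVlanId
    )

    if ($Mode -eq 'Tagged') {
        if ($null -eq $FrameVlanId) {
            # Assumption: this model drops untagged frames on a tagged port
            return 'Dropped: untagged frame on a tagged port (assumed behavior)'
        }
        if ($AllowedVlanIds -contains $FrameVlanId) {
            return "Accepted: frame stays on VLAN $FrameVlanId"
        }
        return "Dropped: VLAN $FrameVlanId is not allowed on this port"
    }

    # Untagged port: any frame that already carries a tag is dropped
    if ($null -ne $FrameVlanId) {
        return "Dropped: tagged frame (VLAN $FrameVlanId) on an untagged port"
    }
    return "Accepted: frame tagged with default VLAN $DefaultVlanId"
}

# A tagged port that allows VLANs 10 and 20 accepts a frame tagged 10
Test-SwitchPortIngress -Mode Tagged -AllowedVlanIds 10, 20 -FrameVlanId 10

# An untagged port drops a tagged frame even when the ID matches its default
Test-SwitchPortIngress -Mode Untagged -DefaultVlanId 10 -FrameVlanId 10

# An untagged port accepts an untagged frame and applies its default VLAN ID
Test-SwitchPortIngress -Mode Untagged -DefaultVlanId 10
```

The second call is the case that matters most for virtualization hosts: a virtual machine that tags its own traffic will lose connectivity behind an untagged port.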

Another limitation with VLANs is the number of VLANs that can be supported in an environment, which is 4,094 because the VLAN ID in the header is 12 bits long (4,096 values) and 2 of those values are reserved. So 4,094 is the theoretical number, but most switches limit the number of usable VLANs to 1,000. This may still seem like a lot, but if an organization is a hoster with thousands of clients, then the 1,000 limitation, or even 4,094, would make it an unusable solution. Also remember the complexity issue. If you have 1,000 VLANs over hundreds of servers, managing them would not be a pleasant experience!

VLANs and Hyper-V

Even with the pain points of VLANs, the reality is you are probably using VLANs, will still use them for some time, and want to use them with your virtual machines. It is completely possible to have some virtual machines in one VLAN and other virtual machines in other VLANs. While there are different ways to perform configuration of VLANs, with Hyper-V there is really one supported and reliable way to use them and maintain manageability and troubleshooting ability:

· Configure the switch port that is connected to the Hyper-V host in tagged mode and configure it to have inclusions for all the VLAN IDs that will be used by VMs connected to that host. Another option is to run the port essentially in a trunk type mode and allow all VLAN IDs through the port to avoid potential configuration challenges when a new VLAN ID is used by a VM on the host. Definitely do not configure the port as untagged with any kind of default VLAN ID. I cannot stress this enough. If a switch port is configured as untagged and it receives traffic that is tagged, that traffic will be dropped even if the VLAN ID matches the VLAN the port has been configured to set via the untagged configuration.

· Do not set a VLAN ID on the physical NIC in the Hyper-V host that is used by the virtual switch that will be connected to the virtual machines.

· If you are using NIC Teaming, have only a single, default mode team interface configured on the team.

· Run all communications through the Hyper-V virtual switch and apply the VLAN ID configuration on the virtual switch ports that correspond to the virtual network adapters connected to the virtual switch.

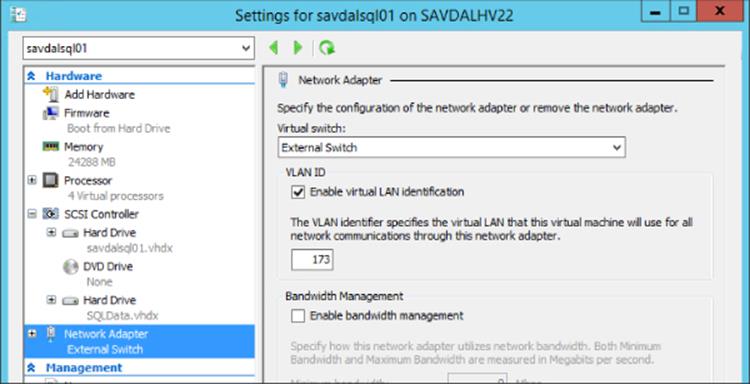

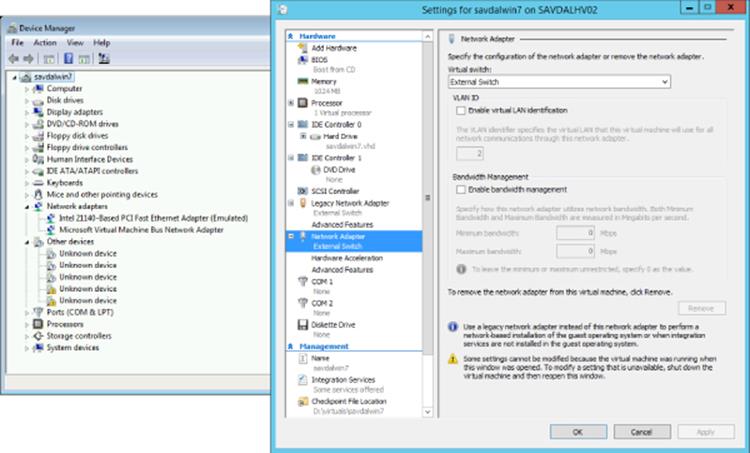

This actually makes configuring a VLAN quite simple. The only VLAN configuration performed in the Hyper-V environment is within the properties of the virtual network adapter as shown in Figure 3.7, where I set the VLAN ID for this specific network adapter for the virtual machine. The Set-VMNetworkAdapterVlan PowerShell cmdlet can also be used to set the VLAN ID for a virtual network adapter, as in the following example:

Set-VMNetworkAdapterVlan -VMName test1 -Access -VlanId 173

Figure 3.7 Setting the VLAN ID for a virtual machine's network adapter

If you refer back to Figure 3.2, there may be something that seems confusing and that is the option to configure a VLAN ID on the virtual switch itself. Does this setting then apply to every virtual machine connected to that virtual switch? No. As the explanation text in the dialog actually explains, the VLAN ID configured on the virtual switch is applied to any virtual network adapters created in the management OS for the virtual switch, which allows the management OS to continue using a physical network adapter that has been assigned to a virtual switch. The VLAN ID configured on the switch has no effect on virtual machine VLAN configuration.
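The same management OS setting can also be made from PowerShell. The following is a minimal sketch rather than a definitive procedure: it assumes the management OS has a virtual network adapter named "Management" on the virtual switch (a hypothetical name) and uses VLAN 10 purely as an example ID.

```powershell
# Assumption: the management OS virtual adapter is named "Management".
# List the management OS virtual adapters first to find the real name.
Get-VMNetworkAdapter -ManagementOS

# Tag traffic from the management OS adapter with VLAN 10 (example ID).
Set-VMNetworkAdapterVlan -ManagementOS -VMNetworkAdapterName "Management" -Access -VlanId 10

# Confirm the result. Virtual machine adapters are not changed by this setting.
Get-VMNetworkAdapterVlan -ManagementOS
```

Because the setting is scoped with -ManagementOS, the VLAN IDs assigned to virtual machine network adapters stay exactly as they were.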

Note that if you do not require different VLAN IDs within the Hyper-V environment and all virtual machines effectively will use the same VLAN ID, then no VLAN configuration is required at the Hyper-V host or virtual machine level. Simply use untagged at the switch and configure whatever VLAN ID you wish all traffic to be tagged with as the default. The previous configuration is when you need different VLAN IDs for the various virtual machines and management OS.

PVLANs

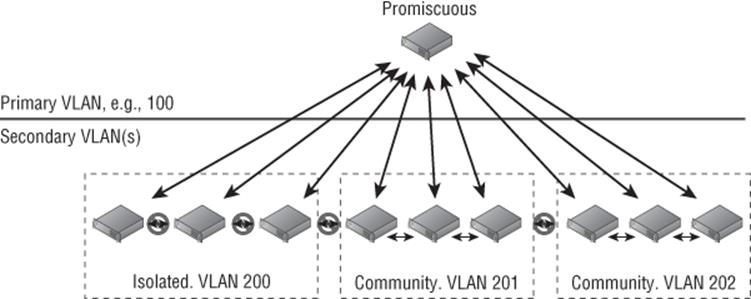

With all the scalability limitations of VLANs, you may wonder how large organizations and hosters specifically handle thousands of clients, which is where private VLANs (PVLANs) are a key feature. Through the use of only two VLAN IDs that are paired, PVLANs enable huge numbers of different environments to remain isolated from each other.

PVLANs enable three modes, as shown in Figure 3.8: isolated, community, and promiscuous. The primary mode that will be used with PVLANs is isolated; no direct communication is possible between hosts that are in isolated mode, but they can talk to their gateway and therefore out to the Internet and other promiscuous resources. This mode is useful if there are many different tenants that have only one host/VM each. Think about that large hosting company that hosts millions of VMs that don't need to communicate with each other or a hotel with 1,000 rooms. Also consider many workloads behind a load balancer that don't need to communicate with each other. Using PVLANs would stop the servers behind the load balancer from being able to communicate with each other, which would provide protection if one of them were compromised in some way, making it very useful for Internet-facing workloads. PVLANs are a great way to isolate every port from every other with only two VLANs required.

Figure 3.8 PVLAN overview and the three types

Community mode enables multiple hosts in the same community to communicate with each other. However, each community requires its own second VLAN ID to use with the shared primary VLAN ID. Finally, there are hosts in promiscuous mode that can communicate with hosts in isolated or community mode. Promiscuous PVLANs are useful for servers that are used by all hosts—perhaps they host a software share or updates that can be used by all.
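The communication rules for the three modes can be summarized in a few lines of PowerShell. This is only an illustrative model of the rules described above, not something Hyper-V provides: the function name Test-PvlanReachable and the hashtable layout are hypothetical, and a promiscuous port is assumed to include every secondary VLAN ID of the PVLAN in its list.

```powershell
# Hypothetical helper that models the PVLAN rules from the text.
# Each port is a hashtable with Mode, Primary, and Secondary keys.
function Test-PvlanReachable {
    param(
        [Parameter(Mandatory)] [hashtable] $Source,
        [Parameter(Mandatory)] [hashtable] $Destination
    )
    # Ports with different primary VLAN IDs are not in the same PVLAN.
    if ($Source.Primary -ne $Destination.Primary) { return $false }
    # A promiscuous port can talk to isolated and community ports.
    if ($Source.Mode -eq 'Promiscuous' -or $Destination.Mode -eq 'Promiscuous') { return $true }
    # An isolated port can talk only to promiscuous ports.
    if ($Source.Mode -eq 'Isolated' -or $Destination.Mode -eq 'Isolated') { return $false }
    # Two community ports must share the same secondary VLAN ID.
    return ($Source.Secondary -eq $Destination.Secondary)
}

$tenantA = @{ Mode = 'Isolated';    Primary = 100; Secondary = 200 }
$tenantB = @{ Mode = 'Isolated';    Primary = 100; Secondary = 200 }
$appTier = @{ Mode = 'Community';   Primary = 100; Secondary = 201 }
$dbTier  = @{ Mode = 'Community';   Primary = 100; Secondary = 201 }
$updates = @{ Mode = 'Promiscuous'; Primary = 100; Secondary = $null }

Test-PvlanReachable -Source $tenantA -Destination $tenantB   # False
Test-PvlanReachable -Source $appTier -Destination $dbTier    # True
Test-PvlanReachable -Source $tenantA -Destination $updates   # True
```

The two isolated tenants share both VLAN IDs yet still cannot reach each other, which is why a single primary/secondary pair is enough for any number of isolated ports.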

Hyper-V supports all three PVLAN modes, but this is not exposed through the graphical Hyper-V Manager and instead all configuration is done in PowerShell using the Set-VMNetworkAdapterVlan cmdlet. Remember that each VLAN can be used as the primary VLAN of only one isolated PVLAN, so ensure that different VLANs are used as primary for your isolated PVLANs. Note that the same secondary VLAN can be used in multiple isolated PVLANs without problem. The following configurations are some that you will perform for PVLAN using PowerShell.

To set a VM in isolated mode, use this command:

Set-VMNetworkAdapterVlan -VMName testvm -Isolated -PrimaryVlanId 100 `
    -SecondaryVlanId 200

Use this command to set a VM in community mode (note that the secondary VLAN ID sets the community the VM is part of):

Set-VMNetworkAdapterVlan -VMName testvm2 -Community -PrimaryVlanId 100 `
    -SecondaryVlanId 201

Use this command to set a VM in promiscuous mode (note that the secondary VLAN is now a list of all VLAN IDs used in community and for the isolated):

Set-VMNetworkAdapterVlan -VMName testvm3 -Promiscuous -PrimaryVlanId 100 `
    -SecondaryVlanIdList 200-400

To check the configuration of a virtual machine, use the Get-VMNetworkAdapterVlan cmdlet, as in this example:

Get-VMNetworkAdapterVlan -VMName testvm | fl *

The preceding commands assume that a virtual machine has a single network adapter, which essentially changes the configuration for the entire virtual machine. If a virtual machine has multiple network adapters and you wish to configure only one of them, pass the specific network adapter to the Set-VMNetworkAdapterVlan cmdlet. For example, the following command sets the VLAN for the virtual network adapter with a specific MAC address (remember, you can view the MAC addresses of all of a virtual machine's NICs with the command Get-VMNetworkAdapter -VMName "VMName"). The command works by listing all the adapters for the VM, narrowing the list down to the one that matches the passed MAC address, and then passing that adapter to the Set-VMNetworkAdapterVlan cmdlet:

Get-VMNetworkAdapter -VMName "VMName" | where {$_.MACAddress -like "00155DADB60A"} `
    | Set-VMNetworkAdapterVlan -Isolated -PrimaryVlanID 100 -SecondaryVlanId 200

Some configuration of PVLANs is also possible using SCVMM, but only isolated mode is supported and not promiscuous or community. If you are using SCVMM and wish to have promiscuous and community mode virtual machines, you will need to continue using PowerShell for those virtual machines. To use SCVMM for isolated mode, it's actually a fairly simple configuration:

1. Open the Virtual Machine Manager interface, open the Fabric workspace, and select Networking ⇒ Logical Networks.

2. Select the Create Logical Network action.

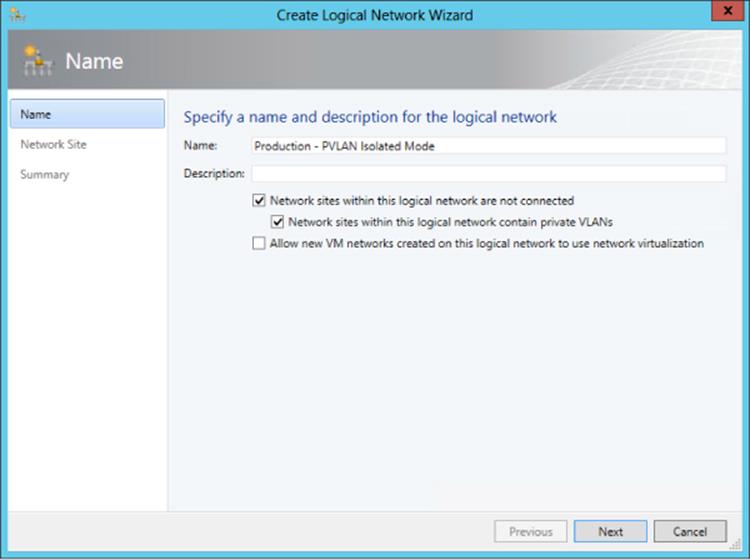

3. For VMM 2012 SP1, on the Name page of the Create Logical Network Wizard dialog, check the “Network sites within this logical network are not connected” box and then check the “Network sites within this logical network contain private VLANs” box, as shown in Figure 3.9; then click Next. For VMM 2012 R2, there is a new option specifically for PVLAN: select the Private VLAN (PVLAN) networks option and click Next.

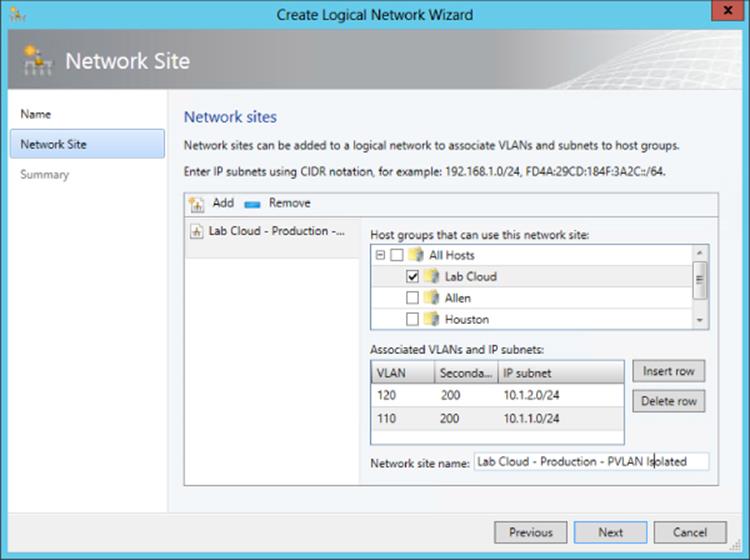

4. On the Network Site page of the wizard, add a site as usual. However, you will enter both a primary and secondary VLAN ID, as shown in Figure 3.10. Multiple rows can be added, each a separate isolated PVLAN if needed. When virtual networks are created later, each virtual network can be linked to a specific isolated PVLAN. Click Next.

5. Click Finish to create the new PVLAN isolated configuration.

Figure 3.9 Enabling a PVLAN using SCVMM on a new logical network

Figure 3.10 Using SCVMM to create multiple isolated PVLANs that use the same secondary VLAN ID

Here are the equivalent SCVMM PowerShell commands, which create the same isolated PVLAN configuration that was previously performed using the SCVMM graphical interface:

$logicalNetwork = New-SCLogicalNetwork -Name "Production - PVLAN Isolated Mode" `
    -LogicalNetworkDefinitionIsolation $true -EnableNetworkVirtualization $false `
    -UseGRE $false -IsPVLAN $true

$allHostGroups = @()
$allHostGroups += Get-SCVMHostGroup -ID "<GUID>"

$allSubnetVlan = @()
$allSubnetVlan += New-SCSubnetVLan -Subnet "10.1.2.0/24" -VLanID 120 `
    -SecondaryVLanID 200
$allSubnetVlan += New-SCSubnetVLan -Subnet "10.1.1.0/24" -VLanID 110 `
    -SecondaryVLanID 200

New-SCLogicalNetworkDefinition -Name "Lab Cloud - Production - PVLAN Isolated" `
    -LogicalNetwork $logicalNetwork -VMHostGroup $allHostGroups `
    -SubnetVLan $allSubnetVlan -RunAsynchronously

It's very important with PVLAN that all the physical switch ports are configured correctly for the VLANs used as part of the PVLAN configuration, or traffic will not flow between hosts correctly. While VLANs are used heavily in many environments, most organizations won't use PVLANs, which are aimed at specific scenarios where there is a requirement to have large numbers of hosts/virtual machines that cannot talk to each other. The good news is that all three PVLAN modes are supported with Hyper-V.

How SCVMM Simplifies Networking with Hyper-V

While SCVMM will be covered in detail later in the book, I've already mentioned its use a number of times in this chapter and I'm about to discuss it a lot more as it moves from being an optional management technology to being the only practical way to implement some technologies. I want to discuss some fundamental SCVMM logical components and how to quickly get up and running with them, including deploying some of the components we've already covered in this chapter the “SCVMM way.”

When you consider what configuration was performed with Hyper-V, it really consisted of creating a virtual switch that was tied to a physical network adapter, with the name given to the virtual switch being the only indication of what it would be used for. However, if that switch connected to an adapter that connected to a switch port that supported different VLANs for different networks, then there was no way to convey that and manage it effectively. Also, there was no concept of separating the network seen by the virtual machines from that defined on the Hyper-V server. Additionally, on each Hyper-V server the virtual switch configuration and any extensions were manually configured. Things get a lot more complicated when virtual switches are used for multiple virtual network adapters on the management operating system, as you'll see when we look at a more converged network infrastructure (and this will be covered in detail later in this chapter).

SCVMM introduces quite a few new concepts and constructs that initially may seem a little overwhelming, but they are fundamentally designed to let you model your physical networks, your switch, and your network configurations on the Hyper-V hosts and then model a separate abstracted set of definitions for networks available to virtual machines. These constructs can broadly be divided into those that model connectivity and those that model capability.

I want to build these constructs out and then walk through a configuration for a new deployment. One key point is to ideally perform all your configuration through SCVMM for your Hyper-V host. Install the Hyper-V role with no virtual switches and do nothing else. Don't create virtual switches, don't create NIC teams, don't start creating virtual machines. The best experience is to define the configuration in SCVMM and let SCVMM perform all the configuration on the hosts.

One very important point for networking, whether for physical hosts, for virtualization with Hyper-V, or using SCVMM, is proper planning and design: understanding your physical network topology and your actual requirements and then translating this to your virtual network infrastructure. This gets emphasized with SCVMM because its networking components force you to do this planning; you need to model your network within SCVMM using its various networking architectural components to achieve the desired results. The process breaks down into three steps:

1. Discovery. Understand the network requirements of your datacenter and your virtual environments. This may require asking questions of the network teams and the business units to find out what types of isolation are required, what address spaces will be used, and what types of networks exist and need to be leveraged. Do certain types of traffic require guaranteed bandwidth, which would dictate the use of separate networks or of Quality of Service (QoS) technologies?

2. Design. Take the information you have discovered and translate it to SCVMM architectural components. Consider any changes to process as part of virtual environments. This may be an iterative process because physical infrastructure such as hardware switches may limit some options and the design for the virtual network solution may need to be modified to match capabilities of physical infrastructure.

3. Deployment. Configure SCVMM with a networking design and deploy the configuration to hosts, virtual machines, and clouds.

SCVMM Networking Architecture

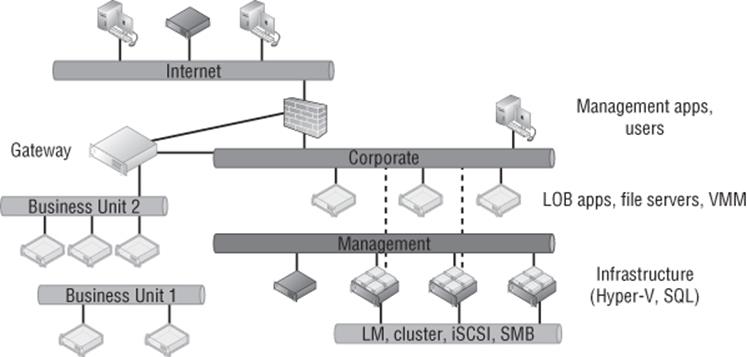

The first architectural component for SCVMM is the logical network, which helps model your physical network infrastructure and connectivity in SCVMM. Consider your virtualization environment and the networks the hosts and the virtual machines will need to connect to. In most datacenters, at a minimum you would see something like Figure 3.11.

Figure 3.11 Common networks seen in a datacenter with virtualization

In this common datacenter, the different types of networks have different connectivity, different capabilities, and different routing available. The networks may require isolation from each other using various technologies, which are explained in more detail later. Remember, these are just examples. Some datacenters will have many more. Here are the different types of networks you could have:

1. The Internet. You may have customers or users that access the network via the Internet and connect to the Internet through various routes, so systems with Internet connectivity will likely need to be modeled as a separate network.

2. Corporate. This is usually the primary network in your company where users exist and will connect to the various services offered, such as line of business (LOB) applications, file servers, domain controllers, and more. Additionally, administrators may connect to certain management systems via systems available on the corporate network, such as your VMM server. The VMM environment will need to model the corporate environment so virtual machines can be given connectivity to the corporate environment to offer services.

3. Management. Infrastructure servers typically are connected on a separate management network that is not accessible to regular users and may not even be routable from the corporate network.

4. Special Networks. Certain types of servers require their own special types of communications, such as those required for cluster communications, live migrations, iSCSI, and SMB storage traffic. These networks are rarely routable and may even be separate, isolated switches to ensure desired connectivity and low latencies or they may use separate VLANs. Some organizations also leverage a separate network for backup purposes.

5. Business Units/Tenants/Labs. Separate networks may be required to isolate different workloads, such as different business units, different tenants (if you are a hoster), and lab/test environments. Isolation can be via various means, such as VLANs, PVLANs, or network virtualization. These networks may require connectivity out to the Internet, to other physical locations (common in hoster scenarios where a client runs some services on the hoster infrastructure but needs to communicate to the client's own datacenter), or even to the corporate network, which would be via some kind of gateway device. In Figure 3.11, Business Unit 2 requires connectivity out of its isolated network, while Business Unit 1 is completely isolated with no connectivity outside of its own network.

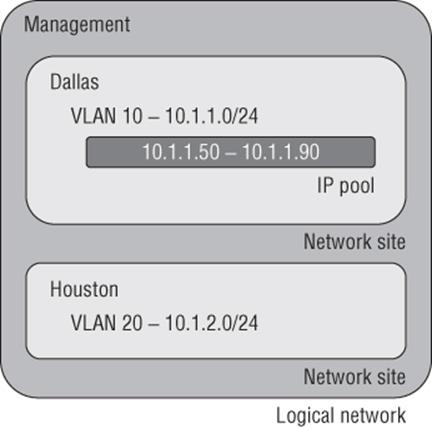

Each of these different types of networks would be modeled as logical networks in SCVMM. Additionally, an organization may have different physical locations/datacenters, and SCVMM allows you to define a logical network and include details of the sites where it exists along with the configuration required at each site, known as a network site. For example, suppose an organization has two locations, Dallas and Houston, and consider just the management network in this example. In Dallas, the management network uses the 10.1.1.0/24 subnet with VLAN 10, while in Houston, the management network uses the 10.1.2.0/24 subnet with VLAN 20. This information can be modeled in SCVMM using network sites, which are linked to a SCVMM host group and contained within a logical network. This enables SCVMM to assign not just the correct IP address to virtual machines based on location and network but also the correct VLAN/PVLAN. This is a key point. The logical network is modeling the physical network, so it's important that your objects match the physical topology, such as correct IP and VLAN configuration.

Note that a network site in a logical network does not have to reflect an actual physical location but rather a specific set of network configurations. For example, suppose I had a management network that used two physical switches and each switch used a different VLAN and IP subnet. I would create a single logical network for my management network and then a separate site for each of the different network configurations, one for each VLAN and IP subnet pair.

A network site can be configured with just an IP subnet, just a VLAN, or an IP subnet/VLAN pair. You only need to configure IP subnets for a site if SCVMM will be statically assigning IP addresses to the site. If DHCP is present, then no IP subnet configuration is required. If VLANs are not being used, a VLAN does not need to be configured. If DHCP is used in the network and VLANs are not used, you do not have to create any network sites.
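As a rough sketch of how the Dallas and Houston management sites could be modeled from the VMM command shell, the following reuses the cmdlets shown earlier for the PVLAN configuration. The logical network name, the site names, and the host group names "Dallas" and "Houston" are assumptions made for illustration; the subnets and VLAN IDs come from the example in the text.

```powershell
# Sketch only: one logical network with a network site per location.
$mgmt = New-SCLogicalNetwork -Name "Management" `
    -LogicalNetworkDefinitionIsolation $false -EnableNetworkVirtualization $false `
    -UseGRE $false -IsPVLAN $false

# Dallas site: 10.1.1.0/24 on VLAN 10 (host group name is an assumption).
$dallasGroup = Get-SCVMHostGroup -Name "Dallas"
$dallasVlan  = New-SCSubnetVLan -Subnet "10.1.1.0/24" -VLanID 10
New-SCLogicalNetworkDefinition -Name "Management - Dallas" `
    -LogicalNetwork $mgmt -VMHostGroup $dallasGroup -SubnetVLan $dallasVlan

# Houston site: 10.1.2.0/24 on VLAN 20 (host group name is an assumption).
$houstonGroup = Get-SCVMHostGroup -Name "Houston"
$houstonVlan  = New-SCSubnetVLan -Subnet "10.1.2.0/24" -VLanID 20
New-SCLogicalNetworkDefinition -Name "Management - Houston" `
    -LogicalNetwork $mgmt -VMHostGroup $houstonGroup -SubnetVLan $houstonVlan
```

Scoping each site to its own host group is what lets SCVMM pick the right subnet and VLAN from the location of the host a virtual machine is deployed to.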

Once the sites are defined within a logical network, IP pools can then be added to the IP address subnet that's defined, which enables SCVMM to actually configure virtual machines with static IP addresses as the virtual machines are deployed. If DHCP is used in the network, there is no need to configure IP pools in SCVMM or even specify the IP subnet as part of the site configuration. DHCP would be leveraged for the IP assignment, but if you don't have DHCP, then creating the IP pool allows SCVMM to handle the IP assignment for you. The IP assignment is achieved by modifying the sysprep answer file with the IP address from the SCVMM IP pool as the virtual machine template is deployed. When the virtual machine is deleted, SCVMM reclaims the IP address into its pool. Even if DHCP is primarily used in the network, if you are using features such as load balancing as part of a service, then SCVMM has to be able to allocate and track that IP address, which will require the configuration of an IP pool. If no IP pool is created for a network site, SCVMM configures any virtual machines to use DHCP for address allocation. Both IPv4 and IPv6 are fully supported by SCVMM (and pretty much any Microsoft technology because a Common Engineering Criteria requirement for all Microsoft solutions is support for IPv6 at the same level as IPv4).

At a high level, this means the logical network models your physical network and allows the subnet and VLANs to be modeled into objects and then scoped to specific sites, which can also include static IP address pools for allocation to resources such as virtual machines and load balancer configurations. This is shown in Figure 3.12, with a management logical network that has two network sites, Dallas and Houston, along with the IP subnet and VLAN used at each location. For Dallas, an IP pool was also created for the network site to enable static IP configuration. Houston would use DHCP because no IP pool was created for the Houston network site within the logical network.

Figure 3.12 High-level view of logical networks
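A static IP pool for the Dallas site might be created along the following lines. Treat this as a sketch under stated assumptions: it presumes a network site named "Management - Dallas" already exists, and the address range, gateway, and DNS server values are invented for the example.

```powershell
# Assumption: a network site with this name already exists in VMM.
$dallasSite = Get-SCLogicalNetworkDefinition -Name "Management - Dallas"

# Example values only: gateway, DNS server, and range are hypothetical.
$gateway = New-SCDefaultGateway -IPAddress "10.1.1.1" -Automatic

New-SCStaticIPAddressPool -Name "Management - Dallas Pool" `
    -LogicalNetworkDefinition $dallasSite -Subnet "10.1.1.0/24" `
    -IPAddressRangeStart "10.1.1.50" -IPAddressRangeEnd "10.1.1.99" `
    -DefaultGateway $gateway -DNSServer "10.1.1.10"
```

No pool is created for Houston in this example, so virtual machines deployed there would be configured for DHCP.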

When planning your logical networks, try to stay as simple as possible. There should not be hundreds of logical networks. There should be fewer that contain different network sites that reflect the different network configurations within the type of network that is represented by the logical network. Microsoft has a good blog on designing logical networks at

http://blogs.technet.com/b/scvmm/archive/2013/04/29/logical-networks-part-ii-how-many-logical-networks-do-you-really-need.aspx

The information can really be summarized as follows:

1. Create logical networks to mirror the physical networks that exist.

2. Create logical networks to define the networks that have specific purposes.

3. Identify logical networks that need to be isolated and identify the isolation method.

4. Determine required network sites, VLANs, PVLANs, and IP pools required for each logical network and deploy them.

5. Associate logical networks to host computers.

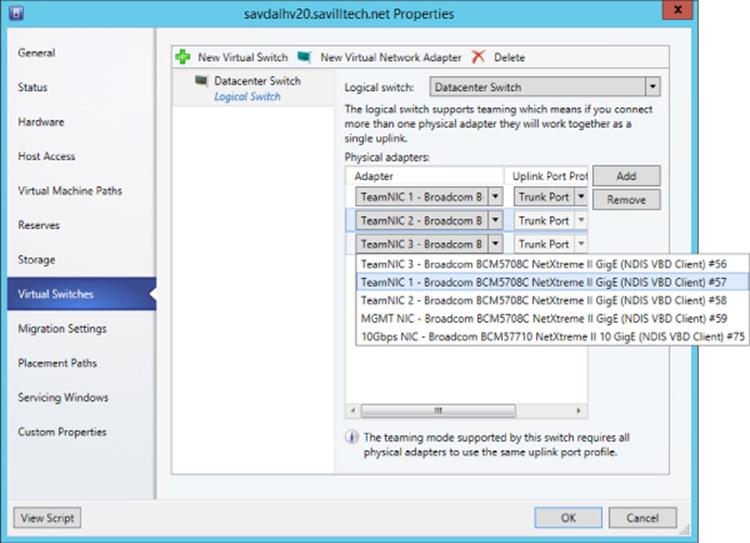

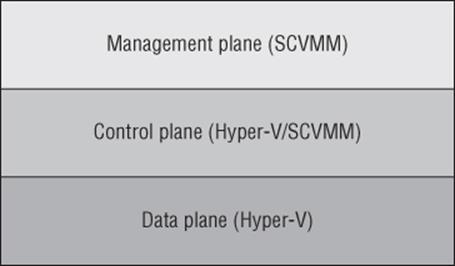

Logical Switch

Earlier in this chapter, we created a virtual switch, and as part of that configuration there were options available and also the ability to enable certain extensions. While it is possible to perform a configuration on a server-by-server basis manually, this can lead to inconsistencies and inhibits automatic deployment of new Hyper-V hosts. SCVMM has the logical switch component, which acts as the container for all virtual switch settings and ensures a consistent deployment across all servers using the logical switch. The automatic configuration using the logical switch is not only useful at deployment, but SCVMM will continue to track the configuration of the host compared to the logical switch, and if the configuration deviates from that of the logical switch, this deviation will be flagged as noncompliant, and that can then be resolved. This may be important in terms of ensuring compliance enforcement in an environment. If the logical switch is updated (for example, a new extension is added), all the Hyper-V hosts using it will automatically be updated.

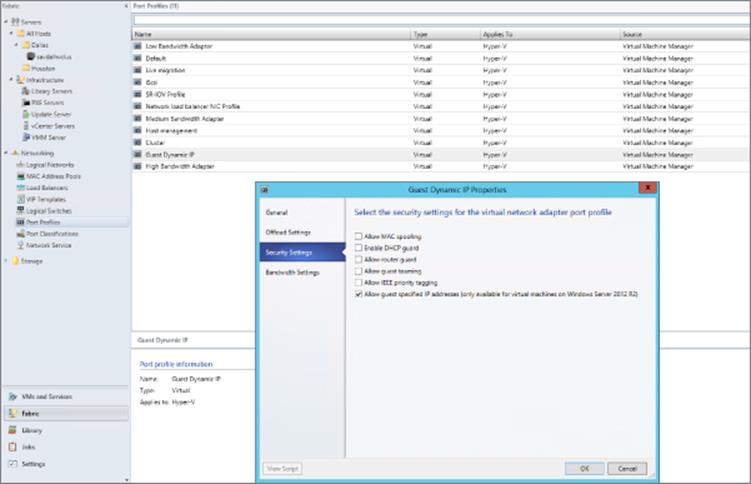

Logical switches use port profiles, which are another SCVMM architectural construct of which there are two types: virtual port profiles and uplink port profiles.

The virtual port profile enables settings to be configured that will be applied to actual virtual network adapters attached to virtual machines or created on the management host OS itself. This can include offload settings such as the settings for VMQ, IPsec task offloading, and SR-IOV and security settings such as those for DHCP Guard. It can also include configurations that may not be considered security related, such as guest teaming and QoS settings such as minimum and maximum bandwidth settings. A number of built-in virtual port profiles are provided in SCVMM for common network adapter uses, many of which are actually aimed at virtual network adapters used in the host OS. Figure 3.13 shows the inbox virtual port profiles in addition to the Security Settings page. Once a virtual port profile is used within a logical switch and the logical switch is deployed to a host, if the virtual port profile configuration is changed, the hosts will be flagged as noncompliant because their configuration no longer matches that of the virtual port profile. The administrator can easily remediate the servers to apply the updated configuration.

Figure 3.13 Viewing the security settings for the built-in Guest Dynamic IP virtual port profile
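Several of the security settings in a virtual port profile correspond to per-adapter settings that can be inspected or set directly with the Hyper-V cmdlets. The sketch below shows those underlying settings on a single virtual machine; it is not how SCVMM applies a port profile, and the VM name test1 is simply reused from the earlier example.

```powershell
# Assumption: a VM named test1 with a single virtual network adapter.
Set-VMNetworkAdapter -VMName test1 `
    -DhcpGuard On `
    -RouterGuard On `
    -MacAddressSpoofing Off `
    -AllowTeaming Off

# Review the resulting settings on the adapter.
Get-VMNetworkAdapter -VMName test1 |
    Format-List Name, DhcpGuard, RouterGuard, MacAddressSpoofing, AllowTeaming
```

Setting these values host by host is the manual approach that a virtual port profile is designed to replace.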

An uplink port profile defines the connectivity of the virtual switch to logical networks. You need separate uplink port profiles for each set of hosts that require the same physical connectivity (remember that logical networks define the physical network). Conversely, anytime you need to restrict logical networks to specific hosts in the same location or need custom connectivity, you will require different uplink port profiles. Within the uplink port profile, you select the logical networks that will be available and also the NIC teaming configuration to use on hosts that assign multiple network adapters to the switch. No inbox uplink port profiles are supplied because their primary purpose is to model the logical networks that can be connected to, and by default there are no logical networks. If a change is made to the uplink port profile definition (for example, adding a new VLAN that is available), SCVMM will automatically update all the virtual switches on the Hyper-V hosts that use the uplink port profile via a logical switch with the new VLAN availability or any other settings within the uplink port profile.
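An uplink port profile can also be created from the VMM command shell. The following is a hedged sketch: the profile name and the network site name "Management - Dallas" are hypothetical, and the teaming values are just one reasonable choice, so check Get-Help New-SCNativeUplinkPortProfile for the values valid in your VMM version.

```powershell
# Assumption: a network site named "Management - Dallas" already exists.
$sites = @()
$sites += Get-SCLogicalNetworkDefinition -Name "Management - Dallas"

# The network sites listed here are the ones hosts using this profile can reach.
New-SCNativeUplinkPortProfile -Name "Dallas Uplink" `
    -Description "Uplink for Dallas hosts (example)" `
    -LogicalNetworkDefinition $sites -EnableNetworkVirtualization $false `
    -LBFOLoadBalancingAlgorithm "HostDefault" -LBFOTeamMode "SwitchIndependent"
```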

When you put all these components together, it does require some additional upfront work, but the long-term deployment and manageability of the environment becomes much simpler and can help identify misconfigurations or where there are actual problems in network connectivity.

The logical switch is a Live Migration boundary for SCVMM's placement logic. Note that a logical switch can be deployed to many hosts, it can stretch clusters, and so on. However, SCVMM needs to ensure that the same capabilities and connectivity are available when virtual machines are moved between hosts, and so the SCVMM placement logic will not allow live migration to hosts using a different logical switch. If you had a scenario where you required different logical switches in the environment (for example, if you required different extension configurations), then a live migration would not be possible and may be a reason for those hosts to not use the logical switch and instead perform the switch configuration directly on the Hyper-V hosts; this type of switch is known as a standard switch. Standard switches are fully supported within SCVMM, and their deployment and configuration will be via Hyper-V Manager or with SCVMM. If you have an existing Hyper-V server with virtual switches defined that will be standard switches in SCVMM, there is no way to convert them to logical switches. The best option is to delete the standard switches and then re-create the switches as logical switches via SCVMM. To delete the standard switches, you would need to evacuate the host of virtual machines, which typically means you have a cluster. However, with Windows Server 2012, you can also move virtual machines with no downtime using Shared Nothing Live Migration between any Hyper-V hosts provided they have a 1 Gbps network connection.
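For a stand-alone host, evacuating virtual machines before removing the standard switches could look something like the following. It is a sketch that assumes live migration is already enabled and authorized on both hosts; the VM name, destination host name HV02, and storage path are hypothetical.

```powershell
# List the existing (standard) virtual switches on the host to be converted.
Get-VMSwitch | Format-Table Name, SwitchType, NetAdapterInterfaceDescription

# Shared Nothing Live Migration: move the VM and its storage to another host.
# Assumption: HV02 and the destination path exist and accept live migrations.
Move-VM -Name test1 -DestinationHost HV02 `
    -IncludeStorage -DestinationStoragePath "D:\VMs\test1"
```

Once the host is empty, the standard switches can be deleted and re-created as logical switches through SCVMM.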

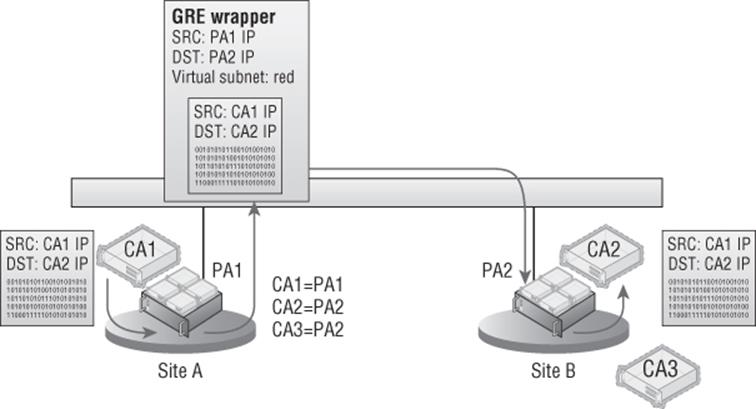

VM Networks

While the logical network provides the modeling of the networks available in the environment and the desired isolation, the goal for virtualization is to separate and abstract these logical networks from the actual virtual machines. This abstraction is achieved through the use of VM networks, which is another networking architectural component in SCVMM. Through the use of VM networks, the virtual machines have no idea of the underlying technology used by the logical networks, for example, if VLANs are used on the network fabric. Virtual machine virtual network adapters can only be connected to a VM network. When Network Virtualization is used, the Customer Address (CA) space is defined as part of the VM network, allowing specific VM subnets to be created as needed within the VM network.

There may be some scenarios where the isolation provided by VM networks is not actually required—for example, where direct access to the infrastructure is required, such as if your SCVMM server is actually running in a virtual machine, or where the network is used for cluster communications. It is actually possible to create a no isolation pass-through VM network that directly passes communication through to the logical network. The VM network is present only because a virtual machine network adapter needs to connect to a VM network. If a logical network has multiple sites defined, then when a virtual machine is deployed, it will automatically pick the correct IP subnet and VLAN configuration at deployment time based on the location to which it's being deployed. Users of self-service-type portals are exposed to VM networks but not the details of the underlying logical networks.
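A no isolation pass-through VM network could be created from the VMM command shell roughly as follows. This is a sketch that assumes a logical network named "Management" already exists; the VM network name is likewise made up for the example.

```powershell
# Assumption: a logical network named "Management" already exists.
$logicalNetwork = Get-SCLogicalNetwork -Name "Management"

# NoIsolation passes traffic straight through to the logical network.
New-SCVMNetwork -Name "Management VM Network" `
    -LogicalNetwork $logicalNetwork -IsolationType "NoIsolation"
```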

Port Classifications

Port classifications, which are containers for port profile settings, are assigned to virtual machines. The benefit of the port classification is that it acts as a layer of abstraction from the port profiles assigned to logical switches, which allows a port classification to be assigned to a virtual machine template. The actual port profile used depends on the logical switch the VM is using when deployed. Think of port classifications as being similar to storage classifications; you may create a gold storage classification that uses a top-of-the-line SAN and a bronze storage classification that uses a much lower tier of storage. In the same way, I may create a port classification of High Bandwidth and one of Low Bandwidth. A number of port classifications are included in-box that correlate to the included virtual port profiles. Port classifications are linked to virtual port profiles as part of the logical switch creation process. Like VM networks, port classifications are exposed to users via self-service portals and not the underlying port profiles.
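The High Bandwidth and Low Bandwidth classifications mentioned above could be created with a couple of commands. This is only a sketch: the descriptions are invented, and the classifications do nothing until they are linked to virtual port profiles in a logical switch.

```powershell
# Create two example classifications (names from the text, descriptions assumed).
New-SCPortClassification -Name "High Bandwidth" `
    -Description "Adapters that need the most bandwidth (example)"
New-SCPortClassification -Name "Low Bandwidth" `
    -Description "Adapters with modest bandwidth needs (example)"

# List all classifications, including the in-box ones.
Get-SCPortClassification | Format-Table Name, Description
```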

Microsoft Resource

Microsoft has a great poster available that details all the key networking constructs available. If possible, download this poster, get it printed, and put it up on your wall, or if you have a large monitor, set it as your background. The poster can be downloaded from

www.microsoft.com/en-us/download/details.aspx?id=37137

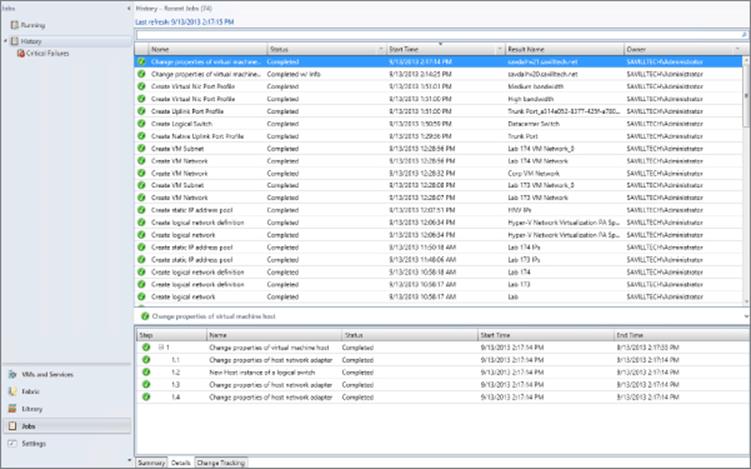

Deploying Networking with SCVMM 2012 R2

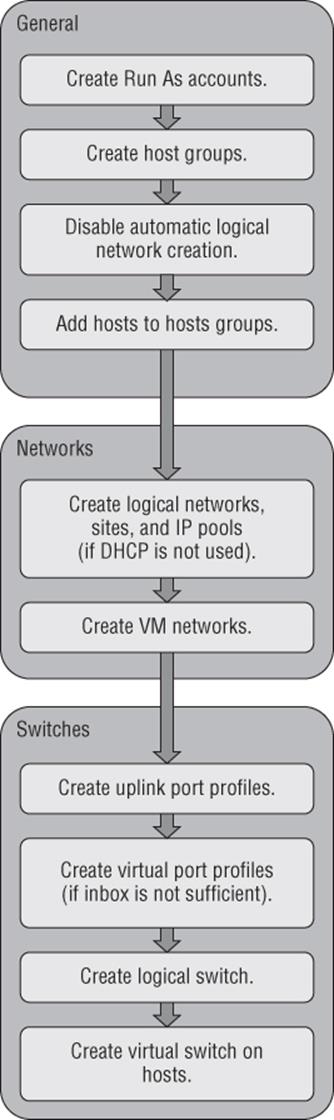

For this part of the chapter, I will assume SCVMM 2012 R2 is up and running in your environment. I cover implementing SCVMM 2012 R2 in Chapter 6, “Maintaining Your Hyper-V Environment,” so if you want to follow along, you may want to jump to Chapter 6 to get a basic deployment in place. The good news is that networking is one of the first components that needs to be configured with SCVMM, so once you have SCVMM deployed and you have created some host groups (which are collections of hosts), you will be ready to follow this next set of steps. Figure 3.14 gives a high-level view of the steps that will be performed.

Figure 3.14 The steps for SCVMM network configuration

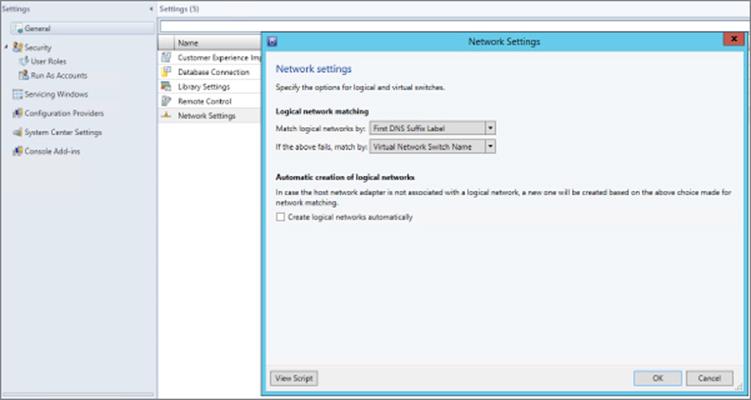

Disable Automatic Logical Network Creation

The very first action related to networking in SCVMM 2012 R2 is to disable the automatic creation of logical networks. It may seem strange that our first configuration is to disable functionality, but it will help ensure your SCVMM modeling consistency. With automatic logical network creation enabled, when a Hyper-V host that already has a virtual switch defined is added to SCVMM, a logical network will automatically be created in SCVMM if SCVMM does not find a match for an existing logical network based on the first DNS suffix label for the network adapter network (which is the default behavior). For example, if the DNS suffix for a network connection was lab.savilltech.net, then a logical network named lab would be used, and if not found, would automatically be created. This automatic creation of logical networks may be fine in a test environment, but in production, where you have done detailed planning for your logical networks and deployed accordingly, it is very unlikely that the automatic creation of logical networks based on DNS suffix labels would be desirable. Therefore, disable this automatic logical network creation as follows:

1. Open Virtual Machine Manager.

2. Open the Settings workspace.

3. Select the General navigation node.

4. Double-click Network Settings in the details pane.

5. In the Network Settings dialog, uncheck the Create Logical Networks Automatically option as shown in Figure 3.15 and click OK. Notice also in this dialog that it is possible to change the logical network matching behavior to a scheme that may better suit your naming conventions and design.

Figure 3.15 Disabling the automatic creation of logical networks in SCVMM 2012 R2
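The same setting can be changed from the VMM command shell. The sketch below assumes the shell is run on the VMM server itself, and the parameter name is given as I recall it from the script the console generates, so confirm it with Get-Help Set-SCVMMServer before relying on it.

```powershell
# Assumption: running on the VMM server; otherwise pass its computer name.
$vmmServer = Get-SCVMMServer -ComputerName "localhost"

# Turn off automatic logical network creation for newly added hosts.
Set-SCVMMServer -VMMServer $vmmServer -AutomaticLogicalNetworkCreationEnabled $false
```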

Those of you who used SCVMM 2012 SP1 will notice that the option to also automatically create virtual switches (VM networks) has been removed. The automatic creation of virtual switches, which virtual machines use to connect, actually caused a lot of confusion, so it was removed in R2. At this point you can safely add Hyper-V hosts to the SCVMM environment without them automatically creating logical networks you don't want in the environment.

Creating Logical Networks

In this environment I have three networks available that I will model as logical networks. However, they are all separate VLANs on the same physical network that will be controlled by setting the VLAN ID on the virtual network adapter. The physical ports on the switch have been configured to allow all the various VLANs that can be configured (similar to a trunk port):

· Corporate network. The main address space used by my organization, which on my switches uses VLAN 10 in all locations.

· Lab network. The network used for a number of separate lab environments that each have their own IP subnet and VLAN.

· Network virtualization network. Will be used in the future when network virtualization is explored.

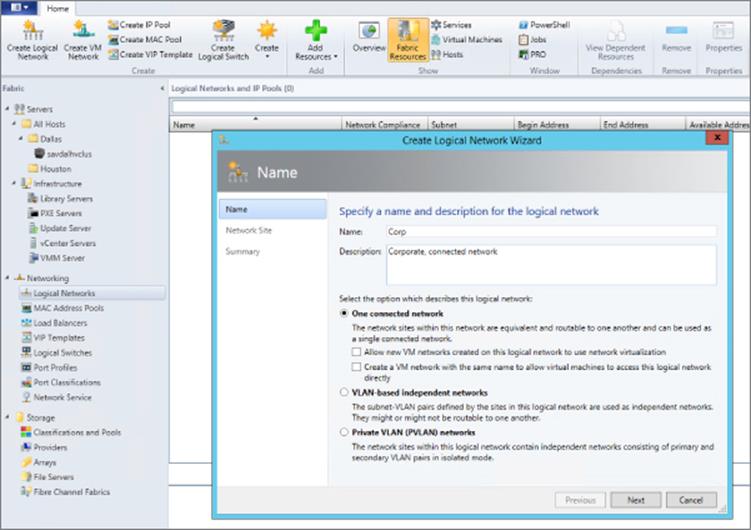

The steps to create a logical network are detailed here:

1. Open Virtual Machine Manager.

2. Open the Fabric workspace.

3. Select the Networking ⇒ Logical Networks navigation node.

4. Click the Create Logical Network button, which launches the Create Logical Network Wizard.

5. As shown in Figure 3.16, a name and description for the logical network are entered along with the type of network. It can be a connected network that allows multiple sites that can communicate with each other and use network virtualization, a VLAN-based independent network, or a PVLAN-based network. Note that when you are creating a network with the One Connected Network option, the option to automatically create a VM network to map to the logical network is available, but in this example I will not use that, so we can manually create it. Because this is the corporate network, I do not intend to use network virtualization. Click Next.

6. The next screen allows configuration of the sites. For corporate, I only need a single site using VLAN 10 because the switch is configured to allow VLAN 10 through to the corporate network. Click the Add button to add a site and then click Insert Row to add VLAN/IP details for the site. The actual IP space is all configured by corporate DHCP servers in this example, so I will actually leave the IP subnet blank, which tells SCVMM to just configure the VM for DHCP.

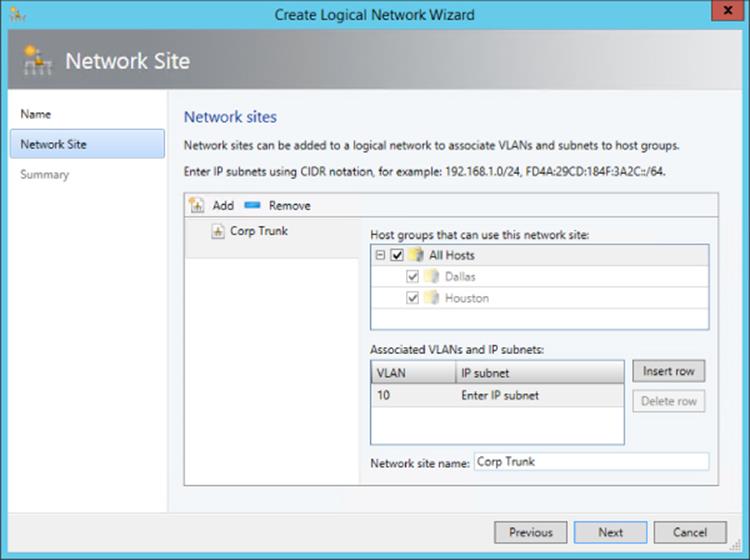

If the network does not use VLANs, then set the VLAN ID to 0, which tells SCVMM that VLANs are not to be configured. By default, sites are given the name <Logical Network>_<number>, but you should rename this to something more useful. For example, as shown in Figure 3.17, I am renaming it Corp Trunk.

For each site, select the host group that contains hosts in that site. Because this can be used in all locations, I select the All Hosts group. Click Next.

7. The Summary screen will be displayed. It includes a View Script button that when clicked will show the PowerShell code that can be used to automate the creation. This can be useful when you are creating large numbers of logical networks, or more likely, large numbers of sites. Click Finish to create the logical network.

Figure 3.16 Creating a logical network that represents a connected collection of sites

Figure 3.17 Adding a single site to a logical network

Here is the PowerShell code used to create my corporate logical network:

$logicalNetwork = New-SCLogicalNetwork -Name "Corp" `
    -LogicalNetworkDefinitionIsolation $false -EnableNetworkVirtualization $false `
    -UseGRE $false -IsPVLAN $false -Description "Corporate, connected network"

$allHostGroups = @()
$allHostGroups += Get-SCVMHostGroup -ID "0e3ba228-a059-46be-aa41-2f5cf0f4b96e"

$allSubnetVlan = @()
$allSubnetVlan += New-SCSubnetVLan -VLanID 10

New-SCLogicalNetworkDefinition -Name "Corp Trunk" `
    -LogicalNetwork $logicalNetwork -VMHostGroup $allHostGroups `
    -SubnetVLan $allSubnetVlan -RunAsynchronously

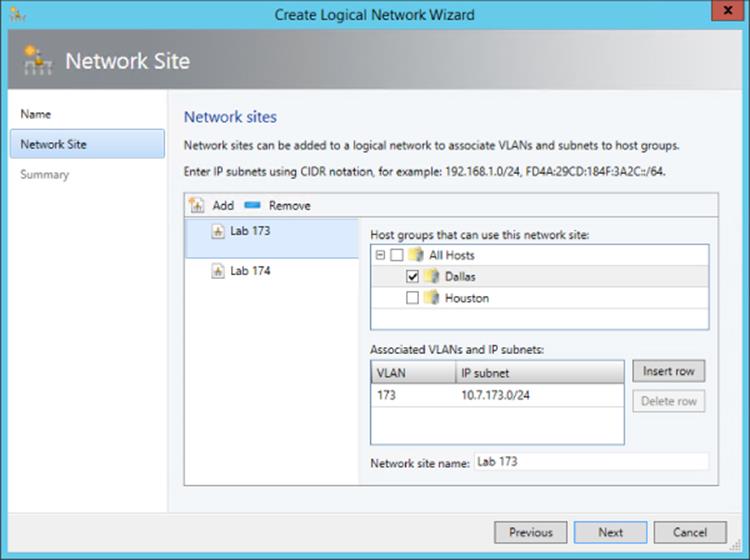

The next network I would create would be my set of lab networks. In this case I will select the VLAN-based independent networks type, and I will create a separate site for each of the VLAN/IP subnet pairs, which represent separate lab environments, as shown in Figure 3.18. I'm creating only two of the VLANs in this example because performing this using the graphical tools is actually very slow. My lab environments are all based in Dallas, so only the Dallas host group is selected. Because the sites in this logical network have IP subnets defined, I would also create an IP pool for each site, as in the next set of steps. You will notice most of these settings are similar to those configured for a DHCP scope because essentially SCVMM is performing a similar role; it just uses a different mechanism to assign the actual IP address. All of the details are those that will be configured on the virtual machines that get IP addresses from the IP pool.

1. Click the Create IP Pool button or right-click on the logical network and select the Create IP Pool context menu action.

2. Enter a name and description and select the logical network the IP pool is for from the drop-down list.

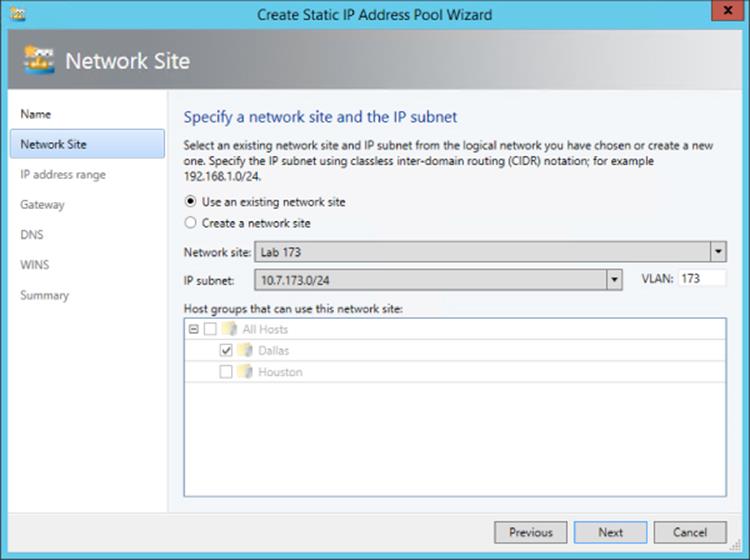

3. The next screen, as shown in Figure 3.19, allows you to use an existing network site or create a new one. Choose to use an existing one and then click Next.

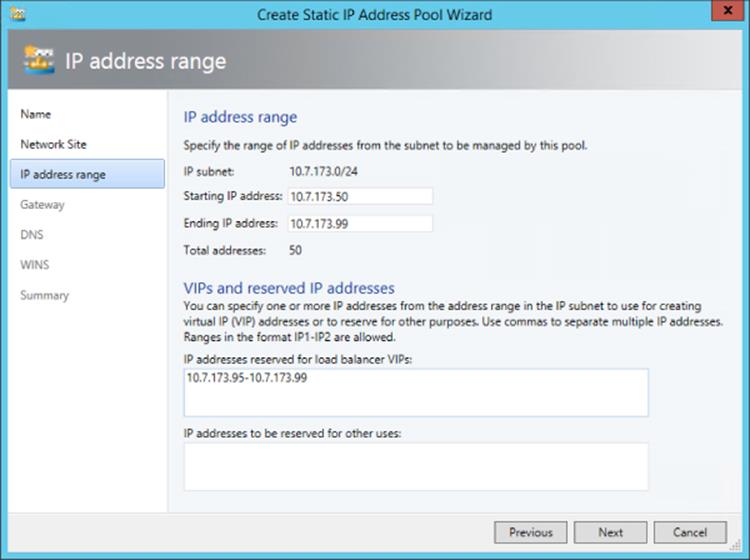

4. The IP Address Range page allows configuration of the IP address range that SCVMM will manage and allocate to resources such as virtual machines and load balancers. Within the range, specific addresses can be configured as reserved for other purposes or for use by load balancer virtual IPs (VIPs) that SCVMM can allocate. In Figure 3.20, you can see that I have reserved 5 IP addresses from the range for use by load balancer VIPs. Fill in the fields and click Next.

5. Click the Insert button and enter the gateway IP address. Then click Next.

6. Configure the DNS servers, DNS suffix, and additional DNS suffixes to append and then click Next.

7. Enter the WINS server details if used and click Next.

8. On the Summary screen, confirm the configuration, click the View Script button to see the PowerShell that will be used, and then click Finish to create the IP pool.

Figure 3.18 Creating a VLAN-based logical network

Figure 3.19 Choose the site for a new IP pool or create a new one.

Figure 3.20 Configuring the IP address range for the IP pool
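The IP pool steps above can be sketched in PowerShell as follows. This is an illustration under stated assumptions, not the script the wizard generates: every address, the pool name, and the site name VLAN_150 are hypothetical, and the VMM calls are guarded so the settings table can be inspected on any machine. The -VIPAddressSet range reserves five addresses at the top of the pool for load balancer VIPs, mirroring the reservation described in step 4.

```powershell
# All names and addresses below are hypothetical; substitute your own site's values.
$poolSettings = @{
    Name                = 'VLAN_150_IP_Pool'
    Subnet              = '10.1.150.0/24'
    IPAddressRangeStart = '10.1.150.50'
    IPAddressRangeEnd   = '10.1.150.100'
    # Five addresses (.96 through .100) kept back for load balancer VIPs.
    VIPAddressSet       = '10.1.150.96-10.1.150.100'
    DNSServer           = @('10.1.150.10', '10.1.150.11')
}

# Only talk to VMM where the cmdlets are available.
if (Get-Command New-SCStaticIPAddressPool -ErrorAction SilentlyContinue) {
    $site    = Get-SCLogicalNetworkDefinition -Name 'VLAN_150'
    $gateway = New-SCDefaultGateway -IPAddress '10.1.150.1' -Automatic
    New-SCStaticIPAddressPool @poolSettings `
        -LogicalNetworkDefinition $site -DefaultGateway $gateway
}
```

Keeping the values in a hashtable and splatting them makes it easy to review the pool definition before anything is created, and to compare it against what View Script shows for a pool built in the console.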

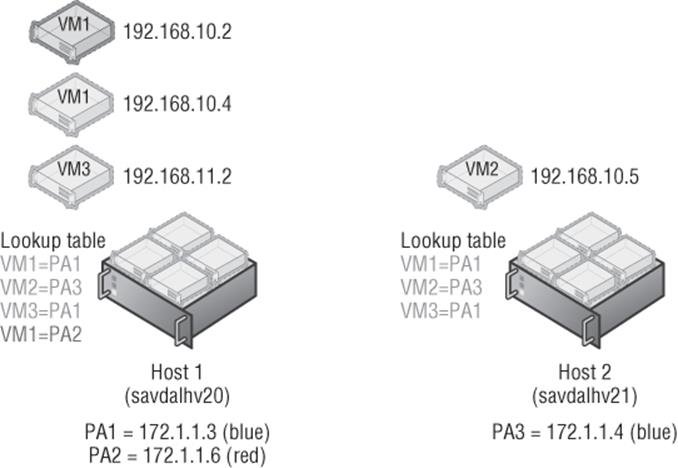

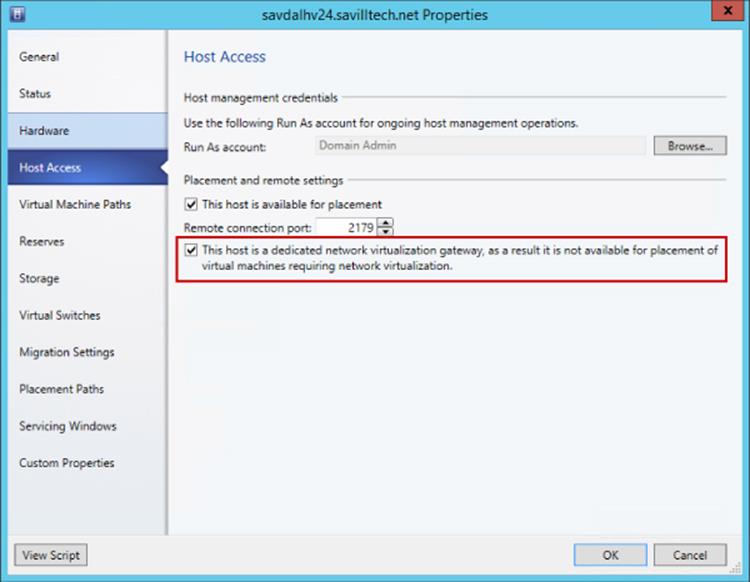

Finally, I will create my Hyper-V network virtualization logical network, which will support network virtualization and be configured with an IP pool that will be used for the provider space for the Hyper-V hosts. This follows the same process as the other networks, except this time I will select the One Connected Network option and the option “Allow new VM networks created on this logical network to use network virtualization.” A network site is created with an IP subnet (this must be set) and, if needed, a VLAN. The site is used purely so that the Hyper-V hosts running virtual machines that participate in network virtualization can be allocated their provider address (PA). An IP pool must also be created for the site to allocate the PAs. No DNS servers are required for the PA network, but if you are using multiple subnets, then a gateway would need to be defined.
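A rough PowerShell equivalent of this network virtualization logical network is sketched below. The names, the 10.10.0.0/24 provider subnet, and the address range are hypothetical placeholders, and the flag values (-EnableNetworkVirtualization $true with -UseGRE $true) are my assumption of what the wizard emits for this choice; use the View Script button on the Summary screen to confirm against your own environment. The VMM calls are guarded so nothing runs where the cmdlets are missing.

```powershell
# Hypothetical names and provider address (PA) space; adjust to your design.
$pa = @{
    NetworkName = 'NetVirt'
    SiteName    = 'NetVirt_PA'
    Subnet      = '10.10.0.0/24'
    RangeStart  = '10.10.0.10'
    RangeEnd    = '10.10.0.250'
}

if (Get-Command New-SCLogicalNetwork -ErrorAction SilentlyContinue) {
    # Same call as the corporate network, but with network virtualization switched on.
    $logicalNetwork = New-SCLogicalNetwork -Name $pa.NetworkName `
        -LogicalNetworkDefinitionIsolation $false -EnableNetworkVirtualization $true `
        -UseGRE $true -IsPVLAN $false -Description 'Provider address space'

    # The site must carry an IP subnet; VLAN 0 means no VLAN is configured.
    $subnetVlan = New-SCSubnetVLan -Subnet $pa.Subnet -VLanID 0
    $site = New-SCLogicalNetworkDefinition -Name $pa.SiteName `
        -LogicalNetwork $logicalNetwork -SubnetVLan $subnetVlan `
        -VMHostGroup (Get-SCVMHostGroup -Name 'All Hosts')

    # Pool from which hosts receive their PAs; a single subnet needs no DNS or gateway.
    New-SCStaticIPAddressPool -Name "$($pa.SiteName)_Pool" `
        -LogicalNetworkDefinition $site -Subnet $pa.Subnet `
        -IPAddressRangeStart $pa.RangeStart -IPAddressRangeEnd $pa.RangeEnd
}
```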

Creating Virtual Networks

With logical networks created, the next step is to create the VM networks that virtual machines can actually be connected to. In SCVMM 2012 R2, within the logical networks view, there is a convenient option to create the VM network using the Create VM Network button or by right-clicking on a logical network and selecting Create VM Network. For now we will use the “old-fashioned” way:

1. Open Virtual Machine Manager.

2. Open the VMs And Services workspace (not Fabric, because this is now a construct directly related to virtual machines).

3. Select the VM Networks navigation node.

4. Click the Create VM Network button.

5. Enter a name and description for the VM network, select the logical network, and click Next.

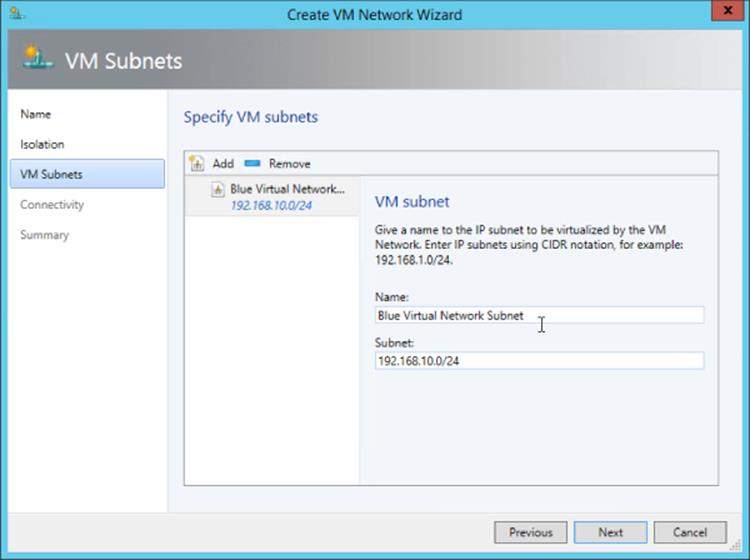

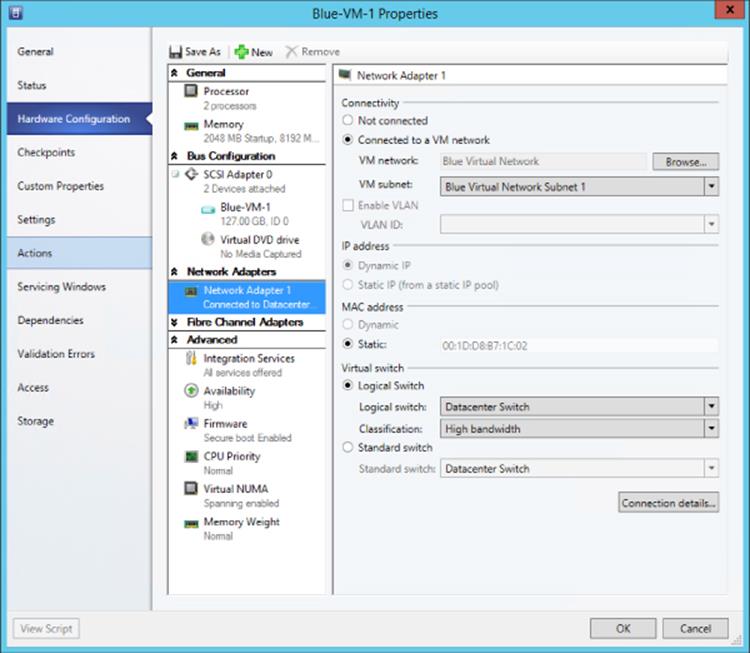

6. Depending on the logical network selected, this may be the end of the configuration. For example, a connected network without network virtualization requires no further configuration. A VLAN type network that is isolated will show an Isolation screen, which allows a specific VLAN (site) to be selected for this specific VM network, or you can select Automatic, which allows SCVMM to automatically select a site based on those available on the logical network. If a network that is enabled for network virtualization is selected, a number of additional configuration pages must be completed to define the configuration for the IP scheme in the virtual network space (CA). I will cover this in detail in the section “Network Virtualization.”

Click Finish to complete the VM Network creation process.

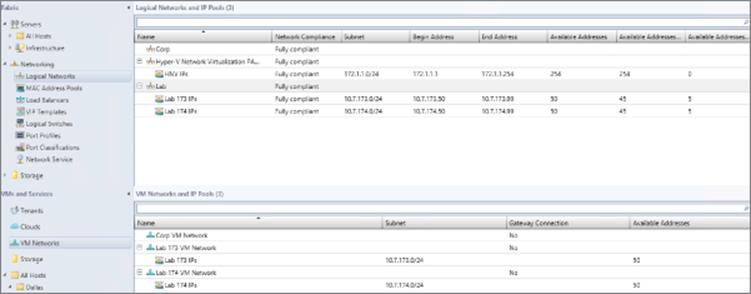

My final configuration is shown in Figure 3.21 for my logical networks and VM networks.

Figure 3.21 The complete logical network and VM network configuration
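The relationship between the logical network type and the VM network created on top of it can be sketched in PowerShell. Get-VMNetworkIsolationType and its three type keywords are hypothetical names of my own; the strings it returns are values accepted by the -IsolationType parameter of New-SCVMNetwork. The example then creates a VM network for a connected logical network named Corp, guarded so it only runs where the VMM cmdlets exist.

```powershell
# Hypothetical helper: maps the kind of logical network to an -IsolationType value.
function Get-VMNetworkIsolationType {
    param([Parameter(Mandatory)][string]$LogicalNetworkType)
    switch ($LogicalNetworkType) {
        'Connected'             { 'NoIsolation' }                   # no further configuration
        'Vlan'                  { 'VLANNetwork' }                   # a specific VLAN site is chosen
        'NetworkVirtualization' { 'WindowsNetworkVirtualization' }  # CA space must be defined
        default                 { throw "Unknown logical network type: $LogicalNetworkType" }
    }
}

if (Get-Command New-SCVMNetwork -ErrorAction SilentlyContinue) {
    $logicalNetwork = Get-SCLogicalNetwork -Name 'Corp'
    New-SCVMNetwork -Name 'Corp' -LogicalNetwork $logicalNetwork `
        -IsolationType (Get-VMNetworkIsolationType -LogicalNetworkType 'Connected') `
        -Description 'VM network for the corporate logical network'
}
```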

So far we have done a lot of configuration but have not modeled our network to SCVMM. Consider my lab environment. I configured 2 of the VLANs to separate the different lab environments, but suppose I have 40 or 80 or 200. This is where PowerShell is invaluable, and I created the script that follows to automate this configuration process.

This script creates a separate site for each VLAN with the appropriate IP subnet and also an IP pool (which in my case was just two addresses, used for the first two machines, which were domain controllers; the rest were assigned by DHCP). In my lab, the third octet matches the VLAN ID. This script automatically creates all 41 VLAN sites, which run from 150 to 190 inclusive, along with the appropriate IP pools and VM networks. You can customize it to meet your own needs, including changing the name of the SCVMM server and replacing the placeholder ID with the ID of the logical network that all the sites should be added to (you have to create the logical network in advance, although this could also be added to the script if required). To find the GUID of your logical network, run the command Get-SCLogicalNetwork | ft Name, ID -Auto.

Import-Module virtualmachinemanager

Get-VMMServer -ComputerName scvmm

# Replace this with the actual ID of the logical network.
# To list the IDs: Get-SCLogicalNetwork | ft Name, ID -Auto
$logicalNetwork = Get-SCLogicalNetwork -ID "xxxxxxxx-xxxx-xxxx-xxxx-xxxxxxxxxxxx"

$startNumber = 150
$endNumber = 190
$vlanID = $startNumber

do
{
    # Site (logical network definition) for this VLAN and its subnet
    $allHostGroups = @()
    $allHostGroups += Get-SCVMHostGroup -ID "0e3ba228-a059-46be-aa41-2f5cf0f4b96e"

    $allSubnetVlan = @()
    $allSubnetVlan += New-SCSubnetVLan -Subnet "10.1.$vlanID.0/24" -VLanID $vlanID

    $logicalNetworkDefinition = New-SCLogicalNetworkDefinition -Name "VLAN_$vlanID" `
        -LogicalNetwork $logicalNetwork -VMHostGroup $allHostGroups `
        -SubnetVLan $allSubnetVlan -RunAsynchronously

    # Gateways
    $allGateways = @()
    $allGateways += New-SCDefaultGateway -IPAddress "10.1.$vlanID.1" -Automatic

    # DNS servers
    $allDnsServer = @("10.1.$vlanID.10", "10.1.$vlanID.11")

    # DNS suffixes
    $allDnsSuffixes = @()

    # WINS servers
    $allWinsServers = @()

    # IP pool containing just the two statically assigned addresses
    $NewVLANName = "VLAN_" + $vlanID + "_IP_Pool"

    New-SCStaticIPAddressPool -Name $NewVLANName `
        -LogicalNetworkDefinition $logicalNetworkDefinition -Subnet "10.1.$vlanID.0/24" `
        -IPAddressRangeStart "10.1.$vlanID.10" -IPAddressRangeEnd "10.1.$vlanID.11" `
        -DefaultGateway $allGateways -DNSServer $allDnsServer -DNSSuffix "" `
        -DNSSearchSuffix $allDnsSuffixes -RunAsynchronously

    # Now create the VM network for each VLAN
    $vmNetwork = New-SCVMNetwork -Name "Customer_VLAN_$vlanID" `
        -LogicalNetwork $logicalNetwork -IsolationType "VLANNetwork" `
        -Description "VM Network for Customer VLAN $vlanID"

    $logicalNetworkDefinition = Get-SCLogicalNetworkDefinition -Name "VLAN_$vlanID"

    # The VM subnet must use the same subnet/VLAN pair as the site created above
    $subnetVLAN = New-SCSubnetVLan -Subnet "10.1.$vlanID.0/24" -VLanID $vlanID

    $VMSubnetName = "Customer_VLAN_" + $vlanID + "_0"

    $vmSubnet = New-SCVMSubnet -Name $VMSubnetName `
        -LogicalNetworkDefinition $logicalNetworkDefinition -SubnetVLan $subnetVLAN `
        -VMNetwork $vmNetwork

    $vlanID += 1
}
until ($vlanID -gt $endNumber)
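Before running a loop like this against a production SCVMM server, it can help to preview what it will create. The following dry-run sketch uses no VMM cmdlets at all; it only rebuilds the names and addresses from the same numbering scheme (third octet equals the VLAN ID). The $plan variable and its property names are my own illustrative choices.

```powershell
$startNumber = 150
$endNumber   = 190

# Build one planning record per VLAN without touching SCVMM.
$plan = foreach ($vlanID in $startNumber..$endNumber) {
    [pscustomobject]@{
        VlanId    = $vlanID
        Site      = "VLAN_$vlanID"
        Subnet    = "10.1.$vlanID.0/24"
        Gateway   = "10.1.$vlanID.1"
        Pool      = "VLAN_${vlanID}_IP_Pool"
        VMNetwork = "Customer_VLAN_$vlanID"
    }
}

$plan.Count                      # 41, because the range includes both 150 and 190
$plan | Format-Table -AutoSize   # review before creating anything
```

Because the range is inclusive at both ends, 150 through 190 yields 41 sites; set $endNumber to 189 if exactly 40 are wanted.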

Creating the Port Profiles and Logical Switch

Now that the logical networks and VM networks exist, I can create my logical switch, but remember, the logical switch uses the uplink port profiles to identify the connectivity available. I also use virtual port profiles and port classifications. I will use the built-in objects for those, but they are easy to create if required using the Fabric workspace and the Port Profiles and Port Classifications navigation areas. I recommend looking at the existing virtual port profiles and port classifications as the foundation of configuration should you need to create your own. Now is a good time to take a look at the inbox port profiles and port classifications, which you can choose to keep, delete, or even modify to exactly meet your own needs.

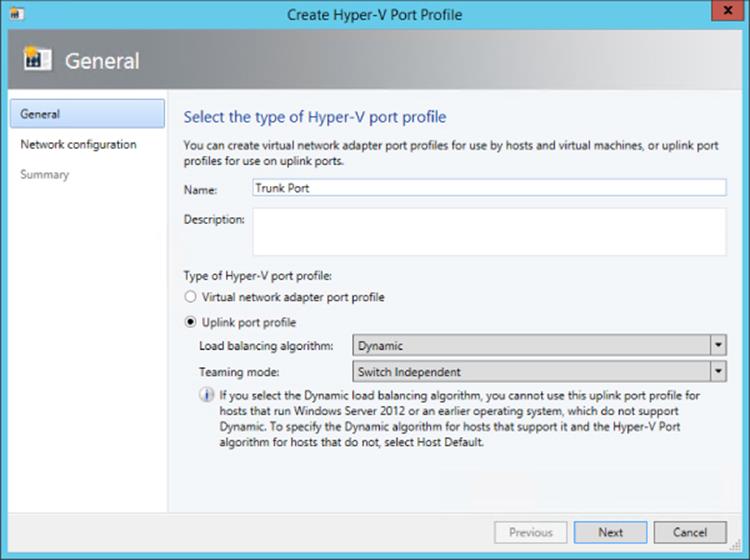

The first step is to create the uplink port profiles. Remember, the uplink port profile models the connectivity available for a specific connection from the host, that is, from the network adapter to the switch. If different network adapters have different connectivity to different switches, you will need multiple uplink port profiles. Here are the steps:

1. Open Virtual Machine Manager.

2. Open the Fabric workspace.

3. Select the Networking ⇒ Port Profiles navigation node.

4. Click the Create button drop-down and select Hyper-V Port Profile.

5. Enter a name and description for the new port profile, as shown in Figure 3.22. Select the Uplink Port Profile radio button. You can additionally configure a teaming mode; the settings configured in the port profile are applied when it is used on a host where NIC Teaming is required. Because I am connecting all my Hyper-V boxes to ports configured on the switch with multiple VLANs allowed, I only need one uplink port profile that can connect to any of the networks. Click Next.

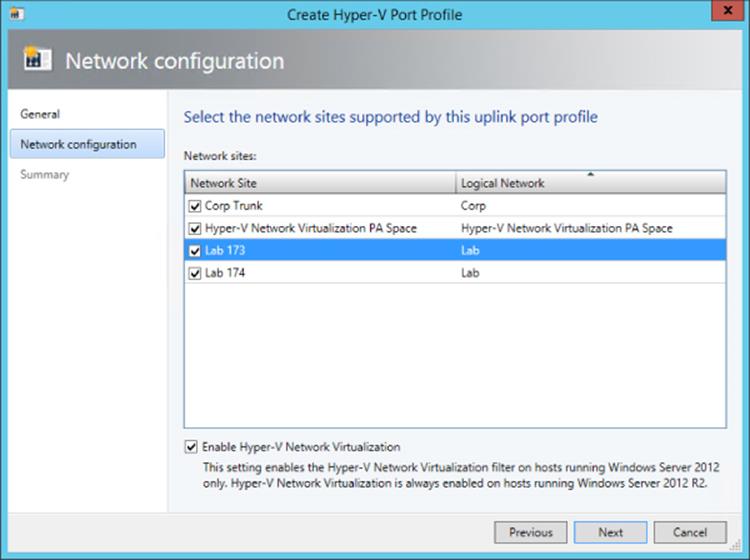

6. Select the network sites (that are part of your logical networks) that can be connected to via this uplink port profile (Figure 3.23). Because all of my networks can, I will select them all and also check the box to enable Hyper-V Network Virtualization. On Windows Server 2012 Hyper-V hosts, this option enables Network Virtualization in the networking stack on the network adapter, but it does nothing on Windows Server 2012 R2 hosts, which always have Network Virtualization enabled because it's part of the switch. Click Next.

7. Click Finish to complete the creation of the uplink port profile.

Figure 3.22 Setting the options for a new uplink port profile and NIC Teaming options

Figure 3.23 Selecting the network sites that can be connected to using the uplink port profile
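The uplink port profile steps can be approximated in PowerShell as shown here. Treat it as a sketch under assumptions: the profile name and description are hypothetical, the teaming values are simply one valid combination, and selecting every site with an unfiltered Get-SCLogicalNetworkDefinition only makes sense because all of my networks are reachable over the same trunk. The VMM calls are guarded so nothing runs where the cmdlets are missing.

```powershell
# Hypothetical profile settings; the teaming values are one valid combination.
$uplink = @{
    Name                        = 'Trunk Uplink'
    Description                 = 'All VLANs allowed on the switch port'
    EnableNetworkVirtualization = $true
    LBFOTeamMode                = 'SwitchIndependent'
    LBFOLoadBalancingAlgorithm  = 'HostDefault'
}

if (Get-Command New-SCNativeUplinkPortProfile -ErrorAction SilentlyContinue) {
    # Every network site is reachable through this uplink, so select them all.
    $sites = Get-SCLogicalNetworkDefinition
    New-SCNativeUplinkPortProfile @uplink -LogicalNetworkDefinition $sites
}
```

If different adapters connect to different switches, repeat the call with a filtered $sites list for each uplink port profile.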

The final step of modeling is the creation of the actual logical switch, which will then be applied to the Hyper-V hosts. The logical switch will bring all the different components together. Follow these steps:

1. Open Virtual Machine Manager.

2. Open the Fabric workspace.

3. Select the Networking ⇒ Logical Switches navigation node.

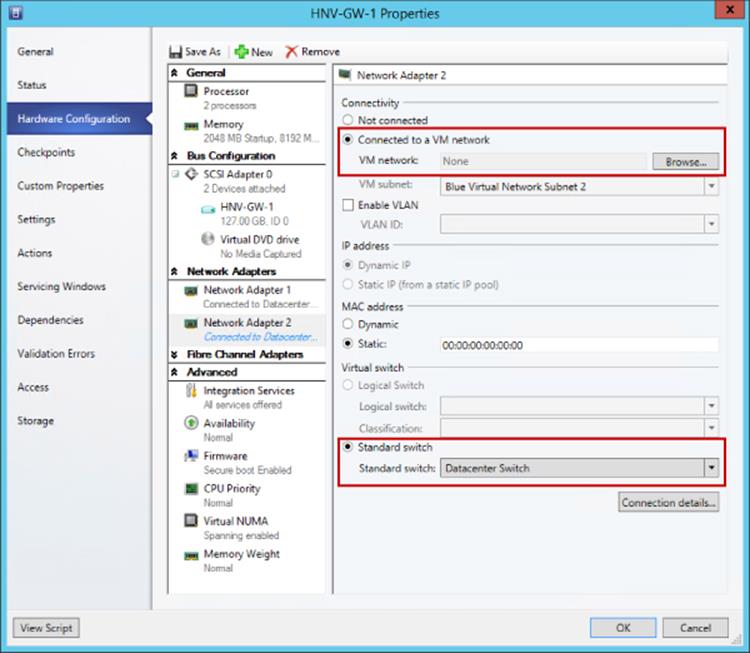

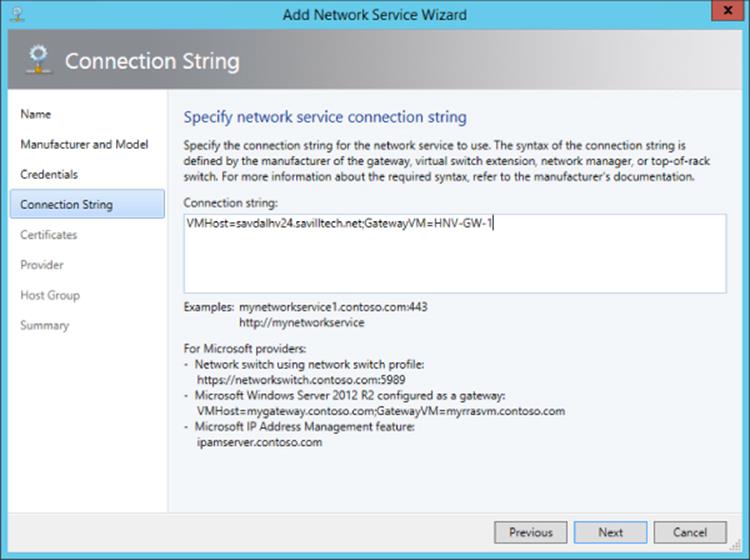

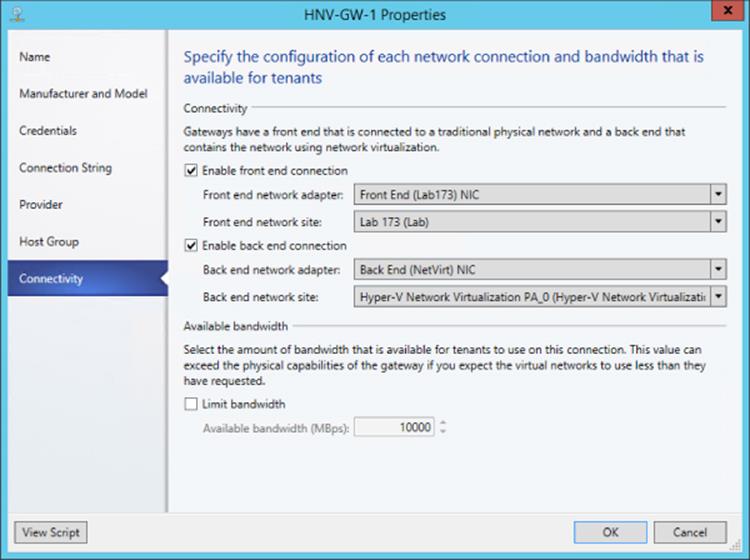

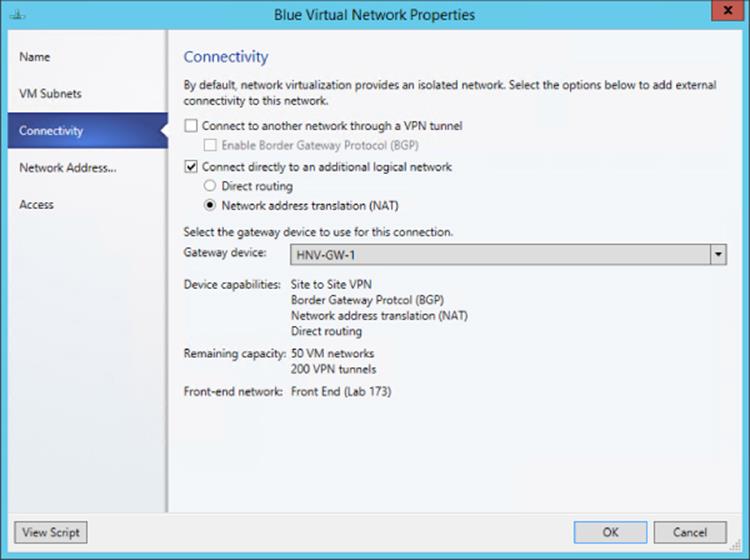

4. Click the Create Logical Switch button.