Improving the Test Process: Implementing Improvement and Change - A Study Guide for the ISTQB Expert Level Module (2014)

Chapter 2. The Context of Improvement

If your intention is to improve the test process in your project or organization, you will need to convince managers, business owners, and fellow testers of the necessity for this and explain to them how you intend to proceed.

This chapter addresses these fundamental issues and sets the overall context for test process improvement. It starts by asking the basic questions “Why improve testing?” and “What things can I improve?” and then goes on to consider the subject of (product) quality, both in general terms and with regard to different stakeholders. Following this, an overview is provided of the systematic methods and approaches available that can help to achieve the quality objectives in your project or organization by improving the testing process. This chapter introduces some of the concepts and approaches that will be expanded upon in other chapters.

2.1 Why Improve Testing?

Syllabus Learning Objectives

LO 2.1.1 (K2) Give examples of the typical reasons for test improvement.

LO 2.1.2 (K2) Contrast test improvement with other improvement goals and initiatives.

LO 2.1.3 (K6) Formulate to all stakeholders the reasons for proposed test process improvements, show how they are linked to business goals and explain them in the context of other process improvements.

This is an exciting time to be a test professional. Systems in which software is a dominant factor are becoming more and more challenging to build. They are playing an increasingly important role in society. New methods, techniques, and tools are becoming available to support development and maintenance tasks. Because systems play such an important role in our lives, both economically and socially, there is pressure for the software engineering discipline to focus on quality issues. Poor-quality software is no longer acceptable to society. Software failures can result in catastrophic losses. In this context the importance of the testing discipline, as one of the quality measures that can be taken, is growing rapidly. Often 50% of the budget for a project is spent on testing. Testing has truly become a profession and should be treated as such.

Many product development organizations face tougher business objectives every day, such as decreased time to market, higher quality and reliability, and reduced costs. Many of them develop and manufacture products that consist of over 60 percent software, and this figure is still growing. At the same time, software development is sometimes an outsourced activity or is codeveloped with other sites. Together with the trend toward more reuse and platform architecture, testing has become a key activity that directly influences not only the product quality but also the “performance” of the entire development and manufacturing process.

Adding to these concerns is the growing importance and volume of software in our everyday world. Software in consumer products doubles in size every 24 months; for example, TVs now have around 300,000 lines of code, and even electric razors now have some software in them. In the safety-critical area, there are around 400,000 lines of code in a car, and planes have become totally dependent on software. The growing amount of software has been accompanied by rapid growth in complexity. If we consider the number of defects per “unit” of software, research has shown that this number has, unfortunately, hardly decreased in the last two decades. As the market demands better and more reliable products that are developed and produced in shorter time periods and with less money, higher test performance is not an option; it is an essential ingredient for success.

The scope of testing is not necessarily limited to the software system. People who buy and use software are not really interested in the code; they need services and products that include a good user experience, business processes, training, user guides, and support. Improvements to testing must be carried out in the context of these wider quality goals, whether they relate to an organization, the customer, or IT teams.

For the past decades, the software industry has invested substantial effort to improve the quality of its products. Despite encouraging results from various quality improvement approaches, the software industry is still far from achieving the ultimate goal of zero defects. To improve product quality, the software industry has often focused on improving its development processes. A guideline that has been widely used to improve the development processes is Capability Maturity Model Integration (CMMI), which is often regarded as the industry standard for software process improvement.

Despite the fact that testing often accounts for at least 30 to 40 percent of the total project costs, only limited attention is given to testing in the various software process improvement models such as CMMI. As an answer, the testing community has created its own improvement models, such as TMMi and TPI NEXT, and is making a substantial effort on its own to improve the test performance and its process. Of course, “on its own” does not mean independent and without context. Test improvement always goes hand in hand with software development and the business objectives.

As stated earlier, the context within which test process improvement takes place includes any business or organizational process improvement and any IT or software process improvement. The following list includes some typical reasons for business improvements that influence testing:

- Improve product quality
- Reduce time to market but maintain quality levels
- Save money, improve efficiency
- Improve predictability
- Meet customer requirements
- Be at a capability level; e.g., for outsourcing companies
- Ensure compliance to standards; e.g., FDA in the medical domain (see section 2.5.4)

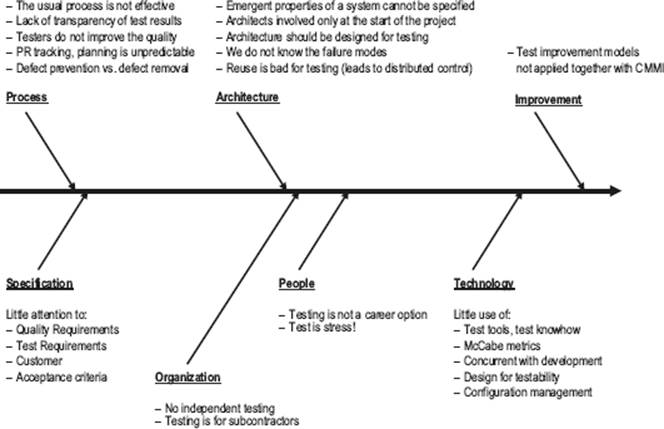

The fishbone diagram in figure 2–1 was the result of a retrospective session in a large embedded software organization and shows some of the causes of poor test performance and possible remedies. It also shows that test performance improvement is not the same as test process improvement because there are many other aspects that are of importance in improving test performance. A further conclusion is that improving test performance is not an easy and straightforward task; it is a challenge that covers many aspects.

Figure 2–1 Fishbone diagram for test performance

2.2 What Can Be Improved?

Syllabus Learning Objectives

LO 2.2.1 (K2) Understand the different aspects of testing, and related aspects, that can be improved.

Software process improvement (SPI) is the continuous improvement of product quality, process effectiveness, and process efficiency leading to improved quality of the software product.

Test improvement is the continuous improvement of the effectiveness and/or the efficiency of the testing process within the context of the overall software process. This context means that improvements to testing may go beyond just the process itself; for example, extending to the infrastructure, organization, and testers’ skills. Most test improvement models, as you will see later in this book, also address to a certain extent infrastructure, organizational, and people issues. For example, the TMMi model has dedicated process areas for test environment, test organization, and test training. Although the term most commonly used is test process improvement, in fact a more accurate term would be test improvement since multiple aspects are addressed in addition to the process.

Whereas some models for test process improvement focus mainly on higher test levels, or address only one aspect of structured testing—e.g., the test organization—it is important to initially consider all test levels (including static testing) and aspects of structured testing. With respect to dynamic testing, both lower test levels (e.g., component test, integration test) and higher test levels (e.g., system test, acceptance test) should ultimately be within the scope of the test improvement process.

The four cornerstones for structured testing (life cycle, techniques, infrastructure, and organization) [Pol, Teunissen, and van Veenendaal 2002] should ultimately be addressed in any improvement. Priorities are based on business objectives and related test improvement objectives. Practical experiences have shown that balanced test improvement programs (e.g., between process, people, and infrastructure) are typically the most successful.

Preconditions

Test process improvements may indicate that associated or complementary improvements are needed for requirements management and other parts of the development process. Not every organization is able to carry out test process improvement in an effective and efficient manner. For an organization to be able to start, fundamental project management should preferably be available. This means, for example, that requirements are documented and managed in a planned and controlled manner (otherwise how can we perform test design?). Configurations should be planned and controlled (otherwise how do we know what to test?), and project planning should exist at an adequate level (otherwise how can we do test planning?). There are many other examples of how improvements to software development can support test improvements. This topic will be discussed in more detail in chapter 3 when test improvement models like TPI NEXT and TMMi are described.

If the aspects just described are missing, or are only partially in place, test process improvement is still possible and can function as a reverse and bottom-up quality push. Generally, the business drives (pushes) the improvement process, the business objectives then drive the software improvement process, and the software development improvement objectives then drive the test improvement process (see figure 2–2). However, with the reverse quality push, testing takes the driver’s seat. As an example, if there are no or poor requirements, test designs become the requirements. If there is poor estimation at the project level, test estimation will dictate the project estimate. Of course, this will not be easily accepted, and much resistance can be expected. However, this reverse quality push is often used as a wake-up call to show how poor software development processes are. This approach is less efficient and perhaps even less effective, but if the involved test process improvement members are fit for the job, the push can be strong enough to have a positive impact on the surrounding improvement “domains.”

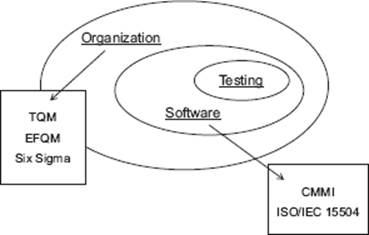

Context

As a result, test process improvement cannot be considered a separate activity (see figure 2–2). It is part of software improvement activities, which in turn should be part of a total quality management program. Progress made in these areas is an indication of how effective the test process investment can be. Test process improvements may also be driven by overall SPI efforts. As previously mentioned, testing goals must always be aligned to business goals, so it is not necessarily the best option for an organization or project to achieve the highest level of test maturity.

Figure 2–2 Context of test process improvement

From figure 2–2, you can see that test process improvement takes place within the context of organizational and business improvement. This may, for example, be managed via one of the following:

- Total Quality Management (TQM)
- ISO 9000:2000
- An excellence framework such as the EFQM Excellence Model (see section 2.4.3)
- Six Sigma (see section 2.4.3)

Total Quality Management: An organization-wide management approach centered on quality, based on the participation of all members of the organization, and aiming at long-term success through customer satisfaction and benefits to all members of the organization and to society. Total Quality Management consists of planning, organizing, directing, control, and assurance. [After ISO 8402]

Test process improvement will most often also take place in the context of software process improvement.

Software Process Improvement (SPI): A program of activities designed to improve the performance and maturity of an organization’s software processes and the results of such a program. [After Chrissis, Konrad, and Shrum, 2004]

This may be managed via one of the following:

- Capability Maturity Model Integration, or CMMI (see section 3.2.1)
- ISO/IEC 15504 (see section 3.2.2)
- ITIL [ITIL]
- Personal Software Process [PSP] (see section 2.5.4) and Team Software Process [TSP]

2.3 Views on Quality

Syllabus Learning Objectives

LO 2.3.1 (K2) Compare the different views of quality.

LO 2.3.2 (K2) Map the different views of quality to testing.

Before starting quality improvement activities, there must be consensus about what quality really means in a specific business context. Only then can wrong expectations, unclear promises, and misunderstandings be avoided. In a single organization or project, we may come across several definitions of quality, perhaps used inadvertently and unacknowledged by all the people in the project. It is important to realize that there is no “right” definition of quality. Garvin showed that in practice, generally five distinct definitions of quality can be recognized [Garvin 1984], [Trienekens and van Veenendaal 1997]. We will describe these definitions briefly from the perspective of software development and testing. Improvement of the test process should consider which of the quality views discussed in this section are most applicable to the organization. The five distinct views on quality are as follows:

- Product-based
- User-based
- Manufacturing-based
- Value-based
- Transcendent-based

The Product-Based Definition

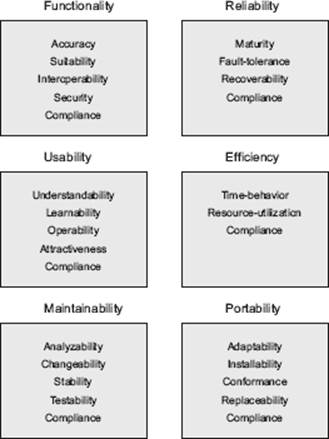

For testing, this view of quality relates strongly to non-functional testing. Product quality is determined by characteristics such as reliability, maintainability, and portability. When the product-based definition of quality is important, we should evaluate which non-functional characteristics are of major importance and start improving non-functional testing based on this. The product-based view is common in the safety-critical industry, where reliability, availability, maintainability, and safety (RAMS) are often key areas that determine product quality. For some products, usability is of extreme importance. Such products can come from many industries and may be used by individuals for activities such as gaming and entertainment or be delivered to the public at large (e.g., via ATMs). For these types of products, giving the appropriate amount of attention to usability (a non-functional characteristic) during development and testing is essential.

Product-based quality: A view of quality, wherein quality is based on a well-defined set of quality attributes. These attributes must be measured in an objective and quantitative way. Differences in the quality of products of the same type can be traced back to the way the specific quality attributes have been implemented. [After Garvin]

Figure 2–3 The ISO 9126 quality model

A typical quality approach is software development and testing based on the ISO 9126 standard (or the ISO 25000 series, which has superseded ISO 9126). The standard defines six quality characteristics and proposes the subdivision of each quality characteristic into a number of subcharacteristics (see figure 2–3). This ISO 9126 set reflects a huge step toward consensus in the software industry and addresses the general notion of software product quality. The ISO 9126 standard also provides metrics for each of the characteristics and subcharacteristics.

The User-Based Definition

User-based quality: A view of quality wherein quality is the capacity to satisfy the needs, wants, and desires of the user(s). A product or service that does not fulfill user needs is unlikely to find any users. This is a context-dependent, contingent approach to quality since different business characteristics require different qualities of a product. [After Garvin]

Quality is fitness for use. This definition says that software quality should be determined by the user(s) of a product in a specific business situation. Different business characteristics require different “qualities” of a software product. Quality can have many subjective aspects and cannot be determined on the basis of only quantitative and mathematical metrics.

For testing, this definition of quality relates strongly to validation and user acceptance testing activities. During both of these activities, we try to determine whether the product is fit for use. If the main focus of an improvement is on user-based quality, the validation-oriented activities and user acceptance testing should be a primary focus.

The Manufacturing-Based Definition

Manufacturing-based quality: A view of quality whereby quality is measured by the degree to which a product or service conforms to its intended design and requirements. Quality arises from the process(es) used. [After Garvin]

This definition of quality points to the manufacturing—i.e., the specification, design, and construction—processes of software products. Quality depends on the extent to which requirements have been implemented in a software product in conformance with the original requirements. Quality is based on inspection, reviews, and (statistical) analysis of defects and failures in (intermediate) products. The manufacturing-based view on quality is also represented implicitly in many standards for safety-critical products, where the standards prescribe a thorough development and testing process.

For testing, this definition of quality strongly relates to verification and system testing activities. During both of these activities we try to determine whether the product is compliant with requirements. If the main focus of an improvement is on manufacturing-based quality, then verification-oriented activities and system testing should be a primary focus. This view of quality also has the strongest process improvement component. By following a strict process from requirement to test, we can deliver quality.

The Value-Based Definition

Value-based quality: A view of quality wherein quality is defined by price. A quality product or service is one that provides desired performance at an acceptable cost. Quality is determined by means of a decision process with stakeholders with trade-offs between time, effort, and cost aspects. [After Garvin]

This definition states that software quality should always be determined by means of a decision process involving trade-offs between time, effort, and cost. The value-based definition emphasizes the need to make trade-offs, which is often achieved by means of communication between all parties involved, such as sponsors, customers, developers, and producers. Software or systems being launched as an (innovative) new product apply the value-based definition of quality because if we spend more time to get a better product, we may miss the market window and a competitor might beat us by being first to market.

For testing, this definition of quality relates strongly to risk-based testing. We cannot test everything but should focus on the most important areas to test. Testing is always a balancing act: a decision process conducted with stakeholders with trade-offs between time, effort, and cost aspects. This view also means giving priority to business value and doing the things that really matter.
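The idea of focusing on the most important areas within a limited budget can be illustrated with a minimal sketch. It rests on assumptions that are not part of the text: risk is scored as likelihood multiplied by impact on a 1-to-5 scale (a common convention in risk-based testing), effort is estimated in hours, and areas are chosen greedily in order of risk. The function name, field names, and sample data are all hypothetical.

```python
def select_test_areas(areas, budget_hours):
    """Pick test areas in descending risk order until the budget is used up.

    Assumption: risk score = likelihood * impact (both hypothetical 1-5 ratings).
    """
    ranked = sorted(areas, key=lambda a: a["likelihood"] * a["impact"], reverse=True)
    selected = []
    remaining = budget_hours
    for area in ranked:
        # Skip an area whose estimated effort no longer fits the budget.
        if area["effort_hours"] <= remaining:
            selected.append(area["name"])
            remaining -= area["effort_hours"]
    return selected


# Hypothetical product areas with stakeholder-agreed ratings.
areas = [
    {"name": "payments", "likelihood": 4, "impact": 5, "effort_hours": 30},
    {"name": "reporting", "likelihood": 2, "impact": 2, "effort_hours": 10},
    {"name": "login", "likelihood": 3, "impact": 5, "effort_hours": 15},
    {"name": "search", "likelihood": 3, "impact": 3, "effort_hours": 20},
]
print(select_test_areas(areas, budget_hours=50))
```

In practice the ratings themselves are the outcome of the decision process with stakeholders described above; the calculation only makes the agreed trade-off visible.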

The Transcendent-Based Definition

This “esoteric” definition states that quality can in principle be recognized easily depending on the perceptions and feelings of an individual or group of individuals toward a type of software product. Although this one is the least operational of the definitions, it should not be neglected in practice. Frequently, a transcendent statement about quality can be a first step toward the explicit definition and measurement of quality. The entertainment and game industry may use this view on quality, thereby giving testing a difficult task. Often user panels and beta testing are used to get feedback from the market on the excitement factor of the new product. Highly innovative product development is another area where one may encounter this view of quality.

As testers or test process improvers, we typically do not like the transcendent-based view of quality. It is very intangible and almost impossible to target. It can probably best be used to start a conversation with stakeholders about a different view of quality and assist them in making their view more explicit.

Transcendent-based quality: A view of quality wherein quality cannot be precisely defined but we know it when we see it or are aware of its absence when it is missing. Quality depends on the perception and affective feelings of an individual or group of individuals toward a product. [After Garvin]

The existence of the various quality definitions demonstrates the difficulty of determining the real meaning and relevance of software quality and quality improvement activities. Practitioners have to deal with this variety of definitions, interpretations, and approaches. How we define “quality” for a particular product, service, or project depends on context. Different industries will have different quality views. For most software products and systems, there is not just one view of quality.

The stakeholders are best served by balancing the quality aspects. For these products, we should ask ourselves, What is the greatest number or level of characteristics (product-based) that we can deliver to support the users’ tasks (user-based) while giving best cost benefit (value-based) and following repeatable, quality-assured processes within a managed project (manufacturing-based)? Starting a test process improvement program means we start by having a clear understanding of what product quality means. The five distinct views on quality are usually a great way to start the discussion with stakeholders and achieve some consensus and common understanding of what type of product quality is being targeted.
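The mapping between quality views and testing described in this section can be summarized as a simple lookup. The sketch below is only a condensed restatement of the text; the dictionary and function names are hypothetical, and real products will usually combine several views.

```python
# Testing focus per quality view, condensed from the descriptions in this section.
TESTING_FOCUS = {
    "product-based": "non-functional testing of the key quality characteristics",
    "user-based": "validation and user acceptance testing",
    "manufacturing-based": "verification and system testing",
    "value-based": "risk-based testing",
    "transcendent-based": "user panels and beta testing",
}


def improvement_focus(views):
    """Return the testing focus for each quality view the stakeholders agreed on."""
    unknown = [v for v in views if v not in TESTING_FOCUS]
    if unknown:
        raise ValueError(f"unknown quality view(s): {unknown}")
    return [TESTING_FOCUS[v] for v in views]


# Hypothetical outcome of a stakeholder discussion for one product.
for focus in improvement_focus(["user-based", "value-based"]):
    print(focus)
```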

2.4 The Generic Improvement Process

Syllabus Learning Objectives

LO 2.4.1 (K2) Understand the steps in the Deming Cycle.

LO 2.4.2 (K2) Compare two generic methods (Deming Cycle and IDEAL framework) for improving processes.

LO 2.4.3 (K2) Give examples for each of the Fundamental Concepts of Excellence with regard to test process improvement.

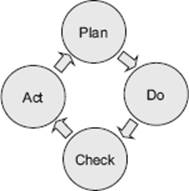

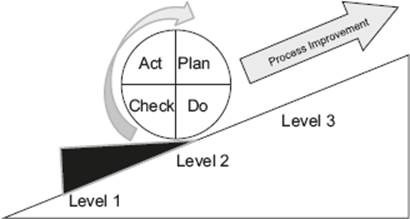

Process improvements are relevant to the software development process as well as to the testing process. Learning from one’s own mistakes makes it possible to improve the process that organizations are using to develop and test software. The Deming improvement cycle—Plan, Do, Check, Act—has been used for many decades and is still relevant when testers need to improve the process in use today.

The following sections provide three generic improvement processes that have been around for many years and have been used as a basis for many (test) improvement approaches or models that exist today. Understanding the background and essentials of the generic improvement processes implies that one understands a number of the underlying principles of test improvement approaches and models discussed later in this book.

2.4.1 The Deming Cycle

Continuous improvement involves setting improvement objectives, taking measures to achieve them, and once they have been achieved, setting new improvement objectives. Continuous improvement models have been established to support this concept. One of the most common tools for continuous improvement is the Deming cycle. This simple yet practical improvement model, initially called the Shewhart cycle, was popularized by W. Edwards Deming as Plan, Do, Check, Act (PDCA) [Edwards 1986]. The PDCA method is well suited for many improvement projects.

Deming cycle: An iterative four-step problem-solving process (Plan, Do, Check, Act), typically used in process improvement. [After Deming]

Figure 2–4 Deming cycle

Plan

Figure 2–4 shows how the Deming cycle operates. The Plan stage is where it all begins. Targets are defined for quality characteristics, costs, and service levels. The targets may initially be formulated by management as business improvement goals and successively broken down into individual control points that should be checked (see the following list) to see that the activities have been carried out. An analysis of current practices and skills is performed, after which improvement plans are set up for improving the test process. Prior to implementing a change, you must understand both the nature of your current problem and how your process failed to meet a stakeholder requirement. You and/or your problem-solving team determine the following:

- Which process needs to be improved
- How much improvement is required
- The change to be implemented
- When the change is to be implemented
- How you plan to measure the effect of the change
- What will be affected by this change (documents, procedures, etc.)

Once you have this plan, it’s time to move to the Do stage.

Do

The Do stage is the implementation of the change. After the plans have been made, the activities are performed. Included in this step is an investment in human resources (e.g., training and coaching). Identify the people affected by the change and inform them that you’re adapting their process for a specific reason, such as customer complaints, multiple failures, or a continual improvement opportunity. Whatever the reason, it is important to let them know about the change. You’ll need their buy-in to help ensure the effectiveness of the change. Then implement the change, including the measurements you’ll need in the Check stage. Monitor the change after implementation to make sure no backsliding occurs. You wouldn’t want people to return to the old methods of operation. Those methods were causing your company pain to begin with!

Check

The control points identified in the Plan stage are tracked using specific metrics, and deviations are observed. The variations in each metric may be predicted for a specific time interval and compared with the actual observations to provide information on deviations between the actual and expected. The Check stage is where you analyze the data you collected during the Do stage. Considerations include the following questions:

- Did the process improve?
- By how much?
- Did we meet the objective for the improvement?
- Was the process more difficult to use with the new methods?
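The first three questions lend themselves to a simple calculation once a metric has been measured before and after the change. The following is a minimal sketch under stated assumptions: a single numeric metric, a baseline from before the change, and a target set in the Plan stage. The names `CheckResult` and `check_stage` and the sample figures are hypothetical; the fourth question is qualitative and is better answered by asking the people who use the process.

```python
from dataclasses import dataclass


@dataclass
class CheckResult:
    improved: bool        # Did the process improve?
    improvement: float    # By how much? (positive means better)
    objective_met: bool   # Did we meet the objective for the improvement?


def check_stage(baseline: float, actual: float, target: float,
                lower_is_better: bool = True) -> CheckResult:
    """Compare a metric measured after the change with its baseline and target."""
    if lower_is_better:
        improvement = baseline - actual
        objective_met = actual <= target
    else:
        improvement = actual - baseline
        objective_met = actual >= target
    return CheckResult(improvement > 0, improvement, objective_met)


# Hypothetical example: average test cycle time in days, target set in Plan.
result = check_stage(baseline=10.0, actual=8.0, target=7.0)
print(result)
```

In this example the cycle time dropped by two days, so the process improved, but the target of seven days was not reached.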

Act

Using the information gathered, opportunities for performance improvement are identified and prioritized. The answers from the Check stage define your tasks for the Act stage. For example, if the process didn’t improve, there’s no point in asking additional questions during the Check stage. But action can be taken. In fact, action must be taken! The problem hasn’t been solved. The action you’d take is to eliminate the change you implemented in the Do stage and return to the Plan stage to consider new options to implement. If the process did improve, you’d want to know if there was enough improvement. More simply, if the improvement was to speed up the process, is the process now fast enough to meet requirements? If not, consider additional methods to tweak the process so that you do meet improvement objectives. Again, you’re back at the Plan stage of the Deming cycle.

Suppose you met the improvement objectives. Interview the process owner and some process participants to determine their thoughts regarding the change you implemented. They are your immediate customer. You want their feedback. If you didn’t make the process harder (read “more costly or time consuming”) your action in this case would be to standardize your improvement by changing any required documentation and to conduct training regarding the change. Keep in mind that sometimes you will make the process more time consuming. But if the savings from the change more than offset the additional cost, you’re likely to have implemented an appropriate change.
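The decision logic of the Act stage described above can be written down as a small function. This is a sketch, not a prescribed procedure: the names `Action` and `act_stage` are hypothetical, and the final branch (objective met, process harder to use, savings not covering the extra cost) is not covered by the text, so the sketch assumes the change is reconsidered in a new Plan stage.

```python
from enum import Enum


class Action(Enum):
    REVERT_AND_REPLAN = "eliminate the change and return to the Plan stage"
    TWEAK_AND_REPLAN = "return to the Plan stage to consider additional methods"
    STANDARDIZE = "update the documentation and conduct training"
    RECONSIDER = "assumption: reconsider the change in a new Plan stage"


def act_stage(improved: bool, objective_met: bool, harder_to_use: bool,
              savings_exceed_extra_cost: bool = False) -> Action:
    """Derive the Act stage task from the answers of the Check stage."""
    if not improved:
        # The problem has not been solved, so the change is withdrawn.
        return Action.REVERT_AND_REPLAN
    if not objective_met:
        # Improved, but not enough to meet the improvement objective.
        return Action.TWEAK_AND_REPLAN
    if not harder_to_use or savings_exceed_extra_cost:
        # Objective met and the change pays for itself: make it the standard.
        return Action.STANDARDIZE
    return Action.RECONSIDER


print(act_stage(improved=True, objective_met=True, harder_to_use=False).value)
```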

Sustainment

You’re not done yet. You want to sustain the gain as visualized in figure 2–5; know that the change is still in place, and still effective. A review of the process and measures should give you this information. Watch the process to view for yourself whether the process operators are performing the process using the improvements you’ve implemented. Analyze the metrics to ensure effectiveness of your Deming cycle improvements.

Note that in the first two steps (Plan and Do), the sense of what is important plays the central role. In the last two steps (Check and Act), statistical methods and systems analysis techniques are most often used to help pinpoint statistical significance, dependencies, and further areas for improvement.

Figure 2–5 The Deming cycle in context, sustaining the improvements

2.4.2 The IDEAL Improvement Framework

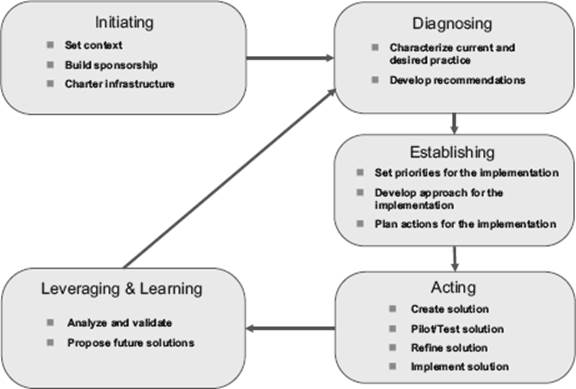

The IDEAL framework [McFeeley/SEI 1996] is basically a more detailed implementation of the previously described Deming cycle specifically developed for software process improvement. The PDCA cycle can easily be recognized within IDEAL, but the model is enriched with a full description of phases and activities to be performed. The IDEAL model is the Software Engineering Institute’s (SEI’s) organizational improvement model that serves as a road map for initiating, planning, and implementing improvement actions. The IDEAL model offers a very pragmatic approach for software process improvement; it also emphasizes the management perspective and commitment. It provides a road map for executing improvement programs using a life cycle composed of sequential phases containing activities (see figure 2–6). It is named for its five phases (initiating, diagnosing, establishing, acting, and learning). IDEAL originally focused on software process improvement; however, as the SEI recognized that the model could be applied beyond software process improvement, it revised the model so that it may be more broadly applied. Again, while the IDEAL model may be broadly applied to organizational improvement, the emphasis herein is on software process improvement.

IDEAL: An organizational improvement model that serves as a roadmap for initiating, planning, and implementing improvement actions. The IDEAL model is named for the five phases it describes: initiating, diagnosing, establishing, acting, and learning.

Figure 2–6 Phases of an improvement program according to IDEAL

The IDEAL improvement framework is described in detail in chapter 6 with a special bias toward test process improvement.

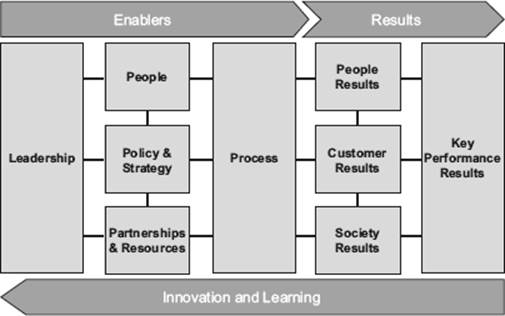

2.4.3 Fundamental Concepts of Excellence

A set of Fundamental Concepts of Excellence has been developed by the European Foundation for Quality Management (EFQM) and serves both as a basis for improvement and as a quality management model. These concepts can also be used to compare models. Many models have since been built using these concepts either explicitly or implicitly, including the EFQM Excellence Model, the Malcolm Baldrige model, and Six Sigma. Such models are often combined with the balanced scorecard (see section 6.2.2). The balanced scorecard provides a way of discussing the business goals for an organization and deciding how to achieve and measure those goals.

The set of concepts can be used as the basis to describe an excellent organizational culture. They also serve as a common language for senior management. The Fundamental Concepts of Excellence are used in organizational excellence models globally to measure organizations against the nine criteria (see figure 2–7).

The Fundamental Concepts of Excellence [URL: EFQM] are as follows:

Results Orientation: Excellent organizations achieve sustained outstanding results that meet both the short- and long-term needs of all their stakeholders, within the context of their operating environment. Excellence is dependent upon balancing and satisfying the needs of all relevant stakeholders (this includes the people employed, customers, suppliers, and society in general as well as those with financial interests in the organization).

Customer Focus: Excellent organizations consistently add value for customers by understanding, anticipating, and fulfilling needs, expectations, and opportunities. The customer is the final arbiter of product and service quality, and customer loyalty, retention, and market share gain are best optimized through a clear focus on the needs of current and potential customers.

Leadership and Constancy of Purpose: Excellent organizations have leaders who shape the future and make it happen, acting as role models for the organization’s values and ethics. The behavior of an organization’s leaders creates a clarity and unity of purpose within the organization and an environment in which the organization and its people can excel.

Management by Processes and Facts: Excellent organizations are widely recognized for their ability to identify and respond effectively and efficiently to opportunities and threats. Organizations perform more effectively when all interrelated activities are understood and systematically managed and decisions concerning current operations are planned. Improvements are made using reliable information that includes stakeholder perceptions.

People Development and Involvement: Excellent organizations value their people and create a culture of empowerment for the achievement of both organizational and personal goals. The full potential of an organization’s people is best realized through shared values and a culture of trust and empowerment, which encourages the involvement of everyone.

Continuous Learning, Innovation, and Improvement: Excellent organizations generate increased value and levels of performance through continual improvement and systematic innovation by harnessing the creativity of their stakeholders. Organizational performance is maximized when it is based on the management and sharing of knowledge within a culture of continuous learning, innovation, and improvement.

Partnership Development: Excellent organizations enhance their capabilities by effectively managing change within and beyond the organizational boundaries. An organization works more effectively when it has mutually beneficial relationships, built on trust, sharing of knowledge, and integration with its partners.

Corporate Social Responsibility: Excellent organizations have a positive impact on the world around them by enhancing their performance while simultaneously advancing the economic, environmental, and social conditions within the communities they touch. The long-term interests of the organization and its people are best served by adopting an ethical approach and exceeding the expectations and regulations of the community at large.

European Foundation for Quality Management (EFQM)

The European Foundation for Quality Management (EFQM) has developed a model (figure 2–7) that was introduced in 1992 and has since been widely adopted by thousands of organizations across Europe. As you might expect, the EFQM Excellence Model meets the Fundamental Concepts of Excellence well. There are many approaches for achieving excellence in performance, but this model is based on the principle that “outstanding results are achieved with respect to People, Customers, Society and Performance with the help of Leadership Policy and Strategy that is carried through Resources, People, Processes and Partnerships.” [URL: EFQM]

EFQM (European Foundation for Quality Management) Excellence Model: A non-prescriptive framework for an organization’s quality management system, defined and owned by the European Foundation for Quality Management and based on five “Enabling” criteria (covering what an organization does) and four “Results” criteria (covering what an organization achieves).

The EFQM Excellence Model can be used in different ways:

It can be used to compare your organization with other organizations.

It can be used as a guide to identify areas of weakness so that they can be improved.

It can be used as a basic structure for an organization’s management system.

It can be used as a tool for self-assessment where a set of detailed criteria are given and organizations grade themselves under different headings.

On a yearly basis the EFQM Excellence Award is given to recognize Europe’s best-performing organizations, whether private, public or non-profit. To win the EFQM Excellence Award, an applicant must be able to demonstrate that its performance not only exceeds that of its peers but also that it will maintain this advantage into the future.

Figure 2–7 EFQM Excellence Model

The model’s nine boxes represent the criteria against which to assess an organization’s progress toward excellence (see figure 2–7). Each of the nine criteria has a definition, which explains its high-level meaning. To develop the high-level meaning further, each criterion is supported by a number of sub-criteria. These pose a number of questions that should be considered in the course of an assessment. Five of these criteria are so-called “enablers” for excellence and four are measures of “results.”

Enablers – How We Do Things

Leadership: How leaders develop and facilitate the achievement of the mission and vision, develop values required for long-term success and implement them via appropriate actions and behaviors, and are personally involved in ensuring that the organization’s management system is developed and implemented.

Policy and strategy: How the organization implements its mission and vision via a clear stakeholder-focused strategy, supported by relevant policies, plans, objectives, targets, and processes.

People: How the organization manages, develops, and releases the knowledge and full potential of its people at an individual, team-based, and organization-wide level and plans these activities to support its policy and strategy and the effective operation of its processes.

Partnership and resources: How the organization plans and manages its external partnerships and internal resources to support its policy and strategy and the effective operation of its processes.

Process: How the organization designs, manages, and improves its processes to support its policy and strategy and fully satisfy, and generate increasing value for, its customers and other stakeholders.

Results – What We Target, Measure, and Achieve

Customer results: What the organization is achieving in relation to its external customers

People results: What the organization is achieving in relation to its people

Society results: What the organization is achieving in relation to local and international society as appropriate

Key performance results: What the organization is achieving in relation to its planned performance

The nine criteria of the EFQM Excellence model are interlinked with a continuous improvement loop known as RADAR (Results, Approach, Deployment, Assessment, and Review). RADAR is also the method for scoring when using the EFQM model:

Results: Covers what an organization has achieved and what has to be achieved

Approach: Covers what an organization plans to do to achieve target goals

Deployment: Covers the extent to which the organization uses the approach and in what parts

Assessment and Review: Covers the assessment and review of both approach and deployment of the approach

Malcolm Baldrige Model

The Baldrige Criteria for Performance Excellence is another leading model to provide a systems perspective for understanding performance management [URL: Baldrige]. The American model of the Baldrige Award for Quality is a contrast to the European Excellence Award. However, the Baldrige model is very similar to the European EFQM model in many aspects. The model is widely respected in the United States where its criteria are also the basis for the process. Most US states have quality award programs based on the Baldrige criteria.

The Baldrige Model criteria are divided into seven key categories:

1. Leadership

2. Strategic Planning

3. Customer Focus

4. Measurement, Analysis, and Knowledge Management

5. Workforce Focus

6. Process Management

7. Results

Each category is scored based on the approach used to address it, how well it is deployed throughout the organization, the cycles of learning generated, and its level of integration within the organization. An excellent way to improve your maturity is to use the criteria as a self-assessment and then compare your organization’s methods and processes with winners of the Baldrige Award. An integral part of the Baldrige process is for winners to share nonproprietary information from their applications so there is a ready-made benchmark for your organization’s maturity.

Six Sigma

Many organizations are using the concepts of Six Sigma to improve their business processes [Pyzdek and Keller 2009]. While the name Six Sigma has taken on a broader meaning, the fundamental purpose of Six Sigma is to improve processes such that there are at least six standard deviations between the worst-case specification limit and the mean of process variation. For those of us who are challenged by statistics, that means the process is essentially defect free!
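To make "essentially defect free" concrete, the following minimal sketch converts a sigma level into expected defects per million opportunities (DPMO) for a single, one-sided specification limit. It assumes a normally distributed process and the conventional 1.5-sigma allowance for long-term drift; that allowance and the function names are assumptions of this sketch and do not come from the text above.

```python
import math


def normal_tail(z: float) -> float:
    """Probability that a standard normal variable exceeds z."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))


def dpmo(sigma_level: float, shift: float = 1.5) -> float:
    """Expected defects per million opportunities for one specification limit.

    `shift` is the conventional allowance for long-term drift of the
    process mean (an assumption of this sketch); pass 0 to ignore it.
    """
    return normal_tail(sigma_level - shift) * 1_000_000


if __name__ == "__main__":
    for level in (3, 4, 5, 6):
        print(f"{level} sigma: {dpmo(level):.1f} DPMO")
```

Under these assumptions a six sigma process yields roughly 3.4 defects per million opportunities, whereas a three sigma process yields tens of thousands.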

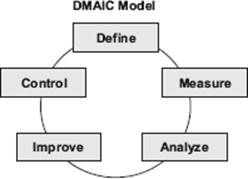

The DMAIC process, used by Six Sigma, is a variation of the PDCA cycle introduced by Deming that many people find helpful. The Six Sigma methodology is basically a structured approach to improvement and problem solving using measurable data to make decisions using the five-step DMAIC project flow: Define, Measure, Analyze, Improve, and Control (see figure 2–8). Six Sigma can help organizations improve processes. The best approach is to align Six Sigma projects with the organization’s strategic business plan by using, for example, the balanced scorecard. When Six Sigma projects are aligned with the organization’s strategic direction, good results can be achieved.

Figure 2–8 Six Sigma DMAIC model

Define

The Six Sigma team, usually consisting of an expert and apprentices, meets with the resource champion, such as the organizational president, to define what project is to be worked on, based on goals. The Define phase is where the problem statement and scope of project are defined and put down in writing. This process usually starts as a top-down approach where the senior managers have certain gaps that they see in their critical metrics and want to close those gaps. Those metrics and gaps need to then be broken down into manageable pieces for them to become projects.

Measure

Process parameters are used to measure progress and to determine that the experiments are having the right effects. This is where you actually start to put all the numbers together. Once you have defined the problem in the Define phase, you should have an output or outputs that you are interested in optimizing. The main focus of the Measure phase is to confirm that the data you are collecting is accurate.

Analyze

Using Design of Experiments (a structured approach to identifying the factors within a process that contribute to particular effects, then creating meaningful tests that verify possible improvement ideas or theories), brainstorming, process mapping, and other tools, the team collects data and analyzes it for clues to the root cause. In this phase, you collect data on all your inputs and outputs and then use one or more of the statistical tools to analyze them. The first thing you are trying to find out from your analysis is if there is a statistically significant relationship between each of the inputs and the outputs.
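As an illustration of checking whether an input and an output are related in a statistically significant way, the sketch below computes a Pearson correlation and estimates a p-value with a permutation test. The choice of test, the data (review hours per component versus defects that escaped to later phases), and all names are hypothetical; a real Six Sigma project would select the statistical tool that fits its data.

```python
import random
from statistics import mean


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equally long samples."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def permutation_p_value(xs, ys, trials=2000, seed=0):
    """Estimate how often a correlation at least this strong arises by chance.

    The output values are shuffled repeatedly, which breaks any real link
    to the inputs; the share of shuffles that match or beat the observed
    correlation is the (two-sided) p-value estimate.
    """
    rng = random.Random(seed)
    observed = abs(pearson_r(xs, ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(trials):
        rng.shuffle(shuffled)
        if abs(pearson_r(xs, shuffled)) >= observed:
            hits += 1
    return (hits + 1) / (trials + 1)


# Hypothetical measurements for ten components.
review_hours = [1, 2, 2, 3, 4, 5, 6, 7, 8, 9]
escaped_defects = [14, 13, 12, 11, 9, 9, 7, 6, 4, 3]

r = pearson_r(review_hours, escaped_defects)
p = permutation_p_value(review_hours, escaped_defects)
print(f"r = {r:.2f}, p = {p:.4f}")
```

A small p-value suggests that the relationship is unlikely to be a coincidence, but it does not by itself establish the root cause.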

Improve

The champion and Six Sigma team meet with other stakeholders to find the best way to implement the fix that makes sense for the company. This is the phase where all the work you have done so far in your project can come together and start to show some success. All the data mining and analysis that has been done will give you the right improvements to define your processes. The phase starts with the creation of an improvement implementation plan. In order to create the implementation plan, you need to gather up all the conclusions that have been formed through the analysis that you have done. Now that you know what improvements need to be made, you have to figure out what you need to do in order to implement them.

Control

This phase is where you make sure that the improvements that you have made stay in place and are tightly controlled. The last part of DMAIC, Control, also means getting people trained in the new procedures so that the problem does not return.
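One common way to check that an improvement stays in place is a control chart. The sketch below is a simplified version, assuming limits of three standard deviations around a baseline mean; the technique is not prescribed by the text above, and the metric (defects per thousand lines of code) and all values are hypothetical.

```python
from statistics import mean, stdev


def control_limits(baseline):
    """Return (lower, upper) limits: baseline mean +/- 3 standard deviations."""
    center = mean(baseline)
    spread = 3 * stdev(baseline)
    return center - spread, center + spread


def out_of_control(values, limits):
    """Return (index, value) pairs that fall outside the control limits."""
    lower, upper = limits
    return [(i, v) for i, v in enumerate(values) if not lower <= v <= upper]


# Hypothetical defect densities measured after the improvement was introduced.
baseline = [4.1, 3.8, 4.4, 4.0, 3.9, 4.2, 4.3, 3.7, 4.0, 4.1]
limits = control_limits(baseline)

# Later measurements: the fourth one signals that the process may have slipped.
new_values = [4.0, 4.2, 3.9, 5.6, 4.1]
print(out_of_control(new_values, limits))
```

A point outside the limits is a prompt to investigate, for example whether people have reverted to the old procedure, rather than proof that the improvement has failed.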

2.5 Overview of Improvement Approaches

Syllabus Learning Objectives

| LO 2.5.1 | (K2) Compare the characteristics of a model-based approach with analytical and hybrid approaches. |
| LO 2.5.2 | (K2) Understand that a hybrid approach may be necessary. |
| LO 2.5.3 | (K2) Understand the need for improved people skills and explain improvements in staffing, training, consulting and coaching of test personnel. |
| LO 2.5.4 | (K2) Understand how the introduction of test tools can improve different parts of the test process. |
| LO 2.5.5 | (K2) Understand how improvements may be approached in other ways, for example, by the use of periodic reviews during the software life cycle, by the use of test approaches that include improvement cycles (e.g., project retrospectives in SCRUM), by the adoption of standards, and by focusing on resources such as test environments and test data. |

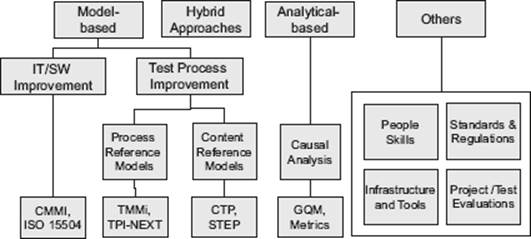

Figure 2–9 Overview of improvement approaches

2.5.1 Overview of Model-Based Approaches

Using a model to support process improvement is a tried and trusted approach that has acquired considerable acceptance in the software and testing industry. The underlying principle of using process improvement models is that an organization’s process maturity and capability correlate with the predictability of its results in terms of functionality, effort, throughput time, and quality of the software process. Practical experience has shown that excellent results can be achieved using a model-based approach, although many stories of failed process improvement projects are available as well.

Guidelines that have been widely used to improve the system and software development processes are Capability Maturity Model Integration, or CMMI (section 3.2.1) and ISO/IEC 15504 (section 3.2.2). These are often regarded as the industry standard for system and software process improvement. Despite the fact that testing can account for substantial parts of project costs, only limited attention is given to testing in the various software process improvement models such as the CMMI. As an answer, the testing community has created complementary improvement models.

Models for test process improvement can be broadly divided into two groups:

Process reference models

Content reference models

The primary difference between process reference models and content reference models lies in the way in which the core body of test process information is leveraged by the model. Process reference models define generic bodies of testing best practices and how to improve different aspects of testing in a prescribed step-by-step manner. Different maturity levels are defined that range from an initial level up to an optimizing maturity level, depending both on the actual testing tasks performed and how well they are performed. The process reference models discussed in this book are Test Maturity Model integration (TMMi) and the Test Process Improvement model TPI NEXT.

Content reference models also have a core body of best testing practices, but they do not implement the concept of different process maturity levels and do not prescribe the path to be taken for improving test processes. The principal emphasis is placed on the judgment of the user to decide where the test process is and where it should be improved. The content reference models discussed in this book are the Critical Testing Processes (CTP), in section 3.3.5, and the Systematic Test and Evaluation Process (STEP), in section 3.3.4.

Chapter 3 covers model-based approaches in detail.

2.5.2 Overview of Analytical Approaches

Analytical approaches are used to identify problem areas in our processes and set specific improvement goals. Achievement of these goals is measured using predefined parameters. Whereas model-based approaches are most often applied top down, analytical approaches are very much bottom up; that is, the focus is on the problems that happen today in our projects.

By using techniques such as causal analysis, possible links between things that happen (causes) and the consequences they may have (effects) are made clear. Causal analysis techniques (section 4.2) are used as a means of identifying the root causes of defects. Analytical approaches also involve the analysis of specific measures and metrics in order to assess the current situation in a test process and then decide on what improvement steps to take and how to measure their impact. The Goal-Question-Metric (GQM) approach (section 4.3) is a typical example of such an analytical approach.
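The structure behind the Goal-Question-Metric approach can be sketched as a small tree: a goal is refined into questions, and each question is answered by one or more metrics. The class names, the example goal, and the metrics below are purely illustrative assumptions, not definitions taken from the GQM literature.

```python
from dataclasses import dataclass, field


@dataclass
class Metric:
    name: str
    unit: str


@dataclass
class Question:
    text: str
    metrics: list[Metric] = field(default_factory=list)


@dataclass
class Goal:
    statement: str
    questions: list[Question] = field(default_factory=list)

    def all_metrics(self) -> list[str]:
        """Names of every metric needed to answer this goal's questions."""
        return [m.name for q in self.questions for m in q.metrics]


# Hypothetical improvement goal, refined top-down into questions and metrics.
goal = Goal(
    "Reduce the number of defects that escape system testing",
    [
        Question(
            "How many defects are found after release?",
            [Metric("post-release defects", "count per release")],
        ),
        Question(
            "How effective is system testing at finding defects?",
            [Metric("defect detection percentage", "%")],
        ),
    ],
)

print(goal.all_metrics())
```

Working from the goal downward in this way helps ensure that only metrics that answer a real question are collected.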

Chapter 4 covers analytical approaches in more detail.

2.5.3 Hybrid Approaches

Most organizations, or perhaps even all, use a hybrid approach. In some cases this is explicit, but in most cases it happens implicitly. In the process improvement models CMMI and TMMi, process areas exist that specifically address causal analysis of problem areas. Most process improvement models also emphasize the need to gather, analyze, and report on metrics. However, within process improvement models the analytical techniques and aspects are largely introduced at higher maturity levels. A hybrid approach is therefore usually most successful when organizations and projects have already taken their initial steps toward higher maturity; for example, introducing a full-scale measurement system when test planning, monitoring, and control are not yet in place does not make much sense.

It is rather uncommon to find an organization practicing analytical techniques for a longer period of time without having some kind of overall (model-based) framework in place. Thus, in practice, model-based and analytical approaches are most often combined in some way.

2.5.4 Other Approaches to Improving the Test Process

Improvements to the test process can be achieved by focusing on certain individual test improvement aspects described next (see figure 2–9). Note that most of these aspects are also covered within the context of the process improvement models mentioned earlier in section 2.5.1.

Test Process Improvement by Developing People’s Skills

Improvements to testing may be supported by providing increased understanding, knowledge, and skills to people and teams who are carrying out tests, managing testing, or making decisions based on testing. These may be testers, test managers, developers and other IT team members, other managers, users, customers, auditors, and other stakeholders. Even with a mature process in place, people still often make the difference.

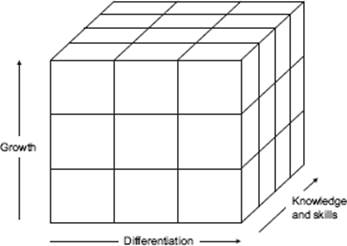

Increases in skills and competence may be provided by training, awareness sessions, mentoring, coaching, networking with peer groups, using knowledge management repositories, reading, and other educational activities. Beware, though: it is almost never “just” training, and coaching (training on the job) in particular often makes the difference.

Skill levels may be associated with career paths and professional progression. An example of test career paths is the so-called test career cube [van Veenendaal 2010] (see figure 2–10). The test career cube allows testing professionals to grow from tester to test manager (first dimension). Not every tester has the same interests and strong points: the second dimension of the test career cube allows testers to differentiate between technical, methodical, and managerial skills. The final dimension is the backbone for each successful career: the theoretical background that comes from training, coaching, and development of technical and social skills. The cube is a tool for career guidance by the human resource manager to match the ambitions, knowledge, and skills of the professional testers to the requirements of the organization. The test career cube was initially developed as part of the Test Management Approach [Pol, Teunnissen, and van Veenendaal 2002], and is now also part of TMap NEXT [Koomen, et al. 2006].

Figure 2–10 The test career cube

Skills and competencies that need to be improved may be testing skills, other IT technical skills, management skills, soft skills, or domain skills, as in the following examples:

Test knowledge – test principles, techniques, tools, etc.

Software engineering knowledge – software, requirements, development tooling, etc.

Domain knowledge – business process, user characteristics, etc.

Soft skills – communication, effective way of working, reporting, etc.

Knowledge and skills are rapidly becoming a challenge for many testers. It is just not good enough anymore to understand testing and hold an ISTQB certificate. Many of us will no longer work in our “safe” independent test team. We will work more closely together with business representatives and developers, helping each other when needed and as a team trying to build a quality product. Testers are expected to have domain knowledge, requirements engineering skills, development scripting skills, and strong soft skills, for example, for communication and negotiation. As products are becoming more and more complex and have interfaces to many other systems both inside and outside the organization, many non-functional testing issues will become extremely challenging. At the same time, businesses, users, and customers do not want to compromise on quality. To be able to still test non-functional aspects such as security, interoperability, performance, and reliability, highly specialized testers will be needed. Even more so than today, these experts will be full-time test professionals with in-depth knowledge and skills in one non-functional testing area only.

Skills for test process improvers are covered further in section 7.3. However, the skills described are needed not just in the improvement team but across the entire test team, especially for senior testers and test managers.

The “people” aspect may well be managed and coordinated by the test improvement team, most often (and preferably) in cooperation with human resources departments. Their main tasks and responsibilities in the context of people would then be as follows:

Increase knowledge and skill levels of testers to support activities in the existing or improved test processes.

Increase competencies of individual testers to enable them to carry out the activities.

Establish clearly defined testing roles and responsibilities.

Improve the correlation between increasing competence and rewards, recognition, and career progression.

Motivate test staff.

Both TPI NEXT and TMMi have coverage of people aspects (e.g., training, career path) in their model. However, they are far from being people-oriented improvement models. In the following sections, two dedicated people improvement models are briefly discussed. Although not specific to testing, many practices and elements can easily be reused to improve the test workforce.

Personal Software Process (PSP)

An important step in software process improvement was taken with the Personal Software Process (PSP) [Humphrey 97], recognizing that the people factor was of utmost importance in achieving quality. The PSP extends the improvement process to the people who actually do the work: the practicing (software) engineers. The PSP concentrates on the work practices of the individual software professionals. The principle behind the PSP is that to produce quality software systems, every software professional who works on the system must do quality work. The PSP is designed to help software professionals consistently use sound engineering practices. It shows them how to plan and track their work, use a defined and measured process, establish measurable goals, and track performance against these goals. The PSP shows software professionals how to manage quality from the beginning of the job, how to analyze the results of each job, and how to use the results to improve the process for the next project. Although not specifically targeted toward testers, many of the defined PSP practices can also be applied by those involved in testing.

The goal of the PSP is to help software professionals produce zero-defect, quality products on schedule. The PSP aims to provide software engineers with disciplined methods for improving personal software development processes. The PSP helps (software) engineers to do the following:

Improve their estimating and planning skills

Make commitments they can keep

Manage the quality of their projects

Reduce the number of defects in their work

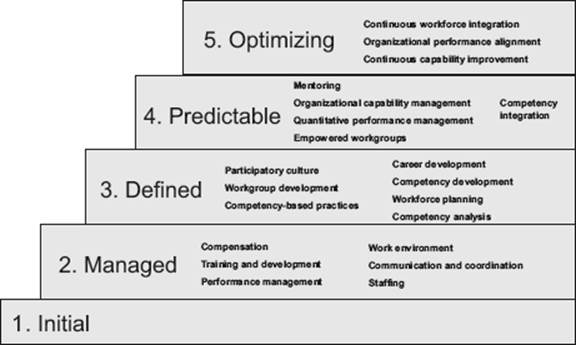

People CMM

The People Capability Maturity Model, or People CMM [Curtis, Hefley, and Miller 2009], has been developed and is being maintained by the Software Engineering Institute (SEI). It aims at helping organizations to develop the maturity of their workforce and address their critical people issues. It is based on current best practices in fields such as human resources, knowledge management, and organizational development. It guides organizations in improving their processes for managing and developing their workforces. It helps organizations to do the following:

Characterize the maturity of their workforce practices

Establish a program for continuous workforce improvement

Set priorities for people-oriented improvement actions

Integrate workforce development with process improvement

Establish a culture of excellence

People CMM provides a road map for implementing workforce practices that continuously improve an organization’s workforce capability. Since an organization cannot implement all of the best workforce practices at once, a step-by-step approach is taken. Each progressive level of the model (see figure 2–11) produces a unique transformation in the culture of an organization. To achieve this, organizations are equipped with practices to attract, develop, organize, and motivate their workforce. Thus People CMM establishes an integrated system of workforce practices that matures through increasing alignment with the organization’s business objectives, performance, and changing needs.

Figure 2–11 People CMM model

The People CMM consists of five maturity levels, each of which is a well-defined evolutionary plateau that institutionalizes new capabilities for developing the organization’s workforce.

Test Process Improvement by Using Tools

Test tools have matured considerably over the past several years, and their scope, diversity, and application have increased enormously. (Refer to Foundations of Software Testing – ISTQB Certification [Black, van Veenendaal, and D. Graham 2012] for an overview of tools available.) The use of such tools can often bring about a considerable improvement in the productivity of the test process. At a time when time to market is more critical than ever, and the latest development methods and tools have shortened the time it takes to develop new systems, testing is clearly on the critical path of software development, and an efficient test process is necessary to ensure that deadlines are met. Faced with these challenges, we need tools to provide the necessary support. If implemented correctly, today’s tools can help raise the efficiency, quality, and control of the test process.

The critical success factor for the use of test tools is the existence of a standard process for testing and the organization of that process. The implementation of one or more test tools should be based on the standard test process and the techniques that support it. Herein lies a major problem, because test tools do not always fit well enough into an organization’s test process. Within a mature test process, tools will provide added value, but in an immature test process environment they can become counterproductive. Automation requires a certain level of repeatability and standardization in the activities carried out, and an immature process does not meet these conditions. Since automation imposes a certain level of standardization, it can also support the implementation of a more mature test process. Improvement and automation should therefore go side by side; to put it briefly, “Improve and Tool.” For example, one of the authors was involved in a project to improve test design by using black-box test design techniques. The project did not run well and there was much resistance, partly due to the effort that was needed to apply these techniques. However, all of this changed when some easy-to-use test design tools [van Veenendaal 2012] were introduced as part of the project. After the testers started using the tools and applying the test design techniques, the effectiveness of testing was improved (in an efficient way).

Test tool: A software product that supports one or more test activities, such as planning and control, specification, building initial files and data, test execution, and test analysis. [Pol, Teunnissen, and van Veenendaal 2002]

Test improvements may be gained by the successful introduction of tools. These may be efficiency improvements, effectiveness improvements, quality improvements, or all of these, as in the following examples:

A large number of tests can be carried out unattended and automatically (e.g., overnight).

Automation of routine and often boring test activities leads to greater reliability of the activities and to a higher degree of work satisfaction in the test team than when they are carried out manually. This results in higher productivity in testing.

Regression testing can, to a large extent, be carried out automatically. Automating the process of regression testing makes it possible to efficiently perform a full regression test so that it can be determined whether the unchanged areas still function according to the specification.

Test tools ensure that the test data is the same for consecutive tests so that there is certainty about the reliability of the initial situation with respect to the data.

Some tools (e.g., static analysis tools) can detect defects that are difficult to detect manually. Using such tools, it is in principle possible to find all instances of these types of faults.

Test and defect management tools align working practices regarding the documentation of test cases and the logging of defects.

Code coverage tools support the implementation of exit criteria at the component level.
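The last example, a coverage-based exit criterion at the component level, can be illustrated with a minimal sketch. It assumes that a coverage tool has already reported statement counts per component; the class name, the function name, the component names, the figures, and the 80% threshold are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ComponentCoverage:
    """Statement coverage figures for one component (hypothetical structure)."""
    name: str
    statements_total: int
    statements_covered: int

    @property
    def percent(self) -> float:
        # A component without statements is treated as fully covered.
        if self.statements_total == 0:
            return 100.0
        return 100.0 * self.statements_covered / self.statements_total


def components_below_threshold(results, threshold_percent):
    """Return the names of components that miss the exit criterion."""
    return [c.name for c in results if c.percent < threshold_percent]


# Hypothetical figures; a real project would take them from its coverage tool.
results = [
    ComponentCoverage("billing", 200, 190),
    ComponentCoverage("invoicing", 120, 84),
    ComponentCoverage("reporting", 50, 40),
]

# Assumed exit criterion: at least 80% statement coverage per component.
failing = components_below_threshold(results, threshold_percent=80.0)
print(failing)
```

A check of this kind could be run at the end of component testing, so that the decision to stop testing rests on measured coverage and not on an estimate.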

Specifically, the process improver can use tools to aid in gathering, analyzing, and reporting data, including performing statistical analysis and process modeling. Note that these are not necessarily testing tools.

Testing tools are implemented with the intention of increasing test efficiency, increasing control over testing, or increasing the quality of deliverables. Implementing testing tools is not trivial, and success depends both on the selected tool addressing the required improvement and on the implementation process itself. A structured selection and implementation process is therefore a critical success factor for achieving the expected improvement to the testing process. In summary, the following activities need to be performed as part of a tool selection process [van Veenendaal 2010]:

Identify and quantify the problem. Is the problem in the area of control, efficiency, or product quality?

Consider alternative solutions. Are tools the only possible solution? For example, look for alternatives in both the test and development process.

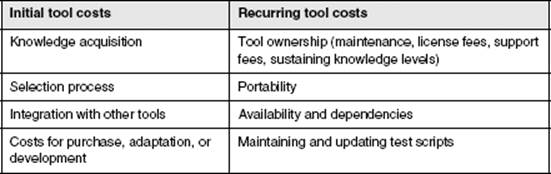

Prepare an overall business case with measurable business objectives. Consider costs (see table 2–1) in the short and long term, expected benefits, and pay-back period.

Table 2–1 Overview of tool cost categories

Identify and document tool requirements; include constraints and prioritize the requirements. In addition to tool features, consider requirements for hardware, software, supplier, integration, and information exchange.

Perform market research and compile a short list. At this point you may also consider developing your own tools, but take care not to become too dependent on the individuals who build them, and make sure the solution can be sustained in the long term.

Organize supplier presentations. Let them use your application and prepare an agenda: What do you expect to see?

Formally evaluate the tool; use it on a real project but allow for additional resources.

Write an evaluation report and make a formal decision on the tool(s).
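The pay-back period mentioned in the business case activity above can be estimated with simple arithmetic. The following minimal sketch assumes a one-time initial cost (e.g., licenses and training), a constant monthly running cost, and a constant monthly benefit; the function name and all figures are hypothetical, and a real business case would use the cost categories and benefits identified for the organization.

```python
import math


def payback_period_months(initial_cost, monthly_running_cost, monthly_benefit):
    """Whole months until the cumulative net benefit covers the initial cost.

    Returns None when the tool never pays back, i.e., when the monthly
    benefit does not exceed the monthly running cost.
    """
    net_monthly = monthly_benefit - monthly_running_cost
    if net_monthly <= 0:
        return None
    # Round up: the investment is recovered during the last counted month.
    return math.ceil(initial_cost / net_monthly)


# Hypothetical figures for illustration only.
months = payback_period_months(
    initial_cost=30_000,         # short-term costs: purchase, training, pilot
    monthly_running_cost=500,    # long-term costs: maintenance, support
    monthly_benefit=3_000,       # e.g., saved manual test execution effort
)
print(months)
```

Stating the assumptions behind each figure makes it easier to revisit the business case later, when the tool’s actual use and benefits are monitored.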

Once the selection has been completed, the tool needs to be deployed in the organization. The following list includes some critical success factors for the deployment of tools:

Treat it as a project. Formal testing may be needed during the pilot (for example, integration testing with other tools and environments).

Roll out the tool incrementally. Remember, if you don’t know what you’re doing, don’t do it on a large scale.

Adapt and improve the testing processes based on the tool.

Provide training and coaching.

Define tool usage guidelines.

Perform a retrospective meeting and be open to lessons learned during the tool’s application. (This may even result in improvements to the tool selection and deployment process, for example, following the causal analysis of problems during the first large-scale applications of the tool.)

Monitor the tool’s use and its benefits.

Be aware that deployment is a change management process (see chapter 8).

The tool selection and deployment may well be carried out by the test improvement team with the support of tool specialists. Once a thorough selection and implementation process has been carried out, an adequate test tool (suite) will support the test improvement process! In today’s test improvement projects, tools should always be considered. After all, we are living and working in an IT society.