MySQL High Availability (2014)

Part I. High Availability and Scalability

Chapter 4. The Binary Log

“Joel?”

Joel jumped, nearly banging his head as he crawled out from under his desk. “I was just rerouting a few cables,” he said by way of an explanation.

Mr. Summerson merely nodded and said in a very authoritative manner, “I need you to look into a problem the marketing people are having with the new server. They need to roll back the data to a certain point.”

“Well, that depends…” Joel started, worried about whether he had snapshots of old states of the system.

“I told them you’d be right down.”

With that, Mr. Summerson turned and walked away. A moment later a woman stopped in front of his door and said, “He’s always like that. Don’t take it personally. Most of us call it a drive-by tasking.” She laughed and introduced herself. “My name’s Amy. I’m one of the developers here.”

Joel walked around his desk and met her at the door. “I’m Joel.”

After a moment of awkward silence Joel said, “I, er, better get on that thing.”

Amy smiled and said, “See you around.”

“Just focus on what you have to do to succeed,” Joel thought as he returned to his desk to search for that MySQL book he bought last week.

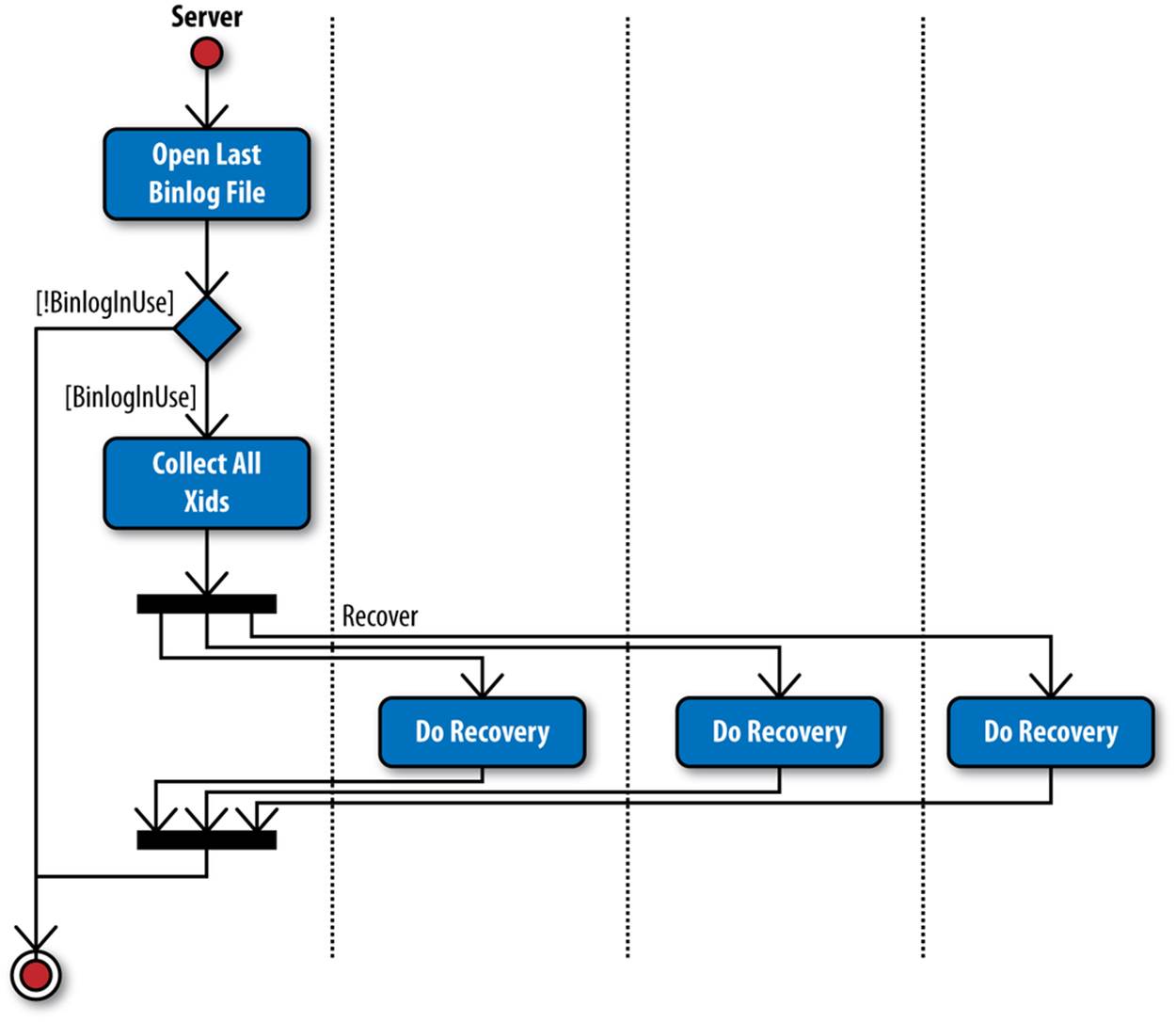

The previous chapter included a very brief introduction to the binary log. In this chapter, we will fill in more details and give a more thorough description of the binary log structure, the replication event format, and how to use the mysqlbinlog tool to investigate and work with the contents of binary logs.

The binary log records changes made to the database. It is usually used for replication, and the binary log then allows the same changes to be made on the slaves as well. Because the binary log normally keeps a record of all changes, you can also use it for auditing purposes to see what happened in the database, and for point-in-time recovery (PITR) by playing back the binary log to a server, repeating changes that were recorded in the binary log. (This is what we did in Reporting, where we played back all changes done between 23:55:00 and 23:59:59.)

The binary log contains information that could change the database. Note that statements that could potentially change the database are also logged, even if they don’t actually do change the database. The most notable cases are those statements that optionally make a change, such as DROP TABLE IF EXISTS or CREATE TABLE IF NOT EXISTS, along with statements such as DELETE and UPDATE that have WHERE conditions that don’t happen to match any rows on the master.

SELECT statements are not normally logged because they do not make any changes to any database. There are, however, exceptions.

The binary log records each transaction in the order that the commit took place on the master. Although transactions may be interleaved on the master, each appears as an uninterrupted sequence in the binary log, the order determined by the time of the commit.

Structure of the Binary Log

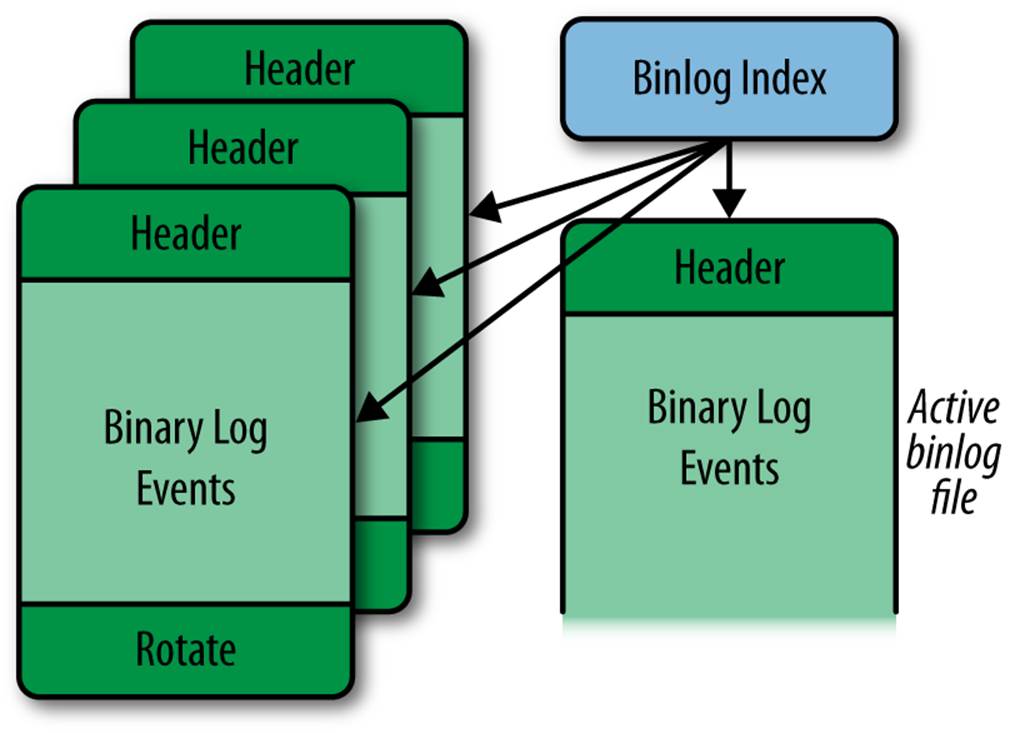

Conceptually, the binary log is a sequence of binary log events (also called binlog events or even just events when there is no risk of confusion). As you saw in Chapter 3, the binary log actually consists of several files, as shown in Figure 4-1, that together form the binary log.

Figure 4-1. The structure of the binary log

The actual events are stored in a series of files called binlog files with names in the form host-bin.000001, accompanied by a binlog index file that is usually named host-bin.index and keeps track of the existing binlog files. The binlog file that is currently being written to by the server is called the active binlog file. If no slaves are lagging, this is also the file that is being read by the slaves. The names of the binlog files and the binlog index file can be controlled using the log-bin and log-bin-index options, which you are familiar with from Configuring the Master. The options are covered in more detail later in this chapter.

The index file keeps track of all the binlog files used by the server so that the server can correctly create new binlog files when necessary, even after server restarts. Each line in the index file contains the name of a binlog file that is part of the binary log. Depending on the MySQL version, it can either be the full name or a name relative to the data directory. Commands that affect the binlog files, such as PURGE BINARY LOGS, RESET MASTER, and FLUSH LOGS, also affect the index file by adding or removing lines to match the files that were added or removed by the command.

As shown in Figure 4-1, each binlog file is made up of binlog events, with the Format_description event serving as the file’s header and the Rotate event as its footer. Note that a binlog file might not end with a rotate event if the server was interrupted or crashed.

The Format_description event contains information about the server that wrote the binlog file as well as some critical information about the file’s status. If the server is stopped and restarted, it creates a new binlog file and writes a new Format_description event to it. This is necessary because changes can potentially occur between bringing a server down and bringing it up again. For example, the server could be upgraded, in which case a new Format_description event would have to be written.

When the server has finished writing a binlog file, a Rotate event is added to end the file. The event points to the next binlog file in sequence by giving the name of the file as well as the position to start reading from.

The Format_description event and the Rotate event will be described in detail in the next section.

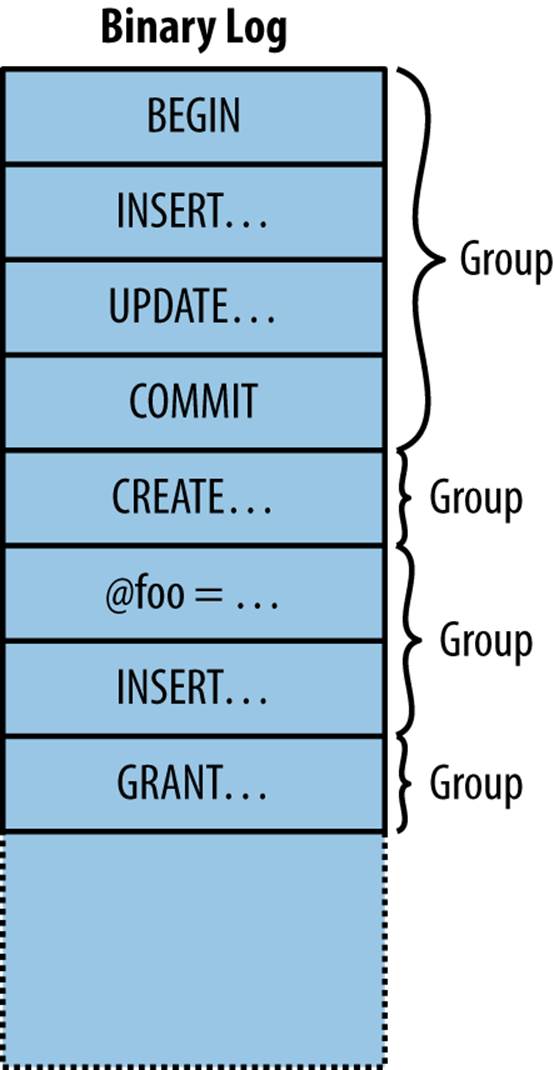

With the exception of control events (e.g., Format_description, Rotate, and Incident), events of a binlog file are grouped into units called groups, as seen in Figure 4-2. In transactional storage engines, each group is roughly equivalent to a transaction, but for nontransactional storage engines or statements that cannot be part of a transaction, such as CREATE or ALTER, each statement is a group by itself.[4]

In short, each group of events in the binlog file contains either a single statement not in a transaction or a transaction consisting of several statements.

Figure 4-2. A single binlog file with groups of events

Each group is executed entirely or not at all (with the exception of a few well-defined cases). If, for some reason, the slave stops in the middle of a group, replication will start from the beginning of the group and not from the last statement executed. Chapter 8 describes in detail how the slaveexecutes events.

Binlog Event Structure

MySQL 5.0 introduced a new binlog format: binlog format 4. The preceding formats were not easy to extend with additional fields if the need were to arise, so binlog format 4 was designed specifically to be extensible. This is still the event format used in every server version since 5.0, even though each version of the server has extended the binlog format with new events and some events with new fields. Binlog format 4 is the event format described in this chapter.

Each binlog event consists of four parts:

Common header

The common header is—as the name suggests—common to all events in the binlog file.

The common header contains basic information about the event, the most important fields being the event type and the size of the event.

Post header

The post header is specific to each event type; in other words, each event type stores different information in this field. But the size of this header, just as with the common header, is the same throughout a given binlog file. The size of each event type is given by theFormat_description event.

Event body

After the headers comes the event body, which is the variable-sized part of the event. The size and the end position is listed in the common header for the event. The event body stores the main data of the event, which is different for different event types. For the Query event, for instance, the body stores the query, and for the User_var event, the body stores the name and value of a user variable that was just set by a statement.

Checksum

Starting with MySQL 5.6, there is a checksum at the end of the event, if the server is configured to generate one. The checksum is a 32-bit integer that is used to check that the event has not been corrupted since it was written.

A complete listing of the formats of all events is beyond the scope of this book, but because the Format_description and Rotate events are critical to how the other events are interpreted, we will briefly cover them here. If you are interested in the details of the events, you can find them in the MySQL Internals Manual.

As already noted, the Format_description event starts every binlog file and contains common information about the events in the file. The result is that the Format_description event can be different between different files; this typically occurs when a server is upgraded and restarted. The Format_description_log_event contains the following fields:

Binlog file format version

This is the version of the binlog file, which should not be confused with the version of the server. MySQL versions 3.23, 4.0, and 4.1 use version 3 of the binary log, while MySQL versions 5.0 and later use version 4 of the binary log.

The binlog file format version changes when developers make significant changes in the overall structure of the file or the events. In MySQL version 5.0, the start event for a binlog file was changed to use a different format and the common headers for all events were also changed, which prompted the change in the binlog file format version.

Server version

This is a version string denoting the server that created the file. This includes the version of the server as well as additional information if special builds are made. The format is normally the three-position version number, followed by a hyphen and any additional build options. For example, “5.5.10-debug-log” means debug build version 5.5.10 of the server.

Common header length

This field stores the length of the common header. Because it’s here in the Format_description, this length can be different for different binlog files. This holds for all events except the Format_description and Rotate events, which cannot vary.

Post-header lengths

The post-header length for each event is fixed within a binlog file, and this field stores an array of the post-header length for each event that can occur in the binlog file. Because the number of event types can vary between servers, the number of different event types that the server can produce is stored before this field.

NOTE

The Rotate and Format_description log events have a fixed length because the server needs them before it knows the size of the common header length. When connecting to the server, it first sends a Format_description event. Because the length of the common header is stored in the Format_description event, there is no way for the server to know what the size of the common header is for the Rotate event unless it has a fixed size. So for these two events, the size of the common header is fixed and will never change between server versions, even if the size of the common header changes for other events.

Because both the size of the common header and the size of the post header for each event type are given in the Format_description event, extending the format with new events or even increasing the size of the post headers by adding new fields is supported by this format and will therefore not require a change in the binlog file format.

With each extension, particular care is taken to ensure that the extension does not affect interpretation of events that were already in earlier versions. For example, the common header can be extended with an additional field to indicate that the event is compressed and the type of compression used, but if this field is missing—which would be the case if a slave is reading events from an old master—the server should still be able to fall back on its default behavior.

Event Checksums

Because hardware can fail and software can contain bugs, it is necessary to have some way to ensure that data corrupted by such events is not applied on the slave. Random failures can occur anywhere, and if they occur inside a statement, they often lead to a syntax error causing the slave to stop. However, relying on this to prevent corrupt events from being replicated is a poor way to ensure integrity of events in the binary log. This policy would not catch many types of corruptions, such as in timestamps, nor would it work for row-based events where the data is encoded in binary form and random corruptions are more likely to lead to incorrect data.

To ensure the integrity of each event, MySQL 5.6 introduced replication event checksums. When events are written, a checksum is added, and when the events are read, the checksum is computed for the event and compared against the checksum written with the event. If the checksums do not match, execution can be aborted before any attempt is made to apply the event on the slave. The computation of checksums can potentially impact performance, but benchmarking has demonstrated no noticeable performance degradation from the addition and checking of checksums, so they are enabled by default in MySQL 5.6. They can, however, be turned off if necessary.

In MySQL 5.6, checksums can be generated when changes are written either to the binary log or to the relay log, and verified when reading events back from one of these logs.

Replication event checksums are controlled using three options:

binlog-checksum=type

This option enables checksums and tells the server what checksum computation to use. Currently there are only two choices: CRC32 uses ISO-3309 CRC-32 checksums, whereas NONE turns off checksumming. The default is CRC32, meaning that checksums are generated.

master-verify-checksum=boolean

This option controls whether the master verifies the checksum when reading it from the binary log. This means that the event checksum is verified when it is read from the binary log by the dump thread (see Replication Architecture Basics), but before it is sent out, and also when usingSHOW BINLOG EVENTS. If any of the events shown is corrupt, the command will throw an error. This option is off by default.

slave-sql-verify-checksum=boolean

This option controls whether the slave verifies the event checksum after reading it from the relay log and before applying it to the slave database. This option is off by default.

If you get a corrupt binary log or relay log, mysqlbinlog can be used to find the bad checksum using the --verify-binlog-checksum option. This option causes mysqlbinlog to verify the checksum of each event read and stop when a corrupt event is found, which the following exampledemonstrates:

$ client/mysqlbinlog --verify-binlog-checksum master-bin.000001

.

.

.

# at 261

#110406 8:35:28 server id 1 end_log_pos 333 CRC32 0xed927ef2...

SET TIMESTAMP=1302071728/*!*/;

BEGIN

/*!*/;

# at 333

#110406 8:35:28 server id 1 end_log_pos 365 CRC32 0x01ed254d Intvar

SET INSERT_ID=1/*!*/;

ERROR: Error in Log_event::read_log_event(): 'Event crc check failed!...

DELIMITER ;

# End of log file

ROLLBACK /* added by mysqlbinlog */;

/*!50003 SET COMPLETION_TYPE=@OLD_COMPLETION_TYPE*/;

Logging Statements

Starting with MySQL 5.1, row-based replication is also available, which will be covered in Row-Based Replication.

In statement-based replication, the actual executed statement is written to the binary log together with some execution information, and the statement is re-executed on the slave. Because not all events can be logged as statements, there are some exceptions that you should be aware of. This section will describe the process of logging individual statements as well as the important caveats.

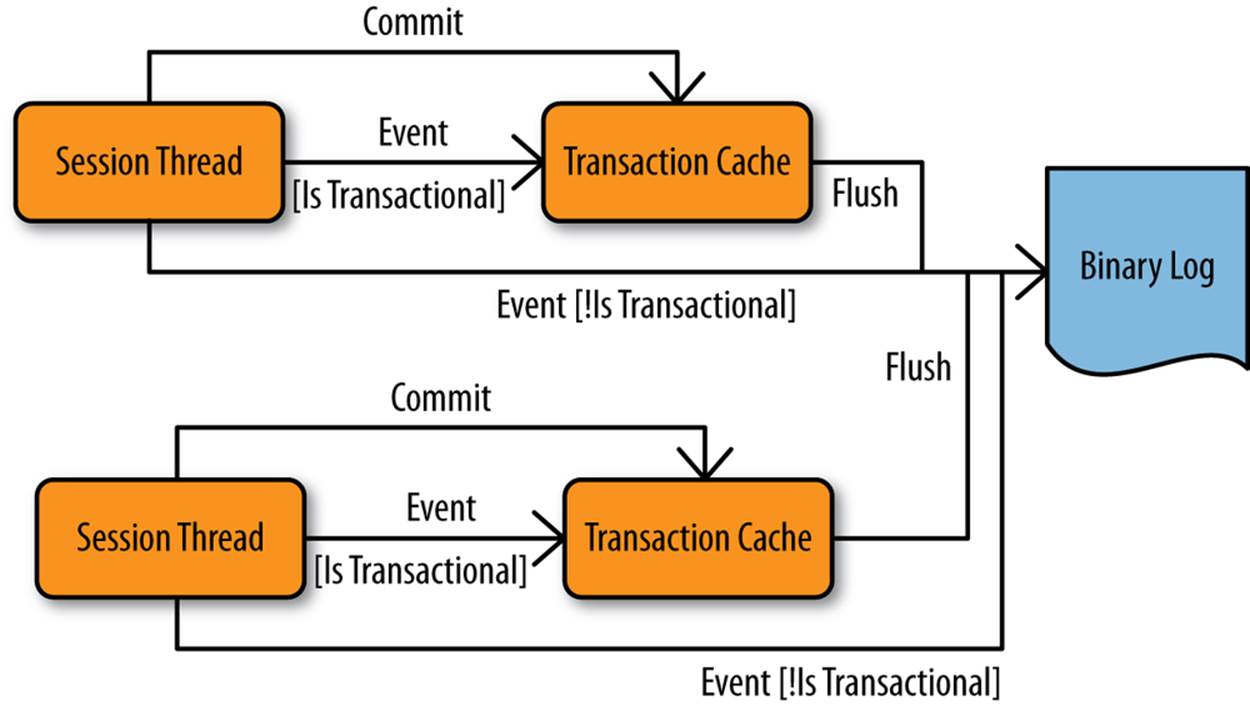

Because the binary log is a common resource—all threads write to it—it is critical to prevent two threads from updating the binary log at the same time. To handle this, a lock for the binary log—called the LOCK_log mutex—is acquired just before each group is written to the binary log and released just after the group has been written. Because all session threads for the server can potentially log transactions to the binary log, it is quite common for several session threads to block on this lock.

Logging Data Manipulation Language Statements

Data manipulation are usuallylanguage (DML) statements are usually DELETE, INSERT, and UPDATE statements. To support logging changes in a consistent manner, MySQL writes the binary log while transaction-level locks are held, and releases them after the binary log has been written.

To ensure that the binary log is updated consistently with the tables that the statement modifies, each statement is logged to the binary log during statement commit, just before the table locks are released. If the logging were not made as part of the statement, another statement could be “injected” between the changes that the statement introduces to the database and the logging of the statement to the binary log. This would mean that the statements would be logged in a different order than the order in which they took effect in the database, which could lead to inconsistencies between master and slave. For instance, an UPDATE statement with a WHERE clause could update different rows on the slave because the values in those rows might be different if the statement order changed.

Logging Data Definition Language Statements

Data definition language (DDL) statements affect a schema, such as CREATE TABLE and ALTER TABLE statements. These create or change objects in the filesystem—for example, table definitions are stored in .frm files and databases are represented as filesystem directories—so the server keeps information about these available in internal data structures. To protect the update of the internal data structure, it is necessary to acquire an internal lock (called LOCK_open) before altering the table definition.

Because a single lock is used to protect these data structures, the creation, alteration, and destruction of database objects can be a considerable source of performance problems. This includes the creation and destruction of temporary tables, which is quite common as a technique to create an intermediate result set to perform computations on.

If you are creating and destroying a lot of temporary tables, it is often possible to boost performance by reducing the creation (and subsequent destruction) of temporary tables.

Logging Queries

For statement-based replication, the most common binlog event is the Query event, which is used to write a statement executed on the master to the binary log. In addition to the actual statement executed, the event contains some additional information necessary to execute the statement.

Recall that the binary log can be used for many purposes and contains statements in a potentially different order from that in which they were executed on the master. In some cases, part of the binary log may be played back to a server to perform PITR, and in some cases, replication may start in the middle of a sequence of events because a backup has been restored on a slave before starting replication.

In all these cases, the events are executing in different contexts (i.e., there is information that is implicit when the server executes the statement but that has to be known to execute the statement correctly). Examples include:

Current database

If the statement refers to a table, function, or procedure without qualifying it with the database, the current database is implicit for the statement.

Value of user-defined variable

If a statement refers to a user-defined variable, the value of the variable is implicit for the statement.

Seed for the RAND function

The RAND function is based on a pseudorandom number function, meaning that it can generate a sequence of numbers that are reproducible but appear random in the sense that they are evenly distributed. The function is not really random, but starts from a seed number and applies a pseudorandom function to generate a deterministic sequence of numbers. This means that given the same seed, the RAND function will always return the same number. However, this makes the seed implicit for the statement.

The current time

Obviously, the time the statement started executing is implicit. Having a correct time is important when calling functions that are dependent on the current time—such as NOW and UNIX_TIMESTAMP—because otherwise they will return different results if there is a delay between the statement execution on the master and on the slave.

Value used when inserting into an AUTO_INCREMENT column

If a statement inserts a row into a table with a column defined with the AUTO_INCREMENT attribute, the value used for that row is implicit for the statement because it depends on the rows inserted before it.

Value returned by a call to LAST_INSERT_ID

If the LAST_INSERT_ID function is used in a statement, it depends on the value inserted by a previous statement, which makes this value implicit for the statement.

Thread ID

For some statements, the thread ID is implicit. For example, if the statement refers to a temporary table or uses the CURRENT_ID function, the thread ID is implicit for the statement.

Because the context for executing the statements cannot be known when they’re replayed—either on a slave or on the master after a crash and restart—it is necessary to make the implicit information explicit by adding it to the binary log. This is done in slightly different ways for different kinds of information.

In addition to the previous list, some information is implicit to the execution of triggers and stored routines, but we will cover that separately in Triggers, Events, and Stored Routines.

Let’s consider each of the cases of implicit information individually, demonstrate the problem with each one, and examine how the server handles it.

Current database

The log records the current database by adding it to a special field of the Query event. This field also exists for the events used to handle the LOAD DATA INFILE statement, discussed in LOAD DATA INFILE Statements, so the description here applies to that statement as well. The current database also plays an important role in filtering on the database and is described later in this chapter.

Current time

Five functions use the current time to compute their values: NOW, CURDATE, CURTIME, UNIX_TIMESTAMP, and SYSDATE. The first four functions return a value based on the time when the statement started to execute. In contrast, SYSDATE returns the value when the function is executed. The difference can best be demonstrated by comparing the execution of NOW and SYSDATE with an intermediate sleep:

mysql> SELECT SYSDATE(), NOW(), SLEEP(2), SYSDATE(), NOW()\G

*************************** 1. row ***************************

SYSDATE(): 2013-06-08 23:24:08

NOW(): 2013-06-08 23:24:08

SLEEP(2): 0

SYSDATE(): 2013-06-08 23:24:10

NOW(): 2013-06-08 23:24:08

1 row in set (2.00 sec)

Both functions are evaluated when they are encountered, but NOW returns the time that the statement started executing, whereas SYSDATE returns the time when the function was executed.

To handle these time functions correctly, the timestamp indicating when the event started executing is stored in the event. This value is then copied from the event to the slave execution thread and used as if it were the time the event started executing when computing the value of the time functions.

Because SYSDATE gets the time from the operating system directly, it is not safe for statement-based replication and will return different values on the master and slave when executed. So unless you really want to have the actual time inserted into your tables, it is prudent to stay away from this function.

Context events

Some implicit information is associated with statements that meet certain conditions:

§ If the statement contains a reference to a user-defined variable (as in Example 4-1), it is necessary to add the value of the user-defined variable to the binary log.

§ If the statement contains a call to the RAND function, it is necessary to add the pseudorandom seed to the binary log.

§ If the statement contains a call to the LAST_INSERT_ID function, it is necessary to add the last inserted ID to the binary log.

§ If the statement performs an insert into a table with an AUTO_INCREMENT column, it is necessary to add the value that was used for the column (or columns) to the binary log.

Example 4-1. Statements with user-defined variables

SET @value = 45;

INSERT INTO t1 VALUES (@value);

In each of these cases, one or more context events are added to the binary log before the event containing the query is written. Because there can be several context events preceding a Query event, the binary log can handle multiple user-defined variables together with the RAND function, or (almost) any combination of the previously listed conditions. The binary log stores the necessary context information through the following events:

User_var

Each such event records the name and value of a single user-defined variable.

Rand

Records the random number seed used by the RAND function. The seed is fetched internally from the session’s state.

Intvar

If the statement is inserting into an autoincrement column, this event records the value of the internal autoincrement counter for the table before the statement starts.

If the statement contains a call to LAST_INSERT_ID, this event records the value that this function returned in the statement.

Example 4-2 shows some statements that generate all of the context events and how the events appear when displayed using SHOW BINLOG EVENTS. Note that there can be several context events before each statement.

Example 4-2. Query events with context events

master> SET @foo = 12;

Query OK, 0 rows affected (0.00 sec)

master> SET @bar = 'Smoothnoodlemaps';

Query OK, 0 rows affected (0.00 sec)

master> INSERT INTO t1(b,c) VALUES

-> (@foo,@bar), (RAND(), 'random');

Query OK, 2 rows affected (0.00 sec)

Records: 2 Duplicates: 0 Warnings: 0

master> INSERT INTO t1(b) VALUES (LAST_INSERT_ID());

Query OK, 1 row affected (0.00 sec)

master> SHOW BINLOG EVENTS FROM 238\G

*************************** 1. row ***************************

Log_name: mysqld1-bin.000001

Pos: 238

Event_type: Query

Server_id: 1

End_log_pos: 306

Info: BEGIN

*************************** 2. row ***************************

Log_name: mysqld1-bin.000001

Pos: 306

Event_type: Intvar

Server_id: 1

End_log_pos: 334

Info: INSERT_ID=1

*************************** 3. row ***************************

Log_name: mysqld1-bin.000001

Pos: 334

Event_type: RAND

Server_id: 1

End_log_pos: 369

Info: rand_seed1=952494611,rand_seed2=949641547

*************************** 4. row ***************************

Log_name: mysqld1-bin.000001

Pos: 369

Event_type: User var

Server_id: 1

End_log_pos: 413

Info: @`foo`=12

*************************** 5. row ***************************

Log_name: mysqld1-bin.000001

Pos: 413

Event_type: User var

Server_id: 1

End_log_pos: 465

Info: @`bar`=_utf8 0x536D6F6F74686E6F6F6...

*************************** 6. row ***************************

Log_name: mysqld1-bin.000001

Pos: 465

Event_type: Query

Server_id: 1

End_log_pos: 586

Info: use `test`; INSERT INTO t1(b,c) VALUES (@foo,@bar)...

*************************** 7. row ***************************

Log_name: mysqld1-bin.000001

Pos: 586

Event_type: Xid

Server_id: 1

End_log_pos: 613

Info: COMMIT /* xid=44 */

*************************** 8. row ***************************

Log_name: mysqld1-bin.000001

Pos: 613

Event_type: Query

Server_id: 1

End_log_pos: 681

Info: BEGIN

*************************** 9. row ***************************

Log_name: mysqld1-bin.000001

Pos: 681

Event_type: Intvar

Server_id: 1

End_log_pos: 709

Info: LAST_INSERT_ID=1

*************************** 10. row ***************************

Log_name: mysqld1-bin.000001

Pos: 709

Event_type: Intvar

Server_id: 1

End_log_pos: 737

Info: INSERT_ID=3

*************************** 11. row ***************************

Log_name: mysqld1-bin.000001

Pos: 737

Event_type: Query

Server_id: 1

End_log_pos: 843

Info: use `test`; INSERT INTO t1(b) VALUES (LAST_INSERT_ID())

*************************** 12. row ***************************

Log_name: mysqld1-bin.000001

Pos: 843

Event_type: Xid

Server_id: 1

End_log_pos: 870

Info: COMMIT /* xid=45 */

12 rows in set (0.00 sec)

Thread ID

The last implicit piece of information that the binary log sometimes needs is the thread ID of the MySQL session handling the statement. The thread ID is necessary when a function is dependent on the thread ID—such as when it refers to CONNECTION_ID—but most importantly for handling temporary tables.

Temporary tables are specific to each thread, meaning that two temporary tables with the same name are allowed to coexist, provided they are defined in different sessions. Temporary tables can provide an effective means to improve the performance of certain operations, but they require special handling to work with the binary log.

Internally in the server, temporary tables are handled by creating obscure names for storing the table definitions. The names are based on the process ID of the server, the thread ID that creates the table, and a thread-specific counter to distinguish between different instances of the table from the same thread. This naming scheme allows tables from different threads to be distinguished from each other, but each statement can access its proper table only if the thread ID is stored in the binary log.

Similar to how the current database is handled in the binary log, the thread ID is stored as a separate field in every Query event and can therefore be used to compute thread-specific data and handle temporary tables correctly.

When writing the Query event, the thread ID to store in the event is read from the pseudo_thread_id server variable. This means that it can be set before executing a statement, but only if you have SUPER privileges. This server variable is intended to be used by mysqlbinlog to emit statements correctly and should not normally be used.

For a statement that contains a call to the CONNECTION_ID function or that uses or creates a temporary table, the Query event is marked as thread-specific in the binary log. Because the thread ID is always present in the Query event, this flag is not necessary but is mainly used to allowmysqlbinlog to avoid printing unnecessary assignments to the pseudo_thread_id variable.

LOAD DATA INFILE Statements

The LOAD DATA INFILE statement makes it easy to fill tables quickly from a file. Unfortunately, it is dependent on a certain kind of context that cannot be covered by the context events we have discussed: files that need to be read from the filesystem.

To handle LOAD DATA INFILE, the MySQL server uses a special set of events to handle the transfer of the file using the binary log. In addition to solving the problem for LOAD DATA INFILE, this makes the statement a very convenient tool for transferring large amounts of data from the master to the slave, as you will see soon. To correctly transfer and execute a LOAD DATA INFILE statement, several new events are introduced into the binary log:

Begin_load_query

This event signals the start of data transfer in the file.

Append_block

A sequence of one or more of these events follows the Begin_load_query event to contain the rest of the file’s data, if the file was larger than the maximum allowed packet size on the connection.

Execute_load_query

This event is a specialized variant of the Query event that contains the LOAD DATA INFILE statement executed on the master.

Even though the statement contained in this event contains the name of the file that was used on the master, this file will not be sought by the slave. Instead, the contents provided by the preceding Begin_load_query and Append_block events will be used.

For each LOAD DATA INFILE statement executed on the master, the file to read is mapped to an internal file-backed buffer, which is used in the following processing. In addition, a unique file ID is assigned to the execution of the statement and is used to refer to the file read by the statement.

While the statement is executing, the file contents are written to the binary log as a sequence of events starting with a Begin_load_query event—which indicates the beginning of a new file—followed by zero or more Append_block events. Each event written to the binary log is no larger than the maximum allowed packet size, as specified by the max-allowed-packet option.

After the entire file is read and applied to the table, the execution of the statement terminates by writing the Execute_load_query event to the binary log. This event contains the statement executed together with the file ID assigned to the execution of the statement. Note that the statement is not the original statement as the user wrote it, but rather a recreated version of the statement.

NOTE

If you are reading an old binary log, you might instead find Load_log_event, Execute_log_event, and Create_file_log_event. These were the events used to replicate LOAD DATA INFILE prior to MySQL version 5.0.3 and were replaced by the implementation just described.

Example 4-3 shows the events written to the binary log by a successful execution of a LOAD DATA INFILE statement. In the Info field, you can see the assigned file ID—1, in this case—and see that it is used for all the events that are part of the execution of the statement. You can also see that the file foo.dat used by the statement contains more than the maximum allowed packet size of 16384, so it is split into three events.

Example 4-3. Successful execution of LOAD DATA INFILE

master> SHOW BINLOG EVENTS IN 'master-bin.000042' FROM 269\G

*************************** 1. row ***************************

Log_name: master-bin.000042

Pos: 269

Event_type: Begin_load_query

Server_id: 1

End_log_pos: 16676

Info: ;file_id=1;block_len=16384

*************************** 2. row ***************************

Log_name: master-bin.000042

Pos: 16676

Event_type: Append_block

Server_id: 1

End_log_pos: 33083

Info: ;file_id=1;block_len=16384

*************************** 3. row ***************************

Log_name: master-bin.000042

Pos: 33083

Event_type: Append_block

Server_id: 1

End_log_pos: 33633

Info: ;file_id=1;block_len=527

*************************** 4. row ***************************

Log_name: master-bin.000042

Pos: 33633

Event_type: Execute_load_query

Server_id: 1

End_log_pos: 33756

Info: use `test`; LOAD DATA INFILE 'foo.dat' INTO...;file_id=1

4 rows in set (0.00 sec)

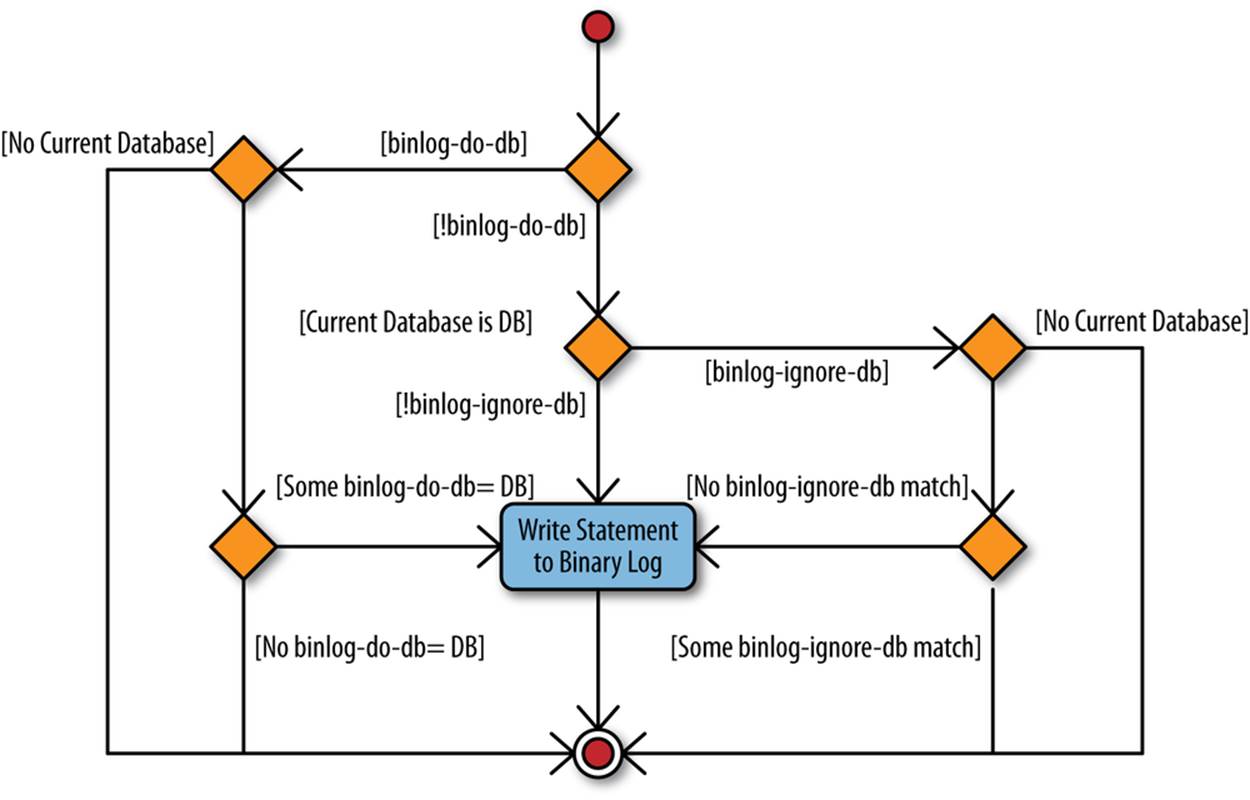

Binary Log Filters

It is possible to filter out statements from the binary log using two options: binlog-do-db and binlog-ignore-db (which we will call binlog-*-db, collectively). The binlog-do-db option is used when you want to filter only statements belonging to a certain database, and binlog-ignore-db is used when you want to ignore a certain database but replicate all other databases.

These options can be given multiple times, so to filter out both the database one_db and the database two_db, you must give both options in the my.cnf file. For example:

[mysqld]

binlog-ignore-db=one_db

binlog-ignore-db=two_db

The way MySQL filters events can be quite a surprise to unfamiliar users, so we’ll explain how filtering works and make some recommendations on how to avoid some of the major headaches.

Figure 4-3 shows how MySQL determines whether the statement is filtered. The filtering is done on a statement level—either the entire statement is filtered out or the entire statement is written to the binary log—and the binlog-*-db options use the current database to decide whether the statement should be filtered, not the database of the tables affected by the statement.

To help you understand the behavior, consider the statements in Example 4-4. Each line uses bad as the current database and changes tables in different databases.

Figure 4-3. Logic for binlog-*-db filters

Example 4-4. Statements using different databases

USE bad; INSERT INTO t1 VALUES (1),(2);![]()

USE bad; INSERT INTO good.t2 VALUES (1),(2);![]()

USE bad; UPDATE good.t1, ugly.t2 SET a = b;![]()

![]()

This line changes a table in the database named bad since it does not qualify the table name with a database name.

![]()

This line changes a table in a different database than the current database.

![]()

This line changes two tables in two different databases, neither of which is the current database.

Now, given these statements, consider what happens if the bad database is filtered using binlog-ignore-db=bad. None of the three statements in Example 4-4 will be written to the binary log, even though the second and third statements change tables on the good and ugly database and make no reference to the bad database. This might seem strange at first—why not filter the statement based on the database of the table changed? But consider what would happen with the third statement if the ugly database was filtered instead of the bad database. Now one database in theUPDATE is filtered out and the other isn’t. This puts the server in a catch-22 situation, so the problem is solved by just filtering on the current database, and this rule is used for all statements (with a few exceptions).

NOTE

To avoid mistakes when executing statements that can potentially be filtered out, make it a habit not to write statements so they qualify table, function, or procedure names with the database name. Instead, whenever you want to access a table in a different database, issue a USE statement to make that database the current database. In other words, instead of writing:

INSERT INTO other.book VALUES ('MySQL', 'Paul DuBois');

write:

USE other; INSERT INTO book VALUES ('MySQL', 'Paul DuBois');

Using this practice, it is easy to see by inspection that the statement does not update multiple databases simply because no tables should be qualified with a database name.

This behavior does not apply when row-based replication is used. The filtering employed in row-based replication will be discussed in Filtering in Row-Based Replication, but since row-based replication can work with each individual row change, it is able to filter on the actual table that the row is targeted for and does not use the current database.

So, what happens when both binlog-do-db and binlog-ignore-db are used at the same time? For example, consider a configuration file containing the following two rules:

[mysqld]

binlog-do-db=good

binlog-ignore-db=bad

In this case, will the following statement be filtered out or not?

USE ugly; INSERT INTO t1 VALUES (1);

Following the diagram in Figure 4-3, you can see that if there is at least a binlog-do-db rule, all binlog-ignore-db rules are ignored completely, and since only the good database is included, the previous statement will be filtered out.

WARNING

Because of the way that the binlog-*-db rules are evaluated, it is pointless to have both binlog-do-db and binlog-ignore-db rules at the same time. Since the binary log can be used for recovery as well as replication, the recommendation is not to use the binlog-*-db options and instead filter out the event on the slave by using replicate-* options (these are described in Filtering and skipping events). Using the binlog-*-db option would filter out statements from the binary log and you will not be able to restore the database from the binary log in the event of a crash.

Triggers, Events, and Stored Routines

A few other constructions that are treated specially when logged are stored programs—that is, triggers, events, and stored routines (the last is a collective name for stored procedures and stored functions). Their treatment with respect to the binary log contains some elements in common, so they will be covered together in this section. The explanation distinguishes statements of two types: statements that define, destroy, or alter stored programs and statements that invoke them.

Statements that define or destroy stored programs

The following discussion shows triggers in the examples, but the same principles apply to definition of events and stored routines. To understand why the server needs to handle these features specially when writing them to the binary log, consider the code in Example 4-5.

In the example, a table named employee keeps information about all employees of an imagined system and a table named log keeps a log of interesting information. Note that the log table has a timestamp column that notes the time of a change and that the name column in the employeetable is the primary key for the table. There is also a status column to tell whether the addition succeeded or failed.

To track information about employee information changes—for example, for auditing purposes—three triggers are created so that whenever an employee is added, removed, or changed, a log entry of the change is added to a log table.

Notice that the triggers are after triggers, which means that entries are added only if the executed statement is successful. Failed statements will not be logged. We will later extend the example so that unsuccessful attempts are also logged.

Example 4-5. Definitions of tables and triggers for employee administration

CREATE TABLE employee (

name CHAR(64) NOT NULL,

email CHAR(64),

password CHAR(64),

PRIMARY KEY (name)

);

CREATE TABLE log (

id INT AUTO_INCREMENT,

email CHAR(64),

status CHAR(10),

message TEXT,

ts TIMESTAMP,

PRIMARY KEY (id)

);

CREATE TRIGGER tr_employee_insert_after AFTER INSERT ON employee

FOR EACH ROW

INSERT INTO log(email, status, message)

VALUES (NEW.email, 'OK', CONCAT('Adding employee ', NEW.name));

CREATE TRIGGER tr_employee_delete_after AFTER DELETE ON employee

FOR EACH ROW

INSERT INTO log(email, status, message)

VALUES (OLD.email, 'OK', 'Removing employee');

delimiter $$

CREATE TRIGGER tr_employee_update_after AFTER UPDATE ON employee

FOR EACH ROW

BEGIN

IF OLD.name != NEW.name THEN

INSERT INTO log(email, status, message)

VALUES (OLD.email, 'OK',

CONCAT('Name change from ', OLD.name, ' to ', NEW.name));

END IF;

IF OLD.password != NEW.password THEN

INSERT INTO log(email, status, message)

VALUES (OLD.email, 'OK', 'Password change');

END IF;

IF OLD.email != NEW.email THEN

INSERT INTO log(email, status, message)

VALUES (OLD.email, 'OK', CONCAT('E-mail change to ', NEW.email));

END IF;

END $$

delimiter ;

With these trigger definitions, it is now possible to add and remove employees as shown in Example 4-6. Here an employee is added, modified, and removed, and as you can see, each of the operations is logged to the log table.

The operations of adding, removing, and modifying employees may be done by a user who has access to the employee table, but what about access to the log table? In this case, a user who can manipulate the employee table should not be able to make changes to the log table. There are many reasons for this, but they all boil down to trusting the contents of the log table for purposes of maintenance, auditing, disclosure to legal authorities, and so on. So the DBA may choose to make access to the employee table available to many users while keeping access to the log table very restricted.

Example 4-6. Adding, removing, and modifying users

master> SET @pass = PASSWORD('xyzzy');

Query OK, 0 rows affected (0.00 sec)

master> INSERT INTO employee VALUES ('mats', 'mats@example.com', @pass);

Query OK, 1 row affected (0.00 sec)

master> UPDATE employee SET name = 'matz'

-> WHERE email = 'mats@example.com';

Query OK, 1 row affected (0.00 sec)

Rows matched: 1 Changed: 1 Warnings: 0

master> SET @pass = PASSWORD('foobar');

Query OK, 0 rows affected (0.00 sec)

master> UPDATE employee SET password = @pass

-> WHERE email = 'mats@example.com';

Query OK, 1 row affected (0.00 sec)

Rows matched: 1 Changed: 1 Warnings: 0

master> DELETE FROM employee WHERE email = 'mats@example.com';

Query OK, 1 row affected (0.00 sec)

master> SELECT * FROM log;

+----+------------------+-------------------------------+---------------------+

| id | email | message | ts |

+----+------------------+-------------------------------+---------------------+

| 1 | mats@example.com | Adding employee mats | 2012-11-14 18:56:08 |

| 2 | mats@example.com | Name change from mats to matz | 2012-11-14 18:56:11 |

| 3 | mats@example.com | Password change | 2012-11-14 18:56:41 |

| 4 | mats@example.com | Removing employee | 2012-11-14 18:57:11 |

+----+------------------+-------------------------------+---------------------+

4 rows in set (0.00 sec)

The INSERT, UPDATE, and DELETE in the example can generate a warning that it is unsafe to log when statement mode is used. This is because it invokes a trigger that inserts into an autoincrement column. In general, such warnings should be investigated.

To make sure the triggers can execute successfully against a highly protected table, they are executed as the user who defined the trigger, not as the user who changed the contents of the employee table. So the CREATE TRIGGER statements in Example 4-5 are executed by the DBA, who has privileges to make additions to the log table, whereas the statements altering employee information in Example 4-6 are executed through a user management account that only has privileges to change the employee table.

When the statements in Example 4-6 are executed, the employee management account is used for updating entries in the employee table, but the DBA privileges are used to make additions to the log table. The employee management account cannot be used to add or remove entries from thelog table.

As an aside, Example 4-6 assigns passwords to a user variable before using them in the statement. This is done to avoid sending sensitive data in plain text to another server.

SECURITY AND THE BINARY LOG

In general, a user with REPLICATION SLAVE privileges has privileges to read everything that occurs on the master and should therefore be secured so that the account cannot be compromised. Details are beyond the scope of this book, but here are some examples of precautions you can take:

§ Make it impossible to log in to the account from outside the firewall.

§ Track all login attempts for accounts with REPLICATION SLAVE privileges, and place the log on a separate secure server.

§ Encrypt the connection between the master and the slave using, for example, MySQL’s built-in Secure Sockets Layer (SSL) support.

Even if the account has been secured, there is information that does not have to be in the binary log, so it makes sense not to store it there in the first place.

One of the more common types of sensitive information is passwords. Events containing passwords can be written to the binary log when executing statements that change tables on the server and that include the password required for access to the tables.

A typical example is:

UPDATE employee SET pass = PASSWORD('foobar')

WHERE email = 'chuck@example.com';

If replication is in place, it is better to rewrite this statement without the password. This is done by computing and storing the hashed password into a user-defined variable and then using that in the expression:

SET @password = PASSWORD('foobar');

UPDATE employee SET pass = @password WHERE email = 'chuck@example.com';

Since the SET statement is not replicated, the original password will not be stored in the binary log, only in the memory of the server while executing the statement.

As long as the password hash, rather than the plain-text password, is stored in the table, this technique works. If the raw password is stored directly in the table, there is no way to prevent the password from ending up in the binary log. But storing hashes for passwords is a standard good practice in any case, to prevent someone who gets his hands on the raw data from learning the passwords.

Encrypting the connection between the master and the slave offers some protection, but if the binary log itself is compromised, encrypting the connection doesn’t help.

If you recall the earlier discussion about implicit information, you may already have noticed that both the user executing a line of code and the user who defines a trigger are implicit. As you will see in Chapter 8, neither the definer nor the invoker of the trigger is critical to executing the trigger on the slave, and the user information is effectively ignored when the slave executes the statement. However, the information is important when the binary log is played back to a server—for instance, when doing a PITR.

To play back a binary log to the server without problems in handling privileges on all the various tables, it is necessary to execute all the statements as a user with SUPER privileges. But the triggers may not have been defined using SUPER privileges, so it is important to recreate the triggers with the correct user as the trigger’s definer. If a trigger is defined with SUPER privileges instead of by the user who defined the trigger originally, it might cause a privilege escalation.

To permit a DBA to specify the user under which to execute a trigger, the CREATE TRIGGER syntax includes an optional DEFINER clause. If a DEFINER is not given to the statements—as is the case in Example 4-7—the statement will be rewritten for the binary log to add a DEFINER clause and use the current user as the definer. This means that the definition of the insert trigger appears in the binary log, as shown in Example 4-7. It lists the account that created the trigger (root@localhost) as the definer, which is what we want in this case.

Example 4-7. A CREATE TRIGGER statement in the binary log

master> SHOW BINLOG EVENTS FROM 92236 LIMIT 1\G

*************************** 1. row ***************************

Log_name: master-bin.000038

Pos: 92236

Event_type: Query

Server_id: 1

End_log_pos: 92491

Info: use `test`; CREATE DEFINER=`root`@`localhost` TRIGGER ...

1 row in set (0.00 sec)

Statements that invoke triggers and stored routines

Moving over from definitions to invocations, we can ask how the master’s triggers are handled during replication. Well, actually they’re not handled at all.

The statement that invokes the trigger is logged to the binary log, but it is not linked to the particular trigger. Instead, when the slave executes the statement, it automatically executes any triggers associated with the tables affected by the statement. This means that there can be different triggers on the master and the slave, and the triggers on the master will be invoked on the master while the triggers on the slave will be invoked on the slave. For example, if the trigger to add entries to the log table is not necessary on the slave, performance can be improved by eliminating the trigger from the slave.

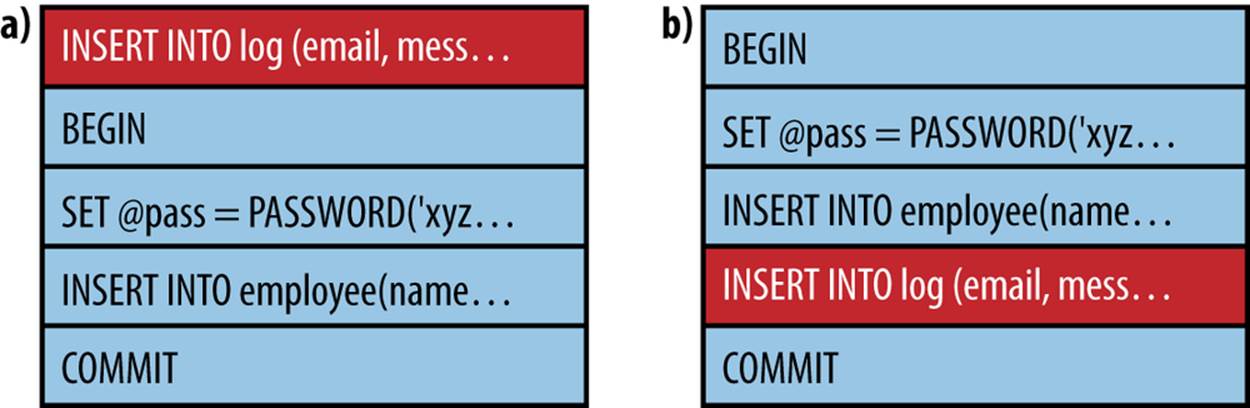

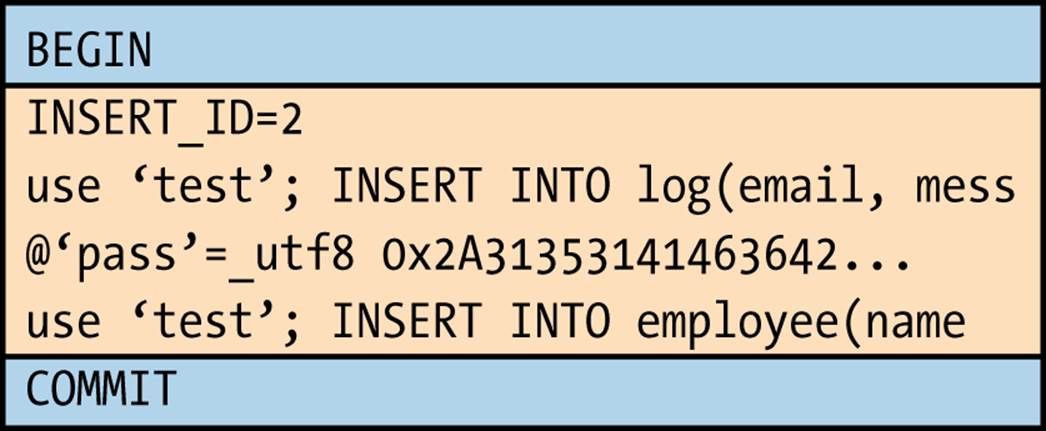

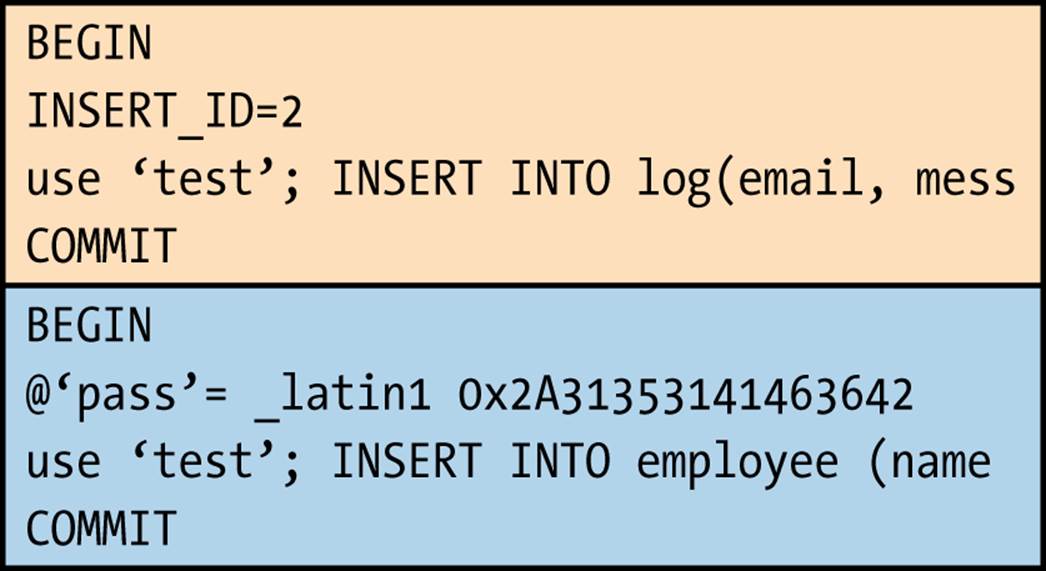

Still, any context events necessary for replicating correctly will be written to the binary log before the statement that invokes the trigger, even if it is just the statements in the trigger that require the context events. Thus, Example 4-8 shows the binary log after executing the INSERT statementin Example 4-5. Note that the first event writes the INSERT ID for the log table’s primary key. This reflects the use of the log table in the trigger, but it might appear to be redundant because the slave will not use the trigger.

You should, however, note that using different triggers on the master and slave—or no trigger at all on either the master or slave—is the exception and that the INSERT ID is necessary for replicating the INSERT statement correctly when the trigger is both on the master and slave.

Example 4-8. Contents of the binary log after executing INSERT

master> SHOW BINLOG EVENTS FROM 93340\G

*************************** 1. row ***************************

Log_name: master-bin.000038

Pos: 93340

Event_type: Intvar

Server_id: 1

End_log_pos: 93368

Info: INSERT_ID=1

*************************** 2. row ***************************

Log_name: master-bin.000038

Pos: 93368

Event_type: User var

Server_id: 1

End_log_pos: 93396h

Info: @`pass`=_utf8 0x2A3942353030333433424335324...

utf8_general_ci

*************************** 3. row ***************************

Log_name: master-bin.000038

Pos: 93396

Event_type: Query

Server_id: 1

End_log_pos: 93537

Info: use `test`; INSERT INTO employee VALUES ...

3 rows in set (0.00 sec)

Stored Procedures

Stored functions and stored procedures are known by the common name stored routines. Since the server treats stored procedures and stored functions very differently, stored procedures will be covered in this section and stored functions in the next section.

The situation for stored routines is similar to triggers in some aspects, but very different in others. Like triggers, stored routines offer a DEFINER clause, and it must be explicitly added to the binary log whether or not the statement includes it. But the invocation of stored routines is handled differently from triggers.

To begin, let’s extend Example 4-6, which defines tables for employees and logs, with some utility routines to work with the employees. Even though this can be handled with standard INSERT, DELETE, and UPDATE statements, we’ll use stored procedures to demonstrate some issues involved in writing them to the binary log. For these purposes, let’s extend the example with the functions in Example 4-9 for adding and removing employees.

Example 4-9. Stored procedure definitions for managing employees

delimiter $$

CREATE PROCEDURE employee_add(p_name CHAR(64), p_email CHAR(64),

p_password CHAR(64))

MODIFIES SQL DATA

BEGIN

DECLARE l_pass CHAR(64);

SET l_pass = PASSWORD(p_password);

INSERT INTO employee(name, email, password)

VALUES (p_name, p_email, l_pass);

END $$

CREATE PROCEDURE employee_passwd(p_email CHAR(64),

p_password CHAR(64))

MODIFIES SQL DATA

BEGIN

DECLARE l_pass CHAR(64);

SET l_pass = PASSWORD(p_password);

UPDATE employee SET password = l_pass

WHERE email = p_email;

END $$

CREATE PROCEDURE employee_del(p_name CHAR(64))

MODIFIES SQL DATA

BEGIN

DELETE FROM employee WHERE name = p_name;

END $$

delimiter ;

For the employee_add and employee_passwd procedures, we have extracted the encrypted password into a separate variable for the reasons already explained, but the employee_del procedure just contains a DELETE statement, since nothing else is needed. A binlog entry corresponding to one function is:

master> SHOW BINLOG EVENTS FROM 97911 LIMIT 1\G

*************************** 1. row ***************************

Log_name: master-bin.000038

Pos: 97911

Event_type: Query

Server_id: 1

End_log_pos: 98275

Info: use `test`; CREATE DEFINER=`root`@`localhost`PROCEDURE ...

1 row in set (0.00 sec)

As expected, the definition of this procedure is extended with the DEFINER clause before writing the definition to the binary log, but apart from that, the body of the procedure is left intact. Notice that the CREATE PROCEDURE statement is replicated as a Query event, as are all DDL statements.

In this regard, stored routines are similar to triggers in the way they are treated by the binary log. But invocation differs significantly from triggers. Example 4-10 calls the procedure that adds an employee and shows the resulting contents of the binary log.

Example 4-10. Calling a stored procedure

master> CALL employee_add('chuck', 'chuck@example.com', 'abrakadabra');

Query OK, 1 row affected (0.00 sec)

master> SHOW BINLOG EVENTS FROM 104033\G

*************************** 1. row ***************************

Log_name: master-bin.000038

Pos: 104033

Event_type: Intvar

Server_id: 1

End_log_pos: 104061

Info: INSERT_ID=1

*************************** 2. row ***************************

Log_name: master-bin.000038

Pos: 104061

Event_type: Query

Server_id: 1

End_log_pos: 104416

Info: use `test`; INSERT INTO employee(name, email, password)

VALUES ( NAME_CONST('p_name',_utf8'chuck' COLLATE ...),

NAME_CONST('p_email',_utf8'chuck@example.com' COLLATE ...),

NAME_CONST('pass',_utf8'*FEB349C4FDAA307A...' COLLATE ...))

2 rows in set (0.00 sec)

In Example 4-10, there are four things that you should note:

§ The CALL statement is not written to the binary log. Instead, the statements executed as a result of the call are written to the binary log. In other words, the body of the stored procedure is unrolled into the binary log.

§ The statement is rewritten to not contain any references to the parameters of the stored procedure—that is, p_name, p_email, and p_password. Instead, the NAME_CONST function is used for each parameter to create a result set with a single value.

§ The locally declared variable pass is also replaced with a NAME_CONST expression, where the second parameter contains the encrypted password.

§ Just as when a statement that invokes a trigger is written to the binary log, the statement that calls the stored procedure is preceded by an Intvar event holding the insert ID used when adding the employee to the log table.

Since neither the parameter names nor the locally declared names are available outside the stored routine, NAME_CONST is used to associate the name of the parameter or local variable with the constant value used when executing the function. This guarantees that the value can be used in the same way as the parameter or local variable. However, this change is not significant; currently it offers no advantages over using the parameters directly.

Stored Functions

Stored functions share many similarities with stored procedures and some similarities with triggers. Similar to both stored procedures and triggers, stored functions have a DEFINER clause that is normally (but not always) used when the CREATE FUNCTION statement is written to the binary log.

In contrast to stored procedures, stored functions can return scalar values and you can therefore embed them in various places in SQL statements. For example, consider the definition of a stored routine in Example 4-11, which extracts the email address of an employee given the employee’s name. The function is a little contrived—it is significantly more efficient to just execute statements directly—but it suits our purposes well.

Example 4-11. A stored function to fetch the name of an employee

delimiter $$

CREATE FUNCTION employee_email(p_name CHAR(64))

RETURNS CHAR(64)

DETERMINISTIC

BEGIN

DECLARE l_email CHAR(64);

SELECT email INTO l_email FROM employee WHERE name = p_name;

RETURN l_email;

END $$

delimiter ;

This stored function can be used conveniently in other statements, as shown in Example 4-12. In contrast to stored procedures, stored functions have to specify a characteristic—such as DETERMINISTIC, NO SQL, or READS SQL DATA—if they are to be written to the binary log.

Example 4-12. Examples of using the stored function

master> CREATE TABLE collected (

-> name CHAR(32),

-> email CHAR(64)

-> );

Query OK, 0 rows affected (0.09 sec)

master> INSERT INTO collected(name, email)

-> VALUES ('chuck', employee_email('chuck'));

Query OK, 1 row affected (0.01 sec)

master> SELECT employee_email('chuck');

+-------------------------+

| employee_email('chuck') |

+-------------------------+

| chuck@example.com |

+-------------------------+

1 row in set (0.00 sec)

When it comes to calls, stored functions are replicated in the same manner as triggers: as part of the statement that executes the function. For instance, the binary log doesn’t need any events preceding the INSERT statement in Example 4-12, but it will contain the context events necessary to replicate the stored function inside the INSERT.

What about SELECT? Normally, SELECT statements are not written to the binary log since they don’t change any data, but a SELECT containing a stored function, as in Example 4-13, is an exception.

Example 4-13. Example of stored function that updates a table

CREATE TABLE log(log_id INT AUTO_INCREMENT PRIMARY KEY, msg TEXT);

delimiter $$

CREATE FUNCTION log_message(msg TEXT)

RETURNS INT

DETERMINISTIC

BEGIN

INSERT INTO log(msg) VALUES(msg);

RETURN LAST_INSERT_ID();

END $$

delimiter ;

SELECT log_message('Just a test');

When executing the stored function, the server notices that it adds a row to the log table and marks the statement as an “updating” statement, which means that it will be written to the binary log. So, for the slightly artificial example in Example 4-13, the binary log will contain the event:

*************************** 7. row ***************************

Log_name: mysql-bin.000001

Pos: 845

Event_type: Query

Server_id: 1

End_log_pos: 913

Info: BEGIN

*************************** 8. row ***************************

Log_name: mysql-bin.000001

Pos: 913

Event_type: Intvar

Server_id: 1

End_log_pos: 941

Info: LAST_INSERT_ID=1

*************************** 9. row ***************************

Log_name: mysql-bin.000001

Pos: 941

Event_type: Intvar

Server_id: 1

End_log_pos: 969

Info: INSERT_ID=1

*************************** 10. row ***************************

Log_name: mysql-bin.000001

Pos: 969

Event_type: Query

Server_id: 1

End_log_pos: 1109

Info: use `test`; SELECT `test`.`log_message`(_utf8'Just a test' COLLATE...

*************************** 11. row ***************************

Log_name: mysql-bin.000001

Pos: 1105

Event_type: Xid

Server_id: 1

End_log_pos: 1132

Info: COMMIT /* xid=237 */

STORED FUNCTIONS AND PRIVILEGES

The CREATE ROUTINE privilege is required to define a stored procedure or stored function. Strictly speaking, no other privileges are needed to create a stored routine, but since it normally executes under the privileges of the definer, defining a stored routine would not make much sense if the definer of the procedure didn’t have the necessary privileges to read to or write from tables referenced by the stored procedure.

But replication threads on the slave execute without privilege checks. This leaves a serious security hole allowing any user with the CREATE ROUTINE privilege to elevate her privileges and execute any statement on the slave.

In MySQL versions earlier than 5.0, this does not cause problems, because all paths of a statement are explored when the statement is executed on the master. A privilege violation on the master will prevent a statement from being written to the binary log, so users cannot access objects on the slave that were out of bounds on the master. However, with the introduction of stored routines, it is possible to create conditional execution paths, and the server does not explore all paths when executing a stored routine.

Since stored procedures are unrolled, the exact statements executed on the master are also executed on the slave, and since the statement is logged only if it was successfully executed on the master, it is not possible to get access to other objects. Not so with stored functions.

If a stored function is defined with SQL SECURITY INVOKER, a malicious user can craft a function that will execute differently on the master and the slave. The security breach can then be buried in the branch executed on the slave. This is demonstrated in the following example:

CREATE FUNCTION magic()

RETURNS CHAR(64)

SQL SECURITY INVOKER

BEGIN

DECLARE result CHAR(64);

IF @@server_id <> 1 THEN

SELECT what INTO result FROM secret.agents LIMIT 1;

RETURN result;

ELSE

RETURN 'I am magic!';

END IF;

END $$

One piece of code executes on the master (the ELSE branch), whereas a separate piece of code (the IF branch) executes on the slave where the privilege checks are disabled. The effect is to elevate the user’s privileges from CREATE ROUTINE to the equivalent of SUPER.

Notice that this problem doesn’t occur if the function is defined with SQL SECURITY DEFINER, because the function executes with the user’s privileges and will be blocked on the slave.

To prevent privilege escalation on a slave, MySQL requires SUPER privileges by default to define stored functions. But because stored functions are very useful, and some database administrators trust their users with creating proper functions, this check can be disabled with the log-bin-trust-function-creators option.

Events

The events feature is a MySQL extension, not part of standard SQL. Events, which should not be confused with binlog events, are handled by a stored program that is executed regularly by a special event scheduler.

Similar to all other stored programs, definitions of events are also logged with a DEFINER clause. Since events are invoked by the event scheduler, they are always executed as the definer and do not pose a security risk in the way that stored functions do.

When events are executed, the statements are written to the binary log directly.

Since the events will be executed on the master, they are automatically disabled on the slave and will therefore not be executed there. If the events were not disabled, they would be executed twice on the slave: once by the master executing the event and replicating the changes to the slave, and once by the slave executing the event directly.

WARNING

Because the events are disabled on the slave, it is necessary to enable these events if the slave, for some reason, should lose the master.

So, for example, when promoting a slave as described in Chapter 5, don’t forget to enable the events that were replicated from the master. This is easiest to do using the following statement:

UPDATE mysql.events

SET Status = ENABLED

WHERE Status = SLAVESIDE_DISABLED;

The purpose of the check is to enable only the events that were disabled when being replicated from the master. There might be events that are disabled for other reasons.

Special Constructions

Even though statement-based replication is normally straightforward, some special constructions have to be handled with care. Recall that for the statement to be executed correctly on the slave, the context has to be correct for the statement. Even though the context events discussed earlier handle part of the context, some constructions have additional context that is not transferred as part of the replication process.

The LOAD_FILE function

The LOAD_FILE function allows you to fetch a file and use it as part of an expression. Although quite convenient at times, the file has to exist on the slave server to replicate correctly since the file is not transferred during replication, as the file to LOAD DATA INFILE is. With some ingenuity, you can rewrite a statement involving the LOAD_FILE function either to use the LOAD DATA INFILE statement or to define a user-defined variable to hold the contents of the file. For example, take the following statement that inserts a document into a table:

master> INSERT INTO document(author, body)

-> VALUES ('Mats Kindahl', LOAD_FILE('go_intro.xml'));

You can rewrite this statement to use LOAD DATA INFILE instead. In this case, you have to take care to specify character strings that cannot exist in the document as field and line delimiters, since you are going to read the entire file contents as a single column.

master> LOAD DATA INFILE 'go_intro.xml' INTO TABLE document

-> FIELDS TERMINATED BY '@*@' LINES TERMINATED BY '&%&'

-> (author, body) SET author = 'Mats Kindahl';

An alternative is to store the file contents in a user-defined variable and then use it in the statement.

master> SET @document = LOAD_FILE('go_intro.xml');

master> INSERT INTO document(author, body) VALUES

-> ('Mats Kindahl, @document);

Nontransactional Changes and Error Handling

So far we have considered only transactional changes and have not looked at error handling at all. For transactional changes, error handling is pretty uncomplicated: a statement that tries to change transactional tables and fails will not have any effect at all on the table. That’s the entire point of having a transactional system—so the changes that the statement attempts to introduce can be safely ignored. The same applies to transactions that are rolled back: they have no effect on the tables and can therefore simply be discarded without risking inconsistencies between the master and the slave.

A specialty of MySQL is the provisioning of nontransactional storage engines. This can offer some speed advantages, because the storage engine does not have to administer the transactional log that the transactional engines use, and it allows some optimizations on disk access. From a replication perspective, however, nontransactional engines require special considerations.

The most important aspect to note is that replication cannot handle arbitrary nontransactional engines, but has to make some assumptions about how they behave. Some of those limitations are lifted with the introduction of row-based replication in version 5.1—a subject that will be covered inRow-Based Replication—but even in that case, it cannot handle arbitrary storage engines.

One of the features that complicates the issue further, from a replication perspective, is that it is possible to mix transactional and nontransactional engines in the same transaction, and even in the same statement.

To continue with the example used earlier, consider Example 4-14, where the log table from Example 4-5 is given a nontransactional storage engine while the employee table is given a transactional one. We use the nontransactional MyISAM storage engine for the log table to improve its speed, while keeping the transactional behavior for the employee table.

We can further extend the example to track unsuccessful attempts to add employees by creating a pair of insert triggers: a before trigger and an after trigger. If an administrator sees an entry in the log with a status field of FAIL, it means the before trigger ran, but the after trigger did not, and therefore an attempt to add an employee failed.

Example 4-14. Definition of log and employee tables with storage engines

CREATE TABLE employee (

name CHAR(64) NOT NULL,

email CHAR(64),

password CHAR(64),

PRIMARY KEY (email)

) ENGINE = InnoDB;

CREATE TABLE log (

id INT AUTO_INCREMENT,

email CHAR(64),

message TEXT,

status ENUM('FAIL', 'OK') DEFAULT 'FAIL',

ts TIMESTAMP,

PRIMARY KEY (id)

) ENGINE = MyISAM;

delimiter $$

CREATE TRIGGER tr_employee_insert_before BEFORE INSERT ON employee

FOR EACH ROW

BEGIN

INSERT INTO log(email, message)

VALUES (NEW.email, CONCAT('Adding employee ', NEW.name));

SET @LAST_INSERT_ID = LAST_INSERT_ID();

END $$

delimiter ;

CREATE TRIGGER tr_employee_insert_after AFTER INSERT ON employee

FOR EACH ROW

UPDATE log SET status = 'OK' WHERE id = @LAST_INSERT_ID;

What are the effects of this change on the binary log?

To begin, let’s consider the INSERT statement from Example 4-6. Assuming the statement is not inside a transaction and AUTOCOMMIT is 1, the statement will be a transaction by itself. If the statement executes without errors, everything will proceed as planned and the statement will be written to the binary log as a Query event.

Now, consider what happens if the INSERT is repeated with the same employee. Since the email column is the primary key, this will generate a duplicate key error when the insertion is attempted, but what will happen with the statement? Is it written to the binary log or not?

Let’s have a look…

master> SET @pass = PASSWORD('xyzzy');

Query OK, 0 rows affected (0.00 sec)

master> INSERT INTO employee(name,email,password)

-> VALUES ('chuck','chuck@example.com',@pass);

ERROR 1062 (23000): Duplicate entry 'chuck@example.com' for key 'PRIMARY'

master> SELECT * FROM employee;

+------+--------------------+-------------------------------------------+

| name | email | password |

+------+--------------------+-------------------------------------------+

| chuck | chuck@example.com | *151AF6B8C3A6AA09CFCCBD34601F2D309ED54888 |

+------+--------------------+-------------------------------------------+

1 row in set (0.00 sec)

master> SHOW BINLOG EVENTS FROM 38493\G

*************************** 1. row ***************************

Log_name: master-bin.000038

Pos: 38493

Event_type: User var

Server_id: 1

End_log_pos: 38571

Info: @`pass`=_utf8 0x2A31353141463642384333413641413...

*************************** 2. row ***************************

Log_name: master-bin.000038

Pos: 38571

Event_type: Query

Server_id: 1

End_log_pos: 38689

Info: use `test`; INSERT INTO employee(name,email,password)...

2 rows in set (0.00 sec)

As you can see, the statement is written to the binary log even though the employee table is transactional and the statement failed. Looking at the contents of the table using the SELECT reveals that there is still a single employee, proving the statement was rolled back—so why is the statement written to the binary log?

Looking into the log table will reveal the reason.

master> SELECT * FROM log;

+----+------------------+------------------------+--------+---------------------+

| id | email | message | status | ts |

+----+------------------+------------------------+--------+---------------------+

| 1 | mats@example.com | Adding employee mats | OK | 2010-01-13 15:50:45 |

| 2 | mats@example.com | Name change from ... | OK | 2010-01-13 15:50:48 |

| 3 | mats@example.com | Password change | OK | 2010-01-13 15:50:50 |

| 4 | mats@example.com | Removing employee | OK | 2010-01-13 15:50:52 |

| 5 | mats@example.com | Adding employee mats | OK | 2010-01-13 16:11:45 |

| 6 | mats@example.com | Adding employee mats | FAIL | 2010-01-13 16:12:00 |

+----+--------------+----------------------------+--------+---------------------+

6 rows in set (0.00 sec)

Look at the last line, where the status is FAIL. This line was added to the table by the before trigger tr_employee_insert_before. For the binary log to faithfully represent the changes made to the database on the master, it is necessary to write the statement to the binary log if there are any nontransactional changes present in the statement or in triggers that are executed as a result of executing the statement. Since the statement failed, the after trigger tr_employee_insert_after was not executed, and therefore the status is still FAIL from the execution of the before trigger.

Since the statement failed on the master, information about the failure needs to be written to the binary log as well. The MySQL server handles this by using an error code field in the Query event to register the exact error code that caused the statement to fail. This field is then written to the binary log together with the event.

The error code is not visible when using the SHOW BINLOG EVENTS command, but you can view it using the mysqlbinlog tool, which we will cover later in the chapter.

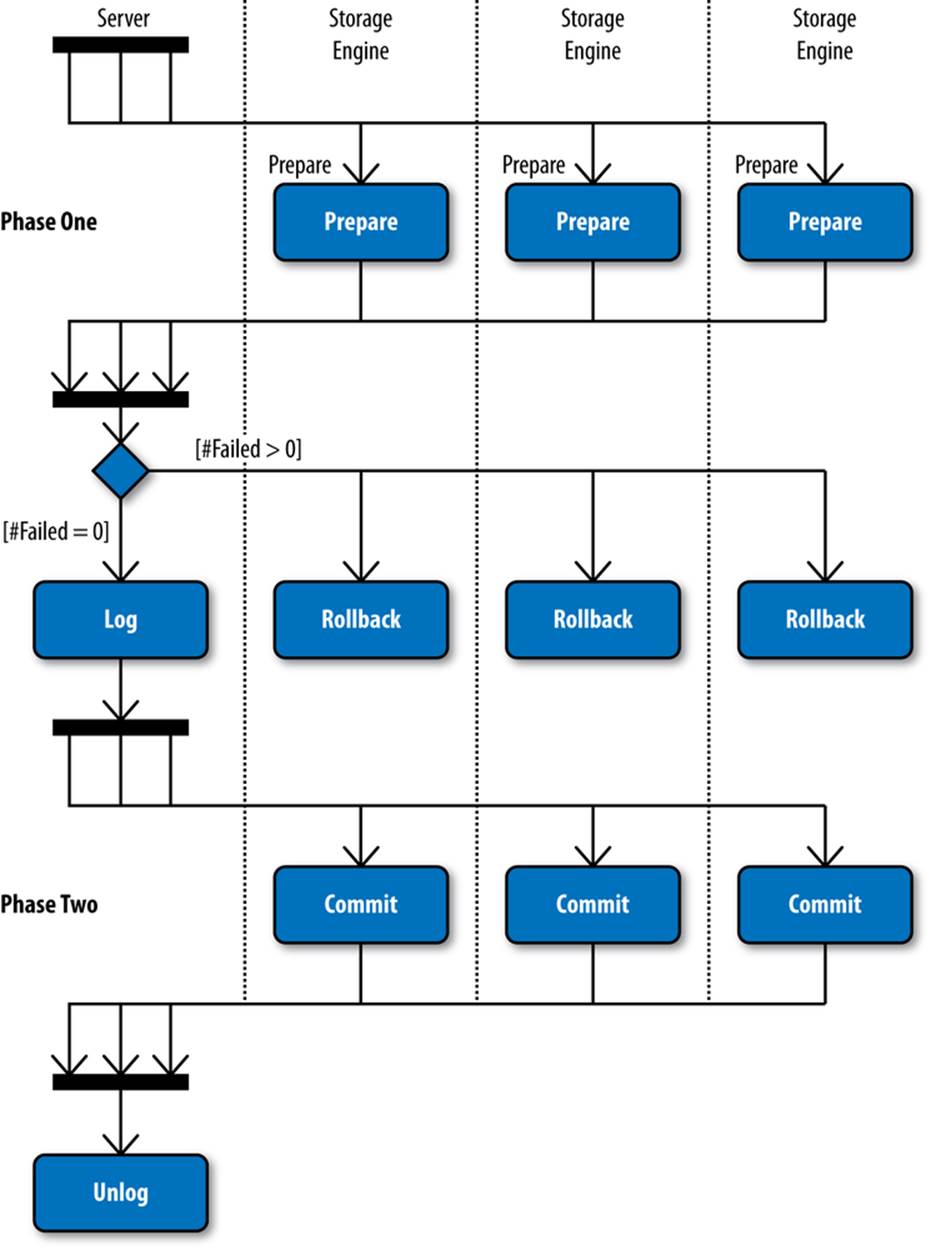

Logging Transactions

You have now seen how individual statements are written to the binary log, along with context information, but we did not cover how transactions are logged. In this section, we will briefly cover how transactions are logged.

A transaction can start under a few different circumstances:

§ When the user issues START TRANSACTION (or BEGIN).

§ When AUTOCOMMIT=1 and a statement accessing a transactional table starts to execute. Note that a statement that writes only to nontransactional tables—for example, only to MyISAM tables—does not start a transaction.

§ When AUTOCOMMIT=0 and the previous transaction was committed or aborted either implicitly (by executing a statement that does an implicit commit) or explicitly by using COMMIT or ROLLBACK.

Not every statement that is executed after the transaction has started is part of that transaction. The exceptions require special care from the binary log.