Institutionalization of UX: A Step-by-Step Guide to a User Experience Practice, Second Edition (2014)

Part II. Setup

Chapter 4. Methodology

A methodological standard describes how to do user-centered design—select a good methodology that will work for your organization.

You probably already have a system development life cycle in place, but it is probably not a user-centered methodology. You may need to retrofit a user-centered process onto or in front of the current technical methodology.

Implement a quick test of your life cycle. Is it user-centered? Take the test in this chapter.

Review The HFI Framework 7 as an example of a proven user-centered process.

Some people do not like standard development methodologies. They prefer to work with each project and figure out what is needed for that one project without thinking about standard practices. They may feel that any inefficiency and nonrepeatability are inherent to the creative process.

With a user-centered design process or methodology in place, the critical steps to make a product usable will not be missed. All projects will follow a similar method, ensuring a standard level of quality.

A methodological standard primarily plays the role of a memory jogger. It makes sure that you do not forget to complete some of the hundreds of major steps needed for good design. Implementing a methodology also provides your company with a mature process—that is, a systematic methodology that can be organized, supported with tools, monitored, and improved. A standard process of user-centered design is essential, and the methodology you choose or develop must enable you to be reliable, successful, and efficient in your design process.

A methodology is also the core of your institutionalization effort. Because many of the other steps to institutionalization are dependent on the methodology project, it is often the first thing that gets done. The methodology program will not only define what needs to be done, but also identify which groups and classes of staff will do each type of work. If you decide to have your business analysts do much of the detailed design work (generally with mentoring from user experience design professionals), then you will need training for those business analysts. Doing the methodology first, as you might imagine, is often a good plan.

This chapter describes how to choose a methodology and outlines some of the challenges you may face as you integrate it into your current development process.

What to Look for in a User-Centered Methodology

Mature development organizations today use a defined system development life cycle for software development. That means they have a plan for developing software that includes an entire cycle spanning from feasibility to programming to implementation. Some of these are industry standards with software tools available, such as the Agile approach and the Rational Unified Process (RUP), while others are proprietary processes developed within companies. Although some software development life cycles may mention usability, none seems to provide the comprehensive set of steps necessary to follow a user-centered design process. Software development life-cycle methodologies concentrate on the technical aspects of building a software product, as they should. They do not make engineering the user experience and associated tasks the primary focus of development, which means they build applications from the technology and data, inside out. User-centered design is a different way to approach development: it concentrates on the user and the user tasks, rather than on technical and programming issues. Following a user-centered design process is the only way to reliably create practical, useful, usable, and satisfying technology products. In a user-centered process, you design the user experience first, and then let it drive the technology (as shown in Figure 4-1).

Figure 4-1: The “old” technical-centered solution needs to be replaced by the “new” user-centered solution

Almost all practitioners in the field agree on the general steps required to follow a user-centered design process. You can document these steps yourself and build your own user-centered design process, but doing so is likely to be slow and expensive. You should undertake this task only if you have senior usability staff with many years of experience, because that experience is what ensures the resulting process follows industry best practices. In most cases, you are far better off buying a process and then customizing it for your organization.

Your user-centered process affects the way functional specifications are created. For example, you will craft the user taskflows before you worry too much about the database structures. The user-centered process will mostly bolt onto the front of your technology process. Then throughout the software development process, steps connect the user-centered process to the work that the technical staff is doing as well as to the documents that serve as input to the programming staff. In addition, many linkages ensure that the methods stay coordinated. Document handoffs need to be identified at a detailed level.

This is not to say that technical limitations are ignored in the early design phases. Interface designers need to know about the technical issues for a particular project. They need to understand what the technology can and cannot do, so that they use it to the fullest extent without designing something that will prove difficult to implement. The primary concern here, however, is meeting the customers’ needs. You need to engineer the user experience and performance and derive the user interface structure to support this user taskflow. At that point, the technical staff can design the software to support the user interface design. This effort might require pulling together data from a dozen servers to provide a summary view when entering the site, using graphic preloads to shorten the download time on a website, or using cookies so that users get book offers that relate to their needs. The technology has a huge role to play, but it needs to remain focused on the needs of the users.

Select a user-centered methodology that meets the following criteria:

• The methodology must be comprehensive. It is not acceptable to have a process that relies on usability testing alone—it must address the whole life cycle.

• It must be user-centered. The methodology must be firmly grounded in designing for an optimal user experience and performance first, and the interface design and technology must be based on user needs. It must take those user needs into account and must actually access representative users to obtain data supporting the design and feedback.

• It must have a complete set of activities defined and deliverable documents required. The methodology should not be a loose collection of ideas but rather a specific set of activities with actual documentation throughout the process.

• It must fit with corporate realities. Ill-defined or changing business objectives are by far the most important cause of feature creep. The user-centered design process needs to include steps that bring together the diverse strategic views and ideas of your organization’s stakeholders. There need to be activities in the process to ensure that all key stakeholders contribute and feel heard.

• It must include more advanced user experience design activities, if appropriate. Today, methods that simply ensure usability are usually no longer sufficient. As UX practitioners, we need to deal with the complexities of cross-channel alignment and integration—so there needs to be a UX strategy. We are often asked to participate in systematic innovation programs—so we need innovation methods and not just a general intention to be innovative. We need to design for conversion by applying persuasion engineering methods. For most organizations, all of these capabilities must be part of the current methodology.

• It must be a good fit for your organization’s size and criticality of work. Large organizations that build large and critical applications should have a more thorough process and more detailed documentation.

• It should be supported. While it is wonderful to have a process described in a book, implementation requires much more. It requires training, templates, tools, and a set of support services. It is a daunting challenge to create all of these components or cobble them together from a diverse set of sources. You might find a pretty good user-centered design methodology—you might get it from the Web or from a friend. Then, of course, you need to determine what is required to support that methodology. Expect to devote a good half-year of work to this process if you have to create all the deliverable document formats, questionnaires, tools, and standards, and another half-year to develop training to support the standard.

• It must be able to work with your current development life cycle. This is no small task, and is discussed later in this chapter.

• The methodology must have a cross-cultural localization process through which the design is evaluated for language and culture issues (if you are doing cross-cultural or international development).

Integrating Usability into the Development Cycle

Janice Nall, Managing Director, Atlanta, Danya International; Former Chief, Communication Technologies Branch, National Cancer Institute

We really want usability to be so integrated into the development cycle that it’s just like graphics: It’s just a process, and it’s where you insert it into the process that matters. It’s not at the end—when it’s ready to go out the door—but rather at the very beginning. We just want to make usability mainstream and not constantly have to argue the cause. We are still in that mode; we feel like we have to prove ourselves every day.

If we can get it into the next realm where we can take it to the next step, where we are not spending all the time justifying why we need to do it, we can pursue research to get answers to the questions that don’t have answers. I think there is a huge interest in really pushing the science—from our end certainly, but from across the federal government as well. We must make better-informed decisions and start advancing the field, sharing that knowledge and really disseminating what all of us are learning effectively.

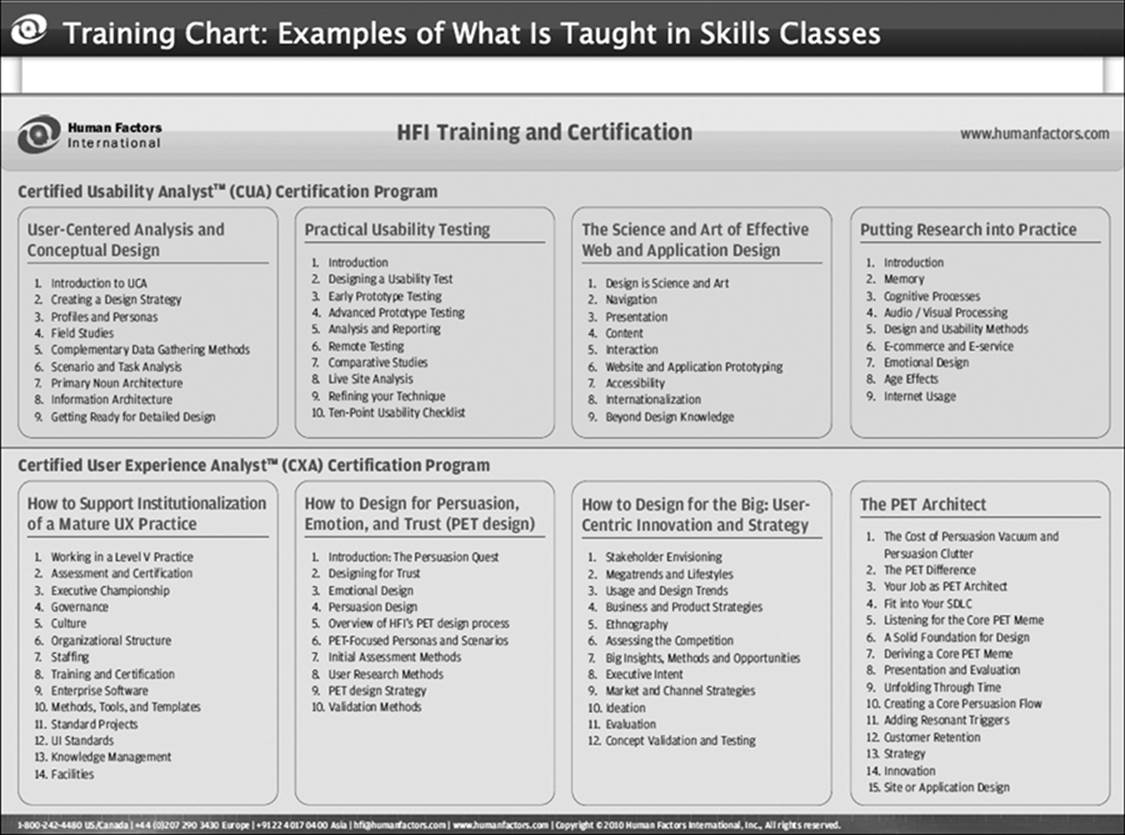

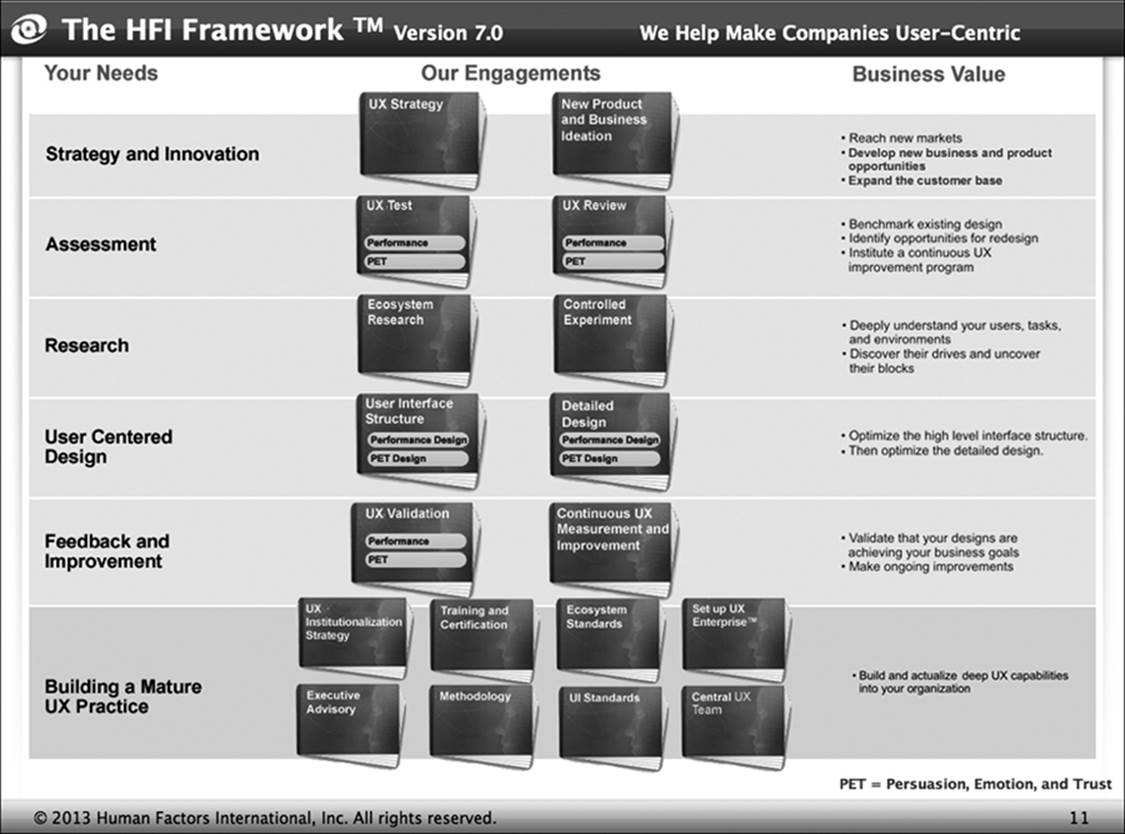

An Outline of The HFI Framework

To give a sense of what a user-centered methodology should include, this section outlines The HFI Framework, a methodology based on the practices that have evolved at HFI over the last 30 years. Driven by cycles of data gathering and refinement, this method pulls in the knowledge and vision of the organization and harmonizes them with user needs and limitations. This solid process for ensuring quality design includes designing screens by using templates (instead of “reinventing the wheel” each time) and supports deployment and localization to different cultures. It covers the whole thread of user experience design work that starts with executive intent, and runs through UX strategy, design, and continuous improvement. If the thread is broken in an organization, sound executive ideas about what is needed for success will eventually fall to the floor, and the design will become nothing more than a set of functions only roughly associated with the intended organizational direction. We follow this process consistently at HFI and have integrated it in practice with almost every commercial system development process and hundreds of bespoke methods.

The technical part of our ISO certification is based on following this process.

Figure 4-2 shows an overview of The HFI Framework. The first column lists needs that are fulfilled, and the second column lists engagements we use to fulfill those needs. The last column outlines the business need that is being fulfilled. Not every type of engagement gets completed on every program. Instead, what is important is a coherent set of engagements ensuring that the thread between executive intent and design is unbroken.

Figure 4-2: The HFI Framework

Strategy and Innovation

The input into this work is the executive intent. Executives have various types of intent statements, as the following examples suggest:

• Reduce service calls and return of tickets in error

• Migrate customers to digital self-service channels

• Increase conversion and market share by 40%

• Gain a 20% share of the mobile market in Kenya

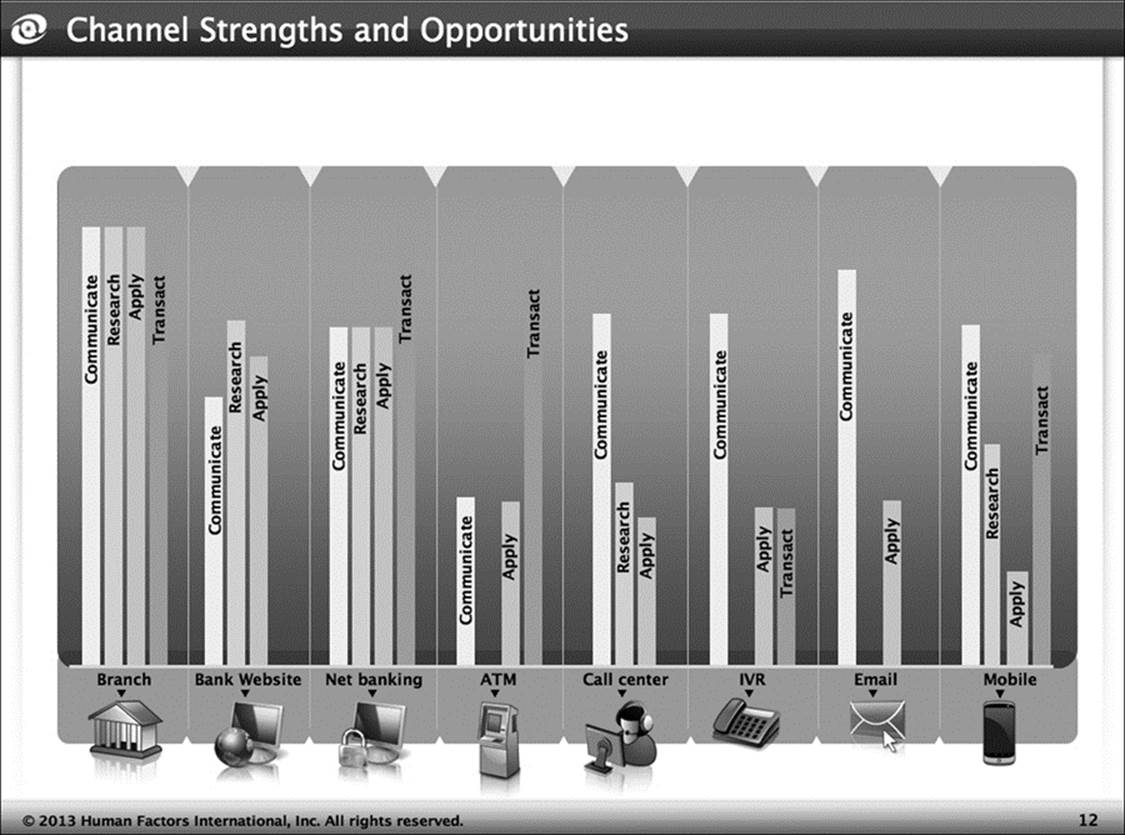

It is the executive’s job to come up with these types of desires. But then as we start our UX strategy program, we must ask the hard question: “How is that going to happen?” We might want to migrate customers to digital self-service. But that won’t happen by just creating a self-service facility. Nor will it happen on a large scale by just making the self-service website easy to use. First, we need to determine how to motivate customers. Where a decade ago the journey of a major development program might start with a discussion of the technological framework for the development, today it starts with work on the customer’s motivation. Once the customer’s motivation is understood, we need to see how that target motivational experience can be supported with the wide range of available channels (Figure 4-3). Success generally requires coordination of applications across various channels. There may be a physical store and a call center. In the end, the whole ecosystem solution needs to be simple to understand and a good fit for the capabilities and limitations of the various channels.

Figure 4-3: The diverse capabilities of various channels

While it is common to find organizations that do strategy work, in the end they deliver only generalities—and those at a very high price. At HFI, we always illustrate a motivational and cross-channel solution with conceptual designs. This concretizes the recommendations. In the end, those concepts become the initial foundation for ongoing structural design.

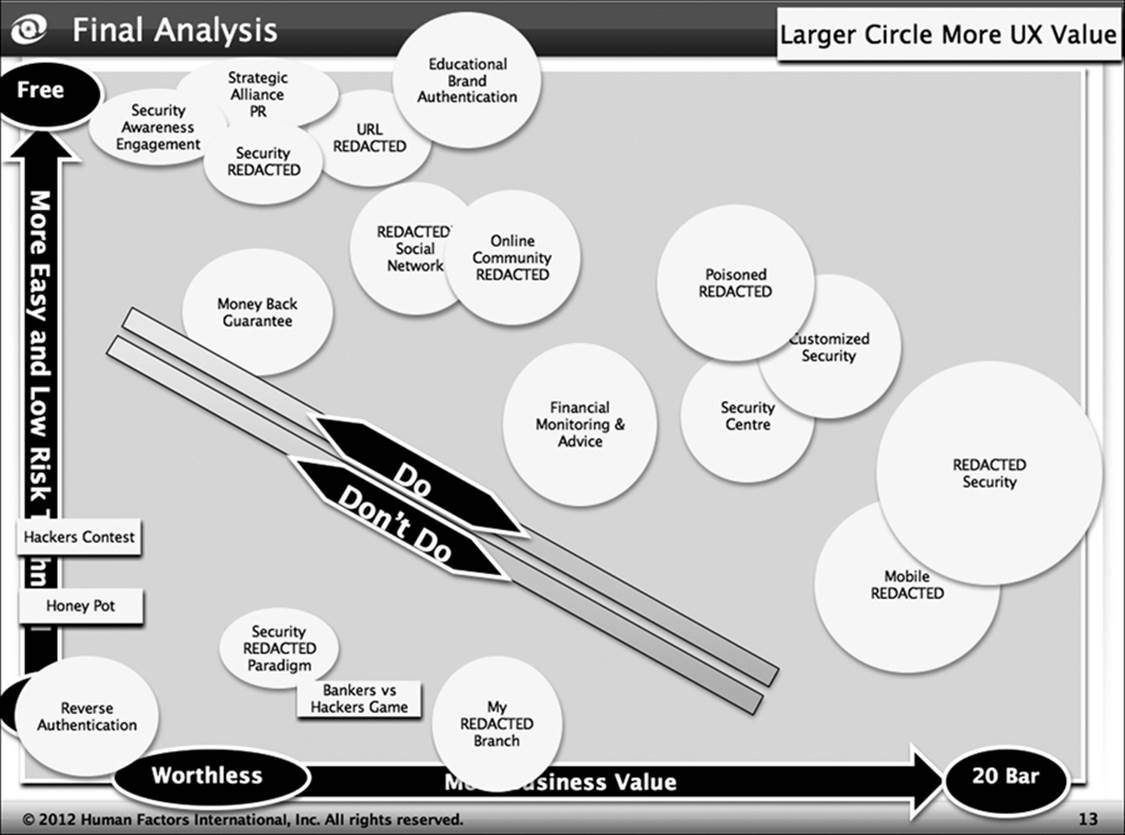

The UX strategy is likely to spawn one or more innovation programs, identifying areas that need innovation in the business model or product design. Many organizations try to innovate by asking their staff to be innovative—they might provide a quick training program on “thinking outside the box,” for example. In reality, a request for lateral thinking is quite different from industrial-strength innovation processes. Serious innovation takes a systematic definition of the context, including the capabilities of the organization and the ecosystem of the customers (Figure 4-4). An innovation process creates many ideas and then determines those worth applying.

Figure 4-4: Results of a systematic innovation program about online security

Assessment

If there is an existing application, it may make sense to evaluate the quality of the current design to justify the redevelopment program and identify areas that need special emphasis during redesign. During this phase, we also may perform a competitive analysis to understand the current best practices and identify competitive imperatives.

Two kinds of assessment methods may be employed. In a usability test, designs are evaluated by having representative users attempt to use the facility or be interviewed about the facility. In an expert review, trained experts in user experience design systematically investigate the design based on research-based principles and models in the field. You might think that the user research is much better than the expert review, but, in fact, the expert review is usually faster, cheaper, and better. User research might result in solid data that the user takes three minutes to complete a transaction. But what does that mean? Is three minutes good? An expert, in contrast, might immediately point out that default values can be used to slash the time requirement. In reality, the best application for usability testing as an assessment is when strong political issues are preventing stakeholders from listening to the expert.

You need different methods to investigate whether people will do a task as opposed to assessing whether they can do a task. PET is HFI’s acronym for persuasion, emotion, and trust. The methods we use to check whether people get confused while using an application don’t really work when we are trying to understand whether they will be motivated to convert. Today usability is no longer enough. It is certainly an expected hygiene factor to have a checkout flow that everyone is able to use, but assessing the customer’s willingness to actually buy the product requires different methods.

Research

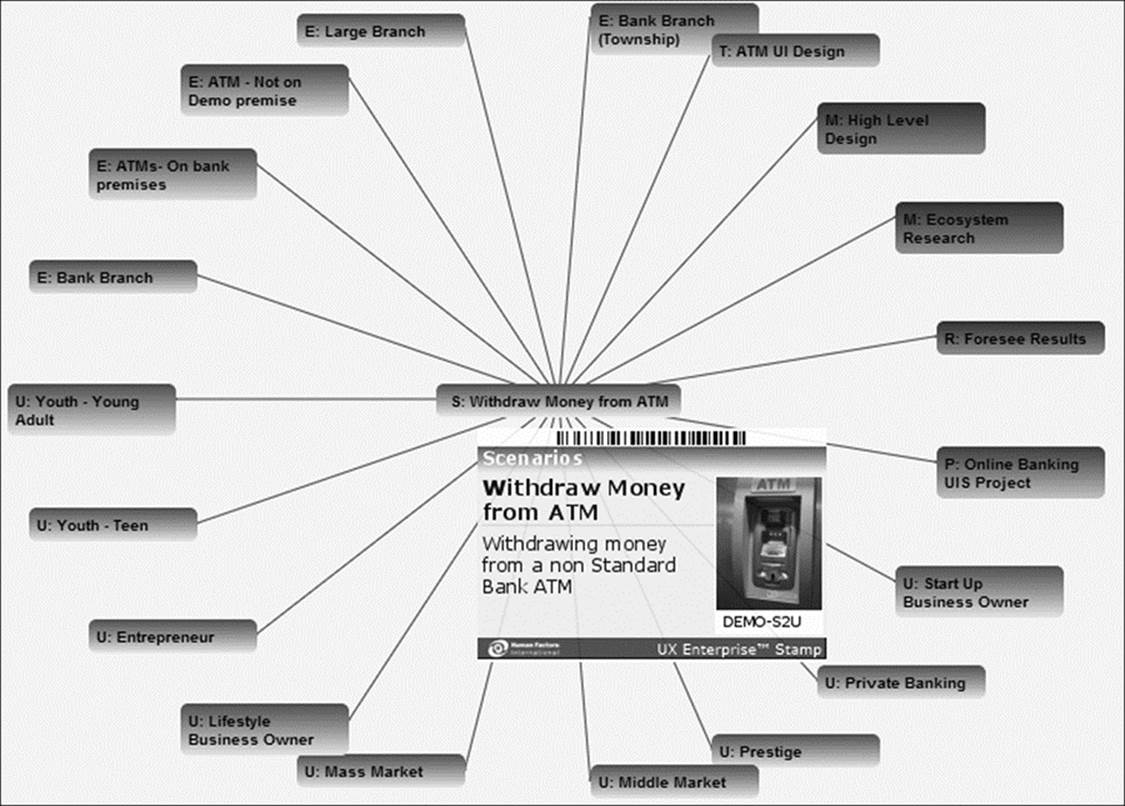

User experience design practitioners rarely do pure research, because they are too busy designing things. Nevertheless, there are two kinds of research that they may need to perform. One is foundational ecosystem research. This type of research is performed to define the range of users, environments, scenarios, and artifacts for the target application (see Figure 4-5 for an example). You might do this research once, and then apply it to many ongoing projects (which is why we would think of it as foundational research).

Figure 4-5: Example of an ecosystem around ATMs showing scenarios, users, and other elements

The second type of research that user experience design practitioners undertake is a controlled experiment. This scientific research study, with proper controls and statistical analysis, is needed when a critical decision must be made between two different designs and millions of dollars hang in the balance. A controlled study is clearly justified when you really need to be certain which design is better. Controlled experiments are also appropriate to support marketing assertions. For example, HFI recently had a smartphone company approach our firm and tell us the blogosphere had proclaimed that they had the fastest keyboard. The company wanted scientific backing for the claim so it could own that distinction and make it the core of a marketing campaign. So we ran the study (the company’s keyboard was the third fastest).
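To make "proper controls and statistical analysis" a little more concrete, here is a minimal sketch of one way to compare task times from two designs. The text does not say which analysis HFI used; the permutation test below is simply one common choice, and the function name, sample data, and resample count are all assumptions made for illustration.

```python
import random
from statistics import mean

def permutation_test(a, b, n_resamples=5000, seed=0):
    """Two-sided permutation test on the difference in mean task time."""
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    observed = abs(mean(a) - mean(b))
    pooled = list(a) + list(b)
    extreme = 0
    for _ in range(n_resamples):
        # Randomly reassign the measurements to the two designs.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:len(a)]) - mean(pooled[len(a):]))
        if diff >= observed:
            extreme += 1
    # Add-one correction avoids reporting an impossible p-value of zero.
    return (extreme + 1) / (n_resamples + 1)

# Made-up task times in seconds for two designs; illustration only.
design_a = [41.2, 39.8, 44.1, 40.5, 42.7, 38.9, 43.3, 41.0]
design_b = [35.1, 36.4, 33.8, 37.2, 34.9, 36.0, 35.5, 34.2]
p_value = permutation_test(design_a, design_b)
```

A small p-value suggests the observed difference is unlikely to be due to chance assignment alone. A real study would also need a controlled procedure, representative participants, and a sample size chosen in advance.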

User-Centered Design

Once the strategy and innovation have ensured that you are building the right thing, then it is time to do the actual design work. It is best to think of this endeavor as two separate programs. The first deals with the user interface structure. Our years of experience at HFI have shown that 80% of usability is determined by a good interface structure: it ensures that users can understand what is offered, find things quickly, and navigate efficiently. There is also a PET aspect to the structure. Just as we have always designed the structure of the navigation, so we must now design the structure of the conversion as well. We need to know which drives, blocks, beliefs, and feelings we are dealing with. We need to know which set of tools will be employed to trigger conversion.

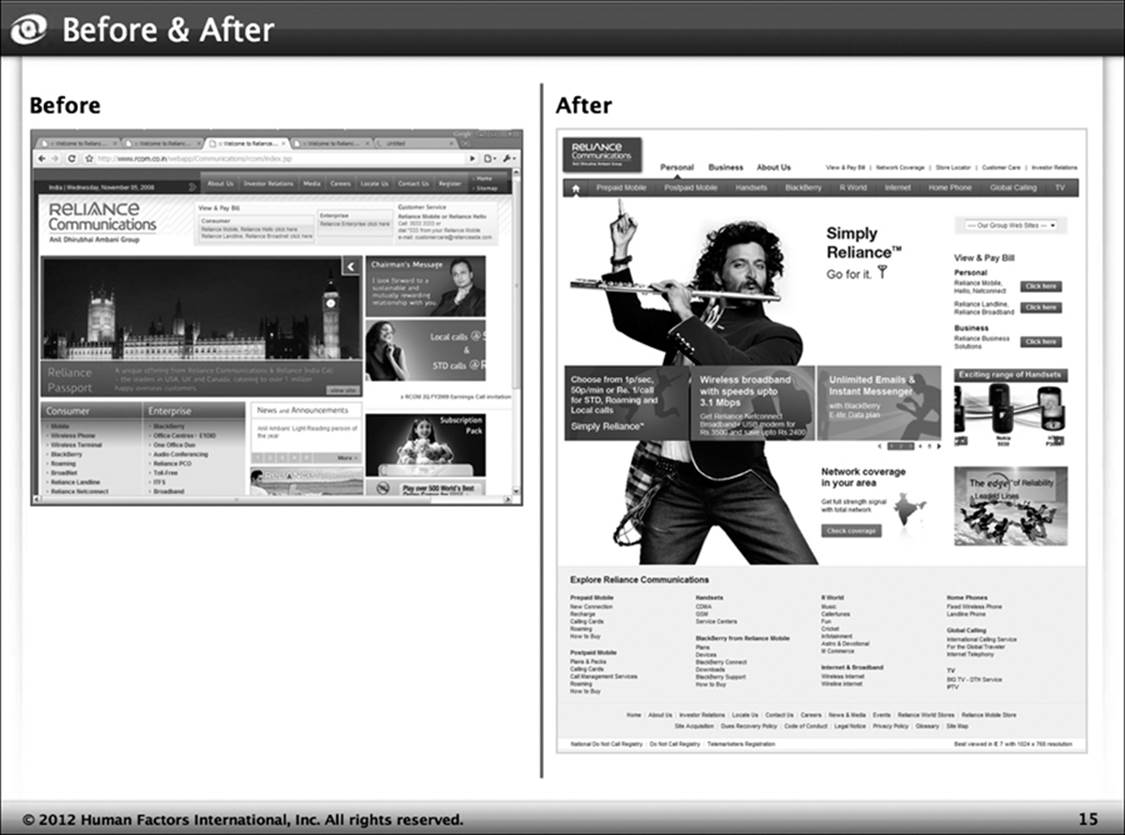

It is often best to complete the structural design before planning the detailed design. The structural design will create the overall container for the interface (Figure 4-6). It defines how the user will navigate, and if done well this structure will match the user’s deep mental model. The result is that the design will seem obvious and laid out in a common-sense manner. The user will be able to find things. The navigational container must also be physically efficient. It must fit with the user’s taskflow so as to minimize the time and physical effort involved. Finally, it must define the persuasion strategy and the style of the site.

Figure 4-6: Example of a structural redesign

The structural design takes a high level of expertise. Once it is done, however, it is easy to plan the ongoing detailed design. Each screen must be fully designed and specified. It is easy to find good structural designs that are built out with poor detailed design: they are good-looking and easy to navigate, but awkward and frustrating as the user proceeds through the interaction.

The detailed design work is easier than the strategic and structural design, but often involves quite a bit of work, and it is critical to maintain quality throughout this step. The detailed design must be guided by user interface standards (or patterns). Detailed design also requires staff who understand a wide range of design methods, principles, and techniques. It cannot be left to graphic artists or business analysts unless they are competent in user experience design principles. The detailed design process also generally requires cycles of usability testing, so that skill set is essential as well.

Feedback and Improvement

Once the design is complete and coded, it is a good idea to do a UX validation. This step offers an opportunity to ensure that the design works as intended by looking at the ability of users to operate the design without getting stuck, and by checking the users’ emotional reactions to the design. This evaluation is a bit different from the UX testing involved in the design process. The testing during the design stage is “formative” testing focused on gaining insights into why the user is having trouble so that you can make design changes. The UX validation, in contrast, seeks to measure the user’s performance to see if the objectives are met. It’s a worthwhile step, as it can stop a bad design from being released as well as provide lessons on what might be done better next time.

The last phase of the user-centered design process, continuous UX measurement and improvement, is often neglected, but is potentially a great investment. If the design is for a large application, it pays to make ongoing improvements. At HFI, we once changed just one page on an office supply company’s website and increased sales by an estimated $6 million per month. Ideally, you will create an ongoing dashboard that provides an array of UX measurements. This can allow you to track the impact of the ongoing work done on the design.

Generally companies have good business metrics on their sites and applications. And in a sense that is exactly the focus of UX work. We really care only that our work has increased conversion, saved time, and reduced call center load. Why, then, would you need user experience design metrics in addition to the business metrics?

A.G. Edwards’ Usability Process and Methodology

Pat Malecek, AVP, CUA, Services Solutions Executive, Dell, Inc.; Former User Experience Manager, A.G. Edwards & Sons, Inc.

I’ve heard from usability practitioners at other companies that it’s fairly common to have a formal and well-documented methodology in place but that it’s not often followed to the letter. We certainly have a very well-documented methodology—whether or not it’s followed to the letter varies from project to project, depending on scope, schedule, and things of that nature.

We have a summary version of that methodology that we are putting the finishing touches on. It is a product development life cycle that we’ve crafted, and it provides a much simpler view. This life-cycle document was developed by both the business and technical sides, and it puts forth a mutually agreeable methodology that calls for early attention to presentation issues and lots of opportunities for iteration and look-and-feel corrections. We are hoping to disseminate the life-cycle document to all the relevant parties because it shows in simple terms where various usability issues could be addressed.

In terms of examples of the usability practices that we put into the process—in the grandest terms, we do it all. We’ve completed projects in which we go out and interview users, and we do card sorting, and then we go to navigation mock-ups and move through paper mock-ups and test paper mock-ups, all the way through to a finished product. And when things are being done with great urgency, typically we do wireframing—and when I say “we,” I mean our user experience designers. They have the proper skills and the proper knowledge, and—if time doesn’t permit testing with the appropriate user groups—at the very least they will take those mock-ups to a cluster of people and do some informal testing to shake out some of the big bugs.

By having specific measurements for user experience design, we can tell if that design is likely to improve the business metric. If both the business metric and the user experience design metric yield poor results, then design improvements will most likely be helpful. If the business metric is poor but the user experience design metric is great, then the problem probably stems from something else, such as pricing, marketing, or even the executive intent.
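The reasoning about business metrics versus user experience design metrics can be written as a small decision rule. This is only a sketch: the function name and the boolean inputs are hypothetical, and how you decide that a metric is "poor" is left to your own measurement program. The text covers only the two cases in which the business metric is poor, so the sketch says so for the remaining cases.

```python
def diagnose(business_metric_ok: bool, ux_metric_ok: bool) -> str:
    """Suggest where to look, following the two cases the text describes."""
    if not business_metric_ok and not ux_metric_ok:
        # Both metrics are poor: the design itself is the likely culprit.
        return "design improvements will most likely be helpful"
    if not business_metric_ok and ux_metric_ok:
        # The design measures well, so the cause probably lies elsewhere.
        return "look elsewhere: pricing, marketing, or the executive intent"
    # The text gives no guidance when the business metric is already good.
    return "no guidance from the text for this case"
```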

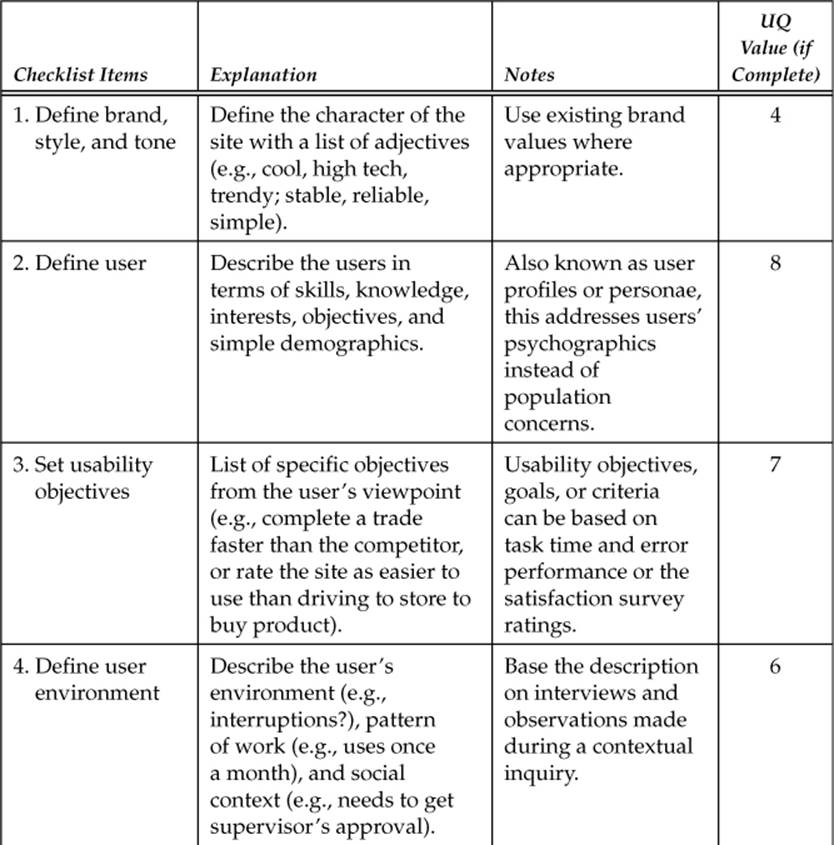

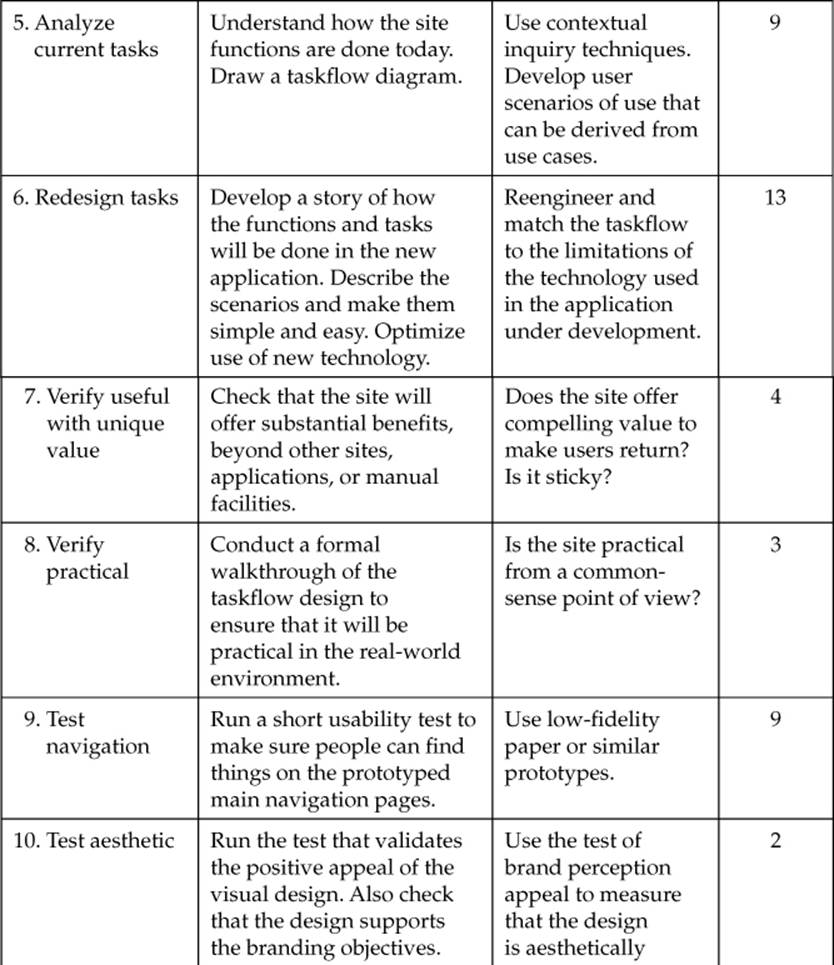

A Quick Check of Your Methodology

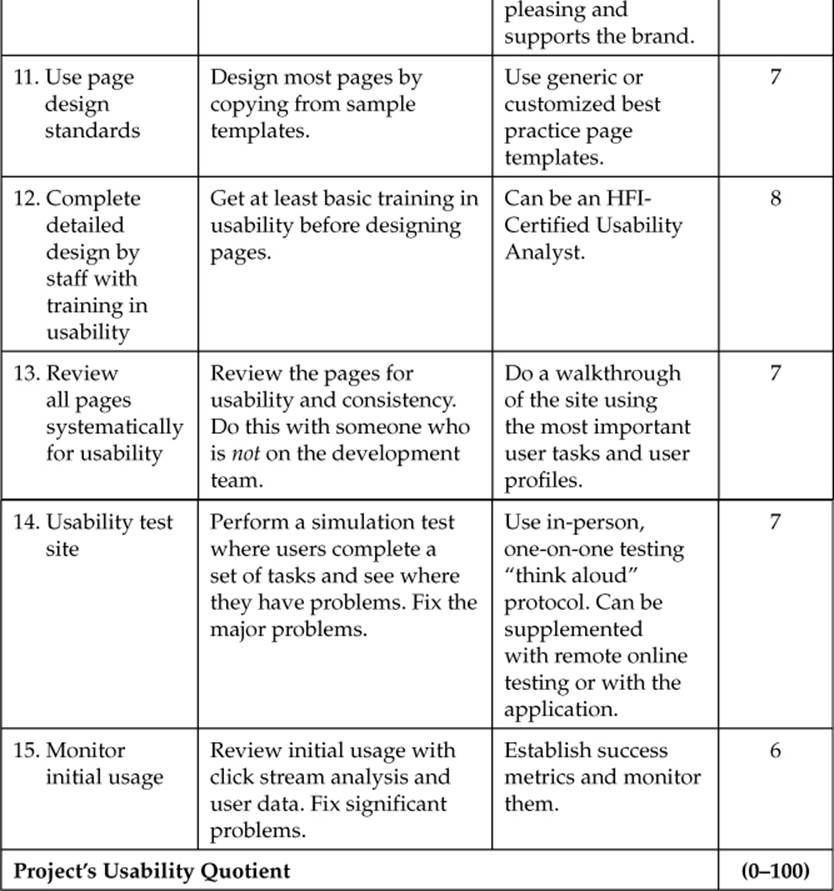

You may discover that your current methodology has some user-centered design in it, but you might be wondering if it is a good process. The following exercise will help you test it. HFI developed this test based on feedback from 35 usability professionals with a great deal of cumulative experience in the field. They were asked to identify the most important activities in user-centered design. HFI then asked the participants to provide weightings for how much the activities influenced the quality of the results, and fine-tuned the rating system by working with clients. This quick check works for websites, applications, and any other software designs.

To take the test, look at the 15 design activities described in Table 4-1. Check which ones are included in your methodology, and then add up the points to get your Usability Quotient. The maximum rating is 100. If your methodology scores less than 75 or so, it is probably in need of enhancement. If it scores less than 50, you probably need a whole new process.

Table 4-1: Calculate Usability Quotient
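The scoring procedure can be sketched in a few lines of Python. The activity names and point weights below are hypothetical placeholders, not the values from Table 4-1; only the 100-point maximum and the thresholds of 75 and 50 come from the text. Because the text says "less than 75 or so," treating 75 as a hard cutoff is a simplification.

```python
# Hypothetical activities and weights; NOT the real Table 4-1 values.
# The real table lists 15 activities with a maximum total of 100 points.
EXAMPLE_WEIGHTS = {
    "user interviews": 40,
    "structural design testing": 35,
    "final usability test": 25,
}

def usability_quotient(included, weights):
    """Sum the points of every activity the methodology includes."""
    unknown = set(included) - set(weights)
    if unknown:
        raise ValueError(f"unknown activities: {sorted(unknown)}")
    # Using a set means an activity listed twice is counted once.
    return sum(weights[name] for name in set(included))

def interpret(score):
    """Map a score to the chapter's guidance (thresholds from the text)."""
    if score < 50:
        return "probably needs a whole new process"
    if score < 75:
        return "probably in need of enhancement"
    return "no action suggested by this check"
```

For example, a methodology that includes only the first and third placeholder activities would score 65 under these made-up weights and fall into the "in need of enhancement" band.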

The Challenges of Retrofitting a Development Life Cycle

It is a rare company that does not have an existing system development life cycle. Your company may have purchased a method, or perhaps you built one from scratch. Someday every method will follow the user-centered paradigm, but today it is unlikely that either kind does.

Occasionally, a company needs a whole new development process. This scenario occurs not just within brand-new organizations; a new development process may also be needed when there is a major change in the technology or scope, such as the switch from simple Web brochures to complex Web application development. Usually, however, the organization already has a software development process and needs to deal with the relationship between the existing process and the newer user-centered design methodology. The methods have to be interwoven with proper communication and handoffs between the different types of development staff. There may need to be particular stage-gates to verify that the required user experience design work has been done in one stage before proceeding to another.
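A stage-gate of this kind amounts to a checklist that must be complete before work moves on. The sketch below shows the idea only; the stage names, deliverable names, and function are hypothetical, and a real methodology would define its own.

```python
# Hypothetical stages and deliverables; a real methodology defines its own.
REQUIRED_UX_DELIVERABLES = {
    "structural design": {"user taskflows", "navigation structure"},
    "detailed design": {"screen specifications", "usability test report"},
}

def gate_passes(stage, completed):
    """Return (ok, missing); ok is True when every required item is done."""
    missing = REQUIRED_UX_DELIVERABLES[stage] - set(completed)
    return (not missing, sorted(missing))
```

Calling `gate_passes("structural design", ["user taskflows"])` reports that the gate fails and that the navigation structure is still missing, which is the kind of explicit handoff check the interwoven methods need.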

There are three likely scenarios when there is an existing process: classic methodologies that are not user-centered, patches where some user-centered activities have been added, and classic methods that just have usability testing added. These same situations tend to appear in commercial methodologies that do not have an ideal user-centered process. The following subsections explore these three scenarios in greater detail.

Using Classic Methodologies

Most software development methodologies seem to follow a classic process. One such method is the waterfall method, in which the steps follow logically and build in sequence. Another classic approach is the spiraling method, in which design cycles are more iterative. Increasingly, there is some sort of rapid development method (e.g., Agile), which moves quickly to prototyping and tends to work well only for small projects. All of these are classic methods because they fail to put the user first: they define business and technical functions and get database design and middleware under way before the user tasks and actions are identified and taken into account. Therefore, in this context, classic means old, but not in any way good.

Retrofitting a Method That Has Added User-Centered Activities

Sometimes there has been an attempt to add user-centered activities to a software methodology. The problem is that while the attempt is always well intentioned, it is often not well done. Instead of a thorough user-centered design process, the end result is a software methodology process with a few usability activities added here and there. The effort is neither thorough nor sustained.

Both software methodologies and user-centered design methodologies have many steps. These steps are important for each methodology, and they need to be completed in a certain order. However, the steps are different. Trying to retrofit the steps of one methodology onto the steps of the other methodology does not work, just as trying to force the tools, templates, and documentation of one to fit the other does not work.

Retrofitting a Development Process That Has Only Usability Testing

In still other cases, a team has created a user-centered methodology by simply adding usability testing to its software development process. This is a common practice but, not surprisingly, an ineffective one. Being disappointed by the results is like being surprised to reach the wrong destination after traveling without maps, a compass, or navigation tools.

Without a full user-centered process, performing a usability test at the end of the development process merely serves to highlight the unacceptable nature of the design. It’s a sad and frustrating result, but there is usually little that can be done except to release the poor design.

With a user-centered process, the usability test becomes a source of useful, easily implemented changes. These changes are not radical because the structural design has been solidly built and tested; there is no need to throw it out when problems crop up. The insights provided by the usability test tend to be small—in the form of minor changes to wording, layout, and graphic treatment—and easily implemented. Yet this type of final usability testing is well worth doing.

Implementing full user-centered development can be compared to creating a wooden statue. In the early phases, there is sawing. Then, you need to switch to a chisel. Finally, you apply sandpaper. The final usability testing becomes the fine sandpaper. Without the full user-centered process in place, the development effort is just like trying to create a wooden statue by starting with fine sandpaper.

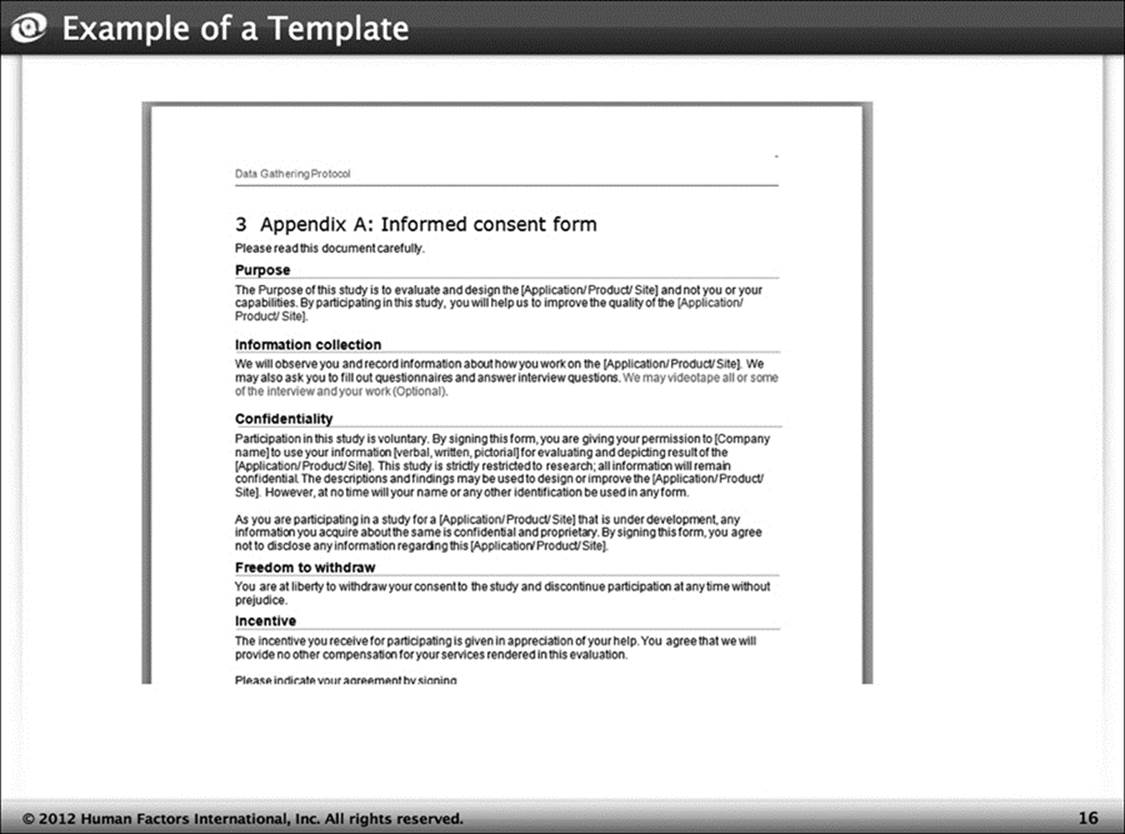

Templates

The methodology reminds practitioners about steps that must be taken. As such, it is helpful in planning projects. Once the methodology is created, you can also build templates for the various forms and deliverables required to complete the methodology (Figure 4-7 provides an example). It is far faster to edit a template than to create each item from scratch. It is fair to say that “professionals don’t start from scratch.” It is hard to convey this lesson to staff fresh out of school, who have likely been threatened with disciplinary action for copying. In the user experience design group, though, you are not trying to prove you can do it all yourself; you are trying to put out a design that is fast, cheap, and good.

Figure 4-7: Example of a template

Summary

Following a user-centered design methodology makes your design activities reliable and repeatable. Without a methodology, it is difficult to produce high-quality designs. The maturity of your methodology is a reflection of your organization’s commitment to user-centered design, so be sure to invest in the most effective methodology available. The next chapter outlines the interface design standards you will need to design efficiently and consistently while following the methodology.